AWS DevOps Blog

How MarketAxess® uses AWS Developer Tools to create scalable and secure CI/CD pipelines

Enterprise organizations often strive to adopt modern DevOps practices that focus on governance and security without sacrificing development velocity. In this guest post, Prashant Joshi, Senior Cloud Engineer at MarketAxess, explains how they use the AWS Cloud Development Kit (AWS CDK), AWS CodePipeline, and AWS CodeBuild to simplify the developer experience by dynamically provisioning pipelines while maintaining governance at MarketAxess.

Problem Statement

MarketAxess is a financial technology company that operates an e-trading platform for institutional credit markets. As MarketAxess adopted DevOps firm-wide, we struggled to ensure pipeline consistency. Developers were using static code analysis and linting, but neither was enforced. As more teams began to adopt DevOps practices, it became more important to provide consistency in code quality, security scanning, and artifact management. However, we were challenged with increasing our engineering workforce and implementing best practices in the various pipelines. As a small team, we needed a way to reliably manage and scale pipelines while reducing engineering overhead. We thought about the DevOps tenets, as well as the importance of automation, and we decided to build automation that would provision pipelines for development teams. These pipelines included best practices for Continuous Integration and Continuous Deployment (CI/CD). We wanted this automation to be self-service, so that teams could start developing a solution to a business problem without spending too much time on the CI/CD aspects of their projects.

We chose the AWS CDK to deploy AWS CodePipeline, AWS CodeBuild, and AWS Identity and Access Management (IAM) resources, and we built a webhook with AWS Lambda and Amazon API Gateway for integration. In this post, we provide an example of how these services can be used to create dynamic cross-account CI/CD pipelines.

Solution

In developing our solution, we wanted to accomplish three main goals:

- Standardization and Governance of Pipelines – We wanted to ensure consistent practices in each team’s pipeline to safeguard code quality and security.

- Simplified Developer Interaction – We wanted developers to focus mainly on interacting with the code repository for their project.

- Improved Management of Dynamically Provisioned Pipelines – Knowing that we would need to make changes, improvements, and enhancements, we wanted tools and a process that were flexible.

We achieved these goals by using AWS CDK to automate the creation of CodePipeline pipelines and to define mandatory actions in each pipeline. We also created a webhook using API Gateway that integrates with our Bitbucket repositories and automatically triggers the automation. Pipelines are dynamically provisioned or updated based on the YAML manifest file submitted to the repository. We process the manifest file with Amazon Elastic Container Service (Amazon ECS) tasks on AWS Fargate, because we had containerized the processing components using Docker. However, with the release of container image support in Lambda, we are now considering it as a potential replacement. These pipelines run CI stages based on the programming language that development teams define in the manifest file, and they deploy a tested, versioned artifact to the corresponding environments following standard Software Development Life Cycle (SDLC) practices. As part of the CI stages, we semantically version our code and tag our commits accordingly, which lets us trace each commit to a pipeline execution. The following architecture diagram shows a CloudFormation pipeline generated via AWS CDK.

The process flow is as follows:

- Developer pushes a change to the repository.

- When the Pull Request is merged, a webhook is triggered that creates or modifies the pipeline based on the manifest file submitted to the repository.

- This triggers a Lambda function that performs the following:

  - Clones the repository from the internally hosted Bitbucket repositories.

  - Uploads the repository to the source Amazon Simple Storage Service (Amazon S3) bucket, which is encrypted using customer managed keys (CMKs) in AWS Key Management Service (AWS KMS).

- An ECS task is run and is passed the manifest file, which supplies the project parameters. Pipelines are built according to these project parameters.

- The ECS task processes the metadata file, runs the AWS CDK logic, and finally triggers the pipeline.

- As source code progresses through the pipeline, the build stage writes its output to the artifact bucket. Pipeline artifacts are encrypted with a CMK. The IAM roles in the target account only have access to this bucket.
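To make the idea of mandatory actions plus manifest-driven stages more concrete, here is a minimal Python sketch of how a stage plan might be derived from manifest parameters. It is an illustration under stated assumptions, not MarketAxess's AWS CDK code: the stage names, the `language` and `environments` keys, and the `plan_stages` helper are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical quality gates; the post does not list the actual mandatory actions.
MANDATORY_CI_STAGES = ["Lint", "StaticAnalysis", "SecurityScan"]

# Hypothetical mapping from the manifest's language to a language-specific build stage.
LANGUAGE_BUILD_STAGES = {
    "java": "MavenBuild",
    "python": "PythonBuild",
    "typescript": "NpmBuild",
}


@dataclass(frozen=True)
class Stage:
    name: str
    requires_approval: bool = False


def plan_stages(manifest: dict) -> list[Stage]:
    """Return the ordered stages for one repository's pipeline."""
    language = manifest["language"]
    if language not in LANGUAGE_BUILD_STAGES:
        raise ValueError(f"unsupported language: {language}")

    stages = [Stage("Source")]
    # Mandatory gates are always added; nothing in the manifest can remove them.
    stages += [Stage(name) for name in MANDATORY_CI_STAGES]
    stages.append(Stage(LANGUAGE_BUILD_STAGES[language]))
    # One deploy stage per environment, with manual approval before production.
    for env in manifest.get("environments", []):
        stages.append(Stage(f"Deploy-{env}", requires_approval=(env == "prod")))
    return stages
```

In a real implementation, each planned stage would be translated into CodePipeline stages and CodeBuild projects by the AWS CDK application that the ECS task runs.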

Additionally, through the IAM integration with CodePipeline, the team implemented session tags with IAM roles and Okta to make sure that independent teams can only approve pipelines that they own. Furthermore, we use attribute-based tags to protect the production environment from unauthorized actions, so that deployments to production can only come through the pipeline.
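One common way to express this kind of attribute-based access control is an IAM policy that compares a tag on the resource with the session tag on the caller. The following Python sketch builds such a policy document. It assumes a hypothetical `team` tag key on both the pipeline resources and the Okta-provided session, and it assumes the approval action honors resource-tag conditions, so verify it against the IAM documentation for CodePipeline before relying on it.

```python
import json


def approval_policy(tag_key: str = "team") -> dict:
    """Build an IAM policy that allows approvals only on same-team pipelines.

    The tag key is an assumption for illustration; the post does not name it.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "ApproveOwnTeamPipelinesOnly",
                "Effect": "Allow",
                "Action": "codepipeline:PutApprovalResult",
                "Resource": "*",
                "Condition": {
                    "StringEquals": {
                        # The resource tag must equal the caller's session tag.
                        f"aws:ResourceTag/{tag_key}": f"${{aws:PrincipalTag/{tag_key}}}"
                    }
                },
            }
        ],
    }


if __name__ == "__main__":
    print(json.dumps(approval_policy(), indent=2))
```

Because the condition refers to the caller's own tag through a policy variable, a single policy can serve every team without listing team names.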

The AWS CDK-based pipelines let MarketAxess teams independently build and obtain immediate feedback, while CI and CD patterns remain centrally governed. Building the AWS CDK structure and support for the core languages and their corresponding CI and CD stages took two DevOps engineers six months of full-time work. We continue to iterate on the AWS CDK code base and pipelines, incorporating feedback from our development community to ensure developer satisfaction.

Simplified Developer Interaction

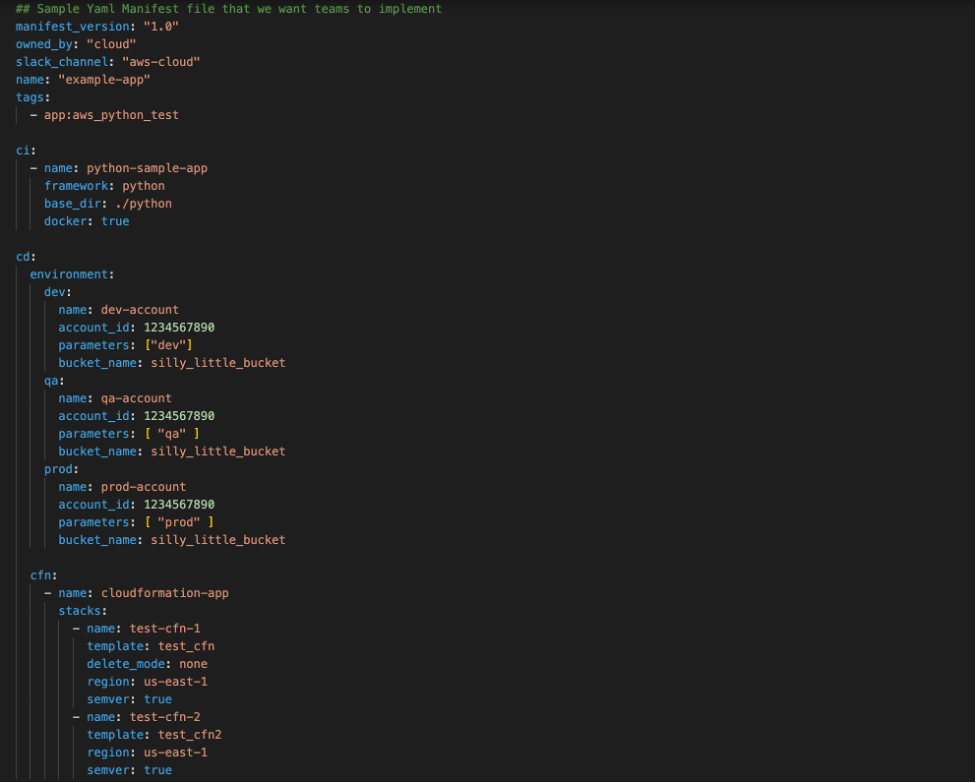

Although we were enforcing standards via the automation, we still wanted to give development teams autonomy through a simple mechanism. We wanted developers to interact with our pipeline creation process through a pipeline manifest file that they submitted to their repository. An example of the manifest file schema is in the following screenshot:

As shown above, the manifest lets developers define custom application configurations, while preserving consistent quality gates. This manifest is checked in to source control, and upon a commit to the code repository it triggers our automation. This lets our pipelines mutate on manifest file changes, and it makes sure that the latest commit goes through the latest quality gates. Each repository gets its own pipeline, and, to maintain the security of the pipeline, we used IAM Session Tags with Okta. We tag each pipeline and its associated resources with a unique attribute that is mapped to the development team so that they only have access to their pipelines, and only authorized individuals may approve production deployments.
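As a hedged illustration of how automation might check a manifest before acting on it, the sketch below validates an already-parsed manifest and derives the tags that scope a pipeline to its team. The field names (`project`, `team`, `language`), the supported language list, and both helper functions are hypothetical and are not taken from the actual MarketAxess schema.

```python
# Hypothetical required fields; the real manifest schema is not reproduced here.
REQUIRED_FIELDS = {"project", "team", "language"}
# Hypothetical set of languages that the pipelines support.
SUPPORTED_LANGUAGES = {"java", "python", "typescript"}


def validate_manifest(manifest: dict) -> list:
    """Return human-readable problems; an empty list means the manifest is valid."""
    missing = sorted(REQUIRED_FIELDS - set(manifest))
    problems = [f"missing field: {name}" for name in missing]
    language = manifest.get("language")
    if language is not None and language not in SUPPORTED_LANGUAGES:
        problems.append(f"unsupported language: {language}")
    return problems


def pipeline_tags(manifest: dict) -> dict:
    """Tags for the pipeline and its resources, used to scope access per team."""
    return {"team": manifest["team"], "project": manifest["project"]}
```

Rejecting an invalid manifest before any resources are created or updated keeps a bad commit from leaving a pipeline half-changed.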

Using AWS CDK, AWS CodePipeline, and other AWS Services, we have been able to improve the stability and quality of the code being delivered. CodePipeline and AWS CDK have helped us develop a cloud native pipeline solution that meets our governance best practices and compliance requirements. We met our three goals, and we can iterate and change easily moving forward.

Conclusion

Organizations that achieve the automation and self-service ideals of DevOps can build, release, and deploy features and apps to users faster and at higher levels of quality. In this post, we saw a real-life example of using Infrastructure as Code with AWS CDK to build a service that helps maintain governance and helps developers get work done. Here are two other posts that demonstrate using AWS Service Catalog to create secure DevOps pipelines or DevOps pipelines that deploy containerized applications.