AWS for Industries

Deploying dynamic 5G Edge Discovery architectures with AWS Wavelength

With the introduction of AWS Hybrid Cloud and Edge Computing services, we are rapidly expanding our global footprint of AWS infrastructure to give developers lower-latency access to compute and storage services. In the United States alone, 19 AWS Wavelength Zones are now generally available. With more choices than ever for where to deploy applications, developers face new challenges in identifying the optimal location to serve application requests.

Edge-aware deployments: Edge Discovery starts with an effective, geo-distributed deployment. Developers seeking to optimize coverage and low-latency access must deploy to multiple Wavelength Zones to meet end-customer requirements. Workflows that incorporate an automated, deterministic mechanism to select the optimal set of edge zones are critical to reducing the undifferentiated heavy lifting of leveraging edge computing at scale. In a separate post, we cover popular techniques to address this challenge, such as Pod Topology Spread Constraints for containerized workloads.

Edge Discovery: Once the application is deployed, developers often ask which selection criteria, heuristics, or algorithms best determine the optimal application endpoint for a given client session. The answer often depends on network latency, available network bandwidth, or network topology.

Although the Domain Name System (DNS) is the de facto approach for reaching resources such as servers using a canonical name (e.g., myedgeapplication.com), it does not always provide the accuracy and granularity needed for edge-computing environments residing within a communications service provider’s (CSP’s) network. For example, DNS services such as Amazon Route 53 offer flexibility in routing policies, such as geolocation routing, whereby a DNS name such as myedgeapplication.com is routed to an endpoint based on the user’s geographic location. This location is often determined by the IP address of the user’s DNS resolver.

However, unless the EDNS Client Subnet extension is enabled, the IP address seen in the recursive DNS lookup belongs to the DNS resolver rather than the requesting device. When the resolver’s location doesn’t match that of the User Plane Function (UPF) to which the client is attached, the result is an inaccurate mapping. Developers have requested a more granular and accurate method to determine a requesting device’s location.

To address this developer feedback, this post presents a reference pattern that uses Edge Discovery Service (EDS) APIs developed by telecommunications providers and shows how they can be integrated directly into event-driven architectures. As an example, this post demonstrates how to use the Verizon Edge Discovery Service to provide a dynamic workflow for mobile clients in highly distributed edge compute environments.

Mobile networking primer

When connecting to mobile edge environments, mobile traffic is routed over the Radio Access Network (RAN), i.e., LTE radio (eNBs) or 5G radio (gNBs), and the packet core network. When a user equipment (UE) device attaches to the mobile network, it establishes connectivity with a given Packet Data Network Gateway (PGW) in LTE or User Plane Function (UPF) in 5G, which handles all of the UE’s inbound and outbound data traffic. The gateway uses Carrier-grade Network Address Translation (CG-NAT) to translate and move the UE’s traffic from the private mobile network toward the public Internet.

In mobile networks, UEs can seamlessly move and hand over between radio cells in the RAN while keeping the established IP session with the LTE PGW (or 5G UPF) that they are attached to in the core network. In the case of lost coverage or a UE restart (i.e., the device is turned off and on again), the UE or the network can trigger a re-attach and re-establish the IP session with the core network. Therefore, in most cases, a mobile handover does not change the topologically closest Wavelength Zone, even if the client travels hundreds of miles and the geographically closest Wavelength Zone changes. As a result, we recommend that mobile applications periodically confirm that the currently connected endpoint remains optimal.

To bring this example to life, imagine a 4G/5G device that powers on one morning in New York City. It is likely that the device attaches to a PGW in the NYC metropolitan area. As a result, the lowest latency Wavelength Zone would be New York City. Even if that mobile device took a 2,000 mile trip west to Denver, the lowest latency Wavelength Zone would still be New York City, in the case that nothing triggered a re-attach to the network.

From Denver, the client would route all of its traffic through the NYC packet core, over the carrier backbone, and back to Denver, all without traversing the public Internet. Not even geolocation-based discovery would change this behavior. This is precisely due to the long-lived nature of established IP sessions with the mobile core network and the session continuity and reliability that carriers have architected by design. However, in 5G networks, carriers have more flexibility with re-anchoring and session continuity. This means that the closest Wavelength Zone in this example could now be the Denver Wavelength Zone.
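The difference between the geographically closest and the topologically closest zone can be made concrete with a small sketch. It is an illustration only: the coordinates are approximate city centers, and distance to the session anchor is used as a simple stand-in for network topology, which a real carrier network would not reduce to a single number. It models the case where nothing has triggered a re-attach.

```python
from math import asin, cos, radians, sin, sqrt

# Approximate (latitude, longitude) of each city, for illustration only.
ZONES = {
    "New York City": (40.7128, -74.0060),
    "Denver": (39.7392, -104.9903),
}

def haversine_km(a, b):
    """Great-circle distance in kilometres between two (lat, lon) points."""
    lat1, lon1, lat2, lon2 = map(radians, (*a, *b))
    h = sin((lat2 - lat1) / 2) ** 2 + cos(lat1) * cos(lat2) * sin((lon2 - lon1) / 2) ** 2
    return 2 * 6371.0 * asin(sqrt(h))

def nearest_zone(point):
    """Return the zone name with the smallest great-circle distance to `point`."""
    return min(ZONES, key=lambda name: haversine_km(point, ZONES[name]))

# The device is physically in Denver, but its IP session is still anchored in NYC.
device_location = ZONES["Denver"]
anchor_location = ZONES["New York City"]

geographic_choice = nearest_zone(device_location)   # what geolocation would pick
topological_choice = nearest_zone(anchor_location)  # where traffic enters the packet core

print(f"geolocation picks {geographic_choice}; the session anchor favors {topological_choice}")
```

The two choices disagree for as long as the session stays anchored in New York City, which is the gap that a topology-aware discovery service is meant to close.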

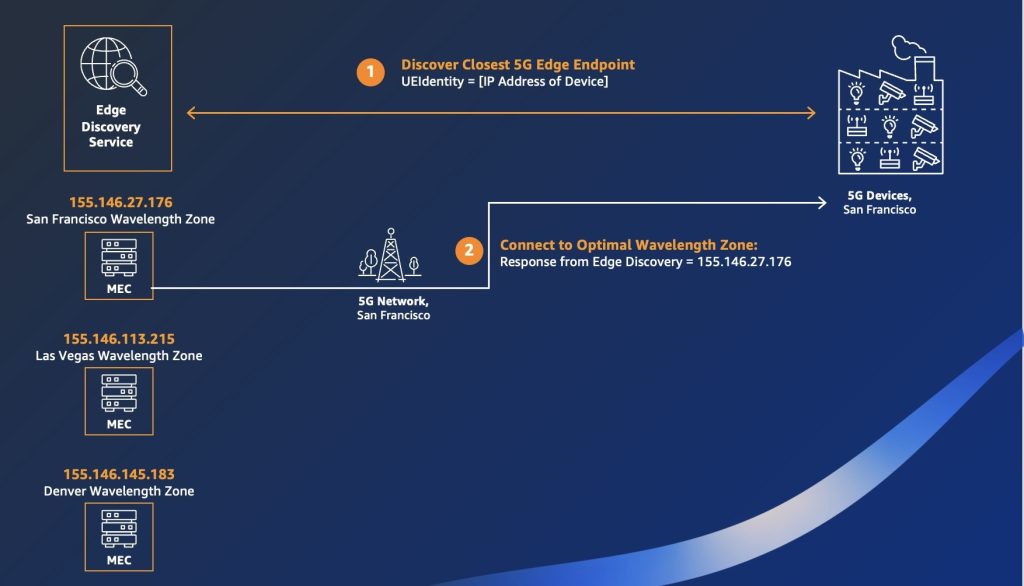

To abstract away deep domain expertise in 5G networks from the developer, Edge Discovery APIs offer an easy-to-use service mesh that exposes a 5G network topology-aware method to route clients to the optimal edge computing zone. Using user-defined service profiles that account for network performance characteristics (e.g., maximum latency, desired bandwidth), Edge Discovery APIs can be used to deterministically select the topologically optimal mobile edge compute nodes. In this way, the logic of determining which edge zone is “optimal” is offloaded from the application developer to the telecommunications provider.

Carrier-developed EDS design

EDS are APIs that let you register any application resources, such as databases, queues, microservices, and other cloud resources, with custom names. Each custom name is a unique identifier referred to as a serviceEndpoints object, which holds the carrier-facing application service endpoint metadata. Each serviceEndpoint object consists of the carrier IPv4 address (IPv6 is not currently supported in AWS Wavelength) and an optional FQDN and port, among other metadata.

When a mobile client seeks to identify the optimal edge endpoint, the client must first determine its CG-NAT public IP address through a TURN server or other tools (e.g., ifconfig.me). After determining its IP address, it passes this value in the required UEIdentity attribute, alongside the specific serviceEndpoints identifier, to retrieve the optimal service endpoint. To learn more, see the API reference in the 5G Future Forum API specifications or the 5G EDS documentation.
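As a minimal sketch of this client-side step, the following builds a discovery query from the UE’s public IP address. Only the UEIdentity attribute comes from the description above; the base URL and the other parameter names (UEIdentityType, serviceEndpointsIds) are assumptions for illustration, so check the carrier’s API reference for the actual request shape.

```python
import ipaddress
from urllib.parse import urlencode

# Hypothetical base URL; the real one is defined by the carrier's API reference.
EDS_BASE_URL = "https://eds.example.com/serviceendpoints"

def build_discovery_query(ue_public_ip: str, service_endpoints_id: str) -> str:
    """Validate the UE's CG-NAT public IPv4 address and build a discovery URL."""
    address = ipaddress.ip_address(ue_public_ip)  # raises ValueError if malformed
    if address.version != 4:
        raise ValueError("this sketch assumes an IPv4 UE identity")
    if not address.is_global:
        # Private or shared (100.64.0.0/10) addresses are what the UE sees locally,
        # not the translated address that the carrier network exposes.
        raise ValueError("expected the public CG-NAT address")
    params = {
        "UEIdentityType": "IPAddress",                # assumed parameter name
        "UEIdentity": str(address),
        "serviceEndpointsIds": service_endpoints_id,  # assumed parameter name
    }
    return f"{EDS_BASE_URL}?{urlencode(params)}"

print(build_discovery_query("174.205.10.20", "example-endpoints-id"))
```

Validating the address before the call catches a common mistake, which is sending the device’s locally assigned address instead of the translated public one.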

Figure 1: EDS workflow

EDS workflow

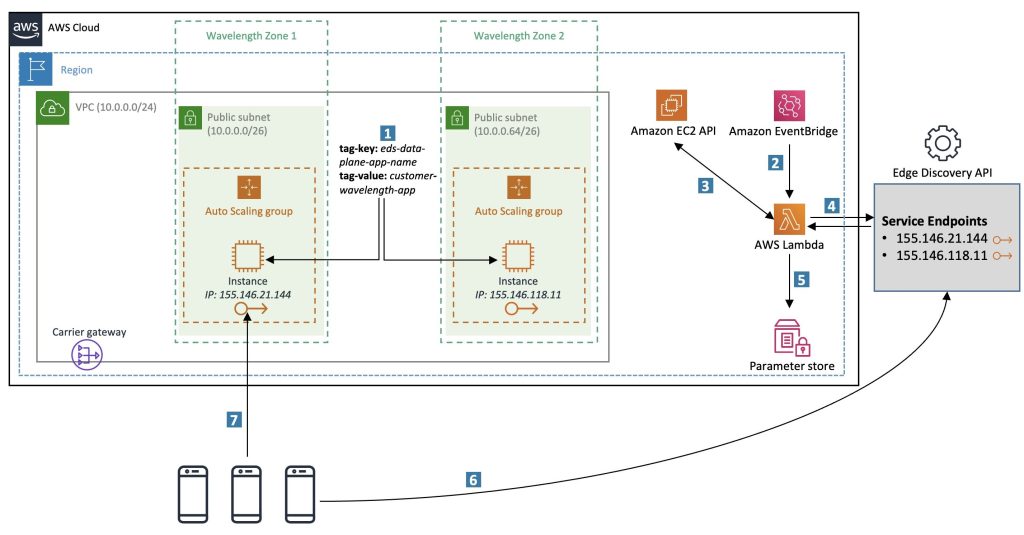

Imagine a VPC (10.0.0.0/24) with two subnets, and in each subnet there is an Amazon Elastic Compute Cloud (Amazon EC2) instance launched with an attached Carrier IP address.

1) San Francisco Wavelength Zone (us-west-2-wl1-sfo-wlz-1) with CIDR range 10.0.0.0/26

Carrier IP address attached: 155.146.21.144

2) Las Vegas Wavelength Zone (us-west-2-wl1-las-wlz-1) with CIDR range 10.0.0.64/26

Carrier IP address attached: 155.146.118.11

Figure 2: Amazon VPC Architecture
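The subnet layout above can be reproduced with Python’s standard ipaddress module. This is a small sketch showing how the /24 VPC range divides into /26 blocks, with the first two assigned to the two Wavelength Zones as in the example.

```python
import ipaddress

vpc = ipaddress.ip_network("10.0.0.0/24")

# Carve the /24 into four /26 blocks and assign the first two to the Wavelength Zones.
blocks = list(vpc.subnets(new_prefix=26))
subnets = {
    "us-west-2-wl1-sfo-wlz-1": blocks[0],
    "us-west-2-wl1-las-wlz-1": blocks[1],
}

for zone, cidr in subnets.items():
    print(f"{zone}: {cidr} ({cidr.num_addresses} addresses)")
```

Two /26 blocks remain unallocated in this layout, which leaves room for additional Wavelength Zone subnets in the same VPC.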

Accessing EDS

To use the EDS, you must first authenticate to the API and create a serviceProfile. This object describes the resource needs of your Edge application and defines the selection criteria by which the EDS responds. Notable service profile fields include minimum bandwidth, maximum request rate, minimum availability, and maximum latency.
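One way to keep these criteria explicit in application code is a small validated data structure. The sketch below is an assumption-laden illustration: the attribute names, units, and example values are hypothetical and do not reflect the wire format of any carrier’s API.

```python
from dataclasses import asdict, dataclass

@dataclass(frozen=True)
class ServiceProfile:
    """Selection criteria for an edge application (hypothetical names and units)."""
    name: str
    min_bandwidth_kbps: int
    max_request_rate: int
    min_availability_pct: float
    max_latency_ms: int

    def __post_init__(self):
        # Reject values that could never describe a valid profile.
        if not 0 < self.min_availability_pct <= 100:
            raise ValueError("availability must be a percentage in (0, 100]")
        if min(self.min_bandwidth_kbps, self.max_request_rate, self.max_latency_ms) <= 0:
            raise ValueError("bandwidth, request rate, and latency must be positive")

profile = ServiceProfile(
    name="customer-wavelength-app",
    min_bandwidth_kbps=5000,
    max_request_rate=100,
    min_availability_pct=99.9,
    max_latency_ms=20,
)
payload = asdict(profile)  # plain dict, ready to map onto the real request body
print(payload)
```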

Next, attach a serviceEndpoints object to the service profile with a series of records. As an example of the complete workflow, consider the previous diagram which illustrates the relationship between service profiles and service endpoints.

Lastly, mobile clients that want to identify the optimal Edge endpoint must authenticate to the API and retrieve the endpoints for the serviceEndpointsId of choice. The EDS response returns a list of Edge endpoints, ordered from most to least optimal as defined by the service profile.
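Because the response is ordered, a client can walk the list and take the first endpoint it can actually reach. The following is a sketch under assumptions: the response shape, the port number, and the reachability check are all illustrative, and falling back to the next entry is a design choice of this example rather than behavior prescribed by the EDS.

```python
def pick_endpoint(ordered_endpoints, is_reachable):
    """Return the first endpoint, in EDS order, that passes the reachability check."""
    for endpoint in ordered_endpoints:
        if is_reachable(endpoint):
            return endpoint
    raise LookupError("no reachable edge endpoint in the EDS response")

# Illustrative response body; the real schema is defined by the carrier's API.
response = [
    {"ern": "us-west-2-wl1-sfo-wlz-1", "ipv4": "155.146.21.144", "port": 443},
    {"ern": "us-west-2-wl1-las-wlz-1", "ipv4": "155.146.118.11", "port": 443},
]

# A real check might attempt a TCP connection; here every endpoint is treated as healthy.
best = pick_endpoint(response, is_reachable=lambda endpoint: True)
print(f"connect to {best['ipv4']}:{best['port']}")
```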

EDS enhancements: Dynamic Endpoint Updates

Beyond the fundamentals of UEs invoking EDS APIs, further optimizations can be made to the developer experience. For example, integrating the EDS APIs with AWS-native workflows can reduce the complexity of managing the mapping of edge compute service endpoints. Consider what happens if a third Wavelength Zone is introduced, as shown in the following diagram. Without manual intervention, UEs in geographic proximity to Wavelength Zone 3 are not routed appropriately, because the new location is only mapped once a request is made to the EDS to register the new endpoint.

To architect a solution that dynamically registers endpoints, the following AWS services are used:

- Amazon EventBridge: by using a serverless event bus that ingests data from AWS services, you can configure custom rules that trigger an automated response.

- AWS Lambda: you can create a serverless function that extracts the relevant AWS Wavelength metadata and populates the third-party EDS.

- AWS Systems Manager Parameter Store: following the API response from the EDS, you can cache relevant information (e.g., serviceEndpointsId and credentials) using the secrets management capability.

- Amazon EC2 Tags: to manage multiple edge applications concurrently from a single account, make sure that application resources are tagged appropriately and that the Lambda function filters on the selected tag(s).

Figure 3: Edge Discovery Architecture with Amazon EC2

In the previous architecture, we describe an approach for dynamic Edge Discovery with the following workflow:

- Prior to deployment, pre-select a set of AWS tags that we can use for our application resources. In this example, we selected eds-data-plane-app-name and customer-wavelength-app as our tag key and value, respectively. These tags uniquely identify resources that we would like to automatically register as service endpoints to the EDS API.

- Next, configure EventBridge with a specific rule based on Amazon EC2 lifecycle events. To capture all changes to our edge environment, the most deterministic method is to capture all events in which an EC2 instance changes to the running or terminated state. When an event satisfying this state is detected, EventBridge triggers a Lambda function to capture the metadata associated with the changed environment.

- As the first part of the Lambda function, all EC2 instances that are currently in the running state with matching tags (from Step 1) are pulled from the Amazon EC2 API. For each EC2 instance retrieved, we extract the attached Carrier IP address. Note that the attached address could appear as an Elastic IP (static address attached post-launch) or ephemeral IP address allocated at launch.

- As the second part of the Lambda function, it authenticates to the EDS API and populates the serviceEndpoints object with the latest edge endpoints. This could entail either the creation of new records or the deletion of stale records (e.g., terminated/stopped EC2 instances).

- In the current design of the EDS, each time the serviceEndpoints object is updated, a new serviceEndpointsId identifier is returned. To make sure that the AWS environment has the latest metadata, the Lambda function writes to AWS Systems Manager Parameter Store with the latest value of the serviceEndpointsId.

- Now, from the mobile device’s perspective, each time it seeks to identify the closest AWS Wavelength Zone endpoint, it can authenticate and query the EDS API and make sure it receives the optimal endpoint.

- Following the response of the EDS, the mobile client can connect directly with the <IP Address>:Port or FQDN.
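The EventBridge rule from the second step can be expressed as an event pattern on EC2 instance state-change notifications. The sketch below prints such a pattern and includes a deliberately tiny matcher, written only to show which events the pattern selects; it is not EventBridge’s actual matching engine, and the sample instance ID is made up.

```python
import json

# Event pattern for EC2 state changes into "running" or "terminated".
EVENT_PATTERN = {
    "source": ["aws.ec2"],
    "detail-type": ["EC2 Instance State-change Notification"],
    "detail": {"state": ["running", "terminated"]},
}

def matches(pattern, event):
    """Simplified matcher: nested dicts recurse, leaf lists hold allowed values."""
    for key, expected in pattern.items():
        actual = event.get(key)
        if isinstance(expected, dict):
            if not isinstance(actual, dict) or not matches(expected, actual):
                return False
        elif actual not in expected:
            return False
    return True

sample_event = {
    "source": "aws.ec2",
    "detail-type": "EC2 Instance State-change Notification",
    "detail": {"instance-id": "i-0123456789abcdef0", "state": "running"},
}

print(json.dumps(EVENT_PATTERN, indent=2))
print("rule fires:", matches(EVENT_PATTERN, sample_event))
```

If stopped instances should also be removed from the EDS promptly, adding "stopped" to the list of states is one option to consider.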

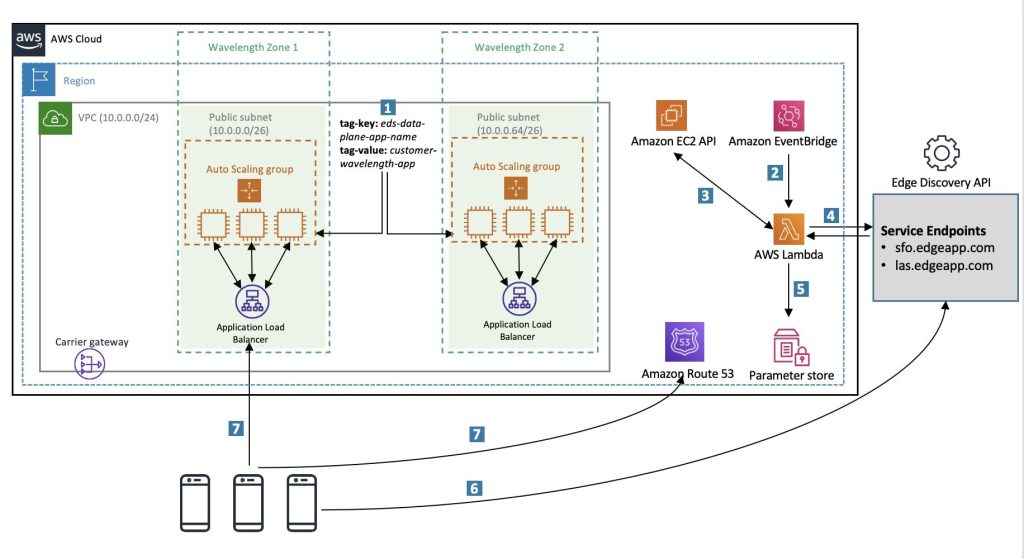
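The Lambda steps in the workflow above could be sketched as follows. The ec2 and ssm arguments are expected to behave like boto3 clients (describe_instances and put_parameter), while the eds argument is a hypothetical wrapper around the carrier’s API: its list_endpoint_ips and replace_endpoints methods, and the parameter name, are assumptions made for this example. Pagination and error handling are omitted for brevity.

```python
TAG_KEY = "eds-data-plane-app-name"
TAG_VALUE = "customer-wavelength-app"
PARAMETER_NAME = "/eds/serviceEndpointsId"  # hypothetical Parameter Store name

def running_carrier_ips(ec2):
    """Collect the Carrier IPs of running instances that carry the application tag."""
    response = ec2.describe_instances(Filters=[
        {"Name": f"tag:{TAG_KEY}", "Values": [TAG_VALUE]},
        {"Name": "instance-state-name", "Values": ["running"]},
    ])
    ips = set()
    for reservation in response["Reservations"]:
        for instance in reservation["Instances"]:
            for interface in instance.get("NetworkInterfaces", []):
                carrier_ip = interface.get("Association", {}).get("CarrierIp")
                if carrier_ip:
                    ips.add(carrier_ip)
    return ips

def sync_endpoints(ec2, eds, ssm):
    """Reconcile the EDS records with the running instances, then store the new ID."""
    desired = running_carrier_ips(ec2)
    registered = set(eds.list_endpoint_ips())        # hypothetical wrapper method
    new_id = eds.replace_endpoints(sorted(desired))  # hypothetical wrapper method
    # Each update yields a new serviceEndpointsId, so persist the latest value.
    ssm.put_parameter(Name=PARAMETER_NAME, Value=new_id, Type="String", Overwrite=True)
    return {
        "added": sorted(desired - registered),
        "removed": sorted(registered - desired),
    }
```

Passing the clients in as arguments keeps the reconciliation logic testable without AWS credentials; a Lambda handler would construct the real clients once and call sync_endpoints for each event.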

Integrating Auto Scaled Environments with EDS

As an edge application experiences higher traffic volumes, AWS Auto Scaling can be used to dynamically adjust capacity and manage this automatic scaling process. Imagine that the environment above expands to the following:

San Francisco Wavelength Zone (us-west-2-wl1-sfo-wlz-1)

Instance 1: Carrier IP address attached: 155.146.21.144

Instance 2: Carrier IP address attached: 155.146.21.135

Instance 3: Carrier IP address attached: 155.146.21.128

Las Vegas Wavelength Zone (us-west-2-wl1-las-wlz-1)

Instance 1: Carrier IP address attached: 155.146.118.41

Instance 2: Carrier IP address attached: 155.146.118.32

Instance 3: Carrier IP address attached: 155.146.118.2

Figure 4: Edge Discovery Architecture with AWS Auto Scaling

If a mobile client were to query the EDS API and the optimal edge resource name (ERN) were the San Francisco Wavelength Zone (us-west-2-wl1-sfo-wlz-1), then the API response would not natively load balance across the three instance IP addresses that could be returned (155.146.21.144, 155.146.21.135, 155.146.21.128). In this scenario, EDS should be used alongside DNS in the following way:

To introduce high availability, create an Application Load Balancer (ALB) in each Wavelength Zone and configure the target group with the instances in each Wavelength Zone. For example, configure an ALB in the San Francisco Wavelength Zone with the three EC2 instances above as the target group. To learn more about load balancing in AWS Wavelength, visit Enabling load-balancing of non-HTTP(S) traffic in AWS Wavelength.

After procuring a domain (e.g., edgeapp.com), create a subdomain for each Wavelength Zone with an alias record pointing to the FQDN of the load balancer. For example, sfo.edgeapp.com would resolve to the FQDN of the San Francisco Wavelength Zone ALB.

Next, configure the EDS API: after creating the serviceProfile, create the serviceEndpoints object and populate a single resource record per edge resource name (ERN). In our case, there would be two records: a record corresponding to the San Francisco Wavelength Zone ERN (us-west-2-wl1-sfo-wlz-1) and a record corresponding to the Las Vegas Wavelength Zone ERN (us-west-2-wl1-las-wlz-1).

In the previous scenario, each resource record was given a port and an IPv4 address. This time, pass only a fully qualified domain name corresponding to the ALB within that Wavelength Zone (see the revised Step 4). When querying the EDS API from the UE, the output is a DNS name that must be resolved, rather than an explicit IP address (see Steps 7 and 8).
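A minimal sketch of this FQDN-based variant follows. The record shape is illustrative, and the "las" label is an assumption that mirrors the sfo.edgeapp.com example; the resolver is passed in as a function so that the lookup strategy stays replaceable.

```python
DOMAIN = "edgeapp.com"

# Short label per Wavelength Zone; "las" is assumed by analogy with "sfo".
ZONE_LABELS = {
    "us-west-2-wl1-sfo-wlz-1": "sfo",
    "us-west-2-wl1-las-wlz-1": "las",
}

def fqdn_records(zone_labels, domain):
    """Build one record per ERN whose only locator is the zone's ALB subdomain."""
    return [{"ern": ern, "fqdn": f"{label}.{domain}"} for ern, label in zone_labels.items()]

def resolve_endpoint(record, resolver):
    """Resolve the FQDN returned by the EDS; `resolver` maps a hostname to a list of IPs."""
    addresses = resolver(record["fqdn"])
    if not addresses:
        raise LookupError(f"{record['fqdn']} did not resolve")
    return addresses[0]

records = fqdn_records(ZONE_LABELS, DOMAIN)
for record in records:
    print(record)
```

On a device, the resolver could be as simple as `lambda host: socket.gethostbyname_ex(host)[2]`, which leaves the choice among instances to the load balancer behind the name.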

Direct vs. indirect Edge Discovery

Today, EDS APIs are reachable over the public Internet rather than solely from within mobile networks, such as the AWS Wavelength infrastructure itself. This provides flexibility in how these APIs are invoked, in particular as it relates to whether the EDS is queried directly or indirectly by the mobile client.

Direct (client-side): In select use cases, developers seek to optimize for simplicity and have each mobile endpoint interact with the EDS directly. In this example, mobile devices follow Steps 6-7 above. The “cost” of the architecture above is twofold: there is a security impact if hundreds (if not thousands) of devices must hold a copy of API keys, as well as the potential for an unnecessarily high call volume to the EDS.

Indirect (server-side): In other cases, developers may want to “offload” the Edge Discovery to an AWS native entity – either as a containerized application or Lambda function – so that mobile devices only interact directly with this caching layer. To see an example of this model, you can check out a presentation from MongoDB World 2022 showcasing a real-time data architecture on Amazon EKS in AWS Wavelength. In this example, the web application embeds JavaScript that directly invokes a serverless function whose sole responsibility is to interact directly with the EDS of choice.
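The server-side model can be sketched as a small caching layer that sits between mobile clients and the EDS. This is an illustration under assumptions: the lookup callable stands in for an authenticated EDS query, and the 60-second TTL is an arbitrary example value that would need tuning against how often sessions re-anchor.

```python
import time

class CachedDiscovery:
    """Cache EDS answers per UE IP so repeated client calls do not all reach the EDS."""

    def __init__(self, lookup, ttl_seconds=60.0, clock=time.monotonic):
        self._lookup = lookup   # callable: ue_ip -> ordered list of endpoints
        self._ttl = ttl_seconds
        self._clock = clock     # injectable so expiry can be tested deterministically
        self._entries = {}      # ue_ip -> (expires_at, endpoints)

    def endpoints_for(self, ue_ip):
        now = self._clock()
        cached = self._entries.get(ue_ip)
        if cached and cached[0] > now:
            return cached[1]    # still fresh: answer without calling the EDS
        endpoints = self._lookup(ue_ip)
        self._entries[ue_ip] = (now + self._ttl, endpoints)
        return endpoints
```

With this layer, API credentials stay on the server side and the call volume to the EDS is bounded by the TTL. Expired entries also force a fresh lookup, which lines up with the earlier recommendation to periodically confirm that the connected endpoint remains optimal. In a Lambda function, an in-memory cache like this lives only as long as the execution environment, so a shared store may be a better fit at scale.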

Conclusion

This post demonstrated how EDS can be used in edge computing environments to identify the optimal endpoints for highly geo-distributed, low-latency applications. In addition, we presented an architecture that showcases how AWS serverless services can enhance EDS APIs introduced by 5G and mobile edge compute environments. Finally, we showed how serverless workflows built on EventBridge and Lambda can introduce automation and dynamic endpoint registration into your Edge Discovery architecture.

Edge Discovery is one of many exciting components of Well-Architected edge applications. To learn more, check out the hands-on lab from re:Invent 2022 or visit Verizon’s presentation on network intelligence from re:Invent 2021.

Ready to get started? Check out the Verizon EDS documentation or the 5G Future Forum website. You can also connect with the Edge Specialist Sales Team if you have questions.