AWS Contact Center

Machine learning-based customer insights with Contact Lens for Amazon Connect

Today, Amazon Web Services (AWS) announced the general availability of Contact Lens, machine learning powered contact center analytics for Amazon Connect. Amazon Connect is an easy-to-use cloud contact center that helps companies of any size deliver superior customer service at lower cost. With Contact Lens, supervisors and quality assurance managers can easily understand the sentiment, trends, and compliance risks of customer conversations within their contact centers. These insights allow companies to effectively train agents, replicate successful interactions, and identify important customer feedback.

With millions of hours of call recordings, contact centers hold valuable insights into the brand perception and customer satisfaction of a company. To extract the information trapped in customer conversations, call recordings need to be converted to text before they can be used to generate additional analysis. The resulting analytics can provide a better understanding of agent effectiveness, emerging trends in customer conversations, and adherence to company guidelines and regulatory requirements. Historically, companies that try to get value from this data use existing contact center analytics offerings, but these technologies are expensive, slow at providing call transcripts, and lack the required level of transcription accuracy. As a result, it is difficult for companies to quickly detect customer experience issues and provide precise feedback to customer service agents and supervisors. Contact Lens for Amazon Connect addresses these challenges and provides an out-of-the-box analytics experience as a part of Amazon Connect. Contact Lens automatically transcribes voice conversations, enables search, redacts sensitive personal information, and extracts customer and agent sentiment. It also detects customer issues, interruptions and non-talk time, and categorizes customer conversations based on your specified criteria. These new machine learning-based analytics features can be accessed with just a few clicks, without the need for any coding experience.

Getting started

Getting started with Contact Lens for Amazon Connect is easy. You can configure which calls you want to analyze in the Amazon Connect contact flow by selecting the Contact Lens speech analytics check box in the Set recording and analytics behavior block. Contact Lens also allows you to redact sensitive information from the call transcripts. You can enable or disable this option by choosing Redact sensitive data in the same contact flow block. Once you have completed this configuration, Contact Lens will automatically start analyzing calls that pass through this contact flow block. Just like Amazon Connect, Contact Lens requires no upfront commitments, and you only pay for what you use.

Contact Lens offers many features that make it easy for companies to analyze contact center conversations and improve overall performance. These features are explained in more detail below.

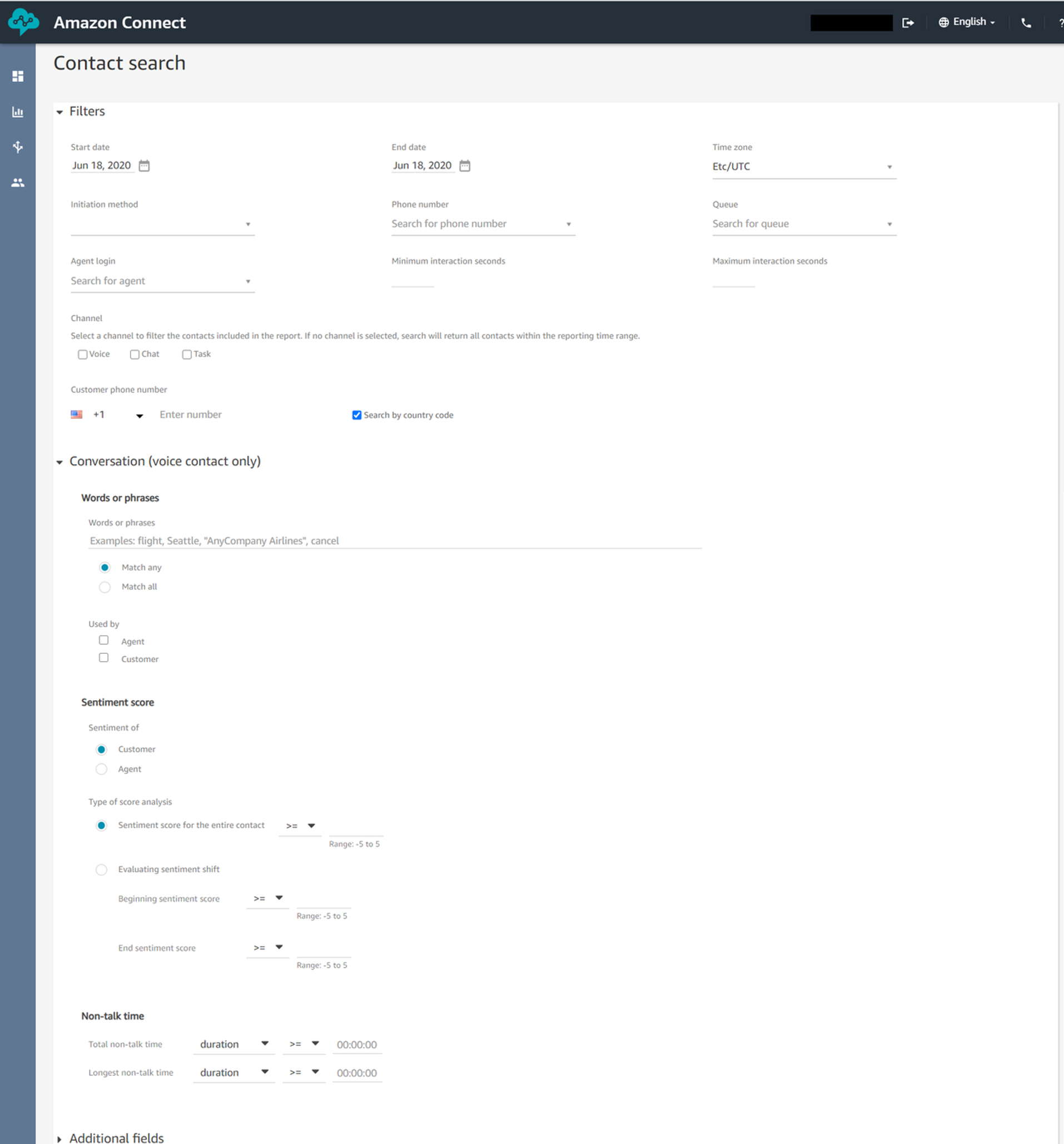

Advanced contact search

The Contact Search page in Amazon Connect allows supervisors to search for historical contacts based on criteria such as date range, agent login, phone number, and queue. When Contact Lens is enabled in an Amazon Connect contact flow, all the specified calls are automatically transcribed using machine learning. These calls are fed through a natural language processing engine to extract sentiment, and are indexed for search. The Contact Search page is enhanced to provide an out-of-the-box experience that allows supervisors to search for calls based on specific words and phrases mentioned by the customer and/or the agent during the call. This helps organizations identify issues that are affecting their customers. For example, recently, an organization realized that their customers were having issues with completing payments on their website. With Contact Lens, they were able to easily search for calls where their customers mentioned this issue. This enabled them to quantify the severity of the issue and complete a deep dive analysis.

Contact Lens for Amazon Connect also analyzes the sentiment of the words spoken by the customer and generates a score from -5 (most negative) to +5 (most positive). The Contact Search page makes it easy for organizations to search calls based on these scores to identify customer experience issues. Supervisors can search based on the customer sentiment score at the beginning and/or end of the call. They can also search based on the average customer sentiment score over the entire call duration. For example, a supervisor can search for all calls that ended with a negative customer sentiment. This enables them to find the reasons for customer dissatisfaction and take appropriate resolution steps. Similarly, it is easy to identify calls that produced a stellar customer experience based on the end sentiment score. This allows companies to develop best practices and provide the required coaching to agents.

Finally, supervisors can search for calls based on non-talk time (defined as silence plus hold time), which helps identify new customer issues and agent training gaps.
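The search criteria above can be thought of as simple filters over per-call summaries. The following is a minimal sketch of that idea, not the Contact Search implementation: the `CallSummary` record, its field names, and the sample values are all hypothetical and exist only for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class CallSummary:
    """Hypothetical per-call record; NOT the Contact Lens schema."""
    contact_id: str
    start_sentiment: float    # customer sentiment at the start, -5 to +5
    end_sentiment: float      # customer sentiment at the end, -5 to +5
    average_sentiment: float  # average customer sentiment over the call
    silence_seconds: float
    hold_seconds: float

    @property
    def non_talk_seconds(self) -> float:
        # The text defines non-talk time as silence plus hold time.
        return self.silence_seconds + self.hold_seconds


def ended_negative(calls: List[CallSummary]) -> List[CallSummary]:
    """Calls whose customer sentiment at the end of the call is below zero."""
    return [c for c in calls if c.end_sentiment < 0]


def long_non_talk(calls: List[CallSummary], threshold_seconds: float) -> List[CallSummary]:
    """Calls whose non-talk time meets or exceeds the given threshold."""
    return [c for c in calls if c.non_talk_seconds >= threshold_seconds]


# Made-up sample data for illustration only.
calls = [
    CallSummary("c-001", -1.0, 3.5, 1.2, 10.0, 0.0),
    CallSummary("c-002", 0.5, -4.0, -1.8, 45.0, 120.0),
    CallSummary("c-003", 2.0, -0.5, 0.9, 5.0, 30.0),
]

print([c.contact_id for c in ended_negative(calls)])
print([c.contact_id for c in long_non_talk(calls, 60)])
```

In the product itself these filters are applied through the Contact Search page, with no code required; the sketch only makes the filter semantics concrete.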

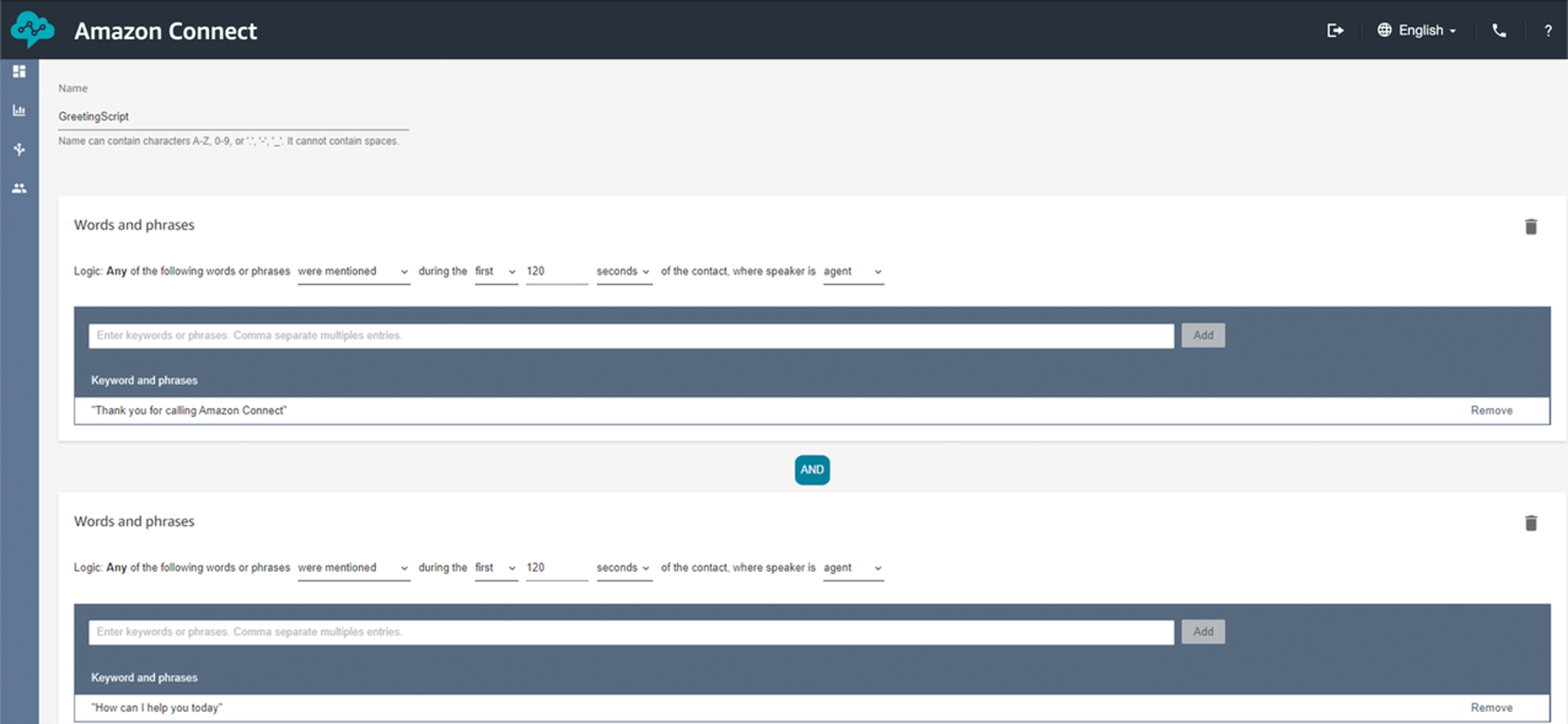

Automated contact categorization

Contact Lens also allows for deeper analysis into known issues related to customer experience, agent script adherence, and competitor mentions. With the automated contact categorization feature, supervisors can use the new Rules page in Amazon Connect to specify custom criteria based on keywords and phrases. For example, a supervisor can create a category “Customer Dissatisfaction” and associate it with related phrases such as “not happy”, “quality is not good”, and “does not work as expected”. Once a category is defined, every customer call is automatically evaluated against the specified criteria. If the criteria are met, the relevant categorization label is assigned to that call. The categorization labels are available as a part of the rich new metadata for every contact analyzed by Contact Lens.

Businesses also use the automated contact categorization feature to understand how often their customers mention competitors. Another use case is determining whether agents follow the organization’s guidelines and best practices for interacting with customers, such as standard greetings and sign-offs.
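Conceptually, a keyword-based category is a label paired with a list of phrases, and a call receives the label when any phrase appears in its transcript. The sketch below illustrates this with plain case-insensitive substring matching. It is an assumption-laden simplification: the `RULES` mapping and the `categorize` function are hypothetical names, and the matching logic Contact Lens actually uses may differ.

```python
from typing import Dict, List

# Hypothetical rule set mirroring the example category in the text.
RULES: Dict[str, List[str]] = {
    "Customer Dissatisfaction": [
        "not happy",
        "quality is not good",
        "does not work as expected",
    ],
}


def categorize(transcript: str, rules: Dict[str, List[str]]) -> List[str]:
    """Return every category with at least one phrase found in the transcript.

    Uses simple case-insensitive substring matching, which is an assumption
    made for this sketch rather than the product's documented behavior.
    """
    text = transcript.lower()
    return [
        category
        for category, phrases in rules.items()
        if any(phrase.lower() in text for phrase in phrases)
    ]


print(categorize("I am NOT happy with the delivery.", RULES))
print(categorize("Thanks, everything is fine.", RULES))
```

The same structure extends to the other use cases mentioned above: a competitor-mention category would list competitor names, and a script-adherence category would list the expected greeting and sign-off phrases.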

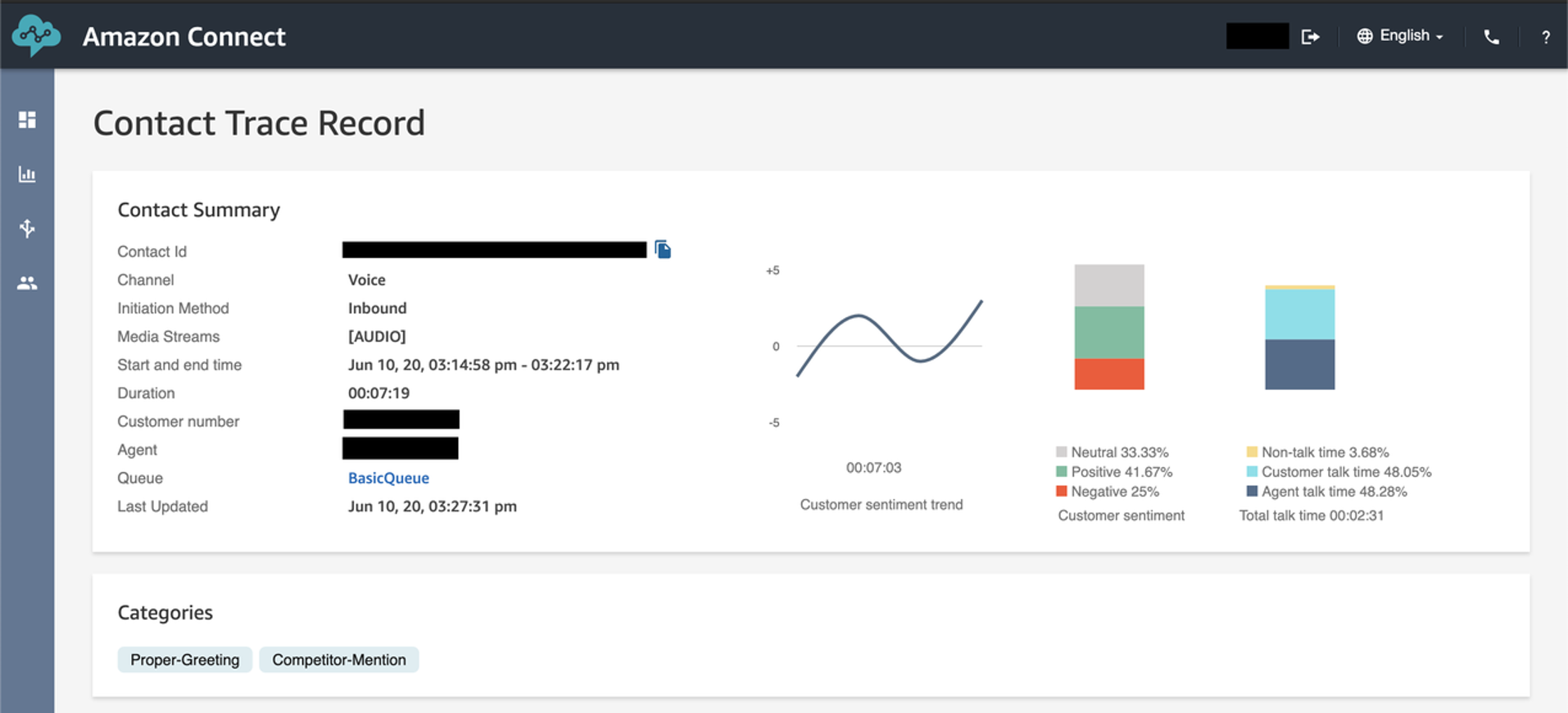

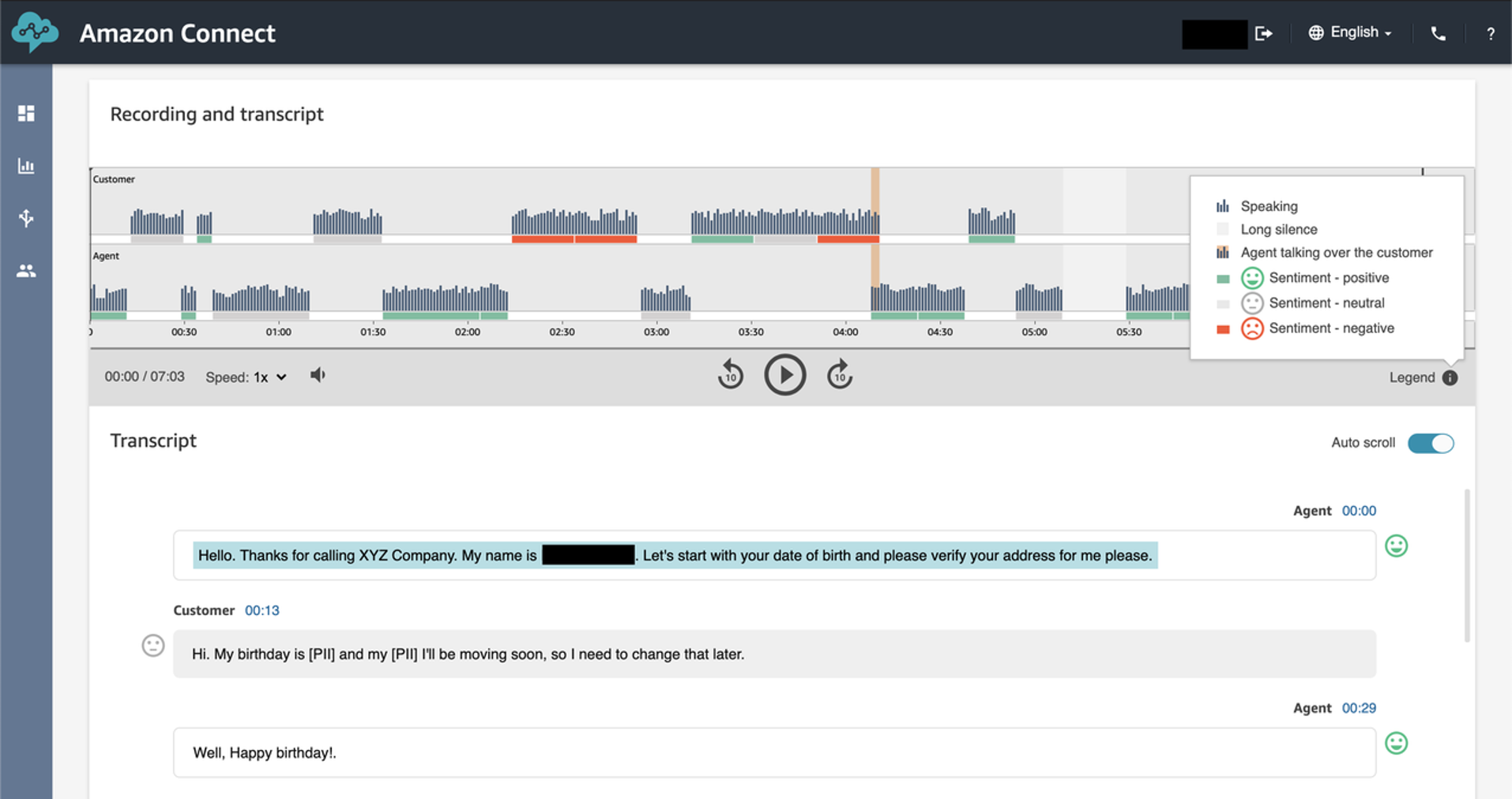

Enhanced contact trace record (CTR) detail page

On the Contact Search page, a supervisor can click any result to see the contact trace record (CTR) of that interaction. This includes details such as contact ID, queue name, agent name, phone number, and the associated call recording. With Contact Lens, supervisors now have access to several new call details such as sentiment progression, silence, and interruptions where the agent and the customer talked over each other. A sentiment trend line at the top right of the page displays how the customer sentiment progressed during the call. You can also see a new visual illustration of the audio interaction between the customer and agent. This makes it easy for supervisors to identify specific portions of the call such as interruptions, non-talk time, and the sentiment associated with agent and customer utterances.

The new turn-by-turn transcript and sentiment data allow supervisors to quickly read the entire customer interaction and listen to only a specific part of the call recording. This saves them the time they would otherwise spend listening to the entire recording. Supervisors can jump to a particular section of the call by clicking the timestamp provided at each turn, which brings them to that specific point in the recording.

Issue detection

Contact Lens is also introducing a feature that underlines the primary call driver, or reason for the customer outreach, in the call transcript. This information can be used to identify common emerging patterns across customer conversations. These patterns can then be provided as feedback to business teams within an organization to rectify common issues or improve product development efforts.

Sensitive data redaction

Contact Lens also provides the ability to redact sensitive data such as name, address, credit card details, and social security number from both the call transcript and the audio recording with a high degree of accuracy. Organizations can also set up permissions to provide specific supervisors access to the original (unredacted) transcript. This gives you granular control over who can access sensitive information to keep customer data secure.

Open and flexible data

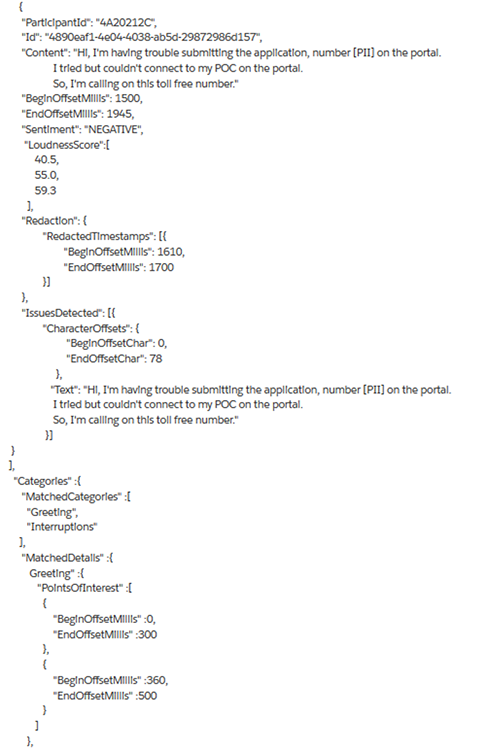

We realize that organizations may want to tackle more use cases beyond the ones that Contact Lens provides and will need the underlying data. Contact Lens produces an output file in your Amazon Simple Storage Service (S3) bucket that contains all the underlying metadata. This includes the call transcriptions, sentiment scores, categorization labels, talk speed, interruptions, and call driver/issues. Organizations can leverage this data to build additional analytics with any tool of their choice. For example, organizations can use business intelligence tools such as Amazon QuickSight or Tableau to run reporting off the Contact Lens data and any existing Customer Relationship Management (CRM) data to analyze customer engagements. You can also have your data science teams use this data to create custom machine learning models with Amazon SageMaker. The following is an example of the output generated by Contact Lens in a JSON format.
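As a rough illustration of building custom analytics on this data, the sketch below parses a small hand-written JSON document and summarizes it. The sample is not actual Contact Lens output, and the field names (`Categories`, `Transcript`, `ParticipantId`, `Content`, `Sentiment`) are assumptions made for this example; check the output file in your own S3 bucket for the real structure before relying on any of them.

```python
import json
from collections import Counter

# Hand-written stand-in for an analysis file; NOT real Contact Lens output.
# All field names here are assumptions for illustration.
SAMPLE_OUTPUT = """
{
  "Categories": ["Customer Dissatisfaction"],
  "Transcript": [
    {"ParticipantId": "CUSTOMER", "Content": "My payment failed twice.", "Sentiment": "NEGATIVE"},
    {"ParticipantId": "AGENT", "Content": "I can help with that.", "Sentiment": "NEUTRAL"},
    {"ParticipantId": "CUSTOMER", "Content": "Great, it works now.", "Sentiment": "POSITIVE"}
  ]
}
"""


def summarize(analysis: dict) -> dict:
    """Count customer sentiment labels and collect category labels."""
    transcript = analysis.get("Transcript", [])
    customer_sentiments = Counter(
        turn["Sentiment"]
        for turn in transcript
        if turn.get("ParticipantId") == "CUSTOMER"
    )
    return {
        "categories": analysis.get("Categories", []),
        "customer_sentiment_counts": dict(customer_sentiments),
        "turns": len(transcript),
    }


summary = summarize(json.loads(SAMPLE_OUTPUT))
print(summary)
```

In practice the file would be read from S3 rather than from an inline string, and summaries like this one could be loaded into a business intelligence tool or used as features for a custom model.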

Get started

Get started with Contact Lens in your Amazon Connect contact center instance today or reach out to your AWS sales partner for more information. Contact Lens is available in the US West (Oregon), US East (N. Virginia), Europe (London), Europe (Frankfurt), Asia Pacific (Singapore), Asia Pacific (Tokyo), and Asia Pacific (Sydney) regions. As a part of the AWS Free Tier, you can get started with Amazon Connect free for twelve months. Contact Lens for Amazon Connect offers a free tier of 90 minutes of audio calls per month, the same as the Amazon Connect free tier usage.