AWS for M&E Blog

Part 2: How to compete with broadcast latency using current adaptive bitrate technologies

Part 1: Defining and Measuring Latency

Part 2: Recommended Optimizations for Encoding, Packaging, and CDN Delivery (this post)

Part 3: Recommended Optimizations for Video Players

Part 4: Reference Architectures and Tests Results

Part 2: Recommended Optimizations for Encoding, Packaging, and CDN Delivery

In the first part of this blog series, we covered why latency is a problem for OTT streaming and how to measure how much each workflow step contributes to end-to-end latency. Let’s start our journey into the possible optimizations, beginning with the encoding, packaging, and CDN delivery steps. By adjusting the parameters described below, you will be able to prepare a well-optimized low latency live stream for your viewers.

Encoding

We already had a glimpse of how to optimize the capture latency with AWS Elemental Live by using the Low Latency Mode input parameter. Be aware that this parameter may result in more dropped audio packets on input timestamp discontinuities. The Input Buffer Size parameter could also be used to reduce the number of frames buffered at the input stage to the minimum, although at the risk of dropping some frames.

In the video encoding section, several parameters can influence latency:

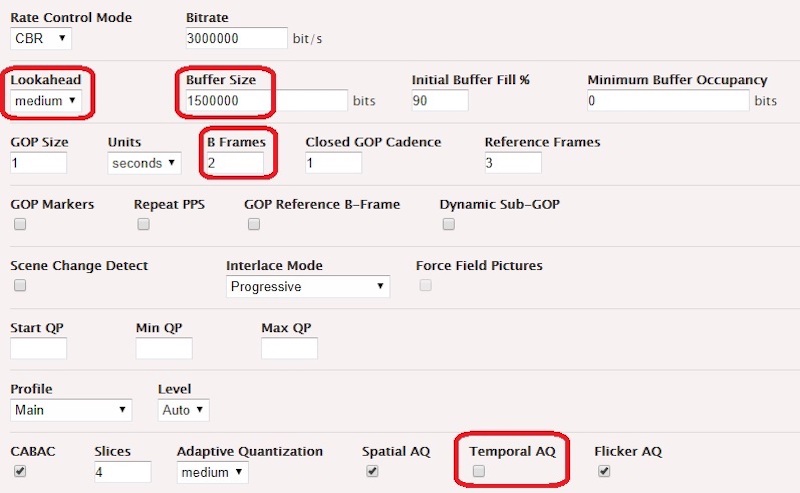

- Lookahead: Setting it to Low improves latency but reduces output quality for demanding scenes. Low works well mainly when there are few or no scene changes.

- GOP parameters: It is recommended to use closed GOPs of 1 second duration, which can later be repackaged into 2 second segments if needed. Small GOPs without B Frames generally reduce video quality.

- B Frames: Each B Frame added to a GOP is likely to increase encoding latency by a few frames, as the encoding engine has to look ahead to the next P Frame to construct the B Frame. Using zero B Frames eliminates this latency impact, but it requires raising the encoding bitrate to keep the same quality level as when using B Frames.

- Temporal Adaptive Quantization: Turning it off will reduce latency by a few frames. The other Adaptive Quantization options don’t impact latency.

- Encoder Buffer Size: The default value is twice the video bitrate in bits, which generates a 2 second delay on the decoder. If set to 1x bitrate, it generates a 1 second delay and slightly impacts the video quality. For a very aggressive low latency target, the buffer size could be set to half of the bitrate, which results in half a GOP (1/2 second) of delay. Video quality suffers more at this setting, and below 1/2x bitrate the impact becomes severe.

- Video Preprocessors: If a Deinterlacer processor is required, the Low Latency interpolation algorithm should be selected.
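The Encoder Buffer Size arithmetic above can be sketched in a few lines. This is only an illustration of the relationship described in the bullet (delay is roughly buffer size divided by bitrate); the function names and the 5 Mbps rendition are assumptions made for the example, not encoder settings.

```python
def buffer_delay_seconds(buffer_size_bits: int, bitrate_bps: int) -> float:
    """Approximate decoder-side delay introduced by the encoder buffer."""
    if bitrate_bps <= 0:
        raise ValueError("bitrate_bps must be positive")
    return buffer_size_bits / bitrate_bps


def buffer_size_for_delay(bitrate_bps: int, target_delay_s: float) -> int:
    """Buffer size in bits that yields roughly the target delay."""
    if target_delay_s <= 0:
        raise ValueError("target_delay_s must be positive")
    return int(bitrate_bps * target_delay_s)


# Hypothetical 5 Mbps rendition, evaluated at 2x, 1x and 1/2x bitrate.
bitrate = 5_000_000
for factor in (2.0, 1.0, 0.5):
    size = buffer_size_for_delay(bitrate, factor)
    delay = buffer_delay_seconds(size, bitrate)
    print(f"{factor}x bitrate -> {size} bits -> {delay:.1f} s of delay")
```

The same calculation applies to each rendition of the encoding ladder, since the buffer size is expressed relative to that rendition's bitrate.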

With AWS Elemental MediaLive, the same settings are available except for the deinterlacer algorithm: the only related option exposed is the Progressive scan type in the Stream Settings > Frame Rate section. For the encoding ladder, it is recommended to add a lightweight stream at the lower end so that mobile devices under difficult network conditions can still access the stream even though segments are shorter than usual.

Packaging

With almost every player, the segment duration has a mechanical effect on latency. With 1 second segments, it’s possible to reach 5 seconds of latency. With 2 second segments, that is nearly impossible, and the result will always be between 7 and 10 seconds unless you apply serious optimizations to the player settings. 1 second segments automatically result in thin player buffers, so the playback session will be less robust unless the player provides specific mechanisms to quickly recover from a dry buffer.

It’s very important to choose the right segment size for your requirements. If you don’t absolutely need to reach a latency below 7 seconds, don’t use 1 second segments, but rather 2 second segments, which are fine for a latency between 7 and 10 seconds. If you are using 2 second segments for your players, it’s also beneficial to:

- Raise the GOP length from 1 to 2 seconds, so that you can increase the encoding quality at constant bitrate.

- Use 2 second segments when ingesting on your origin (if you use HLS as the ingest format), as it reduces stress on the origin storage and packaging computation.

The Index Duration (the length of your DVR window) also impacts latency. Some players will buffer more when presented with a one-hour DVR window in the playlist/manifest than when presented with a playlist/manifest that references only the last three segments.

With AWS Elemental Live, you will need to lower the HLS/DASH publishing Retry Interval, as network transfer errors need to be corrected faster than usual. Set it to 1 second for 2 second segments, and to zero seconds for 1 second segments.
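The packaging guidance in this section can be summarized as a small decision helper. This is a minimal sketch that only encodes the thresholds given above (1 second segments below a 7 second target, 2 second segments otherwise); `PackagingPlan` and `plan_for_target_latency` are hypothetical names and do not correspond to any AWS Elemental API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PackagingPlan:
    segment_seconds: int         # segment duration delivered to players
    gop_seconds: int             # GOP length used by the encoder
    retry_interval_seconds: int  # HLS/DASH publishing Retry Interval


def plan_for_target_latency(target_latency_s: float) -> PackagingPlan:
    """Pick segment, GOP and retry settings from the target end-to-end latency."""
    if target_latency_s <= 0:
        raise ValueError("target_latency_s must be positive")
    if target_latency_s < 7:
        # Below 7 seconds, 1 second segments are needed, with a zero retry interval.
        return PackagingPlan(segment_seconds=1, gop_seconds=1, retry_interval_seconds=0)
    # Otherwise 2 second segments are sufficient, and the GOP can be raised to
    # 2 seconds to improve quality at constant bitrate.
    return PackagingPlan(segment_seconds=2, gop_seconds=2, retry_interval_seconds=1)


print(plan_for_target_latency(5))
print(plan_for_target_latency(8))
```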

CDN Delivery

There is not much that you can change in your CDN configurations in order to reduce latency. Amazon CloudFront doesn’t add artificial buffers along the chain. If you are using other CDNs combined with your AWS Elemental origin service, you may find that there is a CDN-intrinsic buffer intentionally generated by the CDN architecture. In that case, ask your CDN Professional Services to disable it in your delivery configuration if possible.

For HLS playlists and DASH manifests, you should check that your CDN configuration allows them to be served in gzipped format, if the player supports this kind of compression. This speeds up loading when long DVR windows are used in HLS or DASH/SegmentTimeline.
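To get a feel for why compression helps with long DVR windows, the sketch below builds a synthetic HLS media playlist for a one-hour window of 2 second segments and compresses it with Python's standard `gzip` module. The playlist content and the `segment_<n>.ts` naming are made up for illustration; real playlists will compress differently.

```python
import gzip


def build_media_playlist(window_seconds: int, segment_seconds: int,
                         first_sequence: int = 0) -> str:
    """Build a synthetic HLS media playlist covering the given DVR window."""
    count = window_seconds // segment_seconds
    lines = [
        "#EXTM3U",
        "#EXT-X-VERSION:3",
        f"#EXT-X-TARGETDURATION:{segment_seconds}",
        f"#EXT-X-MEDIA-SEQUENCE:{first_sequence}",
    ]
    for i in range(first_sequence, first_sequence + count):
        lines.append(f"#EXTINF:{segment_seconds:.3f},")
        lines.append(f"segment_{i}.ts")  # hypothetical segment naming
    return "\n".join(lines) + "\n"


# One-hour DVR window with 2 second segments: 1800 playlist entries.
playlist = build_media_playlist(3600, 2).encode("utf-8")
compressed = gzip.compress(playlist)
print(f"raw: {len(playlist)} bytes, gzipped: {len(compressed)} bytes")
```

Because a live playlist is re-downloaded at every refresh, any size reduction is repeated for each player on each update.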

As players in low latency mode are generally aggressive in their requests relative to the live edge, there’s a high chance that they will request segments that don’t exist yet, resulting in 404s at the edge. Each CDN has its own default TTL for caching these 404s, and this default is generally too long for low latency streams, so you need to adjust it. For an Amazon CloudFront distribution, you can set it to 1 second in the ‘Error Pages’ section of the configuration panel.
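If you manage CloudFront through the API rather than the console, the same change can be expressed on the distribution configuration. The sketch below is a pure helper that edits a `DistributionConfig`-shaped dictionary, using the `CustomErrorResponses`, `ErrorCode`, and `ErrorCachingMinTTL` fields of the CloudFront API; the helper name `with_short_404_ttl` is an assumption made for this example.

```python
import copy


def with_short_404_ttl(distribution_config: dict, ttl_seconds: int = 1) -> dict:
    """Return a copy of a CloudFront DistributionConfig with a short 404 caching TTL."""
    config = copy.deepcopy(distribution_config)
    responses = config.get("CustomErrorResponses") or {}
    items = [dict(item) for item in responses.get("Items") or []]
    for item in items:
        if item.get("ErrorCode") == 404:
            # A 404 rule already exists: only shorten its caching TTL.
            item["ErrorCachingMinTTL"] = ttl_seconds
            break
    else:
        # No 404 rule yet: add one that only controls the caching TTL.
        items.append({"ErrorCode": 404, "ErrorCachingMinTTL": ttl_seconds})
    config["CustomErrorResponses"] = {"Quantity": len(items), "Items": items}
    return config


example = {"CustomErrorResponses": {"Quantity": 0}}
print(with_short_404_ttl(example)["CustomErrorResponses"])
```

With boto3, one plausible way to apply it is to read the current configuration and its `ETag` with `get_distribution_config`, pass the `DistributionConfig` through this helper, and send the result back with `update_distribution` using the `ETag` as `IfMatch`. Test this on a non-production distribution first.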

Unrelated to low latency, but still important for your workflow: You need to whitelist the ‘Origin’ incoming header so that your CDN forwards it to the origin, as it is the key trigger for all downstream CORS policies returned by your origin. For Amazon CloudFront, you can set this in the ‘Behaviors’ section of the configuration panel.

Finally, if the HLS playlists’ or DASH manifests’ TTLs are set on the CDN side, you should verify that they are shorter than or equal to your HLS segmentation interval or DASH manifest update interval.
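The TTL rule above is simple enough to check automatically. The following sketch assumes you can export your CDN cache rules as a mapping from path pattern to TTL in seconds; that mapping, the patterns in it, and the function name are hypothetical and only illustrate the comparison.

```python
from typing import Dict, List


def find_stale_manifest_rules(ttl_by_pattern: Dict[str, float],
                              update_interval_s: float) -> List[str]:
    """Return the path patterns whose CDN TTL exceeds the manifest update interval."""
    if update_interval_s <= 0:
        raise ValueError("update_interval_s must be positive")
    return sorted(
        pattern for pattern, ttl in ttl_by_pattern.items()
        if ttl > update_interval_s
    )


# Hypothetical cache rules for a stream using 2 second segments.
rules = {"*.m3u8": 5, "*.mpd": 2}
print(find_stale_manifest_rules(rules, update_interval_s=2))
```

Any pattern returned by the check is a candidate for a shorter TTL, since players could otherwise receive a playlist or manifest that no longer reflects the live edge.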

In our next blog post, we’ll examine what optimization options we can apply to video players.
