AWS for M&E Blog

Real-Time Streaming Protocol now supported in AWS Elemental Live

Streaming media updates

Like many technologies, video streaming protocols have evolved over decades to provide greater performance, reliability, and efficiency. Modern video technology plays an important part in elevating the viewing experience, and the same can be said about how a video architecture is designed and implemented.

In some cases, legacy protocols can be adapted to newer architectures, paving the way for modernization and cloud service adoption. In this post, we discuss how this pertains to the revival of a legacy video network protocol for AWS Elemental Live, an on-premises video encoder and transcoder.

Protocol input support for Real-Time Streaming Protocol (RTSP) is now available in AWS Elemental Live software version 2.22.4 GA (general availability). Through this feature, AWS Elemental Live can ingest RTSP-formatted media streams and provide conversions to broadcast-grade contribution protocols and web-streaming distribution protocols for broader end-user consumption.

Background

The original RTSP 1.0 specification was published in 1998 under Request for Comments (RFC) 2326. In 2016, RTSP 2.0 became the successor under RFC 7826. The original specification describes the protocol as follows:

RTSP is an application-level protocol for control over the delivery of data with real-time properties. RTSP provides an extensible framework to enable controlled, on-demand delivery of real-time data, such as audio and video. Sources of data can include both live data feeds and stored clips. This protocol is intended to control multiple data delivery sessions, provide a means for choosing delivery channels such as UDP [User Datagram Protocol], multicast UDP and TCP [Transmission Control Protocol], and provide a means for choosing delivery mechanisms based upon RTP [Real-time Transport Protocol] (RFC 3550).

Simply put, RTSP acts like a network remote control for multimedia. It works as a bidirectional request and response protocol between client and server devices. For example, a video player acting as a client might request information about multimedia from an internet protocol (IP) camera acting as a server. If a multimedia presentation is available, the client might request a session with the server to control media delivery. Basic network controls include functions like play, pause, or stop/teardown. The integration of RTSP within AWS Elemental Live automates the media delivery functions through the use of an RTSP Uniform Resource Identifier (URI). Similar to a Uniform Resource Locator (URL), a URI is a string of characters that identifies a physical or logical resource such as an IP camera. Setting up the RTSP URI to properly deliver a media stream to AWS Elemental Live is an important configuration step discussed in more detail below.
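To make the request and response exchange described above more concrete, the following is a minimal sketch of how an RTSP 1.0 client might format a request and read the status line of a reply. It only builds and parses text and does not open a network connection. The helper names `build_rtsp_request` and `parse_status_line`, and the `/example/stream` path, are hypothetical and chosen for illustration. They are not part of AWS Elemental Live, which handles this exchange for you.

```python
# Sketch of RTSP 1.0 message framing (RFC 2326). Helper names and the
# "/example/stream" path are illustrative assumptions, not a vendor API.

def build_rtsp_request(method, uri, cseq, headers=None):
    """Return an RTSP 1.0 request as text, terminated by an empty line."""
    lines = [f"{method} {uri} RTSP/1.0", f"CSeq: {cseq}"]
    for name, value in (headers or {}).items():
        lines.append(f"{name}: {value}")
    # Header lines are separated by CRLF; a blank line ends the header block.
    return "\r\n".join(lines) + "\r\n\r\n"


def parse_status_line(line):
    """Split a response status line such as 'RTSP/1.0 200 OK' into its parts."""
    version, code, reason = line.strip().split(" ", 2)
    return version, int(code), reason


uri = "rtsp://192.168.1.5:554/example/stream"  # hypothetical camera path

# A client typically asks what the server offers before starting playback.
describe = build_rtsp_request("DESCRIBE", uri, 2, {"Accept": "application/sdp"})
print(describe)

# The server answers with a status line followed by headers and a body.
print(parse_status_line("RTSP/1.0 200 OK"))
```

In a full session, requests such as SETUP, PLAY, PAUSE, and TEARDOWN follow the same framing, each with an incremented `CSeq` value.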

Contribution vs. distribution in streaming media workflows

Traditional video workflows that rely on RTSP for the complete delivery of streaming media are typically more restrictive and less scalable than workflows built on HTTP Live Streaming (HLS) or Dynamic Adaptive Streaming over HTTP (DASH). However, RTSP can be adapted to work with these newer HTTP streaming protocols under a different architectural approach.

Originally, RTSP was designed to transport video from source to destination using a single protocol. In contrast, modern video delivery systems let the architecture separate formats and protocols by contribution or distribution type. This kind of separation optimizes the flow of media from end to end by using the strengths of different protocols for different applications.

Contribution typically refers to the point-to-point transport of content between systems or locations (system to system). The contribution side of a video delivery system is commonly focused on the front end of an architecture, such as the media supply chain. Distribution workflows are associated with the back end of an architecture, which might involve video transcoding to format content for end-user devices (system to user).

RTSP fits well in a contribution workflow, whereas the distribution workflow might be better served by newer streaming formats such as HLS or DASH. Although this style of architecture isn’t always implemented, the contribution-to-distribution model remains popular among broadcasters because it offers the flexibility to tune media transport and delivery options for quality, reliability, latency, and accessibility. The tables below separate many popular protocols by contribution and distribution workflows.

Table 1: Contribution Protocols

Table 2: Distribution Protocols

Architecture considerations

Why would you use RTSP as a contribution protocol when there are so many other mainstream contribution protocols available? The answer has less to do with the sophistication of other contribution protocols and more to do with the number of new and existing devices that generate RTSP-formatted media streams.

The most common types of devices that use RTSP are IP cameras or pan-tilt-zoom (PTZ) cameras. The primary use cases for these devices involve remote monitoring, surveillance systems, closed-circuit television (CCTV), and live event production (for example, houses of worship and concert venues). Many IP camera manufacturers have standardized on the use of RTSP to deliver streaming media from cameras to software or hardware decoders.

Although RTSP performs quite well in point-to-point applications, it isn’t optimized for scalable, distributed video delivery. Additionally, end-user consumption has shifted significantly over the years from legacy thick-client applications to web browsers and mobile devices.

Taking these challenges into account, AWS Elemental Live can now ingest RTSP streams and output a variety of modern contribution and distribution protocols to fit different architectural models. The solution is also poised to use a variety of AWS services—such as AWS Media Services and Amazon CloudFront, a content delivery network service—to transport, process, and deliver media from the cloud.

The architectural diagrams in Figures 1–3 show some examples of how you can employ IP cameras that use RTSP alongside AWS Elemental Live to set up a contribution- or distribution-style workflow, or simply to perform protocol adaptation with other broadcast-grade video systems.

Figure 1: Local RTSP-to-RTP contribution with cloud HLS transcoding, packaging, and delivery

Figure 2: Local RTSP contribution to HLS transcoding with cloud packaging and delivery

Figure 3: Local RTSP-to-broadcast contribution adaptation

Implementation and setup

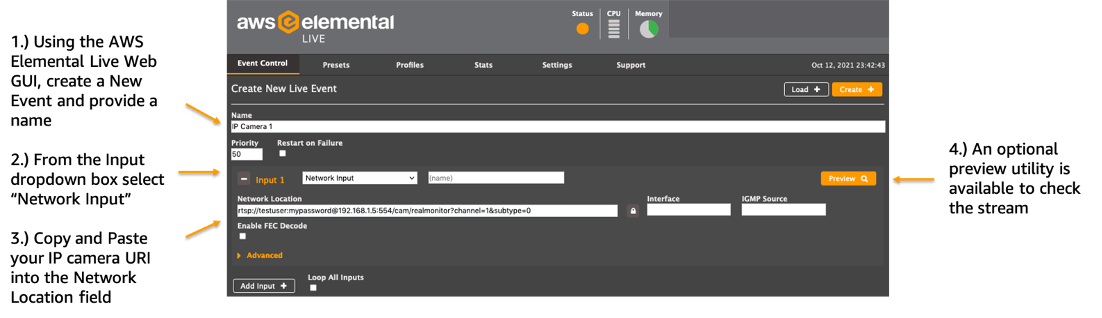

Setting up AWS Elemental Live to ingest RTSP streams is straightforward once you understand the semantics and syntax of the URI. The RTSP URI typically involves a prefix containing conventional syntax and a suffix containing manufacturer-specific syntax. The example in Figure 4 shows an RTSP URI from a third-party IP camera.

(Note: Exact RTSP URI syntax varies between device manufacturers. Generally, the prefix remains consistent, but the suffix varies based on device make, model, and streaming capabilities.)

Figure 4: RTSP URI

In Figure 4, the URI not only targets a specific IP camera but also provides a set of instructions for the type of media delivered to a client. These instructions can include compression formats, video resolution, frame rate, bit rate, and other stream attributes.

Often, IP cameras have the ability to serve streams to more than one channel or session at a time. The IP camera used in this exercise can simulcast up to three channels concurrently. It also lets each channel operate as a preconfigured high-resolution stream or a low-resolution stream as indicated by the subtype value shown.

Preset channel configurations are typically provisioned during initial IP camera setup. A URI is then used to request one or more stored channel configurations to facilitate media delivery. In Figure 4, the instructions are set to authenticate with IP camera 192.168.1.5 and request video over RTSP port 554 using channel 1 and subtype 0 configurations. This results in a single-channel high-resolution 4K High Efficiency Video Coding (HEVC) stream with a constant bit rate of 4 Mbps.
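As a rough illustration of the prefix and suffix structure described above, the following sketch splits an RTSP URI into its conventional parts (credentials, host, port) and its manufacturer-specific parts (path and options) using Python's standard `urllib.parse` module. The URI itself is an assumption: the credentials and the `/example/stream` path are placeholders, and passing `channel` and `subtype` as query parameters is one possible layout rather than the exact syntax of any particular camera. The function name `describe_rtsp_uri` is also hypothetical.

```python
from urllib.parse import urlsplit, parse_qs


def describe_rtsp_uri(uri):
    """Break an RTSP URI into conventional and manufacturer-specific parts."""
    parts = urlsplit(uri)
    if parts.scheme != "rtsp":
        raise ValueError(f"expected an rtsp:// URI, got scheme {parts.scheme!r}")
    query = parse_qs(parts.query)
    return {
        # Conventional prefix: who to authenticate as and where to connect.
        "username": parts.username,
        "has_password": parts.password is not None,
        "host": parts.hostname,
        "port": parts.port or 554,  # 554 is the default RTSP port
        # Manufacturer-specific suffix: path and stream selection options.
        "path": parts.path,
        "options": {name: values[0] for name, values in query.items()},
    }


# Placeholder credentials and path; the query layout is an assumption.
example_uri = "rtsp://user:password@192.168.1.5:554/example/stream?channel=1&subtype=0"
info = describe_rtsp_uri(example_uri)
print(info)
```

Checking a URI this way before entering it in an encoder can help catch a missing port or a mistyped channel value, although the camera's own documentation remains the reference for the suffix syntax.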

Example implementation

The following steps show how to configure AWS Elemental Live to accept a live 4K RTSP stream, transcode it to 1080p HEVC, and provide an output for contribution using RTP.

Step 1: Define input settings

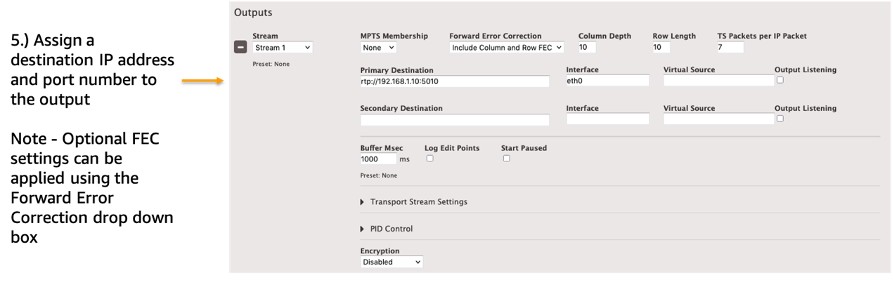

Step 2: Define output settings

Step 3: Define transcoding settings

Summary

RTSP is one of the earliest video network protocols in the industry and is commonly used by IP camera manufacturers to facilitate the delivery of streaming media over IP networks. However, given the limited scalability and point-to-point nature of the protocol, it can be difficult to deliver content to today’s highly mobile and web-centric user environment. By adding support for RTSP inputs, AWS Elemental Live bridges the gap from legacy protocol implementations to newer HTTP streaming protocols such as HLS and DASH.

You can also use this solution alongside AWS Media Services to build channel-based video workflows that connect streaming media from your IP cameras to authorized viewers on nearly any device type or screen size.

Get started with this solution today by logging in to the AWS Management Console—the web interface where you can manage your resources on AWS—and going to “Elemental Appliances & Software” under “Media Services.”