AWS Cloud Operations Blog

Ingesting activity events from non-AWS sources to AWS CloudTrail Lake

AWS CloudTrail Lake is a managed data lake for capturing, storing, accessing, and analyzing user and API activity on AWS for audit, security, and operational purposes. You can aggregate and immutably store your activity events, and run SQL-based queries for search and analysis. In January 2023, AWS announced support for ingesting activity events from non-AWS sources into CloudTrail Lake. This makes CloudTrail Lake a single location for immutable user and API activity events for auditing and security investigations.

Providing secure, quick, and easy access to audit data is critical to analyzing and resolving security incidents and operational issues. However, collecting, storing, and securing this data from various sources can be time-consuming and complex. In this blog post, we demonstrate how to use AWS CloudTrail Lake to solve these challenges. We aggregate log data from outside of AWS, from any source in your hybrid environments (such as in-house or SaaS applications hosted on-premises or in the cloud, virtual machines, or containers), into a single enterprise-wide managed audit store with built-in role-based access and SQL-based query capabilities.

Prerequisites

To follow along with this walkthrough, you must have the following:

• AWS CLI – Install the AWS CLI

• SAM CLI – Install the SAM CLI. The AWS Serverless Application Model Command Line Interface (SAM CLI) is an extension of the AWS CLI that adds functionality for building and testing AWS Lambda applications.

• Python 3.9 or higher – Install Python

• An AWS account with an AWS Identity and Access Management (IAM) role that has sufficient access to provision the required resources.

• A Google Cloud Platform account that has sufficient access to provision the required resources and has access to the Cloud Audit Logs.

Solution Architecture

You can use CloudTrail Lake integrations to log and store user activity data from outside of AWS, from any source in your hybrid environments, such as in-house or SaaS applications hosted on-premises or in the cloud, virtual machines, or containers. After you create an event data store in CloudTrail Lake and create a channel to log activity events, you call the PutAuditEvents API to ingest your application activity into CloudTrail. You can then use CloudTrail Lake to search, query, and analyze the data that is logged from your applications.
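The PutAuditEvents call described above can be sketched in Python. This is a minimal sketch, not the solution's actual Lambda code. It assumes that each event is already a dict in the CloudTrail Lake integration event schema and that a single request accepts at most 100 events (check the CloudTrail Data API reference for the current limit). The helper names `to_audit_event_entries`, `batched`, and `put_all` are hypothetical. The `put_audit_events(auditEvents=..., channelArn=...)` method is the boto3 `cloudtrail-data` client call for this API, and its response lists rejected entries under `failed`.

```python
import json
from typing import Any, Dict, Iterator, List

# Assumed upper bound on events per PutAuditEvents call; confirm it against
# the CloudTrail Data API reference before relying on it.
MAX_EVENTS_PER_REQUEST = 100


def to_audit_event_entries(events: List[Dict[str, Any]]) -> List[Dict[str, str]]:
    """Wrap each eventData dict in the {id, eventData} shape PutAuditEvents takes."""
    return [
        {
            # Assumption: reuse the event's UID as the entry id so that
            # failures in the response can be traced back to the source event.
            "id": event["UID"],
            # eventData is sent as a JSON string, not as a nested object.
            "eventData": json.dumps(event, separators=(",", ":")),
        }
        for event in events
    ]


def batched(entries: List[Dict[str, str]], size: int = MAX_EVENTS_PER_REQUEST) -> Iterator[List[Dict[str, str]]]:
    """Yield consecutive slices of at most `size` entries."""
    for start in range(0, len(entries), size):
        yield entries[start:start + size]


def put_all(client: Any, channel_arn: str, events: List[Dict[str, Any]]) -> List[Dict[str, str]]:
    """Send events in batches and collect the `failed` items from every response.

    `client` is expected to behave like boto3.client("cloudtrail-data").
    """
    failed: List[Dict[str, str]] = []
    for batch in batched(to_audit_event_entries(events)):
        response = client.put_audit_events(auditEvents=batch, channelArn=channel_arn)
        failed.extend(response.get("failed", []))
    return failed
```

Collecting the `failed` items, rather than raising on the first error, lets the caller decide what to do with rejected events, such as forwarding them to a queue.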

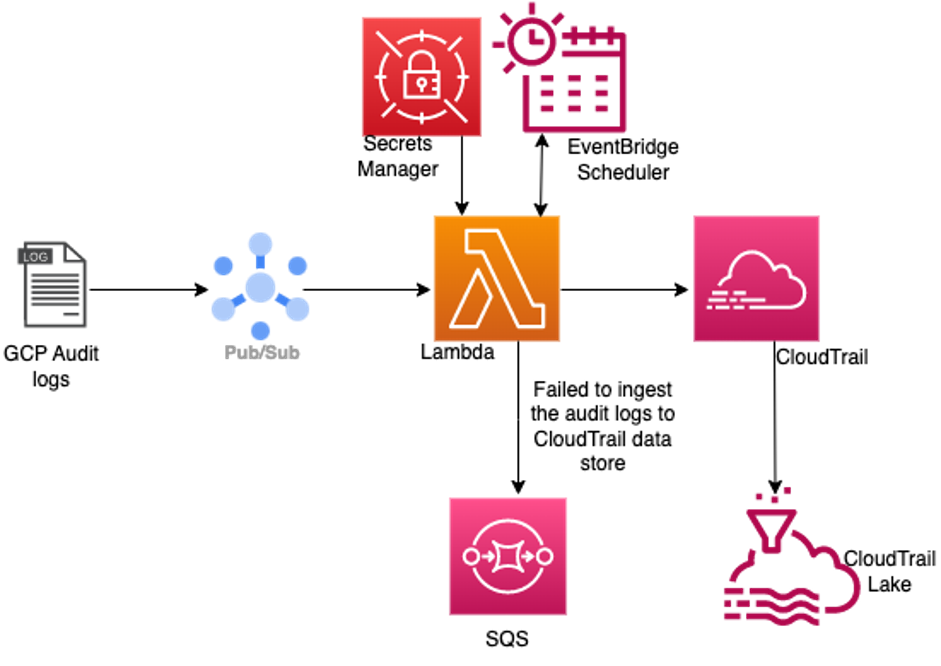

In this workflow, Google Cloud Platform (GCP) Audit Logs are routed to a Pub/Sub topic through a Log Router sink. The GCP service account key is stored in AWS Secrets Manager. An AWS Lambda function runs on a schedule set by Amazon EventBridge Scheduler and uses an IAM role that grants it the required permissions. The function polls the Pub/Sub subscription and ingests the Google Cloud Audit Logs into a CloudTrail event data store. Any Google Cloud Audit Log events that cannot be ingested into CloudTrail Lake, for any reason, are forwarded to an Amazon SQS FIFO (First-In-First-Out) queue. You can then use CloudTrail Lake to run SQL-based queries on your events for a variety of use cases.

Figure 1: Ingesting GCP Audit Logs into CloudTrail Event Data Store to query using CloudTrail Lake
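The failure path in this workflow (events that CloudTrail rejects go to an SQS FIFO queue) can be sketched as follows. This is an illustration under stated assumptions, not the solution's code. It assumes you kept the submitted `{id, eventData}` entries in a dict keyed by `id`, and that a single message group is acceptable. The group name `gcp-audit-logs`, the `errorCode` message attribute, and the helper names are hypothetical. `send_message_batch` with `QueueUrl` and `Entries` is the boto3 SQS call, and FIFO queues require `MessageGroupId` plus either content-based deduplication or an explicit `MessageDeduplicationId`.

```python
import hashlib
from typing import Any, Dict, List

# SendMessageBatch accepts at most 10 entries per call.
SQS_MAX_BATCH = 10


def failed_to_sqs_entries(
    failed: List[Dict[str, str]],
    entries_by_id: Dict[str, Dict[str, str]],
    group_id: str = "gcp-audit-logs",  # hypothetical message group name
) -> List[Dict[str, Any]]:
    """Turn PutAuditEvents `failed` items into SQS FIFO batch entries."""
    sqs_entries = []
    for index, failure in enumerate(failed):
        body = entries_by_id[failure["id"]]["eventData"]
        sqs_entries.append({
            "Id": str(index),  # only needs to be unique within one batch call
            "MessageBody": body,
            # A single group keeps all failed events in one ordered stream.
            "MessageGroupId": group_id,
            # Content hash, so SQS drops resends of the same event that arrive
            # within its deduplication interval.
            "MessageDeduplicationId": hashlib.sha256(body.encode("utf-8")).hexdigest(),
            "MessageAttributes": {
                "errorCode": {"DataType": "String", "StringValue": failure["errorCode"]},
            },
        })
    return sqs_entries


def send_to_queue(sqs_client: Any, queue_url: str, sqs_entries: List[Dict[str, Any]]) -> None:
    """Send entries in chunks of 10; `sqs_client` is expected to be boto3.client("sqs")."""
    for start in range(0, len(sqs_entries), SQS_MAX_BATCH):
        sqs_client.send_message_batch(
            QueueUrl=queue_url, Entries=sqs_entries[start:start + SQS_MAX_BATCH]
        )
```

A single message group preserves ordering but limits throughput. If ordering across all failed events does not matter, a per-source group ID is a reasonable alternative.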

Setup

GCP Setup

1. Follow the instructions to create a Google Pub/Sub topic: Create and manage topics

2. Add Pub/Sub Subscriber and Pub/Sub Publisher roles to the topic by following the steps here: Controlling access through the Google Cloud console

3. Follow the instructions to create a pull subscription for the topic: Create pull subscriptions

4. Create a sink to forward Cloud Audit Logs to the Pub/Sub topic: Create a sink

• Note: To capture only Cloud Audit Logs, enter the following filter expression in the Build inclusion filter field: protoPayload."@type"="type.googleapis.com/google.cloud.audit.AuditLog"

5. Create a service account using Identity and Access Management (IAM) by following the steps here: Create service accounts

6. Generate a service account key by following the instructions here: Create and delete service account keys

• Note: You will need to store the generated JSON-formatted credentials in an AWS Secrets Manager secret by following the steps here: Create an AWS Secrets Manager secret

7. Verify that GCP audit logs are being generated to confirm that the GCP setup completed successfully.
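Once the sink is in place, each message on the pull subscription carries one Cloud Audit Log `LogEntry` as JSON. The Python sketch below shows how such a message could be decoded, checked against the same `@type` used in the sink's inclusion filter, and mapped to a CloudTrail Lake `eventData` dict. It rests on several assumptions. The input is the base64 `message.data` field of a Pub/Sub REST pull response (the google-cloud-pubsub client library hands you already-decoded bytes instead). Timestamps are Z-normalized RFC 3339. The field mapping (`principalEmail` to `principalId`, `serviceName` to `eventSource`, `methodName` to `eventName`, `insertId` to `UID`) is a hypothetical choice, not necessarily what the solution's Lambda function does. The target field names follow the CloudTrail Lake integrations event schema as we understand it.

```python
import base64
import json
from datetime import datetime
from typing import Any, Dict

AUDIT_LOG_TYPE = "type.googleapis.com/google.cloud.audit.AuditLog"


def decode_pubsub_data(data_b64: str) -> Dict[str, Any]:
    """Decode the base64 `message.data` field of a Pub/Sub REST pull response."""
    return json.loads(base64.b64decode(data_b64))


def is_cloud_audit_log(entry: Dict[str, Any]) -> bool:
    """Local mirror of the sink's inclusion filter on protoPayload.@type."""
    return entry.get("protoPayload", {}).get("@type") == AUDIT_LOG_TYPE


def to_cloudtrail_time(rfc3339: str) -> str:
    """Truncate a Z-normalized RFC 3339 timestamp to whole seconds in UTC."""
    parsed = datetime.strptime(rfc3339[:19], "%Y-%m-%dT%H:%M:%S")
    return parsed.strftime("%Y-%m-%dT%H:%M:%SZ")


def to_event_data(entry: Dict[str, Any], user_type: str, account_id: str) -> Dict[str, Any]:
    """Map one Cloud Audit Log entry to an eventData dict (hypothetical mapping)."""
    payload = entry["protoPayload"]
    auth = payload.get("authenticationInfo", {})
    meta = payload.get("requestMetadata", {})
    event: Dict[str, Any] = {
        "version": "1.0",
        "userIdentity": {"type": user_type, "principalId": auth.get("principalEmail", "unknown")},
        "eventSource": payload.get("serviceName", "unknown"),
        "eventName": payload.get("methodName", "unknown"),
        "eventTime": to_cloudtrail_time(entry["timestamp"]),
        "UID": entry["insertId"],
        "recipientAccountId": account_id,
    }
    # Optional fields are copied only when Google supplied them.
    if "callerIp" in meta:
        event["sourceIPAddress"] = meta["callerIp"]
    if "callerSuppliedUserAgent" in meta:
        event["userAgent"] = meta["callerSuppliedUserAgent"]
    return event
```

The `user_type` argument corresponds to the UserType stack parameter described later, which sets `userIdentity.type` for every ingested event.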

AWS Setup

1. Enable CloudTrail Lake by following the steps in this blog post

2. Create an AWS Secrets Manager secret to store the service account key generated in GCP setup.
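At run time, the Lambda function has to read the service account key back out of Secrets Manager. The sketch below shows one way to do that. It is a minimal sketch, assuming the whole key file was stored as the secret's string value. The helper name `load_service_account_info` and the subset of keys it checks are our own choices. `get_secret_value(SecretId=...)` and its `SecretString` field are the boto3 Secrets Manager API, and `type`, `project_id`, `private_key`, and `client_email` are fields of a Google service account key file.

```python
import json
from typing import Any, Dict

# A subset of the fields found in a Google service account key file.
REQUIRED_KEYS = ("type", "project_id", "private_key", "client_email")


def load_service_account_info(secrets_client: Any, secret_name: str) -> Dict[str, Any]:
    """Fetch and sanity-check the service account JSON stored in Secrets Manager.

    `secrets_client` is expected to behave like boto3.client("secretsmanager").
    """
    response = secrets_client.get_secret_value(SecretId=secret_name)
    info = json.loads(response["SecretString"])
    missing = [key for key in REQUIRED_KEYS if key not in info]
    if missing:
        raise ValueError(f"secret {secret_name!r} is missing keys: {', '.join(missing)}")
    if info["type"] != "service_account":
        raise ValueError(f"expected a service_account key, got {info['type']!r}")
    return info
```

The returned dict can be passed to `google.oauth2.service_account.Credentials.from_service_account_info` from the google-auth library to authenticate the Pub/Sub client. Validating the keys up front turns a misconfigured secret into a clear error message instead of an opaque authentication failure.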

Deploying the Solution

1. Use git to clone this repository to your workspace area. SAM CLI should be configured with AWS credentials from the AWS account where you plan to deploy the example. Run the following commands in your shell

2. Provide values for the CloudFormation stack parameters.

a. CloudTrailEventDataStoreArn (Optional) – ARN of the event data store into which the Google Cloud Audit Logs will be ingested. If no ARN is provided, a new event data store is created.

Note: There is a quota on the number of event data stores that you can create per Region. See Quotas in AWS CloudTrail for more details. Additionally, after you delete an event data store, it remains in the PENDING_DELETION state for seven days before it is permanently deleted, and it continues to count against your quota during that time. See Manage event data store lifecycles for more details.

b. CloudTrailEventRetentionPeriod – The number of days to retain events ingested into CloudTrail. The minimum is 7 days and the maximum is 2,557 days. Defaults to 7 days.

c. GCPPubSubProjectName – The project ID or project number, available from the Google Cloud console, to which the Pub/Sub subscription belongs.

d. GCPPubSubSubscriptionName – The name of the pull subscription created for the Pub/Sub topic where Cloud Audit Logs are delivered.

e. GCPCredentialsSecretName – The name of the AWS Secrets Manager secret that contains the Google service account key in JSON format. Note that this name is stored as a Lambda function environment variable and is visible in plaintext when viewing the function properties.

f. MaxMessagesPerRead – Maximum number of messages to read when polling the Pub/Sub subscription. The minimum is 1 and the maximum is 10,000. Defaults to 500 messages.

g. ReadTimeout – The maximum time in seconds to wait for a message when polling the Pub/Sub subscription. Defaults to 10 seconds.

h. UserType – The value assigned to the userIdentity.type field for all events ingested into CloudTrail. Defaults to “GoogleCloudUser”.

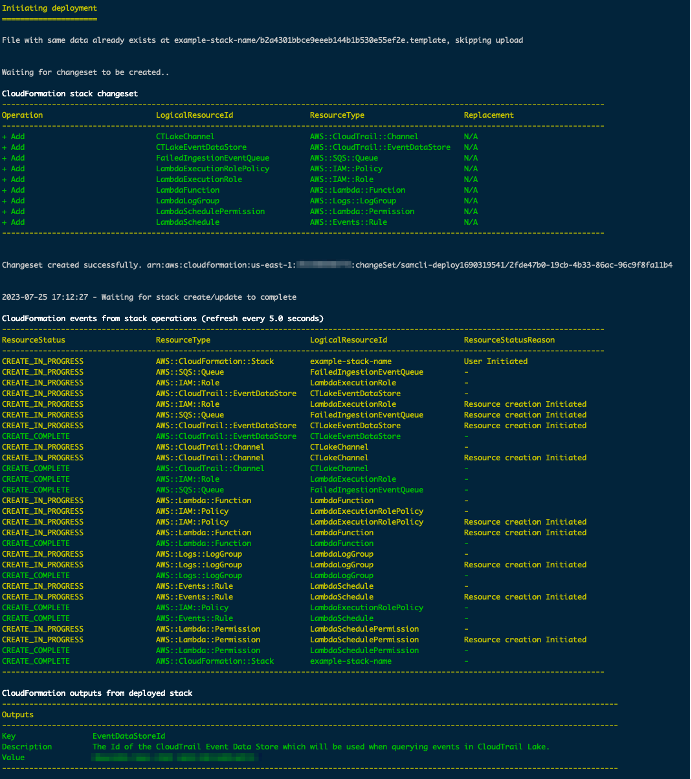

3. After SAM has successfully deployed the example, check the outputs and note the EventDataStoreId value that is returned. You need this ID to query the CloudTrail Lake event data store.

Figure 2: Successful SAM deployment output

4. The Lambda function has a scheduled trigger attached that invokes the function every 5 minutes. After the CloudFormation stack is deployed successfully, the function should be invoked automatically for the first time within 5 minutes.

Either invoke the function manually, or verify that it has been invoked at least once by navigating to the AWS Lambda Console and looking at the Invocations graphs on the Monitoring tab. For more details, see Monitoring functions on the Lambda console.

5. After the Lambda function has been invoked, you can follow the steps below to analyze your Google Cloud Audit Logs using the CloudTrail Lake SQL-based sample queries.

Note that CloudTrail typically delivers events within an average of about 5 minutes of an API call, although this time is not guaranteed. Therefore, after the Lambda function is invoked, there may be an additional delay of about 5 minutes before the events can be queried in CloudTrail Lake.

Note: If the Lambda function encounters any issues while ingesting audit events from Google Cloud Platform into CloudTrail, you can identify the root cause by inspecting the function's CloudWatch logs. For more information, see Accessing Amazon CloudWatch logs for AWS Lambda.
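The stack parameters in step 2 come with documented ranges and defaults. The hedged Python sketch below checks those values before deployment and renders them as the space-separated `Key=Value` pairs accepted by `sam deploy --parameter-overrides`. The function name, the rule that values must not contain whitespace, and the requirement that ReadTimeout be positive are our assumptions. The ranges and defaults are the ones listed for the parameters above.

```python
from typing import Dict, Optional


def build_parameter_overrides(
    project: str,
    subscription: str,
    secret_name: str,
    event_data_store_arn: Optional[str] = None,
    retention_days: int = 7,
    max_messages: int = 500,
    read_timeout: int = 10,
    user_type: str = "GoogleCloudUser",
) -> str:
    """Validate stack parameters and render them as Key=Value pairs."""
    # Ranges and defaults mirror the parameter descriptions in this post.
    if not 7 <= retention_days <= 2557:
        raise ValueError("CloudTrailEventRetentionPeriod must be 7-2557 days")
    if not 1 <= max_messages <= 10000:
        raise ValueError("MaxMessagesPerRead must be 1-10000")
    if read_timeout <= 0:  # assumption: a zero or negative wait makes no sense
        raise ValueError("ReadTimeout must be a positive number of seconds")
    params: Dict[str, str] = {
        "CloudTrailEventRetentionPeriod": str(retention_days),
        "GCPPubSubProjectName": project,
        "GCPPubSubSubscriptionName": subscription,
        "GCPCredentialsSecretName": secret_name,
        "MaxMessagesPerRead": str(max_messages),
        "ReadTimeout": str(read_timeout),
        "UserType": user_type,
    }
    # Omitting the ARN lets the template create a new event data store.
    if event_data_store_arn:
        params["CloudTrailEventDataStoreArn"] = event_data_store_arn
    for key, value in params.items():
        if not value or any(ch.isspace() for ch in value):
            raise ValueError(f"{key} must be non-empty and contain no whitespace")
    return " ".join(f"{key}={value}" for key, value in params.items())
```

You could pass the resulting string to `sam deploy --parameter-overrides "..."` instead of answering the prompts of `sam deploy --guided`.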

Validate the integration

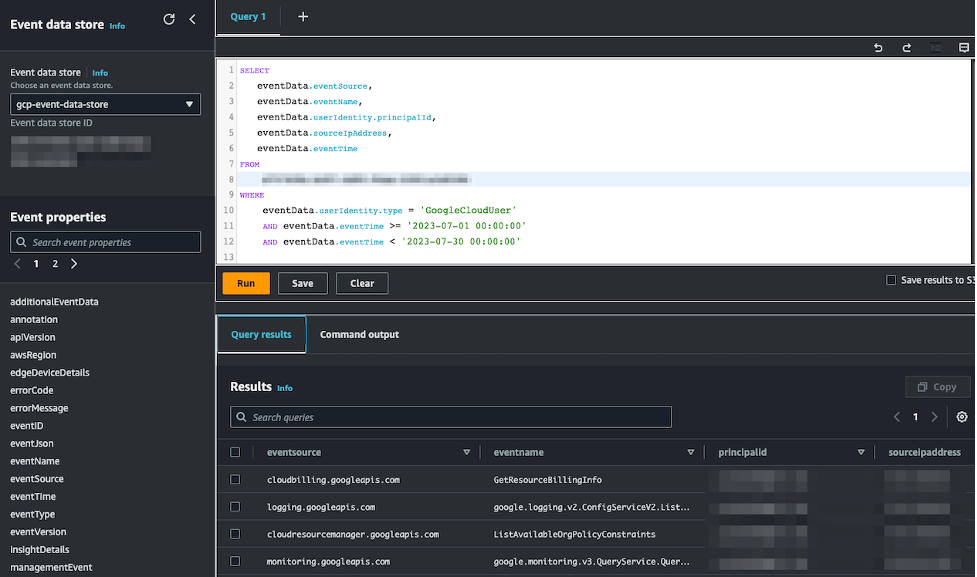

To verify that the GCP audit logs are available in your AWS CloudTrail Lake event data store, run the sample query below against it by following these instructions: Run a query and save query results.

Make sure you replace <event data store id> with the ID of the event data store, which you can find in the Outputs returned after a successful SAM deployment.

Also replace GoogleCloudUser with the value that you entered for the UserType parameter supplied to the SAM CLI.

Finally, update the dates so that the query covers a period after the Lambda function was deployed.

Figure 3: To verify audit logs in AWS CloudTrail Lake

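The Python sketch below builds a query of that shape and runs it with the three substitutions described above: event data store ID, UserType value, and date range. It then polls for results with boto3's `start_query` and `get_query_results`. The selected columns are an assumption based on the CloudTrail Lake integration event schema, where ingested fields sit under `eventData`. They are not copied from the sample query. The helper names are hypothetical, and only the first page of results is returned.

```python
import re
import time
from datetime import datetime
from typing import Any, Dict, List

TERMINAL_STATES = {"FINISHED", "FAILED", "CANCELLED", "TIMED_OUT"}


def build_verification_query(event_data_store_id: str, user_type: str, start: datetime, end: datetime) -> str:
    """Build a CloudTrail Lake SQL query for events ingested with a given userIdentity.type."""
    # The ID is used unquoted in FROM, so allow only letters, digits, and hyphens.
    if not re.fullmatch(r"[A-Za-z0-9-]+", event_data_store_id):
        raise ValueError("unexpected characters in event data store ID")
    user_type_literal = user_type.replace("'", "''")  # escape SQL single quotes
    fmt = "%Y-%m-%d %H:%M:%S"
    return (
        "SELECT eventTime, eventData.eventSource, eventData.eventName, "
        "eventData.userIdentity.principalId "
        f"FROM {event_data_store_id} "
        f"WHERE eventData.userIdentity.type = '{user_type_literal}' "
        f"AND eventTime >= '{start.strftime(fmt)}' AND eventTime < '{end.strftime(fmt)}' "
        "ORDER BY eventTime DESC"
    )


def run_query(client: Any, event_data_store_id: str, statement: str, poll_seconds: float = 5.0) -> List[List[Dict[str, str]]]:
    """Start a query and poll until it reaches a terminal state.

    `client` is expected to behave like boto3.client("cloudtrail").
    """
    query_id = client.start_query(QueryStatement=statement)["QueryId"]
    while True:
        result = client.get_query_results(EventDataStore=event_data_store_id, QueryId=query_id)
        status = result["QueryStatus"]
        if status in TERMINAL_STATES:
            break
        time.sleep(poll_seconds)
    if status != "FINISHED":
        raise RuntimeError(f"query {query_id} ended as {status}: {result.get('ErrorMessage', '')}")
    return result.get("QueryResultRows", [])
```

If the query returns no rows, widen the date range. Also keep in mind the ingestion delay of about 5 minutes noted earlier.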

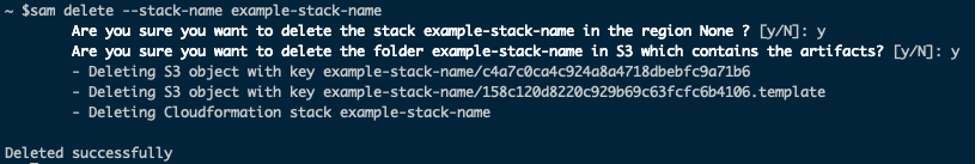

Cleanup

You can use the SAM CLI to delete the deployed resources so that you don't continue to incur charges. To delete the resources, run the following command from your shell, replacing <stack-name> with the stack name you provided to SAM when running sam deploy. Follow the prompts to confirm the resource deletion.

sam delete --stack-name <stack-name>

Figure 4: Cleaning up solution using SAM CLI

You can also delete the CloudFormation stack created by the SAM CLI from the console by following these steps.

Conclusion

In this blog post, we demonstrated how AWS CloudTrail Lake simplifies managing audit logs from disparate sources by consolidating user activity data from Google Cloud Platform. By deploying this integration in your own AWS account, you gain visibility into user security activity in GCP and can search and analyze it with CloudTrail Lake SQL-based queries. Aggregating activity information from diverse applications across hybrid environments is otherwise complex and costly, so this approach can help strengthen your organization's security and compliance posture.

About the authors