AWS Public Sector Blog

The Water Institute of the Gulf runs compute-heavy storm surge and wave simulations on AWS

The Water Institute of the Gulf (Water Institute) runs its storm surge and wave analysis models on Amazon Web Services (AWS), a task that sometimes requires large bursts of compute power. These models are critical in forecasting hurricane storm surge events (like Hurricane Laura in August 2020), evaluating flood risk for Louisiana and other coastal states, helping governments prepare for future conditions, and managing the coast proactively. Unfortunately, these risks are exacerbated by the land loss plaguing Louisiana. The United States Geological Survey calculates that the state loses the equivalent of one football field of land every 100 minutes.

The Water Institute was founded through a collaborative effort involving the State of Louisiana, Senator Mary Landrieu, and the Baton Rouge Area Foundation (BRAF). The Louisiana-based nonprofit is driven by a mission: “Advance science and develop integrated methods to thoughtfully prepare communities for an uncertain future.” The Water Institute links academic, public, and private research partnerships and conducts applied research to better inform the decisions facing communities and industries around the world.

Zach Cobell, a numerical modeler at the Water Institute, says, “We’re trying to provide the best scientific information we can about an uncertain future to stakeholders.” Cobell leads the hurricane storm surge and wave analysis modeling team at the Water Institute.

The Louisiana Coastal Master Plan: Modeling risk using the cloud

Cobell said the changing landscape is visible to the locals and beyond: “If you look at historic satellite imagery of the Louisiana coastline, you can see how over time the land has retreated from where it once was. People here remember being able to look in all directions at marshland, and now much of it is open water.”

One of Cobell’s largest projects is storm surge analysis for Louisiana’s Coastal Master Plan, which is updated by the Coastal Protection and Restoration Authority of Louisiana (CPRA) every six years. The master plan aims to estimate how the landscape of the state’s coastline will change over the next 50 years and what steps can be taken to help.

As sea levels rise and Louisiana faces further land loss, the master planning process spans everything from research to project implementation. The Master Plan team, which consists of public, private, and academic researchers in addition to the Water Institute, seeks to understand what types of projects (such as levees, marsh creation, or Mississippi River diversions) will have the greatest benefits in terms of creating or maintaining wetland area or reducing flood risk to coastal communities.

The storm surge model is an important component of the analysis that informs updates to Louisiana’s Coastal Master Plan. It uses data generated by landscape models, which estimate how the topography, bathymetry, barrier islands, and vegetation will evolve over time. Cobell uses these data as input to the ADCIRC hydrodynamic model, which simulates how wind, waves, tides, and riverine inflows impact water levels during storm events, to understand how future hurricanes might impact the coast differently and what newly constructed projects can do to mitigate those impacts. “With all the models, we’re trying to understand how coastal ecosystems and communities will be impacted in the future, what are thoughtful ways to keep people safe, and how to make strategic investments in the coast to maintain habitats, people’s way of life, and local economies,” said Cobell.

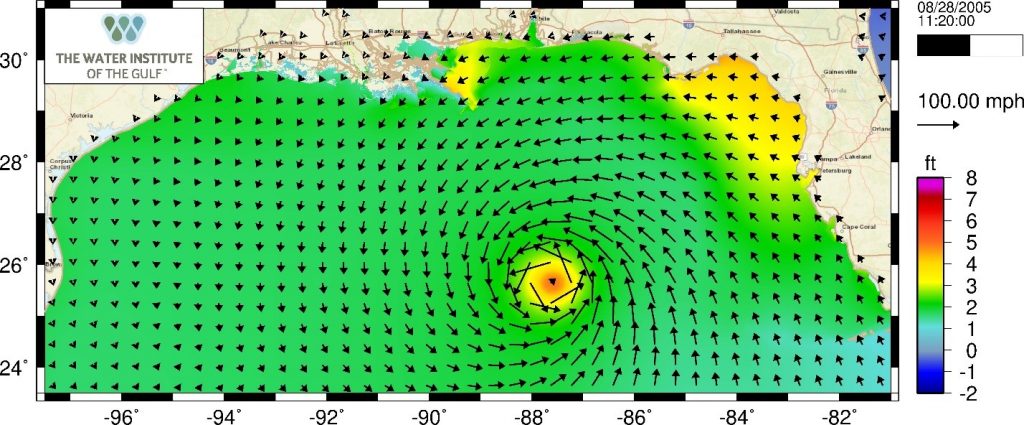

Simulation of Hurricane Katrina as it approaches the Louisiana coast in the ADCIRC model. Colors show the elevation of the ocean water caused by the tides, winds, and atmospheric pressures that occurred during the storm.

The storm surge and wave analysis models must account for a number of variables, such as the influence of sea level rise and different climate scenarios, all of which are simulated on a model that covers the Atlantic Ocean, Gulf of Mexico, and Louisiana. “The storm surge model is computationally demanding because we’re simulating large portions of the Atlantic Ocean, Gulf of Mexico, and coastal Louisiana in great detail to ensure that the hurricanes push surge and waves realistically into the areas that they make landfall. Trying to correctly represent how these large storms are able to move the ocean water takes large amounts of computational power,” according to Cobell.

ADCIRC model domain showing the northern Atlantic Ocean, with insets showing the model depiction of the Mississippi River delta (top right) and the corresponding satellite image (bottom right).

The team has traditionally run these workloads at universities and government supercomputing centers. A consistent challenge was gaining access in rapid succession to many processors at once for long periods of time. To solve this challenge, the team turned to AWS. “With the AWS Cloud, we are able to rethink our workflow. Instead of focusing on maximum speed for individual simulations, we focus on maximum throughput. We can run greater numbers of simulations on fewer cores. For a recent set of simulations, we used 48 processors with AWS for a job which typically runs with 256 at traditional computing centers. This allowed us to run 200 simultaneous simulations whereas we typically would only have four to six running in parallel at traditional computing centers,” said Cobell.
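The throughput trade-off Cobell describes can be checked with simple arithmetic. The sketch below uses only the figures quoted above (48 versus 256 processors per job, 200 versus four to six concurrent jobs). The function name and the assumption that every job holds all of its cores for the whole run are illustrative, not part of the team's workflow.

```python
def total_cores(concurrent_jobs: int, cores_per_job: int) -> int:
    """Cores in use when all jobs run at once (assumes no sharing or idle cores)."""
    return concurrent_jobs * cores_per_job


# Figures quoted in the article.
aws_cores = total_cores(concurrent_jobs=200, cores_per_job=48)
hpc_cores_low = total_cores(concurrent_jobs=4, cores_per_job=256)
hpc_cores_high = total_cores(concurrent_jobs=6, cores_per_job=256)

print(aws_cores, hpc_cores_low, hpc_cores_high)

# Ratio of cores in use at once, AWS versus the traditional center.
print(round(aws_cores / hpc_cores_high, 2), round(aws_cores / hpc_cores_low, 2))
```

Under these assumptions, a single simulation on 48 cores likely takes longer than it would on 256, but with several times as many cores in use at once the batch as a whole can finish sooner.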

Flood risk due to storm surge is based on probabilistic approaches; running hundreds of individual storms is critical to determining the probability that water levels will exceed some threshold. For storm surge modeling to support Louisiana’s Coastal Master Plan, the team needed to run 645 simulations of potential hurricane events. Running this full suite of simulations might have taken approximately three months at a traditional supercomputing center, depending on availability of the machine and how quickly the simulations could gain priority in the queue, competing with jobs from other users. With AWS, the team completed all 645 simulations in three and a half days.
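To illustrate why many storms are needed, here is a minimal sketch of one common way to turn a set of simulated peak water levels into an exceedance probability. It is not the Water Institute's method: the Poisson arrival assumption, the function name, and all peak levels and storm rates below are hypothetical values chosen for illustration.

```python
import math


def annual_exceedance_probability(peaks_m, annual_rates, threshold_m):
    """Probability that at least one storm in a year exceeds threshold_m.

    Assumes each simulated storm occurs as an independent Poisson event
    with the given annual rate (an illustrative assumption).
    """
    rate = sum(r for p, r in zip(peaks_m, annual_rates) if p > threshold_m)
    return 1.0 - math.exp(-rate)


# Hypothetical peak water levels (meters) at one location, one per simulated
# storm, with a hypothetical annual occurrence rate for each storm.
peaks_m = [0.8, 1.5, 2.4, 3.1, 4.0]
annual_rates = [0.05, 0.03, 0.02, 0.01, 0.005]

aep = annual_exceedance_probability(peaks_m, annual_rates, threshold_m=2.0)
print(f"Annual exceedance probability at 2.0 m: {aep:.4f}")
```

With only a handful of storms the estimate is coarse; a larger suite of simulations fills in the range of water levels between them, which is one reason a full set of runs matters.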

“Finishing in a fraction of the time, in days, was really beneficial. We had a tight deadline. Instantaneous, on-demand access to compute power was the only reason we were able to get this done on time,” said Cobell. To complete their models, the team used Amazon Elastic Compute Cloud (Amazon EC2) Spot Instances, Amazon FSx for Lustre, and AWS ParallelCluster, resulting in a faster workflow with reduced costs.

“Using Amazon EC2 Spot Instances allowed us to keep costs down. Compared to on-demand pricing at private supercomputing vendors, Amazon EC2 Spot was nearly 45 percent cheaper,” added Cobell. Keeping costs down and responding quickly to requests are important benefits to meet the needs of stakeholders and clients who look to the Water Institute for help. “AWS is an important part of our computing strategy going forward. It’s exciting because we know that with AWS in our toolbox, we can meet schedules that we simply could not in the past, and that ultimately benefits our clients. AWS is a powerful tool to help us achieve our mission,” says Cobell.

Follow the Water Institute at @TheH2OInstitute or learn more at https://thewaterinstitute.org/. Learn more about the cloud for nonprofits and research, and read more stories on HPC.