AWS Storage Blog

Optimize storage costs with new Amazon S3 Lifecycle filters and actions

Managing costs is important to the bottom line of many businesses and their ability to innovate on behalf of customers. You may often find that there is some data that you use frequently, and other data that you access less frequently, if ever. Deciding how to manage costs related to storing such unevenly accessed data can be useful to managing your overall storage costs.

Amazon S3 Lifecycle gives customers a simple S3 console interface, or an API-driven workflow, to define and manage the lifecycle of their Amazon S3 data. S3 Lifecycle lets customers automate the deletion of specific sets of data when there is no longer a business need to retain them, or transition long-lived archive data to lower-cost storage tiers as the data becomes less frequently accessed.

In this blog, we review what S3 Lifecycle is, how it works, and how you might be able to further reduce costs by leveraging some recently added features. Whether you are just starting out with your first S3 Lifecycle configuration, or refining your existing ones, this post will help you understand how to achieve optimal cost savings for your datasets stored in Amazon S3.

Anatomy of an S3 Lifecycle configuration

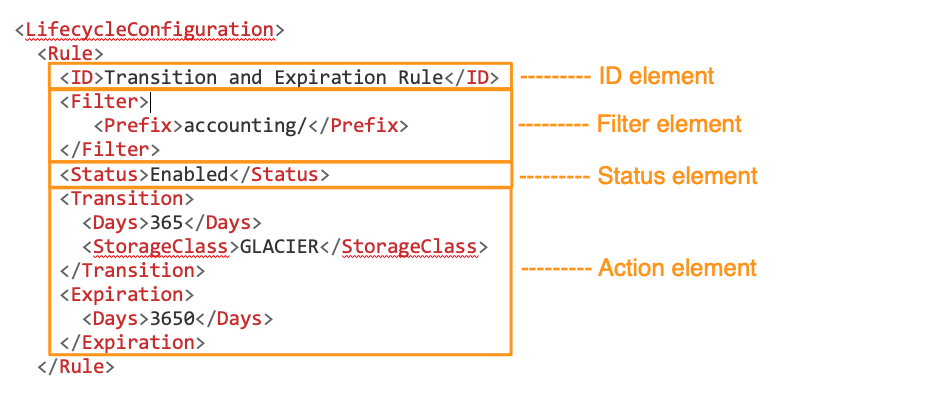

An S3 Lifecycle configuration is defined at the bucket level and is a set of rules that govern how automated lifecycle actions will affect your data. A bucket's Lifecycle configuration can contain up to 1,000 lifecycle rules. Each rule consists of four main components: the ID element, the status element, the filter element, and the action(s) element. The ID element is the unique identifier for each rule within the Lifecycle configuration. The status element indicates whether the rule is currently enabled or disabled. The filter element defines the specific dataset the rule will affect, and the action element defines what action S3 Lifecycle will take.

Here’s how the different configuration elements appear in XML:
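As a minimal sketch of the four elements described above, the following Python builds a one-rule configuration with the standard library and prints it as XML. The rule ID, prefix, and 30-day expiration are illustrative values chosen for this sketch, not taken from the original post, and `build_rule` is a hypothetical helper.

```python
import xml.etree.ElementTree as ET


def build_rule(rule_id, prefix, status, expiration_days):
    """Build one <Rule> with the four main components: ID, Filter, Status, action."""
    rule = ET.Element("Rule")
    ET.SubElement(rule, "ID").text = rule_id                # unique identifier
    rule_filter = ET.SubElement(rule, "Filter")             # which objects the rule affects
    ET.SubElement(rule_filter, "Prefix").text = prefix
    ET.SubElement(rule, "Status").text = status             # Enabled or Disabled
    expiration = ET.SubElement(rule, "Expiration")          # the action to take
    ET.SubElement(expiration, "Days").text = str(expiration_days)
    return rule


# Illustrative values only (assumptions for this sketch).
config = ET.Element("LifecycleConfiguration")
config.append(build_rule("expire-temp-files", "tmp/", "Enabled", 30))

ET.indent(config)  # pretty-print; requires Python 3.9 or later
print(ET.tostring(config, encoding="unicode"))
```

The sketch only produces the XML document; applying it to a bucket is a separate step through the S3 console, CLI, or API.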

See other examples of lifecycle configurations in the Amazon S3 User Guide.

Once you have defined your lifecycle configuration, S3 Lifecycle applies transition and expiration actions daily to the objects that match the filters you have defined in your rules.

When objects become eligible for a lifecycle action, Amazon S3 applies the necessary changes to your bill and asynchronously takes the defined actions on your objects. Whether your lifecycle policy applies to a single object or billions of objects, this fully managed feature will take care of the necessary transition or expiration actions.

Recap of new S3 Lifecycle launches

You can define a subset of your bucket under an S3 Lifecycle rule with prefixes, object tags, or a combination of the two. These options allow flexibility in how you define the datasets affected by each lifecycle rule. In addition, you can now set S3 Lifecycle rules to select objects for transition to other storage classes based on their size, and to limit the number of versions of an object that are retained, to optimize the cost savings from your lifecycle transitions.

Object-size filter

One additional filtering dimension that customers often request is object size. In many cases, customers have a large number of objects of varying sizes. It is common in some applications for datasets to contain very small metadata objects in the KB range, alongside much larger objects that could be multiple GBs or more. For these workloads, S3 Lifecycle has added a new filter that selects objects based on size. The object size filter can be combined with prefix and object tag filters to offer more fine-grained lifecycle controls for your data.
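To illustrate how the combined filters narrow a dataset, here is a simplified model in Python in which an object is selected only when every condition in the filter holds. `RuleFilter` and `matches` are hypothetical names for this sketch, the object keys and sizes are made up, and this is not how S3 evaluates rules internally.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class RuleFilter:
    """Simplified stand-in for a lifecycle rule's filter element (hypothetical)."""
    prefix: str = ""
    tags: Dict[str, str] = field(default_factory=dict)
    size_greater_than: Optional[int] = None  # bytes, exclusive lower bound
    size_less_than: Optional[int] = None     # bytes, exclusive upper bound


def matches(rule_filter, key, size_bytes, object_tags):
    """Return True only if the object satisfies every condition in the filter."""
    if not key.startswith(rule_filter.prefix):
        return False
    for tag_key, tag_value in rule_filter.tags.items():
        if object_tags.get(tag_key) != tag_value:
            return False
    if rule_filter.size_greater_than is not None and size_bytes <= rule_filter.size_greater_than:
        return False
    if rule_filter.size_less_than is not None and size_bytes >= rule_filter.size_less_than:
        return False
    return True


# Illustrative filter: objects under accounting/ that are larger than 128,000 bytes.
archive_filter = RuleFilter(prefix="accounting/", size_greater_than=128000)

print(matches(archive_filter, "accounting/2022/ledger.parquet", 5_000_000, {}))
print(matches(archive_filter, "accounting/2022/index.json", 2_000, {}))
print(matches(archive_filter, "logs/app.log", 5_000_000, {}))
```

Only the first object is selected: the second is too small and the third is outside the prefix.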

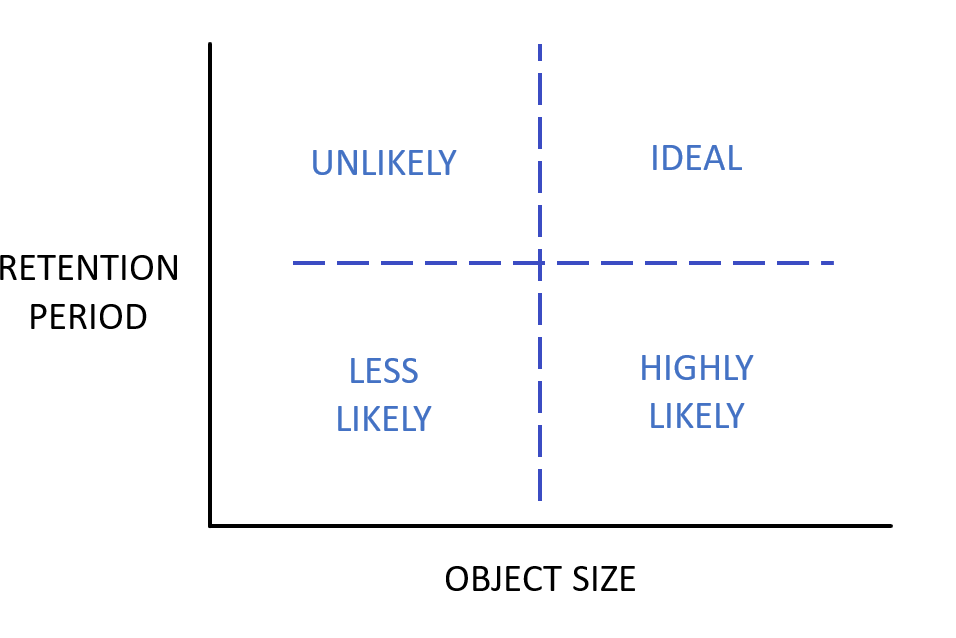

Cost benefits of archiving data based on object size and retention periods

As illustrated in the preceding chart, if your S3 objects are small and you retain them for only a very short period of time, S3 Standard is likely the best storage class for you. As your S3 objects get bigger and you need them far less frequently, you can save on your storage spend by transitioning them to S3 Glacier Flexible Retrieval or S3 Glacier Deep Archive. The object-size filter within S3 Lifecycle helps you achieve greater storage savings by making only a subset of your objects, selected by size, eligible for your archival lifecycle configurations.

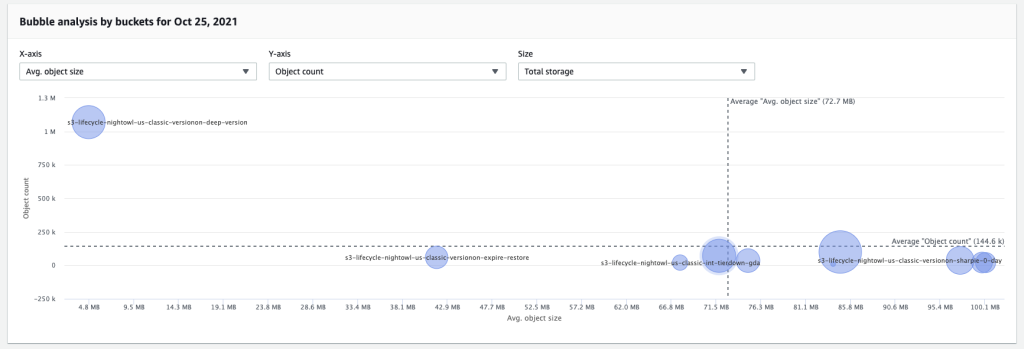

You can use S3 Storage Lens to determine which of your S3 buckets have large, infrequently retrieved objects to make better lifecycle configurations to save on your storage spend.

When transitioning data from one S3 storage class to another, there are costs associated with the actual transition of each object, and these costs are specific to the target storage class. In this case we are targeting S3 Glacier Deep Archive as the new storage class, and as of July 2022, pricing for lifecycle transitions into S3 Glacier Deep Archive in the us-east-1 Region is $0.05 per 1,000 objects transitioned. In addition to the lifecycle transition fees, for each object that is stored in S3 Glacier Flexible Retrieval or S3 Glacier Deep Archive, S3 adds 40 KB of chargeable overhead for metadata, with 8 KB charged at S3 Standard rates and 32 KB charged at S3 Glacier Flexible Retrieval or S3 Glacier Deep Archive rates. Therefore, you will save money by moving large objects to S3 Glacier Flexible Retrieval or S3 Glacier Deep Archive, but you may actually spend more by moving many small objects because of the overhead and one-time request costs. More information about S3 pricing can be found on the Amazon S3 pricing page.

Here’s a rule that filters for objects larger than 128 KB:

    <Rule>
      <ID>Transition to Glacier Deep Archive</ID>
      <Filter>
        <And>
          <Prefix>accounting/</Prefix>
          <ObjectSizeGreaterThan>128000</ObjectSizeGreaterThan>
        </And>
      </Filter>
      <Status>Enabled</Status>
      <Transition>
        <Days>60</Days>
        <StorageClass>DEEP_ARCHIVE</StorageClass>
      </Transition>
    </Rule>

It’s important to note the obvious: using lifecycle rules to transition data into the appropriate Amazon S3 storage class can reduce costs if your archive data is currently stored in Amazon S3 Standard. For this example, we assumed that the restore characteristics of the S3 Glacier Deep Archive storage class are suitable for this archive workload, but make sure that you choose the appropriate storage class based on the business requirements for each of your archive workloads. For more information about Amazon S3 storage classes, visit the storage classes page.
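The trade-off between the per-object overhead and the one-time transition fee can be worked through with a little arithmetic. The sketch below uses the transition fee and the 8 KB / 32 KB overhead split quoted in this post. The two storage prices are assumptions for illustration and are not from the post, so check the pricing page for current values. It also ignores minimum storage duration and retrieval charges.

```python
KB = 1024
GB = 1024 ** 3

# From the post: $0.05 per 1,000 lifecycle transitions into
# S3 Glacier Deep Archive (us-east-1, July 2022).
TRANSITION_COST_PER_OBJECT = 0.05 / 1000

# ASSUMED storage prices in USD per GB-month; not from the post.
STANDARD_PRICE = 0.023
DEEP_ARCHIVE_PRICE = 0.00099


def monthly_saving(size_bytes):
    """Monthly storage saving (USD) for one object moved from S3 Standard to Deep Archive."""
    before = size_bytes / GB * STANDARD_PRICE
    # After the move: the object plus 32 KB at the archive rate,
    # plus 8 KB of overhead still billed at the S3 Standard rate.
    after = (size_bytes + 32 * KB) / GB * DEEP_ARCHIVE_PRICE + 8 * KB / GB * STANDARD_PRICE
    return before - after


def break_even_months(size_bytes):
    """Months until the saving repays the transition fee, or None if it never does."""
    saving = monthly_saving(size_bytes)
    if saving <= 0:
        return None
    return TRANSITION_COST_PER_OBJECT / saving


for size in (8 * KB, 128 * KB, 1 * GB):
    print(size, break_even_months(size))
```

Under these assumed prices, an 8 KB object never breaks even, a 128 KB object takes roughly 20 months to repay its transition fee, and a 1 GB object repays it almost immediately. That is why a size threshold on archival rules tends to help.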

Managing noncurrent object versions

In addition to object size filtering, S3 Lifecycle has introduced a second new feature providing more flexibility when managing the lifecycle of objects stored in versioned buckets. Now it is also possible to define a number of previous versions that will not be affected by the lifecycle policy action. You can be sure that there is always a minimum number of previous versions to satisfy your data protection needs, while also allowing for an aggressive expiration policy for older versions.

If versioning is new to you, it is a bucket-level setting that provides data protection against accidentally deleting or overwriting data. Versioning makes sure that your older versions remain intact even if you delete or overwrite your objects. You can roll back to an older, or ‘noncurrent’, version of any object in your bucket. More information about versioning is available in our documentation.

You can use S3 Storage Lens dashboards to analyze your noncurrent version counts and bytes.

You can now base S3 Lifecycle actions on the number of noncurrent versions, in addition to their age, so that a rule applies only to noncurrent versions that already have a specified number of newer versions. You also have the option to transition or expire these noncurrent versions after they have been noncurrent for a specific number of days. For example, you can choose to transition (or expire) noncurrent versions only if they have 5 newer versions. This lets you keep as many additional versions of your objects as you need, while saving cost by transitioning or removing the rest after a period of time. As a result, you can transition or expire your older versions more quickly, saving on storage cost, all while keeping the number of noncurrent versions you need for rollback purposes.

For example, the Lifecycle configuration below uses the NoncurrentVersionExpiration action to remove noncurrent versions 10 days after they become noncurrent, but only those that have at least 5 newer noncurrent versions.

    <LifecycleConfiguration>
      <Rule>
        ...
        <NoncurrentVersionExpiration>
          <NewerNoncurrentVersions>5</NewerNoncurrentVersions>
          <NoncurrentDays>10</NoncurrentDays>
        </NoncurrentVersionExpiration>
      </Rule>
    </LifecycleConfiguration>

S3 Versioning is a popular feature many customers implement to help meet their data protection needs, but it’s important to understand the impact this can have on your storage costs. Without a lifecycle policy that transitions older versions of your data to lower-cost storage classes, or expires them, storage costs could increase quickly. If you have enabled versioning for buckets in your accounts but are unsure whether you have defined the appropriate lifecycle configuration, you can use S3 Storage Lens for insights about noncurrent version bytes in your buckets. Check out this blog post highlighting the top 5 ways to identify cost savings opportunities with S3 Storage Lens; number 4 covers noncurrent version bytes.
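To make the interaction between NewerNoncurrentVersions and NoncurrentDays concrete, here is a simplified Python model of which noncurrent versions of a single object the rule above would select. `versions_to_expire` is a hypothetical helper and the dates are made up. The sketch compares whole calendar days and does not reproduce S3's actual scheduling, which runs asynchronously.

```python
from datetime import date


def versions_to_expire(became_noncurrent, today, newer_noncurrent_versions, noncurrent_days):
    """Return the noncurrent versions (identified by the date they became noncurrent)
    that satisfy both conditions: enough newer noncurrent versions exist,
    and the version has been noncurrent for at least the given number of days."""
    newest_first = sorted(became_noncurrent, reverse=True)
    selected = []
    for newer_count, noncurrent_since in enumerate(newest_first):
        # newer_count is how many noncurrent versions are newer than this one.
        has_enough_newer = newer_count >= newer_noncurrent_versions
        old_enough = (today - noncurrent_since).days >= noncurrent_days
        if has_enough_newer and old_enough:
            selected.append(noncurrent_since)
    return selected


# Illustrative history: seven noncurrent versions of one object (made-up dates).
history = [date(2022, 7, day) for day in (1, 5, 10, 15, 20, 25, 28)]
expired = versions_to_expire(
    history,
    today=date(2022, 7, 31),
    newer_noncurrent_versions=5,
    noncurrent_days=10,
)
print(expired)
```

In this example the five most recent noncurrent versions are always kept, and only the two oldest, which are also more than 10 days noncurrent, are selected for expiration.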

Conclusion

In this blog, we provided an overview of S3 Lifecycle policies and we highlighted two new feature additions to S3 Lifecycle. Using the object size filter, you can reduce your total cost of storage over time by transitioning larger objects to other storage classes. Using actions to manage your number of noncurrent versions, you can choose to transition or expire older versions more quickly and just keep the ones you need.

We hope you found this feature highlight informative and that it gets you thinking about how you can implement these new features in your accounts to start saving even more on your S3 storage spend. If you have any comments or questions, leave them in the comment section.