AWS Partner Network (APN) Blog

Amazon CloudFront Cache Strategy for Successful Global Game Deployment

By Gabin Lee, Edge Specialist Solutions Architect – AWS

By Byounghwan Oh, Sr. Solutions Architect – SmileShark

Game service providers around the world are releasing new games and shipping large-scale content updates to attract players.

This post explores how you can use Amazon CloudFront to deliver gaming content to a global audience, and examines how to make the most of CloudFront’s cache in the gaming field.

Amazon CloudFront is a high-speed content delivery network (CDN) that securely transfers data to customers at low latency and fast transfer rates. The CloudFront network has more than 225 Points of Presence (PoP) distributed worldwide, and each PoP is connected to the Amazon Web Services (AWS) backbone network to ensure low latency, high transmission speeds, and high availability.

Cache is one of the most important CloudFront features, as it stores static content in a cache server located in each PoP. This means that when end users request the content, they get a rapid response without origin access. Even for dynamic content that cannot utilize cache, you can accelerate content delivery by leveraging the AWS backbone network, which supports low latency and high throughput.

Additionally, Amazon CloudFront integrates with AWS Shield and AWS WAF to provide flexible, layered protection against attacks at the network, transport, and application layers. CloudFront also integrates easily with a variety of other AWS services when you build a service architecture.

SmileShark is an AWS Advanced Consulting Partner that specializes in the gaming segment. SmileShark has successfully implemented and launched more than 90 games on AWS with over 30 game developer customers.

SmileShark also achieved the Amazon CloudFront Service Delivery designation in 2020 after passing a rigorous technical validation process conducted by the AWS global team. This post explores SmileShark’s CloudFront cache strategy for successful global game deployment.

Amazon CloudFront Caching Strategy

Most AWS gaming customers already take advantage of Amazon CloudFront’s cache feature. Yet, some tend to neglect the caching strategy, which may result in slower performance and different content delivered to each end user.

If the cache is not properly applied, a total service failure may occur due to an unexpected load on the origin server. To avoid these issues, you must be aware of your CloudFront caching strategies and utilize them appropriately.

Here are four strategies for using Amazon CloudFront caching effectively.

Versioning

In the gaming industry, a new deployment may be needed suddenly while the service is live, because of a new version release or an urgent patch.

Some AWS customers still perform deployments by overwriting existing files with new ones. In this case, there is a possibility of service failure.

This may not be an issue if only a single file is modified. If multiple files are modified and distributed by overwriting, however, there may be a gap between when each file is uploaded and when it’s newly cached in CloudFront.

As a result, end users can receive a mixture of old files and newly modified files. This can negatively affect the overall quality of service.

To avoid this situation, consider a deployment structure based on versioning.

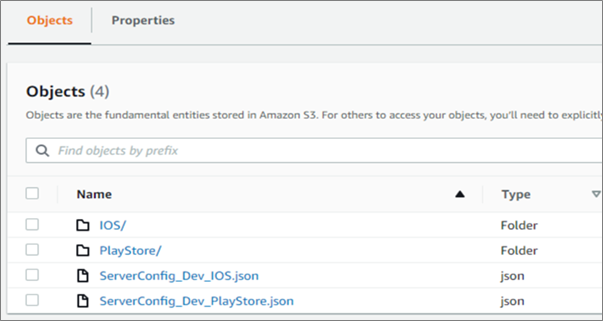

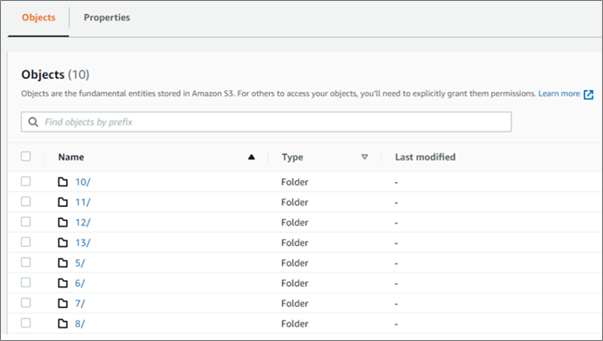

Figure 1 – Version control via JSON file.

If you maintain a separate Manifest file that defines the assets to be deployed, you can control asset deployment by modifying the metadata in the Manifest whenever a new deployment is needed.

Figure 2 – Folder structure according to version.

Newly-deployed assets can be classified and stored for each deployment by creating a new folder and uploading assets to a subfolder without overwriting existing files.

Manifest files that define the assets to be deployed can be given a short cache Time To Live (TTL), so that when the file changes at the origin, the latest version is retrieved quickly. Since the assets stored in separate version folders never change, you can set a long caching TTL for them.

Time To Live (TTL) is the time that an object is stored in a caching system before it’s deleted and refreshed.
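The split between a short TTL for the Manifest and a long TTL for versioned assets could be expressed, for example, as the `Cache-Control` header the origin attaches to each object. The following is a minimal sketch, not a definitive implementation. It assumes a hypothetical layout in which the Manifest is named `manifest.json`, that the TTL values (60 seconds and one year) are purely illustrative, and that the distribution’s cache policy lets origin headers set the TTL within its minimum and maximum bounds.

```python
# Hypothetical TTL values chosen for illustration only.
MANIFEST_MAX_AGE = 60            # seconds; the manifest changes on every deployment
ASSET_MAX_AGE = 365 * 24 * 3600  # seconds; versioned assets never change in place


def cache_control_for(key: str) -> str:
    """Return a Cache-Control header value for an object key.

    Assumes the manifest file is named 'manifest.json' (a hypothetical
    name) and that every other key lives under a versioned folder.
    """
    if key.endswith("manifest.json"):
        # Short TTL so a new deployment is picked up quickly.
        return f"public, max-age={MANIFEST_MAX_AGE}"
    # Long TTL because the content at a versioned path is never overwritten.
    return f"public, max-age={ASSET_MAX_AGE}, immutable"


if __name__ == "__main__":
    for key in ("manifest.json", "1.0.1/textures/hero.png"):
        print(key, "->", cache_control_for(key))
```

The same header values could be set as object metadata when the files are uploaded to the origin.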

With this deployment structure, end users read the Manifest file first to find the newly deployed assets, and then request those assets from a path that’s separate from the previously cached assets. This avoids the situation in which old and new assets are mixed in delivery, and provides a consistent experience to the end user.
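The client-side flow described above might look like the following sketch. The Manifest fields (`version`, `assets`), the asset paths, and the `cdn.example.com` domain are all assumptions made for illustration; the actual Manifest format is whatever your game defines.

```python
import json
from urllib.parse import quote

# A hypothetical manifest; the field names are assumptions for illustration.
MANIFEST_JSON = """
{
  "version": "1.0.1",
  "assets": ["textures/hero.png", "audio/theme.ogg"]
}
"""


def asset_urls(manifest_text: str, base_url: str) -> list[str]:
    """Build asset URLs under the version folder named in the manifest."""
    manifest = json.loads(manifest_text)
    version = quote(manifest["version"])
    base = base_url.rstrip("/")
    # Each deployment lives in its own folder, so a new version never
    # collides with objects that are already cached under an older path.
    return [f"{base}/{version}/{quote(path)}" for path in manifest["assets"]]


if __name__ == "__main__":
    for url in asset_urls(MANIFEST_JSON, "https://cdn.example.com"):
        print(url)
```

Because only the Manifest changes between deployments, it is the only object that needs a short TTL.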

Cache TTL and Invalidation

The most common mistake gaming customers make is serving the game with a long caching TTL even though they change files frequently.

Moreover, these customers tend to rely on invalidation to refresh a file whenever they change it. For invalidation, there is no service level agreement (SLA) for the processing completion time, and additional costs may be incurred if it’s used more than a certain number of times.

Therefore, if frequent file replacements are expected, set a short caching TTL and configure an environment in which files are refreshed automatically when the TTL expires, rather than relying on invalidation.

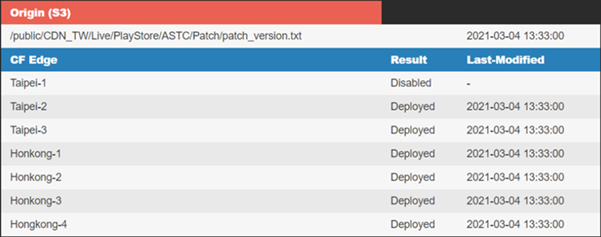

If unexpected file updates are required, invalidation is unavoidable. Please note that, as mentioned earlier, there is no SLA for invalidation processing time, and Amazon CloudFront does not report invalidation status for each PoP.
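When an invalidation is unavoidable, it can be submitted through the CloudFront API. The sketch below uses boto3’s `create_invalidation` call; the distribution ID and paths are placeholders, and the helper names are hypothetical. The batch is built in a separate pure function so that duplicate paths are removed before the request is sent.

```python
import time


def build_invalidation_batch(paths, caller_reference=None):
    """Build the InvalidationBatch structure expected by CloudFront.

    Paths are de-duplicated first; each path must start with '/'.
    """
    unique = sorted(set(paths))
    for path in unique:
        if not path.startswith("/"):
            raise ValueError(f"invalidation path must start with '/': {path}")
    return {
        "Paths": {"Quantity": len(unique), "Items": unique},
        # CallerReference must be unique per request; a timestamp is one option.
        "CallerReference": caller_reference or str(time.time_ns()),
    }


def invalidate(distribution_id, paths):
    """Submit the invalidation and return its ID (requires AWS credentials)."""
    import boto3  # imported lazily so the batch builder works without the SDK

    client = boto3.client("cloudfront")
    response = client.create_invalidation(
        DistributionId=distribution_id,
        InvalidationBatch=build_invalidation_batch(paths),
    )
    return response["Invalidation"]["Id"]


if __name__ == "__main__":
    print(build_invalidation_batch(["/manifest.json", "/manifest.json"], "demo-1"))
```

The call returns once the request is accepted, not once every edge location has processed it, which is consistent with the absence of an SLA noted above.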

If your main service regions are limited and you want to quickly check the invalidation status of your regions, SmileShark can help you monitor the invalidation status through its invalidation monitoring solution. ALOHA is a failure notification solution enabling users to get quick alerts for failures via phone, message, and email, without installing an agent.

Figure 3 – Edge invalidation status monitor.

Pre-Caching (Pre-Warming)

Gaming customers often consider pre-caching at the time of a new deployment event. Pre-caching refers to the act of caching specific resources in the cache server in advance. The motivation is that pre-caching can give end users faster performance and minimize the load on the origin.

First, keep the following points in mind:

- Performance aspect: Amazon CloudFront doesn’t wait for the entire file to be stored on the cache server. As soon as the first byte arrives from the origin, it starts delivering the file to users. As a result, the additional delay caused by a cache miss is very short.

- Origin load aspect: In the event of a traffic spike (when additional requests for the same content arrive on the cache server before the origin responds to the first request), CloudFront pauses briefly instead of forwarding the additional requests for that content to the origin. This pause can reduce unnecessary load on the origin, even if traffic spikes during a cache miss.

- Cache eviction aspect: If files on a CloudFront cache server are not requested frequently, CloudFront can remove them before the caching TTL expires in order to make room for more recently requested files.
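The origin load point above can be illustrated with a small deterministic model. This is a simplified sketch and not a description of CloudFront’s internals: it considers one object on one cache server starting cold, ignores TTL expiry and eviction, and uses made-up arrival times and origin latency. The function name and parameters are hypothetical.

```python
def origin_fetches(arrival_times, origin_latency, collapse):
    """Count origin fetches for one object on one initially cold cache server.

    arrival_times  -- request arrival times in seconds
    origin_latency -- seconds the origin takes to answer the first fetch
    collapse       -- True if requests arriving during the first fetch wait
                      for it instead of being forwarded to the origin
    """
    fetches = 0
    cached_at = None  # time at which the first origin response fills the cache
    for t in sorted(arrival_times):
        if cached_at is not None and t >= cached_at:
            continue  # cache hit, no origin access
        if cached_at is None:
            fetches += 1  # first miss always goes to the origin
            cached_at = t + origin_latency
        elif not collapse:
            fetches += 1  # miss during the in-flight fetch is forwarded too
        # With collapsing, the request simply waits for the in-flight fetch.
    return fetches


if __name__ == "__main__":
    spike = [0.00, 0.01, 0.02, 0.03, 0.50]  # illustrative arrival times
    print("with pause:   ", origin_fetches(spike, 0.1, collapse=True))
    print("without pause:", origin_fetches(spike, 0.1, collapse=False))
```

In this model the origin sees a single fetch when the pause is applied, regardless of how many requests arrive during the miss, which is why pre-warming adds little on this front.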

It’s important to be aware that the benefits of pre-caching with CloudFront may not be significant. If you still need to consider pre-caching, we highly recommend that you request a review from AWS.

Origin Shield

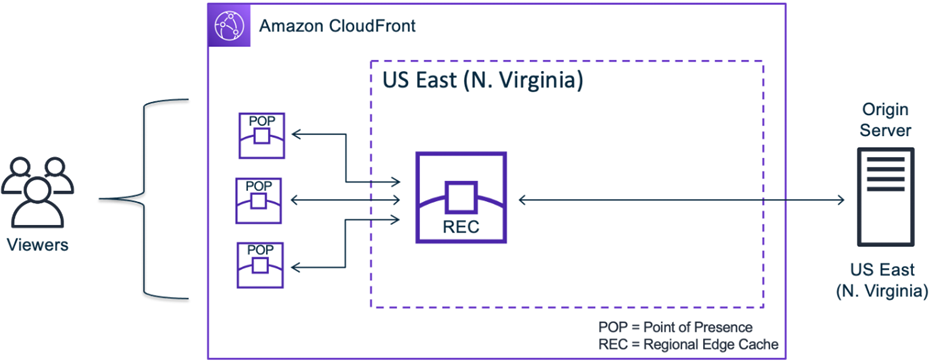

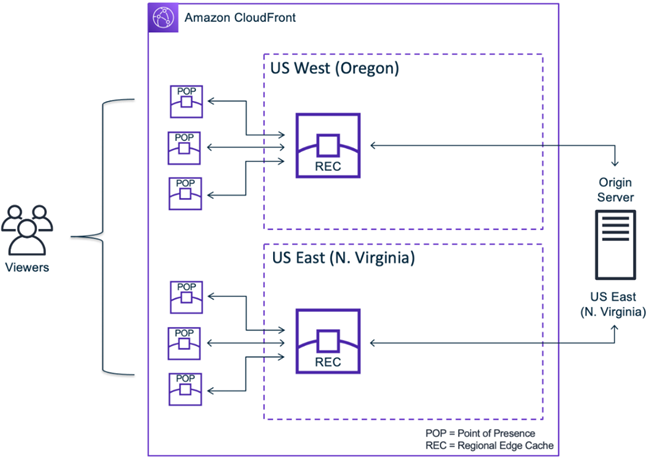

Amazon CloudFront has multiple cache layers. If a cache miss occurs on the cache server located in the PoP, the request is checked once more against the Regional Edge Cache (REC).

In this process, requests to the origin are minimized, and the load on the origin is also reduced. However, if you need to perform a game event targeting global end users, CloudFront’s default multi-cache configuration may not be sufficient in terms of cache-hit rates and origin load reduction.

If a service is limited to a specific country, a single REC is typically used. Even if a request for the same content is received from multiple CloudFront PoPs, once the content is cached in the REC, it will respond without additional origin access.

Figure 4 – Single Regional Edge Cache (REC).

If a game deployment or event draws requests from users around the world, multiple RECs are involved. Even when the content is the same, each REC can send its own request to the origin, which can increase the origin load.

Figure 5 – Multiple Regional Edge Cache (REC).

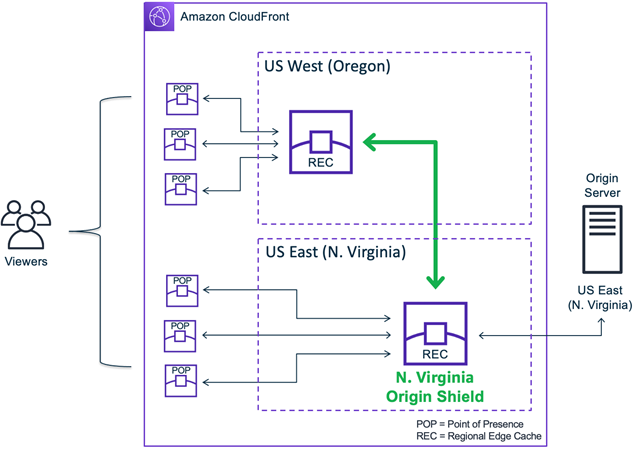

For events that involve multiple RECs, you should consider Amazon CloudFront Origin Shield to reduce the load on your origin.

Figure 6 – Amazon CloudFront Origin Shield.

When Origin Shield is enabled, there’s an additional caching layer between the RECs and the origin. All requests go through the REC designated as the Origin Shield before being forwarded to the origin.

In other words, Origin Shield checks once more whether the content requested by each REC is already cached before the request reaches the origin. Especially if the origin is a resource outside AWS, we recommend choosing the REC geographically closest to the origin as the Origin Shield.

This shortens the network path between the REC and the external origin, which uses the public internet, and lengthens the path between the edge PoP and the REC, which can use the AWS backbone network. The result is faster and more stable performance.
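In the CloudFront API, Origin Shield is enabled per origin through an `OriginShield` block in the distribution configuration. The sketch below edits a configuration dictionary of the shape returned by boto3’s `get_distribution_config`, which would then be sent back with `update_distribution`. The helper name, the origin ID `game-assets`, the origin domain, and the chosen Region are assumptions for illustration; check which Regions support Origin Shield before picking one.

```python
import copy


def with_origin_shield(distribution_config, origin_id, shield_region):
    """Return a copy of the config with Origin Shield enabled on one origin.

    distribution_config -- dict shaped like CloudFront's DistributionConfig
    origin_id           -- the 'Id' of the origin to protect
    shield_region       -- AWS Region code closest to the origin
    """
    updated = copy.deepcopy(distribution_config)  # leave the input untouched
    for origin in updated["Origins"]["Items"]:
        if origin["Id"] == origin_id:
            origin["OriginShield"] = {
                "Enabled": True,
                "OriginShieldRegion": shield_region,
            }
            return updated
    raise KeyError(f"origin not found: {origin_id}")


if __name__ == "__main__":
    # A trimmed, hypothetical config; a real one has many more fields.
    config = {
        "Origins": {
            "Quantity": 1,
            "Items": [{"Id": "game-assets", "DomainName": "origin.example.com"}],
        }
    }
    print(with_origin_shield(config, "game-assets", "ap-northeast-2"))
```

Working on a copy keeps the original configuration available for comparison before the update is submitted.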

Conclusion

In recent years, the importance of content delivery networks (CDNs) has been recognized, and they are now widely used for global services, especially in the gaming and media entertainment segments.

Still, we have found cases where customers either do not use the cache or rely on it too heavily for all of their content. If you use Amazon CloudFront without a content deployment strategy, this can turn into a serious problem. We hope this post serves as a reference for preventing and resolving these problems.

SmileShark – AWS Partner Spotlight

SmileShark is an AWS Advanced Consulting Partner specializing in gaming and internet business that provides optimized infrastructure costs and supports DDoS defense and response.
