AWS Partner Network (APN) Blog

Build and Deploy Secure AI Applications with AIShield and Amazon SageMaker

By Manpreet Dash, Global Marketing Manager, AIShield – Bosch

By Amit Phadke, Chief Product Officer, AIShield – Bosch

By Amlan Jyoti, Lead Product Engineer, AIShield Bosch

By Michael Scrivner, Stefan Schneider, Suman Saurabh – AWS Partner Organization


Adversarial machine learning (AML) attacks, also known as “artificial intelligence attacks” (AI attacks), are deliberate attempts to manipulate or compromise machine learning models, or to make them reveal sensitive information.

AML attacks impact one or more of the three main tenets of security: confidentiality, availability, and integrity. A recent Gartner survey indicates that two in five organizations have experienced artificial intelligence (AI) security breaches, underscoring the urgency to address this issue.

In this post, we will explore how AIShield’s seamless integration within the Amazon SageMaker environment alleviates AI security concerns by mitigating risks before and after deployment, enabling customers to develop and deploy AI applications with confidence.

Bosch Global Software Technologies (BGSW) is an AWS Select Tier Services Partner and AWS Marketplace Seller that is using machine learning solutions to improve its manufacturing, healthcare, and Internet of Things (IoT) solutions.

Risks and Consequences of AI Attacks

Adversarial machine learning attacks can cause many forms of organizational harm and loss: financial, reputational, and safety-related damage, as well as theft or exposure of intellectual property, personal information, and proprietary data. In healthcare, banking, automotive, telecom, the public sector, and other industries, AI adoption suffers because emerging attacks raise security risks related to safety, nonconformance with AI principles, regulatory violations, and software security.

AI attacks typically exploit vulnerabilities within data payloads. Traditional defense mechanisms such as layer 3/4 network firewalls and layer 7 application firewalls often fall short of providing comprehensive protection against such threats. Zero trust architectures, while valuable in theory, can also be complex to implement efficiently given the persistent threat of adversarial attacks.

AI Security Challenges

The challenge of securing AI is complicated by the multifaceted technology landscape, a shortage of skilled staff, and the unavailability of essential AI security components in open source. Developing an enterprise solution from scratch can be costly, and responsible AI adoption and uncertainty about regulatory compliance pose further challenges. The cumulative impact includes reduced revenue due to delayed time to market and increased costs, making the return on investment (ROI) in AI less attractive for organizations.

Organizations seek holistic AI security solutions that enhance their security posture, improve developer experience, seamlessly integrate into existing systems, ensure compliance, and offer resilience throughout the AI/ML development lifecycle.

In the DevOps context, this means:

- Developers require a straightforward solution that can scan AI/ML models, identify vulnerabilities, and automatically remediate them during the development phase.

- Deployers and operators, including security teams, need tools such as endpoint detection and response (EDR) specific to AI workloads. They need to rely on solutions capable of detecting and responding to emerging AI attacks to prevent incidents and reduce the mean time to detect (MTTD) and mean time to resolve (MTTR).

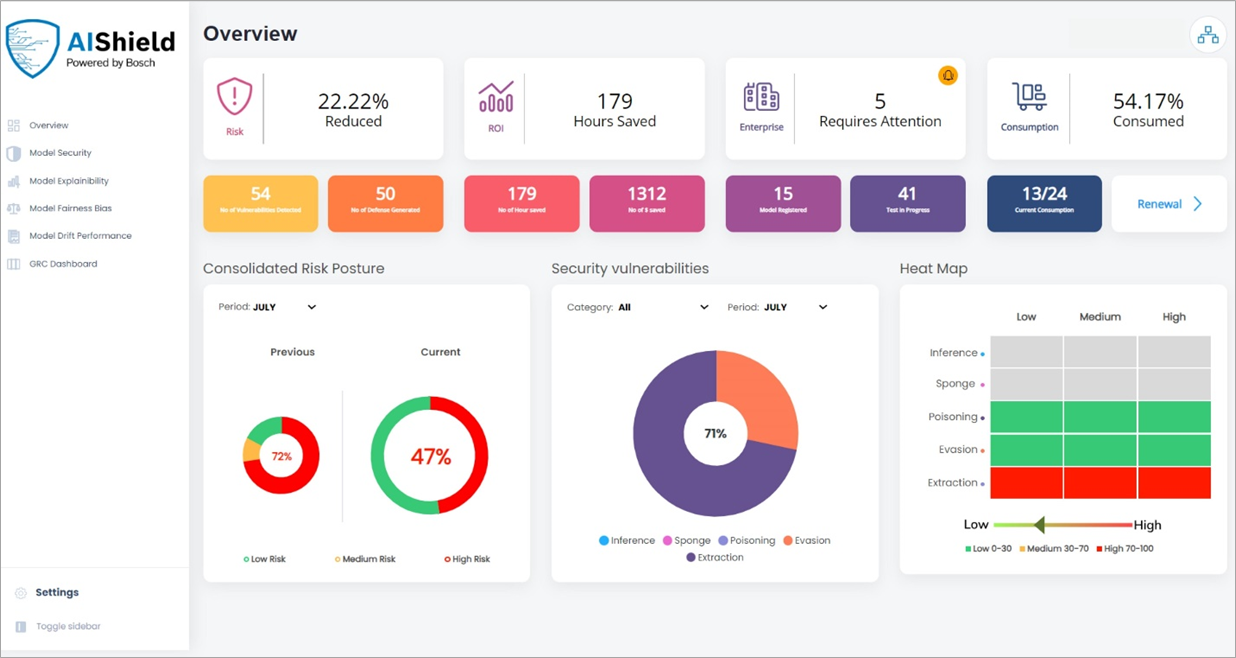

- Managers need visibility into the security posture of the AI/ML models they deploy to ensure better governance, compliance, and model risk management at an organizational level.

Enhancing Security of AI Workloads with AIShield

AIShield is a comprehensive AI security product for organizations running AI workloads on Amazon SageMaker, helping them protect against AML attacks. By leveraging the capabilities of both AIShield and Amazon SageMaker, organizations can maximize the value of their AI/ML efforts.

Let’s explore how AIShield can be used by organizations to overcome AI security challenges in the AI/ML lifecycle.

Figure 1 – Intervention points of AI security in the ML development workflow.

There are two main intervention points for AIShield in the ML development workflow:

- Model evaluation: After model validation and prior to the deployment, AI models undergo evaluation. AIShield offers an API-based AI security vulnerability assessment, which can be used in the development workflow by developers to assess the security posture of their AI application.

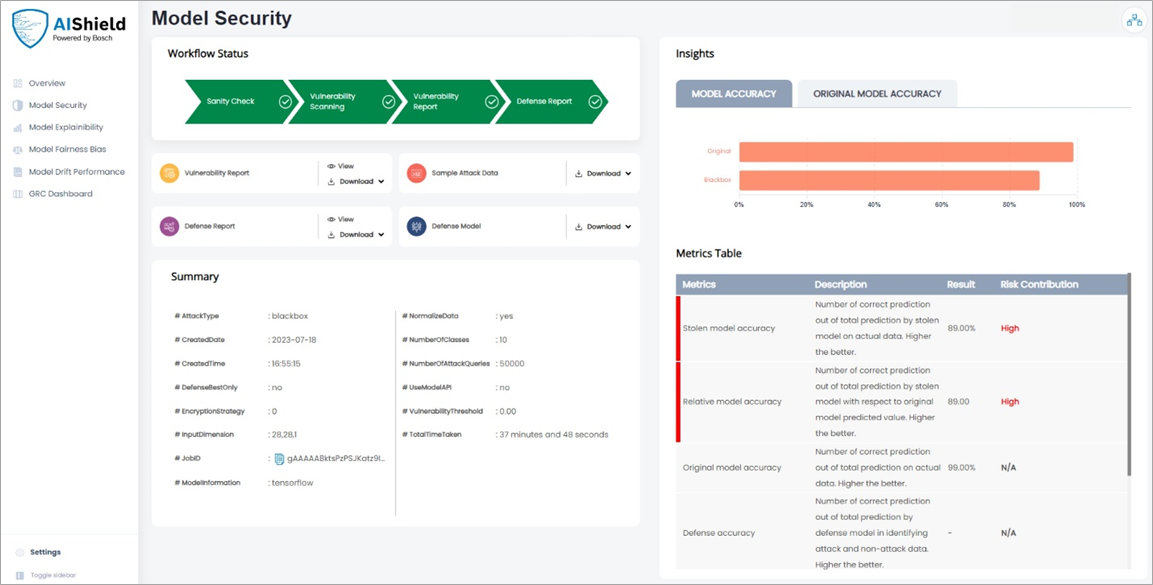

Figure 2 – Outcomes of API-based AI security vulnerability assessment for AI/ML developers.

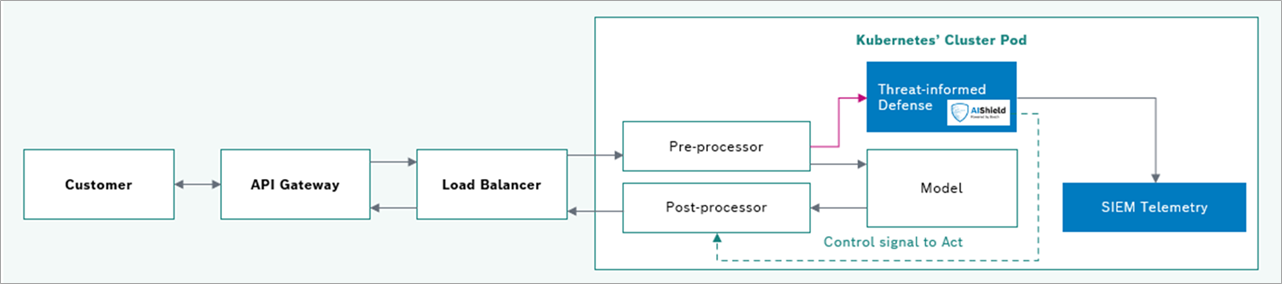

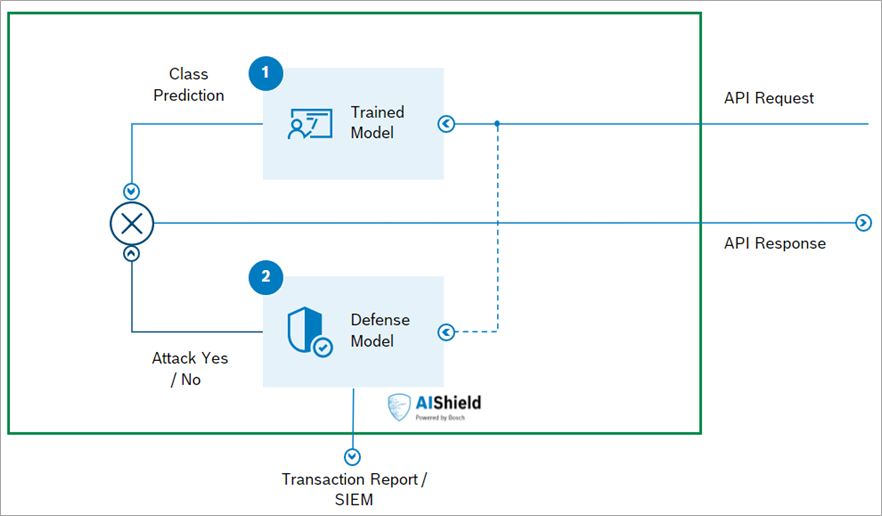

- Model operationalization: The threat-informed defense model can be deployed within the model deployment environment for real-time detection of attack vectors. The defense model is trained to distinguish effective attack vectors from the original data.

Figure 3 – Defense model deployment architecture – parallel deployment.

AIShield dashboards give managers a view of the overall security posture, analytics on outcomes, and AI security license usage, which can lead to better model governance practices.

Figure 4 – AI security posture dashboard for organization leaders.

AIShield in SageMaker Studio ML Development Workflow

AIShield is available through AWS Marketplace, which provides easy access to its cloud-based solutions. It integrates with various AWS machine learning environments, including Amazon SageMaker Studio Notebooks and Amazon SageMaker Notebook Instances.

SageMaker Studio Notebooks are highlighted in this post for their native features, including Amazon SageMaker Experiments and Amazon SageMaker Model Registry. These features offer improved organization, tracking, and management of models, providing valuable capabilities for users leveraging SageMaker Studio Notebooks.

Consider a scenario where a developer is utilizing SageMaker Studio to build a machine learning model for a specific use case, such as digital recognition. In this context, let’s see how AIShield can be integrated into this pipeline quickly.

Figure 5 – Reference architecture on AWS.

This integration process is divided into two parts—developer flow and deployment flow—ensuring a comprehensive end-to-end integration of AIShield API with SageMaker Studio Notebook.

Part 1: Developer Flow

The developer flow focuses on the activities conducted by developers during the integration process (see Figure 2).

1.1 – Set Up SageMaker Studio

When integrating AIShield into a machine learning pipeline like SageMaker Studio, the initial step involves installing the AIShield PyPI package. This installation facilitates rapid experimentation during model development.

On the other hand, AIShield APIs provide scalable solutions that are better suited for MLOps pipelines, enabling seamless integration and deployment of AIShield functionalities.

1.2 – Model Development and Iterative Refinement

As with any model development pipeline, the developer goes through the process of defining, training, and refining the model until it reaches the desired performance level. Following this, the model is typically stored in an Amazon Simple Storage Service (Amazon S3) bucket.

1.3 – AIShield API Integration

After subscribing to AIShield, the AIShield API can be initialized by providing the necessary parameters (URL and organization ID) received during the subscription process. This initialization step allows developers to access and utilize the functionalities provided by the AIShield API.

To leverage AIShield’s model vulnerability analysis, the necessary data, labels, and model file are provided to AIShield, securely stored using pre-signed URLs utilizing Amazon S3 storage. AIShield adopts a low data and no model approach, typically utilizing a representative sample of 2-5% of the dataset to generate test reports and assess model performance.
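The "low data" idea above can be sketched as a class-balanced sample drawn before anything is shared. This is a minimal illustration only: the function name `stratified_sample_indices`, the 5% default, and the toy labels are assumptions made for this example and are not part of the AIShield API.

```python
import random
from collections import defaultdict


def stratified_sample_indices(labels, fraction=0.05, seed=0):
    """Return sorted indices of a per-class sample covering about `fraction` of the data."""
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    rng = random.Random(seed)  # fixed seed so the sample is reproducible

    # Group example indices by their class label.
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)

    chosen = []
    for indices in by_class.values():
        # Keep at least one example per class so no class disappears.
        k = max(1, round(len(indices) * fraction))
        chosen.extend(rng.sample(indices, k))
    return sorted(chosen)


# Toy data (hypothetical): 1,000 labels spread evenly over 10 classes.
labels = [i % 10 for i in range(1000)]
sample = stratified_sample_indices(labels, fraction=0.05)
print(len(sample))  # 50: five examples from each of the ten classes
```

Sampling per class rather than uniformly keeps rare classes represented in a small sample, which matters when only a few percent of the dataset is used.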

Furthermore, the model-under-test can be hosted as an API, with traffic routed through AWS PrivateLink to ensure a secure and private connection. For a quick demonstration, the model can be submitted to AIShield for analysis, involving the registration of the model within the AIShield system.

AIShield supports diverse task types, including image and tabular classification, time series forecasting, and offers analysis for various attack types like extraction and evasion. Users have the flexibility to choose specific tasks and desired attack analysis options within AIShield, ensuring customization and adaptability to meet their specific requirements.

Once the vulnerability analysis in AIShield completes, the output artifacts, such as reports, defense models, and attack samples, can be uploaded to an S3 bucket.
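One way to handle the upload step is a small helper that returns the resulting S3 URIs for later tracking. The sketch below assumes the artifacts have already been downloaded to local files; the helper name, bucket, prefix, and file names are hypothetical. The only AWS call used is the boto3 S3 client's `upload_file`, and the client is passed in so the helper can be exercised without AWS access.

```python
import os


def upload_artifacts(s3_client, bucket, prefix, local_paths):
    """Upload local files under `prefix` and return {file name: s3:// URI}."""
    uris = {}
    for local_path in local_paths:
        name = os.path.basename(local_path)
        key = f"{prefix.strip('/')}/{name}"
        # boto3 S3 client call: upload_file(Filename, Bucket, Key).
        s3_client.upload_file(local_path, bucket, key)
        uris[name] = f"s3://{bucket}/{key}"
    return uris


# Usage with a real client (bucket and paths are placeholders):
#   import boto3
#   uris = upload_artifacts(
#       boto3.client("s3"),
#       "my-bucket",
#       "aishield/run-1",
#       ["out/report.pdf", "out/defense_model.zip"],
#   )
```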

1.4 – SageMaker Experiments

To further streamline the workflow and keep track of the AIShield artifacts, the S3 locations of these artifacts can be pushed to SageMaker Experiments. This integration ensures the AIShield output artifacts are organized, tracked, and readily available for analysis and further experimentation within the SageMaker environment.
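As a rough sketch of this tracking step, the helper below records parameters, metrics, and artifact locations on a run object. It assumes the `Run` class from the SageMaker Python SDK (`sagemaker.experiments.run`), using its `log_parameters`, `log_metric`, and `log_artifact` methods; the helper name and every parameter, metric, and artifact name are illustrative.

```python
def log_aishield_run(run, params, metrics, artifact_uris):
    """Record one analysis run: its settings, scores, and artifact S3 locations."""
    # Settings such as attack type and number of attack queries.
    run.log_parameters(params)

    # Numeric outcomes, one metric per name.
    for name, value in metrics.items():
        run.log_metric(name=name, value=value)

    # S3 URIs of the reports, defense model, and attack samples.
    for name, uri in artifact_uris.items():
        run.log_artifact(name=name, value=uri, is_output=True)


# Usage inside SageMaker Studio (experiment and run names are placeholders):
#   from sagemaker.experiments.run import Run
#   with Run(experiment_name="aishield-demo", run_name="run-1") as run:
#       log_aishield_run(
#           run,
#           params={"attack_type": "extraction", "attack_queries": 1000},
#           metrics={"defense_model_accuracy": 0.9},
#           artifact_uris={"vulnerability_report": "s3://my-bucket/aishield/run-1/report.pdf"},
#       )
```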

The process of analyzing model vulnerability and generating corresponding defenses using AIShield can be orchestrated for multiple runs, allowing for flexibility in adjusting parameters such as the number of attack queries and the attack type. This enables comprehensive testing and evaluation of the model’s robustness under various scenarios, enhancing its overall security.
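A sweep over these parameters could be organized as follows. This is a sketch under stated assumptions: `plan_runs` and `execute_runs` are hypothetical helpers, the query counts are placeholders, and `analyze` stands in for whatever function submits a job to AIShield and waits for its result.

```python
from itertools import product


def plan_runs(attack_types, query_counts):
    """Build one configuration per (attack type, number of attack queries) pair."""
    return [
        {
            "run_name": f"{attack}-{queries}",
            "attack_type": attack,
            "attack_queries": queries,
        }
        for attack, queries in product(attack_types, query_counts)
    ]


def execute_runs(configs, analyze):
    """Call `analyze(config)` for each configuration; return results keyed by run name."""
    results = {}
    for config in configs:
        results[config["run_name"]] = analyze(config)
    return results


# Placeholder sweep: two attack types, three query budgets.
configs = plan_runs(["extraction", "evasion"], [1000, 5000, 10000])
print([c["run_name"] for c in configs])
```

Keeping the plan separate from the execution makes it easy to log each configuration before the corresponding analysis starts.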

To improve tracking and analytics, the metrics and artifacts generated from multiple runs can be logged to SageMaker Experiments and can be analyzed through charts. This enables comprehensive record-keeping and facilitates in-depth analysis of the results, enhancing the overall monitoring and evaluation process.

This information aids in making informed decisions for selecting the specific run and, consequently, the defense model to deploy in the subsequent process. As the number of attack queries increases, the efficacy of vulnerability detection and the accuracy of the defense model increase as well (see Figure 6).

Figure 6 – For the three runs: (1) Number of attack queries; (2) Original model accuracy; (3) Vulnerability analysis scores; (4) Defense model accuracy.
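The run selection described above could be automated with a simple rule, sketched below. The `RunSummary` fields mirror the four quantities in Figure 6, but the selection rule (highest defense model accuracy, ties broken by fewer attack queries) and all numeric values are assumptions for illustration, not the results shown in the figure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RunSummary:
    run_name: str
    attack_queries: int
    original_accuracy: float
    vulnerability_score: float
    defense_accuracy: float


def select_defense_run(runs, min_defense_accuracy=0.0):
    """Pick the run whose defense model should be deployed."""
    eligible = [r for r in runs if r.defense_accuracy >= min_defense_accuracy]
    if not eligible:
        raise ValueError("no run meets the minimum defense accuracy")
    # Highest defense accuracy wins; ties go to the run with fewer attack queries.
    return max(eligible, key=lambda r: (r.defense_accuracy, -r.attack_queries))


# Placeholder values only, not the measurements from Figure 6.
runs = [
    RunSummary("run-1", 1000, 0.98, 0.60, 0.90),
    RunSummary("run-2", 5000, 0.98, 0.75, 0.95),
    RunSummary("run-3", 10000, 0.98, 0.85, 0.99),
]
print(select_defense_run(runs).run_name)  # run-3
```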

Following that, the model card along with its relevant configurations can be saved and pushed to an S3 bucket. This ensures the model card is properly stored and easily accessible for future reference and documentation purposes.

Part 2: Deployment Flow

The deployment flow focuses on the activities involved in deploying the integrated solution (the defense model and original model are shown in Figure 3), making it available for use by end-users. This typically includes the following steps.

2.1 – Model Packaging

Once developers are satisfied with the performance of both the main application model and the defense model from the developer flow, both models can be downloaded from their respective S3 buckets for deployment.

2.2 – Deployment and Monitoring

Both the original and defense models can be deployed to the target environment, leveraging SageMaker deployment capabilities.

Deploy the main application model as usual.

Next, deploy the defense model the same way as the original model.

Figure 7 – Defense model deployment architecture.
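A minimal sketch of deploying the two models as separate real-time endpoints follows. It assumes both models have already been registered as SageMaker models, and it uses the boto3 SageMaker client calls `create_endpoint_config` and `create_endpoint`. The helper names, endpoint naming scheme, and instance type are illustrative choices, and the client is injected so the logic can be checked without an AWS account.

```python
def deploy_endpoint(sm_client, model_name, endpoint_name,
                    instance_type="ml.m5.large", instance_count=1):
    """Create an endpoint configuration and an endpoint for one registered model."""
    config_name = f"{endpoint_name}-config"
    sm_client.create_endpoint_config(
        EndpointConfigName=config_name,
        ProductionVariants=[
            {
                "VariantName": "AllTraffic",
                "ModelName": model_name,
                "InitialInstanceCount": instance_count,
                "InstanceType": instance_type,
            }
        ],
    )
    sm_client.create_endpoint(
        EndpointName=endpoint_name,
        EndpointConfigName=config_name,
    )
    return endpoint_name


def deploy_pair(sm_client, original_model, defense_model):
    """Deploy the defense model the same way as the original model."""
    return {
        "original": deploy_endpoint(sm_client, original_model, f"{original_model}-endpoint"),
        "defense": deploy_endpoint(sm_client, defense_model, f"{defense_model}-endpoint"),
    }


# Usage with a real client (model names are placeholders):
#   import boto3
#   endpoints = deploy_pair(boto3.client("sagemaker"), "digit-classifier", "digit-defense")
```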

2.3 – End-User Interaction

The API abstraction can be done for parallel deployment of the defense model alongside the original model. The decision block can take remedial actions, such as blocking the user or randomizing the response from the main application model when the defense model identifies a malicious payload. Telemetry data can be sent to a Security Information and Event Management (SIEM) system like Splunk for further analysis and monitoring.

Figure 8 – Splunk SIEM reporting dashboard with AIShield integration.
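The decision block described in this step might look like the sketch below. The function `guarded_predict`, its parameters, and the telemetry field names are assumptions for illustration; `predict` and `detect_attack` stand in for calls to the two deployed endpoints, and `emit` stands in for whatever forwards events to the SIEM.

```python
import random
import time
from concurrent.futures import ThreadPoolExecutor


def guarded_predict(payload, predict, detect_attack, num_classes,
                    action="block", emit=print, rng=random):
    """Score a payload with both models and apply a remedial action on attack.

    Returns the main model's prediction for clean payloads. For flagged
    payloads, returns None when action is "block" or a random class index
    when action is "randomize".
    """
    if action not in ("block", "randomize"):
        raise ValueError("action must be 'block' or 'randomize'")

    # Call the main model and the defense model in parallel.
    with ThreadPoolExecutor(max_workers=2) as pool:
        prediction_future = pool.submit(predict, payload)
        verdict_future = pool.submit(detect_attack, payload)
        prediction = prediction_future.result()
        is_attack = verdict_future.result()

    if not is_attack:
        return prediction

    # Telemetry record for a SIEM; the field names are illustrative.
    emit({
        "time": time.time(),
        "event": {"type": "ai_attack_detected", "action": action},
    })
    return None if action == "block" else rng.randrange(num_classes)
```

Running both models in parallel keeps the added latency close to that of the slower of the two calls, at the cost of always computing a prediction even for payloads that end up blocked.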

Use Case: Secure a Breast Cancer Screening AI Algorithm

A Germany-based healthcare startup is using patented machine learning algorithms for accurate detection of breast cancer. To protect this core intellectual property from model extraction, evasion, and poisoning, the company needed a comprehensive security solution.

The company used AIShield’s AI security product to conduct a vulnerability assessment of its AI model and improve its security posture.

Key benefits of AIShield include:

- Secures the core IP of ML algorithms for breast cancer detection.

- Protects against manipulation through model evasion attacks and ensures patient safety.

- Helps adhere to medical cybersecurity guidelines and AI-based Software as a Medical Device (SaMD) cybersecurity requirements.

Use Case: Reduce Operational Losses from Credit Card Transaction Fraud

A prominent UK bank, handling over 30% of the nation’s £300 billion debit and credit card transactions, experienced nearly £70 million in external fraud losses in 2021 despite having a fraud detection model. This represented about 70% of total losses, marking it as the highest operational risk.

The bank’s CTO, along with the AI/ML, risk, and cybersecurity teams, aims to strengthen the current fraud detection model to decrease these losses and increase profit margins.

The AIShield team and the bank’s cybersecurity and AI teams conducted a joint proof-of-concept using the AIShield SaaS API. A fraud detection ML model was hosted on-premises and API access via AWS PrivateLink was provided to consume the product API.

AIShield demonstrated automatic triggering of a vulnerability assessment as part of the model development workflow, with a reference implementation of SageMaker. In under six hours, the AIShield product generated a vulnerability assessment report, including explanations and defensive measures. These artifacts were delivered securely through signed URL mechanisms in S3.
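For readers unfamiliar with signed URLs in S3, the sketch below shows one way time-limited download links can be generated with the boto3 S3 client's `generate_presigned_url` method. It is not a description of how AIShield implements delivery; the helper name, bucket, keys, and one-hour expiry are assumptions.

```python
def presign_downloads(s3_client, bucket, keys, expires_in=3600):
    """Return {key: time-limited HTTPS URL} for downloading each object."""
    return {
        key: s3_client.generate_presigned_url(
            "get_object",
            Params={"Bucket": bucket, "Key": key},
            ExpiresIn=expires_in,  # link lifetime in seconds
        )
        for key in keys
    }


# Usage with a real client (bucket and keys are placeholders):
#   import boto3
#   links = presign_downloads(
#       boto3.client("s3"),
#       "my-bucket",
#       ["aishield/run-1/report.pdf"],
#   )
```

Anyone holding a link can fetch the object until it expires, so short lifetimes and narrow key lists are the usual choice.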

With its transparent and objective assessment of residual risk, the solution has the potential to significantly improve the bank’s ability to reduce residual operational risk by up to 15%. The risk management team is now able to make quick and secure releases of fraud detection models in just eight hours, 11x faster and with a 30x productivity boost compared to the previous process.

Solution Benefits

AIShield offers a free trial for users to explore its capabilities and supports on-premises deployment with sales support.

Technically, AIShield reduces vulnerability detection and remediation time for AI models from months to hours and provides reference implementations on GitHub. The benefits to businesses include an augmented return on investment (ROI), decrease in critical vulnerabilities, and estimated cost savings ranging from 40-60% of the security expenditure in AI projects. These advantages ensure a rapid realization of value through brand protection and regulatory compliance.

| Stage/Persona | Features | Usability | Support |
| --- | --- | --- | --- |
| Development | | | |
| Deployment | | | |

Conclusion

This post demonstrated the importance of AI security for organizations and how the AIShield security product can be integrated into Amazon SageMaker ML pipelines. The solution empowers organizations to leverage the combined capabilities of AIShield and SageMaker ML workflows, maximizing the value derived from their AI/ML initiatives with confidence and security assurance.

AIShield has received notable accolades for its technology, including the CES Innovation Award 2023, IoT World Congress Award 2023: Best Cybersecurity Solution, and recognition from Gartner in its Market Guide for AI Trust, Risk and Security Management.

Learn more about AIShield in AWS Marketplace.

The sample code; software libraries; command line tools; proofs of concept; templates; or other related technology (including any of the foregoing that are provided by our personnel) is provided to you as AWS Content under the AWS Customer Agreement, or the relevant written agreement between you and AWS (whichever applies). You should not use this AWS Content in your production accounts, or on production or other critical data. You are responsible for testing, securing, and optimizing the AWS Content, such as sample code, as appropriate for production grade use based on your specific quality control practices and standards. Deploying AWS Content may incur AWS charges for creating or using AWS chargeable resources, such as running Amazon EC2 instances or using Amazon S3 storage.

Bosch – AWS Partner Spotlight

Bosch Global Software Technologies (BGSW) is an AWS Partner that’s using machine learning solutions to improve its manufacturing, healthcare, and IoT solutions.