AWS Partner Network (APN) Blog

Cazena’s Instant AWS Data Lake: Accelerating Time to Analytics from Months to Minutes

By John Piekos, VP, Engineering at Cazena

By Shashi Raina, Partner Solutions Architect at AWS


A cloud data lake is an architecture pattern to build a data platform for generalized data processing that is provisioned automatically in the cloud.

It leverages virtualized infrastructure to support near limitless amounts of structured and semi-structured data, as well as a wide variety of analytical processing above and beyond those offered by standard SQL data warehouses.

A production cloud data lake is more than vast amounts of data stored in a cloud object store. It supports a variety of users, from data analysts to data engineers and data scientists.

Given the breadth of use cases, data lakes need to be a complete analytical environment with a variety of analytical tools, engines, and languages (such as SQL, R, Python, Java, and Scala) supporting a variety of workloads. These include traditional analytics, business intelligence, streaming event and Internet of Things (IoT) processing, advanced machine learning, and artificial intelligence processing.

In this post, we explore how Cazena builds and deploys a production-ready data lake for customers in minutes.

Cazena is an AWS Advanced Technology Partner with the AWS Data & Analytics Competency. Cazena delivers a production-ready instant AWS cloud data lake built on the full set of Amazon Web Services (AWS) analytics services.

The Challenge

It’s possible for organizations to build cloud data lakes themselves. AWS makes it easy to spin up analytic engines such as Amazon EMR or Amazon Redshift.

However, there are two challenges in delivering a data lake that is fully secure, production-ready, and capable of servicing a variety of users, use cases, and analytical tooling, all within budget.

The first challenge is staffing: acquiring engineering talent with the cloud skills needed to build the data lake. The second challenge is the time it takes to design and implement a production data lake.

The Solution

Cazena’s Instant AWS Data Lake is designed to deliver a production-ready software-as-a-service (SaaS) platform experience for your analytics and machine learning (ML) workloads, instantly.

Like a typical SaaS offering, Cazena’s AWS data lake for analytics is fully secured, production-ready, and provisioned in just minutes. Ongoing maintenance, management, monitoring, and system tuning are handled by Cazena.

This post will examine the following requirements that comprise a SaaS data lake experience:

- Provisioning and SaaS orchestration: Automation allows Cazena to instantly deliver a custom-sized, production-ready cloud data lake, including federated single sign-on (SSO) and data encrypted in motion and at rest.

- Continuous ops: DevOps is integrated and automated, eliminating the need to hire engineers to maintain the ongoing health of your Cazena data lake. A production-ready service must also provide integrated SecOps: a secure environment with built-in intrusion and anomaly detection, malware prevention, vulnerability scans, log capture, and full transparency and auditing capabilities.

- Self-service analytic console: Analytic users can easily connect to endpoints to use best-of-breed tooling. This may include AWS native services such as Amazon SageMaker or Amazon Athena, or third-party tools such as StreamSets, Tableau, or DataRobot.

Data Lake Provisioning and SaaS Orchestration

Cazena delivers a fully capable and secured analytic data lake platform that is ready for production use as soon as it is provisioned.

Cazena uses Terraform to automate data lake provisioning, executing over 350 configuration steps to provision a custom-sized, production-ready AWS data lake in less than 30 minutes.

Each data lake is a single-tenant environment deployed in a new virtual private cloud (VPC). In this way, a customer’s data lake resources are not shared with other customers. Further, the size and shape of each EMR environment (the number of nodes and size of each node) is customized to the customer’s expected analytic workloads, automatically scaled as demand dictates.

The services exposed by the EMR environment can vary per data lake, but typically include Spark, Hive, Livy, a SQL engine (Presto in this case), Hue, Jupyter notebooks, Oozie, and others.
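As a rough illustration of sizing that is "automatically scaled as demand dictates," the sketch below applies a simple proportional scaling rule within fixed bounds. It is not Cazena's implementation: the `ClusterShape` fields, the 70% utilization target, and the instance type are all assumptions made for the example.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class ClusterShape:
    """Hypothetical description of an EMR environment's size and shape."""
    instance_type: str  # size of each node, e.g. "m5.2xlarge" (assumed)
    node_count: int     # current number of nodes
    min_nodes: int      # lower bound chosen at provisioning time
    max_nodes: int      # upper bound, e.g. derived from a cost ceiling


def desired_node_count(shape: ClusterShape, utilization: float,
                       target: float = 0.70) -> int:
    """Return a node count that would move utilization back toward target.

    utilization is the observed fraction of cluster capacity in use;
    values above 1.0 mean work is queuing.
    """
    if utilization < 0:
        raise ValueError("utilization must be non-negative")
    # Proportional rule: nodes needed = current nodes * observed / target.
    needed = math.ceil(shape.node_count * utilization / target)
    # Never scale outside the bounds agreed for this data lake.
    return max(shape.min_nodes, min(shape.max_nodes, needed))
```

For example, a 10-node environment bounded to 4 to 20 nodes and running at 95% utilization would be asked to grow to 14 nodes, while one idling at 10% would shrink only as far as its 4-node floor.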

In addition to capacity and compute provisioning, the data lake is provisioned with a set of Cazena-specific services that provide the backbone of Cazena orchestration, SSO, and identity federation.

These services also include automated DevOps and SecOps, keeping the data lake operational 24×7:

- Identity Management Service (IDM): IDM federates with your enterprise LDAP or Active Directory (AD) servers, as well as a Kerberos service and realm, providing user account access and identity. Cazena IDM provides SSO capabilities for all data lake services.

- Cazena Console: Cazena configures the Cazena Console and an application discovery service. The console provides a convenient task-oriented UI through which you can access analytics services.

- Infrastructure management: The Cazena management service provides data lake service monitoring, availability, and security.

Once the Cazena Instant AWS Data Lake is provisioned, it’s immediately ready for production use.

Figure 1 – 300+ configuration steps define an enterprise-ready Cazena Instant AWS Data Lake.

Continuous Ops

All components of the Cazena Instant AWS Data Lake are continually monitored for health to ensure uptime and high availability. Alerts are handled automatically or escalated to an on-call engineer if the issue requires manual intervention.

However, Cazena’s continuous DevOps goes beyond basic system availability and resource monitoring, and addresses the following operations:

- Software upgrades: Cazena regularly enhances service components within the cloud data lake and adds new capabilities in the EMR ecosystem as they become available. Periodically, Cazena delivers new product releases automatically, as part of the Cazena operations service.

- Operating system and software patching: Cazena ensures that known security flaws for operating system kernels and software packages are patched within a security SLA timeframe. In addition, operating systems need to be upgraded periodically due to security issues or end-of-life considerations. Cazena software handles these procedures automatically.

- Cloud cost SLA: Cloud costs can skyrocket if left unchecked. Cazena offers a cost SLA: it runs your workloads within an agreed-upon cost and alerts you if analytic job activity exceeds the capacity of the infrastructure or, when auto scaling is in use, your budget.

- Compute capacity expansion: If jobs are bottlenecked on CPU, network, or some other constraint, Cazena is alerted and takes action to resolve the issue. Infrastructure may be expanded automatically, or resource allocations may be adjusted, to extract the best performance from the infrastructure.

- Key rotation: TLS certificates ensure encrypted communication between processes. These certificates expire periodically, so they and their keys must be rotated without service interruption.
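To make the key rotation item concrete, here is a minimal sketch of the kind of check an automated rotation job might run. It is an illustration only, not Cazena's tooling: the function name and the 30-day renewal window are assumptions. The `not_after` string uses the format that Python's `ssl` module reports for a certificate's `notAfter` field.

```python
import ssl
from datetime import datetime, timedelta, timezone


def needs_rotation(not_after: str, now: datetime,
                   window: timedelta = timedelta(days=30)) -> bool:
    """Return True if a certificate expires within `window` of `now`.

    not_after: expiry in the form "Jun  1 12:00:00 2030 GMT".
    now: a timezone-aware datetime.
    The 30-day default window is an assumed policy, not a Cazena setting.
    """
    # ssl.cert_time_to_seconds converts the certificate time to epoch seconds.
    expires = datetime.fromtimestamp(
        ssl.cert_time_to_seconds(not_after), tz=timezone.utc
    )
    # Already-expired certificates produce a negative delta and also qualify.
    return expires - now <= window
```

Rotating ahead of expiry, rather than at expiry, leaves time to deploy the new certificate alongside the old one so that services are not interrupted.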

SecOps: Data Lake Security and Compliance

Each Cazena Instant AWS Data Lake is private, delivered and operated in a single-tenant cloud architecture designed to keep organizations secure and isolated from one another.

There are no public IPs; the Instant AWS Data Lake sits behind an IPsec firewall, which can be paired with a customer’s firewall to provide secure access to services within the data lake.

All communication with the data lake is encrypted over TLS. Data at rest is encrypted with a customer-managed key. Data lakes are continuously monitored for malicious activity and unauthorized behavior using industry-leading firewalls.

Advanced threat prevention features include antivirus, anti-spyware, vulnerability protection, URL filtering, file blocking and data filtering, and more.

Cazena captures all activity for the data lake and delivers the events to a SecOps repository as the historical archive. This archive provides auditable transparency for data lake usage.

Self-Service Analytic Console

Once data resides in your data lake, users can access analytic tools and data lake services as if they reside within your enterprise.

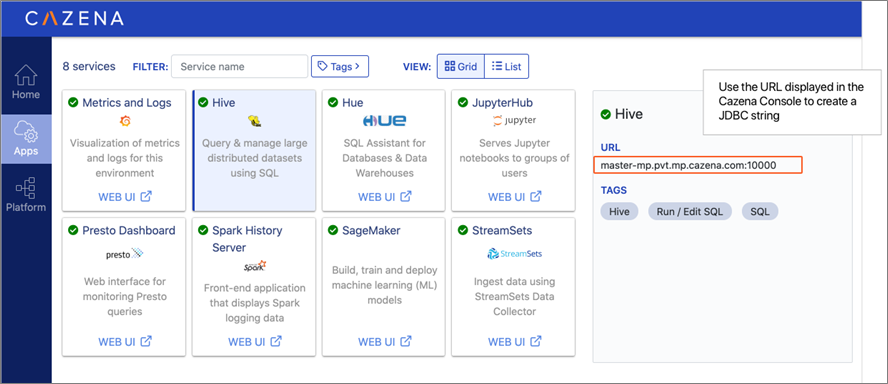

The Cazena console provides links to a wide variety of tools and applications, as well as connection strings for JDBC or ODBC connections. Simply configure the connection strings using the Cazena-supplied private IP (or URL) and provide proper authorization. It’s also possible to add custom or proprietary applications via Cazena’s AppCloud.

Figure 2 – Cazena Instant AWS Data Lake applications.
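As an illustration of configuring a JDBC connection string, the helper below assembles URLs in the standard Hive and Presto JDBC formats. The host, ports, catalog name, and the helper itself are assumptions for the example; the actual values come from the Cazena console.

```python
def jdbc_url(engine: str, host: str, port: int,
             database: str = "default", tls: bool = True) -> str:
    """Build a JDBC URL for a data lake SQL endpoint (illustrative helper)."""
    if engine == "hive":
        # HiveServer2 form: jdbc:hive2://host:port/database;key=value
        url = f"jdbc:hive2://{host}:{port}/{database}"
        return (url + ";ssl=true") if tls else url
    if engine == "presto":
        # Presto form: jdbc:presto://host:port/catalog/schema?key=value
        # The "hive" catalog is an assumption about how tables are exposed.
        url = f"jdbc:presto://{host}:{port}/hive/{database}"
        return (url + "?SSL=true") if tls else url
    raise ValueError(f"unsupported engine: {engine}")


# Hypothetical private IP and ports; substitute the Cazena-supplied values.
print(jdbc_url("hive", "10.0.0.12", 10000))
print(jdbc_url("presto", "10.0.0.12", 8889, database="sales"))
```

Credentials are deliberately left out of the URL; they would be supplied separately through the client's usual authentication settings.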

Cazena in Action

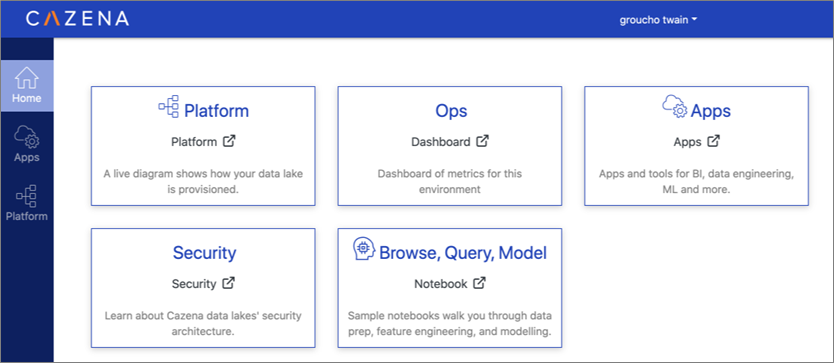

Cazena has provisioned an AWS data lake that you can access instantly. You can view the platform and ops dashboards, and you can also run analytics, including both SQL and machine learning workloads.

Note that a typical Cazena Instant AWS Data Lake is a single-tenant (single customer) deployment, customized to run your analytics and meet your cost SLA. However, the Try Cazena data lake allows the public to log in and interact with the data lake services to get a first-hand view of the Cazena environment. For this reason, the Try Cazena data lake is multi-tenant.

Log in to the Try Cazena environment with your LinkedIn credentials for immediate access. To request personalized login credentials, email info@cazena.com.

The Try Cazena demonstration environment is free to access. In less than 10 minutes, a tutorial notebook will guide you through browsing, preparing, and querying a sample data set. You can even generate an ML model that can make predictions on fares for New York City taxi cabs.

Figure 3 – Try Cazena home page.

At the end of the tutorial, you will have interacted with data in the data lake, accessed many of the services of the data lake through SSO, and operated on data that’s encrypted both in motion and at rest.

Behind the scenes, Cazena handles the data lake maintenance, tuning and monitoring, and captures users’ activities that can be audited as needed via Cazena’s SecOps service.

A Real Data Lake Use Case

Bardess Group is a leading data analytics solution provider. Recently, their data scientists were engaged to analyze water treatment and filtration pipelines for a Central American water provider.

Their challenge was to leverage terabytes of historical machine-generated events from the pipeline, pump, and treatment equipment to glean predictive maintenance and quality alerts for the water delivery pipeline.

Bardess used the Cazena Instant AWS Data Lake and began ingesting this sensitive data on the first day of the project. From there, Bardess data scientists used the data lake and embedded AWS analytics to pre-process the data, and then executed machine learning models using Amazon SageMaker and JupyterHub notebooks, generating insights within the first three days of the project.

“With Cazena’s Instant AWS Data Lake, we can focus on designing the data architecture, doing exploratory data analysis, and starting to train models to support the data science project—all in the same day,” says Daniel Parton, Ph.D., Lead Data Scientist at Bardess.

Next Steps

Cazena’s Instant AWS Data Lake can shorten your time to analytics from months to minutes. Cazena achieves this promise by automatically provisioning a fully capable and secured analytic data lake platform that’s ready for immediate production use.

Cazena also manages and automates the ongoing ops required to keep the system healthy once provisioned.

A Cazena cloud data lake can be customized and provisioned to securely host your own data and analytics in minutes.

If you’d like your own dedicated Instant AWS Data Lake, please contact us and request a private offer for a single-tenant deployment through AWS Marketplace.


Cazena – AWS Partner Spotlight

Cazena is an AWS Data & Analytics Competency Partner. A launch partner in the AWS ISV Workload Migration Program, Cazena helps enterprises around the world accelerate AWS migrations and drive faster outcomes from data and analytics.

Contact Cazena | Solution Overview | AWS Marketplace
