AWS Partner Network (APN) Blog

SaaS Identity and Routing with Istio Service Mesh and Amazon EKS

By Farooq Ashraf, Sr. Solutions Architect – AWS

Many software-as-a-service (SaaS) providers are leveraging Amazon Elastic Kubernetes Service (EKS) to build their solutions on Amazon Web Services (AWS).

Amazon EKS provides SaaS builders with a range of different constructs that can be used to implement multi-tenant strategies. Recently, we introduced an AWS SaaS Factory EKS Reference Architecture that illustrated how these different constructs could be applied in an end-to-end working environment.

To build on the reference solution, I wanted to look at how Istio Service Mesh could be used to implement an identity model that simplified the mapping of individual tenants and routing of traffic to isolated tenant environments.

In this post, I will develop an architecture based on Amazon EKS that demonstrates a siloed SaaS deployment model, using Istio Service Mesh to manage request authentication and per-tenant routing. We’ll be walking through the solution implementation with a working code sample.

Istio is an open-source service mesh that many SaaS providers use for deploying their multi-tenant applications. It provides features such as traffic management, security, and observability at the Kubernetes pod level.

The SaaS User Pool and Routing Challenge

In the EKS SaaS reference architecture, the system uses Amazon Cognito as its identity provider, associating each tenant with a separate user pool. This approach allows each tenant to have its own identity policies. The challenge with this model is that it adds complexity to the authentication flow: each tenant must first be resolved to its specific user pool before its users can be authenticated.

To support this approach, SaaS providers must build this functionality into their system. Somewhere in the code for your solution, you’ll have to resolve the incoming context to a tenant and retrieve all the information needed to authenticate each user.

Once a user is authenticated, we also face routing challenges. For this example, we have separate EKS namespaces for tenants and we’d like a clear, simple way to have our inbound requests routed to the appropriate namespace.

The general goal here is to move route resolution, authentication, and authorization out of the application and into a proxy that can resolve these mappings in a more natural and maintainable way.

High-Level Architecture

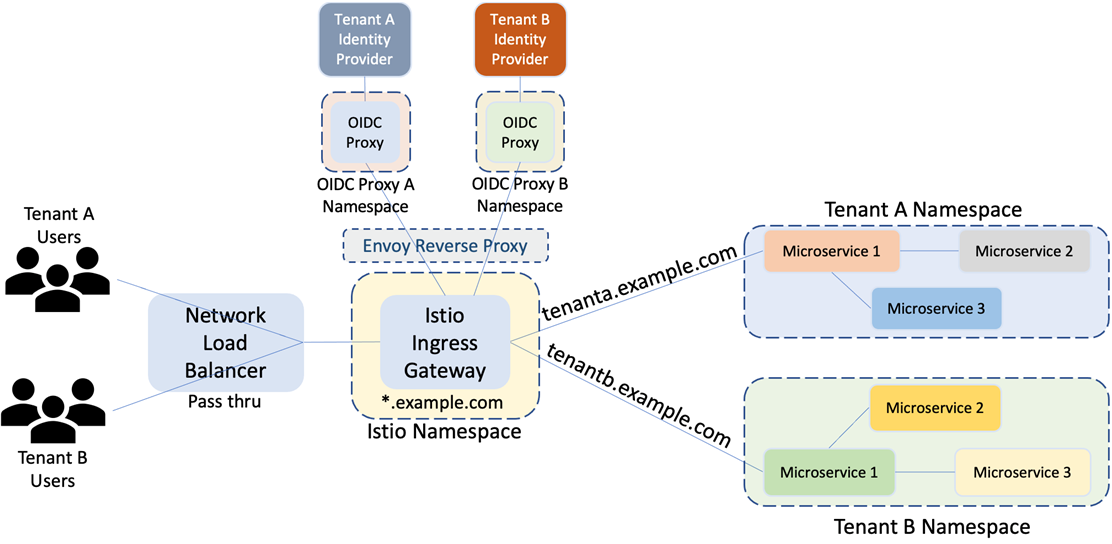

Before we get into the specifics, let’s start by looking at a high-level view of the architecture that is used to support our identity, routing, and authorization model.

In this architecture, Kubernetes namespaces provides the building block for isolation of resources.

Figure 1 – High-level architecture.

In the illustration above, you can see that each tenant’s microservices are deployed into a Kubernetes namespace. With every service deployment, Kubernetes creates a name record in the internal DNS, as <service-name>.<namespace-name>.svc.cluster.local, which becomes the service endpoint referred to by the microservice and the Istio VirtualService construct. This association of a DNS name with the deployed service provides the mechanism for Istio VirtualService to route traffic by mapping an external host DNS name to a microservice.

Routing rules are defined for each VirtualService based on host name, URL path, and other header information, thereby mapping matching requests to backend microservices. Network traffic at the edge is directed by the Istio Ingress Gateway, which is associated with a load balancer and exposes each VirtualService to the external world. The Ingress Gateway is bound to an Envoy reverse proxy that transparently relays authorization requests to OpenID Connect (OIDC) proxies, which provide external authorization per tenant.
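As a minimal sketch of the host-based routing described above, the following Python builds a VirtualService manifest for one tenant and prints it as JSON, which kubectl accepts alongside YAML. The namespace `tenanta-ns`, the gateway reference `istio-system/tenant-gateway`, and the resource name are illustrative assumptions and are not taken from the sample repository; port 9080 is the port the bookinfo product page service listens on.

```python
import json


def service_fqdn(service, namespace, cluster_domain="cluster.local"):
    """Return the in-cluster DNS name Kubernetes creates for a Service."""
    return f"{service}.{namespace}.svc.{cluster_domain}"


def tenant_virtual_service(tenant, domain, namespace, gateway, service, port):
    """Build a VirtualService that maps <tenant>.<domain> to one backend Service."""
    return {
        "apiVersion": "networking.istio.io/v1beta1",
        "kind": "VirtualService",
        # Resource name is a hypothetical naming convention.
        "metadata": {"name": f"{tenant}-bookinfo", "namespace": namespace},
        "spec": {
            "hosts": [f"{tenant}.{domain}"],  # external host name to match
            "gateways": [gateway],            # "<namespace>/<gateway-name>"
            "http": [
                {
                    "match": [{"uri": {"prefix": "/"}}],
                    "route": [
                        {
                            "destination": {
                                "host": service_fqdn(service, namespace),
                                "port": {"number": port},
                            }
                        }
                    ],
                }
            ],
        },
    }


manifest = tenant_virtual_service(
    "tenanta", "example.com", "tenanta-ns",
    "istio-system/tenant-gateway", "productpage", 9080,
)
print(json.dumps(manifest, indent=2))
```

Because the tenant name drives both the external host and the target namespace, onboarding another tenant amounts to calling the same function with different arguments.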

Istio’s External Authorization feature was introduced in Istio release 1.9 and is built on two facets: an External Authorizer definition and an Authorization Policy. For a detailed discussion of this feature, refer to Istio’s blog post about better external authorization.

An External Authorizer definition specifies an external authorization service provider with appropriate parameters. This feature works in conjunction with Istio’s Authorization Policy construct, providing the capability to allow or deny requests based on the authorizer’s decision. With this enhanced capability, a policy enforced on an Istio Ingress Gateway enables authorization of incoming requests via the External Authorizer by initiating authorization code flows with an identity provider (IdP), such as an Amazon Cognito user pool.
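To make the External Authorizer definition more concrete, here is a hedged sketch that builds the `extensionProviders` fragment of Istio's mesh configuration in Python. The provider name `tenant-ext-authz`, the Envoy service address, the port, and the header lists are assumptions chosen for illustration. The field names follow the current `envoyExtAuthzHttp` provider schema; earlier releases used `includeHeadersInCheck` where this sketch uses `includeRequestHeadersInCheck`.

```python
import json


def ext_authz_provider(name, service, port):
    """Build one meshConfig.extensionProviders entry for an HTTP ext-authz service."""
    return {
        "name": name,
        "envoyExtAuthzHttp": {
            "service": service,  # in-cluster DNS name of the authorizer
            "port": port,
            # Request headers passed to the authorizer for its decision.
            "includeRequestHeadersInCheck": ["authorization", "cookie"],
            # Headers copied from the authorizer's response onto the
            # request that continues upstream when the check passes.
            "headersToUpstreamOnAllow": ["authorization"],
            # Headers returned to the client when the check fails, for
            # example a redirect to the sign-in page.
            "headersToDownstreamOnDeny": ["content-type", "set-cookie", "location"],
        },
    }


mesh_config = {
    "extensionProviders": [
        # Service name and port below are hypothetical.
        ext_authz_provider(
            "tenant-ext-authz",
            "envoy-reverse-proxy.envoy-ns.svc.cluster.local",
            80,
        )
    ]
}
print(json.dumps(mesh_config, indent=2))
```

An Authorization Policy then refers to the provider by its `name`, so a single definition can serve every tenant host.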

As requests are initiated from clients to the public host DNS names (endpoints), they are serviced by a Network Load Balancer, which acts as a transparent pass-through and delivers requests to the Ingress Gateway. The Envoy Proxy, defined as an External Authorizer, receives the requests from the Ingress Gateway and transparently fans out each request to the OIDC proxy instance that matches the host name in the request header.

After successful authorization from the OIDC proxy, the Gateway forwards requests to the matching application in the appropriate namespace, as defined by the routing rules.

Network Load Balancer has been employed as the entry point of choice in this architecture, as it offers several benefits including static public IP, transparent pass-through, low latency, resilience, and high scalability. A Network Load Balancer also makes the architecture usable for both public and AWS PrivateLink connectivity to SaaS applications.

We’ve employed oauth2-proxy as the OIDC proxy solution, deploying one oauth2-proxy instance per tenant, which provides the following benefits:

- Isolation of tenant-specific sensitive data.

- Scaling each tenant independently.

- Maintenance or configuration changes with controlled impact.

- Reduced scope of impact.

With this architecture, we have essentially moved the authentication and routing management to the gateway and OIDC proxy.

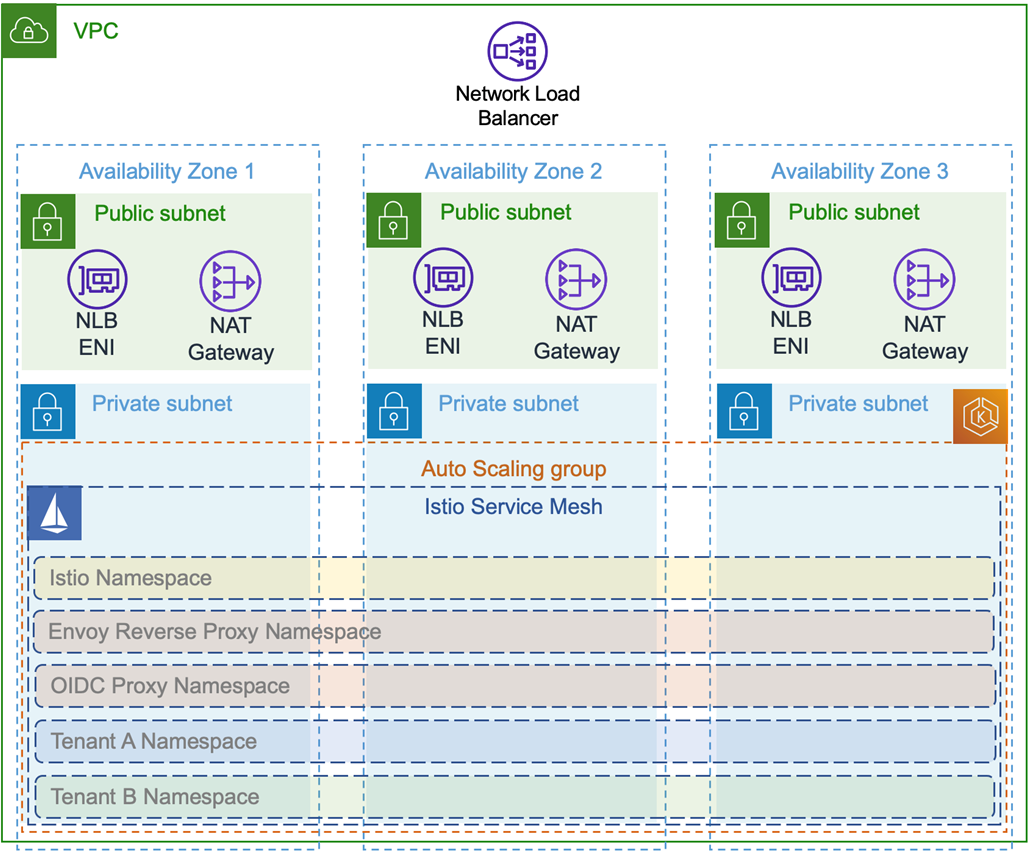

Setting up the Infrastructure

Before we get into the specifics of onboarding tenants, let’s look at the baseline infrastructure we need to have in place to make this work. Below is a view of the key elements.

Figure 2 – Foundational infrastructure.

We deploy a new Amazon Virtual Private Cloud (VPC) with separate public and private subnets, along with NAT Gateways, an Internet Gateway, Route Tables, and Security Groups. The EKS worker nodes are deployed in private subnets, while NAT Gateways and Network Load Balancer endpoints are placed in the public subnets.

Network Load Balancer endpoints provide access for clients to the application over the internet, while NAT Gateways allow Pods deployed in EKS to reach the Docker repositories from which the Envoy Proxy, oauth2-proxy, and sample application images are pulled. Auto scaling is enabled for EKS worker nodes in order to cater for spikes in traffic.

We’ll use the eksctl CLI to deploy EKS and the related infrastructure. eksctl generates AWS CloudFormation templates to create the various components that are needed to run a functioning EKS cluster. When creating EKS Managed Nodes, eksctl also creates AWS Identity and Access Management (IAM) roles with the permissions required for the operation of the cluster.

We also deploy the AWS Load Balancer Controller add-on for automatic creation of load balancers, and Istio software for enabling the core building blocks of external authorization and request routing.

An Istio Ingress Gateway is configured to be deployed as part of the installation with annotations that trigger the AWS Load Balancer Controller to create an associated Network Load Balancer. Istio services, by default, are deployed in the istio-system Kubernetes namespace.

In order to provide isolation between authorization flow and application traffic, a dedicated namespace is created for each tenant’s workload and also for the OIDC proxies. The Envoy Proxy is deployed in its own dedicated namespace.

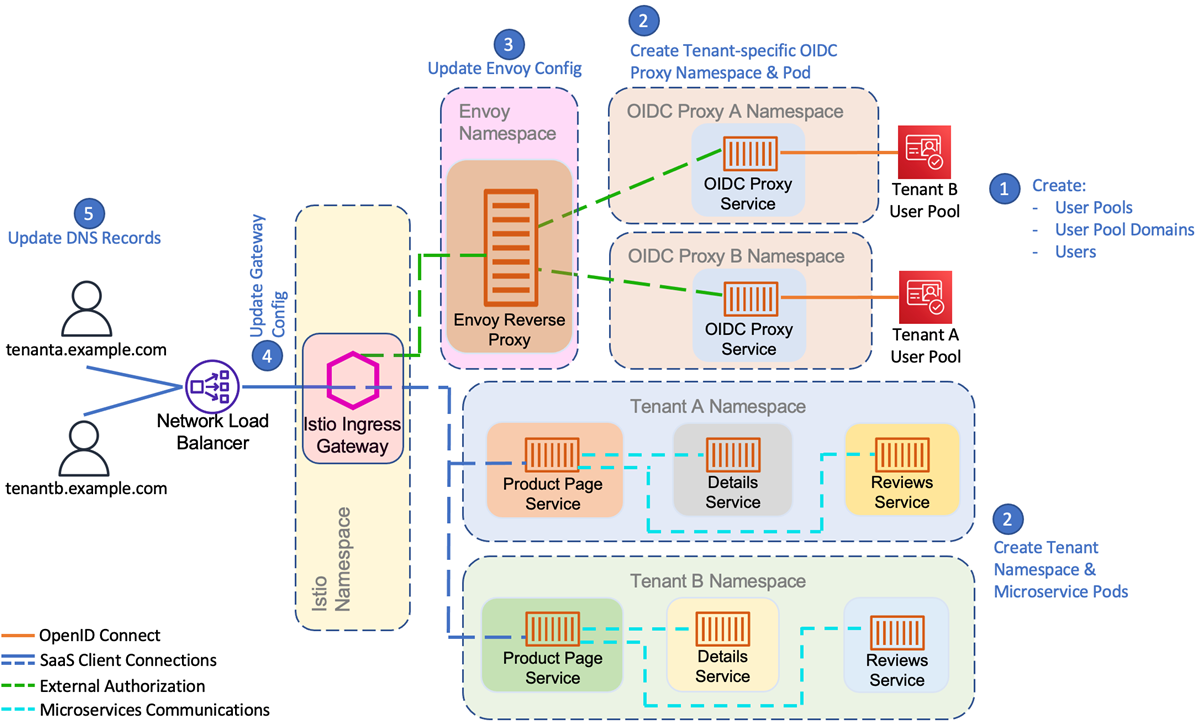

Tenant Onboarding

Now that our environment is set up, let’s look at how we configure this infrastructure as each tenant is onboarded.

Tenant onboarding is carried out using a combination of bash scripts and Python code. The example discussed in this post is deployed by running the scripts from a Linux shell, as described in the README file.

These scripts can be integrated into a CI/CD pipeline such as Amazon CodePipeline for automation purposes, which can then be called from a Tenant Onboarding portal. An onboarding portal and associated CI/CD pipeline are outside the scope of this post. Examples of tenant onboarding portal and provisioning pipeline can be found in the sample code for AWS SaaS Factory EKS Reference Architecture.

In this example, each tenant that signs up for our SaaS service is associated with a single Amazon Cognito user pool. We also create an app client per pool, and a domain for sign in with Cognito Hosted UI. On successful authentication, Amazon Cognito issues JSON Web Tokens (JWTs) encapsulating user claims.

Every user pool is associated with a domain which identifies the Hosted UI for OAuth 2.0-based authorization flows. This Hosted UI manages the user authentication experience and can be customized with brand-specific logos.

The users that belong to a tenant organization are stored within the tenant’s user pool along with their standard and custom attributes. Standard attributes include name, email address, phone number, etc. The custom attributes can store additional data that will be used to define our user’s profile and their binding to a specific tenant.

Figure 3 – Tenant onboarding.
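The per-tenant Cognito resources described above (user pool, app client, and Hosted UI domain) could be created along the following lines. This is a sketch under stated assumptions rather than the code from the sample repository: the function expects a boto3 `cognito-idp` client to be passed in, and the pool name, client name, domain prefix, OAuth scopes, and callback path (`/oauth2/callback`, the default callback path of oauth2-proxy) are illustrative choices.

```python
def onboard_tenant_identity(cognito, tenant, domain):
    """Create a user pool, an app client, and a Hosted UI domain for one tenant.

    `cognito` is expected to behave like boto3.client("cognito-idp").
    All resource names below are hypothetical naming conventions.
    """
    pool = cognito.create_user_pool(PoolName=f"{tenant}-user-pool")
    pool_id = pool["UserPool"]["Id"]

    app_client = cognito.create_user_pool_client(
        UserPoolId=pool_id,
        ClientName=f"{tenant}-app-client",
        GenerateSecret=True,                   # oauth2-proxy needs a client secret
        AllowedOAuthFlowsUserPoolClient=True,
        AllowedOAuthFlows=["code"],            # authorization code flow
        AllowedOAuthScopes=["openid", "email", "profile"],
        SupportedIdentityProviders=["COGNITO"],
        CallbackURLs=[f"https://{tenant}.{domain}/oauth2/callback"],
    )["UserPoolClient"]

    # The domain prefix must be unique within the AWS Region.
    domain_prefix = f"{tenant}-signin"
    cognito.create_user_pool_domain(Domain=domain_prefix, UserPoolId=pool_id)

    return {
        "user_pool_id": pool_id,
        "client_id": app_client["ClientId"],
        "client_secret": app_client["ClientSecret"],
        "hosted_ui_prefix": domain_prefix,
    }
```

With boto3 installed and AWS credentials configured, a call such as `onboard_tenant_identity(boto3.client("cognito-idp"), "tenantc", "example.com")` returns the values that the tenant's OIDC proxy configuration needs.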

Along with the creation of a user pool, the tenant’s oauth2-proxy configuration is dynamically generated, extracting the required information (clientID, clientSecret, oidc_issuer_url, redirect_url) from each user pool as it gets created. A sample oauth2-proxy configuration file is shown below.
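As an illustration of how such a configuration might be generated, the sketch below renders an oauth2-proxy configuration file from the values extracted from a tenant's user pool. The issuer URL follows the Amazon Cognito format `https://cognito-idp.<region>.amazonaws.com/<userPoolId>`. The listening address, the static upstream, the callback path, and all sample values are assumptions for this example, not settings confirmed by the sample repository.

```python
def render_oauth2_proxy_config(tenant, domain, region, user_pool_id,
                               client_id, client_secret, cookie_secret):
    """Render a per-tenant oauth2-proxy config file as a string."""
    issuer = f"https://cognito-idp.{region}.amazonaws.com/{user_pool_id}"
    lines = [
        'provider = "oidc"',
        f'oidc_issuer_url = "{issuer}"',
        f'client_id = "{client_id}"',
        f'client_secret = "{client_secret}"',
        f'redirect_url = "https://{tenant}.{domain}/oauth2/callback"',
        f'cookie_secret = "{cookie_secret}"',
        'email_domains = ["*"]',            # accept any authenticated user of the pool
        'http_address = "0.0.0.0:4180"',    # assumed listening address
        'upstreams = ["static://200"]',     # answer authorization checks directly
        "set_authorization_header = true",  # return the token in a response header
    ]
    return "\n".join(lines) + "\n"


# Placeholder values only; real secrets should not be hard-coded.
print(render_oauth2_proxy_config(
    "tenanta", "example.com", "us-west-2", "us-west-2_EXAMPLE",
    "example-client-id", "example-client-secret", "example-cookie-secret",
))
```

Keeping one rendered file per tenant matches the per-tenant proxy deployment: each instance only ever holds the client secret of its own user pool.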

The Envoy Proxy configuration is updated with each tenant’s user pool details. The following is a snippet of a YAML file for configuring Envoy with an associated oauth2-proxy instance.

The YAML file is created and updated using Python. With every update, the YAML configuration is baked into the Docker image for Envoy Proxy.
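One way the Python side of this step could look is sketched below: for each tenant it produces an Envoy virtual host that matches the tenant's host name and a cluster that points at that tenant's oauth2-proxy Service. The Service naming scheme, the namespace `oauth2-proxy-ns`, and port 4180 are assumptions. The output is printed as JSON, which Envoy accepts as well as YAML; the fragments would still need to be merged into a complete listener and bootstrap configuration.

```python
import json


def envoy_tenant_entries(tenant, domain,
                         proxy_namespace="oauth2-proxy-ns", proxy_port=4180):
    """Return (virtual_host, cluster) fragments that route one tenant's
    authorization requests to its own oauth2-proxy instance."""
    cluster_name = f"{tenant}_oauth2_proxy"
    # Hypothetical Service name for the tenant's oauth2-proxy deployment.
    upstream = f"oauth2-proxy-{tenant}.{proxy_namespace}.svc.cluster.local"

    virtual_host = {
        "name": tenant,
        "domains": [f"{tenant}.{domain}"],  # matched against the Host header
        "routes": [{"match": {"prefix": "/"}, "route": {"cluster": cluster_name}}],
    }
    cluster = {
        "name": cluster_name,
        "type": "LOGICAL_DNS",
        "connect_timeout": "5s",
        "load_assignment": {
            "cluster_name": cluster_name,
            "endpoints": [{
                "lb_endpoints": [{
                    "endpoint": {
                        "address": {
                            "socket_address": {
                                "address": upstream,
                                "port_value": proxy_port,
                            }
                        }
                    }
                }]
            }],
        },
    }
    return virtual_host, cluster


virtual_hosts, clusters = [], []
for name in ("tenanta", "tenantb"):
    vh, cl = envoy_tenant_entries(name, "example.com")
    virtual_hosts.append(vh)
    clusters.append(cl)

print(json.dumps({"virtual_hosts": virtual_hosts, "clusters": clusters}, indent=2))
```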

After the Envoy Proxy configuration, the Ingress Gateway Authorization Policy is updated to add the new tenant. The following is a snippet of a YAML file used for configuring the Gateway Authorization Policy that associates the External Authorizer previously defined with requests arriving for tenantX.example.com.
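A minimal sketch of such a policy, again generated from Python, is shown here. It uses Istio's `CUSTOM` action to delegate the decision to a named external authorizer for a list of tenant hosts. The policy name, the provider name `tenant-ext-authz`, and the `istio: ingressgateway` selector label (the label used by a default Istio ingress gateway installation) are assumptions.

```python
import json


def gateway_authz_policy(provider_name, tenant_hosts):
    """Build an AuthorizationPolicy that sends requests for the given hosts
    to an external authorizer before they are routed."""
    return {
        "apiVersion": "security.istio.io/v1beta1",
        "kind": "AuthorizationPolicy",
        # Policy name is hypothetical.
        "metadata": {"name": "tenant-ext-authz-policy", "namespace": "istio-system"},
        "spec": {
            "selector": {"matchLabels": {"istio": "ingressgateway"}},
            "action": "CUSTOM",
            # Must match the name of an extension provider in the mesh config.
            "provider": {"name": provider_name},
            "rules": [{"to": [{"operation": {"hosts": list(tenant_hosts)}}]}],
        },
    }


def add_tenant_host(policy, host):
    """Add a tenant host to the policy if it is not already listed."""
    hosts = policy["spec"]["rules"][0]["to"][0]["operation"]["hosts"]
    if host not in hosts:
        hosts.append(host)
    return policy


policy = gateway_authz_policy(
    "tenant-ext-authz", ["tenanta.example.com", "tenantb.example.com"]
)
add_tenant_host(policy, "tenantc.example.com")
print(json.dumps(policy, indent=2))
```

Onboarding a tenant then reduces to appending one host name and re-applying the policy, which is the kind of configuration-only change the architecture aims for.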

For this particular solution, you’ll notice that we’ve used the bookinfo sample application. It consists of three microservices:

- Product page: Application landing page.

- Details: Provides book information such as type, number of pages, and publisher.

- Reviews: Provides reviews about the book.

For demonstration purposes, we use the example.com domain in this post, and use tenanta.example.com and tenantb.example.com for our two sample tenant environments. A third sample tenant, tenantc.example.com, is added to demonstrate the onboarding experience.

To direct requests to the deployed Network Load Balancer, we add the public IP addresses of the load balancer to our local desktop’s hosts file against each tenant domain for name resolution. The same can be achieved by adding CNAME records to a DNS zone, pointing to the load balancer’s public endpoint.
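For local testing, the hosts file lines can be produced with a few lines of Python. This is only a convenience sketch: the IP address below comes from a range reserved for documentation and stands in for a real public address of the load balancer, which you would look up yourself.

```python
def hosts_entries(lb_ip, tenants, domain="example.com"):
    """Return hosts-file lines that map each tenant host name to one
    load balancer IP address."""
    return [f"{lb_ip} {tenant}.{domain}" for tenant in tenants]


# 203.0.113.10 is a placeholder address, not a real load balancer IP.
for line in hosts_entries("203.0.113.10", ["tenanta", "tenantb", "tenantc"]):
    print(line)
```

The printed lines can be appended to /etc/hosts on Linux or macOS, or to the equivalent hosts file on Windows.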

With this, you can point your browser to a tenant-specific endpoint, such as https://tenanta.example.com/bookinfo, which should launch the bookinfo application.

It’s important to note the authorization, authentication, and routing solution demonstrated in this architecture are decoupled from the deployed application. This means you can deploy any containerized application that uses this architecture.

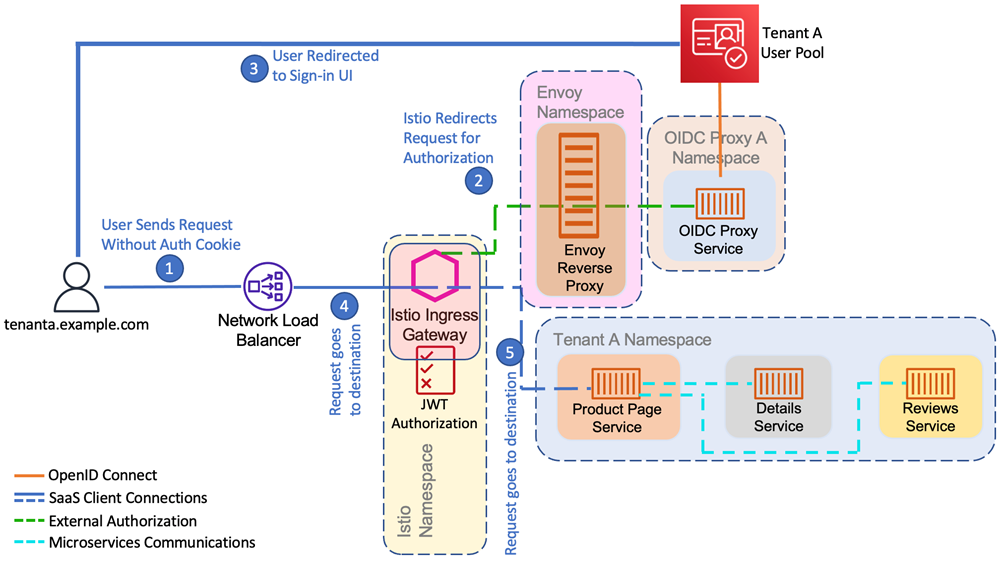

Request Flow

The flow of a request from end users to the SaaS application is shown in the following diagram.

Figure 4 – User request flow.

When an end user belonging to Tenant A initiates a request directed to tenanta.example.com, the browser sends it to the Network Load Balancer endpoint. The load balancer passes this request through to the Istio Ingress Gateway. As the Gateway identifies Host information in the HTTP request header, it accepts the request and forwards it via the Envoy Proxy to the tenant-specific oauth2-proxy for authorization.

If the incoming request contains an unexpired cookie, the oauth2-proxy retrieves the JWT (ID token) from the user pool, injects the token into the authorization header, and allows the request to flow through to the application. If the request cookie has expired or the user is unauthenticated, the browser is redirected to the Amazon Cognito Hosted UI for authentication.

The following HTTP headers are forwarded from the Istio Gateway after authorization to the application.

When the request is authorized, the Gateway forwards the request based on the host mapping rules to the product page service pod running in the namespace for Tenant A. The application, in turn, communicates with the other two microservices, details and reviews, to render the HTML page and return it to the client.

So, the requests initiated by a client, after going through authorization flow, get delivered to the SaaS application along with necessary claims establishing credentials. The application then performs necessary data operations as permitted by the claims, responding with the results of the requested operations.

Conclusion

In this post, I examined the key considerations for implementing SaaS identity and routing using Istio Service Mesh in an Amazon EKS environment.

Amazon EKS offers you a range of constructs to implement multitenancy in your SaaS solution, as we have previously seen in our AWS SaaS Factory EKS Reference Architecture. This post dives deep into identity and routing challenges and how you can use the capabilities of Istio Service Mesh in addressing those challenges.

Some key takeaways from the proposed architecture are:

- Decoupling of application from authentication, authorization, and routing.

- Transformation of a coding challenge into a configuration task.

- Simplification of tenant onboarding.

As you dig into the sample application that we have shared in this GitHub repository, you’ll get a better sense of the various building blocks of the solution that we have presented. The GitHub repository provides a more in-depth view of the solution and has detailed instructions to set up and deploy a sample environment, which will help in understanding all of the moving pieces of the environment.