AWS Architecture Blog

One to Many: Evolving VPC Design

Since its inception, the Amazon Virtual Private Cloud (VPC) has acted as the embodiment of security and privacy for customers who are looking to run their applications in a controlled, private, secure, and isolated environment.

This logically isolated space has evolved, and in its evolution has increased the avenues that customers can take to create and manage multi-tenant environments with multiple integration points for access to resources on-premises.

From One VPC: Single Unit of Networking

To be successful with the AWS Virtual Private Cloud you first have to define success for today and what success might look like as your organization’s adoption of the AWS cloud increases and matures. In essence, your VPCs should be designed to satisfy the needs of your applications today and must be scalable to accommodate future needs.

Classless Inter-Domain Routing (CIDR) notations are used to denote the size of your VPC. AWS allows you specify a CIDR block between /16 and /28. The largest, /16, provides you with 65,536 IP addresses and the smallest possible allowed CIDR block, /28, provides you with 16 IP addresses. Note, the first four IP addresses and the last IP address in each subnet CIDR block are not available for you to use, and cannot be assigned to an instance.

AWS VPC supports both IPv4 and IPv6. It is required that you specify an IPv4 CIDR range when creating a VPC. Specifying an IPv6 range is optional.

Customers can specify ANY IPv4 address space for their VPC. This includes but is not limited to RFC 1918 addresses.

After creating your VPC, you divide it into subnets. In an AWS VPC, subnets are not isolation boundaries around your application. Rather, they are containers for routing policies.

Isolation is achieved by attaching an AWS Security Group (SG) to the EC2 instances that host your application. SGs are stateful firewalls, meaning that connections are tracked to ensure return traffic is allowed. They control inbound and outbound access to the elastic network interfaces that are attached to an EC2 instance. These should be tightly configured, only allowing access as needed.

It is our best practice that subnets should be created in categories. There two main categories; public subnets and private subnets. At minimum they should be designed as outlined in the below diagrams for IPv4 and IPv6 subnet design.

Subnet types are denoted by the ability and inability for applications and users on the internet to directly initiate access to infrastructure within a subnet.

Public Subnets

Public subnets are attached to a route table that has a default route to the Internet via an Internet gateway.

Resources in a public subnet can have a public IP or Elastic IP (EIP) that has a NAT to the Elastic Network Interface (ENI) of the virtual machines or containers that hosts your application(s). This is a one-to-one NAT that is performed by the Internet gateway.

Private Subnets

A private subnet contains infrastructure that isn’t directly accessible from the Internet. Unlike the public subnet, this infrastructure only has private IPs.

Infrastructure in a private subnet gain access to resources or users on the Internet through a NAT infrastructure of sorts.

AWS natively provides NAT capability through the use of the NAT Gateway service. Customers can also create NAT instances that they manage or leverage third-party NAT appliances from the AWS Marketplace.

In most scenarios, it is recommended to use the AWS NAT Gateway as it is highly available (in a single Availability Zone) and is provided as a managed service by AWS. It supports 5 Gbps of bandwidth per NAT gateway and automatically scales up to 45 Gbps.

An AWS NAT gateway’s high availability is confined to a single Availability Zone. For high availability across AZs, it is recommended to have a minimum of two NAT gateways (in different AZs). This allows you to switch to an available NAT gateway in the event that one should become unavailable.

This approach allows you to zone your Internet traffic, reducing cross Availability Zone connections to the Internet. More details on NAT gateway are available here.

Illustration of high availability with a multiple NAT Gateways (NAT-GW) attached to their own route table

Illustration of the failure of one NAT Gateway and the fail over to an available NAT Gateway by the manual changing of the default route next hop in private subnet A route table

AWS allocated IPv6 addresses are Global Unicast Addresses by default. That said, you can privatize these subnets by using an Egress-Only Internet Gateway (E-IGW), instead of a regular Internet gateway. E-IGWs are purposely built to prevents users and applications on the Internet from initiating access to infrastructure in your IPv6 subnet(s).

Illustration of internet access for hybrid IPv6 subnets through an Egress-Only Internet Gateway (E-IGW)

Applications hosted on instances living within a private subnet can have different access needs. Some require access to the Internet while others require access to databases, applications, and users that are on-premises. For this type of access, AWS provides two avenues: the Virtual Gateway and the Transit Gateway. The Virtual Gateway can only support a single VPC at a time, while the Transit Gateway is built to simplify the interconnectivity of tens to hundreds of VPCs and then aggregating their connectivity to resources on-premises. Given that we are looking at the VPC as a single unit of networking, all diagrams below contain illustrations of the Virtual Gateway which acts a WAN concentrator for your VPC.

Illustration of private subnets connecting to Data Center using AWS Direct Connect as primary and IPsec as backup

The above diagram illustrates a WAN connection between a VGW attached to a VPC and a customer’s data center.

AWS provides two options for establishing a private connectivity between your VPC and on-premises network: AWS Direct Connect and AWS Site-to-Site VPN.

AWS Site-to-Site VPN configuration leverages IPSec with each connection providing two redundant IPSec tunnels. AWS support both static routing and dynamic routing (through the use of BGP).

BGP is recommended, as it allows dynamic route advertisement, high availability through failure detection, and fail over between tunnels in addition to decreased management complexity.

VPC Endpoints: Gateway & Interface Endpoints

Applications running inside your subnet(s) may need to connect to AWS public services (like Amazon S3, Amazon Simple Notification Service (SNS), Amazon Simple Queue Service (SQS), Amazon API Gateway, etc.) or applications in another VPC that lives in another account. For example, you may have a database in another account that you would like to expose applications that lives in a completely different account and subnet.

For these scenarios you have the option to leverage an Amazon VPC Endpoint.

There are two types of VPC Endpoints: Gateway Endpoints and Interface Endpoints.

Gateway Endpoints only support Amazon S3 and Amazon DynamoDB. Upon creation, a gateway is added to your specified route table(s) and acts as the destination for all requests to the service it is created for.

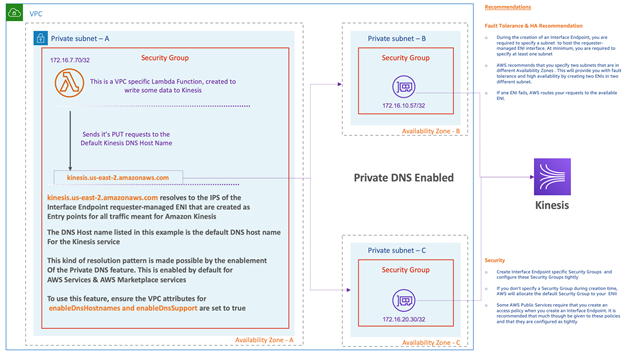

Interface Endpoints differ significantly and can only be created for services that are powered by AWS PrivateLink.

Upon creation, AWS creates an interface endpoint consisting of one or more Elastic Network Interfaces (ENIs). Each AZ can support one interface endpoint ENI. This acts as a point of entry for all traffic destined to a specific PrivateLink service.

When an interface endpoint is created, associated DNS entries are created that point to the endpoint and each ENI that the endpoint contains. To access the PrivateLink service you must send your request to one of these hostnames.

As illustrated below, ensure the Private DNS feature is enabled for AWS public and Marketplace services:

Since interface endpoints leverage ENIs, customers can use cloud techniques they are already familiar with. The interface endpoint can be configured with a restrictive security group. These endpoints can also be easily accessed from both inside and outside the VPC. Access from outside a VPC can be accomplished through Direct Connect and VPN.

Customers can also create AWS Endpoint services for their applications or services running on-premises. This allows access to these services via an interface endpoint which can be extended to other VPCs (even if the VPCs themselves do not have Direct Connect configured).

VPC Sharing

At re:Invent 2018, AWS launched the feature VPC sharing, which helps customers control VPC sprawl by decoupling the boundary of an AWS account from the underlying VPC network that supports its infrastructure.

VPC sharing uses Amazon Resource Access Manager (RAM) to share subnets across accounts within the same AWS organization.

VPC sharing is defined as:

VPC sharing allows customers to centralize the management of network, its IP space and the access paths to resources external to the VPC. This method of centralization and reuse (of VPC components such as NAT Gateway and Direct Connect connections) results in a reduction of cost to manage and maintain this environment.