GitOps-driven, multi-Region deployment and failover using EKS and Route 53 Application Recovery Controller

One of the key benefits of the AWS Cloud is that it allows customers to go global in minutes, deploying an application in multiple Regions around the world with just a few clicks. This means you can provide lower latency and a better experience for your customers at minimal cost while targeting higher availability service-level objectives for your most critical workloads.

In this blog post, we’ll show how to deploy an application that survives widespread operational events within an AWS Region by leveraging Amazon Route 53 Application Recovery Controller in conjunction with Amazon Elastic Kubernetes Service (Amazon EKS). We’ll use an open-source CNCF project called Flux to keep the application deployments synchronized across multiple geographic locations.

The AWS Cloud was built from the very beginning around the concepts of Regions and Availability Zones (AZs). A Region is a physical location around the world where we cluster data centers. Each AWS Region consists of multiple isolated and physically separate Availability Zones within a geographic area, and each Availability Zone is one or more discrete data centers with redundant power, networking, and connectivity. Availability Zones give customers the ability to operate production applications and databases that are more highly available, fault tolerant, and scalable than would be possible from a single data center. All Availability Zones in an AWS Region are interconnected over fully redundant, dedicated metro fiber that provides high-throughput, low-latency networking between them.

AWS services embrace those two concepts and, depending on the level of abstraction or feature set, you can find examples of zonal, Regional, and global services. For instance, an Amazon EC2 instance is a zonal resource, bound to a specific Availability Zone within an AWS Region. An Amazon S3 bucket, on the other hand, is an example of a Regional resource that can replicate the stored data across Availability Zones within a Region. Amazon Route 53, a fully managed DNS service, is an example of a global service, where AWS maintains authoritative name servers for your hosted zones across AWS Regions and AWS edge locations.

The Reliability Pillar of the AWS Well-Architected Framework recommends that any production workload in the Cloud should leverage a Multi-AZ design to avoid single points of failure. One of the main reasons we build Regions with three or more AZs is to keep providing a highly available environment, even during an extensive AZ disruption event. Zonal services like Amazon EC2 provide abstractions such as EC2 Auto Scaling groups to allow customers to deploy and maintain compute capacity that makes use of multiple AZs. Amazon EBS volumes, which are also zonal resources, can have snapshots that are Regional.

For business-critical applications, it’s worth considering a multi-Region design, in either an active-active or active-passive scenario. Services like Amazon SNS, Amazon Aurora, Amazon DynamoDB, and Amazon S3 provide built-in capabilities to design multi-Region applications on AWS. Amazon SNS provides cross-Region delivery to an Amazon SQS queue or an AWS Lambda function. Aurora has Global Databases, while DynamoDB has global tables. Amazon S3 offers Multi-Region Access Points. It’s also possible to deploy a reference architecture that models a serverless active-passive workload with asynchronous replication of application data and failover from a primary to a secondary AWS Region using the Multi-Region Application Architecture, available on AWS Solutions.

In this blog post, we will walk through the process of deploying an application across two Amazon Elastic Kubernetes Service (Amazon EKS) clusters in different AWS Regions in an active-passive scenario. The deployments will be managed centrally through a GitOps workflow by Flux, and Amazon Route 53 Application Recovery Controller will continuously assess failover readiness and enable you to change application traffic in case of any Regional failure, including partial ones.

Enter Amazon EKS

Amazon Elastic Kubernetes Service (Amazon EKS) is a managed service that you can use to run Kubernetes on AWS without needing to install, operate, and maintain your own Kubernetes control plane. Amazon EKS automatically manages the availability and scalability of the control plane components of the Kubernetes cluster. As an example of a Regional service, Amazon EKS runs the Kubernetes control plane across multiple Availability Zones to ensure high availability, and it automatically detects and replaces unhealthy control plane nodes.

Introduction to Route 53 Application Recovery Controller

Amazon Route 53 Application Recovery Controller (ARC) is a set of capabilities built on top of Amazon Route 53 that helps you build applications that require very high availability levels, with recovery time objectives (RTO) measured in seconds or minutes. It comprises two distinct but complementary capabilities: readiness checks and routing controls. You can use these features together to gain insight into whether your applications and resources are ready for recovery and to help you manage and coordinate failover. To start using Amazon Route 53 ARC, you partition your applications into redundant failure-containment units, or replicas, called cells. The boundary of a cell is typically aligned with an AWS Region or an Availability Zone.

Route 53 Application Recovery Controller key terms

To better understand how Route 53 ARC works, it’s important to define some key terms. For a comprehensive list of Route 53 ARC components, you can review the AWS Developer Guide.

Recovery readiness components

The following are components of the readiness check feature in Route 53 ARC:

- Recovery group: A recovery group represents an application or group of applications that you want to check failover readiness for. It typically consists of two or more cells, or replicas, that mirror each other in terms of functionality.

- Cell: A cell defines your application’s replicas or independent units of failover. It groups all AWS resources that are necessary for your application to run independently within the replica. You may have multiple cells in your environment, each mapping to one of your fault domains.

- Readiness check: A readiness check continually audits a set of resources, including AWS resources or DNS target resources, that span multiple cells for recovery readiness. Readiness audits can include checking for capacity, configuration, AWS quotas, or routing policies, depending on the resource type.

Recovery control components

With recovery control configuration in Route 53 ARC, you can use extremely reliable routing controls to recover applications by rerouting traffic, for example, across Availability Zones or Regions:

- Cluster: A cluster is a set of five redundant Regional endpoints against which you can execute API calls to update or get the state of routing controls. You can host multiple control panels and routing controls on one cluster.

- Control panel: A control panel groups together a set of related routing controls. You can associate multiple routing controls with one control panel and then create safety rules for the control panel to ensure that the traffic redirection updates that you make are safe.

- Routing control: A routing control is a simple on/off switch hosted on a cluster that you use to control the routing of client traffic in and out of cells. When you create a routing control, it becomes available to use as a Route 53 health check so that you can direct Amazon Route 53 to reroute traffic when you update the routing control in Application Recovery Controller.
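To make the relationship between routing controls, health checks, and DNS answers more concrete, here is a minimal conceptual sketch in Python. It is a toy model, not Route 53 ARC code: the class names, the `answer` function, and the target strings are all hypothetical, and the case where both controls are off is deliberately left unmodeled.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class RoutingControl:
    """Toy stand-in for a routing control: a simple on/off switch."""
    name: str
    on: bool = False  # newly created routing controls start switched off


@dataclass
class FailoverRecord:
    """Toy DNS failover record whose health follows one routing control."""
    role: str  # "PRIMARY" or "SECONDARY"
    target: str
    control: RoutingControl

    def healthy(self) -> bool:
        # The linked health check is healthy only while the control is on.
        return self.control.on


def answer(records: List[FailoverRecord]) -> Optional[str]:
    """Return the target a failover policy would serve in this simplified model."""
    by_role = {record.role: record for record in records}
    primary, secondary = by_role["PRIMARY"], by_role["SECONDARY"]
    if primary.healthy():
        return primary.target
    if secondary.healthy():
        return secondary.target
    return None  # simplification: the all-off case is not modeled here


# Hypothetical cells, one per Region
west = RoutingControl("us-west-2", on=True)
eu = RoutingControl("eu-west-1", on=True)
records = [
    FailoverRecord("PRIMARY", "alb-us-west-2", west),
    FailoverRecord("SECONDARY", "alb-eu-west-1", eu),
]
print(answer(records))  # primary target while its control is on
west.on = False         # operator flips the primary routing control off
print(answer(records))  # traffic now resolves to the secondary target
```

The point of the model is that failover is driven by an explicit operator decision (flipping a switch) rather than by probing the application itself.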

Overview of solution

Our solution comprises:

- Two EKS clusters deployed in separate AWS Regions (Oregon and Ireland), with a node group and an Application Load Balancer on each of them acting as a Kubernetes Ingress to receive external traffic.

- Route 53 Application Recovery Controller to evaluate recovery readiness of our failover stack and change DNS responses according to changes on its own routing control configuration.

- A Git repository hosted on AWS CodeCommit (in the Oregon Region), since we’re using a GitOps approach to manage our EKS clusters and deploy applications centrally.

Deploy the solution

Prerequisites

- Git

- AWS Command Line Interface (AWS CLI)

- Node.js

- AWS Cloud Development Kit (AWS CDK)

- Flux

- Route 53 Public hosted zone configured

Clone the solution repository from AWS Samples and navigate to the parent folder:

This repository contains:

- app/: a set of Kubernetes manifests to deploy a third-party sample application

- infra/: an AWS CDK application that will deploy and configure the corresponding AWS services for you

AWS CDK is a framework for defining cloud infrastructure in code and provisioning it through AWS CloudFormation. AWS CDK lets you build reliable, scalable, cost-effective applications in the cloud with the considerable expressive power of a programming language. Please refer to the AWS CDK documentation to learn more.

Bootstrap AWS CDK

To start deploying the solution, you need to install and initialize AWS CDK first. Navigate into the infra directory and run the following in your preferred terminal:

Deploy EKS clusters

After the bootstrapping process, you’re ready to deploy our AWS CDK application, composed of different stacks. Each AWS CDK stack will match 1:1 with an AWS CloudFormation one. The first two stacks will deploy two EKS clusters in different AWS Regions, one in US West (Oregon) and the other in Europe (Ireland):

It will take around 20–30 minutes to deploy each stack.

Run the following commands and add the relevant outputs for both stacks as environment variables to be used in later steps:
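As one possible way to collect stack outputs from Python instead of the AWS CLI, the sketch below flattens the `Outputs` list of a CloudFormation DescribeStacks response into a dictionary. The function names are hypothetical, no specific output keys from the sample CDK app are assumed, and `describe_stack` requires boto3 and valid AWS credentials.

```python
from typing import Dict


def outputs_to_env(stack_description: dict) -> Dict[str, str]:
    """Flatten one stack description into OutputKey -> OutputValue pairs."""
    env = {}
    for output in stack_description.get("Outputs", []):
        # Keys are whatever the CDK app exports; none are assumed here.
        env[output["OutputKey"]] = output["OutputValue"]
    return env


def describe_stack(stack_name: str, region: str) -> dict:
    """Fetch a stack description; needs boto3 and AWS credentials."""
    import boto3  # imported lazily so outputs_to_env works without boto3

    client = boto3.client("cloudformation", region_name=region)
    return client.describe_stacks(StackName=stack_name)["Stacks"][0]


# Offline example with a made-up response fragment
sample = {"Outputs": [{"OutputKey": "ExampleKey", "OutputValue": "example-value"}]}
for key, value in outputs_to_env(sample).items():
    print(f"export {key}={value}")
```

Printing `export` lines keeps the result easy to paste into a shell session, which matches how the later steps consume these values.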

Add the configuration for each EKS cluster to your local kubeconfig by running the following:

Please note that this AWS CDK example was designed for demonstration purposes only and should not be used in production environments as is. Please refer to EKS Best Practices, especially the Security Best Practices section, to learn how to properly run production Kubernetes workloads on AWS.

Create a CodeCommit Repository

You can choose to use a different Git repository host, such as GitHub or GitLab. Refer to the Flux documentation on how to set up your cluster with them: GitHub | GitLab

As of the time this blog post was written, AWS CodeCommit does not offer a native replication mechanism across AWS Regions. You should consider your high-availability requirements and plan accordingly. You can build your own solution to Replicate AWS CodeCommit Repositories between Regions using AWS Fargate, for example.

Run the following command to save your recently created repository URL as an environment variable to be used later:

Generate SSH Key pair for the CodeCommit user

Generate an SSH key pair for the gitops IAM user, which has permissions to the CodeCommit repository created by the CodeCommitRepositoryStack stack, and upload the public key to AWS IAM.

Save the SSH key ID after the upload:

Bootstrap Flux

Bootstrapping Flux with your CodeCommit repository

Add the CodeCommit SSH host key to your ~/.ssh/known_hosts file:

Form the SSH URL by placing the SSH key ID you saved earlier in the SSH_KEY_ID environment variable in front of the repository host as the user name, and then bootstrap Flux on each EKS cluster by running the following:

Your final SSH URL should be something like ssh://APKAEIBAERJR2EXAMPLE@git-codecommit.us-west-2.amazonaws.com/v1/repos/gitops-repo. Make sure you confirm giving key access to the repository, answering y when prompted.
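The URL shape above can be produced mechanically. The following is a small sketch, assuming the repository URL is available in `ssh://host/path` form; the function name `with_ssh_key_id` is hypothetical, and the key ID is the example value from this post.

```python
from urllib.parse import urlsplit, urlunsplit


def with_ssh_key_id(repo_ssh_url: str, ssh_key_id: str) -> str:
    """Insert the IAM SSH key ID as the user part of an ssh:// repository URL."""
    parts = urlsplit(repo_ssh_url)
    if parts.scheme != "ssh" or not parts.hostname:
        raise ValueError("expected a URL of the form ssh://host/path")
    netloc = f"{ssh_key_id}@{parts.hostname}"
    if parts.port:
        netloc += f":{parts.port}"  # keep a non-default port if one was given
    return urlunsplit((parts.scheme, netloc, parts.path, parts.query, parts.fragment))


print(with_ssh_key_id(
    "ssh://git-codecommit.us-west-2.amazonaws.com/v1/repos/gitops-repo",
    "APKAEIBAERJR2EXAMPLE",
))
```

Any existing user name in the input URL is replaced, so the function can be rerun safely on its own output.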

Change your current kubectl context to the other EKS cluster and rerun the Flux bootstrap command:

Here we’re using an SSH connection to CodeCommit specifically for Flux compatibility. Wherever possible, temporary credentials should be your first choice.

Adding a demo application to the gitops-repo repository

Clone the gitops-repo repository using your preferred method. In this case, we’re using the HTTPS repository URL with the CodeCommit credential helper provided by AWS CLI:

Copy everything from the app folder to the gitops-repo folder:

The app folder contains our sample application that will be deployed in the two EKS clusters. It will use the microservices-demo app plus an Ingress backed by AWS Load Balancer Controller. Please note that microservices-demo is owned and maintained by a third party.

Navigate into the gitops-repo directory and push the changes to the remote gitops-repo repository:

After a few minutes, Flux will deploy the demo application with an Application Load Balancer exposing your application to the world.

Save the ARNs of the Application Load Balancers created in US West (Oregon) and Europe (Ireland) as environment variables:

Deploy the Route 53 Application Recovery Controller stack

For this step, you will use the environment variables we saved earlier, plus the Application Load Balancer ARNs:

As part of this deployment, two Route 53 health checks will be created. Save the ID of each of them for later use:

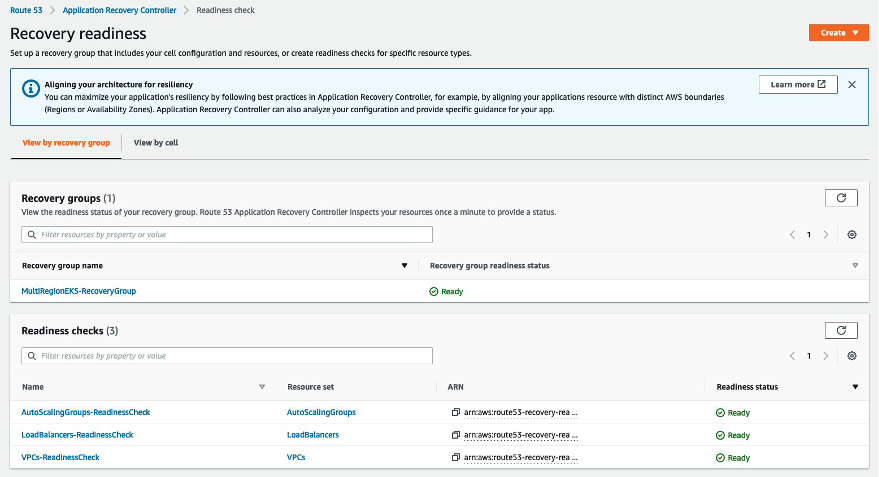

Take a look at the Route 53 Application Recovery Controller console

At this point, you should have the following deployed:

- 2 EKS clusters (one per Region)

- 2 Application Load Balancers (one per Region)

- 1 Route 53 ARC cluster

- 1 Route 53 ARC recovery group with two cells (one per Region) with VPCs, ASGs, and ALBs bundled into their respective resource sets

- 2 Route 53 ARC routing controls (one per Region) and 2 Route 53 health checks linked together.

Let’s open the Route 53 Application Recovery Controller console and open the Readiness check portion of it. It’s under the multi-Region section of the left-side navigation pane:

With a readiness check, you can programmatically make sure your application (recovery group) and its cells are healthy, which means your application is ready to fail over.

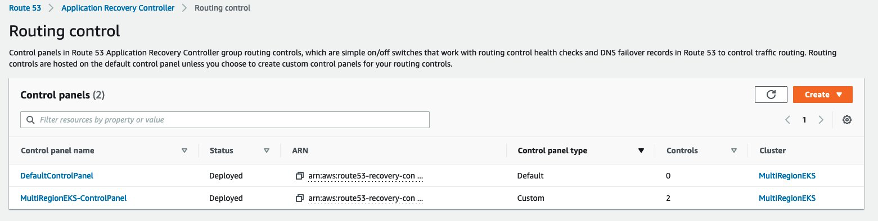

Let’s move to the routing control portion of Route 53 ARC. It’s also within the multi-Region section of the left-side navigation pane:

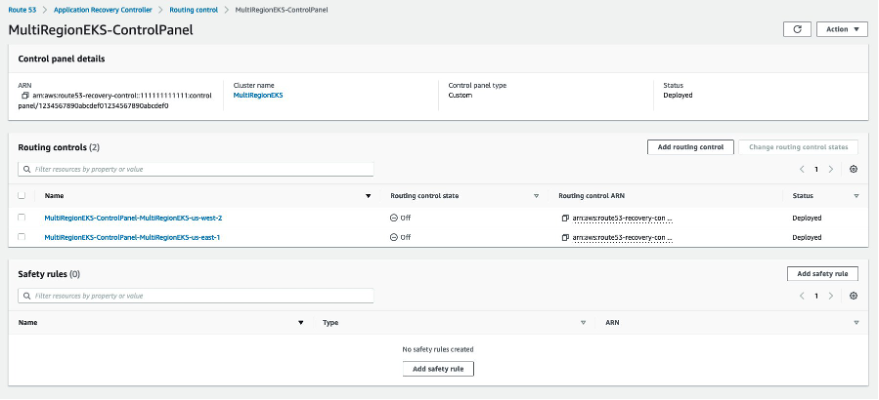

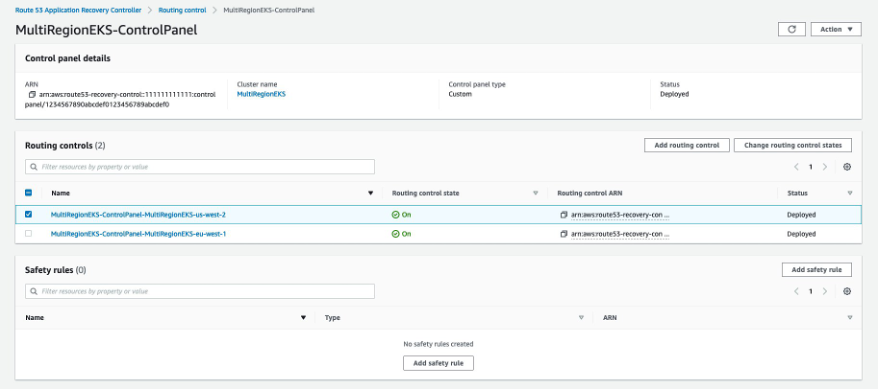

Click on the MultiRegionEKS-ControlPanel control panel:

Turning your routing controls on

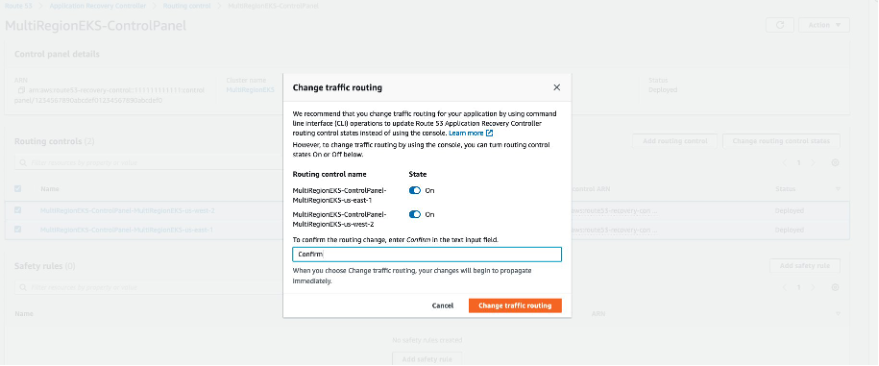

By default, recently created routing controls are switched off, which means the corresponding Route 53 health check will be in the unhealthy state, preventing traffic from being routed. Select the two routing controls created for you, turn them On, enter Confirm in the text input field, and click on Change traffic routing so we can configure our Route 53 hosted zone properly:

Let’s set our Route 53 hosted zone to use those two new health checks

Find your existing Route 53 hosted zone ID by running the following command. Make sure you replace example.com with the public domain you own:
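If you prefer to do this lookup from Python rather than the AWS CLI, one hedged option is to call the Route 53 ListHostedZonesByName API through boto3 and pick out the matching public zone. The helper names below are hypothetical, and the sample response uses a made-up zone ID.

```python
from typing import Optional


def find_public_zone_id(response: dict, domain: str) -> Optional[str]:
    """Pick the public hosted zone ID for `domain` out of a ListHostedZonesByName response."""
    wanted = domain.rstrip(".").lower() + "."  # zone names carry a trailing dot
    for zone in response.get("HostedZones", []):
        is_private = zone.get("Config", {}).get("PrivateZone", False)
        if zone["Name"].lower() == wanted and not is_private:
            # IDs are returned as "/hostedzone/<ID>"; keep only the last segment
            return zone["Id"].split("/")[-1]
    return None


def lookup_zone_id(domain: str) -> Optional[str]:
    """Query Route 53; needs boto3 and AWS credentials."""
    import boto3  # imported lazily so the parser above works without boto3

    response = boto3.client("route53").list_hosted_zones_by_name(DNSName=domain)
    return find_public_zone_id(response, domain)


# Offline example with a made-up response
sample = {"HostedZones": [
    {"Id": "/hostedzone/ZEXAMPLE12345", "Name": "example.com.",
     "Config": {"PrivateZone": False}},
]}
print(find_public_zone_id(sample, "example.com"))
```

Matching on the exact zone name matters because the API returns zones in lexical order starting at the requested name, so the first entry is not guaranteed to be the domain you asked for.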

Let’s extract the information we need to create our Route 53 records from each load balancer:
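For an alias record, the two values needed from each load balancer are its DNS name and its canonical hosted zone ID. The sketch below shows one way to pull them from an Elastic Load Balancing DescribeLoadBalancers response in Python; the function names are hypothetical and the sample values are made up.

```python
from typing import Dict


def alias_target(response: dict) -> Dict[str, str]:
    """Extract the fields an alias record needs from a DescribeLoadBalancers response."""
    load_balancers = response.get("LoadBalancers", [])
    if len(load_balancers) != 1:
        raise ValueError("expected exactly one load balancer in the response")
    lb = load_balancers[0]
    return {
        "DNSName": lb["DNSName"],
        "HostedZoneId": lb["CanonicalHostedZoneId"],
    }


def describe_alb(load_balancer_arn: str, region: str) -> dict:
    """Query one load balancer by ARN; needs boto3 and AWS credentials."""
    import boto3  # imported lazily so alias_target works without boto3

    client = boto3.client("elbv2", region_name=region)
    return client.describe_load_balancers(LoadBalancerArns=[load_balancer_arn])


# Offline example with made-up values
sample = {"LoadBalancers": [{
    "DNSName": "example-alb-123.us-west-2.elb.amazonaws.com",
    "CanonicalHostedZoneId": "ZEXAMPLEALB",
}]}
print(alias_target(sample))
```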

Now we’ll create the corresponding Route 53 records. First, we need to generate a file with the changes per the change-resource-record-sets AWS CLI command documentation:

We’re using the subdomain service and the Route 53 failover policy for this example. Route 53 ARC routing control works regardless of the routing policy you use.
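As an illustration of what such a change file can contain, the sketch below builds a Route 53 change batch with one PRIMARY and one SECONDARY alias record, each tied to a health check. The function names, set identifiers, health check IDs, and load balancer values are hypothetical placeholders; the `UPSERT` action and `EvaluateTargetHealth: true` are assumptions made for this example rather than settings confirmed by the post.

```python
import json
from typing import Dict


def failover_change(name: str, role: str, set_id: str,
                    alias: Dict[str, str], health_check_id: str) -> dict:
    """Build one change entry for an alias A record with a failover policy."""
    if role not in ("PRIMARY", "SECONDARY"):
        raise ValueError("role must be PRIMARY or SECONDARY")
    return {
        "Action": "UPSERT",  # assumption: create-or-update semantics
        "ResourceRecordSet": {
            "Name": name,
            "Type": "A",
            "SetIdentifier": set_id,
            "Failover": role,
            "HealthCheckId": health_check_id,  # health check linked to a routing control
            "AliasTarget": {
                "HostedZoneId": alias["HostedZoneId"],  # the load balancer's zone ID
                "DNSName": alias["DNSName"],
                "EvaluateTargetHealth": True,  # assumption for this sketch
            },
        },
    }


def change_batch(name: str, primary: Dict[str, str], primary_hc: str,
                 secondary: Dict[str, str], secondary_hc: str) -> dict:
    """Combine the primary and secondary records into one change batch."""
    return {
        "Comment": "Failover records for " + name,
        "Changes": [
            failover_change(name, "PRIMARY", "primary", primary, primary_hc),
            failover_change(name, "SECONDARY", "secondary", secondary, secondary_hc),
        ],
    }


batch = change_batch(
    "service.example.com",
    {"DNSName": "alb-west.example", "HostedZoneId": "ZWESTEXAMPLE"}, "hc-west-example",
    {"DNSName": "alb-eu.example", "HostedZoneId": "ZEUEXAMPLE"}, "hc-eu-example",
)
print(json.dumps(batch, indent=2))
```

Saving the printed JSON to a file lets you pass it to `aws route53 change-resource-record-sets` through the `--change-batch file://...` option.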

Then, we’ll run the following:

Testing Route 53 ARC failover

The easiest way to verify whether our routing controls are working as expected is to run nslookup queries:
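A scripted equivalent of that check can be handy when you want to compare answers before and after a failover. The sketch below resolves a host name to its IPv4 addresses with Python's standard library; the function name is hypothetical, and `service.example.com` stands in for the record in your own domain.

```python
import socket
from typing import Callable, List


def resolve_ipv4(hostname: str, resolver: Callable = socket.getaddrinfo) -> List[str]:
    """Return the sorted, de-duplicated IPv4 addresses a host name resolves to."""
    infos = resolver(hostname, 443, socket.AF_INET, socket.SOCK_STREAM)
    # Each entry is (family, type, proto, canonname, sockaddr);
    # for IPv4 the sockaddr is an (address, port) pair.
    return sorted({info[4][0] for info in infos})


if __name__ == "__main__":
    try:
        print(resolve_ipv4("service.example.com"))  # placeholder host name
    except socket.gaierror as error:
        print("lookup failed:", error)
```

Running it once before and once after changing a routing control state, and comparing the two lists, shows whether the answer has moved to the other Region. Results still depend on how your recursive resolver caches responses.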

Triggering the failover through Route 53 ARC Routing control console

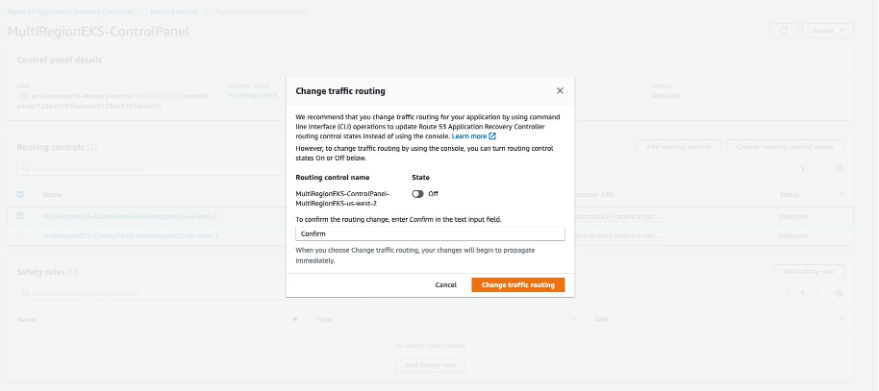

Let’s imagine we’re experiencing intermittent issues in US West (Oregon) and we want to shift traffic from our primary Region to the secondary one (Ireland). Navigate back to the MultiRegionEKS-ControlPanel routing control console, select the MultiRegionEKS-ControlPanel-MultiRegionEKS-us-west-2 routing control, and click on Change routing control states:

Turn it Off, enter Confirm in the text input field, and click on Change traffic routing:

The Route 53 health check linked with MultiRegionEKS-ControlPanel-MultiRegionEKS-us-west-2 will start failing, which will make Route 53 stop returning the IP addresses of the ALB in our primary Region.

After a few seconds, depending on how your recursive DNS provider caches results, you will see a changed response pointing to the IP addresses of the ALB we deployed in our secondary (eu-west-1) Region:

It’s strongly advised to work with Route 53 ARC routing controls through the API (or AWS CLI) instead of the AWS Management Console during production-impacting events since you can leverage the five geo-replicated endpoints directly. Please refer to the Route 53 ARC best practices documentation to learn more.
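A minimal sketch of that API-driven approach, assuming boto3 is installed: it tries each cluster endpoint in turn until one accepts the state change. The function names, the injectable `client_factory` parameter, and the placeholder ARN are hypothetical; the endpoint list is assumed to have the `Endpoint` and `Region` fields returned when you describe your Route 53 ARC cluster.

```python
from typing import Callable, Iterable, Mapping


def default_client(endpoint: Mapping[str, str]):
    """Create a routing control data-plane client bound to one cluster endpoint."""
    import boto3  # imported lazily; needs boto3 and AWS credentials

    return boto3.client(
        "route53-recovery-cluster",
        endpoint_url=endpoint["Endpoint"],
        region_name=endpoint["Region"],
    )


def set_routing_control(endpoints: Iterable[Mapping[str, str]],
                        routing_control_arn: str,
                        state: str,
                        client_factory: Callable = default_client) -> str:
    """Try each endpoint until one accepts the update; return the endpoint used."""
    if state not in ("On", "Off"):
        raise ValueError("state must be 'On' or 'Off'")
    last_error = None
    for endpoint in endpoints:
        try:
            client = client_factory(endpoint)
            client.update_routing_control_state(
                RoutingControlArn=routing_control_arn,
                RoutingControlState=state,
            )
            return endpoint["Endpoint"]
        except Exception as error:  # broad on purpose: move on to the next endpoint
            last_error = error
    raise RuntimeError("no cluster endpoint accepted the update") from last_error


# Usage (placeholders; requires real endpoints and a real routing control ARN):
# set_routing_control(cluster_endpoints, "<routing-control-arn>", "Off")
```

Iterating over all endpoints means a single unreachable Region does not block the failover action, which is the reason for preferring the API over the console during an event.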

Cleaning up

Please be mindful that the resources deployed here will incur charges on your AWS bill. After exploring this solution and adapting it to your own application architecture, make sure to clean up your environment.

Removing the Application Load Balancers and their respective target groups using Flux

You can delete the corresponding microservices-demo-kustomization.yaml and ingress-kustomization.yaml files plus the ingress/ folder from your gitops-repo that is managed by Flux and push your changes into your Git repository:

Using AWS Management Console

You can also delete the corresponding load balancers (Oregon and Ireland) and target groups (Oregon and Ireland) from the AWS Management Console.

Removing the resources you deployed using AWS CDK

You need to delete the SSH public key for the gitops user first:

You can then remove the corresponding stacks through the CloudFormation console in both Regions or by running cdk destroy --all within the directory of the CDK app:

Conclusion

In this post, we demonstrated how to apply the GitOps approach for deploying containerized applications on Amazon EKS across multiple AWS Regions using AWS CDK and Flux. We also showed how Route 53 Application Recovery Controller can simplify recovery and maintain high availability for your most demanding applications.