AWS Database Blog

Schema and code validator for Oracle to Amazon RDS for PostgreSQL or Amazon Aurora PostgreSQL migration

Database migration is a sequence of steps from assessment through cutover. One of the key challenges that a migration engineer or an application team may face is validating the migrated database objects like tables, indexes, constraints, procedures, and functions. It is a time-consuming manual task to validate database objects during heterogeneous migration. You have to write a custom application or run multiple database queries to validate and compare schema objects. This validation is required as you continue to convert database objects during migration or as you continue to add incremental changes in Oracle Database.

In this post, we walk you through an automated way to validate Oracle and PostgreSQL schema differences by generating an HTML report. We use SQL queries and the oracle_fdw extension supported by Amazon Aurora PostgreSQL-Compatible Edition and Amazon Relational Database Service (Amazon RDS) for PostgreSQL with the psql command line.

Solution overview

The solution uses the oracle_fdw extension, available with Aurora PostgreSQL or Amazon RDS for PostgreSQL, to query Oracle metadata tables or views and compare them with PostgreSQL. The oracle_fdw PostgreSQL extension provides a Foreign Data Wrapper for easy and efficient access to Oracle databases. For additional details, refer to Connect to Oracle from Amazon RDS for PostgreSQL using the oracle_fdw.

In this post, we perform the following high-level implementation steps:

- Install the

oracle_fdwextension and configure foreign tables for Oracle metadata views or tables. - Run the schema validator script using the psql command line from a bastion host and generate mismatch reports between source and target as an HTML file.

The following diagram illustrates the solution architecture for Aurora PostgreSQL, but the architecture remains the same for Amazon RDS for PostgreSQL.

Prerequisites

The script requires the oracle_fdw extension, which helps you connect to Oracle database. Currently, the oracle_fdw extension is available in Aurora PostgreSQL 12.7 and above or Amazon RDS for PostgreSQL 12.7 and above.

It is also expected that connectivity between your Oracle database and Aurora PostgreSQL 12.7 or Amazon RDS for PostgreSQL is setup either using AWS Site-to-Site VPN or AWS Direct Connect.

We initiate all foreign table installations within a base schema oracle_schema_compare and create necessary extensions with a foreign table or Oracle metadata views of interest. Oracle credentials need minimal privilege (SELECT_CATALOG_ROLE) restricted to limited metadata tables only. Installation of oracle_fdw is encapsulated within the installer_ora_fdw.sql script and is run through the psql command line for PostgreSQL-compatible databases. On the bastion host, create a file (installer_ora_fdw.sql) using your preferred editor, with the following commands.

Using the psql command line, we initiate installation with the following command:

We use the following parameters:

- <postgresql user> – RDS for PostgreSQL primary user

- <pg_password> – Password for PostgreSQL user

- <postgresql endpoint> – Writer endpoint for PostgreSQL cluster

- <postgresql database> – PostgreSQL database that is used to create the objects required by schema and code validator

- <postgresql mapping user> – Same as <postgresql user>

- <oracle hostname> – IP address or DNS of the host containing Oracle Database

- <oracle port> – Port for Oracle Database (default 1521)

- <sid> – SID of the Oracle Database

- <oracle username> – User name that is used to connect to Oracle Database

- <oracle password> – Password for the Oracle user

Oracle vs. Aurora PostgreSQL schema validation

Comparing database objects between Oracle and PostgreSQL involves querying metadata based on different views and comparison. For more information about the scripts involved to get comparison data points, refer to Validating database objects after migration using AWS SCT and AWS DMS. Data type consideration and mapping on migration from Oracle to PostgreSQL is key and impacts storage and performance overall. For more information about why this is important and how to conduct it for the NUMBER data type, refer to Convert the NUMBER data type from Oracle to PostgreSQL – Part 1 and Convert the NUMBER data type from Oracle to PostgreSQL – Part 2.

As part of the current script, we have unified and consolidated views of database object comparisons as an HTML report, including schema, code, and data type matrix. Our reference for validation is objects in Oracle and their availability within the target PostgreSQL-compatible database.

Schema and code validator

The script accepts four input parameters that control the validation of database objects. During the conversion phase, we can use validator scripts post-schema conversion or post-procedural code conversion. We can also use them to identify schema object changes applied to Oracle Database during migration.

By default, the validator script provides only schema object comparison that includes mismatch details for the following database objects:

- Database configuration:

- Size

- Collation, encoding

- Versions

- Schema and code objects summary:

- Tables

- Table partitions

- Indexes

- Table columns

- Sequence

- Sequence max values (controlled with

seq_max_val_compare) - Constraints (primary key, foreign key, unique, check, not NULL, and default)

- Views and materialized views

- Triggers

- Oracle packages and its public object only (controlled with

code_compare) - Functions and procedures (controlled with

code_compare)

- Data type matrix:

- Data type comparison between primary and foreign keys

You can supply flags as part of the psql command line, as detailed in the following table.

| Flag | Mandatory | Sample | Description |

| ora_schema* | Yes | ora_schema=”DMS_SAMPLE” | Oracle source schema |

| pg_schema* | Yes | pg_schema=”dms_sample” | PostgreSQL target schema |

| seq_max_val_compare | No | seq_max_val_compare=”Y” | Validates sequence max value |

| code_compare | No | code_compare=”Y” | Validate procedural objects including packages as schema, procedure, and functions |

For validation samples, we can use the dms_sample schema available as part of the AWS Database Migration Service (AWS DMS) samples GitHub repo.

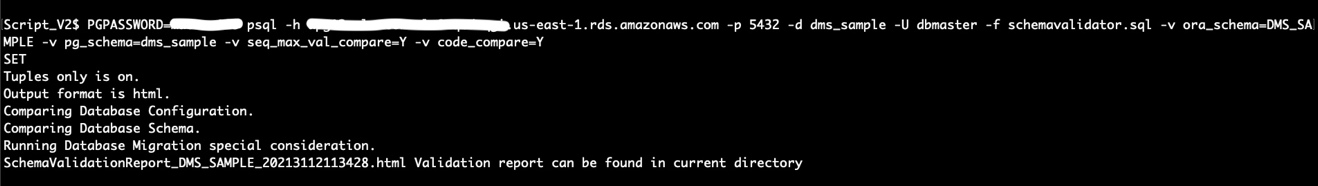

You can choose from two different options to run the script. The first option involves validating all objects, including sequence max value and code objects:

The second option validates only schema objects:

The following screenshot shows running the script to validate storage objects, sequence max value, and code objects.

Oracle vs. PostgreSQL special cases

The validator script also highlights some of the special cases between Oracle and PostgreSQL that are usually identified at later stages during the functional testing of the migration. The validator script helps us be proactive and highlights cases that need additional consideration.

These special cases include the following:

- Oracle partitioned tables with primary keys that don’t include partition keys

- Composite unique indexes having either of the columns as nullable

- Oracle identifiers with length greater than 63

- User-created extended statistics that might influence execution plans

- Oracle default on NULL features as part of 12c

A migration engineer can identify the aforementioned special cases at an earlier stage of project by running the validation script and plan how to address them accordingly.

Schema validation report

You can download a sample report generated as part of sample dms_sample dataset.

The following screenshot shows the version of the source and the target databases in the HTML report generated by the validation script.

For validation, we use the Oracle schema as the base, and the report identifies objects in the PostgreSQL schema that are either missing from or different than the Oracle schema. The following screenshot provides a summary of object types in Oracle and PostgreSQL schemas. It then shows the tables and partitions that are missing in the PostgreSQL schema.

Later sections of the report identify schema differences.

Clean up

After you complete schema validation, clean up the objects created by the scripts with the following command. Note that pgdbuser mentioned in following command was passed as a parameter while running the script installer_ora_fdw.sql.

Conclusion

The schema validator is a tool that provides a mechanism to validate objects between Oracle as source and Aurora PostgreSQL or Amazon RDS for PostgreSQL as target. It removes the manual overhead or the need for a custom application to validate database objects during migration. It also provides an HTML report for tracking and sharing with stakeholders. The oracle_fdw extension, available in Aurora PostgreSQL and Amazon RDS for PostgreSQL, empowers the migration engineer to query metadata from the source database (Oracle), analyze the generated report, and take appropriate action to fix the differences identified by the validation script.

In this post, we described how to generate a comparison report with a custom script with different options to compare the schema objects between Oracle and Aurora PostgreSQL or Amazon RDS for PostgreSQL. Give it a try, and let us know what you think in the comments section. We look forward to your feedback.

About the Authors

Babaiah Valluru is working as Lead Consultant – Databases with the Professional Services team at AWS based out of Hyderabad, India and specializes in database migrations. In addition to helping customers in their transformation journey to cloud, his current passion is to explore and learn ML services. He has a keen interest in open source databases like MySQL, PostgreSQL and MongoDB. He likes to travel, and spend time with family and friends in his free time.

Babaiah Valluru is working as Lead Consultant – Databases with the Professional Services team at AWS based out of Hyderabad, India and specializes in database migrations. In addition to helping customers in their transformation journey to cloud, his current passion is to explore and learn ML services. He has a keen interest in open source databases like MySQL, PostgreSQL and MongoDB. He likes to travel, and spend time with family and friends in his free time.

Jeevan Shetty is a Consultant with the AWS Professional Services Team. He has been supporting and enabling customers to migrate their database from OnPrem data center to AWS cloud and also in migration from commercial database engines to open source database in Amazon.

Jeevan Shetty is a Consultant with the AWS Professional Services Team. He has been supporting and enabling customers to migrate their database from OnPrem data center to AWS cloud and also in migration from commercial database engines to open source database in Amazon.

Deepak Mahto worked as a Consultant with the AWS Professional Services Team in India. His passion is automation and has designed and implemented multiple database or migration related tools.

Deepak Mahto worked as a Consultant with the AWS Professional Services Team in India. His passion is automation and has designed and implemented multiple database or migration related tools.