AWS Machine Learning Blog

Improved OCR and structured data extraction with Amazon Textract

Optical character recognition (OCR) technology, which extracts text from an image, has been around since the mid-20th century and continues to be a research topic today. OCR and document understanding remain active areas of research because the problems are both valuable and hard to solve.

AWS has been investing in improving OCR and document understanding technology, and our research scientists continue to publish research papers in these areas. For example, the research paper “Can you read me now? Content aware rectification using angle supervision” describes how to tackle the problem of document rectification, which is fundamental to the OCR process on documents. Additionally, the paper “SCATTER: Selective Context Attentional Scene Text Recognizer” introduces a novel way to perform scene text recognition, which is the task of recognizing text against complex image backgrounds. For more recent publications in this area, see Computer Vision.

Amazon scientists also incorporate these research findings into best-of-breed technologies such as Amazon Textract, a fully managed service that uses machine learning (ML) to identify text and data from tables and forms in documents, such as tax information from a W2 or values from a table in a scanned inventory report. It recognizes a range of document formats, including those specific to financial services, insurance, and healthcare, without requiring customization or human intervention.

One of the advantages of a fully managed service is that the underlying ML models are improved automatically and periodically, which raises accuracy over time. You may need to extract information from many types of documents that have been scanned or photographed under different lighting conditions and at a variety of angles. As the models are trained on data inputs that encompass these different conditions, they become better at detecting and extracting data.

In this post, we discuss a few recent updates to Amazon Textract that improve the overall accuracy of document detection and extraction.

Currency symbols

Amazon Textract now detects a set of currency symbols (Chinese yuan, Japanese yen, Indian rupee, British pound, and US dollar) and the degree symbol with more precision, with little regression in the detection of existing symbols.

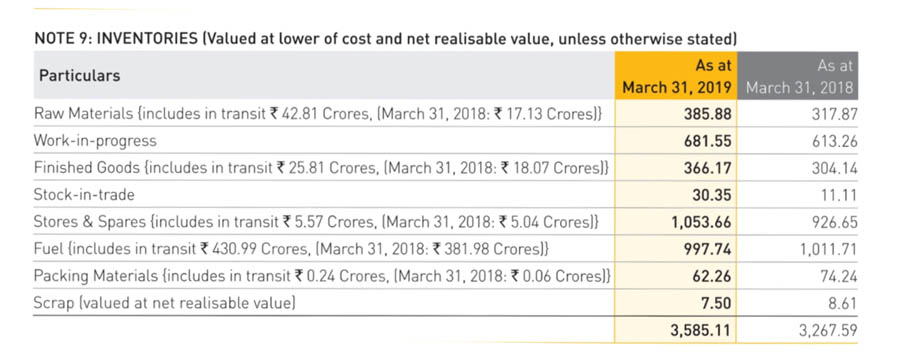

For example, the following is a sample table in a document from a company’s annual report.

The following screenshot shows the output on the Amazon Textract console before the latest update.

Amazon Textract detects all the text accurately. However, the Indian rupee symbol is recognized as an “R” instead of “₹”. The following screenshot shows the output using the updated model.

The rupee symbol is detected and extracted accurately. Similarly, the degree symbol and the other currency symbols (yuan, yen, pound, and dollar) are now supported in Amazon Textract.
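To check for these symbols in your own output, you can scan the `WORD` blocks of a Textract response. The following is a minimal sketch, not an official utility: the helper name `words_with_symbols`, the symbol table, and the sample response are hypothetical, and using “¥” for both yuan and yen is an assumption. In practice the response would come from a call such as `detect_document_text` on a boto3 Textract client.

```python
# Symbols named in this post. Using U+00A5 for both yuan and yen is an assumption.
SYMBOLS = {"¥": "yuan/yen", "₹": "rupee", "£": "pound", "$": "dollar", "°": "degree"}


def words_with_symbols(response, symbols=SYMBOLS):
    """Return (text, [symbol names]) for each WORD block containing a tracked symbol."""
    hits = []
    for block in response.get("Blocks", []):
        if block.get("BlockType") != "WORD":
            continue
        text = block.get("Text", "")
        names = [name for sym, name in symbols.items() if sym in text]
        if names:
            hits.append((text, names))
    return hits


# Hand-written stand-in for a Textract response (illustrative values only).
sample_response = {
    "Blocks": [
        {"BlockType": "PAGE"},
        {"BlockType": "LINE", "Text": "Revenue ₹1,250"},
        {"BlockType": "WORD", "Text": "Revenue"},
        {"BlockType": "WORD", "Text": "₹1,250"},
        {"BlockType": "WORD", "Text": "35°"},
    ]
}

hits = words_with_symbols(sample_response)
# hits == [("₹1,250", ["rupee"]), ("35°", ["degree"])]
```

Only `WORD` blocks are inspected so that a symbol isn’t counted twice, once in the word and once in the line that contains it.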

Detecting rows and columns in large tables

Amazon Textract released a new table model update that more accurately detects rows and columns of large tables that span an entire page. Overall table detection and the extraction of data and text within tables have also been improved.

The following is an example of a table in a personal investment account statement.

The following screenshot shows the Amazon Textract output prior to the new model update.

Even though all the rows, columns, and text are detected properly, the output also contains empty columns. The original table didn’t have a clear separation between columns, so the model included extra columns.

The following screenshot shows the output after the model update.

The output is now much cleaner. Amazon Textract still extracts all the data accurately from this table and now includes the correct number of columns. You can see a similar improvement in tables that span an entire page, where columns are no longer omitted.
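If you want to spot extra empty columns programmatically, you can rebuild each table from the `TABLE`, `CELL`, and `WORD` blocks in the response. The following sketch assumes a response shaped like the output of `analyze_document` with `FeatureTypes=["TABLES"]`; the helper names `tables_to_grids` and `empty_columns` and the sample data are hypothetical.

```python
def tables_to_grids(response):
    """Rebuild each TABLE block as a list of rows of cell text."""
    blocks = {b["Id"]: b for b in response["Blocks"]}

    def child_ids(block):
        # Collect the Ids of all CHILD relationships of a block.
        return [i for rel in block.get("Relationships", [])
                if rel["Type"] == "CHILD" for i in rel["Ids"]]

    grids = []
    for table in response["Blocks"]:
        if table["BlockType"] != "TABLE":
            continue
        cells = {}
        for cell_id in child_ids(table):
            cell = blocks[cell_id]
            if cell["BlockType"] != "CELL":
                continue
            words = [blocks[w]["Text"] for w in child_ids(cell)
                     if blocks[w]["BlockType"] == "WORD"]
            cells[(cell["RowIndex"], cell["ColumnIndex"])] = " ".join(words)
        if not cells:
            continue
        n_rows = max(r for r, _ in cells)
        n_cols = max(c for _, c in cells)
        # RowIndex and ColumnIndex are 1-based in the response.
        grids.append([[cells.get((r, c), "") for c in range(1, n_cols + 1)]
                      for r in range(1, n_rows + 1)])
    return grids


def empty_columns(grid):
    """Return 1-based indices of columns whose cells are all empty."""
    return [c + 1 for c in range(len(grid[0]))
            if all(row[c] == "" for row in grid)]


def _cell(cell_id, row, col, word_id=None):
    # Build a CELL block for the hand-written sample below.
    block = {"Id": cell_id, "BlockType": "CELL", "RowIndex": row, "ColumnIndex": col}
    if word_id:
        block["Relationships"] = [{"Type": "CHILD", "Ids": [word_id]}]
    return block


# Hand-written stand-in for a response with one 2x3 table whose middle column is empty.
sample_response = {"Blocks": [
    {"Id": "t1", "BlockType": "TABLE", "Relationships": [
        {"Type": "CHILD", "Ids": ["c11", "c12", "c13", "c21", "c22", "c23"]}]},
    _cell("c11", 1, 1, "w1"), _cell("c12", 1, 2), _cell("c13", 1, 3, "w2"),
    _cell("c21", 2, 1, "w3"), _cell("c22", 2, 2), _cell("c23", 2, 3, "w4"),
    {"Id": "w1", "BlockType": "WORD", "Text": "Fund"},
    {"Id": "w2", "BlockType": "WORD", "Text": "Value"},
    {"Id": "w3", "BlockType": "WORD", "Text": "ABC"},
    {"Id": "w4", "BlockType": "WORD", "Text": "100"},
]}

grid = tables_to_grids(sample_response)[0]
extra = empty_columns(grid)  # column 2 holds no text in any row
```

A check like this can help you compare output from before and after a model update, although a column that is legitimately blank in the source document would also be reported.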

Improved accuracy in forms

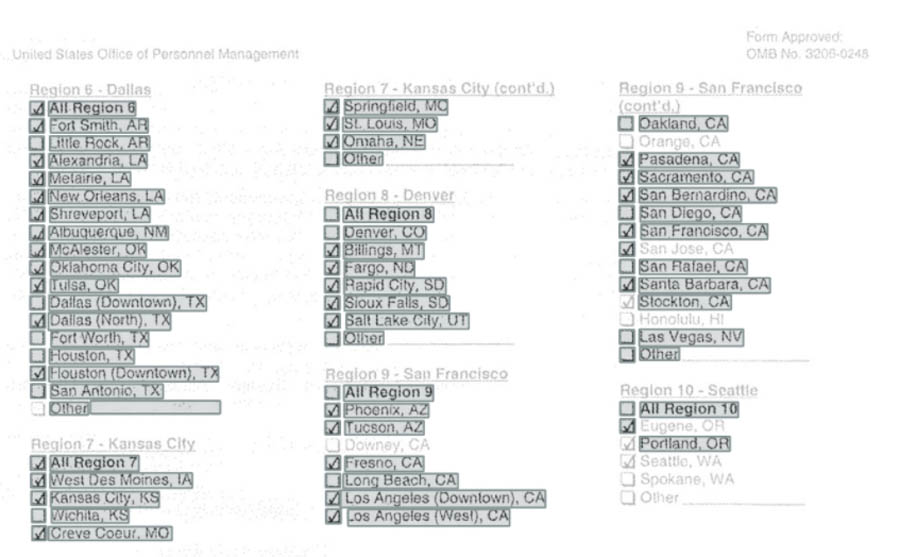

Amazon Textract now has higher accuracy on a variety of forms, especially income verification documents such as pay stubs, bank statements, and tax documents. The following screenshot shows an example of such a form.

The preceding form is a low-resolution scan. Even so, you may have to process such documents in your organization. The following screenshot is the Amazon Textract output using one of the previous models.

Although the older model detected many of the check boxes, it didn’t capture all of them. The following screenshot shows the output using the new model.

With this new model, Amazon Textract accurately detected all the check boxes in the document.
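To read check box states from a response, you can follow each form key to its value and look for a `SELECTION_ELEMENT` block. The following sketch assumes a response shaped like the output of `analyze_document` with `FeatureTypes=["FORMS"]`; the helper name `checkbox_states` and the sample form fields are hypothetical.

```python
def checkbox_states(response):
    """Map each form key's label to True (selected) or False (not selected)."""
    blocks = {b["Id"]: b for b in response["Blocks"]}

    def related(block, rel_type):
        # Resolve the blocks referenced by relationships of the given type.
        return [blocks[i] for rel in block.get("Relationships", [])
                if rel["Type"] == rel_type for i in rel["Ids"]]

    states = {}
    for block in response["Blocks"]:
        is_key = (block["BlockType"] == "KEY_VALUE_SET"
                  and "KEY" in block.get("EntityTypes", []))
        if not is_key:
            continue
        label = " ".join(c["Text"] for c in related(block, "CHILD")
                         if c["BlockType"] == "WORD")
        for value in related(block, "VALUE"):
            for child in related(value, "CHILD"):
                if child["BlockType"] == "SELECTION_ELEMENT":
                    states[label] = child["SelectionStatus"] == "SELECTED"
    return states


# Hand-written stand-in for a response with two check boxes (illustrative only).
sample_response = {"Blocks": [
    {"Id": "k1", "BlockType": "KEY_VALUE_SET", "EntityTypes": ["KEY"],
     "Relationships": [{"Type": "VALUE", "Ids": ["v1"]},
                       {"Type": "CHILD", "Ids": ["w1"]}]},
    {"Id": "v1", "BlockType": "KEY_VALUE_SET", "EntityTypes": ["VALUE"],
     "Relationships": [{"Type": "CHILD", "Ids": ["s1"]}]},
    {"Id": "s1", "BlockType": "SELECTION_ELEMENT", "SelectionStatus": "SELECTED"},
    {"Id": "w1", "BlockType": "WORD", "Text": "Single"},
    {"Id": "k2", "BlockType": "KEY_VALUE_SET", "EntityTypes": ["KEY"],
     "Relationships": [{"Type": "VALUE", "Ids": ["v2"]},
                       {"Type": "CHILD", "Ids": ["w2"]}]},
    {"Id": "v2", "BlockType": "KEY_VALUE_SET", "EntityTypes": ["VALUE"],
     "Relationships": [{"Type": "CHILD", "Ids": ["s2"]}]},
    {"Id": "s2", "BlockType": "SELECTION_ELEMENT", "SelectionStatus": "NOT_SELECTED"},
    {"Id": "w2", "BlockType": "WORD", "Text": "Married"},
]}

states = checkbox_states(sample_response)
# states == {"Single": True, "Married": False}
```

Counting the entries in the returned dictionary is a quick way to confirm that every check box on a form was captured.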

Summary

With the latest improvements to Amazon Textract, you can retrieve information from documents with more accuracy. Tables spanning the entire page are detected more accurately, currency symbols (yuan, yen, rupee, pound, and dollar) and the degree symbol are now supported, and key-value pairs and check boxes in financial forms are detected with more precision. The improvements to currency symbol and degree symbol detection will be launched in the Asia Pacific (Singapore) Region on September 24, 2020, followed over the next few days by the other Regions where Amazon Textract is available. To start extracting data from your documents and images, try Amazon Textract for yourself.

About the Author

Raj Copparapu is a Product Manager focused on putting machine learning in the hands of every developer.
