AWS Partner Network (APN) Blog

Best Practices from Blue Sentry Cloud for Scaling Amazon EKS Networking with IPv6 VPC CNI

By Todd Bernson, CTO – Blue Sentry Cloud

By Vinod Kataria, Principal Partner Solutions Architect – AWS


Customers are using Amazon Elastic Kubernetes Service (Amazon EKS) to build and deploy cloud-native solutions using Kubernetes on Amazon Web Services (AWS) and on premises.

Kubernetes is an open-source system for automating deployment, scaling, and management of containerized applications. In January 2022, Amazon EKS released support for IPv6, which lets containerized applications on Kubernetes scale beyond the limits of the IPv4 address space.

In this post, we will look at how Blue Sentry Cloud solved a customer’s scaling challenge using IPv6 on Amazon EKS. Blue Sentry Cloud is an AWS Premier Tier Services Partner and Managed Services Provider (MSP) that specializes in complex enterprise cloud initiatives.

Solution Overview

Blue Sentry worked with a Fortune 500 financial institution that has thousands of services inside 72 Amazon EKS clusters, with more than 86,000 pods on over 6,000 nodes. The clusters were running out of available IPv4 addresses.

There were two approaches considered to address the issue:

- The proposed IPv4 workarounds, custom networking and adding additional virtual private cloud (VPC) CIDR ranges, meant creating additional clusters, illogical segmentation of services, and higher cost from a human and technology perspective.

- Using IPv6 addresses for a customer-managed VPC would address the problem solely by expanding the number of IP addresses available.

The customer uses three /22 private subnets for each EKS cluster (3,057 usable IPv4 addresses). By attaching an IPv6 /64 CIDR to each subnet, they could add over 55,000,000,000,000,000,000 IP addresses. To put that in perspective, the world currently has eight billion people. Each person on Earth could have 6.9 billion IP addresses for themselves just in these three subnets.
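The arithmetic behind these figures can be reproduced with Python's standard `ipaddress` module. This is a minimal sketch: the subnet CIDRs are placeholders (only the prefix lengths matter), and it assumes the usual AWS behavior of reserving five addresses in every IPv4 subnet.

```python
import ipaddress

# AWS reserves 5 addresses in every IPv4 subnet (the first four and the last one).
AWS_RESERVED_PER_SUBNET = 5

# Placeholder /22 subnets; only the prefix length affects the count.
ipv4_subnets = [
    ipaddress.ip_network(cidr)
    for cidr in ("10.0.0.0/22", "10.0.4.0/22", "10.0.8.0/22")
]
usable_ipv4 = sum(n.num_addresses - AWS_RESERVED_PER_SUBNET for n in ipv4_subnets)

# Three placeholder /64 subnets from the 2001:db8::/32 documentation prefix.
ipv6_subnets = [ipaddress.ip_network(f"2001:db8:0:{i}::/64") for i in range(3)]
total_ipv6 = sum(n.num_addresses for n in ipv6_subnets)

world_population = 8_000_000_000
per_person = total_ipv6 // world_population

print(usable_ipv4)  # 3057
print(total_ipv6)   # 55340232221128654848
print(per_person)   # 6917529027
```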

You may need to consider other aspects like compute capacity of Amazon Elastic Compute Cloud (Amazon EC2) instances used for EKS worker nodes. You can use the max-pod-calculator.sh script to find the recommended number of maximum pods based on the EC2 instance type.
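To illustrate why instance type matters, the sketch below shows the commonly documented pod ceiling for IPv4 clusters where each pod consumes one secondary IP address on an elastic network interface (ENI). It is not a reimplementation of max-pod-calculator.sh, which takes additional inputs such as the CNI version and prefix delegation. The function name is ours, and the m5.large figures (3 ENIs, 10 IPv4 addresses per ENI) are used only as an example.

```python
def max_pods_secondary_ip_mode(enis: int, ipv4_per_eni: int) -> int:
    """Pod ceiling when every pod consumes one secondary IPv4 address.

    One address on each ENI is the ENI's own primary address, and 2 is
    added for host-networked pods (aws-node and kube-proxy).
    """
    return enis * (ipv4_per_eni - 1) + 2


# Example: m5.large supports 3 ENIs with 10 IPv4 addresses each.
print(max_pods_secondary_ip_mode(3, 10))  # 29
```

With IPv6 the address count stops being the limiting factor, so the recommended maximum depends on node compute capacity instead.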

No changes are needed for the application, networking, ingress, Helm/YAML charts, network policies, or any other piece of the infrastructure. All traffic translation is managed seamlessly by Amazon EKS. Developers, operations, and DevOps teams would notice no difference or change to the application.

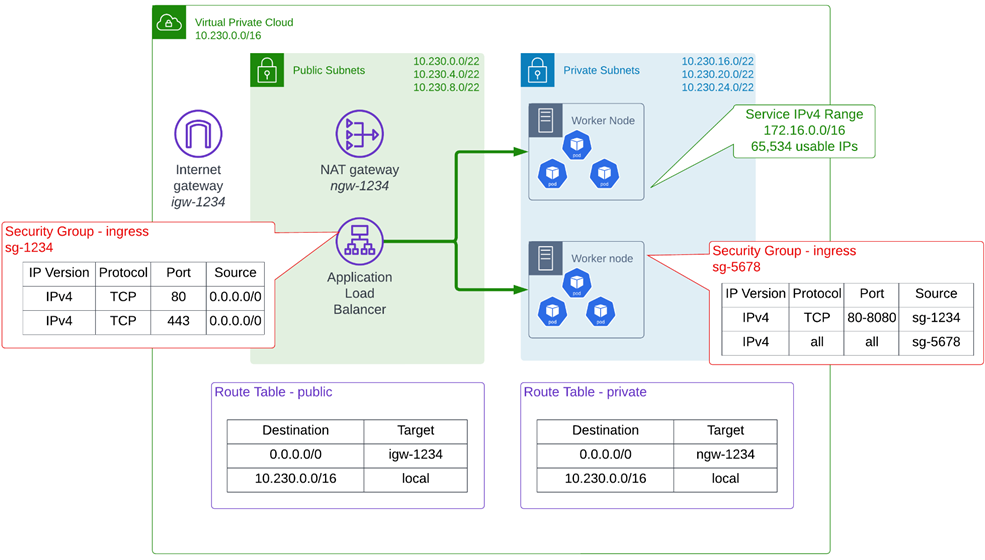

The diagram below represents part of the customer’s EKS environment with IPv4 for a customer-managed EKS VPC.

Figure 1 – Customer’s Amazon EKS environment.

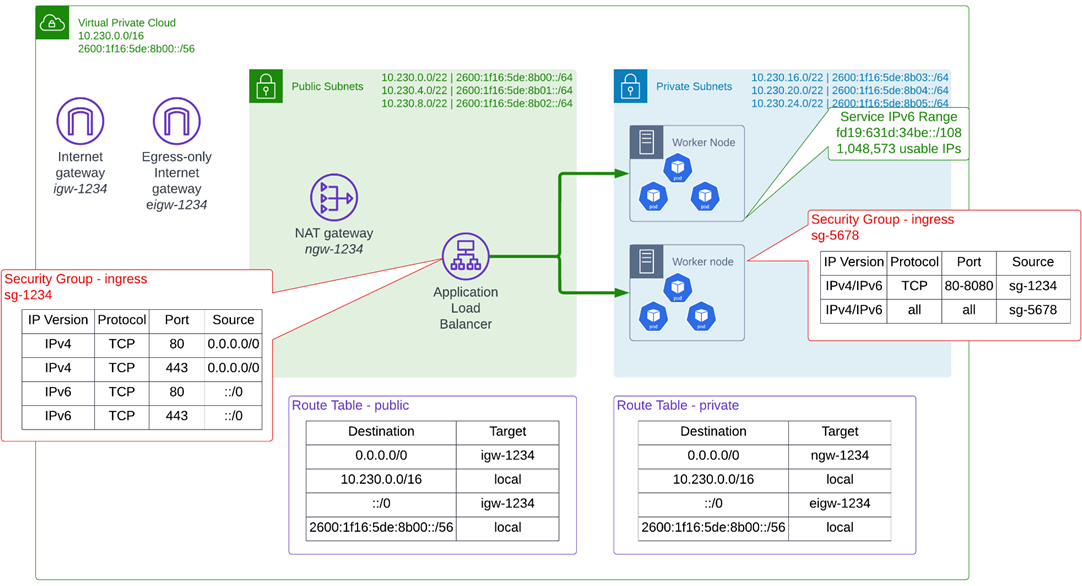

The next figure represents the proposed diagram with IPv6 additions for a customer-managed EKS VPC.

Figure 2 – Proposed architecture on EKS with IPv6.

Tools Used in the Proof of Concept

To solve the issue of severely limited IP address pools, Blue Sentry used the following tools in the proof of concept (PoC) to build and deploy infrastructure and applications:

- HashiCorp Terraform for declarative infrastructure as code (IaC).

- ArgoCD as the GitOps tool for automatic application deployment.

And for automation in deploying to other AWS services from inside the cluster:

- Application ingress controller (Application Load Balancing to EKS ingress).

- Cluster autoscaler (auto scaling of the cluster worker nodes).

- Certificate manager (Amazon Route 53 records).

VPC Considerations

The VPC and its subnets were created with IPv6 CIDRs attached. A /56 CIDR was attached to the VPC, and /64 CIDRs were used to create the subnets.

Using Terraform to build the VPC creates consistency and repeatability for each of the needed clusters. It also ensures proper routing and aligns each time with the enterprise security needs.

The deployment enables three public subnets for the Application Load Balancers (ALBs), three private subnets with IPv6 CIDRs attached for the EKS cluster, and three database subnets which are also private and have IPv6 CIDRs attached to them. The database on Amazon Relational Database Service (Amazon RDS) for this PoC remained IPv4.

For the purpose of this PoC, everything outside of EKS is dual-stack. The EKS cluster nodes and ALBs are assigned both IPv4 and IPv6 addresses, and the route tables and security groups have both IPv4 and IPv6 entries.

Everything needing an IP inside the EKS cluster was given an IPv6 address, so the subnets must have auto-assign IPv6 addressing enabled. Route tables and security groups must also allow and handle IPv6 traffic.

The table below represents the summarized view of the subnets in the VPC.

| Subnet type | Purpose | IPv4 CIDR | IPv6 CIDR |
| --- | --- | --- | --- |
| Public subnet | ALBs, NATGW | 10.230.0.0/22 | None (IPv4 only) |
| Public subnet | ALBs, NATGW | 10.230.4.0/22 | None (IPv4 only) |
| Public subnet | ALBs, NATGW | 10.230.8.0/22 | None (IPv4 only) |
| Private subnet | EKS master/worker | 10.230.16.0/22 | 2600:1f16:5de:8b03::/64 |
| Private subnet | EKS master/worker | 10.230.20.0/22 | 2600:1f16:5de:8b04::/64 |
| Private subnet | EKS master/worker | 10.230.24.0/22 | 2600:1f16:5de:8b05::/64 |
| Private subnet | Database | 10.230.28.0/22 | 2600:1f16:5de:8b06::/64 |
| Private subnet | Database | 10.230.32.0/22 | 2600:1f16:5de:8b07::/64 |
| Private subnet | Database | 10.230.36.0/22 | 2600:1f16:5de:8b08::/64 |
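As a quick sanity check on the layout, the sketch below uses Python's `ipaddress` module to confirm that the six IPv6 subnet CIDRs from the table fall inside a single /56, and to count how many /64 subnets a /56 can hold. The enclosing /56 printed here is derived from the subnet CIDRs and is not stated in the original PoC notes.

```python
import ipaddress

# IPv6 subnet CIDRs copied from the table above.
subnet_cidrs = [
    "2600:1f16:5de:8b03::/64",
    "2600:1f16:5de:8b04::/64",
    "2600:1f16:5de:8b05::/64",
    "2600:1f16:5de:8b06::/64",
    "2600:1f16:5de:8b07::/64",
    "2600:1f16:5de:8b08::/64",
]
subnets = [ipaddress.ip_network(cidr) for cidr in subnet_cidrs]

# Derive the enclosing /56 of each subnet; all six should agree.
parents = {subnet.supernet(new_prefix=56) for subnet in subnets}
assert len(parents) == 1, "subnets do not share one /56"
vpc_block = next(iter(parents))
print(vpc_block)  # 2600:1f16:5de:8b00::/56

# A /56 can be carved into 2**(64 - 56) subnets of size /64.
available_64s = 2 ** (64 - vpc_block.prefixlen)
print(available_64s)  # 256
```

Only 6 of the 256 possible /64 subnets are used in this design, which leaves ample room for more subnets in the same VPC.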

Amazon EKS Setup

Amazon EKS and the worker node groups are placed into the private subnets. Worker node instances have IPv4 addresses attached to them, but we will select IPv6 as the Cluster IP Address Family.

EKS handles the translation automatically: if your worker nodes have an IPv4 address, EKS configures IPv6 pod routing so that pods can communicate with IPv4 endpoints outside the cluster.

The service CIDR that EKS uses inside the cluster is a /108, giving us 1,048,576 usable IPs inside the cluster. This also gives us over 333 times the number of usable IP addresses previously available with IPv4. The service CIDR is configured automatically by EKS and is allocated from the IPv6 unique local address (ULA) range.
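The size of a /108 and its relationship to the ULA range can be checked with a few lines of Python. The CIDR below is a hypothetical example chosen for illustration, since EKS selects the real value itself. The figure of 3,057 is the usable IPv4 address count from the three /22 subnets described earlier.

```python
import ipaddress

# Hypothetical /108 service CIDR; EKS assigns the real one automatically.
service_cidr = ipaddress.ip_network("fd00:1234:5678::/108")

# Unique local addresses (ULA) are defined as fc00::/7.
ula_range = ipaddress.ip_network("fc00::/7")

service_ips = service_cidr.num_addresses  # 2**(128 - 108)
ratio_to_ipv4 = service_ips // 3057       # versus three /22 IPv4 subnets

print(service_ips)                         # 1048576
print(service_cidr.subnet_of(ula_range))   # True
print(ratio_to_ipv4)                       # 343
```

The ratio of 343 is consistent with the "over 333 times" figure quoted above.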

Deploy ArgoCD

Now that the VPC and EKS cluster are built with Terraform, ArgoCD will be deployed as the GitOps tool to automatically deploy other Kubernetes functionality and our applications.

GitOps is a way of implementing continuous deployment for cloud-native applications. It focuses on a developer-centric experience when operating infrastructure by using tools developers are already familiar with, including Git and continuous deployment tools.

Using the Helm provider in Terraform, ArgoCD is deployed declaratively and repeatably. In the same terraform apply, the following resources will be deployed:

- ArgoCD namespace in Kubernetes.

- AWS Certificate Manager (ACM) certificate for the load balancer/ingress that is not yet built.

- Amazon Route 53 validation record for the ACM certificate.

Using kubectl port-forward, we can access the now-deployed ArgoCD application.

Deploy Other Resources

- cert-manager: This is essential for the ALB ingress controller and works to automate certificate management on a Kubernetes cluster.

- ALB ingress controller: Using this custom resource, and with the right AWS Identity and Access Management (IAM) permissions, declared Kubernetes ingresses can deploy and utilize ALBs for internet-based or internal traffic needing to reach the cluster. No changes are needed. Because the ingress and ALB are automated with the ingress controller, migrating from one cluster to another can be completely automated.

- cluster-autoscaler: When growing a test deployment past the roughly 3,000-address limit of the IPv4 subnets, cluster-autoscaler scales out the worker nodes as needed.

- external-dns: This automates the creation of Amazon Route 53 records that point to the automatically created ALBs. It also cleans up any unused records automatically. Combined with the ALB ingress controller and ArgoCD, external-dns is the last automation needed for effortless deployment and migration.

Test Application Deployment and Scaling

To deploy the applications, there is a simple deployment of NGINX loading an index.html file from a ConfigMap resource. Once committed to the Git repository, ArgoCD automatically updates all three of the applications.

Initially, the deployments are at five replicas:

Testing gives us the following:

Figure 3 – Sample application webpage outputs.

Since a GitOps tool is being used, we can’t scale with kubectl but instead need to declaratively commit the request to Git. Note the change in the values.yaml file for app2 below:

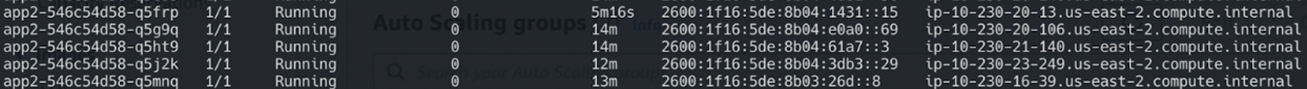

In a few minutes, the app2 deployment starts scaling well past the IPv4 address limit:

Figure 4 – kubectl output for total pods in the application deployment.

And the pods all have IPv6 addresses:

Figure 5 – kubectl output showing pods with IPv6 addresses.
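The same check can be scripted rather than read off the terminal. The sketch below counts pods by IP version from the JSON that `kubectl get pods -o json` produces, relying on the standard `status.podIP` field. The function name and the sample data are hypothetical and are not taken from the PoC.

```python
import ipaddress
import json


def count_pod_ip_versions(pod_list_json: str) -> dict:
    """Count pods by IP version from `kubectl get pods -o json` output."""
    counts = {4: 0, 6: 0}
    for pod in json.loads(pod_list_json).get("items", []):
        pod_ip = pod.get("status", {}).get("podIP")
        if pod_ip:  # pods that are still pending may have no address yet
            counts[ipaddress.ip_address(pod_ip).version] += 1
    return counts


# Hypothetical sample: two running pods with IPv6 addresses, one pending pod.
sample = json.dumps({
    "items": [
        {"status": {"podIP": "2001:db8::1"}},
        {"status": {"podIP": "2001:db8::2"}},
        {"status": {}},
    ]
})
print(count_pod_ip_versions(sample))  # {4: 0, 6: 2}
```

In an IPv6 cluster the count for version 4 should stay at zero, because pods receive only IPv6 addresses.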

The IP address family of a cluster can’t be changed once the cluster is created. Therefore, any apps/services must be migrated from an IPv4 cluster to the IPv6 cluster. With the automation tools listed in this post, migration is as easy as pointing the ArgoCD application to a different cluster.

Windows pods and services aren’t supported on EKS with IPv6 at this time.

Nodes are dual-stacked (have both IPv4 and IPv6 addresses), but pods/services will only have IPv6 addressing. Even though Kubernetes has supported dual-stack since version 1.23, Amazon EKS does not support this at this time.

Conclusion

The customer working with Blue Sentry is now able to scale beyond the limits they were experiencing with IPv4 subnets, allowing far more pods and deployments in each cluster. There are also fewer IPv4 CIDR ranges to manage as the Amazon EKS footprint grows.

Additionally, this led to fewer clusters to manage, more logical grouping of services, decreased time for management, and overall lower costs. To learn more about IPv6 with Amazon EKS, explore the AWS documentation.

Blue Sentry Cloud – AWS Partner Spotlight

Blue Sentry Cloud is an AWS Premier Tier Services Partner with deep expertise in Kubernetes that focuses on cloud-native application deployment and operations.