AWS Partner Network (APN) Blog

Connectivity Options for VMware Cloud on AWS Software Defined Data Centers

Editor’s Note: This post has been updated following announcements at AWS re:Invent 2018.

By Haider Witwit, Senior Solutions Architect at AWS

By Aarthi Raju, Partner Solutions Architect at AWS

VMware Cloud on AWS enables customers to have a hybrid cloud platform by running their VMware workloads in the cloud while having seamless connectivity to on-premises and Amazon Web Services (AWS) native services.

Customers can use their existing AWS Direct Connect (DX) or Virtual Private Network (VPN) solutions to connect to their VMware Software Defined Data Center (SDDC) clusters.

In this post, we dive deep into SDDC networking and how it connects to different local and remote customer networks. We also explore options for connecting from your SDDC to Amazon Virtual Private Cloud (Amazon VPC), on-premises networks, and multiple VPCs.

SDDC Networking

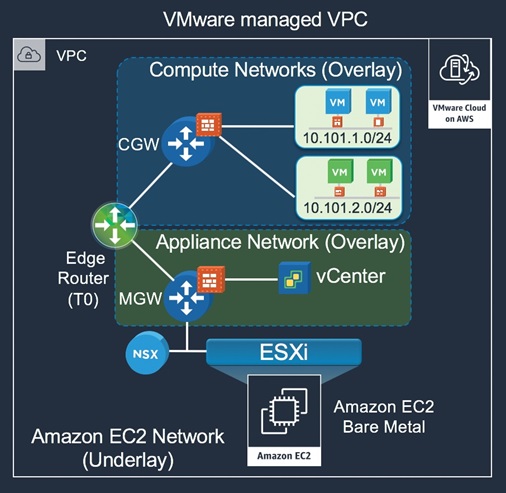

With the SDDC deployed on AWS bare metal hardware provided by Amazon Elastic Compute Cloud (Amazon EC2), the lowest network level is the Amazon EC2 underlay network, or Infrastructure Subnet. This network hosts the VMkernel adapters of the ESXi hosts, such as the vMotion, vSAN, and host management networks.

VMware uses NSX to control access to this network as part of the SDDC management model, and limits access to only remote traffic required to support features like cross-cluster vMotion.

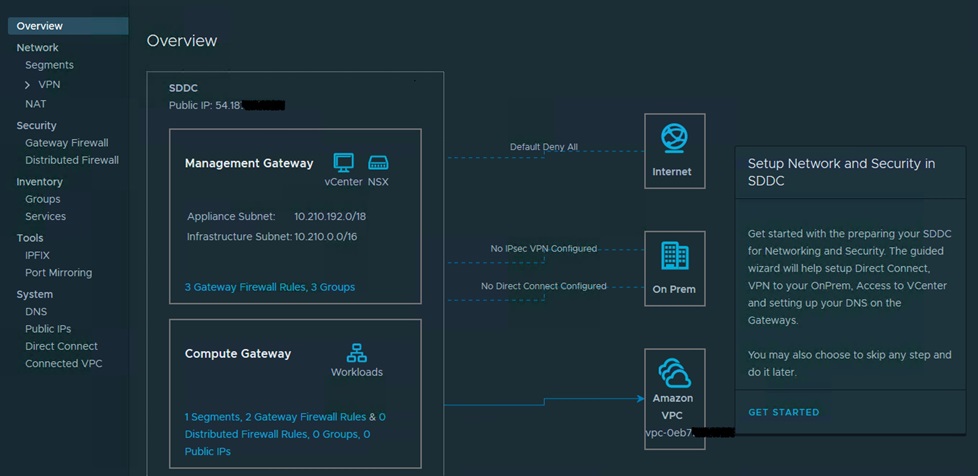

Figure 1 – Networking and security overview in the VMware Cloud on AWS portal.

On top of the underlay, NSX builds overlay networks for logical VMware connectivity. Each SDDC has two types of overlay networks:

- Appliance Subnet, used to provide connectivity to SDDC management components like vCenter. This network is created during cluster provisioning with a network range carved out of the Infrastructure or Management subnet. Customers can optionally specify the network range of the Management subnet during cluster creation to avoid conflicts with other networks that will need to connect to the SDDC. Access to this network is controlled by the NSX Management Gateway (MGW) through firewall rules and IPsec tunnels.

- One or more customer-managed logical networks for VM traffic. These can be either routed locally within the cluster or stretched from remote on-premises clusters, with a remote gateway for L3 routing. Access to these networks is controlled by the NSX Compute Gateway (CGW) through firewall rules and IPsec capabilities, which let customers connect securely to their remote workloads and the Internet.

Figure 2 – SDDC network topology.
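Because the Management subnet range can be chosen at cluster creation to avoid conflicts, it can help to check a candidate range against the networks that will connect to the SDDC. The following is a minimal sketch using Python's standard `ipaddress` module. The function name and all CIDR ranges are hypothetical and chosen only for illustration.

```python
import ipaddress


def find_conflicts(management_cidr, other_cidrs):
    """Return the entries of other_cidrs that overlap management_cidr."""
    mgmt = ipaddress.ip_network(management_cidr)
    return [c for c in other_cidrs if mgmt.overlaps(ipaddress.ip_network(c))]


# Hypothetical on-premises and VPC ranges that must reach the SDDC.
on_prem_and_vpc = ["10.0.0.0/16", "172.16.0.0/12", "192.168.10.0/24"]

# A candidate that overlaps none of the ranges above.
print(find_conflicts("10.2.0.0/16", on_prem_and_vpc))

# A candidate that falls inside 172.16.0.0/12 and would conflict.
print(find_conflicts("172.20.0.0/20", on_prem_and_vpc))
```

An empty result suggests the candidate range is safe with respect to the listed networks. It says nothing about networks that were left out of the list.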

Connectivity to Customer VPC

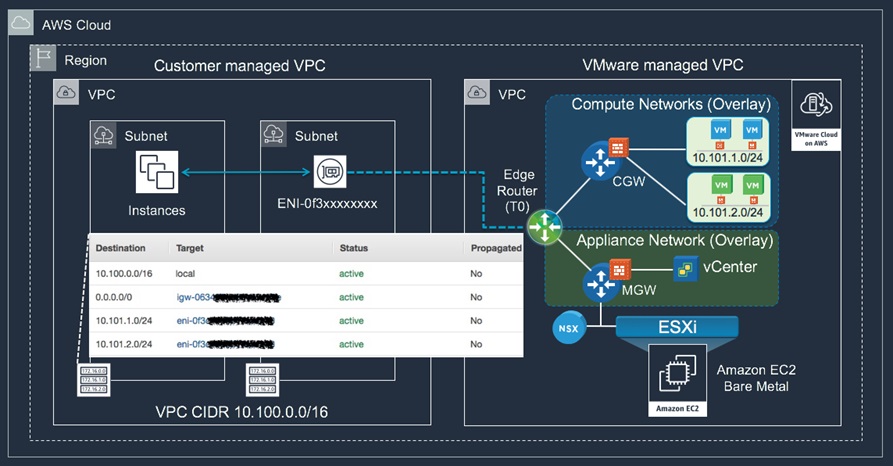

VMware Cloud on AWS enables customers to seamlessly integrate their SDDC cluster to AWS services. This is achieved by connecting the SDDC cluster to the customer’s VPC of choice. During the onboarding process, customers have the ability to choose a VPC and the subnets they want to connect to their SDDC cluster.

Customers will also run an AWS CloudFormation template, which grants VMware Cloud Management Services cross-account roles with a managed policy. This managed policy allows VMware to perform operations like creating Elastic Network Interfaces (ENIs) and route tables. A detailed description of what the policy allows can be seen in the AWS IAM Console under AWS Managed Policies. The policy’s Amazon Resource Name (ARN) is arn:aws:iam::aws:policy/AmazonVPCCrossAccountNetworkInterfaceOperations.
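To make the structure of that ARN easier to read, here is a small sketch that splits it into its standard colon-separated fields. The `Arn` type and `parse_arn` helper are our own illustrative names, not part of any AWS SDK. The check relies on the fact that AWS managed policies carry `aws` in the account field.

```python
from typing import NamedTuple


class Arn(NamedTuple):
    partition: str
    service: str
    region: str
    account: str
    resource: str


def parse_arn(arn: str) -> Arn:
    """Split an ARN of the form arn:partition:service:region:account:resource."""
    parts = arn.split(":", 5)
    if len(parts) != 6 or parts[0] != "arn":
        raise ValueError(f"not an ARN: {arn!r}")
    return Arn(*parts[1:])


policy = parse_arn(
    "arn:aws:iam::aws:policy/AmazonVPCCrossAccountNetworkInterfaceOperations"
)

# IAM is a global service, so the region field is empty; the account
# field "aws" marks the policy as AWS managed rather than customer managed.
is_aws_managed = policy.service == "iam" and policy.account == "aws"
print(policy.resource, is_aws_managed)
```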

Once the role has been created and assigned, VMware Cloud Management Services assumes a role in the customer account and creates ENIs in the subnet the customer chooses. These ENIs are directly attached to the ESXi hosts in the VMware SDDC account.

Of all the attached ENIs, only one is in use. The NSX edge router (also called the T0 router) lives on a single ESXi host, and this determines which ENI is in the active state. This ENI allows for connectivity between the SDDC cluster and the customer VPC. In the event of a host failure, VMware vMotions the Compute Gateway to a new host and the customer route table is updated to point to the new active ENI. Customers will be able to see these ENIs in their account with a description set to ‘VMware VMC Interface.’
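The active-ENI behavior described above can be pictured with a toy model. This is only a sketch for reasoning about the failover, not how VMware implements it. The `Sddc` class, host names, ENI IDs, and CIDR are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Sddc:
    host_enis: dict   # ESXi host name -> ENI attached to that host
    edge_host: str    # host currently running the NSX edge router
    logical_networks: list = field(default_factory=list)

    def active_eni(self):
        """The only ENI in use is the one on the host running the edge router."""
        return self.host_enis[self.edge_host]

    def vpc_routes(self):
        """Routes the customer route table would hold: logical network -> active ENI."""
        return {cidr: self.active_eni() for cidr in self.logical_networks}

    def fail_over(self, new_host):
        """Move the edge router to another host, which changes the active ENI."""
        if new_host not in self.host_enis:
            raise KeyError(new_host)
        self.edge_host = new_host


sddc = Sddc(
    host_enis={"esx-1": "eni-aaa", "esx-2": "eni-bbb"},  # hypothetical IDs
    edge_host="esx-1",
    logical_networks=["192.168.1.0/24"],
)
print(sddc.vpc_routes())   # routes point at the ENI on esx-1
sddc.fail_over("esx-2")
print(sddc.vpc_routes())   # the same routes now point at the ENI on esx-2
```

The point of the model is that the set of routes stays the same across a failover. Only the ENI they target changes.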

VMware also makes it easy to update customer route tables based on the logical networks that are created. The default route table is updated with routes to all customer logical networks, which allows services running in the VPC to communicate with the logical networks. Customers need to have the right security group and Network Access Control List (ACL) rules to allow traffic from the logical networks.

Customers can view details about the connected account from their VMware Cloud on AWS console. Connectivity between the SDDC and VPC will not incur any data egress costs if the ENI created for this service and the SDDC are in the same Availability Zone (AZ). If they are in different AZs, you will incur standard data transfer charges.

Figure 3 – Connectivity model between SDDC and customer VPC.

Connectivity to Other Customer VPCs

There are three primary approaches to enable connectivity between SDDCs and customer-managed VPCs (other than the connected VPC where the VMware Cloud ENIs are provisioned). Choosing the right approach depends on the number of VPCs you need to connect to and on whether the traffic flow requires additional networking services.

Before we get into these options, we need to mention that the new NSX-T backed SDDCs support both BGP route-based and static policy-based VPNs. Our recommendation is to always use route-based VPNs unless they are not supported on the other end of the connection. We’ll focus on route-based VPN practices in this post.

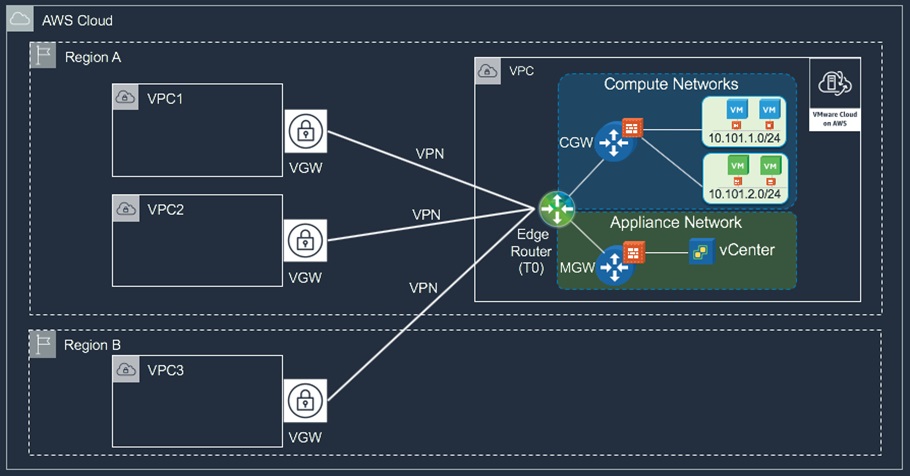

The first approach is to create 1:1 VPN tunnels between the NSX T0 edge router and the different VPCs using the AWS-managed VPN offering (or any Amazon EC2 based VPN, if preferred). To maintain connection resiliency, it’s important to configure both IPsec tunnels provided by the AWS-managed VPN to the edge router. AWS will use BGP to influence the selection of the active tunnel on both sides and fail over as necessary.

The T0 edge router is highly available by design through an active/standby configuration, so it will not be a single point of failure for the connection. This model works well when connectivity is required to a small number of VPCs.

Figure 4 – Approach 1: Direct VPN tunnels from T0 Router to customer VPCs.

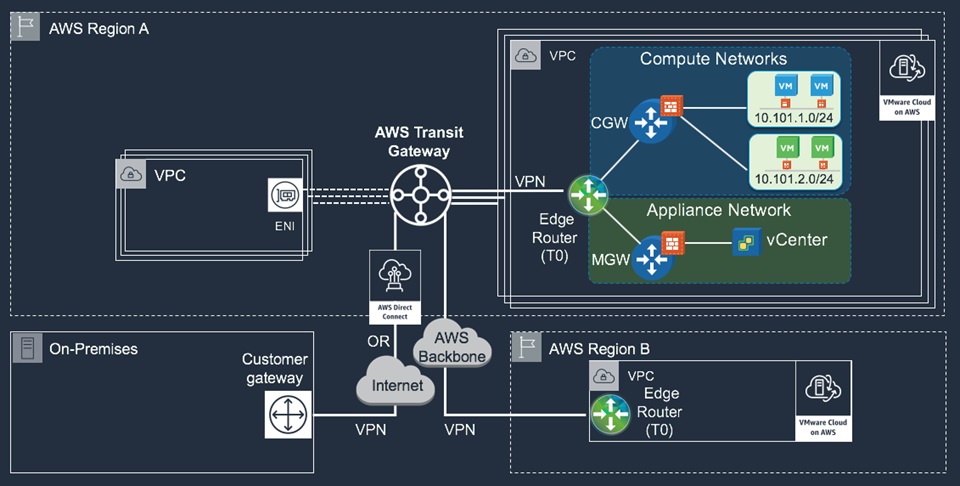

If connectivity is required to multiple VPCs, a different solution using the recently announced AWS Transit Gateway can be used to minimize the number of tunnels and provide a simplified hub-and-spoke routing model for SDDC to SDDC, VPC, and on-premises environments.

Figure 5 – Approach 2: Hub and spoke model using AWS Transit Gateway.
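To see why the hub-and-spoke model reduces the tunnel count, compare the number of IPsec tunnels the T0 edge router terminates under each approach. This sketch assumes two tunnels per AWS-managed VPN connection, as described for Approach 1. It also assumes that in Approach 2 the SDDC uses a single VPN connection to the Transit Gateway while VPCs attach to it directly. The function names are ours.

```python
def tunnels_direct(num_vpcs, tunnels_per_vpn=2):
    """Approach 1: one VPN connection (two tunnels) per VPC."""
    return num_vpcs * tunnels_per_vpn


def tunnels_via_hub(tunnels_per_vpn=2):
    """Approach 2: one VPN connection to the hub, whatever the VPC count."""
    return tunnels_per_vpn


for n in (2, 10, 50):
    print(f"{n} VPCs: direct={tunnels_direct(n)}, hub={tunnels_via_hub()}")
```

The direct model grows linearly with the number of VPCs, while the hub model stays constant from the SDDC's point of view.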

AWS Transit Gateway (TGW) is highly scalable and resilient. It supports multiple Routing Domains for granular routing and network segregation, similar to VRF functionality. TGW can have up to 5,000 attachments per Region, and can burst up to 50Gbps of traffic per VPC attachment. It supports route-based VPNs with Equal-Cost Multi-Path (ECMP) routing, which increases VPN bandwidth by distributing traffic equally across multiple tunnels when they advertise the same CIDR block. Native Direct Connect support is coming in early 2019.
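As a rough illustration of how ECMP spreads traffic, the sketch below hashes each flow's 5-tuple to choose one of several equal-cost tunnels. This is a generic flow-hashing example, not the algorithm Transit Gateway actually uses. The tunnel names, addresses, and ports are hypothetical.

```python
import hashlib
from collections import Counter


def pick_tunnel(flow, tunnels):
    """Map a flow 5-tuple to one tunnel; the same flow always picks the same tunnel."""
    key = "|".join(str(part) for part in flow).encode()
    digest = hashlib.sha256(key).digest()
    return tunnels[int.from_bytes(digest[:8], "big") % len(tunnels)]


# Four tunnels assumed to advertise the same CIDR block (equal cost).
tunnels = ["tunnel-1", "tunnel-2", "tunnel-3", "tunnel-4"]

# 1,000 hypothetical flows: (src IP, dst IP, src port, dst port, protocol).
flows = [
    (f"10.0.0.{i % 250 + 1}", "192.168.1.10", 40000 + i, 443, "tcp")
    for i in range(1000)
]

usage = Counter(pick_tunnel(flow, tunnels) for flow in flows)
for name in tunnels:
    print(name, usage[name])
```

Because the choice is made per flow, aggregate bandwidth grows with the number of tunnels, but any single flow stays on one tunnel.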

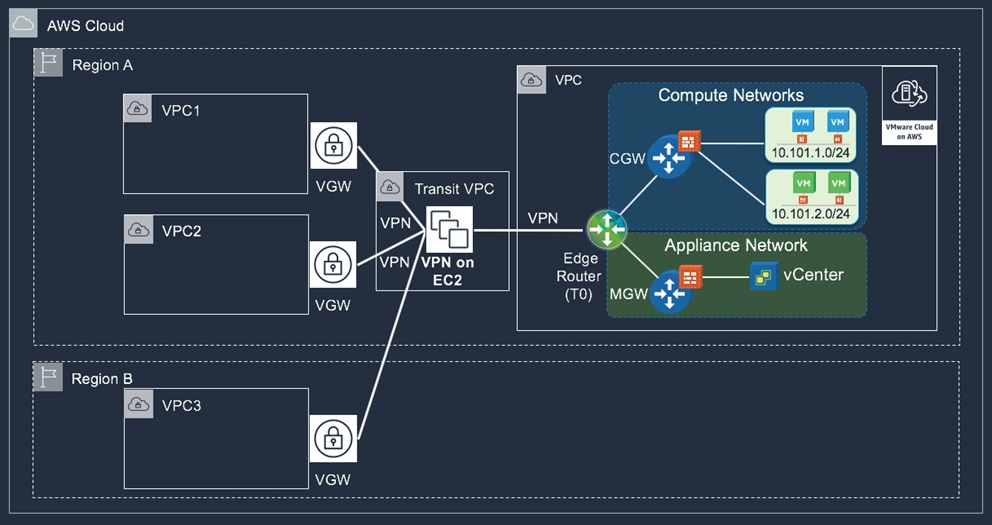

In cases where additional network services, like traffic inspection or IPS/IDS, are needed, the Transit VPC approach can still be used. This model uses a third-party software VPN on Amazon EC2 inside a shared/transit VPC. The software router on Amazon EC2 works as a hub that terminates tunnels to the different environments, or spokes. Routing and additional networking capabilities are provided by the software router, depending on the software vendor’s capabilities.

Figure 6 – Approach 3: Transit VPC model.

Connecting to On-Premises

The SDDC supports integration with the AWS Direct Connect service to provide a persistent, reliable, and low-latency connection to customers’ on-premises environments. DX uses virtual interfaces (VIFs) to enable access to AWS services. A private virtual interface is used to connect to resources inside Amazon VPC. A public virtual interface connects to public AWS services outside VPC boundaries, such as Amazon Simple Storage Service (Amazon S3), Amazon Glacier, and EC2 Elastic IP addresses (EIP).

AWS Direct Connect is a multi-account service. Connection owners can create and share virtual interfaces with different AWS accounts in a hosted virtual interface model. VMware Cloud on AWS supports the hosted virtual interface model. SDDC customers can create private virtual interfaces from DX connections that they own or request from AWS Partner Network (APN) Partners, and attach them to the VMware managed VPC where the SDDC resides.

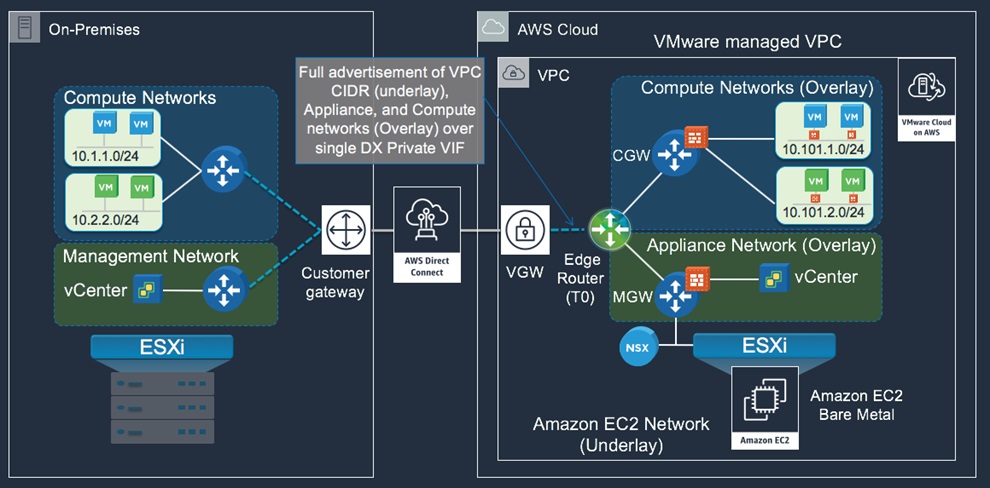

The new VMware Cloud on AWS SDDCs backed by NSX-T networking provide full routing support for Infrastructure (underlay) and overlay networks over a single Private Virtual Interface. This model simplifies the routing requirements between SDDC and on-premises environments by using BGP routing without the need for VPN tunnels, unless encryption is required.

Figure 7 – SDDC integration with AWS Direct Connect.

In cases where encryption is required for some or all network communication to on-premises, the SDDC supports using either the public or the private address of the T0 edge router when creating VPN connections. This gives customers flexibility in how they route their VPN traffic over Direct Connect:

- In the case of a Private VIF, the T0 edge router uses a private IP from the Appliance subnet space that gets advertised to the customer site over the Private VIF. VPN tunnels created to the private IP get routed over the Private VIF by default, with no need for additional configuration.

- In the case of a Public VIF, the T0 edge router uses a public IP from AWS public space that gets advertised to the customer site over the Public VIF. VPN tunnels created to this public IP will be routed over the DX Public VIF when the on-premises edge router’s public address is included in the customer advertisements to AWS. This provides enhanced connectivity over the AWS backbone for the VPN traffic, compared with routing over the Internet.

Network Requirements for Data Center Extension

Let’s take data center extension as one common use case and touch on how to meet networking requirements for vMotion with AWS Direct Connect today.

One of the most popular core features of VMware vSphere is vMotion, the ability to live migrate workloads from one physical host to another with no downtime. vMotion capability was later extended to support cross-cluster, cross-vCenter, and long-distance live migrations. These features pushed infrastructure capabilities to their limits to support and maintain reliable migration operations.

VMware has defined three network requirements for reliable virtual machine migrations between on-premises and SDDC:

- L3 connectivity between source and destination vMotion and host management networks. This routing flow can be enabled by Private VIF between on-premises and the SDDC. The Private VIF will enable L3 connectivity between the SDDC underlay (vMotion and host management) and related on-premises networks.

- L3 connectivity between source and destination vCenter Servers. Same as above. The Private VIF will enable L3 connectivity between the SDDC Appliance network and related on-premises management network.

- L2 stretch to maintain addressing during VM migration. This extension can also be routed over the same Private VIF. It requires an L2 VPN between the T0 edge and on-premises NSX edge VPN client, which is a free download for VMC customers.

Figure 8 – Network requirements for vMotion between on-premises and SDDC.

Conclusion

VMware Cloud on AWS has different connectivity options available to remote networks. The VMware endpoints in the connected customer VPC provide low latency, high throughput, and seamless connectivity to VPC workloads and other AWS services. SDDC integration with AWS Direct Connect provides a reliable, dedicated, and low-latency connection between the VMware SDDC and on-premises VMware environments. This optimized data transfer speed is required for reliable vMotion operations.

SDDC customers who need to connect to multiple VPCs can create tunnels over AWS backbone using an AWS-managed VPN with multiple options based on SDDC logical networks and connectivity requirements.

Additional Resources

- VMware Cloud on AWS website

- Getting Started guide for VMware Cloud on AWS

- Getting started with Amazon Virtual Private Cloud (VPC)

- Setting up AWS Virtual Private Network (VPN)

- AWS Direct Connect user guide

- AWS re:Invent 2018: Connectivity for VMware Cloud on AWS SDDCs (NET321)

Contact VMware Cloud on AWS

If you would like to see how VMware Cloud on AWS can help transform your business, please contact VMware.