AWS Partner Network (APN) Blog

Improving the Performance of Distributed Atlassian Applications with Amazon CloudFront

By Pavel Fomin, Director of Cloud Migrations at Cprime

By Dan Taoka, Partner Solutions Architect, AWS ISV Workload Migration Program

By Jacob Cokely, Partner Development Manager, AWS ISV Workload Migration Program

Atlassian users who run Jira, Confluence, or Bitbucket applications across distributed teams find that, due to slower network connectivity, content sometimes loads much more slowly for team members in geographically distant regions.

For instance, team members in Europe or Asia experience much slower web performance for content hosted in the United States than team members in the U.S.

A reasonable solution to this problem may involve setting up a geo-clustering environment that deploys application nodes in multiple global regions to more efficiently serve local users. Unfortunately, latency requirements between individual application nodes do not make this a feasible solution.

A new feature in Atlassian’s Jira, Confluence, and Bitbucket Data Center versions allows the use of Amazon CloudFront to improve the user experience and overall performance of applications hosted in other global regions.

In this post, we walk you through this feature, show you how to set it up, and explain how to measure the results.

Cprime’s Approach

Cprime is an AWS Partner Network (APN) Advanced Consulting Partner focused on helping transforming businesses get in sync. Cprime is also one of Atlassian’s largest partners.

While helping global enterprises use Atlassian products to advance through their transformation to the cloud, Cprime encountered this hindrance to the performance of distributed teams and came up with the solution in this post.

Atlassian’s approach to this performance challenge is to cache the static content in edge locations. As users access the application, static content is cached on the edge location nearest to them.

Accessing static content for the first time takes the usual amount of time, but all further downloads of the same content will access the cached data, thereby reducing application load time.

For example, assume your Atlassian application is hosted in New York City, with other team members accessing it from Singapore. Without caching content at edge locations, team members in Singapore would have to wait for all the static content to download from New York every time they access it.

With this new feature, Singapore users would download all the static content directly from Singapore’s edge location. This approach has proven to be as much as 50 percent more effective when measured against deployments without this feature enabled.
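The caching behavior described above can be illustrated with a toy model. This is a minimal sketch, not how CloudFront is implemented: the `EdgeLocation` class, the asset path, and the asset body are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class EdgeLocation:
    """Toy model of a CDN edge location that caches static assets by path."""

    name: str
    cache: dict = field(default_factory=dict)
    origin_fetches: int = 0

    def get(self, path: str, origin: dict) -> tuple[str, str]:
        """Return (body, source), where source is 'edge' or 'origin'."""
        if path in self.cache:
            return self.cache[path], "edge"
        # Cache miss: make the long trip to the origin once, then keep a copy.
        body = origin[path]
        self.origin_fetches += 1
        self.cache[path] = body
        return body, "origin"


# Hypothetical static asset served by the origin in New York.
new_york_origin = {"/s/app.css": "body { margin: 0; }"}
singapore = EdgeLocation("Singapore")

first = singapore.get("/s/app.css", new_york_origin)
second = singapore.get("/s/app.css", new_york_origin)
print(first[1], second[1], singapore.origin_fetches)  # prints: origin edge 1
```

Only the first request travels to New York. The second request is answered from the Singapore cache, which is where the load-time saving comes from.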

Currently, this feature is only available for the following versions of Atlassian Data Center applications:

- Jira Software 8.3+

- Jira Service Desk 4.3+

- Confluence 7.0+

- Bitbucket 6.8+

What Content Can Be Cached?

Only the static content and assets can be cached. Dynamic content will still be served by the individual application nodes from the region where the application is installed.

Static content, which can be cached, includes:

- CSS

- JavaScript

- Fonts

Dynamic content, which cannot be cached, includes:

- Attachments

- Avatars

- Ticket data

- Page data

- Custom themes

About the Tools and Technologies

The solution described in this post includes multiple AWS and Atlassian products and technologies. To better understand our approach, it may be worthwhile to review them.

AWS Availability Zones and Regions

AWS cloud computing resources are located in different physical locations divided into Regions and Availability Zones.

Regions are large and divided by geographic location. Availability Zones are distinct locations within a Region that are engineered to be isolated from failures in other Availability Zones. They provide inexpensive, low-latency network connectivity to other Availability Zones in the same Region.

Atlassian Project Management Tools

Atlassian Jira is a project management platform that helps teams plan, assign, track, report, and manage their work. Atlassian Confluence helps teams collaborate and share knowledge. Atlassian Bitbucket provides Git code management and allows teams to plan projects, collaborate on code, test, and deploy.

These tools are designed to work together efficiently: Jira helps teams plan and track the work, Confluence provides a single place to organize all the content, and Bitbucket allows developers to securely and privately store source code.

Data Center editions of these Atlassian applications support active clustering of application nodes, which provides a highly available and scalable solution. This ensures users have uninterrupted, high-performance access to critical applications across multiple Availability Zones within a single Region.

AWS Content Delivery and Load Balancing Tools

Amazon CloudFront is a fast content delivery network (CDN) service that securely delivers data, videos, applications, and APIs to users across the globe with low latency and high transfer speeds.

Elastic Load Balancing (ELB) automatically distributes incoming application traffic across multiple targets, such as Amazon Elastic Compute Cloud (Amazon EC2) instances, containers, IP addresses, and AWS Lambda functions. It can handle the varying load of your application traffic in a single Availability Zone or across multiple Availability Zones.

How to Implement the Solution

Implementing the CDN feature for your Atlassian Data Center on AWS is simple, but requires a few important steps to ensure proper configuration:

- Set up an Application Load Balancer

- Set up an Amazon CloudFront CDN

- Enable CDN in Atlassian Jira

1. Setting up Application Load Balancer

If your Atlassian applications are already internet-facing, you can use the existing Application Load Balancer.

However, if your Atlassian applications are not accessible from the internet, you will need to deploy an additional internet-facing Application Load Balancer to make sure that CloudFront can reach its static assets. Lock it down securely to allow only CloudFront to access its static assets, using this process.

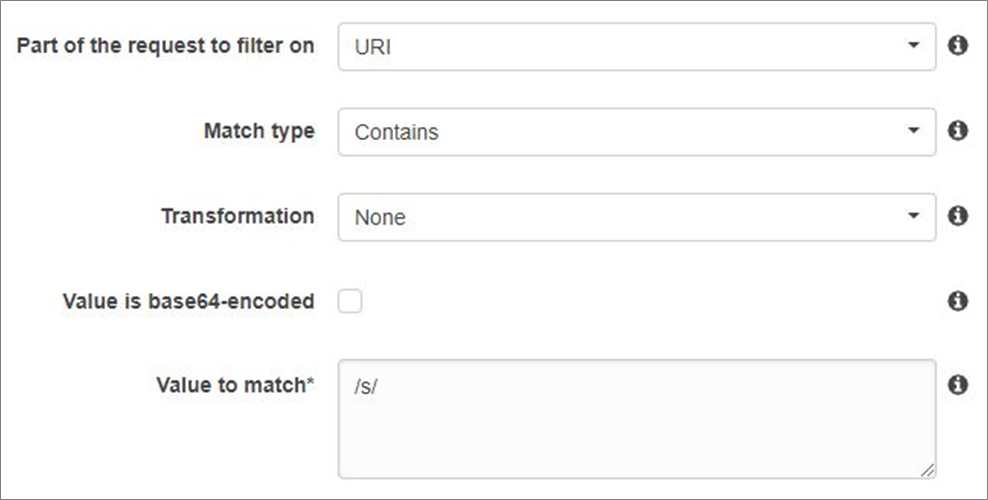

First, configure the web Access Control List (ACL) for the Application Load Balancer. You can find the ACL within the AWS WAF service. Make sure the new Application Load Balancer:

- Allows requests from paths that start with /s/* (required)

- Is limited to GET, HEAD, and OPTIONS HTTP methods (optional)
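To reason about which requests the two rules above should let through, the logic can be written as a small predicate. This is only a sketch of the intended behavior, not an AWS WAF configuration. The function name and the sample paths are hypothetical, and it assumes the application is served without an additional context path in front of `/s/`.

```python
ALLOWED_METHODS = {"GET", "HEAD", "OPTIONS"}
STATIC_PREFIX = "/s/"


def is_request_allowed(method: str, path: str, restrict_methods: bool = True) -> bool:
    """Mirror the two rules: the path must start with /s/ (required),
    and the method may optionally be limited to GET, HEAD, and OPTIONS."""
    if not path.startswith(STATIC_PREFIX):
        return False
    if restrict_methods and method.upper() not in ALLOWED_METHODS:
        return False
    return True


# Illustrative requests; the paths are examples, not taken from a real instance.
print(is_request_allowed("GET", "/s/abc123/app.js"))         # static asset
print(is_request_allowed("GET", "/secure/Dashboard.jspa"))   # dynamic page
print(is_request_allowed("POST", "/s/abc123/app.js"))        # disallowed method
```

Note that a path such as `/secure/...` begins with `/s` but not with `/s/`, so a rule written as a plain "starts with /s" match would be too permissive.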

Figure 1 – Example of how to configure the Application Load Balancer.

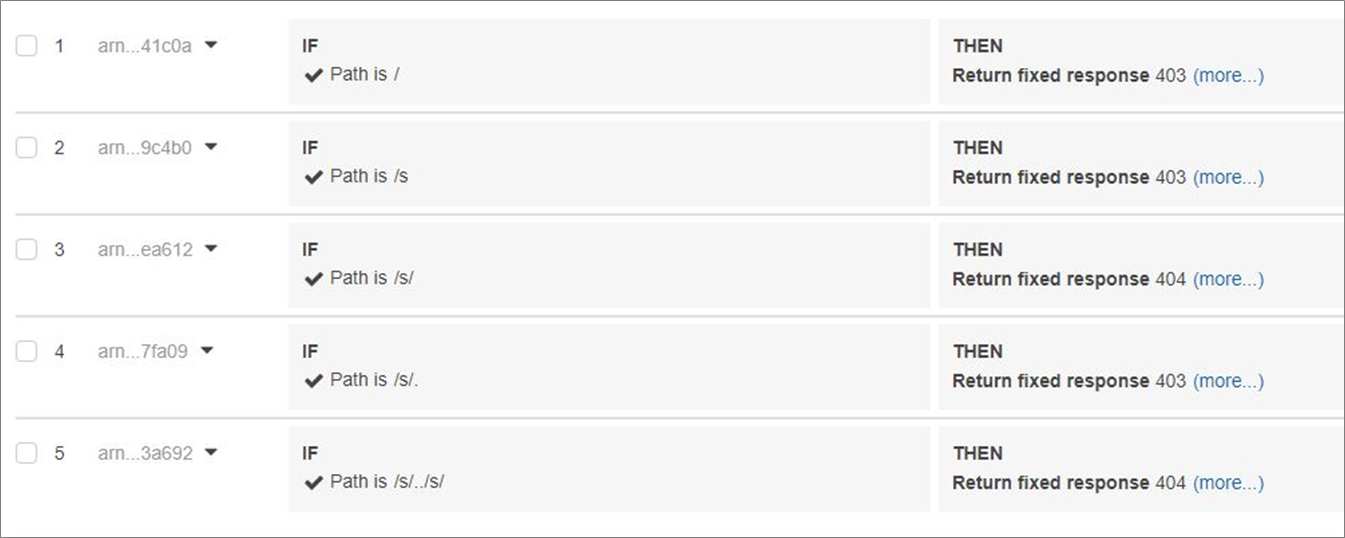

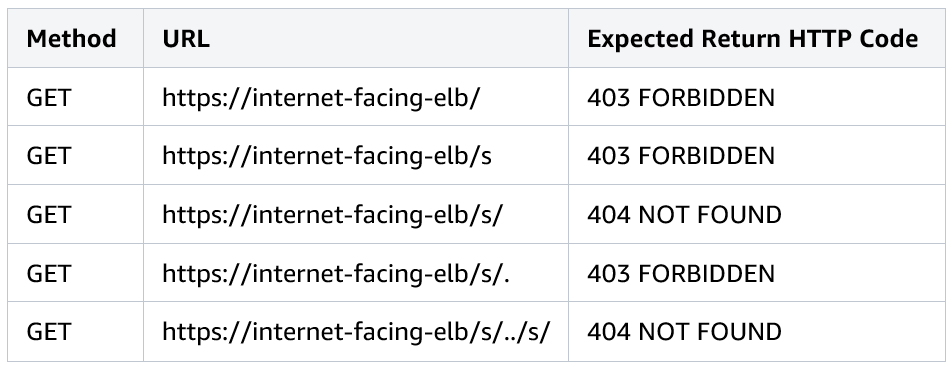

Second, edit the Application Load Balancer’s listener rules to make sure your setup is fully secure.

Figure 2 – Example of setting up the Application Load Balancer listener rules.

Finally, check the proper security settings by performing some of these manual tests.

2. Setting up Amazon CloudFront

Provisioning Amazon CloudFront with default settings should suffice. However, you can customize the following settings:

- Origin Domain Name: example.com/jira

- Allowed HTTP methods: GET, HEAD, OPTIONS

- Viewer protocol policy: Redirect HTTP to HTTPS

- Object caching: Use Origin Cache Headers

- Forward cookies: None. This is important to make sure static assets are cached without user context.

- Query string forwarding: Forward all, cache based on all.

- HTTP protocols: Must include HTTP/2

- Error TTL: Set to 10-30 seconds to improve outage recovery time
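For teams that script their infrastructure, several of the settings above can be written down as data. The following is a partial, illustrative fragment only, expressed as a plain Python dictionary. The field names follow the CloudFront `DistributionConfig` structure as we understand it (the legacy `ForwardedValues` form), so verify them against the current API reference before use. The choice of error codes and the 10-second value are assumptions made for this sketch, and required fields such as the origin definition are deliberately omitted.

```python
# Assumptions for illustration: which error codes to tune, and the TTL picked
# from the 10-30 second range suggested above.
ERROR_CODES = [502, 503, 504]
ERROR_TTL_SECONDS = 10

cache_behavior = {
    "ViewerProtocolPolicy": "redirect-to-https",  # Redirect HTTP to HTTPS
    "AllowedMethods": {
        "Quantity": 3,
        "Items": ["GET", "HEAD", "OPTIONS"],
        "CachedMethods": {"Quantity": 2, "Items": ["GET", "HEAD"]},
    },
    "ForwardedValues": {
        "QueryString": True,             # forward all, cache based on all
        "Cookies": {"Forward": "none"},  # cache assets without user context
    },
}

# Partial fragment: not a complete DistributionConfig.
distribution_fragment = {
    "HttpVersion": "http2",
    "DefaultCacheBehavior": cache_behavior,
    "CustomErrorResponses": {
        "Quantity": len(ERROR_CODES),
        "Items": [
            {"ErrorCode": code, "ErrorCachingMinTTL": ERROR_TTL_SECONDS}
            for code in ERROR_CODES
        ],
    },
}

print(distribution_fragment["DefaultCacheBehavior"]["ForwardedValues"])
```

Keeping the settings as data makes it easier to review them against the list above before applying them through whichever provisioning tool you use.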

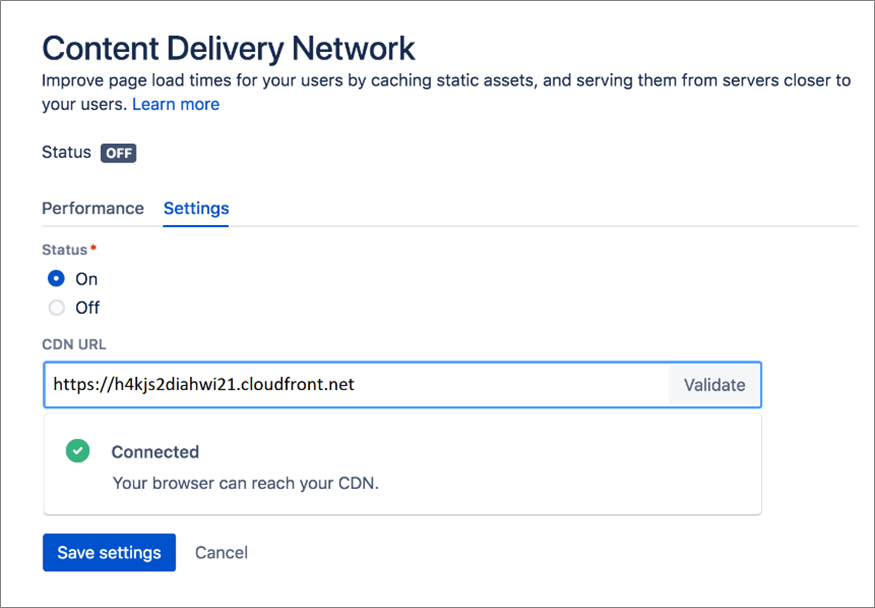

3. Enabling CloudFront in Jira

By default, the CDN feature is turned off in your application configuration. You can enable and configure it in the System area of your application administration.

For example, to enable it in Jira, go to Admin > System > Content Delivery Network > Settings.

Simply copy and paste your CloudFront URL, mark the Status ON, and save the changes.

Figure 3 – How to enable Amazon CloudFront in Jira.

Measuring the Results

There are tools within each application that measure the effect of the CDN feature over time. They measure the time the browser waits while content is being rendered.

The more data collected, the better and more accurate the results.
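If you also collect your own timing samples, for example from browser developer tools, one simple way to compare them is the change in median wait time. This is a minimal sketch and not the calculation the built-in tools perform. The function name is hypothetical, and the sample timings are made up purely to show the arithmetic.

```python
from statistics import median


def median_improvement_percent(before_ms, after_ms):
    """Percentage reduction in median wait time after enabling the CDN."""
    before = median(before_ms)
    after = median(after_ms)
    return (before - after) / before * 100


# Made-up sample timings in milliseconds, for illustration only.
before_cdn = [900, 1000, 1100]
after_cdn = [700, 750, 800]
print(median_improvement_percent(before_cdn, after_cdn))  # prints: 25.0
```

The median is used rather than the mean so that a single unusually slow page load does not dominate the comparison, which matters most when only a few samples are available.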

Caveats

While the download speed of the static content can drastically improve, it’s important to understand this feature will not necessarily make your application perform faster.

It will, however, reduce the overall load on your application node cluster, therefore reducing latency for existing users.

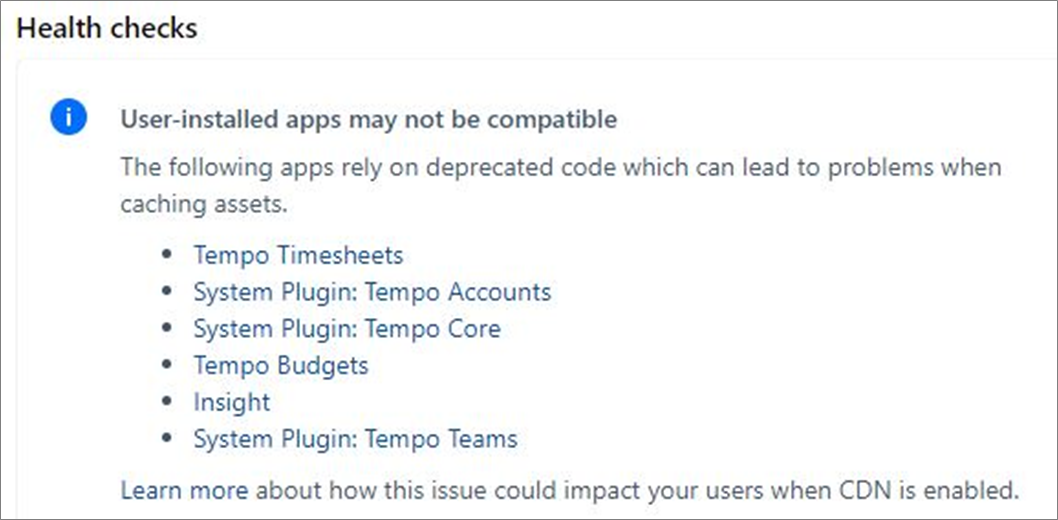

Additionally, not all third-party add-ons to your Atlassian applications may be compatible with this feature. You can find out more by using the Health Check option in the CDN configuration settings.

Even though some of your add-ons may not be compatible with CDN, you can still use the feature. We highly recommend you thoroughly test it in a test environment before enabling it in production.

Conclusion

Using Amazon CloudFront as a content delivery network in an Atlassian Data Center application helps improve performance for users distributed across global regions.

Cprime has helped address this global latency issue for a number of teams. Cprime works closely with AWS and Atlassian, an APN Advanced Technology Partner, to deploy and maintain the Atlassian product suite.

Cprime has also helped customers migrate their Atlassian installations to AWS through the ISV Workload Migration Program, which enables organizations to achieve their business goals and accelerate their cloud journey.

Cprime – APN Partner Spotlight

Cprime is an APN Advanced Consulting Partner focused on helping transforming businesses get in sync. As one of Atlassian’s largest partners, Cprime enables global enterprises to use Atlassian products to advance through their cloud transformation journey.
