AWS Partner Network (APN) Blog

Scaling Global Connectivity for VMware Cloud on AWS

By Schneider Larbi, Sr. Partner Solutions Architect – AWS


As the adoption of VMware Cloud on AWS increases around the world, customers’ network requirements have also grown. At Amazon Web Services (AWS), we want to support those requirements as customer networks scale.

VMware Cloud on AWS is a jointly engineered solution by VMware and AWS that brings VMware’s Software-Defined Data Center (SDDC) technologies such as vSphere, NSX, vSAN, and more to the AWS global infrastructure.

In this post, I will discuss how customers can scale connectivity globally to support their business requirements using AWS Direct Connect, VMware Transit Connect, AWS Direct Connect Gateway, and the NSX Multi-Edge feature.

Networking is the fundamental pillar required for connectivity to VMware Cloud on AWS and beyond. It’s imperative to ensure the network architecture implemented with VMware Cloud on AWS has the ability to scale in response to customer requirements.

Why Scalability is Important

Gartner defined scalability as “the measure of a system’s ability to increase or decrease in performance and cost in response to changes in application and system processing demands.”

From a networking perspective, customers using VMware Cloud on AWS want scalable network functionality for traffic within VMware Cloud on AWS, between VMware Cloud on AWS and other AWS Cloud resources, and between VMware Cloud on AWS and on-premises environments, all on a global scale.

Optimizing connectivity costs and being able to scale the network are critical for customers to meet their connectivity requirements and continue adopting VMware Cloud on AWS.

Common Hybrid Cloud Network Architectures

We see customers starting by implementing virtual private networks (VPNs) for connectivity to VMware Cloud on AWS from on premises, and for connectivity within the cloud.

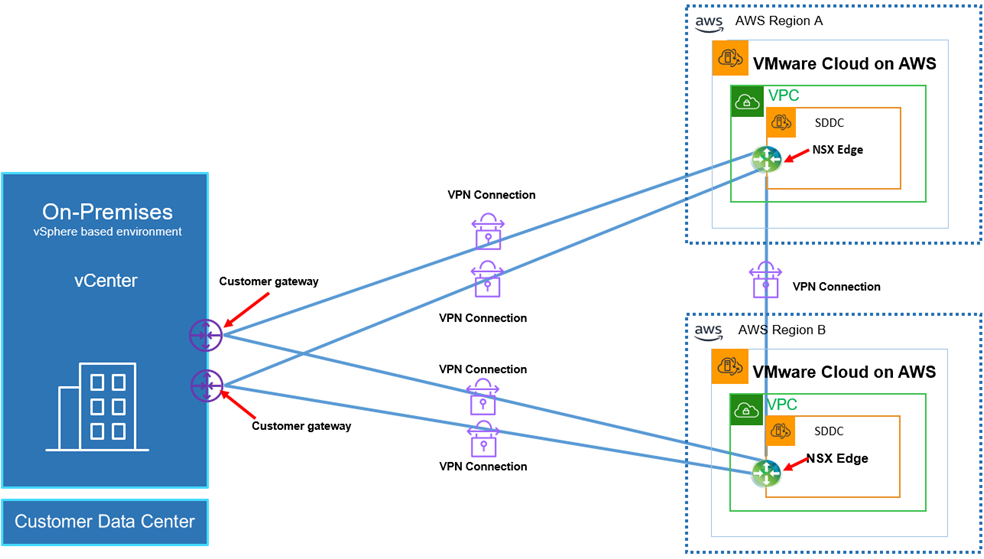

Figure 1 – On-premises to a single SDDC connectivity with VPN.

The configuration from Figure 1 shows how customers implement VPN connectivity to VMware Cloud on AWS in a single SDDC, while Figure 2 shows how to do so in multiple SDDCs.

Figure 2 – On-premises to multiple SDDCs in multiple regions via VPN.

Though this redundant VPN architecture works for connectivity to VMware Cloud on AWS, performance can be unpredictable because the traffic travels over the public internet, which can be saturated at different times. Also, the throughput and packet sizes that can be sent over a VPN tunnel are limited to what the VPN hardware or software can support.

Some customers need to route network traffic through a single transit point within an Amazon VPC, via a network appliance, to different network targets in the cloud and on premises. Implementing this transitive routing topology with VPNs can introduce complexity and management overhead in their network architectures.

In the architecture in Figure 1, customers might use a hardware or software VPN appliance that supports high throughput, with the tunnel terminating on a single NSX Edge appliance in VMware Cloud on AWS. That can impact the network performance of the NSX Edge when traffic with large packet sizes is sent over the tunnel to VMware Cloud on AWS.

Similarly, the architecture in Figure 2 shows VPN connectivity to VMware Cloud on AWS in two different regions. These geographic regions could represent different countries as well. In this scenario, customers will have to invest to implement network infrastructure across their global regions to support this VPN connectivity model.

Additionally, the architecture in Figure 2 increases operational overhead because customers have to implement, manage, and maintain VPN tunnels and hardware or software across different geographic locations, which is neither cost-effective nor scalable.

Inter-region SDDC VPN tunnels also add to the operational overhead of managing several VPN tunnels.

Also with VPN tunnels, customers are not able to implement live hybrid vMotion from on-premises vSphere environments to VMware Cloud on AWS.

Common Cloud Network Architectures

Let’s discuss what architectures customers are implementing in the cloud with respect to VMware Cloud on AWS. When customers deploy services on VMware Cloud on AWS, they create logical networks for their applications and configure routing between these logical networks.

Additionally, customers get the ability to connect to native AWS services from an Amazon VPC that they connect to their SDDC at deployment.

Figure 3 – Connected VPC to a single SDDC.

The diagram above shows the default connectivity between a single SDDC and an Amazon VPC at deployment. If customers create logical networks for their applications on the SDDC and concurrently use bandwidth-intensive native AWS services from the connected VPC, all of that traffic passes through a single default-size NSX Edge appliance, which can affect its performance.

We also find that customers build multiple VPCs and would like to scale connectivity to a single or multiple SDDCs. A better approach is therefore required to support this requirement, and we see some customers rely on the Transit VPC architecture to address the use case of transitive routing to multiple targets with third-party appliances.

From a cost perspective, running third-party firewall/VPN appliances on Amazon Elastic Compute Cloud (Amazon EC2) instances can be expensive depending on the size of the instance and throughput required. It’s also challenging from a management perspective, as customers need to manage VPN tunnels and the appliance they choose to use within the Transit VPC.

Scalable Architectures for Hybrid Connectivity

Let’s discuss how customers can implement scalable network architectures for VMware Cloud on AWS. Customers can scale connectivity using AWS Direct Connect, Transit Virtual Interface, Direct Connect Gateway, and VMware Transit Connect (Managed AWS Transit Gateway).

For a detailed explanation of how AWS Direct Connect integrates with VMware Cloud on AWS, see this blog post.

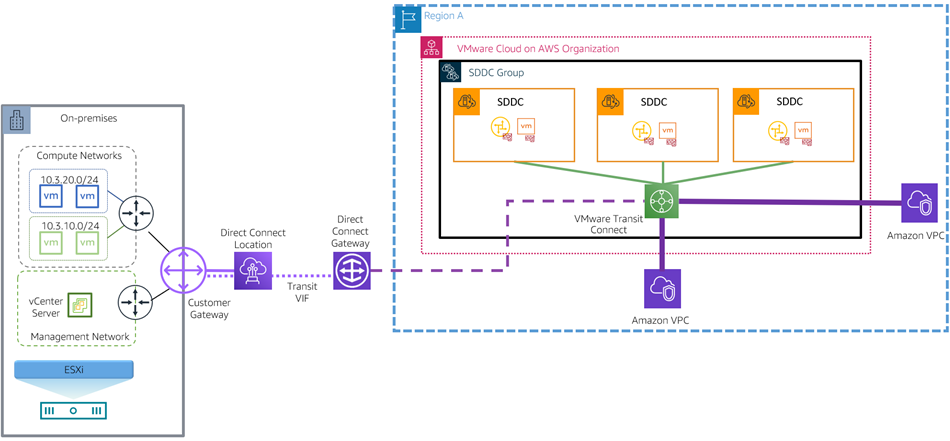

Figure 4 – AWS Direct Connect connectivity to SDDC group within a region.

In the architecture above, customers can use a single AWS Direct Connect connection with a Transit Virtual Interface that terminates on a Direct Connect Gateway to establish connectivity at scale to multiple SDDCs within a region.

SDDC version M12 and above introduced the concept of SDDC grouping, where customers can group multiple SDDCs into a logical group. The SDDCs within the group are then attached to a VMware Transit Connect that is automatically created during the SDDC group creation process. This allows network traffic to be sent between SDDCs and on-premises in a hub-and-spoke model in a scalable way. Connectivity can also be established to VPCs.

The fundamental principle for traffic flow through VMware Transit Connect is that every network flow must be either towards or from an SDDC within an SDDC group. That means customers cannot directly send traffic from a source outside the SDDC group towards a native Amazon VPC through the VMware Transit Connect. This principle applies to all the architectures involving VMware Transit Connect.
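The traffic flow principle above can be expressed as a simple check. The following is a minimal sketch, not a VMware or AWS API: the `Endpoint` type, the `kind` labels, and the endpoint names are all hypothetical and exist only to illustrate the rule that one end of every flow must be an SDDC in the group.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Endpoint:
    """Hypothetical model of something reachable through Transit Connect."""
    name: str
    kind: str                    # "sddc", "vpc", or "on_prem" (illustrative labels)
    in_sddc_group: bool = False  # only meaningful for kind == "sddc"


def flow_allowed(src: Endpoint, dst: Endpoint) -> bool:
    """Return True when at least one end of the flow is an SDDC in the group."""
    return any(e.kind == "sddc" and e.in_sddc_group for e in (src, dst))


# Example endpoints (names are made up for this sketch).
sddc_a = Endpoint("sddc-a", "sddc", in_sddc_group=True)
vpc_1 = Endpoint("vpc-1", "vpc")
on_prem = Endpoint("dc-1", "on_prem")

print(flow_allowed(on_prem, sddc_a))  # on-premises to SDDC: True
print(flow_allowed(sddc_a, vpc_1))    # SDDC to VPC: True
print(flow_allowed(on_prem, vpc_1))   # on-premises to VPC, no SDDC involved: False
```

The check is symmetric by design, since the principle covers flows towards an SDDC and back.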

Leveraging an existing Direct Connect connection, a Transit Virtual Interface, and a Direct Connect Gateway with a VMware Transit Connect, customers can extend connectivity globally from on-premises to VMware Cloud on AWS.

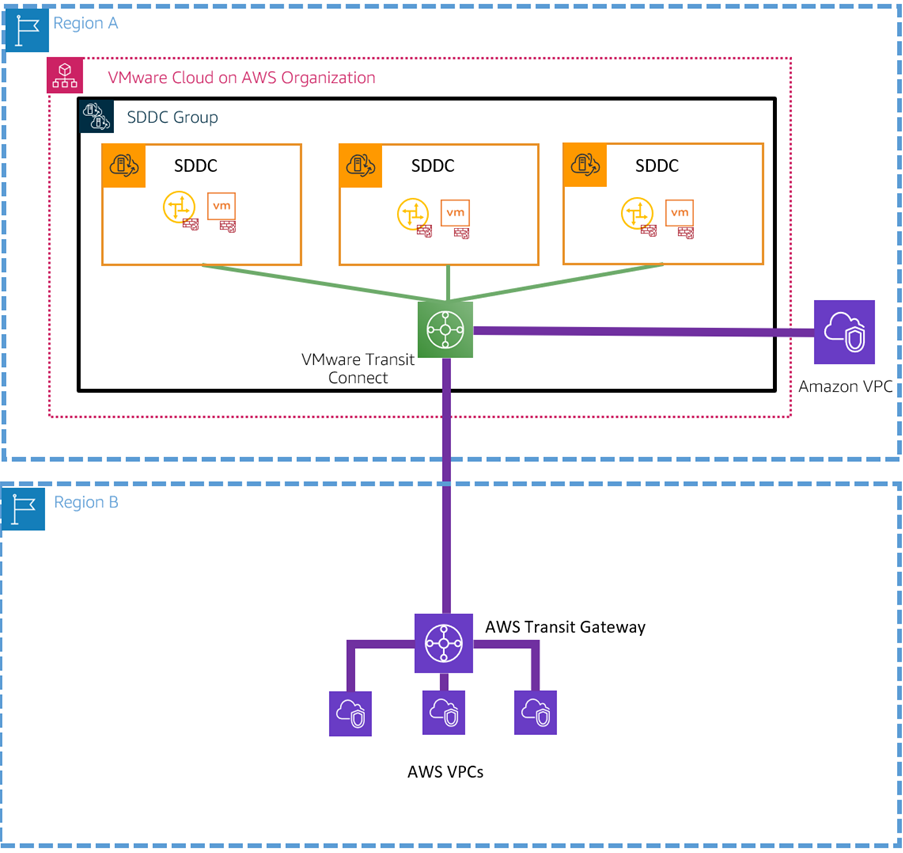

Figure 5 – Inter-region Transit Connect peering.

The architecture above allows customers to use an existing Direct Connect connection with a Transit Virtual Interface, Direct Connect Gateway, and a Transit Connect to scale connectivity from on-premises to multiple SDDCs across different geographic regions or countries.

The VMware Transit Connect inter-region peering feature is designed to scale connectivity globally by peering two Transit Connects, which allows connectivity between SDDCs across two different geographic regions or even countries.

It works by extending the boundaries of an existing SDDC group within a region into a different geographic region by deploying another Transit Connect in the target region and peering it to the existing Transit Connect in the source SDDC group, as shown in Figure 5.

The advantage of this architecture is that customers have high-throughput options using AWS Direct Connect to the AWS Region. Today, Direct Connect offers customers speeds starting at 50Mbps and scaling up to 100Gbps.

Customers can scale up the default NSX Edge appliance to a large size to accommodate the throughput, and use the i3en.metal instance type, which has a high-throughput Elastic Network Adapter that provides speeds up to 50Gbps.

Additionally, this recommended architecture eliminates the overhead of having to manage several VPN tunnels to different SDDCs and VPCs in the region.

Using a single Direct Connect connection, or at least two for redundancy, customers can scale connectivity globally to vSphere workloads in the AWS Cloud.

Scalable Architectures for Connectivity Within the Cloud

When customers run on VMware Cloud on AWS, they also require the capabilities for scalable connectivity to other native AWS services in other VPCs they use.

Today, SDDC version M16 and above allows customers to peer native AWS Transit Gateways with VMware Transit Connect, providing scalable connectivity from multiple SDDCs to multiple VPCs within the cloud.

Figure 6 – VMware Transit Connect peering with AWS Transit Gateway.

In the architecture above, customers running a native AWS Transit Gateway with attached VPCs in one AWS Region can peer that Transit Gateway with VMware Transit Connect to allow connectivity between AWS services in that region and an SDDC group in another region.

Alternatively, customers who are already using inter-region peering between SDDCs via VMware Transit Connect can still peer an AWS Transit Gateway with any of the peered VMware Transit Connects.

Figure 7 – Peering AWS Transit Gateway to peered VMware Transit Connect.

In the architecture above, the SDDCs in Region A can communicate with all SDDCs in Region B. However, only the SDDCs in Region B can communicate with the VPCs in Region C.

Conversely, the VPC in Region A can communicate only with the SDDCs in Region A following the same connectivity principle for VMware Transit Connect.
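The reachability described for Figure 7 can be captured in a small model. This is a rough sketch under stated assumptions, not a description of how VMware Transit Connect is implemented: the hub names (`tc-a`, `tc-b`, `tgw-c`), the node names, and the rule that peering is not transitive for VPC traffic are assumptions chosen so the model reproduces the statements above.

```python
from typing import NamedTuple


class Node(NamedTuple):
    name: str
    kind: str  # "sddc" or "vpc"
    hub: str   # hub the node attaches to (hypothetical identifiers)


# Hypothetical topology mirroring Figure 7: two peered Transit Connects,
# and a customer AWS Transit Gateway peered only with the Region B one.
PEERINGS = {frozenset({"tc-a", "tc-b"}), frozenset({"tc-b", "tgw-c"})}
CUSTOMER_TGWS = {"tgw-c"}


def peered(hub_1: str, hub_2: str) -> bool:
    return frozenset({hub_1, hub_2}) in PEERINGS


def can_communicate(x: Node, y: Node) -> bool:
    """Assumed rule set: SDDCs talk across directly peered Transit Connects;
    a VPC reaches only SDDCs on its own Transit Connect, or on the Transit
    Connect directly peered with the VPC's Transit Gateway."""
    if x.kind == "sddc" and y.kind == "sddc":
        return x.hub == y.hub or peered(x.hub, y.hub)
    if x.kind == "vpc" and y.kind == "vpc":
        return False  # no SDDC involved, so not carried by Transit Connect
    sddc, vpc = (x, y) if x.kind == "sddc" else (y, x)
    if vpc.hub == sddc.hub:
        return True
    return vpc.hub in CUSTOMER_TGWS and peered(vpc.hub, sddc.hub)


sddc_a = Node("sddc-a", "sddc", "tc-a")
sddc_b = Node("sddc-b", "sddc", "tc-b")
vpc_a = Node("vpc-a", "vpc", "tc-a")
vpc_c = Node("vpc-c", "vpc", "tgw-c")

print(can_communicate(sddc_a, sddc_b))  # Region A SDDC to Region B SDDC
print(can_communicate(sddc_b, vpc_c))   # Region B SDDC to Region C VPC
print(can_communicate(sddc_a, vpc_c))   # Region A SDDC to Region C VPC
print(can_communicate(vpc_a, sddc_b))   # Region A VPC to Region B SDDC
```

Under these assumptions the first two checks succeed and the last two fail, which matches the behavior described for Figure 7.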

Besides scaling connectivity across different regions for VMware Cloud on AWS and native AWS services, there are use cases where customers implement SDDC groups that need connectivity to other customer VPCs attached to customer managed Transit Gateways.

Until recently, customers using different AWS Transit Gateways in the AWS Region had to scale connectivity to SDDCs using the Transit VPC architecture that allows traffic to be forwarded between two Transit Gateways within the same region.

To scale connectivity for that use case, we announced the availability of the intra-region peering feature for VMware Transit Connect during AWS re:Invent 2021. This feature allows customers to peer VMware Transit Connect with AWS Transit Gateways in the same region.

Figure 8 – Intra-region peering for VMware Transit Connect and AWS Transit Gateway.

Customers can leverage the architecture above to implement scalable connectivity between SDDCs and VPCs with Transit Gateways in the same region.

Intra-region peering is also supported when customers need connectivity to on-premises. The architecture below shows how intra-region peering integrates with AWS Direct Connect, a Direct Connect Gateway, and a Transit Virtual Interface to scale hybrid connectivity and connectivity to the cloud.

Figure 9 – AWS Direct Connect connectivity to different SDDCs and VPC resources in a region.

VMware Transit Connect allows customers to experience secured private connectivity through the AWS network backbone to other services in the cloud.

VMware Transit Connect provides up to 50Gbps burst throughput, so depending on the bare metal instance type used for VMware Cloud on AWS, customers can scale connectivity and achieve high network performance within the cloud and from on-premises.
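Because end-to-end throughput is bounded by the slowest component on the path, it can help to list each component's ceiling and find the minimum. The sketch below uses the Direct Connect, Transit Connect, and i3en.metal figures cited in this post. The NSX Edge value is a made-up placeholder rather than a published limit, and the function and key names are illustrative only.

```python
from typing import Dict, Tuple


def bottleneck_gbps(path: Dict[str, float]) -> Tuple[str, float]:
    """Return the name and ceiling (in Gbps) of the slowest component on a path."""
    name = min(path, key=path.get)
    return name, path[name]


path = {
    "direct_connect": 100.0,        # top Direct Connect speed cited in this post
    "transit_connect_burst": 50.0,  # burst throughput cited in this post
    "host_network_adapter": 50.0,   # i3en.metal figure cited in this post
    "nsx_edge": 10.0,               # HYPOTHETICAL placeholder, not a published limit
}

component, ceiling = bottleneck_gbps(path)
print(f"Bottleneck: {component} at {ceiling} Gbps")
```

With these illustrative numbers the edge appliance is the limiting component, which is the situation the multi-edge feature is meant to address.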

Additional Network Scalability Enhancements

Each SDDC attached to a VMware Transit Connect relies on its own NSX Edge appliances, and a single default NSX Edge appliance cannot sustain the 50Gbps burst throughput.

VMware provides the multi-edge functionality to complement VMware Transit Connect and provide enhanced throughput for network traffic. This feature scales the NSX Edge linearly by adding more appliances to maximize and optimize network connectivity performance.

Multi-edge scale out uses the concept of traffic groups. A traffic group is a logical container of network prefix lists for the respective application networks in the cloud.

These traffic groups are attached to the secondary scaled out edge appliances to take pressure off the default NSX Edge appliance to improve network performance.

Figure 10 – Multi-edge scale out within an SDDC in the cloud.

Each traffic group creates an additional active edge and standby edge, with each on a separate host. When using this feature, ensure you have adequate compute capacity in your management cluster.

Edge scale out supports connectivity and bandwidth enhancement between SDDCs in the cloud and to Amazon VPCs within the cloud. It also supports connectivity to on-premises using AWS Direct Connect with a Transit Virtual Interface and Transit Connect.

It’s worth noting that this edge scale out feature does not support traffic over AWS Direct Connect with a Private Virtual Interface, or over VPN tunnels that terminate on the NSX Edge appliance.
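To make the traffic group idea concrete, here is a minimal sketch of how prefixes might map to edge pairs. It assumes traffic is matched on the workload's source address. The group names, prefixes, and edge naming are hypothetical, and the actual configuration is done through the VMware Cloud on AWS console or API rather than code like this.

```python
import ipaddress

# Hypothetical traffic groups: each is a container of prefixes for application networks.
TRAFFIC_GROUPS = {
    "tg-app": ["10.10.0.0/16", "10.20.0.0/16"],
    "tg-db": ["10.30.0.0/24"],
}
DEFAULT_EDGE = "default-edge"


def edge_for(source_ip: str) -> str:
    """Return the edge pair assumed to handle traffic from this source address."""
    addr = ipaddress.ip_address(source_ip)
    for group, prefixes in TRAFFIC_GROUPS.items():
        if any(addr in ipaddress.ip_network(p) for p in prefixes):
            return f"{group}-edge"
    return DEFAULT_EDGE  # anything not in a traffic group stays on the default edge


# Each traffic group adds one active and one standby edge, each on its own host.
extra_edge_vms = 2 * len(TRAFFIC_GROUPS)

print(edge_for("10.20.5.9"))    # matched by tg-app
print(edge_for("10.30.0.12"))   # matched by tg-db
print(edge_for("192.168.1.1"))  # no match, default edge
print(extra_edge_vms)
```

The count of extra edge appliances is a quick way to estimate the additional management cluster capacity the feature needs.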

Conclusion

In this post, I have discussed how to implement scalable global network connectivity using AWS Direct Connect with a Transit Virtual Interface, Direct Connect Gateway, and a VMware Transit Connect.

The NSX Multi-Edge scale out feature can help provide a performant network architecture on VMware Cloud on AWS.