AWS Partner Network (APN) Blog

AWS Direct Connect Integration with VMware Cloud on AWS

By Schneider Larbi, Sr. Partner Solutions Architect – AWS


VMware Cloud on AWS allows customers to run VMware vSphere workloads on the AWS global infrastructure. This means you can run vSphere workloads across all of the AWS Regions where VMware Cloud on AWS is available.

Customers can choose to implement hybrid cloud architectures where some of their workloads are on-premises and some on VMware Cloud on AWS.

With hybrid implementations, we see a design pattern where customers configure connectivity to allow communication between on-premises and VMware Cloud on AWS networks.

Customers use various methods, such as virtual private networks (VPNs) and/or AWS Direct Connect, to implement hybrid cloud connectivity.

In this post, I will discuss how customers can leverage AWS Direct Connect to establish hybrid connectivity between on-premises VMware infrastructure and VMware Cloud on AWS, as well as best practices on how to achieve resiliency with AWS Direct Connect and why we recommend it.

To understand how VMware Cloud on AWS integrates with AWS Direct Connect, we need to understand the underlying network architecture of VMware Cloud on AWS.

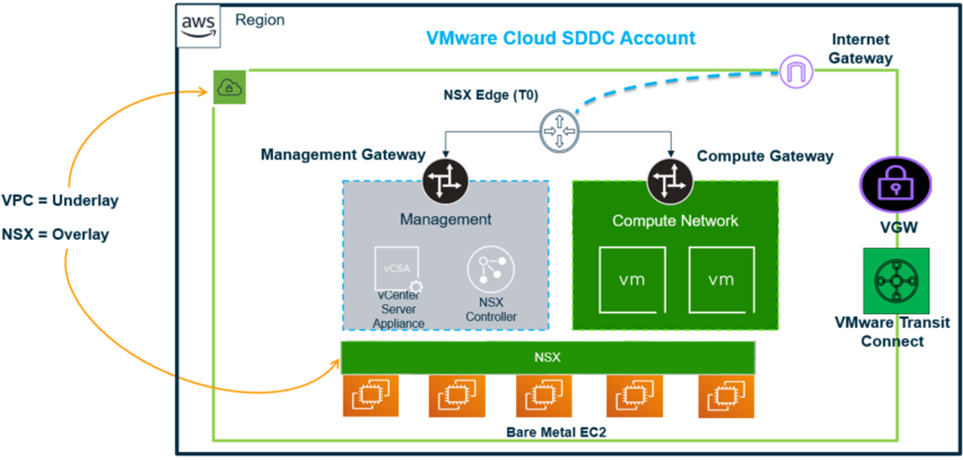

The following figure provides a high-level view of the network architecture of a VMware Cloud on AWS software-defined data center (SDDC).

Figure 1 – VMware Cloud on AWS underlying network.

Every SDDC is deployed into an Amazon Virtual Private Cloud (VPC) in an AWS Region. This gives the SDDC a dedicated network for running vSphere workloads, and the VPC becomes the underlying software-defined network construct that interfaces with the physical uplinks of the bare metal hosts.

NSX-T, VMware's software-defined networking platform, is then deployed as the overlay network in the SDDC to provide connectivity for management and compute workloads.

Figure 1 shows two other constructs: the Virtual Private Gateway (VGW) and VMware Transit Connect (vTGW). These components allow AWS Direct Connect to integrate with the VMware Cloud on AWS SDDC through the VPC underlay network.

Why is AWS Direct Connect Important?

Customers who use AWS Direct Connect with VMware Cloud on AWS enjoy a number of benefits, including consistent network performance between VMware Cloud on AWS and on-premises.

Additionally, customers may reduce network bandwidth costs between VMware Cloud on AWS and on-premises when data is sent across AWS Direct Connect.

Hybrid connectivity with AWS Direct Connect can also ensure data protection in transit by taking advantage of the AWS Direct Connect encryption options. 10 Gbps and 100 Gbps connections offer native IEEE 802.1AE (MACsec) point-to-point encryption at select locations.

AWS Direct Connect also offers customers flexible connectivity options based on the connection speeds required by customers.

Finally, AWS Direct Connect unlocks critical vSphere features between SDDCs and VMware workloads on-premises, such as the ability to live migrate virtual machines (hybrid vMotion) and ability to implement connectivity at scale using VMware Transit Connect.

For these reasons, I highly recommend AWS Direct Connect for customers who use VMware Cloud on AWS to run their vSphere workloads in the cloud.

How Does it Work?

AWS Direct Connect allows customers to establish a private, dedicated network connection from on-premises to AWS. It’s essentially a private network circuit from a customer data center to the AWS Availability Zones (AZs) within an associated AWS Region.

AWS Direct Connect routers interconnect with partner or customer routers at colocation facilities called AWS Direct Connect locations, or Direct Connect points of presence (POPs).

In most cases, a cross connect is established between a customer router at the AWS Direct Connect POP and the AWS Direct Connect router that connects to a specific AWS Region.

Figure 2 – AWS Direct Connect implementation with VMware Cloud on AWS.

Note that customers can also connect directly from their on-premises environment to the AWS Direct Connect router at the POP, as depicted below.

Figure 3 – AWS Direct Connect deployment with on-premises router.

Both connectivity models above are supported on VMware Cloud on AWS.

Virtual Interface Integrations

After the physical network connections for AWS Direct Connect are configured, customers create logical networks called virtual interfaces (VIFs) to transport network traffic between on-premises and VMware Cloud on AWS.

Today, AWS Direct Connect supports three types of VIFs: Private, Public, and Transit VIFs.

Let’s look at how these virtual interfaces work with VMware Cloud on AWS.

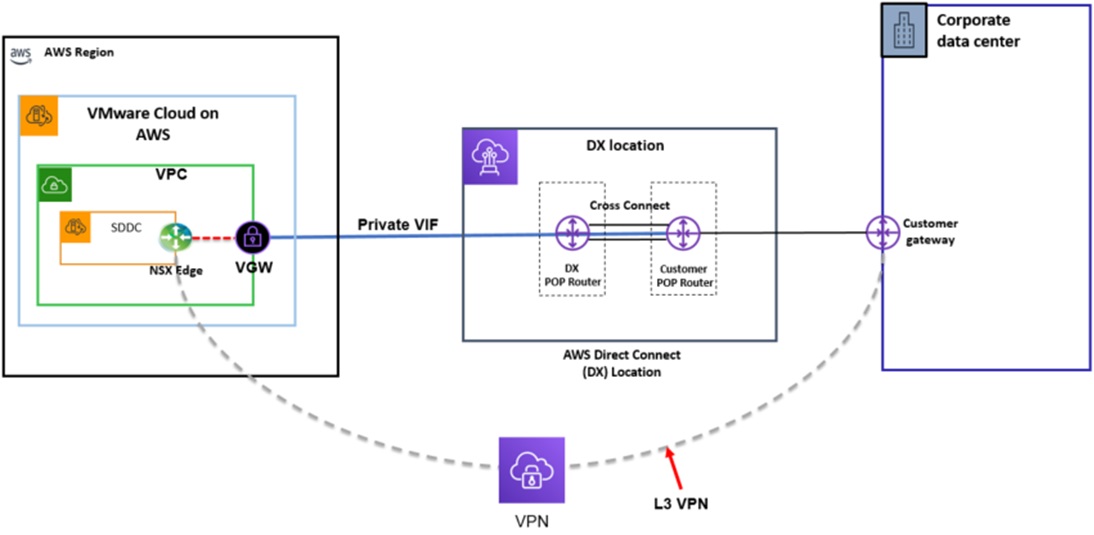

Private Virtual Interface (Private VIF)

Private virtual interfaces allow customers to have dedicated network connectivity to a VPC in the AWS Region. The connection terminates on the customer's on-premises network device on one side and on the Virtual Private Gateway on the AWS side.

A VGW is a logical, fully redundant distributed edge routing function that sits at the edge of a VPC. It has the capability to terminate Direct Connect Private VIFs and VPNs, as well.

This allows AWS Direct Connect Private VIFs to be terminated on the VGW of the VPC that hosts the SDDC.

It also allows private IP addresses to be routed over the private virtual interface to the VGW. These routes are, in turn, propagated into the NSX Edge appliance that allows the on-premises routes to be advertised to the SDDC and vice versa.

Private VIFs support Border Gateway Protocol (BGP) for routing between on-premises and the SDDC. Use this interface type with VMware Cloud on AWS SDDCs when you want to route network traffic privately, using your private IP space from on-premises, without traversing the public internet.

Additionally, you can use Private VIFs for the vMotion feature to migrate workloads between on-premises and VMware Cloud on AWS. This virtual interface type must be used; otherwise, customers cannot use the vMotion feature between on-premises and the SDDC.

Figure 4 – Private VIF integration with VMware Cloud on AWS.

It’s worth noting that AWS public endpoint services are not accessible over a Private VIF. If a customer has a requirement to route to public endpoint services, a different interface type must be configured with VMware Cloud on AWS SDDC for that.

Private VIFs are created from the AWS Management Console and the SDDC console. Today, you can advertise 16 networks from the SDDC through BGP over the Private VIF to on-premises. This number is a soft limit and can be increased upon request.

Conversely, customers can advertise 100 routes from on-premises to the SDDC over the Private VIF using BGP. Always refer to the VMware Configuration Maximums page for the latest configuration limits.

Customers can use techniques such as route filtering to determine what routes they want to advertise between on-premises and the SDDC.
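Besides filtering, summarizing contiguous on-premises subnets before advertising them is one way to stay within the 100-route limit quoted above. The following is a minimal sketch using Python's standard `ipaddress.collapse_addresses`; the subnet list is hypothetical, and the limit constant only mirrors the number in this post, so check the current maximum before relying on it.

```python
import ipaddress

# Mirrors the on-premises-to-SDDC limit quoted in this post; verify against
# the current VMware Configuration Maximums before relying on it.
ONPREM_TO_SDDC_ROUTE_LIMIT = 100


def summarize(prefixes):
    """Collapse adjacent or overlapping IPv4 prefixes into the fewest supernets."""
    networks = [ipaddress.ip_network(p) for p in prefixes]
    return [str(n) for n in ipaddress.collapse_addresses(networks)]


def fits_limit(prefixes, limit=ONPREM_TO_SDDC_ROUTE_LIMIT):
    """True if the summarized advertisement stays within the route limit."""
    return len(summarize(prefixes)) <= limit


if __name__ == "__main__":
    # Hypothetical on-premises subnets: 256 contiguous /24 networks.
    subnets = [f"10.0.{i}.0/24" for i in range(256)]
    print(summarize(subnets))  # ['10.0.0.0/16']
    print(fits_limit(subnets))  # True
```

Summarization only helps when the on-premises address plan is contiguous; scattered prefixes still count individually.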

When configuring a Private VIF, a BGP Autonomous System Number (ASN) is required to identify networks for external routing policy. Customers can pick any private ASN in the range 64512 to 65534 (65535 is reserved and is not a private-use ASN).
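A quick pre-check of the chosen ASN can catch typos before the VIF is created. The sketch below assumes only the 16-bit private-use range from RFC 6996 and the general eBGP rule that the two peers use different ASNs; the peer ASN is supplied by the caller, and no default value for the SDDC side is assumed.

```python
PRIVATE_ASN_MIN = 64512
PRIVATE_ASN_MAX = 65534  # 65535 is reserved, not private-use


def is_private_16bit_asn(asn: int) -> bool:
    """True if asn falls in the 16-bit private-use range (RFC 6996)."""
    return PRIVATE_ASN_MIN <= asn <= PRIVATE_ASN_MAX


def validate_onprem_asn(asn: int, peer_asn: int) -> None:
    """Raise ValueError if asn is unsuitable for the on-premises BGP peer.

    peer_asn is the ASN on the AWS/SDDC side of the Private VIF; the caller
    provides it (this sketch does not assume a default).
    """
    if not is_private_16bit_asn(asn):
        raise ValueError(
            f"{asn} is outside the private range {PRIVATE_ASN_MIN}-{PRIVATE_ASN_MAX}"
        )
    if asn == peer_asn:
        raise ValueError("eBGP peers need different ASNs")


if __name__ == "__main__":
    validate_onprem_asn(65000, peer_asn=64512)  # hypothetical values, passes
    print(is_private_16bit_asn(65535))  # False
```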

Currently, you can have a maximum of four Private VIF attachments per SDDC. Consider this configuration maximum when designing resiliency for your Private VIFs.

Public Virtual Interface (Public VIF)

This interface type terminates at the AWS Region and allows access to all AWS public services using public IP addresses via BGP. When configured, all AWS prefixes are advertised to your on-premises device. AWS publishes the current list of AWS IP address ranges.
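AWS publishes its IP address ranges as a JSON file (https://ip-ranges.amazonaws.com/ip-ranges.json) containing a `prefixes` list whose entries carry `ip_prefix`, `region`, and `service` fields. If you want to narrow what you accept on-premises over the Public VIF, a minimal filtering sketch might look like the following. The embedded sample only mimics the file's shape and uses documentation address ranges; it is not real AWS data.

```python
import json

# Hypothetical sample in the ip-ranges.json shape (RFC 5737 documentation
# prefixes, not real AWS ranges). Load the published file in practice.
SAMPLE = json.loads("""
{
  "syncToken": "0",
  "createDate": "2024-01-01-00-00-00",
  "prefixes": [
    {"ip_prefix": "192.0.2.0/24", "region": "us-west-2",
     "service": "S3", "network_border_group": "us-west-2"},
    {"ip_prefix": "198.51.100.0/24", "region": "us-west-2",
     "service": "EC2", "network_border_group": "us-west-2"},
    {"ip_prefix": "203.0.113.0/24", "region": "eu-west-1",
     "service": "S3", "network_border_group": "eu-west-1"}
  ],
  "ipv6_prefixes": []
}
""")


def prefixes_for(ranges, region, service):
    """IPv4 prefixes published for one service in one Region, sorted."""
    return sorted(
        p["ip_prefix"]
        for p in ranges["prefixes"]
        if p["region"] == region and p["service"] == service
    )


if __name__ == "__main__":
    print(prefixes_for(SAMPLE, "us-west-2", "S3"))  # ['192.0.2.0/24']
```

The resulting list could feed an on-premises prefix filter. How you apply it depends on your router platform.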

To use a Public VIF with VMware Cloud on AWS, there’s an additional requirement since Public VIFs do not terminate inside the VPC hosting the SDDC.

An IPsec VPN tunnel must be configured to the NSX Edge over the Public VIF. You also need to ensure your on-premises VPN gateway is routable over the Public VIF.

Figure 5 – Public VIF integration with VMware Cloud on AWS.

This type of virtual interface is used when customers have requirements to directly route traffic from their vSphere workloads to public endpoint services in AWS such as Amazon Simple Storage Service (Amazon S3), Amazon DynamoDB, and Amazon Elastic Compute Cloud (Amazon EC2), among others.

Note that Public VIFs do not support the vMotion feature for live migrating virtual machines bi-directionally between the SDDCs and on-premises. To work around this, customers can configure the VMware HCX (Hybrid Cloud Extension) service and use it to vMotion their workloads.

The reason for this is that Public VIFs do not transport ESXi management and vMotion traffic.

Transit Virtual Interface (Transit VIF)

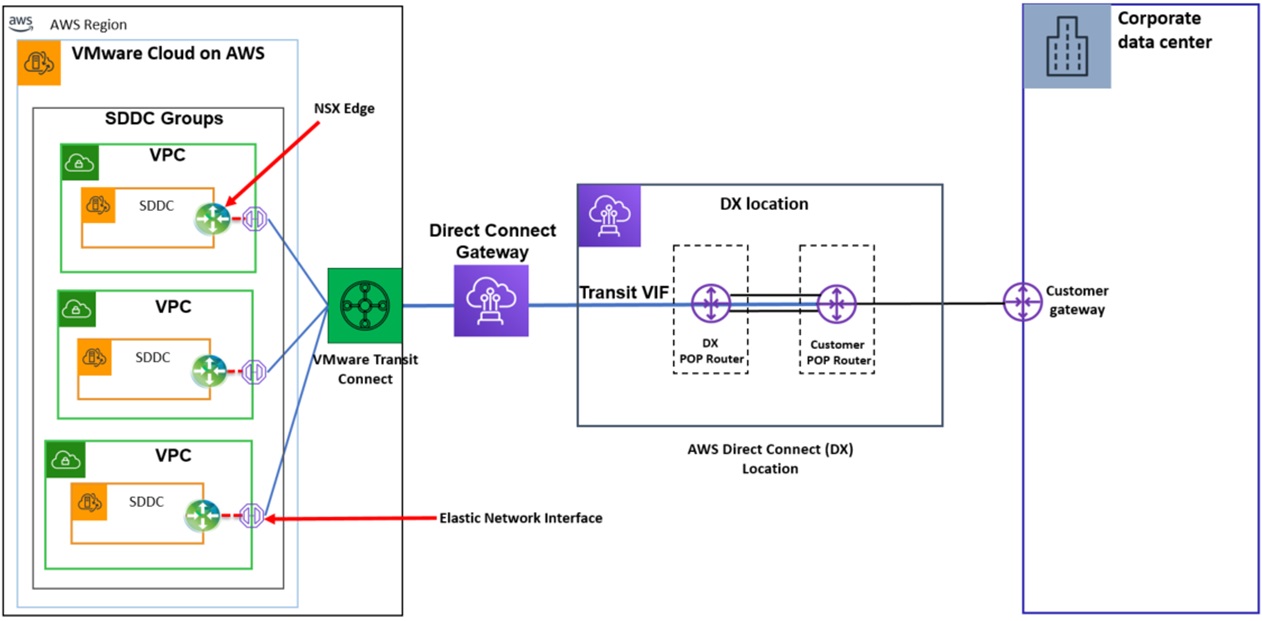

This interface type is associated with an AWS Direct Connect Gateway that’s attached to a VMware Transit Connect.

VMware Transit Connect is a VMware-managed AWS Transit Gateway that acts as a distributed router, routing traffic between on-premises networks and SDDC groups, and among SDDCs and Amazon VPCs in the cloud.

This virtual interface type allows customers to advertise their on-premises networks, as well as ESXi management and vMotion networks to the SDDC from on-premises.

Figure 6 – Transit VIF integration with VMware Cloud on AWS.

This virtual interface type is also used when customers want to implement hybrid connectivity at scale to connect multiple SDDCs to on-premises.

One thing to note with Transit VIF is that only one can be configured per AWS Direct Connect connection. This should be factored in during your design for hybrid network connectivity.

Deployment Considerations

When deploying AWS Direct Connect connections for use with VMware Cloud on AWS, I recommend consulting the published AWS Direct Connect quotas when deciding how many VIFs to configure per connection for your use case.

It’s worth noting that customers also have the option to use dedicated or hosted AWS Direct Connect connections with VMware Cloud on AWS.

A dedicated AWS Direct Connect connection is a physical connection associated with a single customer. You can then provision multiple VIFs on the same connection for use with VMware Cloud on AWS or to support other AWS services. The VIFs created on a dedicated connection share the bandwidth of the physical connection.

A hosted AWS Direct Connect connection is provisioned by an AWS Direct Connect Partner on behalf of the customer. Dedicated bandwidth is allocated to the hosted connection, rather than having multiple VIFs on the same parent connection compete for bandwidth.

It’s also worth noting that customers who use this connection type can have only a single VIF per hosted connection, whether private, public, or transit. This means that if you have multiple SDDCs and want to use AWS Direct Connect, you’ll need to order another hosted connection for each additional SDDC.
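The counting rules above (one VIF per hosted connection, at most one Transit VIF per connection) can be expressed as a small planning check. This is a hypothetical sketch, not an AWS tool: the general per-connection VIF limit is a parameter you fill in from the published quotas, and the value used in the example is a placeholder.

```python
from collections import Counter

# From this post: a hosted connection carries exactly one VIF, and a
# connection supports at most one Transit VIF. Other per-connection limits
# should come from the published AWS Direct Connect quotas.
MAX_TRANSIT_VIFS_PER_CONNECTION = 1


def hosted_connections_needed(vif_types: list[str]) -> int:
    """One hosted connection per VIF, whatever its type."""
    return len(vif_types)


def dedicated_plan_ok(vif_types: list[str], max_vifs_per_connection: int) -> bool:
    """Check the VIFs planned for one dedicated connection.

    max_vifs_per_connection is supplied by the caller from current quotas.
    """
    transit = Counter(vif_types)["transit"]
    return (transit <= MAX_TRANSIT_VIFS_PER_CONNECTION
            and len(vif_types) <= max_vifs_per_connection)


if __name__ == "__main__":
    # Hypothetical plan: three SDDCs, each reached through its own Private VIF.
    plan = ["private", "private", "private"]
    print(hosted_connections_needed(plan))  # 3
    # 10 is a placeholder, not an actual AWS quota value.
    print(dedicated_plan_ok(plan, max_vifs_per_connection=10))  # True
```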

AWS Direct Connect Resiliency

Just having AWS Direct Connect connectivity between VMware Cloud on AWS and on-premises does not provide automatic resiliency for hybrid connectivity. Resiliency must be built across the physical AWS Direct Connect layer as well as the virtual interface layer.

AWS provides general recommendations around AWS Direct Connect topologies to consider when planning resiliency.

Let’s now talk about building resiliency into the physical layer for AWS Direct Connect with VMware Cloud on AWS.

The physical AWS Direct Connect components extend from customers’ on-premises devices to devices at the Direct Connect locations. This means you must start on-premises, building termination endpoints for AWS Direct Connect across different locations for redundancy.

AWS Direct Connect provides hybrid connectivity to SDDCs through physical connections to endpoints in a Direct Connect location from customer networks. If you don’t have any presence in the Direct Connect location, you can work with a partner to provide the last mile connectivity to on-premises networks.

On the customer’s on-premises network, AWS Direct Connect connections terminate on a customer gateway. This could be a router or a firewall appliance capable of IP routing and forwarding.

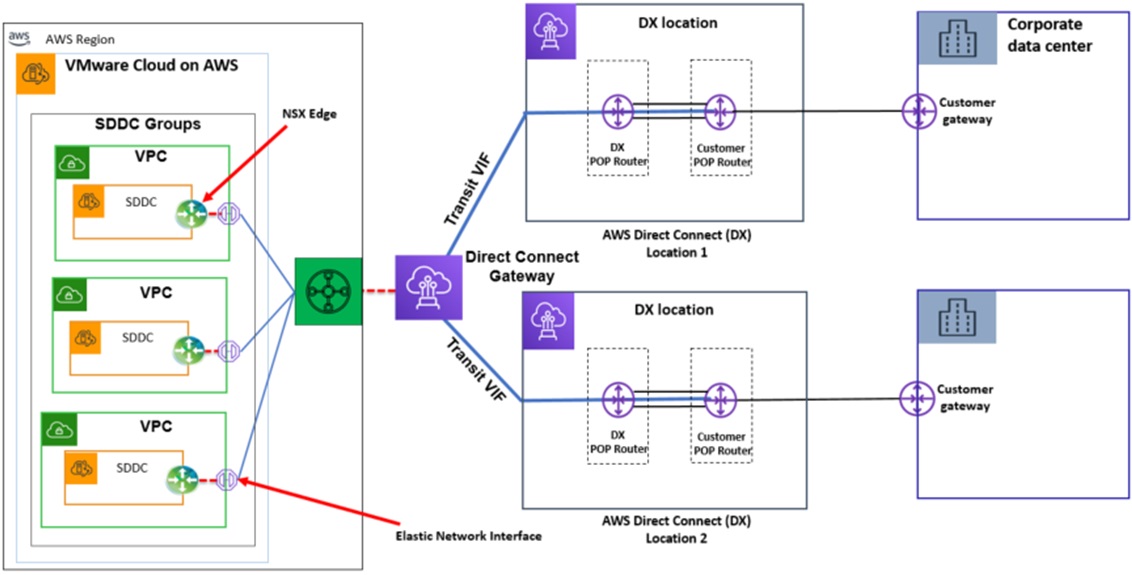

Additionally, I highly recommend having at least one AWS Direct Connect connection at multiple locations as displayed in the following architecture.

Figure 7 – Single AWS Direct Connect connection across two locations.

In this configuration, a customer will use two different AWS Direct Connect connections from two different locations. This ensures resilience to connectivity failure due to a fiber cut, router device failure or complete AWS Direct Connect location failure.

If one of the devices in one location undergoes maintenance, your connectivity stays up through the second link in the other location. This helps customers achieve the desired AWS Direct Connect SLA for their connectivity.

For this specific topology, customers can get a 99.9 percent SLA with AWS Direct Connect if they have an Enterprise Support agreement and are compliant with the AWS Well-Architected Framework.

I recommend the sample architecture below for a 99.99 percent SLA on critical workloads for connectivity to VMware Cloud on AWS SDDC.

Figure 8 – Dual connection across two locations.

With the topology above, customers and AWS Direct Connect Partners can achieve resiliency by configuring two separate Direct Connect connections that terminate on two separate routers and customer gateways in more than one location.

This configuration offers resiliency against connectivity failures between on-premises and the VMware Cloud on AWS SDDC, with an SLA of 99.99 percent, as long as customers have had their VMware Cloud on AWS deployment reviewed against the Well-Architected Framework.
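To put the 99.9 and 99.99 percent figures in perspective, the arithmetic below converts an availability percentage into a downtime budget. The 30-day month is an assumption for illustration; the actual measurement period and terms are defined in the AWS Direct Connect SLA itself.

```python
MINUTES_PER_30_DAY_MONTH = 30 * 24 * 60  # 43,200 minutes (assumed period)


def downtime_budget_minutes(sla_percent: float,
                            period_minutes: int = MINUTES_PER_30_DAY_MONTH) -> float:
    """Minutes of unavailability a given availability percentage permits."""
    return period_minutes * (1 - sla_percent / 100)


if __name__ == "__main__":
    for sla in (99.9, 99.99):
        print(f"{sla}%: {downtime_budget_minutes(sla):.2f} minutes per 30 days")
    # 99.9%: 43.20 minutes per 30 days
    # 99.99%: 4.32 minutes per 30 days
```

Moving from the single-connection-per-location topology to the dual-connection topology shrinks the monthly budget by a factor of ten.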

When you have multiple AWS Direct Connect connections in the same location, it’s a best practice to always check whether the connections have been provisioned on different devices at both ends of the connection. If the connections are ordered from the same AWS account, AWS will try to assign them to different devices in the Direct Connect location for higher resiliency.

You can also check the device allocated on the AWS Direct Connect Letter of Authorization and Connecting Facility Assignment (LOA-CFA) for the cross connect when you order your dedicated connection. If you have your connection provisioned by an AWS Direct Connect Partner, you can work with them to implement this architecture.

Now, let’s consider what needs to be done at the virtual level in connection to AWS Direct Connect and VMware Cloud on AWS.

Virtual Interface Resiliency

AWS Direct Connect relies on virtual interfaces to route network traffic, so each of the supported VIFs on VMware Cloud on AWS must be configured redundantly on top of the underlying physical AWS Direct Connect connections.

Private VIF

When running critical workloads, end-to-end resiliency from on-premises to your SDDC over a Private VIF requires configuring Private VIFs on a minimum of two AWS Direct Connect connections.

Figure 9 – Private VIF resiliency for AWS Direct Connect with VMware Cloud on AWS.

Customers can configure two Private VIFs on two different AWS Direct Connect connections in two different locations, both terminating on the VGW for the respective VMware Cloud on AWS SDDC.

To achieve route resiliency for this architecture, you need to use BGP for route management across each of the Private VIFs. You have two options on how to configure BGP to support this architecture:

- Active/Active: This is the default configuration for AWS Direct Connect. In this case, the service uses BGP multipath to load balance network traffic across the Private VIFs. If there are two connections and one fails, the traffic will be routed across the other connection.

- Active/Passive: With this configuration, one connection is active and handles all the traffic, while the other is on standby. If the active link fails, traffic is routed to the standby link, which becomes active. AS_PATH prepending and local preference BGP communities are the BGP techniques that help you achieve this.

With AS_PATH prepending, you influence the path selected for the routes advertised over AWS Direct Connect. If you want one of your connections to be passive, your on-premises router must advertise a longer AS_PATH over that connection.

In that case, traffic from the SDDC to on-premises will choose the active connection: the one with the shorter AS_PATH, meaning fewer autonomous systems to traverse to reach the destination.
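The effect of prepending can be sketched as follows. The on-premises ASN and connection names are hypothetical, and the sketch models only the AS_PATH length comparison. Real BGP best-path selection has further steps and tie-breakers that are not shown here.

```python
ONPREM_ASN = 65000  # hypothetical on-premises private ASN


def advertised_as_path(asn: int, prepend: int = 0) -> list[int]:
    """AS_PATH the AWS peer receives: the origin ASN plus `prepend` extra copies."""
    return [asn] * (1 + prepend)


def preferred_connection(paths: dict[str, list[int]]) -> str:
    """Pick the connection whose AS_PATH is shortest (this comparison only)."""
    return min(paths, key=lambda conn: len(paths[conn]))


if __name__ == "__main__":
    paths = {
        "dx-connection-a": advertised_as_path(ONPREM_ASN),             # active
        "dx-connection-b": advertised_as_path(ONPREM_ASN, prepend=3),  # passive
    }
    print(preferred_connection(paths))  # dx-connection-a
```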

If the AWS Direct Connect locations are different, you also need to use local preference BGP communities. The following local preference BGP community tags are supported:

- 7224:7100 (low preference)

- 7224:7200 (medium preference)

- 7224:7300 (high preference)

Local preference BGP community tags are evaluated before any AS_PATH attribute, and are evaluated in order from lowest to highest preference (where the highest preference is preferred).
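The evaluation order just described, local preference first and AS_PATH length only on a tie, can be sketched as a simple comparison key. The numeric ranks below encode only the relative order of the three tags; they are not actual local preference values. Untagged routes and later BGP tie-breakers are not modeled, and the connection names and ASNs are hypothetical.

```python
# Relative rank of the AWS local preference communities (higher wins).
# Untagged routes are not modeled in this sketch.
LOCAL_PREF_RANK = {"7224:7100": 1, "7224:7200": 2, "7224:7300": 3}


def best_route(routes):
    """routes: iterable of (connection, community, as_path) tuples.

    Compare local preference first; only on a tie, prefer the shorter AS_PATH.
    """
    best = max(routes, key=lambda r: (LOCAL_PREF_RANK[r[1]], -len(r[2])))
    return best[0]


if __name__ == "__main__":
    routes = [
        # Location A: tagged high preference, even with a prepended path.
        ("location-a", "7224:7300", [65000, 65000, 65000]),
        # Location B: shorter path, but tagged low preference.
        ("location-b", "7224:7100", [65000]),
    ]
    print(best_route(routes))  # location-a
```

Because the community is compared first, the tag wins over a shorter AS_PATH. This is why communities are needed when the connections terminate in different locations.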

Additionally, customers can enable Bidirectional Forwarding Detection (BFD) over AWS Direct Connect connections to speed up failover during a site outage.
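The benefit of BFD comes from how quickly it declares a neighbor down compared with BGP's own hold timer. The sketch below uses a simplified RFC 5880 detection formula (interval multiplied by the detection multiplier). The 300 ms interval, multiplier of 3, and 90-second BGP hold time are the defaults we understand AWS documents for Direct Connect; treat them as assumptions and confirm against current documentation and your router configuration.

```python
# Assumed defaults; confirm against the current AWS Direct Connect BFD
# documentation and your on-premises router configuration.
BFD_INTERVAL_MS = 300
BFD_MULTIPLIER = 3
BGP_HOLD_TIME_S = 90


def bfd_detection_time_ms(interval_ms: int, multiplier: int) -> int:
    """A BFD session is declared down after `multiplier` consecutive
    intervals pass without a control packet (RFC 5880, simplified)."""
    return interval_ms * multiplier


if __name__ == "__main__":
    bfd_ms = bfd_detection_time_ms(BFD_INTERVAL_MS, BFD_MULTIPLIER)
    print(f"BFD: {bfd_ms} ms, BGP hold timer: {BGP_HOLD_TIME_S * 1000} ms")
    # BFD: 900 ms, BGP hold timer: 90000 ms
```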

Figure 10 – Configuration with an Active/Passive configuration.

The SDDC console displays the dual AWS Direct Connect configuration, as shown below.

Figure 11 – Two redundant Private VIFs.

The architecture in Figure 10 above can be configured with up to four Private VIFs with VMware Cloud on AWS. These VIFs can be spread across different AWS Direct Connect locations.
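The Private VIF guidance in this section (at least two VIFs, spread across locations, on distinct AWS devices, no more than four per SDDC) can be turned into a design review checklist. The data model below is hypothetical: location and device names are placeholders you would take from your own LOA-CFA documents.

```python
from dataclasses import dataclass

MAX_PRIVATE_VIFS_PER_SDDC = 4  # value stated in this post; verify current limits


@dataclass(frozen=True)
class PrivateVif:
    name: str
    location: str  # Direct Connect location (hypothetical names)
    device: str    # AWS device from the LOA-CFA (hypothetical)


def design_findings(vifs: list[PrivateVif]) -> list[str]:
    """Return human-readable gaps against the guidance in this post."""
    findings = []
    if len(vifs) > MAX_PRIVATE_VIFS_PER_SDDC:
        findings.append(f"more than {MAX_PRIVATE_VIFS_PER_SDDC} Private VIFs")
    if len(vifs) < 2:
        findings.append("fewer than two Private VIFs")
    if len({v.location for v in vifs}) < 2:
        findings.append("all Private VIFs are in one location")
    devices = [(v.location, v.device) for v in vifs]
    if len(set(devices)) < len(devices):
        findings.append("two or more Private VIFs share an AWS device")
    return findings


if __name__ == "__main__":
    design = [
        PrivateVif("vif-1", "location-a", "device-1"),
        PrivateVif("vif-2", "location-b", "device-1"),
    ]
    print(design_findings(design))  # []
```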

For customers who cannot afford multiple connections across different locations, a Private VIF on a single connection can be paired with an L3 route-based VPN as a backup to AWS Direct Connect.

Figure 12 – VPN backup for AWS Direct Connect Private VIF for SDDC.

When using this architecture, all traffic advertised through BGP will use the VPN path by default. The exceptions are ESXi and vMotion traffic, which always use the AWS Direct Connect path over the Private VIF.

If you want to prefer routes on AWS Direct Connect Private VIF over the VPN, you can enable the option: “Use VPN as backup to AWS Direct Connect.”

Figure 13 – Enabling VPN option as Direct Connect backup.
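The path selection behavior described above can be summarized as a small decision function. This is a model of the behavior as stated in this post, not VMware's implementation. The traffic class names are hypothetical labels, and the failover logic assumes both paths carry the same BGP routes.

```python
DIRECT_CONNECT = "direct-connect"
ROUTE_BASED_VPN = "route-based-vpn"
# Per this post, ESXi management and vMotion traffic always use Direct Connect.
DX_ONLY_TRAFFIC = {"esxi-management", "vmotion"}


def preferred_path(traffic_class: str, use_vpn_as_backup: bool) -> str:
    """Path chosen when both Direct Connect and the VPN are up."""
    if traffic_class in DX_ONLY_TRAFFIC:
        return DIRECT_CONNECT
    return DIRECT_CONNECT if use_vpn_as_backup else ROUTE_BASED_VPN


def active_path(traffic_class, use_vpn_as_backup, dx_up=True, vpn_up=True):
    """Preferred path if up, else the other path; None if nothing is usable.

    DX-only traffic has no alternate path in this model.
    """
    up = {DIRECT_CONNECT: dx_up, ROUTE_BASED_VPN: vpn_up}
    first = preferred_path(traffic_class, use_vpn_as_backup)
    if up[first]:
        return first
    if traffic_class in DX_ONLY_TRAFFIC:
        return None
    second = ROUTE_BASED_VPN if first == DIRECT_CONNECT else DIRECT_CONNECT
    return second if up[second] else None


if __name__ == "__main__":
    print(preferred_path("workload", use_vpn_as_backup=False))  # route-based-vpn
    print(preferred_path("workload", use_vpn_as_backup=True))   # direct-connect
```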

Public VIF

Resiliency for Public VIFs leverages the same principle of spreading the VIFs across at least two AWS Direct Connect locations.

The difference here is that Public VIFs require VPN tunnels to operate, meaning customers will need to configure VPN tunnels across any connectivity used for resiliency, as shown in the architecture below.

Figure 14 – Public VIF resiliency for VMware Cloud on AWS.

In this architecture, customers can use BGP to manage routing and enforce a resilient network flow across the VPN tunnels that support the Public VIFs.

Transit VIF

Resiliency for Transit VIFs leverages the same principle of spreading the VIFs across at least two AWS Direct Connect locations.

The difference is that these VIFs terminate on the Direct Connect gateway (DXGW), a redundant AWS construct that is associated with VMware Transit Connect to allow routing to the SDDCs within an SDDC group.

Figure 15 – Transit VIF resiliency for VMware Cloud on AWS.

Conclusion

In this post, I have shared how AWS Direct Connect integrates with VMware Cloud on AWS. I’ve also discussed the supported virtual interface types and how each can be used with VMware Cloud on AWS depending on the use case.

I’ve explored how to implement resiliency for AWS Direct Connect when implementing it with VMware Cloud on AWS.

Feel free to contact us here at AWS for any support with VMware Cloud on AWS.