AWS Big Data Blog

Category: Analytics

Decentralize LF-tag management with AWS Lake Formation

In today’s data-driven world, organizations face unprecedented challenges in managing and extracting valuable insights from their ever-expanding data ecosystems. As the number of data assets and users grows, the traditional approaches to data management and governance are no longer sufficient. Customers are now building more advanced architectures to decentralize permissions management to allow for individual […]

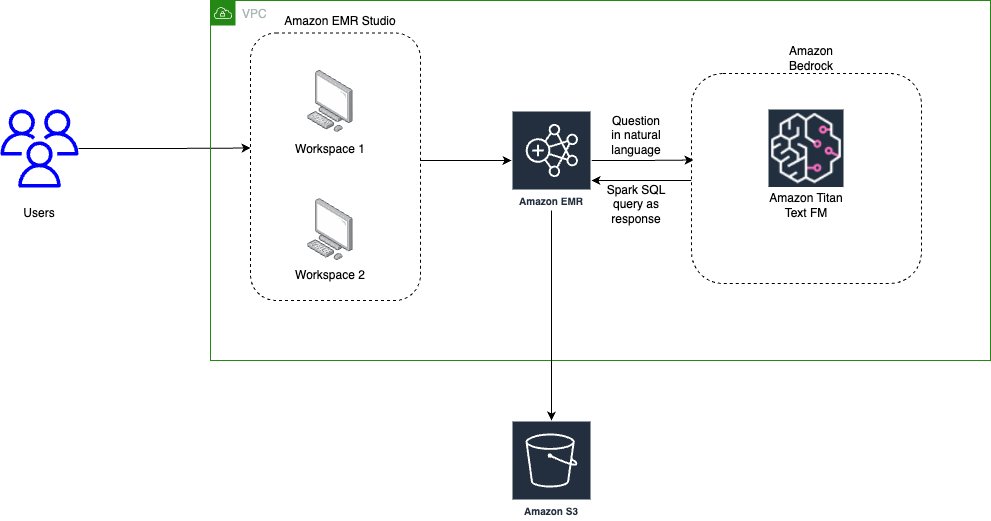

Use generative AI with Amazon EMR, Amazon Bedrock, and English SDK for Apache Spark to unlock insights

In this era of big data, organizations worldwide are constantly searching for innovative ways to extract value and insights from their vast datasets. Apache Spark offers the scalability and speed needed to process large amounts of data efficiently. Amazon EMR is the industry-leading cloud big data solution for petabyte-scale data processing, interactive analytics, and machine […]

Unlock innovation in data and AI at AWS re:Invent 2023

For organizations seeking to unlock innovation with data and AI, AWS re:Invent 2023 offers several opportunities. Attendees will discover services, strategies, and solutions for tackling any data challenge. In this post, we provide a curated list of keynotes, sessions, demos, and exhibits that will showcase how you can unlock innovation in data and AI using […]

What’s cooking with Amazon Redshift at AWS re:Invent 2023

AWS re:Invent is a powerhouse of a learning event and every time I have attended, I’ve been amazed at its scale and impact. There are keynotes packed with announcements from AWS leaders, training and certification opportunities, access to more than 2,000 technical sessions, an elaborate expo, executive summits, after-hours events, demos, and much more. The […]

BMW Cloud Efficiency Analytics powered by Amazon QuickSight and Amazon Athena

This post is written in collaboration with Philipp Karg and Alex Gutfreund from BMW Group. Bayerische Motoren Werke AG (BMW) is a motor vehicle manufacturer headquartered in Germany with 149,475 employees worldwide. Its profit before tax in the financial year 2022 was € 23.5 billion on revenues of € 142.6 billion. BMW Group is one of the […]

Implement Apache Flink real-time data enrichment patterns

You can use several approaches to enrich your real-time data in Amazon Managed Service for Apache Flink depending on your use case and Apache Flink abstraction level. Each method has different effects on the throughput, network traffic, and CPU (or memory) utilization. For a general overview of data enrichment patterns, refer to Common streaming data enrichment patterns in Amazon Managed Service for Apache Flink. This post covers how you can implement data enrichment for real-time streaming events with Apache Flink and how you can optimize performance. To compare the performance of the enrichment patterns, we ran performance tests based on synthetic data. The results are useful as a general reference. Actual performance for your Flink workload depends on several factors, such as API latency, throughput, size of the event, and cache hit ratio.
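To make the role of the cache hit ratio concrete, here is a minimal sketch in plain Python (not Flink code, and not the implementation from the post). The names `REFERENCE_DATA`, `lookup_location`, `enrich`, and `expected_latency_ms` are hypothetical and exist only for illustration, as are the latency figures. It shows a cached per-event lookup and a simple weighted-average model of lookup latency.

```python
from functools import lru_cache

# Hypothetical reference data standing in for an external lookup API.
REFERENCE_DATA = {"sensor-1": "Berlin", "sensor-2": "Madrid"}


@lru_cache(maxsize=1024)
def lookup_location(sensor_id: str) -> str:
    """Simulated external lookup; repeated keys are served from the cache."""
    return REFERENCE_DATA.get(sensor_id, "unknown")


def enrich(event: dict) -> dict:
    """Return a copy of the event with a 'location' field added."""
    return {**event, "location": lookup_location(event["sensor_id"])}


def expected_latency_ms(hit_ratio: float, cache_ms: float, api_ms: float) -> float:
    """Average lookup latency: hits cost cache_ms, misses cost api_ms."""
    return hit_ratio * cache_ms + (1.0 - hit_ratio) * api_ms


if __name__ == "__main__":
    events = [
        {"sensor_id": "sensor-1", "value": 10},
        {"sensor_id": "sensor-2", "value": 12},
        {"sensor_id": "sensor-1", "value": 11},
    ]
    for event in events:
        print(enrich(event))
    # Cache statistics show how many lookups reached the simulated API.
    print(lookup_location.cache_info())
    # Assumed figures: 1 ms for a cache hit, 50 ms for an API call.
    print(expected_latency_ms(hit_ratio=0.9, cache_ms=1.0, api_ms=50.0))
```

With the assumed figures, a 90% hit ratio gives an average lookup latency of roughly 5.9 ms, against 50 ms with no cache. This is why the hit ratio can dominate the other factors when the external API is slow.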

Clean up your Excel and CSV files without writing code using AWS Glue DataBrew

Managing data within an organization is complex. Handling data from outside the organization adds even more complexity. As the organization receives data from multiple external vendors, it often arrives in different formats, typically Excel or CSV files, with each vendor using their own unique data layout and structure. In this blog post, we’ll explore a […]

Amazon Kinesis Data Streams: celebrating a decade of real-time data innovation

Data is a key strategic asset for every organization, and every company is a data business at its core. However, in many organizations, data is typically spread across a number of different systems such as software as a service (SaaS) applications, operational databases, and data warehouses. Such data silos make it difficult to get unified […]

How Wallapop improved performance of analytics workloads with Amazon Redshift Serverless and data sharing

Amazon Redshift is a fast, fully managed cloud data warehouse that makes it straightforward and cost-effective to analyze all your data at petabyte scale, using standard SQL and your existing business intelligence (BI) tools. Today, tens of thousands of customers run business-critical workloads on Amazon Redshift. Amazon Redshift Serverless makes it effortless to run and […]

Amazon MSK Serverless now supports Kafka clients written in all programming languages

Amazon MSK Serverless is a cluster type for Amazon Managed Streaming for Apache Kafka (Amazon MSK) that is the most straightforward way to run Apache Kafka clusters without having to manage compute and storage capacity. With MSK Serverless, you can run your applications without having to provision, configure, or optimize clusters, and you pay for […]