AWS for Games Blog

Using machine learning to understand a user community

This guest post is authored by Alexander Gee, co-founder of Oterlu AI.

For two years I led a Child Safety team at Google in Silicon Valley that worked hard to keep children safe when using different online services. Over my career I have built up experience and know-how in using technology to tackle everything from hate speech to large-scale spam networks in Google Search. I was able to use the latest technological breakthroughs, together with an array of technical resources, to make sure users were kept safe. Unfortunately, this kind of safety net is sorely missing in many other online services, especially those with a social interaction component, such as multiplayer games.

After moving back from the United States to the colder climate of Gothenburg, Sweden, I teamed up with two colleagues, Ludvig and Sebastian, and formed what would become Oterlu. Both of my new partners have years of experience using machine learning to solve problems ranging from chatbots to forecasting and everything in between. The Oterlu team set out to answer one important question: “How do we make people feel welcome and secure in online communities?”

First, we knew there had been a huge leap in how machine learning could be used to understand social behavior, and that commercially available tools were lagging behind. We focused on bridging this gap, which let us understand social interactions in a much more nuanced and scalable way. We needed to be able to differentiate between someone who is bullying another person and someone who is simply trash-talking with a friend. At the same time, we needed to be mindful of each individual’s privacy, so we built algorithms that erase personal information; the data is anonymized and used strictly for identifying certain behaviors.
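The post doesn’t describe how Oterlu’s anonymization works. As a minimal sketch of one common first step, regex-based redaction of obvious personal identifiers, the Python below replaces email addresses, URLs, phone-number-like digit runs, and @mentions with placeholder tokens before a message is stored or analyzed. The patterns and the `redact_pii` name are hypothetical; real anonymization needs much broader coverage (names, addresses, locale-specific formats) and typically a trained entity tagger as well.

```python
import re

# Hypothetical patterns for a few obvious kinds of personal information.
# Order matters: emails are replaced before @mentions so that the "@" inside
# an address is not mistaken for a mention.
_PII_PATTERNS = [
    ("EMAIL", re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")),
    ("URL", re.compile(r"https?://\S+")),
    # Loose phone pattern: optional "+", then 9+ digits/spaces/punctuation.
    ("PHONE", re.compile(r"\+?\d[\d\s().-]{7,}\d")),
    # An "@handle" not preceded by a word character.
    ("MENTION", re.compile(r"(?<!\w)@\w+")),
]


def redact_pii(text: str) -> str:
    """Replace each matched span with a placeholder such as <EMAIL>."""
    for label, pattern in _PII_PATTERNS:
        text = pattern.sub(f"<{label}>", text)
    return text


print(redact_pii("Mail me at jane.doe@example.com or call +46 31 123 45 67, @bob"))
# -> Mail me at <EMAIL> or call <PHONE>, <MENTION>
```

Placeholders such as `<MENTION>` can preserve the fact that a message addressed someone, without retaining who it was. The phone pattern is deliberately loose and will also catch other long digit runs.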

Second, we hoped our technology could empower game developers who clearly understand this issue and truly care about the community they serve. Thanks to feedback from community managers and developers, we built a tool suite that helps teams better understand not only their user community but also the ramifications of their own actions.

We wanted blanket bans on users for any policy breach to become a thing of the past, and we wanted to help game businesses recognize the positive players who bring so much good to their games. Much of this work started with our first customer, Recolor, a digital coloring book for mobile with a global user base.

Case Study: Recolor

When we first started working with Recolor, we were struck by how vibrant and diverse its online community was. As the most popular coloring book app on mobile, Recolor provides not only a creative and stress-relieving environment but also a platform where users can interact with one another. The Oterlu team’s aim was to empower Recolor’s community management team to keep growing its diverse community while keeping users safe in a scalable way.

The first step in our process was a deep dive with the community management team to understand their needs and the needs of the user base. The team knows the Recolor community inside out and is responsible for deciding what kinds of behavior are and aren’t allowed on the platform. We found that collaborating closely with them was the best approach, because online communities are not homogeneous: each differs to some extent, so understanding the particular rules of a platform matters (for example, whether swearing is allowed).

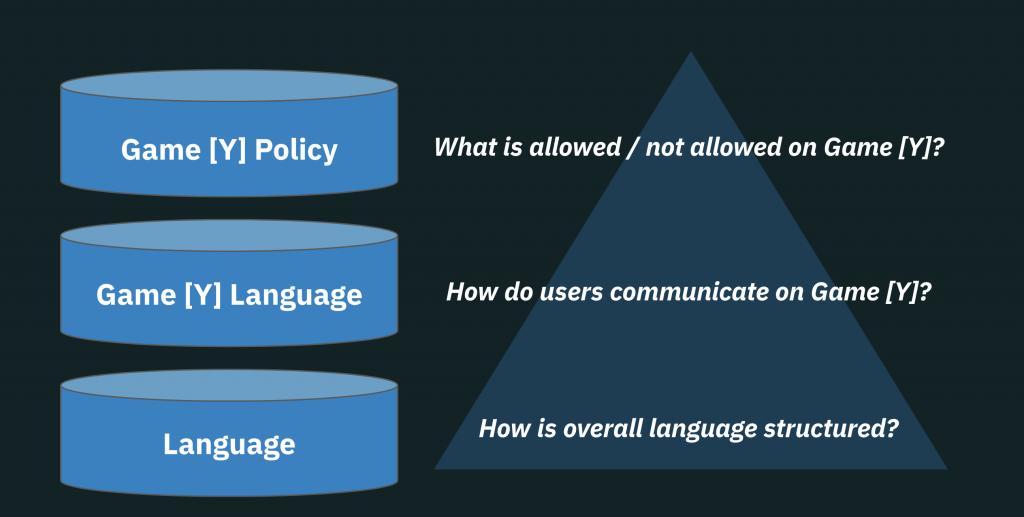

After the deep dive, we had a clear picture of the community itself and of the management team’s needs. We then set about customizing our machine learning model to fit Recolor’s community. We started with a baseline language model that has a general understanding of language and then adapted it to how language is used on Recolor, including slang expressions. Next, we fine-tuned the model to detect some of the nuanced policies specific to the platform. Here, the range of GPU instances available on AWS allowed us to set up and train our models effectively.
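The post doesn’t say how platform-specific rules are encoded. One simple way to express them, sketched below in Python under the assumption that the tuned model outputs a score between 0 and 1 for each policy label, is a per-platform configuration listing the enforced labels and their decision thresholds; anything not listed, such as swearing on a platform that allows it, is never flagged. `PlatformPolicy`, `violations`, the labels, and the thresholds are all hypothetical, and a layer like this complements rather than replaces fine-tuning the model on the platform’s data.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class PlatformPolicy:
    """Hypothetical per-platform rules agreed with the community team."""
    name: str
    # Policy label -> minimum model score (0..1) treated as a violation.
    # Labels absent from this mapping are allowed on the platform.
    thresholds: dict[str, float]


def violations(scores: dict[str, float], policy: PlatformPolicy) -> list[str]:
    """Return the enforced labels whose score meets the platform's threshold."""
    return sorted(
        label
        for label, score in scores.items()
        if label in policy.thresholds and score >= policy.thresholds[label]
    )


family_app = PlatformPolicy("family_app", {"bullying": 0.7, "profanity": 0.5})
adult_game = PlatformPolicy("adult_game", {"bullying": 0.7})  # swearing allowed

# Hypothetical per-label scores for one message from a fine-tuned classifier.
scores = {"bullying": 0.2, "profanity": 0.9}
print(violations(scores, family_app))  # ['profanity']
print(violations(scores, adult_game))  # []
```

Keeping thresholds in configuration means a community team could tighten or relax a rule without retraining the model.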

Once we had trained the customized model, the next step was to use it in a live environment. For this we made the model available through an API, which needed to scale with unpredictable traffic loads, such as spikes in user activity. Our team decided that a serverless architecture on AWS would work best for deploying our various services: the services run as AWS Lambda functions and communicate with each other through Amazon Simple Queue Service (Amazon SQS) queues. Because of their size, the models themselves are packaged in Docker containers and deployed to Amazon Elastic Container Service (Amazon ECS). With this setup, we can handle customers of every size, from a couple of thousand messages to a billion messages a day.
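To make the queue-based design more concrete, here is a minimal Python sketch of a Lambda function consuming a batch of messages from an SQS queue. The event shape (`Records`, `messageId`, `body`) and the `batchItemFailures` response follow the documented Lambda and SQS integration; the partial-batch response only takes effect if `ReportBatchItemFailures` is enabled on the event source mapping. The JSON message format and the `classify` function, which stands in for a request to the model service on Amazon ECS, are hypothetical.

```python
from __future__ import annotations

import json


def classify(text: str) -> dict[str, float]:
    """Hypothetical stand-in for a call to the model service on Amazon ECS.

    A real deployment would send a request to the service's endpoint; this
    stub flags a single keyword so the handler can be run locally.
    """
    return {"bullying": 0.9 if "loser" in text.lower() else 0.1}


def handler(event, context):
    """AWS Lambda entry point for an Amazon SQS event source.

    Each record body is assumed (hypothetically) to be JSON like
    {"text": "..."}. Records that fail are reported individually so that
    only they return to the queue for retry.
    """
    failures = []
    for record in event["Records"]:
        try:
            payload = json.loads(record["body"])
            scores = classify(payload["text"])
            # A real pipeline might forward results to another queue or a
            # datastore; this sketch just logs them.
            print(json.dumps({"messageId": record["messageId"], "scores": scores}))
        except (ValueError, KeyError, TypeError):
            # Malformed JSON (JSONDecodeError is a ValueError) or a missing field.
            failures.append({"itemIdentifier": record["messageId"]})
    return {"batchItemFailures": failures}
```

In this kind of design, the queue absorbs traffic spikes while Lambda scales the number of concurrent consumers, and only messages that actually failed are retried.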

The final focus of our service was giving the management team access to the Oterlu Community tool, which supports their work both day to day and over the long term. This tool allows the team to truly harness the power of our machine learning models. It provides analytics on what is happening in the community day to day, such as which time zones are seeing peaks in specific policy violations.
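As an illustration of the kind of analytics described here, the Python sketch below finds, for one policy, the local hour at which each time zone sees the most violations. It assumes hypothetical `ViolationEvent` records carrying a UTC timestamp, the user’s UTC offset, and the flagged policy label. Fixed offsets keep the example dependency-free, so daylight-saving changes are ignored (`zoneinfo.ZoneInfo` would handle those).

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class ViolationEvent:
    """Hypothetical record emitted whenever the model flags a message."""
    timestamp: datetime       # timezone-aware, in UTC
    utc_offset_hours: float   # user's time zone as a fixed offset, e.g. 2 or 5.5
    policy: str


def peak_local_hours(events: list[ViolationEvent], policy: str) -> dict[float, tuple[int, int]]:
    """Map each UTC offset to (local hour, count) at its busiest hour for `policy`."""
    counts: Counter = Counter()
    for e in events:
        if e.policy != policy:
            continue
        tz = timezone(timedelta(hours=e.utc_offset_hours))
        counts[(e.utc_offset_hours, e.timestamp.astimezone(tz).hour)] += 1
    peaks: dict[float, tuple[int, int]] = {}
    for (offset, hour), n in counts.items():
        # Ties keep the hour that was seen first.
        if offset not in peaks or n > peaks[offset][1]:
            peaks[offset] = (hour, n)
    return peaks


utc = timezone.utc
events = [
    ViolationEvent(datetime(2021, 5, 1, 18, 0, tzinfo=utc), 2, "spam"),   # 20:00 local
    ViolationEvent(datetime(2021, 5, 1, 18, 30, tzinfo=utc), 2, "spam"),  # 20:30 local
    ViolationEvent(datetime(2021, 5, 1, 9, 0, tzinfo=utc), 2, "spam"),    # 11:00 local
    ViolationEvent(datetime(2021, 5, 1, 1, 0, tzinfo=utc), -5, "spam"),   # 20:00 local, previous day
]
print(peak_local_hours(events, "spam"))  # {2: (20, 2), -5: (20, 1)}
```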

Today, our service covers the global Recolor community and provides real-time feedback when a user commits a policy violation.

Conclusion

One of the gaps Oterlu hopes to bridge is between game developers and the players of their games so that they can understand each other better. When you build a multiplayer game it isn’t only the game mechanics that are important – it is also the social aspect. Most developers don’t want one of their new players to enter a game for the first time feeling anxious about being harassed. Instead, developers might hope that one of their superstar players will welcome new players to the game.

If you are a game developer, make sure you are continuously listening to your community for feedback: not only on how they feel about the next big feature but also on how players interact with each other. This can be hard when a few loud voices dominate the conversation, but you do have options for getting different perspectives. Qualitative data gathered by interviewing a few randomly selected players, who might not be as vocal, can give you very different perspectives from those you usually encounter. Quantitative data, which is what we focus on at Oterlu, can give you a bird’s-eye view of the entire in-game community and how people are behaving towards each other.

Ultimately, it will be up to game developers to work with their players to help shape the user experience for a specific game. We at Oterlu are consciously trying to give developers the tools to offer a better experience.