AWS HPC Blog

Simcenter STAR-CCM+ price-performance on AWS

Organizations such as Amazon Prime Air and Joby Aviation run CFD simulations with Simcenter STAR-CCM+ on AWS to shorten product manufacturing cycles and reach the market faster. (You can read more about them in our Prime Air and Joby Aviation case studies.)

In this post, we analyze the performance and price of running Computational Fluid Dynamics (CFD) simulations using Siemens Simcenter™ STAR-CCM+™ software on AWS HPC clusters.

We evaluate test cases relevant to the automotive and aerospace industries, with mesh sizes between 17 million and 147 million cells. We selected these benchmarks because they cover the typical range of case sizes run by Simcenter STAR-CCM+ customers. The benchmarking analysis presented here should help you understand the performance and cost of running your own Simcenter STAR-CCM+ CFD workloads on AWS HPC-optimized infrastructure.

Benchmark environment

Amazon EC2

To scale efficiently to a large number of instances, CFD applications benefit from high memory bandwidth, a low-latency, high-bandwidth network interconnect, and access to a fast parallel file system. For that reason, we ran the benchmarks in this post on a mix of Elastic Fabric Adapter (EFA) enabled instances.

Amazon recently announced the Amazon EC2 Hpc6a instance type, which delivers 100 Gbps networking through EFA, with 96 cores of third-generation AMD EPYC™ (Milan) processors and 384 GiB of RAM. Along with Hpc6a, we used the Intel Xeon Scalable (Ice Lake) based Amazon EC2 C6i instance type and the Intel Xeon (Skylake) based Amazon EC2 C5n instance type for running these benchmarks. All three instance types offer EFA support with up to 100 Gbps networking. These instances are powered by the AWS Nitro System, an advanced hypervisor technology that delivers the compute and memory resources required for increased performance and security.

The following table (Table 1) summarizes the configurations of the Amazon EC2 instance types used:

| Instance Type | Processor | No. of Physical Cores (per instance) | Memory (GiB) | EFA Network Bandwidth (Gbps) |
|---|---|---|---|---|
| Hpc6a.48xlarge | AMD EPYC Milan 3rd gen | 96 | 384 | 100 |
| C6i.32xlarge | Intel Ice Lake | 64 | 256 | 50 |
| C5n.18xlarge | Intel Skylake | 36 | 192 | 100 |

HPC solution components and case descriptions

We deployed the AWS HPC infrastructure for these benchmarks with AWS ParallelCluster, an AWS-supported open-source cluster orchestration tool, using ParallelCluster version 3.1.1. Along with the instance types mentioned above, we used Amazon FSx for Lustre, a fully managed high-performance Lustre file system offering up to hundreds of GB/s of throughput and sub-millisecond latencies for improved I/O performance. We ran all tests with hyper-threading disabled on every instance type. For more information on the AWS HPC solution architecture components, along with step-by-step instructions for deploying your HPC cluster and running Simcenter STAR-CCM+, refer to our CFD Workshops page.

The test cases used for this post are the external aerodynamics Le Mans car model with 17 million polyhedral cells, which is part of the standard STAR-CCM+ benchmark suite, and a high-lift aircraft model with 147 million polyhedral cells. Both benchmarks are steady-state Reynolds-Averaged Navier-Stokes (RANS) simulations and were run with Simcenter STAR-CCM+ 2021.2 (build 16.04.007). We ran the simulations until the convergence criteria were reached, and used the last 100 iterations to derive the performance and cost metrics described in the sections below.

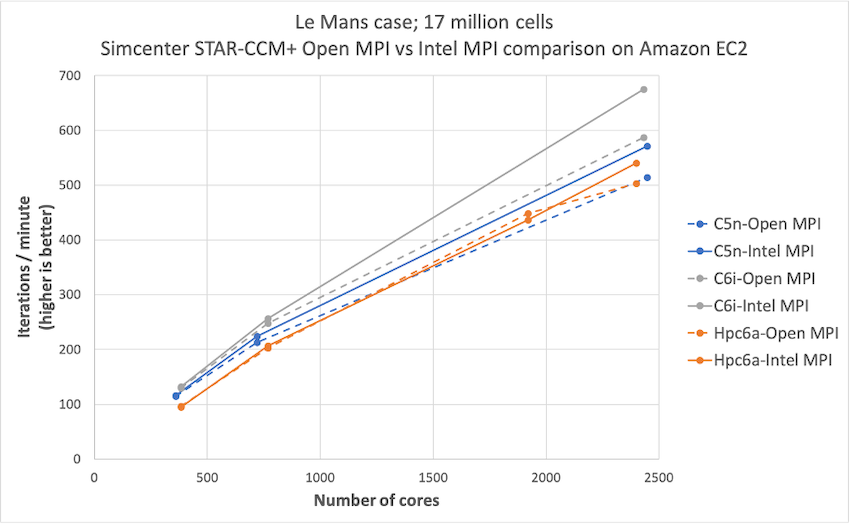

Choosing the MPI implementation

Simcenter STAR-CCM+ versions 2021.1 and later have built-in support for both the Open MPI and Intel MPI implementations of the Message Passing Interface (MPI). Parallel programs use MPI for communication between processes, both within a node and across nodes. To determine which MPI implementation to use for the results in this post, we first ran the Simcenter STAR-CCM+ benchmarks with both options. Figure 1 compares the performance of Open MPI and Intel MPI: dashed lines represent Open MPI results, solid lines represent Intel MPI results, and colors distinguish the Amazon EC2 instance types.

Figure 1: Comparison of simulation performance for the Le Mans test case run with Open MPI and Intel MPI. Intel MPI offers better performance compared to Open MPI.

Figure 1 shows that Intel MPI offers an average performance improvement of 6% over Open MPI on C5n and C6i instances, and about 2% on Hpc6a instances. The Intel MPI gains grow larger (around 7% to 12%) at higher core counts. Because of this higher performance, we used Intel MPI v2019.8.254 to derive the results in the remainder of this post.

Performance analysis

To understand how Simcenter STAR-CCM+ performs on the various Amazon EC2 instance types, we plotted simulation performance against the number of cores for each of the two test cases in Figure 2 (a, b). The Le Mans case with 17 million cells is scaled to 3000 cores, and the high-lift aircraft case with 147 million cells is scaled to 9600 cores. Simulation performance is expressed in iterations per minute, averaged over the last 100 iterations of the simulation.
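As a minimal sketch of how such a metric could be derived, assume the elapsed wall-clock time of each iteration (in seconds) has already been extracted from the solver output. The function name, the input format, and the sample timings below are hypothetical; this is one plausible reading of "iterations per minute averaged over the last 100 iterations", not necessarily the exact procedure used for the figures.

```python
from statistics import mean


def iterations_per_minute(iteration_seconds, window=100):
    """Return solver throughput averaged over the last `window` iterations.

    `iteration_seconds` is a sequence of per-iteration elapsed times in
    seconds (a hypothetical input format, not a STAR-CCM+ API).
    """
    if window <= 0:
        raise ValueError("window must be positive")
    tail = list(iteration_seconds)[-window:]
    if not tail:
        raise ValueError("no iteration timings supplied")
    # 60 seconds divided by the mean seconds per iteration.
    return 60.0 / mean(tail)


# Illustrative timings only: 50 slower start-up iterations followed by
# 100 steady iterations at 1.5 s each.
sample_timings = [3.0] * 50 + [1.5] * 100
print(iterations_per_minute(sample_timings))
```

Restricting the average to the final window keeps start-up effects, such as the slower early iterations in the sample data, out of the reported figure.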

Figure 2: Comparison of simulation performance for different Amazon EC2 instances for (a) the Le Mans test case scaled to 3000 cores; (b) the high-lift aircraft test case scaled to 9600 cores. On a per-core basis, C6i is the best-performing instance type.

Figure 3 (a, b) shows how simulation performance varies with the number of instances.

Figure 3: Comparison of simulation performance for different Amazon EC2 instances for (a) the Le Mans test case scaled to 80 instances; (b) the high-lift aircraft test case scaled to 267 instances. On a per-instance basis, Hpc6a is the best-performing instance type.

The relative ranking differs between the per-core and per-instance comparisons because each instance type has a different number of physical cores. When comparing on a per-core basis, note that at any given core count, a run uses more C5n instances than C6i or Hpc6a instances. For the Le Mans case, C6i offers about a 17% improvement over C5n and Hpc6a instances at 3200 cores. For the high-lift aircraft case, C6i offers about an 18% to 30% improvement over C5n and Hpc6a instances at 6400 cores.
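The difference in instance counts at a fixed core count can be illustrated with a short sketch. The physical core counts come from Table 1; the function name is hypothetical, and the sketch assumes whole instances are allocated and the count is rounded up.

```python
import math

# Physical cores per instance, taken from Table 1.
PHYSICAL_CORES = {
    "hpc6a.48xlarge": 96,
    "c6i.32xlarge": 64,
    "c5n.18xlarge": 36,
}


def instances_for_cores(target_cores, instance_type):
    """Return the number of whole instances needed to supply `target_cores`."""
    if target_cores <= 0:
        raise ValueError("target_cores must be positive")
    return math.ceil(target_cores / PHYSICAL_CORES[instance_type])


for name in PHYSICAL_CORES:
    print(name, instances_for_cores(3200, name))
```

At 3200 cores this gives 34 Hpc6a, 50 C6i, and 89 C5n instances, which is why the same results look different when plotted per core and per instance.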

Compared per instance, Hpc6a offers the best performance of the three instance types. For the Le Mans case, Hpc6a offers a 6% improvement over C6i and about a 49% improvement over C5n at 20 instances. For the high-lift aircraft case, Hpc6a offers a 7% improvement over C6i and about a 47% improvement over C5n at around 100 instances.

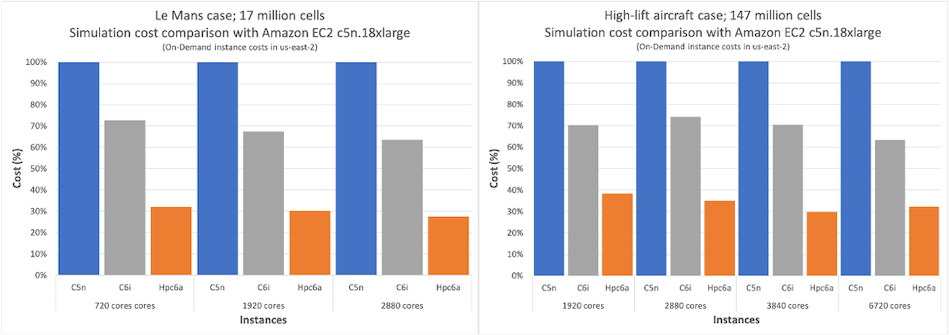

Cost analysis

Application performance is an important indicator of project turnaround time and, ultimately, of time to market. However, customers also want to understand and reduce project costs, especially when running large simulations. Figure 4 (a, b) shows the simulation cost of running the last 100 iterations for both test cases. The simulation costs in this post reflect Amazon EC2 On-Demand Instance pricing in the US East (Ohio) Region (us-east-2). Other factors that affect total cost, such as software license costs, file system costs, and data transfer costs, are not considered because they vary with user selections.
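A minimal sketch of this kind of cost estimate follows, assuming the cost is simply the instance count multiplied by the hourly On-Demand price and the elapsed time, pro-rated to the fraction of an hour used. The function name is hypothetical and the price and throughput in the example are placeholders, not actual AWS prices or measured results.

```python
def cost_of_iterations(num_instances, hourly_price_usd,
                       iterations_per_minute, iterations=100):
    """Estimate the EC2 compute cost (USD) of running `iterations` iterations.

    Only instance cost is included; licenses, storage, and data transfer
    are excluded, as in the text above.
    """
    if iterations_per_minute <= 0:
        raise ValueError("iterations_per_minute must be positive")
    elapsed_hours = iterations / iterations_per_minute / 60.0
    return num_instances * hourly_price_usd * elapsed_hours


# Placeholder inputs: 10 instances at a made-up 2.00 USD per hour,
# running at a made-up 40 iterations per minute.
example_cost = cost_of_iterations(
    num_instances=10,
    hourly_price_usd=2.00,
    iterations_per_minute=40.0,
)
print(f"{example_cost:.2f} USD")
```

Because faster instances finish the same 100 iterations sooner, a higher hourly price does not necessarily translate into a higher simulation cost.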

Figure 4: Comparison of simulation cost for different Amazon EC2 instances for (a) the Le Mans test case; (b) the high-lift aircraft test case. Hpc6a consistently exhibits the lowest simulation cost for both test cases.

Figure 4 shows that, depending on the case size and core count, C6i offers about 30% to 60% cost savings compared to C5n instances, while Hpc6a consistently offers about 65% to 70% cost savings compared to C5n instances. This is further illustrated in Figure 5 (a, b) below.

Figure 5: Simulation cost (%) relative to Amazon EC2 c5n.18xlarge for (a) the Le Mans test case; (b) the high-lift aircraft test case. Hpc6a offers about 65% cost savings compared to C5n.
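The savings percentages quoted here are relative to a C5n baseline. A hedged sketch of that arithmetic is shown below; the function name is hypothetical and the dollar amounts are placeholders chosen only to illustrate the calculation, not measured costs.

```python
def cost_savings_percent(baseline_cost, candidate_cost):
    """Return the percentage saved by the candidate relative to the baseline."""
    if baseline_cost <= 0:
        raise ValueError("baseline_cost must be positive")
    return 100.0 * (baseline_cost - candidate_cost) / baseline_cost


# Placeholder costs: a baseline run costing 10.00 USD and a candidate
# run costing 3.50 USD for the same 100 iterations.
print(cost_savings_percent(baseline_cost=10.00, candidate_cost=3.50))
```

With these placeholder inputs the candidate saves 65% relative to the baseline, the same form of comparison used for Hpc6a against C5n.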

Summary

This post summarized the price and performance of running CFD simulations using Simcenter STAR-CCM+ software on AWS. Amazon EC2 Hpc6a offers the lowest cost, with about 65% cost savings over C5n and about 40% cost savings over C6i, depending on the test case. Look out for future posts on the recently released GPU-enabled version of Simcenter STAR-CCM+, where we'll also compare GPU and CPU results on AWS.

If you are interested in getting started with CFD workloads on AWS, you can find more information on the AWS CFD solutions page. For detailed HPC-specific best practices based on the five pillars of the AWS Well-Architected Framework, download the High Performance Computing Lens whitepaper.

A list of relevant Siemens trademarks can be found on their site. Other trademarks belong to their respective owners.