AWS for Industries

Enable agile mainframe development, test, and CI/CD with AWS and Micro Focus

Mainframes are still used by numerous organizations in sectors such as financial services or manufacturing. To thrive in today’s fast-moving economy, customers tell us they must accelerate the software development lifecycle (SDLC) for applications hosted on their mainframes. Micro Focus development and test solutions combined with AWS DevOps services provide the speed, the elasticity, and the cost effectiveness required by modern agile development teams. In this post, we describe an incremental approach and the building blocks for a complete automated development pipeline for mainframes.

Customer mainframe development challenges

Organizations with mainframes look for application development solutions to solve challenges on several dimensions:

- Slow mainframe development lifecycle – Customers see an increase in the volume and frequency of business changes requested for their mainframe applications written in COBOL or PL/1. Their current mainframe development processes and tools cannot keep up with the pace of the business, which generates a growing backlog. For example, mainframe software builds may be executed manually, or provisioning a mainframe test environment may require a complex manual process. This can be solved with automation and with modernized tools and processes targeting continuous integration and continuous delivery.

- Limited and expensive compute resources – Any mainframe is limited by the finite resources of the physical machine, or by hard or soft capping limits. Furthermore, the same expensive mainframe hardware and software is shared between production and development or test environments, although non-production environments do not require the same expensive quality of service. In addition, we see large enterprises with hundreds of applications, geographically dispersed teams, and many regulated environments that have an ever-increasing need for the flexibility to create and terminate large numbers of environments on demand. This can be solved with the scalability and the elasticity of a cost-optimized infrastructure.

- Outdated development practices – Some mainframe development practices and culture cannot support modern approaches because they rely on rigid monolithic practices. For example, mainframe developers and testers may work in silos. There may be no integration or automation across environments and teams, and a plethora of disjointed tools. This creates a perception that mainframes cannot be part of modern practices. This can be solved with DevOps and Agile practices introduced incrementally.

- Outdated tools – Some mainframe development tools still have archaic interfaces. They often limit developers’ productivity because they lack smart features for editing, debugging, compilation, testing, and more. Further, they lack the integration capabilities that streamline development, from source control integration to teamwork and integrated testing and deployment. Consequently, it is difficult to find and train new mainframe developers, and modern development teams find it difficult to work with mainframes. This can be solved with modern Integrated Development Environments (IDEs) along with DevOps tools.

We will now describe how we can overcome these challenges with a recommended approach.

Approach for increasing mainframe development and test agility

Over time, mainframe development teams and system programmers have created rigorous and complex processes in order to ensure new features are deployed in production while minimizing the risks of failure for the core-business workloads currently executing. The recommended approach is not a rip-and-replace approach, but on the contrary, favors modular and incremental add-ons preserving the reliability of the existing deployment processes while accelerating the development and test lifecycle.

The process of transformation starts with the development, test, and deployment pipeline for a given mainframe application. Every organization has a software process in place, which is the current pipeline. This existing pipeline is the starting point that is documented and analyzed for optimization. There are three main areas where we can introduce efficiencies for such a pipeline:

- Increase application development agility – It starts with a modern Integrated Development Environment (IDE) that enables developers to write code more quickly with smart editing and debugging, instant code compilation, and local mainframe dataset access, all within a familiar Eclipse or Visual Studio user interface. Such a toolset helps modernize mainframe applications to work with Java, .NET, web services, REST-based APIs, and the AWS Cloud. We show this solution in section “Option 1: On-demand development for mainframe” below. Taking this one step further, developers gain speed when they perform unit tests within their local development environment and don’t have to wait for an outside test process. This is shown in section “Option 2: On-demand development and unit test for mainframe” below. Mainframe applications have evolved over many years or decades, and they are typically large and complex. Consequently, developers benefit from deepening their understanding of the application via analyzers, intelligent code analysis, and reuse of business rules and logic.

- Increase pipeline infrastructure agility – Rather than having fixed environments and resources limited by physical or capped mainframe machines, customers benefit from an infrastructure consumed on demand based on their projects, developers, and needs. We increase agility by creating or terminating development environments on demand, by automatically starting test servers during actual testing, by using environments in parallel for increased speed, and by leveraging managed services that minimize the administrative overhead. The other major increase in infrastructure agility comes from using automation wherever possible: for code promotion, for builds, for tests, and for deployment. Elasticity and automation are shown in the three technical options later in this post.

- Increase pipeline process agility – For this purpose, we first simplify processes and make them repeatable. Then, we integrate processes and tools with automation at every stage for speed and reliability. Next, we break down the silos with continuity of processes and improved visibility across stages. This cross-platform, cross-team, cross-environment, and cross-stage continuity is delivered by an automated Continuous Integration and Continuous Delivery (CI/CD) pipeline. This is shown in section “Option 3: CI/CD automation for mainframe” below. With such a pipeline, we can test early and often, increasing both speed and quality.

After analyzing the existing pipeline, we should continue with what is working well, and we should focus the improvements in areas that are causing most pain and where we can have a material impact. By starting small and doing incremental improvements, we can demonstrate the value of every change and at the same time, transform the organization with a culture change in the way software is delivered. This then provides a framework for a continual improvement process that demonstrates improvement at each stage. The change value has two dimensions: The value to the business, such as time-to-market, is important because it is the main driver for the transformation. The value to the IT organization, such as agile software development or measuring the speed and quality, brings efficiencies and synergies with other teams that are already more mature in these practices.

Practically and tactically speaking, we can increase the agilities previously described with the following technical solution options:

- On-demand development for mainframe – This option brings a modern IDE deployed on elastic cloud compute, providing both application and infrastructure agility.

- On-demand development and unit test for mainframe – This option adds local unit testing for even more application development agility.

- CI/CD automation for mainframe – This option includes the complete automated pipeline for development and testing, drastically increasing the processes’ agility and speed.

With these options, depending on maturity and goals, we synchronize the new pipeline source code with the mainframe after the development stage, after unit test, after System Integration Test (SIT), or after User Acceptance Test (UAT). This gives a realistic continuous improvement process with low-risk transitions.

These options add capabilities and pipelines to the existing mainframe development practices. On the mainframe side, they integrate as early as the mainframe source code management system. By doing so, we reduce risk and increase confidence, because all the current mainframe SDLC quality controls and gates are still used and not bypassed. We will now describe each solution option.

Option 1: On-demand development for mainframe

With this first solution option, application developers have a modern IDE deployed on elastic compute and available on demand. Whether there are one or multiple projects, and one or multiple developers per project, each developer has one IDE instance created on a pay-as-you-go model and terminated when the work is done. A virtually unlimited number of developer IDE instances can be started and then terminated. Also, with the modern IDE, developers benefit from the accelerators we mentioned previously for writing code faster.

Figure 1: On-demand development for mainframe with Micro Focus and AWS

For this option, developers use Micro Focus Enterprise Developer IDE installed on Amazon EC2 instances. This IDE relies on the popular Eclipse or Visual Studio. For providing more knowledge at the developer’s fingertips, we can also include Micro Focus Enterprise Analyzer in the same instance. Developers can pull and push code straight from the existing z/OS Source Code Management system via a Micro Focus code synchronization process. Once the code is pushed to the mainframe, all tests happen with the regular mainframe test processes and tools. Instances are started from an Amazon Machine Image (AMI) with Micro Focus Enterprise Developer pre-installed. This means new instances can be made available to new developers in minutes. From a performance perspective, AWS provides a wide selection of Amazon EC2 instance types accommodating various development needs for CPU, memory, or network speed. In addition, these instances can be deployed in AWS Regions, or AWS Local Zones, or AWS Outposts closest to the developer’s location. We see Micro Focus Enterprise Developer on Amazon EC2 in action later in section “Pipeline in action.”
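
As a minimal sketch of the on-demand model described above, the following Python functions launch one developer instance from an AMI and terminate it when the work is done. The AMI ID, instance type, and tag names are hypothetical placeholders, not values from this post. The `ec2` argument is assumed to be an AWS SDK for Python (boto3) EC2 client; it is passed in so the logic can be tried without an AWS account.

```python
"""Sketch: start and stop a per-developer IDE instance on demand.

Assumptions (not from the post): the AMI ID, instance type, and tag keys
are placeholders. `ec2` is expected to be a boto3 EC2 client, for example
boto3.client("ec2").
"""


def start_developer_instance(ec2, ami_id, developer, instance_type="m5.xlarge"):
    """Launch one instance from an AMI that has the IDE pre-installed."""
    response = ec2.run_instances(
        ImageId=ami_id,
        InstanceType=instance_type,  # placeholder size, pick per workload
        MinCount=1,
        MaxCount=1,
        TagSpecifications=[
            {
                "ResourceType": "instance",
                "Tags": [
                    {"Key": "Developer", "Value": developer},
                    {"Key": "Purpose", "Value": "mainframe-ide"},
                ],
            }
        ],
    )
    return response["Instances"][0]["InstanceId"]


def stop_developer_instance(ec2, instance_id):
    """Terminate the instance once the developer is done with the work."""
    ec2.terminate_instances(InstanceIds=[instance_id])
```

Tagging each instance with its developer is one possible way to track usage per person or project; other conventions would work equally well.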

Option 2: On-demand development and unit test for mainframe

In this second option, developers can perform local unit testing within their development environment. With local compilation, local datasets, and local unit tests, developers don’t have to wait for a test process on the mainframe side, but can immediately discover and locally debug errors. This aligns with a shift-left testing approach and the “test early and often” maxim, increasing quality and speed. Another benefit of local testing is that it removes contention for mainframe test resources, which happens when multiple testers try to execute their tests on the mainframe at the same time. Removing this contention provides greater efficiency in development and testing.

Figure 2: On-demand development and unit test with Micro Focus and AWS

Collocated with the IDE, each developer now has an additional Micro Focus Enterprise Test Server within the same Amazon EC2 instance. Code is developed, compiled, and unit tested quickly within the same environment. If unit tests are successful, developers can push the code to the z/OS Source Code Management system for the remaining z/OS test and deployment tasks. We see Micro Focus Enterprise Test Server on Amazon EC2 in action later in section “Pipeline in action.”

Option 3: CI/CD automation for mainframe

We now describe how to boost agility and release velocity by introducing automation within every stage and across every stage of the development and test pipeline. For this purpose, we use a Continuous Integration / Continuous Delivery (CI/CD) pipeline.

Continuous integration / continuous delivery

CI/CD enables application development teams to more frequently and reliably deliver code changes. It relies on continuous automation and monitoring throughout the lifecycle of applications, from integration and testing stages to delivery and deployment.

- With Continuous Integration, developers regularly merge their code changes into a central repository, after which automated builds and tests are run. The key goals of continuous integration are to find and address bugs quicker, improve software quality, and reduce the time it takes to validate and release new software updates.

- With Continuous Delivery, every code change is built, tested, and then pushed to a staging environment or production environment with speed.

Figure 3: CI/CD pipeline

The pipeline phases are the same regardless of the target production execution environment, whether it’s a mainframe or a cloud target. Introducing automated tooling around the release process mitigates risks, allowing synchronized releases to be distributed in mainframe and cloud environments. This brings with it accelerated time to value by increasing the number of releases, therefore fulfilling business requirements.

It is important to monitor the pipeline for iterating on improvements. We can measure velocity with frequency and speed of deployments and build verifications. We can measure security with security test pass rate. We can measure quality with test pass rate, deployment success rate, and code analysis ratings. With the use of code quality and code coverage metrics, we see a measurable increase in application quality. This brings dramatic improvements in testing speed and code quality, while considerably reducing the cost of testing.
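
To make these measurements concrete, here is a small sketch that derives deployment frequency, test pass rate, and deployment success rate from a list of pipeline run records. The record fields are hypothetical; a real pipeline would export comparable data from its continuous integration tool and test reports.

```python
"""Sketch: compute pipeline velocity and quality metrics from run records.

Assumption: the PipelineRun fields are hypothetical and only illustrate
the kind of data a pipeline could export.
"""
from dataclasses import dataclass
from datetime import date


@dataclass
class PipelineRun:
    day: date               # date the run finished
    tests_run: int
    tests_passed: int
    deployed: bool          # True if the run reached the deployment stage
    deploy_succeeded: bool  # meaningful only when deployed is True


def summarize(runs, period_days):
    """Return deployment frequency, test pass rate, and deployment success rate."""
    deployments = [r for r in runs if r.deployed]
    total_tests = sum(r.tests_run for r in runs)
    passed_tests = sum(r.tests_passed for r in runs)
    return {
        "deployments_per_day": len(deployments) / period_days,
        "test_pass_rate": passed_tests / total_tests if total_tests else 0.0,
        "deploy_success_rate": (
            sum(r.deploy_succeeded for r in deployments) / len(deployments)
            if deployments
            else 0.0
        ),
    }
```

Tracking the same ratios over successive periods is what shows whether a pipeline change actually improved velocity or quality.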

Modular tool chain

At every stage of the pipeline, there are multiple tools available. The pipeline is meant to be flexible and is designed to be modular. There is no one-size-fits-all, and it is often a good idea to keep or reuse the proven tools from existing pipelines. This minimizes risks and facilitates transitions. Nonetheless, for mainframe development and testing, we must make sure the tools can support development, compilation, build, and test of typical COBOL or PL/1 applications and their communication protocols and interfaces, which may include 3270 screens.

Figure 4: CI/CD pipeline and tools for mainframe applications

Figure 4 shows some example tools, software, or platforms that can be used at every stage of the pipeline. We focus here on tools supporting a pipeline for COBOL or PL/1 applications. Depending on the maturity of the existing pipeline(s), we could pick one or multiple tools suggested in Figure 4.

CI/CD with Micro Focus and AWS

With a CI/CD pipeline, we increase speed, agility, quality, and cost-efficiency with as much automation as possible. We provide automation within each stage, across stages, and for the supporting infrastructure. Automation within the test stage is provided by the testing tool, which executes the many test cases and reports on results. Automation across stages is provided by the continuous integration tool, which coordinates the tasks of each stage. Automation for the supporting infrastructure is provided by fully managed services, which have no software to install and no operational administration tasks. Such fully managed services are highly available and scale automatically following a pay-as-you-go model. Another example of infrastructure automation is the ability to automatically provision and terminate build and test environments on demand.

Figure 5: CI/CD pipeline for mainframe applications with Micro Focus and AWS

Developers use the modern IDE, which we previously described. It provides the elasticity of the Amazon EC2 instances combined with the smart coding features of Micro Focus Enterprise Developer, and the speed of local unit testing with Micro Focus Enterprise Test Server. Initially, the source code is pulled from the mainframe source code repository, which stays as the master source code repository. For this pipeline, multiple teams and developers collaborate and store their source code in AWS CodeCommit, which is a fully managed source control service that hosts secure Git-based repositories. AWS CodePipeline, also fully managed, coordinates and automates the build, test, and deploy phases of the pipeline every time there is a source code change. AWS CodeBuild, which is fully managed, automatically provisions the build environments that contain Micro Focus Enterprise Developer Build tools. For testing, Micro Focus UFT (Unified Functional Testing) automates the test cases’ execution against the applications deployed on Micro Focus Enterprise Test Server. AWS CodeDeploy, also fully managed, automates the deployment of code on test servers or to the next code repository on the mainframe. AWS Lambda, which is fully managed, allows running any logic, such as triggering test executions or transferring source code files with the mainframe. This pipeline allows multiple teams and developers to write, update, and test code that is eventually merged with the existing mainframe master source code repository.
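
The stage sequence described above can be sketched as a pipeline definition built in Python. Every name here (repository, build project, application, Lambda functions, stage names) is a hypothetical placeholder, and the dictionary layout is intended to follow the pipeline structure used by the CodePipeline CreatePipeline API; it should be verified against the current AWS documentation before use.

```python
"""Sketch: a CodePipeline definition mirroring the stages described above.

Assumptions: all resource names are hypothetical placeholders. The layout
is intended to match the CodePipeline pipeline structure, but check the
AWS documentation before relying on it.
"""


def action(name, category, provider, configuration, inputs=(), outputs=()):
    """Build one pipeline action that uses an AWS-owned action type."""
    return {
        "name": name,
        "actionTypeId": {
            "category": category,
            "owner": "AWS",
            "provider": provider,
            "version": "1",
        },
        "configuration": configuration,
        "inputArtifacts": [{"name": n} for n in inputs],
        "outputArtifacts": [{"name": n} for n in outputs],
    }


def build_pipeline_definition(role_arn, artifact_bucket):
    """Return a pipeline dict: source, build, deploy to test, test, sync back."""
    stages = [
        ("Source", action(
            "PullFromCodeCommit", "Source", "CodeCommit",
            {"RepositoryName": "mainframe-app", "BranchName": "main",
             "PollForSourceChanges": "false"},
            outputs=["Source"])),
        ("Build", action(
            "CompileAndLink", "Build", "CodeBuild",
            {"ProjectName": "mainframe-app-build"},
            inputs=["Source"], outputs=["Compiled"])),
        ("DeployToTestServer", action(
            "DeployCompiledCode", "Deploy", "CodeDeploy",
            {"ApplicationName": "mainframe-app",
             "DeploymentGroupName": "test-servers"},
            inputs=["Compiled"])),
        ("Test", action(
            "TriggerTests", "Invoke", "Lambda",
            {"FunctionName": "trigger-mainframe-tests"})),
        ("SyncToMainframe", action(
            "SendSourceToMainframeSCM", "Invoke", "Lambda",
            {"FunctionName": "sync-source-to-mainframe"})),
    ]
    return {
        "name": "mainframe-app-pipeline",
        "roleArn": role_arn,
        "artifactStore": {"type": "S3", "location": artifact_bucket},
        "stages": [{"name": n, "actions": [a]} for n, a in stages],
    }
```

With boto3, a dictionary like this could be passed to `boto3.client("codepipeline").create_pipeline(pipeline=definition)`. Keeping the definition in code is one way to version the pipeline alongside the application source.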

Pipeline in action

Let’s now look into how the pipeline components interact with one another and how a code change moves from development, to source control, to build, to test, and to the production environment on the mainframe.

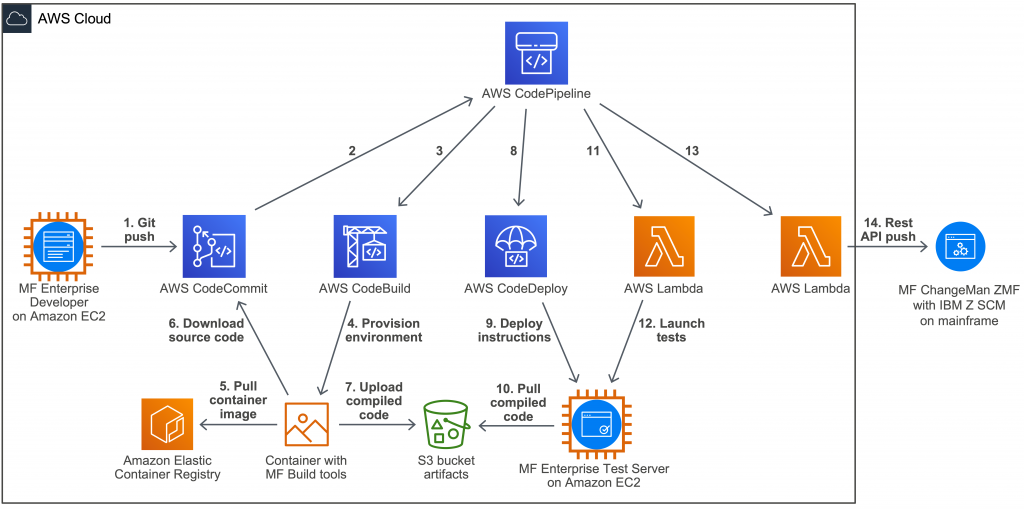

Figure 6: CI/CD pipeline for mainframe applications in action

The CI/CD pipeline shown in Figure 6 executes the following steps:

- A developer makes changes to the source code and commits the changes to the local Git repository. These source code changes are pushed to the upstream repository hosted by AWS CodeCommit.

- The source code changes in CodeCommit trigger an Amazon CloudWatch event, which starts the pipeline in AWS CodePipeline.

- The pipeline calls AWS CodeBuild in order to start the build phase.

- AWS CodeBuild provisions a build environment in a container with Micro Focus Enterprise Developer Build tools.

- This container is based on a container image pulled from Amazon Elastic Container Registry (Amazon ECR).

- Once the container is provisioned, the build environment downloads the source code from AWS CodeCommit. The source code is compiled and linked.

- Then, the compiled code is uploaded to an Amazon S3 bucket that stores the generated build artifacts.

- With the build phase complete, the pipeline then calls AWS CodeDeploy as part of the test phase.

- AWS CodeDeploy sends code deployment instructions to the CodeDeploy agent residing on the Amazon EC2 instance hosting Micro Focus Enterprise Test Server.

- The AWS CodeDeploy agent pulls the compiled code from the S3 artifact bucket, deploys it to the proper destination folders, and restarts Micro Focus Enterprise Test Server.

- Once the code is deployed, the pipeline calls AWS Lambda to start the tests.

- AWS Lambda sends the test command to the Amazon EC2 test instance via AWS Systems Manager (SSM). The test command triggers a batch test script on the test instance. The batch test script calls a Visual Basic script that can trigger either a Rumba or a UFT automation script. The test script executes test cases against the modified compiled code and Micro Focus Enterprise Test Server, verifying that the new code is operational. The result of the tests is sent back to the pipeline in AWS CodePipeline.

- If tests are successful, the pipeline calls AWS Lambda in order to send the source code back to the mainframe Source Code Management (SCM) system.

- The AWS Lambda function retrieves the code changes from AWS CodeCommit, and sends the modified files to the destination mainframe code repository such as Micro Focus ChangeMan ZMF.
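
The test-trigger step above, where AWS Lambda sends a command through AWS Systems Manager and reports the outcome to the pipeline, could look roughly like the following sketch. It assumes the instance ID and test script path arrive as JSON in the action's UserParameters (hypothetical keys `instance_id` and `test_script`), and that the test instance runs Windows, which is inferred from the batch script mentioned above. The clients are passed in so the flow can be exercised without AWS; inside Lambda they would be `boto3.client("ssm")` and `boto3.client("codepipeline")`.

```python
"""Sketch: Lambda-style logic that triggers tests through Systems Manager.

Assumptions: the UserParameters keys are hypothetical, and the test
instance is assumed to run Windows, hence AWS-RunPowerShellScript.
"""
import json
import time

TERMINAL_STATES = {"Success", "Failed", "Cancelled", "TimedOut"}


def run_tests(event, ssm, codepipeline, poll_seconds=10, max_polls=60):
    """Send the test command, wait for it to finish, report to the pipeline."""
    job = event["CodePipeline.job"]
    params = json.loads(
        job["data"]["actionConfiguration"]["configuration"]["UserParameters"]
    )
    sent = ssm.send_command(
        InstanceIds=[params["instance_id"]],
        DocumentName="AWS-RunPowerShellScript",
        Parameters={"commands": [params["test_script"]]},
    )
    command_id = sent["Command"]["CommandId"]

    status = "Pending"
    for _ in range(max_polls):
        # Wait first: the invocation may not be visible right after sending.
        time.sleep(poll_seconds)
        result = ssm.get_command_invocation(
            CommandId=command_id, InstanceId=params["instance_id"]
        )
        status = result["Status"]
        if status in TERMINAL_STATES:
            break

    if status == "Success":
        codepipeline.put_job_success_result(jobId=job["id"])
    else:
        codepipeline.put_job_failure_result(
            jobId=job["id"],
            failureDetails={
                "type": "JobFailed",
                "message": f"Tests ended with status {status}",
            },
        )
    return status
```

Polling inside the function keeps the sketch short, but it only suits test suites that finish within the Lambda time limit. Longer suites would likely need a different design, such as returning early and resuming the job with a continuation token.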

To learn more about this pipeline, check back on this blog and the GitHub repository where we will describe more details and the key configuration aspects of this pipeline.

Additional capabilities for a mainframe pipeline

The pipeline presented in this post shows a minimum set of tools from development, to build, test, and deployment. Again, the pipeline is modular. This means we can enhance it with complementary tools. As examples, we mention the following beneficial tools in the context of mainframe code development and test:

- Code Analyzer – Such a tool provides application intelligence, knowledge, and analysis so that developers can better understand millions of lines of code. It is common for mainframe applications to grow to large numbers of lines of code within a complex application portfolio. For example, Micro Focus Enterprise Analyzer can sit next to the IDE as a knowledge repository and assist the developer in identifying relevant business logic and rules, reducing reliance on subject-matter experts while increasing the understanding of the impact of code changes.

- Code Quality Checker – With such capability, we can automatically review the quality of the static code and get notifications or rejections when the code does not meet a desired quality bar for coding standards or security. Fortify Static Code Analyzer provides automated static code analysis which helps developers eliminate vulnerabilities and build secure software.

- Application Lifecycle Manager – This enables teams to collaborate, manage the CI/CD pipeline, and visualize the impact of changes. It can provide real-time visibility into the status of the CI/CD pipeline with end-to-end traceability, identifying the root cause of failures and tracking commits associated with specific user stories and defects. For example, Micro Focus ALM Octane provides application lifecycle analytics, insights, traceability, and automation for quality delivery.

- Elastic COBOL and PL/1 execution environment – Such capability allows offloading or extending of the existing mainframe execution environment. If there is a need for more processing capacity, or presence in a different geographical Region, or elastic compute to absorb a spike in demand, or a need for cost reduction, the elastic execution environment provides such flexibility. For example, Micro Focus Enterprise Server on AWS meets or exceeds the quality of service required by typical mainframe workloads.
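
The rejection behavior mentioned in the Code Quality Checker item above can be illustrated with a small quality gate. This is a sketch under stated assumptions: findings are plain dictionaries with a `severity` key, and the thresholds are hypothetical. It does not represent the output format or API of any specific analyzer.

```python
"""Sketch: a quality gate that rejects a build when findings exceed a bar.

Assumption: the findings format and the thresholds are hypothetical and
not tied to any specific static analysis product.
"""
from collections import Counter

# Maximum number of findings tolerated per severity (hypothetical values).
DEFAULT_LIMITS = {"critical": 0, "high": 0, "medium": 5}


def evaluate_quality_gate(findings, limits=DEFAULT_LIMITS):
    """Return (passed, violations); violations maps severity to its count."""
    counts = Counter(f["severity"] for f in findings)
    violations = {
        severity: counts[severity]
        for severity, limit in limits.items()
        if counts[severity] > limit
    }
    return (not violations, violations)
```

A pipeline stage could call such a function after the analysis step and fail the run when `passed` is false, which keeps code below the quality bar from reaching later stages.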

Go build

Introducing agility into mainframe development and test can follow an incremental and iterative approach that minimizes the risks for existing mainframe development and deployment practices. Starting from a modern IDE, then adding local testing, and finally leveraging a CI/CD pipeline, we can increase development speed drastically. Micro Focus solutions bring enterprise development capabilities for many stages of COBOL and PL/1 pipelines. AWS services bring extensive automation and elasticity to accelerate the pipeline in a cost-optimized manner. The combined solution increases the agility, velocity, and quality of mainframe application development.

You are invited to go build and experience the value of such a pipeline. To get started with a pipeline similar to the one presented in this post, you can access and reuse the pipeline configuration artifacts and details available in GitHub.