AWS for Industries

Running Ad Tech Workloads on AWS with Aerospike at Petabyte Scale

Introduction

Few industries test the limits of database throughput and latency like advertising technology (ad tech). Industry leaders like The Trade Desk run workloads with tens of millions of read and write events per second and single-digit millisecond response times. Growing media consumption in areas like connected TV, over-the-top (OTT) streaming, and retail means that ad tech businesses continue to see request volumes and throughput requirements grow, which makes performance, maintenance, and cost optimization significant technological challenges. A broad spectrum of open-source and commercial data storage and caching solutions is available for such workloads, but selecting one that scales to petabyte-level processing cost-effectively is not trivial.

Today we’re excited to announce a new industry solution for ad tech customers running Aerospike, a real-time NoSQL data platform that powers ultra-low latency applications with predictable sub-millisecond performance at petabyte scale. Customers can use the solution to accelerate deployments of Aerospike using an Amazon Web Services (AWS) Quick Start guide, reference architectures, customer examples, and prescriptive benchmarks for running Aerospike on Amazon Elastic Compute Cloud (Amazon EC2). This blog post gives an overview of the solution and highlights some of the performance benchmarks and best practices we recommend for running Aerospike on AWS, specifically for ad tech workloads.

About Aerospike

The Aerospike Real-Time Data Platform enables customers to act in milliseconds across billions of transactions by exploiting hardware optimizations, including multi-core processors with non-uniform memory access (NUMA), Non-Volatile Memory Express (NVMe) flash drives, and network Application Device Queues (ADQ). On AWS, Aerospike uses caching on ephemeral devices and backs up data on Amazon Elastic Block Store (Amazon EBS). Coupled with a multithreaded architecture, these features give ad tech customers distinct advantages when running high-write-volume, low-latency Aerospike workloads on AWS. For example, Aerospike automatically distributes data evenly across its shared-nothing clusters, dynamically rebalances workloads, and accommodates software upgrades and most cluster changes without downtime.

Running Aerospike on Amazon EC2

A single ad tech company may buy, sell, or serve hundreds of billions or trillions of ads per day, pushing the limits of performance on a second-by-second basis. Under the hood, this level of throughput requires extreme capacity and deliberate measures to optimize costs. Using Amazon EC2, customers can rapidly scale up depending on demand. To reduce costs, they can also use capabilities such as Amazon EC2 Auto Scaling, which lets users automatically add or remove Amazon EC2 instances according to conditions they define, and Amazon EC2 Spot Instances, which let users take advantage of unused Amazon EC2 capacity in the cloud. Amazon EC2 also offers a broad and deep choice of processor, storage, networking, operating system, and purchase model, which makes AWS well suited to ad tech firms looking to pair Aerospike with high-performing, optimized instance types. For example, we consistently see ad tech customers use storage- and memory-optimized instance families such as I3/I3en, D3/D3en, M5d, and R5/R6 as a best practice for running Aerospike workloads requiring high, sequential read and write access to very large datasets on local storage.

Aerospike Architectural Options

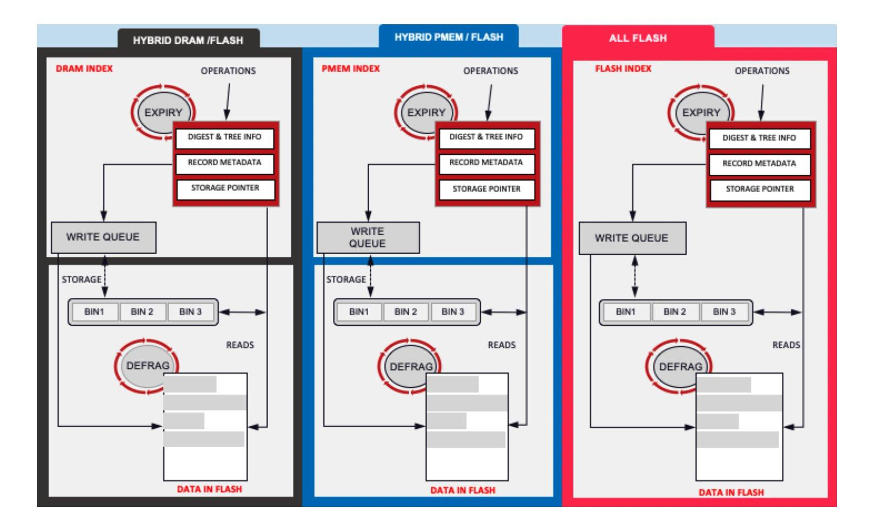

Aerospike can be deployed in several configurations. In the all-dynamic random-access memory (DRAM) configuration, the index and user data reside in traditional memory, and data is persisted to disk storage as a backup. Similarly, all-persistent memory (PMEM), all-flash, and hybrid configurations (where the index can reside in PMEM or DRAM and data can reside in flash memory) are possible, letting customers choose the right configuration based on business needs and application requirements.

Benchmark Configuration and Setup

Together with AWS and Intel, Aerospike set up a 20-node cluster on AWS to benchmark performance. Each server node ran on an i3en.24xlarge instance featuring 768 GB of DRAM and 8 x 7,500 GB NVMe solid state drives (SSDs). Clients were hosted on 40 c5n.9xlarge instances featuring 96 GB of DRAM each and backed by EBS volumes. Load was generated on the client nodes using the Aerospike C client. Before performing the benchmark tests, Aerospike primed the environment by running a generic read/write workload for several days. This ensured that data was suitably randomized and that periodic background jobs like defragmentation were triggered, as they would be in a production environment.
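To make the scale of this setup concrete, the per-node figures above can be multiplied out into cluster totals. The following is a minimal sketch that uses only the numbers quoted in this section; the function and constant names are illustrative, and the totals are raw decimal capacities before any file system, replication, or defragmentation overhead.

```python
# Figures quoted in the benchmark setup above.
SERVER_NODES = 20
DRAM_GB_PER_NODE = 768
NVME_DRIVES_PER_NODE = 8
NVME_GB_PER_DRIVE = 7_500

CLIENT_NODES = 40
CLIENT_DRAM_GB_PER_NODE = 96


def cluster_totals() -> dict:
    """Return aggregate raw capacity in GB (decimal units, no overhead applied)."""
    nvme_gb_per_node = NVME_DRIVES_PER_NODE * NVME_GB_PER_DRIVE
    return {
        "nvme_gb_per_node": nvme_gb_per_node,
        "cluster_nvme_gb": SERVER_NODES * nvme_gb_per_node,
        "cluster_dram_gb": SERVER_NODES * DRAM_GB_PER_NODE,
        "client_dram_gb": CLIENT_NODES * CLIENT_DRAM_GB_PER_NODE,
    }


if __name__ == "__main__":
    for name, value in cluster_totals().items():
        print(f"{name}: {value:,} GB")
```

Under these figures, the 20 server nodes provide 1,200,000 GB (1.2 PB) of raw NVMe capacity and 15,360 GB of DRAM.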

The Aerospike server was configured with compression that yielded a 75 percent reduction in size (a compression factor of 4) for the user profile database, so it stored 500 TB of compressed user data (250 TB of unique user data and 250 TB of replicated data). The campaign database also used a compression factor of 4, so it managed 750 GB of compressed user data (375 GB of unique user data and 375 GB of replicated data).
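The relationship between a 75 percent size reduction and a compression factor of 4, and what it implies for the uncompressed data volume, can be worked through in a few lines. This is a sketch built only on the figures above. It assumes the equal unique and replicated sizes mean one replica copy of each record, and the helper names are illustrative.

```python
from fractions import Fraction


def compression_factor(reduction_percent: int) -> Fraction:
    """Convert a percentage size reduction into an uncompressed/compressed ratio."""
    return Fraction(100, 100 - reduction_percent)


def uncompressed_size(compressed_size: int, factor: Fraction) -> Fraction:
    """Size the data would occupy without compression, in the same unit."""
    return compressed_size * factor


FACTOR = compression_factor(75)  # 75 percent reduction, as quoted above

# User profile database: 250 TB unique + 250 TB replicated = 500 TB stored.
PROFILE_UNIQUE_TB = 250
PROFILE_STORED_TB = 500

# Campaign database: 375 GB unique + 375 GB replicated = 750 GB stored.
CAMPAIGN_UNIQUE_GB = 375
CAMPAIGN_STORED_GB = 750

if __name__ == "__main__":
    print("compression factor:", FACTOR)
    print("profile unique, uncompressed (TB):",
          uncompressed_size(PROFILE_UNIQUE_TB, FACTOR))
    print("profile total, uncompressed (TB):",
          uncompressed_size(PROFILE_STORED_TB, FACTOR))
    print("campaign unique, uncompressed (GB):",
          uncompressed_size(CAMPAIGN_UNIQUE_GB, FACTOR))
```

On these assumptions, the 250 TB of unique compressed user profile data corresponds to about 1 PB uncompressed, or 2 PB including the replica copy.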

Benchmark Results and Total Cost of Ownership (TCO) Analysis

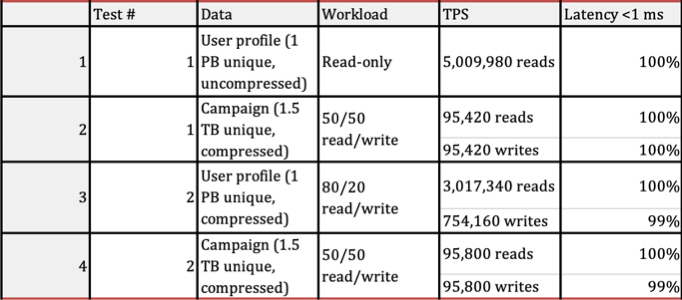

Aerospike ran two sets of tests using the workloads described earlier to measure transaction throughput with sub-millisecond server-side latencies. Each test ran for 4 hours and featured concurrent operations spanning all keys in the user profile and campaign databases.

Table: Benchmark results. User profile and campaign workloads were run concurrently.

The benchmark results show that, in Test 1, Aerospike processed more than 5 million read-only transactions per second (TPS) with sub-millisecond latencies for user profile applications while also processing nearly 200,000 read/write TPS with sub-millisecond latencies for campaign applications. Test 2 featured an 80/20 read/write mix of operations run against the user profile database and a 50/50 read/write mix of operations run concurrently against the campaign database. Under those conditions, Aerospike delivered more than 3.7 million TPS for user profile applications and almost 200,000 read/write TPS for campaign applications, nearly all with sub-millisecond latencies. This level of performance was achieved on an Aerospike cluster on AWS with only 20 Amazon EC2 instances.
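Dividing the cluster-wide throughput by the 20 server instances gives a rough sense of the load each node carried. The sketch below uses the rounded figures quoted above ("more than" and "nearly" are treated as the stated values) and assumes traffic is spread evenly across nodes, so the results are approximations rather than measured per-node numbers.

```python
SERVER_NODES = 20

# Approximate cluster-wide TPS figures quoted above.
RESULTS = {
    "test_1": {"user_profile": 5_000_000, "campaign": 200_000},
    "test_2": {"user_profile": 3_700_000, "campaign": 200_000},
}


def per_node_tps(cluster_tps: int, nodes: int = SERVER_NODES) -> int:
    """Average TPS per node, assuming an even spread of load (an assumption)."""
    return cluster_tps // nodes


def summarize() -> dict:
    """Combine both concurrent workloads per test and derive the per-node average."""
    summary = {}
    for test, workloads in RESULTS.items():
        total = sum(workloads.values())
        summary[test] = {
            "cluster_tps": total,
            "per_node_tps": per_node_tps(total),
        }
    return summary


if __name__ == "__main__":
    for test, figures in summarize().items():
        print(test, figures)
```

With these inputs, each node handles on the order of 260,000 TPS in Test 1 and 195,000 TPS in Test 2 across both workloads.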

Aerospike’s benchmark was conducted on Amazon EC2 i3en.24xlarge server nodes, beginning with a 20-node cluster in Year 1; based on up-front AWS annual fees for the compute instances, this configuration resulted in just over $1.2 million in infrastructure costs for the year. By contrast, the Apache Cassandra configuration used for comparison was sized at a 514-node cluster of i3.4xlarge servers in Year 1, which yielded an infrastructure cost of nearly $3.58 million for that year. Projections for Year 2 and Year 3 assumed a 15% annual rate of data growth. Under those projections, Aerospike’s expenses grow much more slowly than Cassandra’s, with an estimated $11.2 million in savings over a 3-year period. Note that the cost estimations are based on On-Demand pricing of Amazon EC2 instances, which lets users pay for compute capacity by the hour or second (minimum of 60 seconds) with no long-term commitments. Customers can also take advantage of AWS Savings Plans, a flexible pricing model that offers lower prices than On-Demand pricing and can reduce the operational costs of Aerospike further, by up to 72% depending on the Amazon EC2 instance types chosen to host the Aerospike server nodes.
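The Year 1 figures above can be compared directly, and the 15% growth assumption can be illustrated with a simple node-count projection. The sketch below is illustrative only: it assumes node count scales in proportion to data volume and rounds up to whole nodes, which is not necessarily how the study sized Year 2 and Year 3, and it does not attempt to reproduce the study's 3-year cost totals. The dollar inputs are the rounded values quoted above.

```python
YEAR1 = {
    "aerospike": {"nodes": 20, "cost_usd": 1_200_000},   # "just over $1.2 million"
    "cassandra": {"nodes": 514, "cost_usd": 3_580_000},  # "nearly $3.58 million"
}
GROWTH_PERCENT = 15  # annual data growth assumed in the projections


def ceil_div(numerator: int, denominator: int) -> int:
    """Integer ceiling division, avoiding floating-point rounding."""
    return -(-numerator // denominator)


def projected_nodes(year1_nodes: int, year: int,
                    growth_percent: int = GROWTH_PERCENT) -> int:
    """Nodes needed in a given year (1-based) if nodes scale with data volume.

    Proportional scaling is an assumption made for this sketch.
    """
    periods = year - 1
    return ceil_div(year1_nodes * (100 + growth_percent) ** periods,
                    100 ** periods)


def year1_cost_gap() -> int:
    """Approximate Year 1 infrastructure cost difference in USD."""
    return YEAR1["cassandra"]["cost_usd"] - YEAR1["aerospike"]["cost_usd"]


if __name__ == "__main__":
    print(f"Year 1 cost gap: ${year1_cost_gap():,}")
    for name, figures in YEAR1.items():
        counts = [projected_nodes(figures["nodes"], y) for y in (1, 2, 3)]
        print(name, "projected nodes by year:", counts)
```

Under these assumptions, the Year 1 gap is roughly $2.38 million, and compounding growth widens the absolute difference in node counts each year.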

Customers such as The Trade Desk have noted even greater performance running Aerospike, with write volumes as high as 32 million key-value pairs (tuples) per second on larger clusters of 150+ m5d.metal instances. In a 2020 re:Invent session, Matt Cochran, director of engineering at The Trade Desk, noted, “The elastic nature of Amazon EC2 got us to full scale pretty quickly and let us do a full-scale prototype. We were able to distribute data around the world and actually make sure that our problem was solved. The diversity of instance types we found on Amazon [EC2] was super helpful in this case too.” Cochran also recommended testing instance types per workload using the Aerospike Certification Tool (ACT) to select the right instance for the job. “Using ACT is absolutely key. You need to run this to understand what the implications are on your system, and it will prove to you what you need.”

Summary

As ad tech continues to push the limits of petabyte-scale and millisecond-latency workloads, industry customers can use the new solution for Aerospike on AWS to accelerate deployments and optimize high-throughput, low-latency workloads running on Amazon EC2. You can review ad tech customer examples and reference architectures here, or get started using Aerospike on AWS through the AWS Quick Start deployment guide for Aerospike Database Enterprise Edition. Customers can also directly subscribe to the Aerospike Database Enterprise Edition via AWS Marketplace.

Check out the full results from Aerospike’s petabyte-scale benchmark study using AWS and Intel on their website.