AWS for Industries

Using Machine Learning for Programmatic Product Placement in TV Advertising

TripleLift is a technology company rooted at the intersection of creative and media. The company’s real-time advertising platform inserts products and brands natively into media across desktop, mobile, TV, and streaming video.

Research shows that native advertising that matches the look and feel of media experiences drives improved consumer recollection and impact. For example, product integrations showed a 50% increase in brand recall when paired with 30-second ad spots, according to a 2020 study on native advertising by MediaScience Audience Research Labs and TripleLift.

This post covers how TripleLift used AWS to create four new types of native ad products for streaming TV and OTT video. Each product fits seamlessly into the video experience, can be bought in real time, and is delivered with the same tracking capabilities as standard digital ads. We’ll show how TripleLift uses Amazon Rekognition and Amazon SageMaker to perform video analysis and find available surface areas for ad placements. We’ll also walk you through how TripleLift used Amazon SageMaker to train and deploy custom models for finding placements.

Video Analysis Workflow

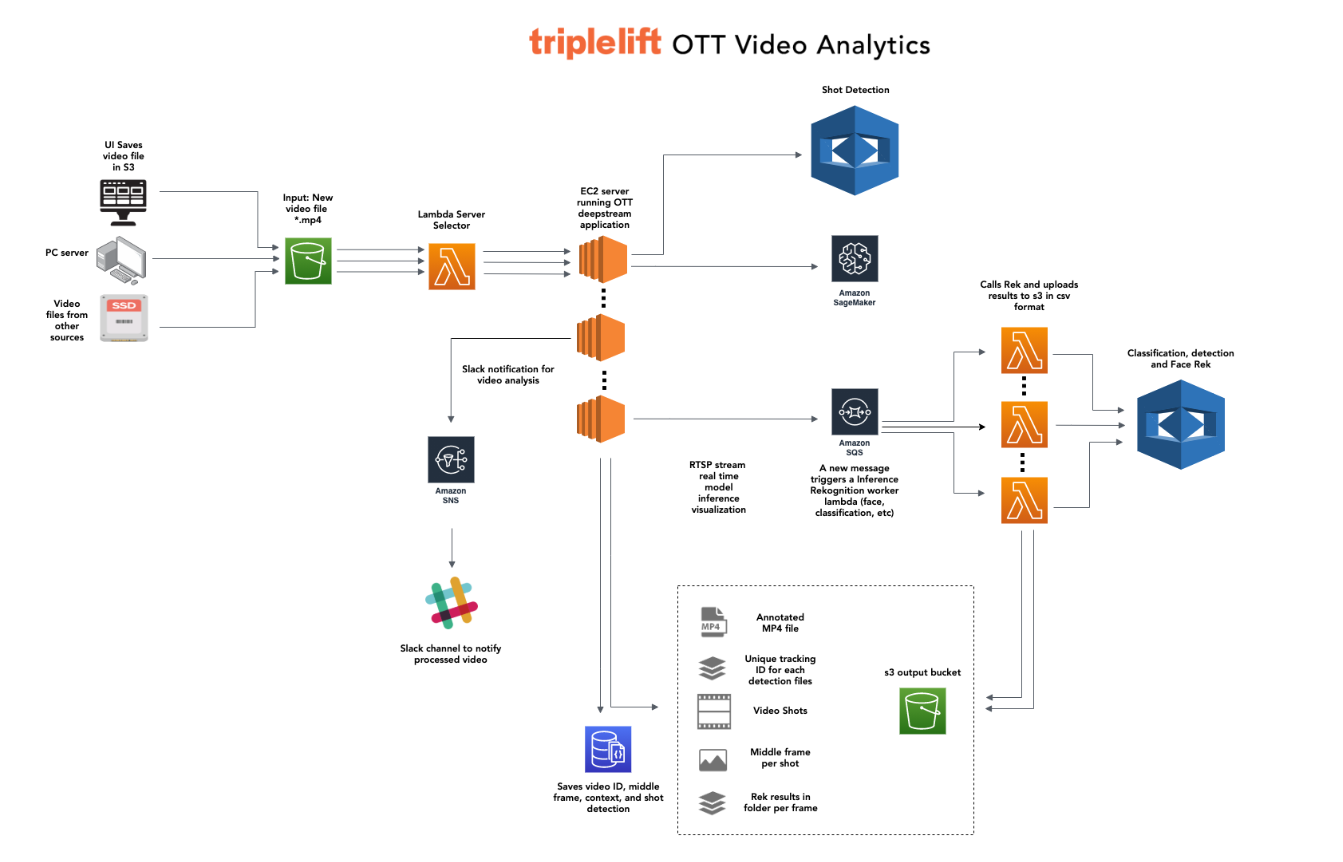

TripleLift’s video analysis workflow is triggered when we onboard publisher video content by uploading a video file into an S3 bucket (shown on the left side of the figure above). This kicks off the following steps:

- A Lambda function triggers when a file is uploaded into the S3 bucket. The Lambda function starts our analysis pipeline by triggering an EC2 instance running the TripleLift OTT application from a fleet of instances. This Lambda function also serves to load balance and keep a record of the jobs assigned to each server machine.

- After the EC2 instance receives the video details from the Lambda function, it retrieves the video from the S3 bucket and calls the Amazon Rekognition Shot Detection API to break the video up into individual shots. Shots are the non-fungible currency of our Brand and Product Insertion formats, in which brands and products appear in any number of shots.

- Once the Shot Detection results are available, the server creates a new video file for each shot within the TV episode.

- Using Amazon Rekognition video analysis (media asset search and indexing features), we analyze shots for various contextual elements that could be used to exclude candidate shots. For example, we exclude shots containing imagery that brands may want to avoid, such as guns or violence.

- We use Amazon SageMaker to run a custom OTT DeepStream model that analyzes the video for flat surfaces and selects surfaces that are appropriate for product and brand insertions (referred to as “placements”). SageMaker provides an annotated video file and a unique tracking ID for each detection, which are then used to narrow the search space for viable placement candidates and to compare candidates against previously successful placements. The files are then pushed to an S3 output bucket.

- In parallel with the SageMaker models, the server also pushes some video frames to an SQS queue that triggers a Lambda function for Amazon Rekognition Image inference to analyze the image for additional points of classification, detection, face recognition and contextual information.

- The output of Amazon Rekognition is pushed to the S3 output bucket by the Lambda function. All of the output data is written to a database for later access via an interface we provide to our creative and business development teams.
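To make the first few steps above more concrete, here is a minimal Python sketch of the data handling involved. It is an illustration under stated assumptions, not TripleLift's implementation. The input shapes follow the standard S3 event notification payload and the `Segments` list returned by Amazon Rekognition's `GetSegmentDetection` operation. Everything else is hypothetical: the function names, the `Shot` type, the `(timestamp_ms, label, confidence)` detection tuples, the confidence threshold of 80, and the least-loaded assignment policy.

```python
from __future__ import annotations

from dataclasses import dataclass
from urllib.parse import unquote_plus


@dataclass(frozen=True)
class Shot:
    """One shot of an episode (hypothetical helper type)."""
    index: int
    start_ms: int
    end_ms: int


def uploaded_objects(s3_event: dict) -> list[tuple[str, str]]:
    """Return (bucket, key) pairs from an S3 event notification payload."""
    pairs = []
    for record in s3_event.get("Records", []):
        s3 = record["s3"]
        # Object keys in S3 notifications are URL-encoded (spaces arrive as '+').
        pairs.append((s3["bucket"]["name"], unquote_plus(s3["object"]["key"])))
    return pairs


def assign_job(jobs_by_instance: dict[str, list[str]], video_key: str) -> str:
    """Record the job on the instance with the fewest jobs (assumed policy)."""
    instance_id = min(
        jobs_by_instance, key=lambda i: (len(jobs_by_instance[i]), i)
    )
    jobs_by_instance[instance_id].append(video_key)
    return instance_id


def shots_from_segments(response: dict) -> list[Shot]:
    """Keep only SHOT segments from a GetSegmentDetection-style response."""
    shots = []
    for seg in response.get("Segments", []):
        if seg.get("Type") != "SHOT":
            continue  # skip TECHNICAL_CUE segments
        shots.append(
            Shot(
                index=seg["ShotSegment"]["Index"],
                start_ms=seg["StartTimestampMillis"],
                end_ms=seg["EndTimestampMillis"],
            )
        )
    return shots


def exclude_flagged(
    shots: list[Shot],
    detections: list[tuple[int, str, float]],
    blocked: set[str],
    min_confidence: float = 80.0,  # assumed threshold
) -> list[Shot]:
    """Drop shots that contain a blocked label at or above min_confidence."""
    blocked_lower = {name.lower() for name in blocked}
    flagged_times = [
        t for t, name, conf in detections
        if name.lower() in blocked_lower and conf >= min_confidence
    ]
    return [
        s for s in shots
        if not any(s.start_ms <= t <= s.end_ms for t in flagged_times)
    ]
```

In a real pipeline the `response` dictionary would come from the Rekognition client, and the detections would be flattened from whichever Rekognition video analysis results are in use. Keeping these helpers free of AWS calls makes the filtering logic easy to unit test with canned responses.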

Inserting ads into shots and video streams in real time

After our machine learning pipeline identifies appropriate locations to place native ads, TripleLift’s creative teams go through a compositing process to insert brand images and ad units into frames. The team uses EC2 instances running VFX software such as Nuke to perform the compositing. Once brand assets have been composited into a shot, they can be swapped for other brand assets, which is what makes this form of advertising programmatic.

The final step to deliver the ad units to a viewer requires server-side ad insertion (SSAI) technology. When an advertiser buys an ad unit through TripleLift’s real-time advertising platform, TripleLift’s servers call AWS Elemental MediaTailor or another SSAI service to request insertion. The ad-enhanced shot—which will include the ad creative natively composited into it—will be injected into the video stream and seen by the end user.

Results

We’ve seen several noteworthy results from developing this pipeline and these new products with AWS. At a business level, we’ve been able to expand into a new market and invent a never-before-seen type of ad format that’s seamless inside video and has all the measurement capabilities of a display ad. With tools like SageMaker and Amazon Rekognition, our team can also process videos faster with fewer people—and we’ve been able to expand our capacity to provide meaningful ad placements more quickly than before. Overall, AWS allows us flexibility, control and convenience in building, deploying and maintaining our Content Analysis Workflow. Over the next year, we look forward to continuing to add to this pipeline with new analysis modules that build on our existing ones.

To learn more about this workload, check out TripleLift’s AWS on Air presentation from re:Invent 2020.

—

About the Authors

Sam Shapiro is a product and engineering manager at TripleLift.

Luis Bracamontes is a computer vision engineer at TripleLift.

Michael Shields is GM of advanced advertising at TripleLift.
