AWS Open Source Blog

24 open source tools for the serverless developer: Part 2

September 8, 2021: Amazon Elasticsearch Service has been renamed to Amazon OpenSearch Service. Visit the website to learn more.

This article is a guest post from AWS Serverless Hero Yan Cui.

In the first part of this two-part series, we looked at deployment frameworks and explored some of the best Serverless Framework plugins. We also looked at org-formation and lumigo-cli and how they can make your life easier when you work with AWS and build serverless applications.

In part 2, we will look at popular libraries for writing AWS Lambda functions in Node.js, and we will explore useful AWS Serverless Application Repository apps that can take care of many common chores for you.

Libraries

docker-lambda

If you use AWS Serverless Application Model’s (AWS SAM) local invoke or the Serverless Framework’s invoke local command, then you are already using docker-lambda, a Docker image that replicates the live AWS Lambda environment. Besides invoking Lambda functions locally, it is also useful when you need to compile native dependencies.

middy

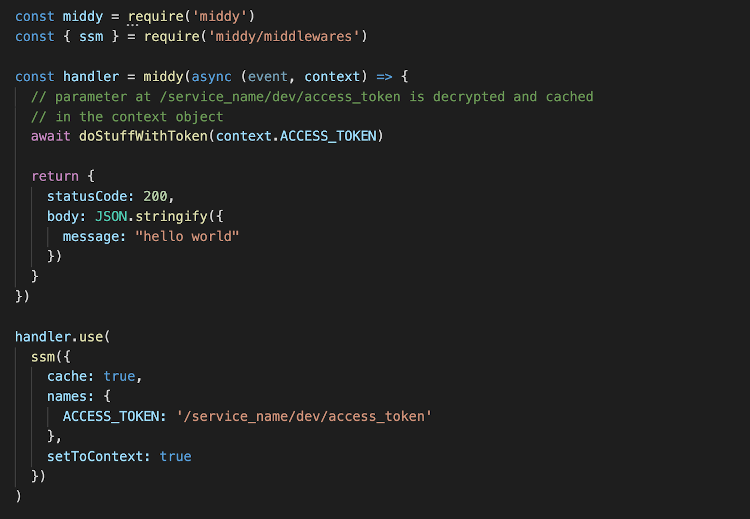

Middy is a middleware engine for Node.js Lambda functions, and makes it easy for users to handle cross-cutting concerns and encapsulate them into middleware. More than 15 built-in middleware address common concerns, such as setting CORS headers in Amazon API Gateway responses.
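The sketch below is a minimal TypeScript illustration of how a middleware engine separates cross-cutting concerns from business logic. It is not middy's API. The `Middleware` shape, the `withMiddleware` and `cors` helpers, and the simplified event and response types are hypothetical stand-ins chosen for illustration. `before` hooks run in registration order and `after` hooks run in reverse, which follows the onion-like model middy describes.

```typescript
// Simplified stand-ins for API Gateway event and response types (assumptions, not the real types).
interface HttpEvent {
  path: string;
  headers: Record<string, string>;
}

interface HttpResponse {
  statusCode: number;
  headers?: Record<string, string>;
  body: string;
}

type Handler = (event: HttpEvent) => Promise<HttpResponse>;

// Hypothetical middleware shape: optional hooks that run around the handler.
interface Middleware {
  before?: (event: HttpEvent) => void | Promise<void>;
  after?: (response: HttpResponse) => HttpResponse | Promise<HttpResponse>;
}

// before hooks run in registration order; after hooks run in reverse order,
// so the first middleware registered is the outermost layer of the "onion".
function withMiddleware(handler: Handler, middlewares: Middleware[]): Handler {
  return async (event) => {
    for (const m of middlewares) {
      if (m.before) await m.before(event);
    }
    let response = await handler(event);
    for (const m of [...middlewares].reverse()) {
      if (m.after) response = await m.after(response);
    }
    return response;
  };
}

// A cross-cutting concern packaged once: add a CORS header to every response.
function cors(origin: string): Middleware {
  return {
    after: (response) => ({
      ...response,
      headers: { ...response.headers, "Access-Control-Allow-Origin": origin },
    }),
  };
}

// The business logic stays free of CORS handling.
const baseHandler: Handler = async (event) => ({
  statusCode: 200,
  body: JSON.stringify({ path: event.path }),
});

const handler = withMiddleware(baseHandler, [cors("*")]);
```

Because the CORS logic lives in one middleware, every handler wrapped with it gets the header without repeating that code.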

My favorites are the ssm and secretsManager middleware, which implement best practices for loading secrets into Lambda functions. My rule of thumb is never to store secrets in unencrypted form in environment variables, which is the first place an attacker would look if they manage to compromise my application, perhaps through a compromised or malicious dependency. Rather, my suggestion is to:

- Load the secrets from SSM or Secrets Manager during cold start.

- Cache the secret so you don’t have to read from source on every invocation.

- Set the secret on the context object, not in the environment variables.

- Access secrets through the context object inside the handler code.

You should also consider enabling cache invalidation with an expiry of a few minutes. That way, when you rotate the secrets at the source (which you should do), all concurrent executions automatically pick up the new values once their local caches expire.

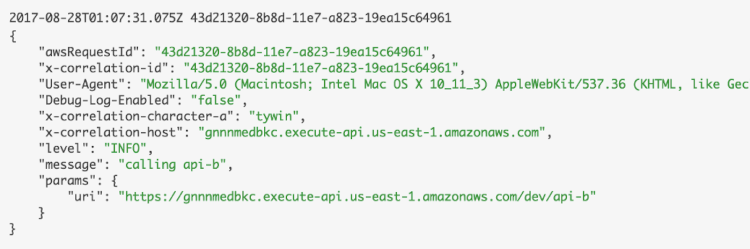

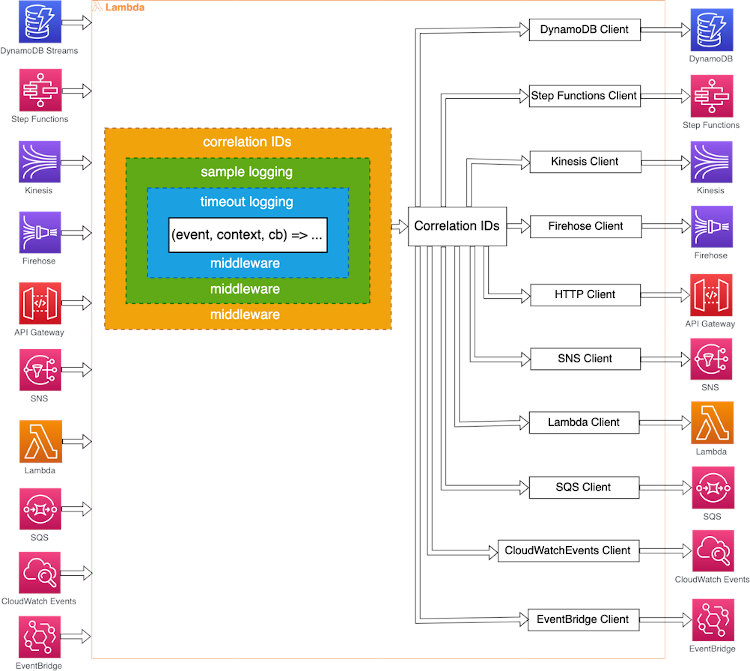
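The secret-loading steps described above can be sketched without middy. The cache below loads a secret on first use during the cold start, reuses it on warm invocations, and fetches it again once the expiry has passed. This is an illustration under assumptions. The `SecretFetcher` loader is a hypothetical stand-in for a call to SSM Parameter Store or Secrets Manager. The `SecretContext` object and `withSecret` wrapper are hypothetical names. middy's ssm and secretsManager middleware provide this behavior through their own configuration options.

```typescript
// Hypothetical loader: in a real function this would call SSM Parameter Store or Secrets Manager.
type SecretFetcher = (secretName: string) => Promise<string>;

// Caches one secret in module scope, so it survives across warm invocations in the
// same execution environment, and refetches it once `ttlMs` milliseconds have passed.
class CachedSecret {
  private value: string | undefined = undefined;
  private fetchedAt = 0;
  private readonly secretName: string;
  private readonly fetcher: SecretFetcher;
  private readonly ttlMs: number;
  private readonly now: () => number;

  constructor(secretName: string, fetcher: SecretFetcher, ttlMs: number, now: () => number = Date.now) {
    this.secretName = secretName;
    this.fetcher = fetcher;
    this.ttlMs = ttlMs;
    this.now = now;
  }

  async get(): Promise<string> {
    let value = this.value;
    if (value === undefined || this.now() - this.fetchedAt >= this.ttlMs) {
      // Cold start or expired entry: read from the source and restart the expiry timer.
      value = await this.fetcher(this.secretName);
      this.value = value;
      this.fetchedAt = this.now();
    }
    return value;
  }
}

// Hypothetical context shape: secrets live here rather than in process.env.
interface SecretContext {
  secrets: Record<string, string>;
}

// Wraps a handler so every invocation receives the (possibly cached) secret on its context.
function withSecret<E, R>(
  key: string,
  secret: CachedSecret,
  handler: (event: E, context: SecretContext) => Promise<R>,
): (event: E) => Promise<R> {
  return async (event: E) => {
    const context: SecretContext = { secrets: { [key]: await secret.get() } };
    return handler(event, context);
  };
}
```

A Lambda execution environment handles one invocation at a time, so the module-scoped cache needs no locking. Each concurrent execution keeps its own copy and refreshes it independently. A short expiry therefore lets a rotated secret reach all of them within a few minutes.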

dazn-lambda-powertools

dazn-lambda-powertools is a suite of npm packages that makes it easy to build production-grade serverless applications in Node.js. Among other things, it captures and forwards correlation IDs through many AWS services, so your functions include correlation IDs in their logs automatically.

These correlation IDs are also propagated automatically to downstream functions when you use the provided SDK clients, which wrap the official AWS SDK clients. Currently, these AWS services are supported:

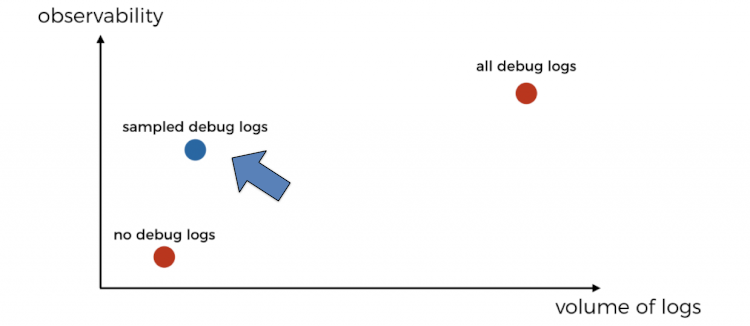
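Capturing and forwarding correlation IDs comes down to three steps. The function reads the IDs from the incoming event, adds them to every log line, and copies them onto outgoing requests. The sketch below shows that flow for a simplified HTTP-style event and SNS-style message attributes (`DataType` and `StringValue`). It is not the dazn-lambda-powertools API. The `x-correlation-` header prefix, the `CorrelationIds` class, and its method names are assumptions for illustration.

```typescript
// Simplified incoming event: only the headers matter for this sketch.
interface IncomingEvent {
  headers: Record<string, string>;
}

// Shape of SNS-style message attributes.
type MessageAttributes = Record<string, { DataType: "String"; StringValue: string }>;

const PREFIX = "x-correlation-"; // assumed header prefix for correlation IDs

class CorrelationIds {
  private ids: Record<string, string> = {};

  // Capture every header carrying a correlation ID; generate a fresh ID if none arrived.
  captureFrom(event: IncomingEvent, newId: () => string): void {
    const captured: Record<string, string> = {};
    for (const [key, value] of Object.entries(event.headers)) {
      const lower = key.toLowerCase();
      if (lower.startsWith(PREFIX)) captured[lower] = value;
    }
    if (!captured[`${PREFIX}id`]) captured[`${PREFIX}id`] = newId();
    this.ids = captured;
  }

  get(): Record<string, string> {
    return { ...this.ids };
  }

  // Structured JSON log line that always carries the current correlation IDs.
  log(message: string, extra: Record<string, unknown> = {}): string {
    return JSON.stringify({ message, ...extra, ...this.ids });
  }

  // Forward the IDs downstream, e.g. as message attributes on an outgoing SNS publish.
  toMessageAttributes(): MessageAttributes {
    const attrs: MessageAttributes = {};
    for (const [key, value] of Object.entries(this.ids)) {
      attrs[key] = { DataType: "String", StringValue: value };
    }
    return attrs;
  }
}
```

Keeping the IDs in one place means every log line and every outgoing call can pick them up without threading them through each function's arguments.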

The lightweight logger supports structured JSON logging with a configurable log level. When you use the logger together with other middleware from the suite, you can also enable sampling of debug logs in production. In production, you typically log at the WARN level to reduce the volume of logs and the cost of processing them. This often means you miss the debug messages that would help you diagnose problems quickly when they arise. Sampling lets you retain debug logs for a small percentage of transactions in production, ideally enough to cover every scenario and code path. These logs come in handy when an issue does occur, because you don’t have to enable debug logging and redeploy your application first.

It’s also important to note that sampling happens at the transaction level rather than at the level of individual functions. By default, dazn-lambda-powertools samples debug logs for 1% of transactions. That way, you see everything that happened in the relevant Lambda functions, even as the transaction spans asynchronous event sources such as Amazon Simple Notification Service (Amazon SNS), Amazon Simple Queue Service (Amazon SQS), Amazon EventBridge, and more.
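Transaction-level sampling can be sketched as follows. The first function in a transaction makes the sampling decision once, at a rate such as 1%. The decision then travels downstream with the correlation IDs, so each later function honors it instead of deciding again. This is an illustration only. The `debug-log-enabled` key, the `LogLevel` ordering, and the `decideSampling` and `createLogger` helpers are assumptions, not the dazn-lambda-powertools API.

```typescript
type LogLevel = "DEBUG" | "INFO" | "WARN" | "ERROR";
const LEVELS: Record<LogLevel, number> = { DEBUG: 0, INFO: 1, WARN: 2, ERROR: 3 };

const SAMPLE_KEY = "debug-log-enabled"; // assumed correlation-ID key that carries the decision

// Decide once per transaction: reuse an upstream decision if present, otherwise sample.
function decideSampling(
  correlationIds: Record<string, string>,
  sampleRate: number,
  random: () => number = Math.random,
): Record<string, string> {
  if (correlationIds[SAMPLE_KEY] !== undefined) return correlationIds;
  return { ...correlationIds, [SAMPLE_KEY]: String(random() < sampleRate) };
}

// Returns a logger that writes JSON lines at or above the effective level.
// A sampled transaction drops the threshold to DEBUG; otherwise the configured level applies.
function createLogger(
  configured: LogLevel,
  correlationIds: Record<string, string>,
  sink: (line: string) => void,
) {
  const effective: LogLevel = correlationIds[SAMPLE_KEY] === "true" ? "DEBUG" : configured;
  const write = (level: LogLevel, message: string) => {
    if (LEVELS[level] >= LEVELS[effective]) {
      sink(JSON.stringify({ level, message, ...correlationIds }));
    }
  };
  return {
    debug: (message: string) => write("DEBUG", message),
    info: (message: string) => write("INFO", message),
    warn: (message: string) => write("WARN", message),
    error: (message: string) => write("ERROR", message),
  };
}
```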

If you use Python, the aws-lambda-powertools project for Python supports some of the same features as dazn-lambda-powertools.

AWS Serverless Application Repository apps

Finally, here are a handful of applications in the AWS Serverless Application Repository that I have found useful.

lambda-janitor

Once deployed, the lambda-janitor app cleans up old, unused versions of your functions across the whole region. This way, you no longer have to worry about hitting the 75 GB code storage limit.

There are a number of built-in safeguards to ensure it only deletes versions that you don’t need anymore. For example, it doesn’t delete any versions that are still referenced by an alias. You can also configure the number of most recent versions to keep so you can quickly roll back to a previous version during an emergency.
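The safeguards described above amount to a small selection rule. The sketch below shows one way to pick deletable versions under those rules. It never touches `$LATEST`, skips any version an alias points to, and keeps the N most recent numbered versions. This is not lambda-janitor's actual code. The function name and the `keepRecent` parameter are hypothetical. In a real cleanup job, the inputs would come from the Lambda ListVersionsByFunction and ListAliases APIs, and deletions would go through DeleteFunction with a version qualifier.

```typescript
// Returns the numbered versions that are safe to delete.
// versions: every version of one function, e.g. ["$LATEST", "1", "2", ...]
// aliasedVersions: versions that at least one alias points to
// keepRecent: how many of the newest numbered versions to retain for quick rollback
function versionsToDelete(versions: string[], aliasedVersions: Set<string>, keepRecent: number): string[] {
  const numbered = versions
    .filter((v) => v !== "$LATEST") // $LATEST is never a deletion candidate
    .sort((a, b) => Number(b) - Number(a)); // newest (highest number) first
  return numbered
    .slice(keepRecent) // never touch the N most recent versions
    .filter((v) => !aliasedVersions.has(v)); // never delete a version an alias references
}
```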

aws-lambda-power-tuning

The aws-lambda-power-tuning app, developed by AWS developer advocate Alex Casalboni, deploys a Step Functions state machine that you can run to help you find the optimal memory setting for your functions. This is what powers the lumigo-cli’s powertune-lambda command. I recommend using the lumigo-cli as it takes care of deploying and upgrading this AWS Serverless Application Repository app to make sure you always run on the latest version of the app.
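The core comparison behind power tuning is simple arithmetic. Lambda bills compute in GB-seconds, so an invocation's compute cost is roughly the allocated memory in GB times the billed duration times a per-GB-second price, ignoring the per-request charge. Lambda allocates CPU in proportion to memory, so a larger setting can shorten the duration enough to offset its higher rate. The sketch below scores a set of measurements and picks the cheapest and fastest settings. The measurements and the unit price in the usage are made-up placeholders, and the helper names are hypothetical. You would substitute real measurements and your region's current pricing.

```typescript
interface Measurement {
  memoryMb: number;
  avgDurationMs: number; // average billed duration measured at this memory setting
}

interface Scored extends Measurement {
  costPerInvocation: number;
}

// Compute cost per invocation as GB-seconds times a price per GB-second.
function score(measurements: Measurement[], pricePerGbSecond: number): Scored[] {
  return measurements.map((m) => ({
    ...m,
    costPerInvocation: (m.memoryMb / 1024) * (m.avgDurationMs / 1000) * pricePerGbSecond,
  }));
}

// Both selectors assume at least one measurement.
function cheapest(scored: Scored[]): Scored {
  return scored.reduce((best, s) => (s.costPerInvocation < best.costPerInvocation ? s : best));
}

function fastest(scored: Scored[]): Scored {
  return scored.reduce((best, s) => (s.avgDurationMs < best.avgDurationMs ? s : best));
}

// Made-up measurements, scored with a unit price to keep the arithmetic easy to follow.
const scored = score(
  [
    { memoryMb: 128, avgDurationMs: 800 },
    { memoryMb: 512, avgDurationMs: 180 },
    { memoryMb: 1024, avgDurationMs: 100 },
  ],
  1,
);
```

With these made-up numbers, 512 MB is the cheapest setting even though 1024 MB is the fastest. The power tuning state machine surfaces this kind of trade-off by invoking your function at each setting.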

auto-subscribe-log-group-to-arn

The auto-subscribe-log-group-to-arn app does exactly what it says on the tin; it automatically subscribes Amazon CloudWatch log groups to the ARN you configure, which can be Lambda, Amazon Kinesis, Amazon Kinesis Data Firehose, or Amazon Elasticsearch Service (Amazon ES).

Once deployed, it’ll subscribe all the existing CloudWatch log groups in the region to the configured destination right away. When a new log group is created, whether by you or by a newly created Lambda function, it is subscribed to the destination automatically, too. If you don’t want to subscribe all the log groups in the region, several configuration options let you target specific log groups by prefix as well as by tags.
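Targeting log groups by prefix and tags comes down to a filter applied to every existing and newly created log group. The sketch below is a hypothetical version of that check. The `SubscriptionConfig` shape and its field names are assumptions, not the app's actual parameters. Each log group that passes would then be subscribed with the CloudWatch Logs PutSubscriptionFilter API. The exclude list matters in practice: if a log-shipping function's own log group were subscribed to that function, every shipped batch would generate more logs to ship.

```typescript
interface LogGroup {
  name: string;
  tags: Record<string, string>;
}

// Hypothetical configuration: every field is optional, and an empty config matches all groups.
interface SubscriptionConfig {
  includePrefixes?: string[];
  excludePrefixes?: string[];
  requiredTags?: Record<string, string>;
}

function shouldSubscribe(group: LogGroup, config: SubscriptionConfig): boolean {
  const { includePrefixes = [], excludePrefixes = [], requiredTags = {} } = config;
  // If include prefixes are set, the group name must start with one of them.
  if (includePrefixes.length > 0 && !includePrefixes.some((p) => group.name.startsWith(p))) {
    return false;
  }
  // Excludes win over includes, e.g. to skip the destination function's own log group.
  if (excludePrefixes.some((p) => group.name.startsWith(p))) {
    return false;
  }
  // Every required tag must be present with the expected value.
  return Object.entries(requiredTags).every(([key, value]) => group.tags[key] === value);
}
```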

auto-set-log-group-retention

The auto-set-log-group-retention app is closely related to auto-subscribe-log-group-to-arn, except that it automatically updates the retention policy of log groups instead.

By default, CloudWatch log groups are set to **Never Expire**. This has cost implications, because CloudWatch Logs charges $0.03 per GB per month for stored logs. It’s rarely useful to keep logs in CloudWatch Logs forever, especially if you already ship them somewhere else.
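To see why Never Expire adds up, consider a hypothetical workload that adds a fixed volume of logs to storage every month. With no expiry, the stored volume, and with it the monthly storage charge, keeps growing. With a retention policy, it levels off at roughly the monthly volume times the retention period. The sketch below totals the storage charge over a number of months under both settings, using the $0.03 per GB per month figure quoted above. For simplicity, it ignores ingestion charges and treats retention in whole months. The function and constant names are hypothetical.

```typescript
const STORAGE_PRICE_PER_GB_MONTH = 0.03; // CloudWatch Logs storage price quoted above

// Total storage cost over `months`, assuming `gbPerMonth` of logs is added each month.
// Pass retentionMonths = Infinity to model "Never Expire".
function cumulativeStorageCost(gbPerMonth: number, months: number, retentionMonths: number): number {
  let total = 0;
  for (let m = 1; m <= months; m++) {
    // Data kept during month m: the most recent min(m, retention) months of logs.
    const storedGb = gbPerMonth * Math.min(m, retentionMonths);
    total += storedGb * STORAGE_PRICE_PER_GB_MONTH;
  }
  return total;
}
```

With a hypothetical 100 GB added each month, a year of storage comes to $234 with Never Expire versus $36 with a one-month retention. The gap keeps widening every month after that.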

sfn-callback-urls

Step Functions lets you implement callback patterns using task tokens. But the pattern is tricky to use in some situations, such as sending an email with a callback link, which often requires adding an API Gateway endpoint and a Lambda function to handle the callback URL. The sfn-callback-urls app makes exactly that easy.
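The idea behind callback URLs can be sketched as two pure functions. The first embeds the task token and the chosen outcome in a link. The second turns a clicked link back into the input for the Step Functions SendTaskSuccess or SendTaskFailure API, whose fields are `taskToken` and `output`, or `taskToken`, `error`, and `cause`. This is not how sfn-callback-urls is implemented. The base URL, the query parameter names, and the error strings are hypothetical. A production version also needs to protect the link against tampering and reuse, which this sketch does not do.

```typescript
type Outcome = "approve" | "reject";

// Input shapes for the SendTaskSuccess / SendTaskFailure APIs.
type CallbackRequest =
  | { action: "SendTaskSuccess"; input: { taskToken: string; output: string } }
  | { action: "SendTaskFailure"; input: { taskToken: string; error: string; cause: string } };

// Build a link to embed in an email; baseUrl would point at an API Gateway endpoint.
function buildCallbackUrl(baseUrl: string, taskToken: string, outcome: Outcome): string {
  return `${baseUrl}?outcome=${encodeURIComponent(outcome)}&token=${encodeURIComponent(taskToken)}`;
}

// Parse the query string of a clicked link into the request to send to Step Functions.
function parseCallback(query: string): CallbackRequest {
  const params: Record<string, string> = {};
  for (const pair of query.replace(/^\?/, "").split("&")) {
    const [key, value = ""] = pair.split("=");
    params[decodeURIComponent(key)] = decodeURIComponent(value);
  }
  const taskToken = params["token"];
  if (!taskToken) throw new Error("missing task token");
  if (params["outcome"] === "approve") {
    return { action: "SendTaskSuccess", input: { taskToken, output: JSON.stringify({ approved: true }) } };
  }
  if (params["outcome"] === "reject") {
    return { action: "SendTaskFailure", input: { taskToken, error: "Rejected", cause: "Rejected via callback link" } };
  }
  throw new Error(`unknown outcome: ${params["outcome"]}`);
}
```

Encoding the token with `encodeURIComponent` matters because task tokens can contain characters such as `+`, `/`, and `=` that would otherwise break the query string.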

Summary

That’s it! I hope you have enjoyed this two-part series and learned about open source tools that will make your life easier when you work with AWS and serverless technologies.

Here are the open source tools I mentioned in the series and where you can find them:

Deployment frameworks

Serverless Framework plugins

- serverless-iam-roles-per-function

- serverless-webpack

- serverless-offline

- serverless-domain-manager

- serverless-step-functions

- serverless-finch

CLIs

- org-formation

- lumigo-cli

Libraries

- docker-lambda

- middy

- dazn-lambda-powertools

AWS Serverless Application Repository applications

- lambda-janitor

- aws-lambda-power-tuning

- auto-subscribe-log-group-to-arn

- auto-set-log-group-retention

- sfn-callback-urls

There are many more great open source tools out there that I have not been able to cover here. The serverless-appsync-plugin, for instance, deserves a mention too.

Open source tools depend on their communities to survive and thrive. If any of these tools strikes a chord with you, please give it a star on GitHub, join its community, and contribute. That can mean improving the documentation, raising issues, or making improvements to the codebase. Whatever it is, every contribution helps!

The content and opinions in this post are those of the third-party author and AWS is not responsible for the content or accuracy of this post.

Feature image via Pixabay.