AWS Storage Blog

Tag: AWS Identity and Access Management (IAM)

Amazon S3 audit logging, Part 3: Analyzing S3 Metadata journal tables for object lifecycle tracking

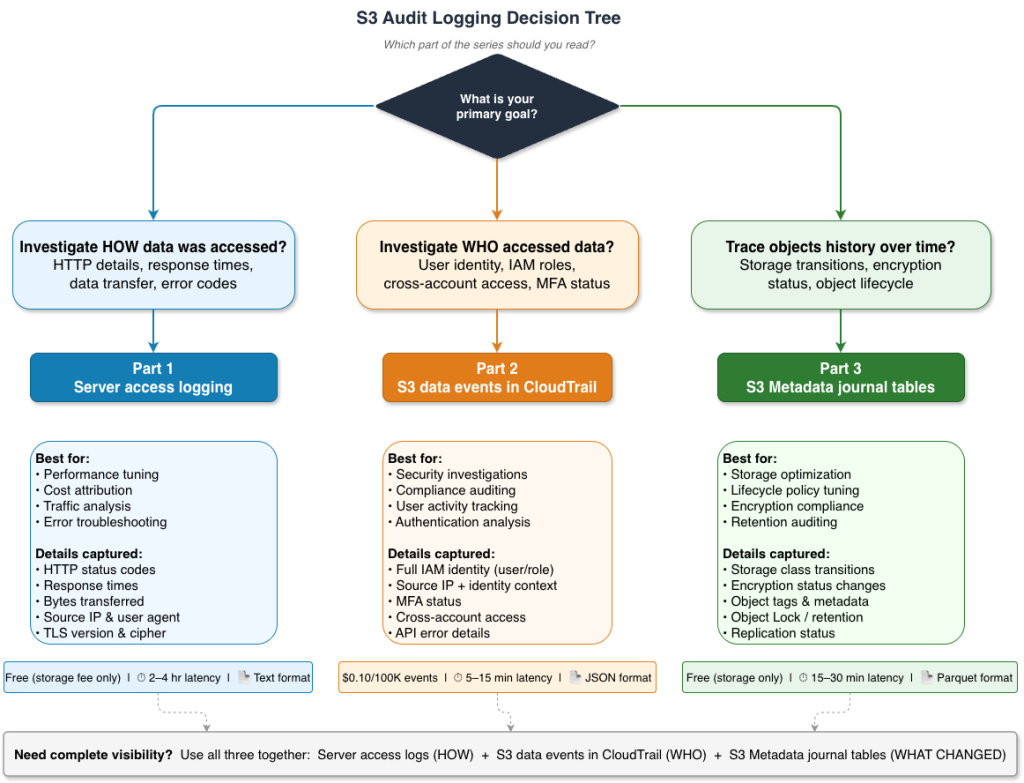

This is Part 3 of our three-part series on Amazon S3 audit logging. In Part 1, we covered server access logs for HTTP-level requests and performance analysis. In Part 2, we covered S3 data events in AWS CloudTrail for identity-focused security investigations. As data volumes grow and storage costs become a significant line item, organizations […]

Amazon S3 audit logging, Part 2: Centralized logging and analysis of S3 data events in AWS CloudTrail for security and compliance

This is Part 2 of our three-part series on Amazon S3 audit logging, focusing on identity-driven security investigations. In Part 1, we covered S3 server access logs for HTTP-level performance analysis and cost attribution. When a security incident occurs—an unauthorized download, a bulk deletion, or suspicious access from an unfamiliar location—the first question is always, […]

Amazon S3 audit logging, Part 1: Analyzing server access logs with Amazon Athena for performance insights

Organizations storing sensitive data must maintain complete visibility into how it’s accessed, by whom, and what changes occur over time. Regulatory frameworks demand detailed audit trails, security teams need rapid answers during investigations, and finance teams require granular cost attribution. Yet as data grows from terabytes to petabytes, the scale that makes centralized storage attractive […]

Scalable cross-cloud data migration to Amazon S3 with distributed rclone

Migrating petabytes of data across cloud providers is one of the most operationally demanding tasks an organization can take on. At this scale, simple transfer approaches break down. Teams lose track of what has been copied and what has failed. Transfers stall and require constant manual intervention to restart. In some cases, teams need to […]

Implement single-exchange tokens for short-lived Amazon S3 presigned URLs with Terraform

Organizations across industries use signed URLs to grant temporary, credential-less access to private resources such as receipts, medical or financial records, legal files, or confidential reports. However, signed URLs can be reused by anyone until they expire, creating security risks if a URL is shared or inadvertently disclosed. This risk can be mitigated by vending […]

Enabling natural language access to structured data using Amazon S3 Tables and Amazon Bedrock Knowledge Bases

Organizations generate massive volumes of structured data from customer transactions, operational metrics, product catalogs, and compliance records. This data contains insights that can help businesses make better, more timely decisions. Financial advisors need to review client transaction histories, retail analysts track inventory trends, and healthcare administrators monitor patient outcomes. Yet accessing these insights creates a […]

Migrate to Amazon S3 account regional namespaces

Since its launch in 2006, Amazon S3 has used a global namespace where bucket names must be unique across all AWS accounts and AWS Regions. This design has served customers well at scale, but organizations managing multiple accounts and environments often encounter naming collisions. When a bucket is deleted, its name returns to the global […]

Building automated AWS Regional availability checks with Amazon S3

Every day, organizations expand into new markets, migrate critical workloads across geographies, and build systems that need to operate reliably in multiple locations. At the root of these efforts is a simple question: “What can I deploy, and where?” The answer shapes important architecture decisions, from which AWS Regions to expand into, to how you […]

Automatically decompress files in Amazon S3 using AWS Step Functions

Every day, AWS customers process millions of compressed files in Amazon S3, from small ZIP archives to multi-gigabyte datasets. While decompressing a single file is straightforward, processing thousands of files efficiently requires complex orchestration, error handling, and infrastructure management. Consider this scenario: Your organization receives over 10,000 compressed files daily from partners, ranging from 5 […]

Applying Amazon S3 Object Lock at scale for petabytes of existing data

Organizations with petabytes of data in the cloud need a way to apply immutable storage protections to data that’s already been stored—whether for regulatory compliance or cyber resilience. Although you can enable write-once-read-many (WORM) controls for newly created storage, applying these protections to existing enterprise data at scale requires a systematic approach. Regulated industries have […]