AWS Partner Network (APN) Blog

Centralized Traffic Inspection with Gateway Load Balancer on AWS

By Nikolay Bunev, Sr. Consultant and Professional Services Lead – SoftwareOne

SoftwareOne, as an AWS Premier Tier Consulting Partner and Managed Cloud Services Provider (MSP), has helped a lot of customers embrace cloud and partially or fully migrate their workloads to Amazon Web Services (AWS).

One of the most common questions we receive from customers is how they can continue to enforce their security policies in the cloud in the same way they used to on-premises in order to prevent data exfiltration.

The AWS Shared Responsibility Model states that “security in the cloud” is the customer’s responsibility. On AWS, you can use network access control lists (NACLs), security groups, or web application firewall (WAF) rules to prevent unauthorized access to your workloads coming from the internet.

Until a year ago, the only way to enforce content-aware outbound filtering (up to OSI Layer-7) for traffic originating from your virtual private cloud (VPC) towards the internet was to rely on network appliances provided by independent software vendors (ISVs).

Vendor-provided firewalls remain an option, and the focus of this post is their integration with Gateway Load Balancer (GWLB). Customers can now also use the AWS Network Firewall managed service.

Solution Overview

Prior to the introduction of Gateway Load Balancer, two main connectivity options were available to route your outbound traffic from one or more VPCs towards the internet via the Amazon Elastic Compute Cloud (Amazon EC2) instances on which the network firewalls reside.

- Option 1: Create site-to-site VPN tunnels between the firewalls and AWS Transit Gateway (TGW), and run Border Gateway Protocol (BGP) as the routing protocol on top.

Figure 1 – Simplified diagram of centralized north-south inspection with site-to-site VPN.

- Option 2: Deploy the firewalls in an active-standby mode, and semi-automatically change the subnet route table to route traffic to one or the other.

The main tradeoffs in both options were performance and scalability. With the first option, virtual private network (VPN) attachments to a TGW are limited to 1.25 Gbps. With the second, only one firewall can be active at any given time. In addition, both were hard to manage and maintain.

In the weeks leading up to re:Invent 2020, AWS introduced a new load balancer type called Gateway Load Balancer. This is a managed load balancer specifically crafted to route traffic to network appliances and intrusion prevention systems, which helps solve the tradeoffs outlined above.

The announcement was timely, as I was about to deploy a solution around Option 1 for one of our clients at SoftwareOne. We were thinking about testing GWLB right away, but as the workload of this customer was in the eu-west-2 (London) region, the service was not yet available at the time (GWLB is available in London at the time of writing).

Thus, we had to continue with our original plan and then migrate to a GWLB design at a later stage.

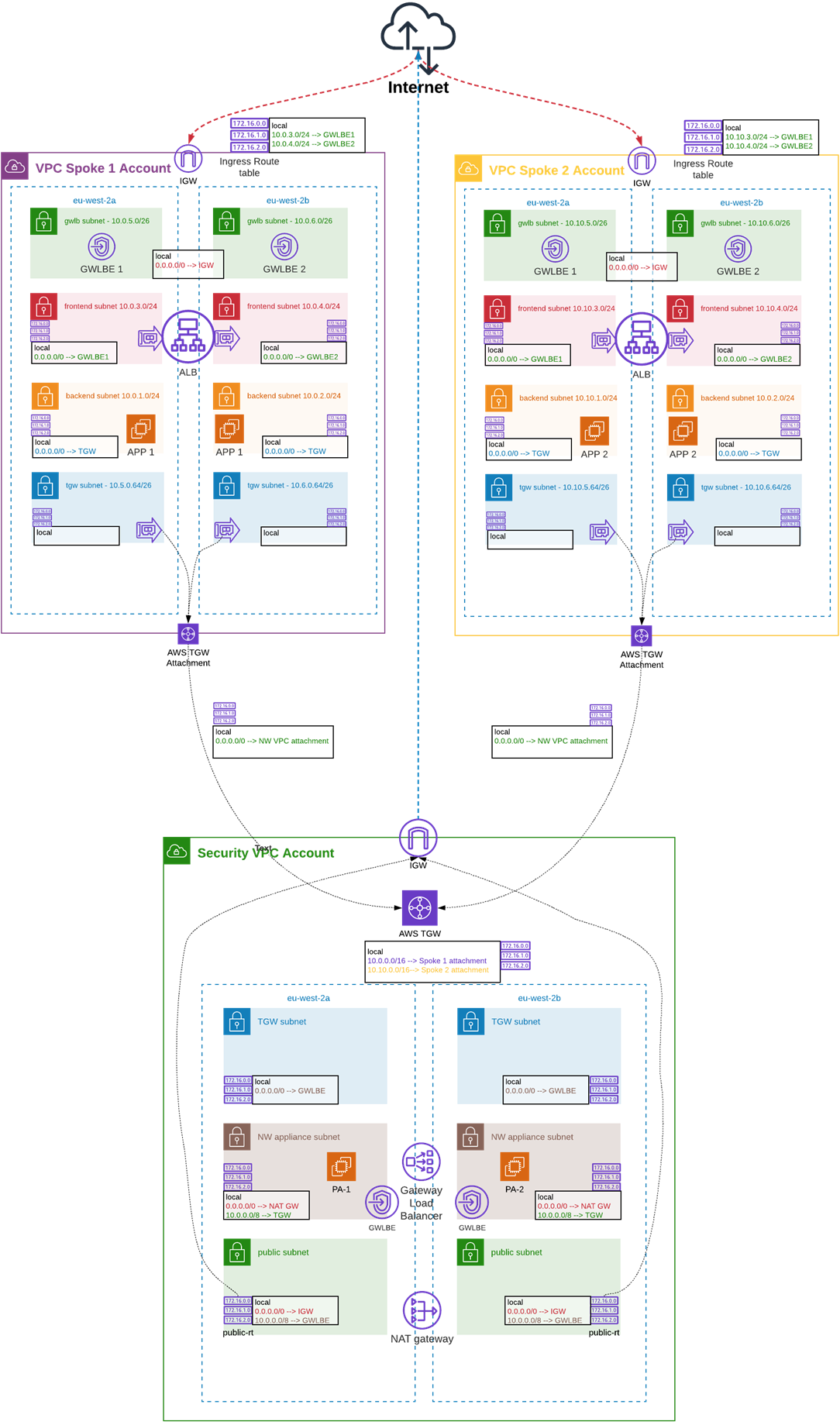

Figure 2 – Centralized north-south inspection with Gateway Load Balancer.

In the next sections of this post, I will share some of the questions we have asked ourselves at SoftwareOne. I will also explore the decisions we took while migrating from centralized north-south inspection with Transit Gateway VPN attachments (Figure 1) to centralized inspection with GWLB in front of the Palo Alto VM-Series Firewalls (Figure 2).

Prerequisites

I will assume you are familiar with the basic AWS networking concepts and services such as:

- Virtual private cloud (VPC)

- Subnets

- Route tables

- Internet and NAT gateways

- Endpoints and AWS PrivateLink

- AWS Transit Gateway

- Transit Gateway VPC attachments

- Route propagation

Whilst I will briefly touch on how GWLB works, I won’t go into detail. There is an elaborate series on the topic you can read on the AWS Networking Blog.

Field Notes

When you are about to implement a newly-released service, start with the documentation, move on to the drawing board, create a proof of concept (PoC), and then proceed with the actual implementation.

What makes Gateway Load Balancer distinct from the other types of load balancers on AWS is that it helps direct traffic to, and scale, the appliances running behind it, and that it acts as a transparent network gateway since it operates at Layer 3 of the OSI model. Essentially, it’s a single entry and exit point for all traffic.

To do so, GWLB has to be combined with VPC ingress routing. This helps intercept any traffic entering our application VPC; however, in order to send this traffic to the firewall appliances running behind GWLB in the security VPC, we need to create Gateway Load Balancer endpoints.

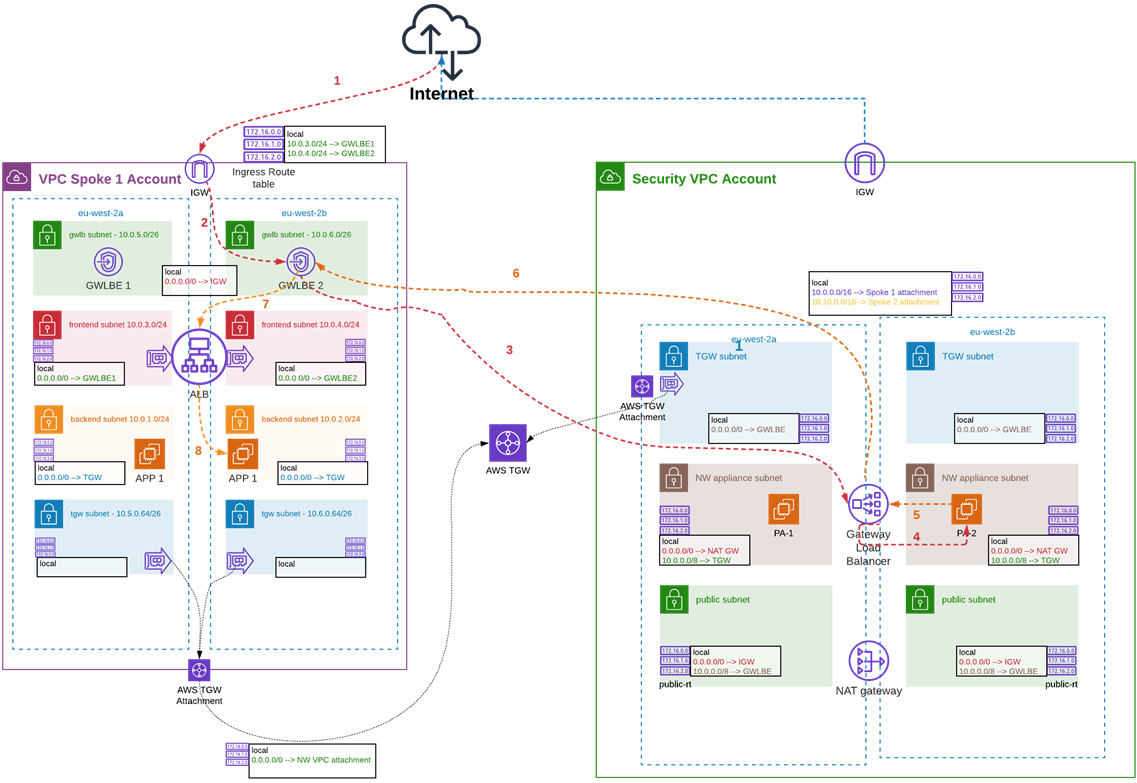

The actual ingress traffic flow with GWLB in place is illustrated below.

Figure 3 – North-south inspection with GWLB – Inbound.

Follow the Dependency Chain

Let’s break it down to the components we need to set up based on the information above and the ingress traffic flow:

- Implement VPC ingress by creating an Internet Gateway (IGW) edge association with a route to the GWLB endpoint.

- Create GWLB endpoints in the spoke (application) VPC pointed to the service endpoint of the GWLB in the security VPC. This requires acceptance from the service endpoint provider account.

- Create GWLB and respective service endpoint for it in the security VPC.

You cannot create a service endpoint if the GWLB doesn’t exist. You won’t be able to make a GWLB endpoint without the service endpoint being present, nor will you be allowed to create a route in the edge association route table that points to a non-existent GWLB endpoint.

It becomes clear there is a dependency chain we need to follow whilst implementing our design, which starts from the GWLB creation in the security VPC.
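The dependency chain can be sketched as a small graph and sorted into a valid creation order. This is only an illustration: the resource names are labels chosen for this example, not AWS identifiers, and the sketch assumes Python 3.9+ for `graphlib`.

```python
from graphlib import TopologicalSorter

# Each key depends on the resources listed in its value set.
# The names are illustrative labels, not AWS resource identifiers.
DEPENDS_ON = {
    "gwlb": set(),                          # GWLB in the security VPC
    "endpoint_service": {"gwlb"},           # service endpoint for the GWLB
    "gwlb_endpoint": {"endpoint_service"},  # GWLB endpoint in the spoke VPC
    "edge_route": {"gwlb_endpoint"},        # route in the IGW edge route table
}


def creation_order(depends_on):
    """Return the resources in an order that satisfies every dependency."""
    return list(TopologicalSorter(depends_on).static_order())


order = creation_order(DEPENDS_ON)
print(order)
```

Reversing the same list gives a safe order for tearing the design down.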

Map Subnets to Route Tables and Availability Zones

Having a single route table associated with the same type of subnets (one route table for all private subnets) is a commonly used design choice for application VPCs. However, as outlined in the documentation, GWLB endpoints are tied to specific AWS Availability Zones (AZs).

For each GWLB endpoint, you can choose only one AZ (subnet) in your VPC. You cannot change the subnet later. You can create a single GWLB endpoint per AZ for a service, but only for the AZ the Gateway Load Balancer supports.

This means we can only create GWLB endpoints in the same Availability Zones in which the Gateway Load Balancer itself has been deployed. In most regions, AZ names are randomly allocated in each account; thus, we should use AZ IDs instead to better differentiate in which subnets and AZs to deploy our endpoints.
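As a rough sketch of why AZ IDs matter, the function below picks one spoke subnet per AZ ID in which the GWLB is deployed. The subnet IDs and the AZ name to AZ ID mapping are made up for illustration; in a real account they would come from your own inventory.

```python
def subnets_for_endpoints(gwlb_az_ids, spoke_subnets):
    """Pick one spoke subnet per AZ ID in which the GWLB is deployed."""
    chosen = {}
    for subnet in spoke_subnets:
        az_id = subnet["az_id"]
        # Match on AZ ID, never on AZ name: names can differ per account.
        if az_id in gwlb_az_ids and az_id not in chosen:
            chosen[az_id] = subnet["subnet_id"]
    return chosen


# Hypothetical data: the GWLB (security account) runs in two AZs.
GWLB_AZ_IDS = {"euw2-az1", "euw2-az2"}

# Hypothetical spoke account, where the AZ names map to different AZ IDs.
SPOKE_SUBNETS = [
    {"subnet_id": "subnet-aaa", "az_name": "eu-west-2a", "az_id": "euw2-az2"},
    {"subnet_id": "subnet-bbb", "az_name": "eu-west-2b", "az_id": "euw2-az3"},
    {"subnet_id": "subnet-ccc", "az_name": "eu-west-2c", "az_id": "euw2-az1"},
]

endpoint_subnets = subnets_for_endpoints(GWLB_AZ_IDS, SPOKE_SUBNETS)
print(endpoint_subnets)
```

In this made-up account, the subnet named `eu-west-2b` is skipped because its AZ ID is not one the GWLB supports.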

Because GWLB endpoints are AZ-specific, we need targeted routes to specific CIDRs, each via a dedicated GWLB endpoint.

For example, in the route table associated with the IGW, we have:

| Destination | Target |
| --- | --- |
| VPC CIDR | local |
| 10.0.3.0/24 | vpc-endpoint-id-az-a |
| 10.0.4.0/24 | vpc-endpoint-id-az-b |

We need to have a 1:1 map between subnets and route tables, and to create routes that will target a particular endpoint.

For example, in the route table associated with the frontend subnet in AZ A, we have:

| Destination | Target |
| --- | --- |
| VPC CIDR | local |
| 0.0.0.0/0 | vpc-endpoint-id-az-a |

As we are targeting the dedicated GWLB endpoint for AZ A, we can’t associate this route table with the frontend subnet for AZ B. Rather, we need to create a route table for AZ B, with the same route targeting the GWLB endpoint in AZ B instead.

| Destination | Target |
| --- | --- |
| VPC CIDR | local |
| 0.0.0.0/0 | vpc-endpoint-id-az-b |
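The route tables above follow a regular pattern, which a short sketch can generate. It assumes that 10.0.3.0/24 and 10.0.4.0/24 are the frontend subnets in AZ A and AZ B respectively (the post does not state this explicitly), and it leaves out the implicit local route.

```python
def build_routes(frontend_subnets, endpoint_by_az):
    """Return (edge_routes, subnet_route_tables) for AZ-specific endpoints."""
    edge_routes = []          # routes for the IGW edge association route table
    subnet_route_tables = {}  # one route table per frontend subnet (1:1 map)
    for subnet in frontend_subnets:
        endpoint = endpoint_by_az[subnet["az"]]
        # Inbound: traffic for this subnet goes to the endpoint in its own AZ.
        edge_routes.append((subnet["cidr"], endpoint))
        # Outbound: the default route targets the same AZ-local endpoint.
        subnet_route_tables[subnet["cidr"]] = [("0.0.0.0/0", endpoint)]
    return edge_routes, subnet_route_tables


# Assumed mapping of the CIDRs in the tables above to AZs.
FRONTEND_SUBNETS = [
    {"cidr": "10.0.3.0/24", "az": "a"},
    {"cidr": "10.0.4.0/24", "az": "b"},
]
ENDPOINT_BY_AZ = {"a": "vpc-endpoint-id-az-a", "b": "vpc-endpoint-id-az-b"}

edge_routes, subnet_route_tables = build_routes(FRONTEND_SUBNETS, ENDPOINT_BY_AZ)
print(edge_routes)
print(subnet_route_tables)
```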

Appliance Mode and North-South Traffic Inspection

Most, if not all, blog posts, including the AWS Networking series about Gateway Load Balancer cited above, describe a Transit Gateway feature named appliance mode.

This is meant to prevent asymmetric routing and complex source network address translation (SNAT) workarounds to make sure bi-directional traffic will end up for inspection on the same firewall appliance.

- When appliance mode is enabled, TGW uses a hash algorithm and selects a single network interface in the security VPC to send the traffic to (for the life of the flow). It uses the exact same VPC attachment elastic network interface (ENI) for the return traffic. This ensures bi-directional traffic is routed symmetrically through the same AZ (in the security VPC).

- When appliance mode is not enabled, TGW tries to keep the traffic routed between the VPC attachments in the same AZ from which the traffic originates until it reaches its destination.

To recap, with appliance mode enabled Transit Gateway will use a single TGW attachment ENI in the security VPC to route the traffic in both directions and thus have a session affinity. If appliance mode is disabled (which is the default), TGW will maintain source AZ affinity instead.
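The difference between the two modes can be illustrated with a toy model. The hash below is a stand-in chosen for this example (a direction-independent SHA-256 over both endpoints). It is not the algorithm Transit Gateway actually uses, and the AZ labels and addresses are hypothetical.

```python
import hashlib

# Hypothetical AZ labels for the TGW attachment ENIs in the security VPC.
SECURITY_VPC_AZS = ["az-x", "az-y"]


def flow_hash_az(endpoint_a, endpoint_b):
    """Toy flow hash: sorting the endpoints makes it direction-independent."""
    key = repr(sorted([endpoint_a, endpoint_b])).encode()
    digest = hashlib.sha256(key).digest()
    return SECURITY_VPC_AZS[digest[0] % len(SECURITY_VPC_AZS)]


def inspection_az(src, dst, src_az, appliance_mode):
    """Return the security VPC AZ that inspects a packet from src to dst."""
    if appliance_mode:
        return flow_hash_az(src, dst)  # session affinity
    return src_az                      # source AZ affinity (the default)


spoke1 = ("10.1.0.10", 44321)  # instance in one spoke VPC, AZ X
spoke2 = ("10.2.0.20", 443)    # resource in another spoke VPC, AZ Y

for mode in (True, False):
    request_az = inspection_az(spoke1, spoke2, "az-x", mode)
    reply_az = inspection_az(spoke2, spoke1, "az-y", mode)
    print(f"appliance_mode={mode}: request via {request_az}, reply via {reply_az}")
```

With appliance mode on, both directions resolve to the same AZ in this model; with it off, each direction follows the AZ of its sender, so a stateful firewall in the second AZ never sees the start of the flow.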

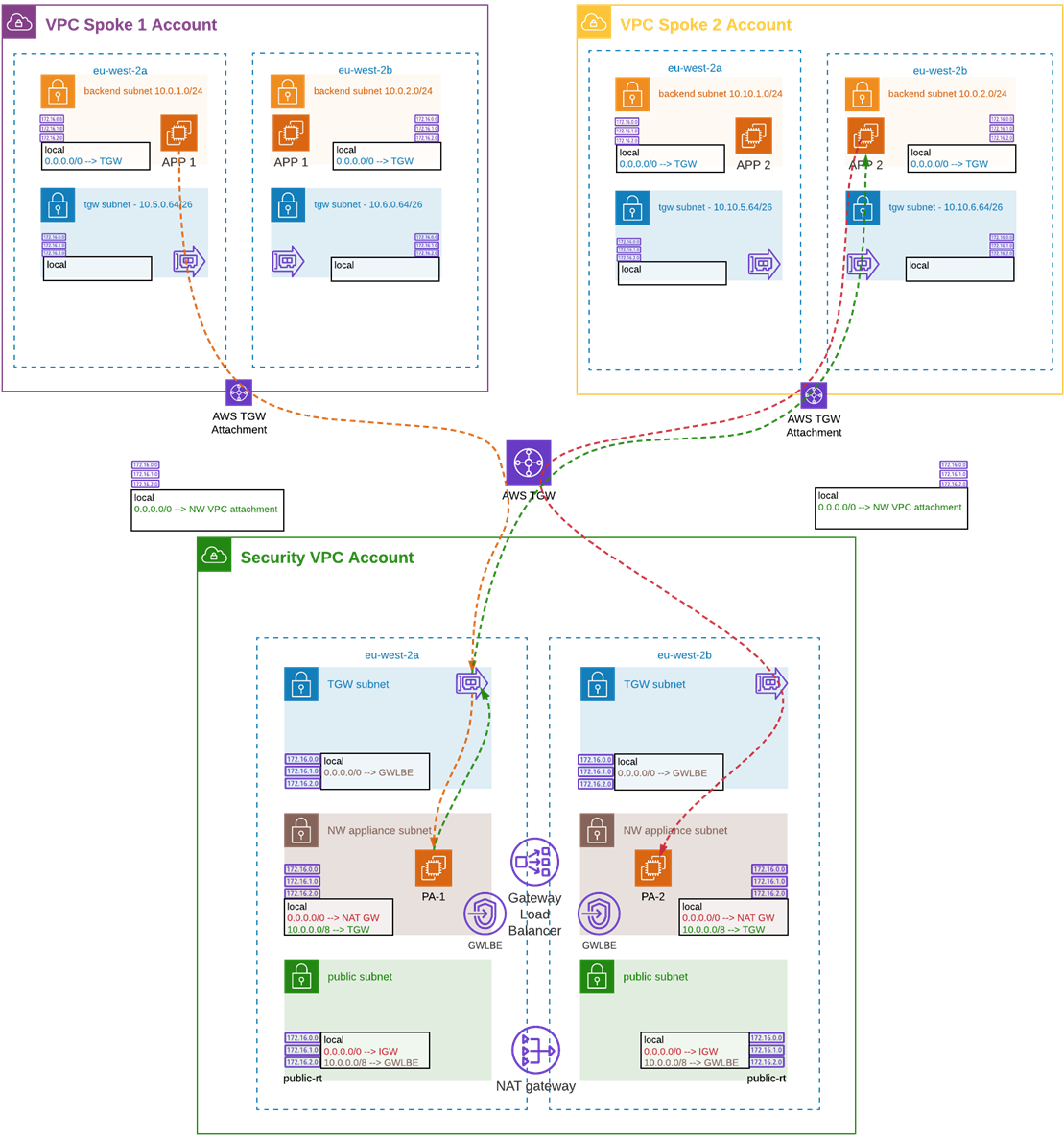

Let’s imagine that an Amazon EC2 instance in the spoke 1 VPC, which resides in AZ X, wants to reach a resource in the spoke 2 VPC, which happens to be in AZ Y.

The originating traffic from the instance in the spoke 1 VPC will enter the security VPC through the TGW attachment in AZ X and be inspected by the appliance residing in the same AZ X. If the firewall permits the traffic, it will send it back to the same TGW ENI, and the traffic will exit towards its destination through the AZ it came from.

However, in this case, the return traffic coming back from the resource in the destination account (spoke 2 VPC) may enter the security VPC on the TGW attachment ENI in AZ Y. It will then be sent for inspection to the second firewall residing in AZ Y. As this appliance is not aware of the originating request from the EC2 instance in spoke 1 VPC, the traffic will be dropped.

Figure 4 – Transit Gateway appliance mode.

Based on the above, it seems logical that whenever we have a centralized inspection scenario, appliance mode should be enabled. It certainly must be for east-west connectivity, but what about north-south?

During our PoC tests with appliance mode enabled for north-south traffic inspection, we observed an issue with FTP passive connections where we saw the error “IP addresses of control and data connection do not match” while trying to connect to an FTP server. This looked strange at first, as with appliance mode enabled we should have session affinity. Why were we seeing an IP mismatch error?

In fact, FTP uses two different connections: one for control and another for data. In our case, those were ending up on different TGW VPC attachment ENIs/AZs and were exiting through different firewalls. After confirming this behavior with AWS, we solved the issue by disabling appliance mode for inbound and outbound inspection.
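A toy model makes the FTP behavior easier to see. It assumes a per-flow hash that includes ports (again a stand-in, not the real Transit Gateway algorithm) and a different, hypothetical egress IP behind each AZ's firewall. The control and data connections are separate flows, so they can hash to different AZs and leave with different source IPs.

```python
import hashlib

AZS = ["az-x", "az-y"]
# Hypothetical public IP used for source NAT behind each AZ's firewall.
EGRESS_IP = {"az-x": "203.0.113.10", "az-y": "203.0.113.20"}


def flow_az(client, server):
    """Toy per-flow hash over both (ip, port) endpoints."""
    key = repr(sorted([client, server])).encode()
    return AZS[hashlib.sha256(key).digest()[0] % len(AZS)]


def egress_ip(client, server):
    """Source IP the FTP server would see for this connection."""
    return EGRESS_IP[flow_az(client, server)]


CLIENT_IP, SERVER_IP = "10.0.3.15", "198.51.100.7"  # illustrative addresses

# Control connection to port 21.
control_ip = egress_ip((CLIENT_IP, 50000), (SERVER_IP, 21))

# Passive-mode data connections to a range of server-chosen ports.
mismatched_ports = [
    port
    for port in range(30000, 30064)
    if egress_ip((CLIENT_IP, 50001), (SERVER_IP, port)) != control_ip
]
print(f"{len(mismatched_ports)} of 64 data ports would exit via a different IP")
```

With source AZ affinity instead (appliance mode disabled), both connections from the same client stay in the client's AZ and exit through the same firewall.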

In practice, this means that if you want to inspect both east-west and north-south traffic, you will need two security VPCs (one for each leg). This is because appliance mode is configured on the TGW attachment, and you can have only one attachment to the Transit Gateway per VPC. Appliance mode should be enabled on the east-west VPC attachment and disabled on the north-south attachment.
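As a minimal sketch of that split, the helper below builds the request parameters for the EC2 `ModifyTransitGatewayVpcAttachment` API, which exposes an `ApplianceModeSupport` option. The attachment IDs are placeholders, and the call through boto3 is shown only as a comment so the snippet runs without AWS credentials.

```python
def appliance_mode_request(attachment_id, enabled):
    """Build kwargs for the EC2 ModifyTransitGatewayVpcAttachment call."""
    return {
        "TransitGatewayAttachmentId": attachment_id,
        "Options": {"ApplianceModeSupport": "enable" if enabled else "disable"},
    }


# Placeholder attachment IDs, one per security VPC.
requests = [
    appliance_mode_request("tgw-attach-eastwest-example", enabled=True),
    appliance_mode_request("tgw-attach-northsouth-example", enabled=False),
]

for request in requests:
    # With boto3 installed and credentials configured, this could be sent as:
    #   boto3.client("ec2").modify_transit_gateway_vpc_attachment(**request)
    print(request)
```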

How Many Gateway Load Balancers Should We Have?

We can have up to 20 GWLBs per region and up to 10 per VPC. On its own, each GWLB could have up to 300 targets per Availability Zone. One or more firewall appliances could be targets for more than one GWLB.

Cost-wise, GWLBs are charged on “running per hour” plus load capacity units (LCUs) used.

If, for example, we have two GWLBs running within our security VPC, the “running per hour” cost will be distributed equally between the two. The LCU cost will be distributed as well, but as it depends on dynamic dimensions such as new or active connections and bandwidth, it will be dispersed unevenly.

If we have a single GWLB targeting a pair of firewall appliances, the traffic in both directions will hit the same firewall interface. Depending on how you define the zones on the firewall, it will usually mean that no matter where the traffic is originating from, on the firewall it will be tagged as coming from the same zone.

You can still inspect it without issues by applying the respective policies and rules, but it could be counter-intuitive for firewall administrators if they are not familiar with this behavior.

To help with this, and if we are willing to absorb the cost, we can logically isolate the inbound and outbound traffic by creating two GWLBs (and, respectively, two service endpoints): one for the inbound and one for the outbound leg. Both GWLBs could still target the same firewall appliances, but on different interfaces (ENIs). Thus, the network appliance will see the traffic as if it’s coming from two distinct zones.

We should take into account that this may lead to additional cost on top of the one described above, as we’ll need to create distinct GWLB endpoints for each leg. We must also take into account that the inbound and outbound endpoints will be multiplied by the number of AZs in which our solution is operating.
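A back-of-the-envelope sketch of these cost drivers follows. The rates and LCU figures are placeholders for illustration, not actual AWS prices, and 730 is used as an approximate number of hours in a month.

```python
HOURS_PER_MONTH = 730  # approximation


def gwlb_monthly_cost(lcu_hours_per_gwlb, gwlb_hourly_rate, lcu_hourly_rate):
    """Fixed "running per hour" charge per GWLB plus usage-based LCU charge."""
    fixed = len(lcu_hours_per_gwlb) * HOURS_PER_MONTH * gwlb_hourly_rate
    usage = sum(lcu_hours_per_gwlb) * lcu_hourly_rate
    return fixed + usage


def endpoint_count(legs, az_count):
    """GWLB endpoints needed in one VPC: one per leg per AZ."""
    return legs * az_count


# Placeholder rates (NOT actual AWS pricing).
GWLB_RATE, LCU_RATE = 0.01, 0.004

one_gwlb = gwlb_monthly_cost([1000], GWLB_RATE, LCU_RATE)
# Same total traffic, split unevenly between an inbound and an outbound GWLB.
two_gwlbs = gwlb_monthly_cost([700, 300], GWLB_RATE, LCU_RATE)

print(f"one GWLB:  {one_gwlb:.2f}")
print(f"two GWLBs: {two_gwlbs:.2f}")
print(f"endpoints for 2 legs in 3 AZs: {endpoint_count(2, 3)}")
```

In this simplified model the second GWLB adds only its fixed hourly charge, since the total LCU usage is assumed to stay the same; the endpoint count is what grows with both legs and AZs.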

Which Device Should Do the NAT?

Depending on the network appliance type (Palo Alto VM-Series Firewalls, for example), we can potentially enable overlay routing. This lets us use a two-zone policy to inspect traffic leaving (egressing) our AWS estate. It allows packets to exit the firewall through a different interface than the one through which they entered.

If the encapsulated packet received over the GENEVE protocol used between the GWLB and the network appliance is going to an outbound destination, the firewall will decapsulate it. Then, it will forward it to the IGW, provided the secondary interface has a route to it.
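To give a feel for what decapsulation involves, here is a minimal sketch that parses the 8-byte GENEVE base header defined in RFC 8926 and returns the inner packet. It is not how any particular firewall implements it; the sample packet is fabricated, and the options GWLB attaches are skipped rather than interpreted.

```python
import struct
from typing import NamedTuple, Tuple


class GeneveHeader(NamedTuple):
    version: int
    options_len: int    # length of the variable options, in bytes
    protocol_type: int  # EtherType of the inner packet (0x0800 = IPv4)
    vni: int            # 24-bit virtual network identifier


def decapsulate(payload: bytes) -> Tuple[GeneveHeader, bytes]:
    """Split a GENEVE UDP payload into its base header and inner packet."""
    if len(payload) < 8:
        raise ValueError("payload shorter than the GENEVE base header")
    first, _flags, proto, vni_and_reserved = struct.unpack("!BBHI", payload[:8])
    version = first >> 6
    options_len = (first & 0x3F) * 4  # Opt Len is counted in 4-byte words
    vni = vni_and_reserved >> 8       # drop the trailing reserved byte
    inner_start = 8 + options_len
    if len(payload) < inner_start:
        raise ValueError("payload shorter than the declared options length")
    return GeneveHeader(version, options_len, proto, vni), payload[inner_start:]


# Fabricated sample: version 0, 8 bytes of options, IPv4 inner packet.
sample = (
    struct.pack("!BBHI", 0x02, 0x00, 0x0800, 0xABCDEF << 8)
    + b"\x00" * 8
    + b"inner-packet"
)
header, inner = decapsulate(sample)
print(header, inner)
```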

The outbound traffic flows will differ in each case.

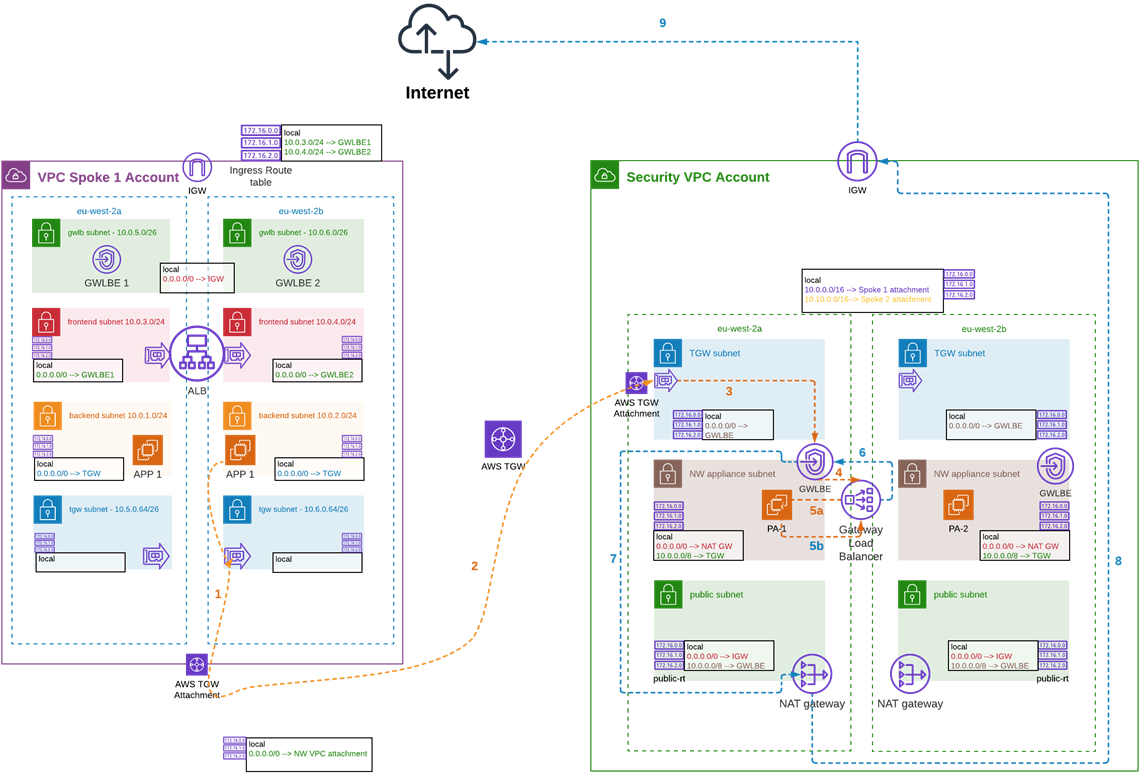

- If we use NAT Gateway:

Figure 5 – North-south inspection with GWLB – Outbound with NAT GW.

- If we use overlay routing on the firewall:

Figure 6 – North-south inspection with GWLB – Outbound with Overlay Routing.

As we need the firewall appliances to inspect our traffic, and since they are going to run 24/7 anyway, by utilizing overlay routing we could potentially save some of the cost of running NAT gateways in the security VPC.

Conclusion

SoftwareOne has a proven record of successful implementations of centralized inspection with AWS Transit Gateway and site-to-site VPN attachments for customers.

Since Gateway Load Balancer (GWLB) was announced at re:Invent 2020, we have migrated several of them to use the new approach and benefit from the performance and scalability of the updated solution.

We are interested in hearing how you secure your workloads in the cloud, as well as the challenges you face and the solutions that work for you.

Here are a few resources to help you learn more:

- Introducing AWS Gateway Load Balancer

- Scaling network traffic inspection using GWLB

- Centralized inspection architecture with GWLB and AWS Transit Gateway

- Gateway Load Balancer category within the AWS Networking Blog

The content and opinions in this blog are those of the third-party author and AWS is not responsible for the content or accuracy of this post.

SoftwareOne – AWS Partner Spotlight

SoftwareOne is an AWS Premier Tier Consulting Partner and leading global provider of end-to-end software and cloud technology solutions that help clients build, migrate, manage, and modernize their applications and workloads.