AWS Big Data Blog

Category: Amazon Redshift

Fine-grained entitlements in Amazon Redshift: A case study from TrustLogix

This post is co-written with Srikanth Sallaka from TrustLogix as the lead author. TrustLogix is a cloud data access governance platform that monitors data usage to discover patterns, provide insights on least privileged access controls, and manage fine-grained data entitlements across data lake storage solutions like Amazon Simple Storage Service (Amazon S3), data warehouses like […]

Cross-account streaming ingestion for Amazon Redshift

As AWS’s fast, petabyte-scale cloud data warehouse delivering the best price-performance, Amazon Redshift makes it simple and cost-effective to analyze all your data using standard SQL, your existing ETL (extract, transform, and load), business intelligence (BI), and reporting tools quickly and securely. Tens of thousands of customers use Amazon Redshift to analyze exabytes of data […]

Use Amazon Redshift Spectrum with row-level and cell-level security policies defined in AWS Lake Formation

Data warehouses and data lakes are key to an enterprise data management strategy. A data lake is a centralized repository that consolidates your data in any format at any scale and makes it available for different kinds of analytics. A data warehouse, on the other hand, has cleansed, enriched, and transformed data that is optimized […]

Easy analytics and cost-optimization with Amazon Redshift Serverless

Amazon Redshift Serverless makes it easy to run and scale analytics in seconds without the need to set up and manage data warehouse clusters. With Redshift Serverless, users such as data analysts, developers, business professionals, and data scientists can get insights from data by simply loading and querying data in the data warehouse. With Redshift Serverless, […]

Convert Oracle XML BLOB data using Amazon EMR and load to Amazon Redshift

In legacy relational database management systems, data is stored in several complex data types, such as XML, JSON, BLOB, or CLOB. This data might contain valuable information that is often difficult to transform into insights, so you might be looking for ways to load and use this data in a modern cloud data warehouse such as […]

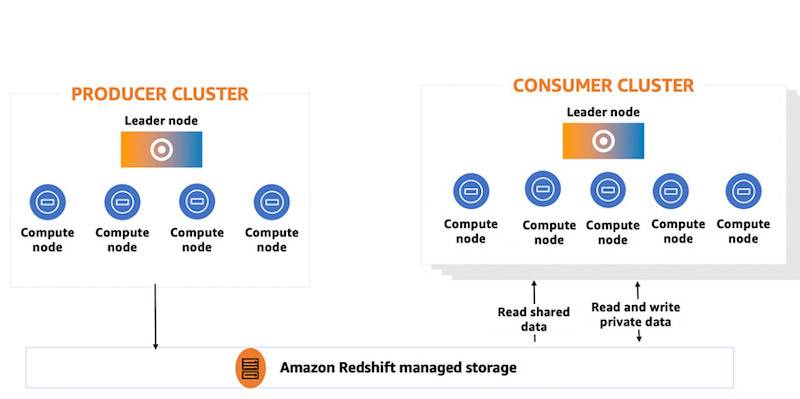

How Fannie Mae built a data mesh architecture to enable self-service using Amazon Redshift data sharing

This post is co-written by Kiran Ramineni and Basava Hubli, from Fannie Mae. Amazon Redshift data sharing enables instant, granular, and fast data access across Amazon Redshift clusters without the need to copy or move data around. Data sharing provides live access to data so that users always see the most up-to-date and transactionally consistent […]

Set up federated access to Amazon Athena for Microsoft AD FS users using AWS Lake Formation and a JDBC client

Tens of thousands of AWS customers choose Amazon Simple Storage Service (Amazon S3) as their data lake to run big data analytics, interactive queries, high-performance computing, and artificial intelligence (AI) and machine learning (ML) applications to gain business insights from their data. On top of these data lakes, you can use AWS Lake Formation to […]

Amazon Redshift data sharing best practices and considerations

Amazon Redshift is a fast, fully managed cloud data warehouse that makes it simple and cost-effective to analyze all your data using standard SQL and your existing business intelligence (BI) tools. Amazon Redshift data sharing allows for a secure and easy way to share live data for reading across Amazon Redshift clusters. It allows an […]

From centralized architecture to decentralized architecture: How data sharing fine-tunes Amazon Redshift workloads

Amazon Redshift is a fast, petabyte-scale cloud data warehouse delivering the best price-performance. It makes it fast, simple, and cost-effective to analyze all your data using standard SQL and your existing business intelligence (BI) tools. Today, tens of thousands of customers run business-critical workloads on Amazon Redshift. With the significant growth of data for big […]

Build a resilient Amazon Redshift architecture with automatic recovery enabled

Amazon Redshift provides resiliency in the event of a single point of failure in a cluster, including automatically detecting and recovering from drive and node failures. The Amazon Redshift relocation feature adds another level of availability, and this post focuses on explaining this automatic recovery feature. When the cluster relocation feature is enabled […]