AWS Database Blog

A serverless solution to schedule your Amazon DynamoDB On-Demand Backup

We recently released On-Demand Backup for Amazon DynamoDB. Using On-Demand Backup, you can create full backups of your DynamoDB tables, helping you meet your corporate and governmental regulatory requirements for data archiving. You can back up tables ranging from a few megabytes to hundreds of terabytes of data, with no impact on the performance or availability of your production applications.

With On-Demand Backup, you can initiate a backup process on your own, but what if you want to schedule backups? Adding the power of serverless computing, you can create an AWS Lambda function that complements On-Demand Backup with the ability to set a schedule.

This blog post explains how you can create a serverless solution to schedule backups of your DynamoDB tables.

Solution overview

Let’s assume you want to take a backup of one of your DynamoDB tables each day. A simple way to achieve this is to use an Amazon CloudWatch Events rule to trigger an AWS Lambda function daily. In this scenario, your Lambda function contains the code required to call the DynamoDB CreateBackup API operation. Setting this up requires configuring an IAM role, setting up a CloudWatch Events rule, and creating a Lambda function. Using AWS CloudFormation, you can automate all these steps.
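To make the CreateBackup call concrete, here is a minimal sketch of what the Lambda function might do for one table. It is not the script from this post. The helper names `backup_name_for` and `create_table_backup` and the timestamped naming scheme are assumptions for illustration, and `dynamodb` is assumed to be a boto3 DynamoDB client.

```python
from datetime import datetime, timezone


def backup_name_for(table_name, now=None):
    """Build a timestamped backup name (hypothetical naming scheme)."""
    now = now or datetime.now(timezone.utc)
    return f"{table_name}_{now.strftime('%Y%m%d_%H%M%S')}"


def create_table_backup(dynamodb, table_name, now=None):
    """Call CreateBackup for one table and return the new backup's ARN.

    `dynamodb` is assumed to be a boto3 client: boto3.client("dynamodb").
    """
    response = dynamodb.create_backup(
        TableName=table_name,
        BackupName=backup_name_for(table_name, now),
    )
    return response["BackupDetails"]["BackupArn"]
```

Inside a Lambda handler, this could be called as `create_table_backup(boto3.client("dynamodb"), "Orders")`, where `Orders` is a placeholder table name. The role that the function runs under needs permission for the `dynamodb:CreateBackup` action.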

Following is an example that shows how to configure scheduled backups using CloudFormation. The Python script and CloudFormation template are also available at this GitHub location.

Create a Python script

First, you need to create a Python script for Lambda. To do so, take the following steps:

- Create a file named ddbbackup.py and copy the sample script into it. This sample code takes a backup of the table that you specify when you create the CloudFormation stack, in the AWS Region where the Lambda function runs. It also deletes existing backups of that table, except those taken within the backup retention days that you specify in the CloudFormation stack.

- Add ddbbackup.py to a .zip file.

- Upload the .zip file to an Amazon S3 bucket.
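The retention behavior described in the first step can be sketched as follows. This is an illustration under stated assumptions, not the script from the GitHub location: the function names are hypothetical, `dynamodb` is assumed to be a boto3 DynamoDB client, and "older than the retention days" is interpreted as "created before now minus `retention_days`".

```python
from datetime import datetime, timedelta, timezone


def find_expired_backups(dynamodb, table_name, retention_days, now=None):
    """Return ARNs of on-demand backups created before the retention cutoff."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=retention_days)
    request = {
        "TableName": table_name,
        "BackupType": "USER",           # on-demand backups only
        "TimeRangeUpperBound": cutoff,  # only backups created before the cutoff
    }
    expired = []
    while True:
        page = dynamodb.list_backups(**request)
        expired.extend(s["BackupArn"] for s in page.get("BackupSummaries", []))
        last_arn = page.get("LastEvaluatedBackupArn")
        if not last_arn:
            return expired
        # Ask for the next page, starting after the last backup seen.
        request["ExclusiveStartBackupArn"] = last_arn


def delete_expired_backups(dynamodb, table_name, retention_days, now=None):
    """Delete every expired backup and return the ARNs that were deleted."""
    expired = find_expired_backups(dynamodb, table_name, retention_days, now)
    for arn in expired:
        dynamodb.delete_backup(BackupArn=arn)
    return expired
```

The sketch collects all expired ARNs before deleting anything, so pagination never points at a backup that was just removed. The function's role would also need the `dynamodb:ListBackups` and `dynamodb:DeleteBackup` permissions.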

Deploy the Lambda function

Now your code is ready. Let’s deploy this code using AWS CloudFormation:

- Copy the CloudFormation template from GitHub. This template performs these operations:

  - Creates an IAM role with permissions to perform DynamoDB backups.

  - Creates a Lambda function using the Python script that you created earlier. The template asks for the Amazon S3 path of the .zip file, the name of your DynamoDB table, and the backup retention as parameters during stack creation.

  - Schedules a CloudWatch Events rule to trigger the Lambda function daily.

- Go to the AWS CloudFormation console and choose the desired AWS Region. In this example, I use the N. Virginia (US-EAST-1) Region.

- Choose Create Stack.

- On the Select Template page, choose File, and then choose the CloudFormation template that you copied earlier (DynamoDBScheduleBackup.json).

- Choose Next.

- Enter a value for Stack Name.

- Enter the name of the S3 bucket, the name of the .zip file that contains the Python script, the table name, and the backup retention.

- Choose Next, and then choose Next on the Options page.

- Select I acknowledge that AWS CloudFormation might create IAM resources, and then choose Create.

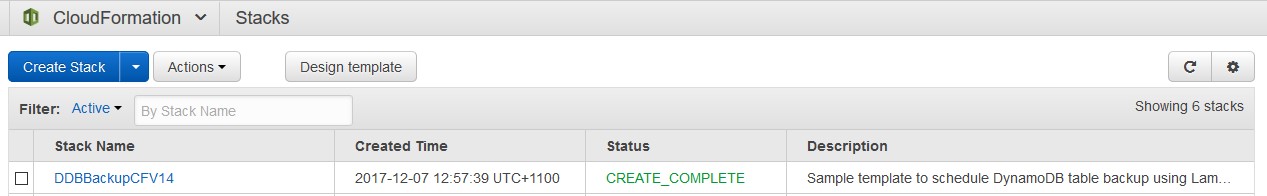

At this stage, CloudFormation starts creating the resources based on the template that you uploaded earlier. After a few minutes, you should see that the stack has been created successfully.
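If you prefer to script the console steps above, the same stack could be created with boto3. The following is only a sketch. The parameter keys (`S3Bucket`, `S3Key`, `TableName`, `BackupRetentionDays`) are hypothetical, because the template's actual parameter names are not shown in this post; check them against DynamoDBScheduleBackup.json before use. `cloudformation` is assumed to be a boto3 CloudFormation client.

```python
def build_stack_request(stack_name, template_body, s3_bucket, s3_key,
                        table_name, retention_days):
    """Assemble the keyword arguments for CloudFormation's CreateStack call."""
    # Hypothetical parameter keys: replace with the names the template declares.
    parameters = {
        "S3Bucket": s3_bucket,
        "S3Key": s3_key,
        "TableName": table_name,
        "BackupRetentionDays": retention_days,
    }
    return {
        "StackName": stack_name,
        "TemplateBody": template_body,
        "Parameters": [
            {"ParameterKey": key, "ParameterValue": str(value)}
            for key, value in parameters.items()
        ],
        # Equivalent of the "might create IAM resources" acknowledgment.
        "Capabilities": ["CAPABILITY_IAM"],
    }


def create_backup_stack(cloudformation, request):
    """Create the stack and block until creation completes."""
    stack_id = cloudformation.create_stack(**request)["StackId"]
    cloudformation.get_waiter("stack_create_complete").wait(StackName=stack_id)
    return stack_id
```

A call might look like `create_backup_stack(boto3.client("cloudformation", region_name="us-east-1"), request)`. If the template gives its IAM resources custom names, `CAPABILITY_NAMED_IAM` would be needed instead.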

Check the resources created

Let’s check all the resources created by the CloudFormation template:

- Open the CloudWatch console, and then choose Rules. On the Rules page, you see a new rule whose name starts with the stack name that you assigned when you created the CloudFormation stack.

- Select the rule. On the summary page, you can see that the rule is attached to a Lambda function and triggers the function once a day.

- On the Rules summary page, choose your resource under Resource name. The Lambda console opens for the function that CloudFormation created to perform the DynamoDB table backup.

- Choose Test to test whether the Lambda function can take a backup of your DynamoDB table.

- On the Configure test event page, type an event name, ignore the other settings, and choose Create.

- Choose Test. It should show a successful execution result.

- Open the DynamoDB console, and choose Backups. The Backups page shows you the backup that you took using the Lambda function.

- Wait for the CloudWatch rule to trigger the next backup job that you have scheduled.
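The backup check in the DynamoDB console can also be done from code. The sketch below lists a table's backups with their status, newest first. The function name is hypothetical, `dynamodb` is assumed to be a boto3 DynamoDB client, and for brevity the sketch reads only the first page of results.

```python
def list_table_backups(dynamodb, table_name):
    """Return (name, status) pairs for a table's backups, newest first.

    Reads a single ListBackups page, which is enough for a quick check.
    """
    response = dynamodb.list_backups(TableName=table_name)
    summaries = sorted(
        response.get("BackupSummaries", []),
        key=lambda summary: summary["BackupCreationDateTime"],
        reverse=True,
    )
    return [(s["BackupName"], s["BackupStatus"]) for s in summaries]
```

A backup that has finished successfully reports the status `AVAILABLE`. One that is still in progress reports `CREATING`.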

Summary

In this post, I demonstrate a solution to schedule your DynamoDB backups using Lambda, CloudWatch, and CloudFormation. Although the scenario I describe is for a single table backup, you can extend this example to take backups of multiple tables.
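As one possible way to extend the example to multiple tables, the Lambda function could loop over a list of table names and record failures per table, so that one failed backup does not stop the rest. This is a sketch under assumptions: the function name and the naming scheme are hypothetical, and `dynamodb` is assumed to be a boto3 DynamoDB client.

```python
from datetime import datetime, timezone


def backup_tables(dynamodb, table_names, now=None):
    """Back up each table; return ({table: backup ARN}, {table: error text})."""
    now = now or datetime.now(timezone.utc)
    stamp = now.strftime("%Y%m%d_%H%M%S")
    succeeded, failed = {}, {}
    for table_name in table_names:
        try:
            response = dynamodb.create_backup(
                TableName=table_name,
                BackupName=f"{table_name}_{stamp}",
            )
            succeeded[table_name] = response["BackupDetails"]["BackupArn"]
        except Exception as error:
            # Broad catch for brevity; boto3 raises botocore ClientError
            # subclasses for API failures such as a missing table.
            failed[table_name] = str(error)
    return succeeded, failed
```

The list of table names could come from a stack parameter or an environment variable. How it is supplied is a design choice that this post's template does not cover.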

To learn more about DynamoDB On-Demand Backup and Restore, see On-Demand Backup and Restore for DynamoDB in the DynamoDB Developer Guide.

About the Authors

Masudur Rahaman Sayem is a solutions architect at Amazon Web Services. He works with our customers to provide guidance and technical assistance on database projects, helping them improve the value of their solutions when using AWS.
