Accelerate your learning towards AWS Certification exams with automated quiz generation using Amazon SageMaker foundation models

Getting AWS Certified can help you propel your career, whether you’re looking to find a new role, showcase your skills to take on a new project, or become your team’s go-to expert. And because AWS Certification exams are created by experts in the relevant role or technical area, preparing for one of these exams helps you build the required skills identified by skilled practitioners in the field.

Reading the FAQ pages of the AWS services relevant to your certification exam is important for gaining a deeper understanding of each service. However, this can take quite some time: reading and understanding the FAQs of even one service can take half a day. For example, the Amazon SageMaker FAQ alone runs to about 33 printed pages.

Wouldn’t it be an easier and more fun learning experience if you could use a system to test yourself on the AWS service FAQ pages? Actually, you can develop such a system using state-of-the-art language models and a few lines of Python.

In this post, we present a comprehensive guide to deploying a multiple-choice quiz solution for the FAQ pages of any AWS service, based on the AI21 Jurassic-2 Jumbo Instruct foundation model on Amazon SageMaker JumpStart.

Large language models

In recent years, language models have seen a huge surge in size and popularity. In 2018, BERT-large made its debut with its 340 million parameters and innovative transformer architecture, setting the benchmark for performance on NLP tasks. In a few short years, the state of the art in terms of model size has ballooned by over 500 times; OpenAI’s GPT-3 with 175 billion parameters, BLOOM with 176 billion parameters, and AI21 Jurassic-2 Jumbo Instruct with 178 billion parameters are just three examples of large language models (LLMs) raising the bar on natural language processing (NLP) accuracy.

SageMaker foundation models

SageMaker provides a range of models from popular model hubs including Hugging Face, PyTorch Hub, and TensorFlow Hub, and proprietary ones from AI21, Cohere, and LightOn, which you can access within your machine learning (ML) development workflow in SageMaker. Recent advances in ML have given rise to a new class of models known as foundation models, which have billions of parameters and are trained on massive amounts of data. These foundation models can be adapted to a wide range of use cases, such as text summarization, generating digital art, and language translation. Because these models can be expensive to train, customers want to use existing pre-trained foundation models and fine-tune them as needed, rather than train these models themselves. SageMaker provides a curated list of models that you can choose from on the SageMaker console.

With JumpStart, you can find foundation models from different providers, enabling you to get started with foundation models quickly. You can review model characteristics and usage terms, and try out these models using a test UI widget. When you’re ready to use a foundation model at scale, you can do so easily without leaving SageMaker by using pre-built notebooks from model providers. Your data, whether used for evaluating or using the model at scale, is never shared with third parties because the models are hosted and deployed on AWS.

AI21 Jurassic-2 Jumbo Instruct

Jurassic-2 Jumbo Instruct is an LLM by AI21 Labs that can be applied to any language comprehension or generation task. It’s optimized to follow natural language instructions and context, so there is no need to provide it with any examples. The endpoint comes pre-loaded with the model and ready to serve queries via an easy-to-use API and Python SDK, so you can hit the ground running. Jurassic-2 Jumbo Instruct is a top performer on the HELM benchmark, particularly in tasks related to reading and writing.

Solution overview

In the following sections, we go through the steps to test the Jurassic-2 Jumbo Instruct model in SageMaker:

- Choose the Jurassic-2 Jumbo Instruct model on the SageMaker console.

- Evaluate the model using the playground.

- Use a notebook associated with the foundation model to deploy it in your environment.

Access Jurassic-2 Jumbo Instruct through the SageMaker console

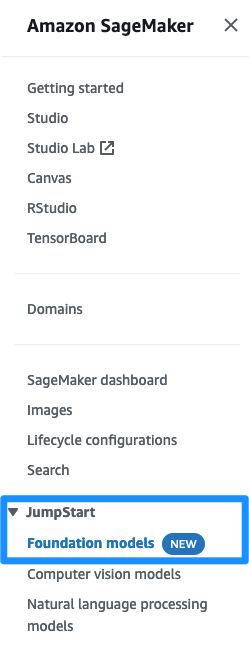

The first step is to log in to the SageMaker console. Under JumpStart in the navigation pane, choose Foundation models to request access to the model list.

After your account is added to the allow list, you can see a list of models on this page and search for the Jurassic-2 Jumbo Instruct model.

Evaluate the Jurassic-2 Jumbo Instruct model in the model playground

On the AI21 Jurassic-2 Jumbo Instruct listing, choose View Model. You will see a description of the model and the tasks that you can perform. Read through the EULA for the model before proceeding.

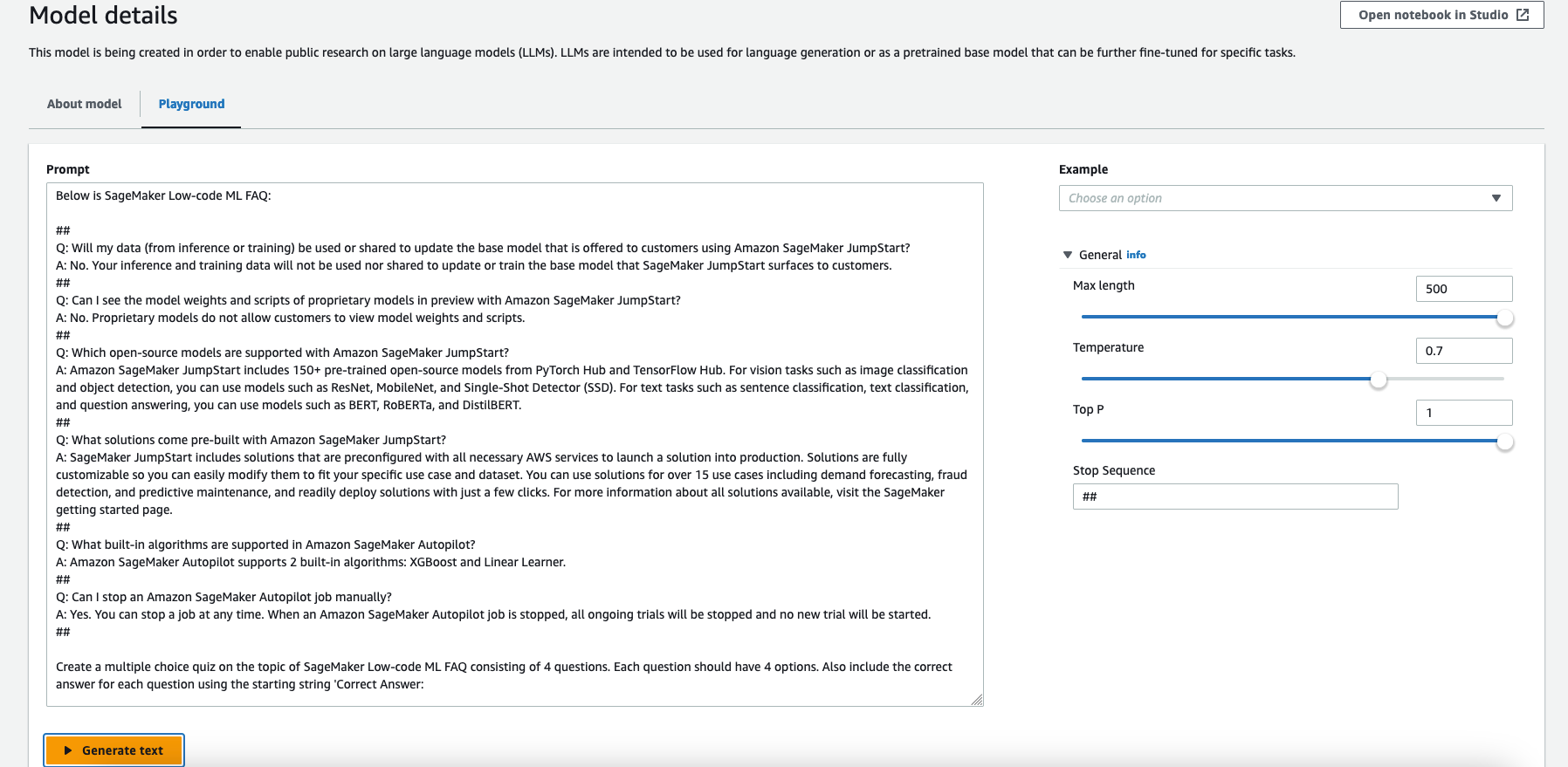

Let’s first try out the model to generate a test based on the SageMaker FAQ page. Navigate to the Playground tab.

On the Playground tab, you can provide sample prompts to the Jurassic-2 Jumbo Instruct model and view the output.

Note that you can use a maximum of 500 tokens. We set the Max length to 500, which is the maximum number of tokens to generate. This model has an 8,192-token context window (the length of the prompt plus completion should be at most 8,192 tokens).
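The context-window constraint is simple arithmetic: the prompt tokens plus the requested completion tokens must not exceed 8,192. The following minimal sketch checks that budget. The function name is hypothetical, and the token counts are assumed to come from whatever tokenizer you use, because this post doesn't specify one.

```python
CONTEXT_WINDOW = 8192  # prompt + completion limit stated above


def max_prompt_tokens(max_completion_tokens: int,
                      context_window: int = CONTEXT_WINDOW) -> int:
    """Return how many tokens remain for the prompt after reserving the completion."""
    if not 0 <= max_completion_tokens <= context_window:
        raise ValueError("max_completion_tokens must be between 0 and the context window")
    return context_window - max_completion_tokens


# With Max length set to 500, the prompt can use the remaining budget.
print(max_prompt_tokens(500))  # 7692
```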

To make it easier to see the prompt, you can enlarge the Prompt box.

Because we can use a maximum of 500 tokens, we take a small portion of the Amazon SageMaker FAQs page, the Low-code ML section, for our test prompt.

We use the following prompt:

Prompt engineering is an iterative process. You should be clear and specific, and give the model time to think.

Here we specified the context and used ## as the stop sequence, which signals the model to stop generating after this character or string is generated. It’s useful when using a few-shot prompt.

Next, we are clear and very specific in our prompt, asking for a multiple-choice quiz, consisting of four questions with four options. We ask the model to include the correct answer for each question using the starting string 'Correct Answer:' so we can parse it later using Python:
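As an illustration of this structure, a prompt along these lines could be assembled in Python. This is a sketch only: the instruction wording, the `build_quiz_prompt` name, and the placement of `##` between the context and the instruction are assumptions, not the exact prompt used in this post.

```python
STOP_SEQUENCE = "##"  # the stop sequence described above


def build_quiz_prompt(faq_text: str, num_questions: int = 4, num_options: int = 4) -> str:
    """Wrap a block of FAQ text in a quiz instruction (illustrative wording)."""
    instructions = (
        f"Using the context above, create a multiple-choice quiz consisting of "
        f"{num_questions} questions. Each question should have {num_options} options. "
        f"Include the correct answer for each question on its own line, "
        f"starting with 'Correct Answer:'."
    )
    # Context first, then the separator, then the specific instruction.
    return f"Context:\n{faq_text.strip()}\n{STOP_SEQUENCE}\n{instructions}\n"


# Hypothetical FAQ excerpt; in practice, paste the Low-code ML section here.
prompt = build_quiz_prompt("Q: What is Amazon SageMaker Autopilot?\nA: ...")
print(prompt)
```

Keeping the answer marker as a fixed string makes the later parsing step a plain prefix match.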

A well-designed prompt can make the model more creative and generalized so that it can easily adapt to new tasks. Prompts can also help incorporate domain knowledge on specific tasks and improve interpretability. Prompt engineering can greatly improve the performance of zero-shot and few-shot learning models. Creating high-quality prompts requires careful consideration of the task at hand, as well as a deep understanding of the model’s strengths and limitations.

In the scope of this post, we don’t cover this wide area further.

Copy the prompt and enter it in the Prompt box, then choose Generate text.

This sends the prompt to the Jurassic-2 Jumbo Instruct model for inference. Note that experimenting in the playground is free.

Also keep in mind that despite the cutting-edge nature of LLMs, they are still prone to biases, errors, and hallucinations.

After reading the model output thoroughly and carefully, we can see that the model generated quite a good quiz!

After you have played with the model, it’s time to use the notebook and deploy it as an endpoint in your environment. We use a small Python function to parse the output and simulate an interactive test.

Deploy the Jurassic-2 Jumbo Instruct foundation model from a notebook

You can use the following sample notebook to deploy Jurassic-2 Jumbo Instruct using SageMaker. Note that this example uses an ml.p4d.24xlarge instance. If your default limit for your AWS account is 0, you need to request a limit increase for this GPU instance.

Let’s create the endpoint using SageMaker inference. First, we set the necessary variables, then we deploy the model from the model package:
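The deployment step can be sketched with the SageMaker Python SDK's `ModelPackage` class, as follows. This is a minimal sketch under stated assumptions, not the code from the sample notebook: the ARN, role, and endpoint name in the usage comment are placeholders you must supply, and the `model_cls` parameter is a hypothetical hook that lets you substitute a stand-in class when trying the function without AWS access.

```python
def deploy_from_model_package(model_package_arn, role_arn, endpoint_name,
                              instance_type="ml.p4d.24xlarge", model_cls=None):
    """Create a SageMaker endpoint from a model package and return its name."""
    if model_cls is None:
        # Imported lazily so the function can be defined without the SDK installed.
        from sagemaker import ModelPackage
        model_cls = ModelPackage

    # Set the necessary variables, then deploy from the model package.
    model = model_cls(role=role_arn, model_package_arn=model_package_arn)
    model.deploy(
        initial_instance_count=1,
        instance_type=instance_type,
        endpoint_name=endpoint_name,
    )
    return endpoint_name


# Usage (placeholders, not real identifiers):
# deploy_from_model_package("<model-package-arn>", "<execution-role-arn>", "j2-jumbo-instruct")
```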

After the endpoint is deployed, you can run inference queries against the model using the following code snippet:
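One way to query the endpoint is through the `invoke_endpoint` call of the boto3 SageMaker Runtime client, sketched below. The request fields (`prompt`, `maxTokens`, `temperature`, `stopSequences`) and the response path (`completions[0].data.text`) reflect our understanding of AI21's completion JSON format and should be treated as assumptions; check them against the model's notebook. The function name and the `runtime_client` parameter are hypothetical.

```python
import json


def generate_quiz(prompt, endpoint_name, runtime_client=None, max_tokens=500):
    """Send a prompt to the deployed endpoint and return the generated text."""
    if runtime_client is None:
        # Imported lazily; requires AWS credentials and a Region to be configured.
        import boto3
        runtime_client = boto3.client("sagemaker-runtime")

    # Assumed request schema for the Jurassic-2 completion API.
    payload = {
        "prompt": prompt,
        "maxTokens": max_tokens,
        "temperature": 0,
        "stopSequences": ["##"],
    }
    response = runtime_client.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType="application/json",
        Body=json.dumps(payload),
    )
    result = json.loads(response["Body"].read())
    # Assumed response schema: the first completion's text.
    return result["completions"][0]["data"]["text"]
```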

With the Jurassic-2 Jumbo Instruct foundation model deployed on a SageMaker endpoint backed by an ml.p4d.24xlarge instance, you can use a prompt with 4,096 tokens. You can take the same prompt we used in the playground and add many more questions. In this example, we added the FAQ’s entire Low-code ML section as context into the prompt.

We can see the output of the model, which generated a multiple-choice quiz with four questions and four options for each question.

Now you can develop a Python function to parse the output and create an interactive multiple-choice quiz.

It’s quite straightforward to develop such a function with a few lines of code. You can parse the answer easily because the model created a line with “Correct Answer: ” for each question, exactly as we requested in the prompt. We don’t provide the Python code for the quiz generation in the scope of this post.
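Although the original quiz code is out of scope here, a minimal sketch of such a parser might look like the following. It assumes a particular output layout: question text, then options labeled `A.` through `D.` (or `A)` through `D)`), then a `Correct Answer:` line whose first character is the option letter. The model's real output may differ, so treat the `parse_quiz` function and its regular expression as hypothetical and adjust them to what you observe.

```python
import re

OPTION_RE = re.compile(r"^([A-D])[.)]\s*(.+)$")  # e.g. "B. SageMaker Canvas"
ANSWER_PREFIX = "Correct Answer:"


def parse_quiz(text):
    """Split model output into a list of {question, options, answer} dicts."""
    questions = []
    current = {"question": "", "options": {}}
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line:
            continue
        if line.startswith(ANSWER_PREFIX):
            # The answer line closes the current question.
            answer = line[len(ANSWER_PREFIX):].strip()
            current["answer"] = answer[:1].upper()
            questions.append(current)
            current = {"question": "", "options": {}}
            continue
        match = OPTION_RE.match(line)
        if match:
            current["options"][match.group(1)] = match.group(2)
        else:
            # Anything else is treated as (possibly multi-line) question text.
            current["question"] = (current["question"] + " " + line).strip()
    return questions
```

A question that never receives a `Correct Answer:` line is dropped, which is a deliberate choice here: an ungradable question is not useful in a quiz.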

Run the quiz in the notebook

Using the Python function we created earlier and the output from the Jurassic-2 Jumbo Instruct foundation model, we run the interactive quiz in the notebook.
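The interactive loop itself can be sketched as follows. The question structure (a list of dictionaries with `question`, `options`, and `answer` keys) and the `run_quiz` name are assumptions made for this illustration; the `ask` parameter defaults to the built-in `input` so the quiz prompts you in a notebook cell.

```python
def run_quiz(questions, ask=input):
    """Ask each question, report whether the reply is right, and return the grade in percent."""
    correct = 0
    for number, item in enumerate(questions, start=1):
        print(f"\nQuestion {number}: {item['question']}")
        for letter, option in sorted(item["options"].items()):
            print(f"  {letter}. {option}")
        # Only the first character of the reply is compared, case-insensitively.
        reply = ask("Your answer: ").strip().upper()[:1]
        if reply == item["answer"]:
            correct += 1
            print("Correct!")
        else:
            print(f"Incorrect. Correct answer: {item['answer']}")
    return 100.0 * correct / len(questions) if questions else 0.0
```

Calling `run_quiz(questions)` on a four-question quiz and answering three correctly returns `75.0`.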

You can see I answered three out of four questions correctly and got a 75% grade. Perhaps I need to read the SageMaker FAQ a few more times!

Clean up

After you have tried out the endpoint, make sure to remove the SageMaker inference endpoint and the model to prevent any charges:
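As one possible approach, the cleanup can be done with the boto3 SageMaker client, as sketched below. The function looks up the endpoint configuration and model names from the endpoint before deleting all three resources. The `delete_endpoint_resources` name and the `sm_client` parameter are hypothetical; the sample notebook may use the SageMaker Python SDK instead.

```python
def delete_endpoint_resources(endpoint_name, sm_client=None):
    """Delete an endpoint, its endpoint configuration, and the models behind it."""
    if sm_client is None:
        # Imported lazily; requires AWS credentials and a Region to be configured.
        import boto3
        sm_client = boto3.client("sagemaker")

    # Resolve the dependent resource names before deleting anything.
    endpoint = sm_client.describe_endpoint(EndpointName=endpoint_name)
    config_name = endpoint["EndpointConfigName"]
    config = sm_client.describe_endpoint_config(EndpointConfigName=config_name)
    model_names = [variant["ModelName"] for variant in config["ProductionVariants"]]

    sm_client.delete_endpoint(EndpointName=endpoint_name)
    sm_client.delete_endpoint_config(EndpointConfigName=config_name)
    for model_name in model_names:
        sm_client.delete_model(ModelName=model_name)
    return model_names
```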

Conclusion

In this post, we showed you how you can test and use AI21’s Jurassic-2 Jumbo Instruct model using SageMaker to build an automated quiz generation system. This was achieved using a rather simple prompt with a publicly available SageMaker FAQ page’s text embedded and a few lines of Python code.

Similar to the example in this post, you can customize a foundation model for your business with just a few labeled examples. Because all the data is encrypted and doesn’t leave your AWS account, you can trust that your data will remain private and confidential.

Request access to try out the foundation model in SageMaker today, and let us know your feedback!

About the Author

Eitan Sela is a Machine Learning Specialist Solutions Architect with Amazon Web Services. He works with AWS customers to provide guidance and technical assistance, helping them build and operate machine learning solutions on AWS. In his spare time, Eitan enjoys jogging and reading the latest machine learning articles.
