Artificial Intelligence

Category: Learning Levels

Streamline GitHub workflows with generative AI using Amazon Bedrock and MCP

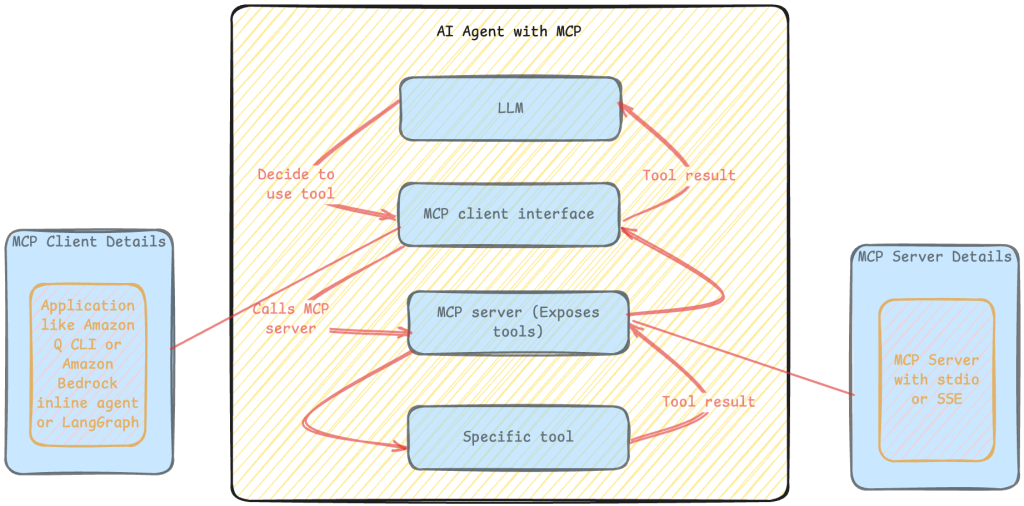

This blog post explores how to create powerful agentic applications using the Amazon Bedrock FMs, LangGraph, and the Model Context Protocol (MCP), with a practical scenario of handling a GitHub workflow of issue analysis, code fixes, and pull request generation.

Generate suspicious transaction report drafts for financial compliance using generative AI

A suspicious transaction report (STR) or suspicious activity report (SAR) is a type of report that a financial organization must submit to a financial regulator if they have reasonable grounds to suspect any financial transaction that has occurred or was attempted during their activities. In this post, we explore a solution that uses FMs available in Amazon Bedrock to create a draft STR.

Fine-tune and deploy Meta Llama 3.2 Vision for generative AI-powered web automation using AWS DLCs, Amazon EKS, and Amazon Bedrock

In this post, we present a complete solution for fine-tuning and deploying the Llama-3.2-11B-Vision-Instruct model for web automation tasks. We demonstrate how to build a secure, scalable, and efficient infrastructure using AWS Deep Learning Containers (DLCs) on Amazon Elastic Kubernetes Service (Amazon EKS).

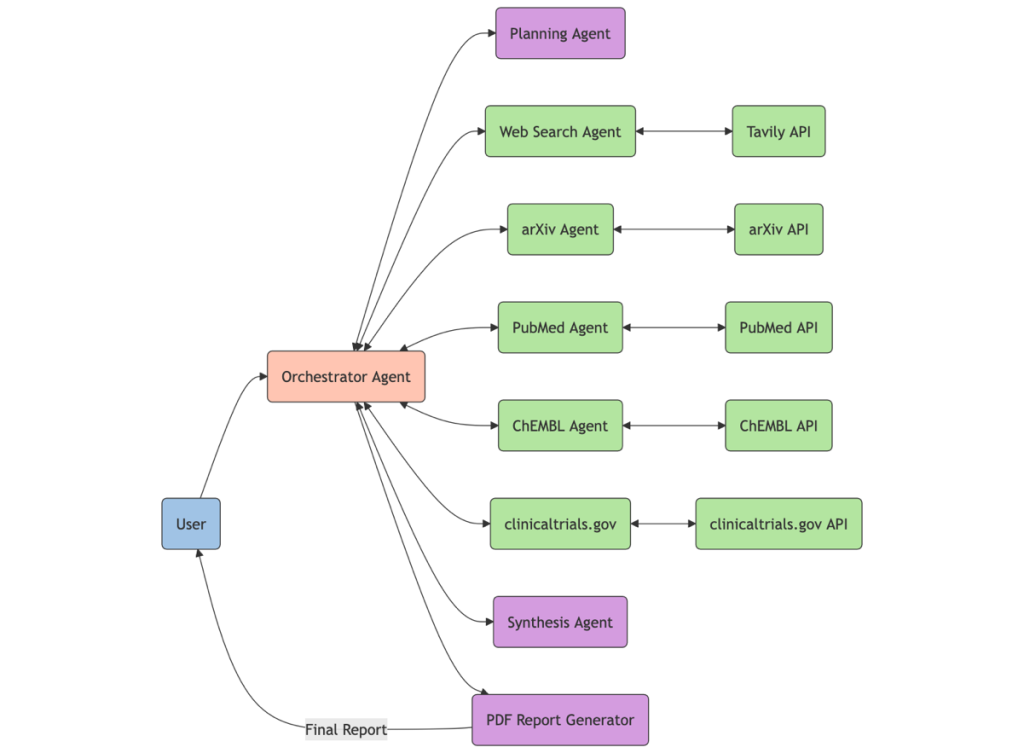

Build a drug discovery research assistant using Strands Agents and Amazon Bedrock

In this post, we demonstrate how to create a powerful research assistant for drug discovery using Strands Agents and Amazon Bedrock. This AI assistant can search multiple scientific databases simultaneously using the Model Context Protocol (MCP), synthesize its findings, and generate comprehensive reports on drug targets, disease mechanisms, and therapeutic areas.

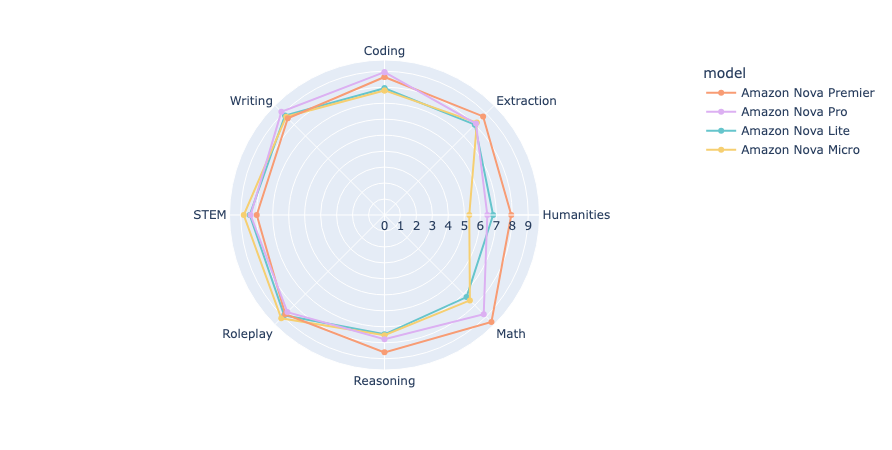

Benchmarking Amazon Nova: A comprehensive analysis through MT-Bench and Arena-Hard-Auto

The repositories for MT-Bench and Arena-Hard were originally developed using OpenAI’s GPT API, primarily employing GPT-4 as the judge. Our team has expanded its functionality by integrating it with the Amazon Bedrock API to enable using Anthropic’s Claude Sonnet on Amazon as judge. In this post, we use both MT-Bench and Arena-Hard to benchmark Amazon Nova models by comparing them to other leading LLMs available through Amazon Bedrock.

Customize Amazon Nova in Amazon SageMaker AI using Direct Preference Optimization

At the AWS Summit in New York City, we introduced a comprehensive suite of model customization capabilities for Amazon Nova foundation models. Available as ready-to-use recipes on Amazon SageMaker AI, you can use them to adapt Nova Micro, Nova Lite, and Nova Pro across the model training lifecycle, including pre-training, supervised fine-tuning, and alignment. In this post, we present a streamlined approach to customize Nova Micro in SageMaker training jobs.

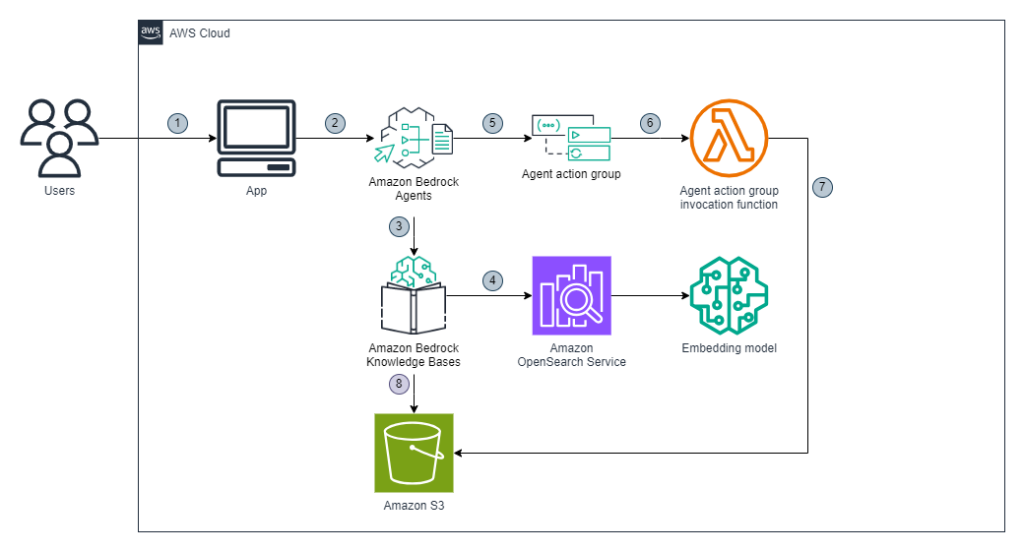

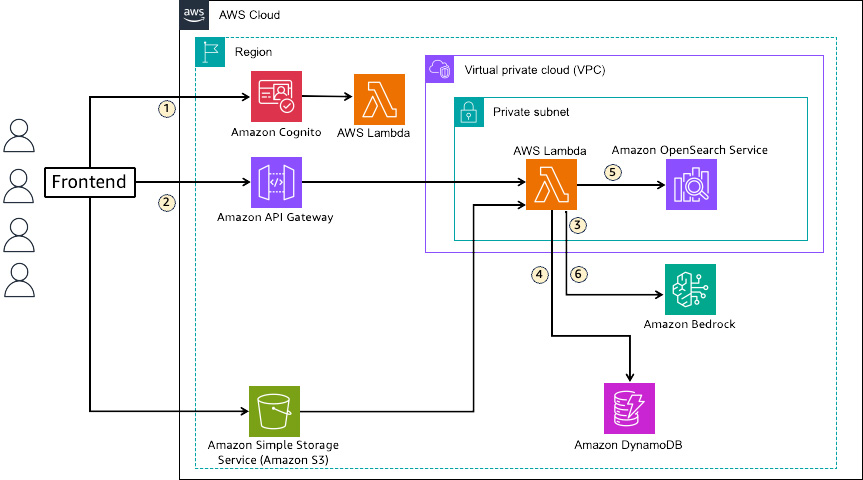

Multi-tenant RAG implementation with Amazon Bedrock and Amazon OpenSearch Service for SaaS using JWT

In this post, we introduce a solution that uses OpenSearch Service as a vector data store in multi-tenant RAG, achieving data isolation and routing using JWT and FGAC. This solution uses a combination of JWT and FGAC to implement strict tenant data access isolation and routing, necessitating the use of OpenSearch Service.

Deploy conversational agents with Vonage and Amazon Nova Sonic

In this post, we explore how developers can integrate Amazon Nova Sonic with the Vonage communications service to build responsive, natural-sounding voice experiences in real time. By combining the Vonage Voice API with the low-latency and expressive speech capabilities of Amazon Nova Sonic, businesses can deploy AI voice agents that deliver more human-like interactions than traditional voice interfaces. These agents can be used as customer support, virtual assistants, and more.

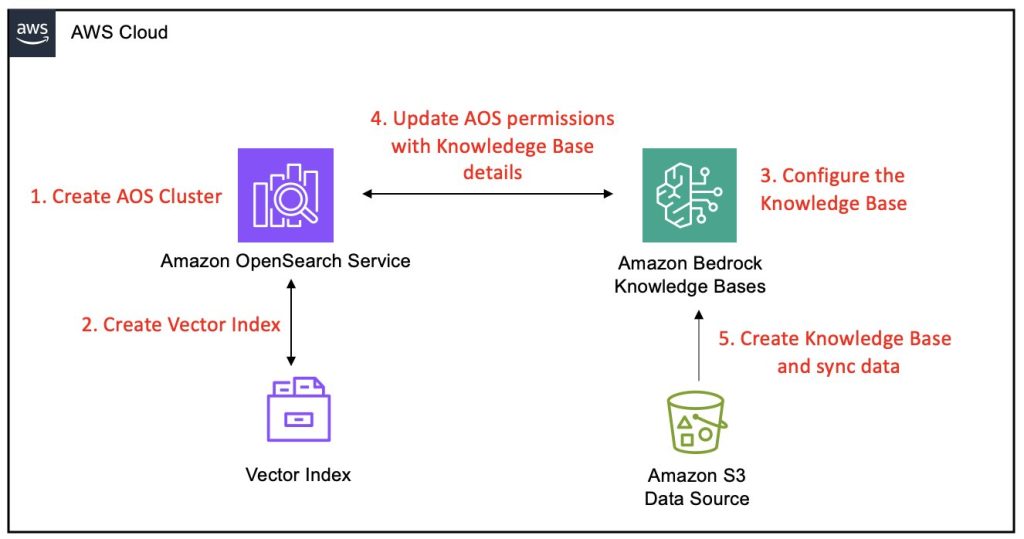

Amazon Bedrock Knowledge Bases now supports Amazon OpenSearch Service Managed Cluster as vector store

Amazon Bedrock Knowledge Bases has extended its vector store options by enabling support for Amazon OpenSearch Service managed clusters, further strengthening its capabilities as a fully managed Retrieval Augmented Generation (RAG) solution. This enhancement builds on the core functionality of Amazon Bedrock Knowledge Bases , which is designed to seamlessly connect foundation models (FMs) with internal data sources. This post provides a comprehensive, step-by-step guide on integrating an Amazon Bedrock knowledge base with an OpenSearch Service managed cluster as its vector store.

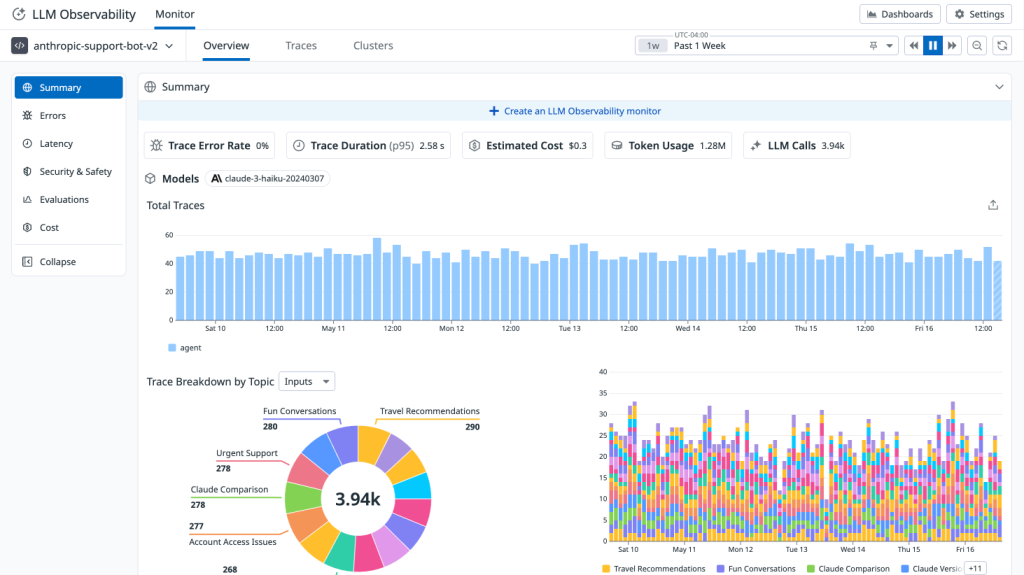

Monitor agents built on Amazon Bedrock with Datadog LLM Observability

We’re excited to announce a new integration between Datadog LLM Observability and Amazon Bedrock Agents that helps monitor agentic applications built on Amazon Bedrock. In this post, we’ll explore how Datadog’s LLM Observability provides the visibility and control needed to successfully monitor, operate, and debug production-grade agentic applications built on Amazon Bedrock Agents.