Use facial recognition to deliver high-end consumer experience with Amazon Kinesis Video Streams and Amazon Rekognition Video

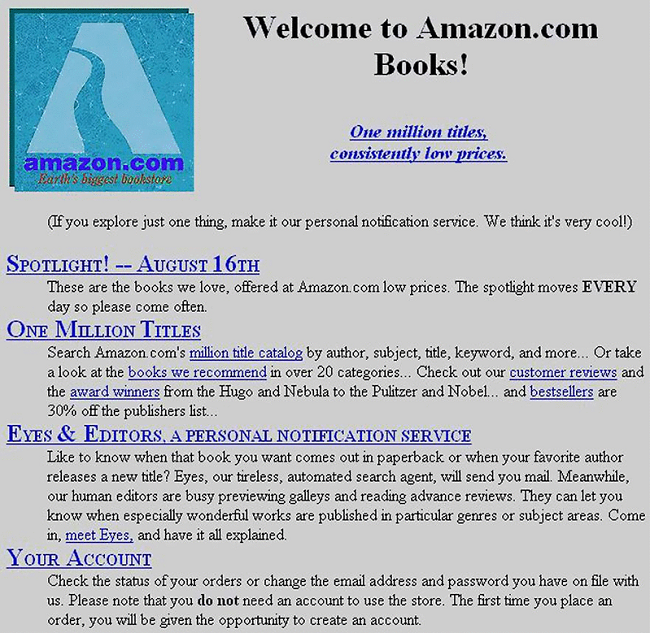

This is a screenshot of the Amazon.com website when it was just a month old, back in 1995. I want to point out two things, one obvious and one not. First, it’s pretty clear that web design has come a long way in the last 23 years. Second, hidden in the text is the announcement of Eyes, a “tireless, automated search agent that will send you mail” when it finds a book that it thinks you might find interesting.

Eyes was the first time Amazon personalized online shopping. This was a step on a journey to reproduce the best service traditionally provided by local establishments that know their customers. We’ve all been lucky enough to get this type of service at some point, receiving an experience that felt tailored to our every need.

Jump forward 23 years and, in many ways, the online experience now leads. You can log on to Amazon.com from anywhere in the world and receive a consistent experience built around your shopping habits, simply because the website knows who you are. This isn’t so easy in person. Walk into your favorite store, even in your home town, and the chances are you’ll be treated as just another anonymous customer.

How can you change that? Imagine that you work for a clothing retailer. You could offer your customers great service if you knew who they were and used this information sensibly. You could suggest items to complement what they’ve already bought. You could avoid suggesting items that you don’t have in their size. You could be sensitive to any issues or complaints the customer has previously made. You just need a shop assistant with a very good memory for faces.

After reading about machine learning on AWS, you’ve decided to build just that. At the heart of your architecture are two new services that were announced at re:Invent 2017: Amazon Kinesis Video Streams and Amazon Rekognition Video. Kinesis Video Streams makes it easy to securely stream video from connected devices to AWS for analytics and machine learning. Rekognition Video integrates with Kinesis Video Streams and enables you to perform real-time face recognition against a private database of face metadata. Here’s the architecture of your solution:

To quickly prototype the system, you’ll use a camera hosted on a Raspberry Pi to watch your store and stream video to AWS using Amazon Kinesis Video Streams. You’ll use Amazon Rekognition Video to analyze this stream, sending its output to a Kinesis Data Stream. An AWS Lambda function will read from this stream and emit messages to an AWS IoT topic. In store, assistants will be given a tablet that’s subscribed to the AWS IoT topic over a WebSocket connection. The tablet can notify the assistant when a known customer enters the shop. The visibility of the tablet will help to make this experience feel more natural. Nobody wants to interact with a shop assistant who appears to magically know everything, but if it’s obvious where the help is coming from, customers will be much more likely to accept the technology. Including AWS IoT in your architecture also enables you to build out your smart store further in the future.

Preparing the Raspberry Pi

Instructions on how to install the Kinesis Video Streams C++ Producer SDK on a Raspberry Pi are in the AWS Documentation. At this stage, follow these through until the end of the section Download and Build the Kinesis Video Streams C++ Producer SDK.

As part of the instructions, an IAM user with permissions to write to Kinesis Video Streams is created. If you move this project to production, perhaps with multiple cameras, it will be better to manage identity using Amazon Cognito. However, for now, an IAM user will do.

Create a Kinesis Video Stream and Kinesis Data Stream

After the install script has finished, use the AWS Management Console to create a new Kinesis Video Stream. Go to the Amazon Kinesis Video Streams Console, and choose the Create button. Call the stream my-stream and check the box to use the default settings:

Whilst you are using the Kinesis console, you also create the Kinesis Data Stream where Rekognition Video will output its results. Go to the Amazon Kinesis console, choose the Data Streams link on the right, and then choose the Create Kinesis stream button. Call this stream AmazonRekognitionResults; the name must start with AmazonRekognition so that the IAM role you create later can write to it. The data flow on this stream won’t be large, so a single shard is sufficient:

Using the AWS Management Console, find and note down the Amazon Resource Name (ARN) of both the video stream and data stream.
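The console steps above can also be scripted. The following is a minimal sketch in Python, assuming boto3 is installed and credentials are configured. The function name and the 24-hour retention value are assumptions made for illustration, not settings taken from this post.

```python
def create_streams(kvs_client, kinesis_client,
                   video_stream_name="my-stream",
                   data_stream_name="AmazonRekognitionResults"):
    """Create both streams and return (video_stream_arn, data_stream_arn)."""
    # Kinesis Video Streams returns the new stream's ARN directly.
    video = kvs_client.create_stream(
        StreamName=video_stream_name,
        DataRetentionInHours=24,  # assumed retention period; adjust as needed
    )
    # A single shard is enough for the small volume of Rekognition results.
    kinesis_client.create_stream(StreamName=data_stream_name, ShardCount=1)
    summary = kinesis_client.describe_stream_summary(StreamName=data_stream_name)
    data_arn = summary["StreamDescriptionSummary"]["StreamARN"]
    return video["StreamARN"], data_arn


# Usage (requires boto3 and AWS credentials):
#   import boto3
#   video_arn, data_arn = create_streams(
#       boto3.client("kinesisvideo"), boto3.client("kinesis"))
```

Passing the clients in as arguments keeps the function easy to exercise with stand-in objects before pointing it at a real account.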

Start the Video Streaming

Back on the Raspberry Pi, you run the following commands from your home directory to start the camera streaming data to AWS:

The first and second parameters sent to the sample app (-w 640 -h 480) determine the resolution of the video stream. The third parameter (my-stream) is the name of the Kinesis Video Stream you created earlier.

If the above command fails with a library not found error, enter the following commands to verify the project has correctly linked to its open source libraries.

After a few seconds, you can see pictures from your camera in the AWS Management Console for your Kinesis Video Stream. You’ve managed to get video from your Raspberry Pi camera into the Cloud! Now, you need to analyze it.

Amazon Rekognition Video

The Rekognition Video service provides a stream processor that you can use to manage the analysis of data from a Kinesis Video Stream. The processor needs permissions to do this, so you go to the IAM console and choose to create a new IAM role. You select Rekognition as the service that will use the role and attach the AmazonRekognitionServiceRole managed policy. This role grants Rekognition the rights to read from any Kinesis Video Stream and write to any Kinesis Data Stream prefixed with AmazonRekognition. After the role has been created, note down the ARN.
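For readers who prefer to script the role rather than use the console, here is a rough sketch in Python with boto3. The role name is hypothetical, and the managed policy ARN path is an assumption that should be checked against the policy shown in the IAM console.

```python
import json

# Assumed ARN of the managed policy named in the text; verify it in the IAM console.
REKOGNITION_POLICY_ARN = (
    "arn:aws:iam::aws:policy/service-role/AmazonRekognitionServiceRole"
)


def rekognition_trust_policy():
    """Trust policy that lets the Rekognition service assume the role."""
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Principal": {"Service": "rekognition.amazonaws.com"},
            "Action": "sts:AssumeRole",
        }],
    }


def create_rekognition_role(iam_client, role_name="RekognitionStreamProcessorRole"):
    """Create the role, attach the managed policy, and return the role ARN."""
    role = iam_client.create_role(
        RoleName=role_name,  # hypothetical name
        AssumeRolePolicyDocument=json.dumps(rekognition_trust_policy()),
    )
    iam_client.attach_role_policy(
        RoleName=role_name, PolicyArn=REKOGNITION_POLICY_ARN)
    return role["Role"]["Arn"]


# Usage: create_rekognition_role(boto3.client("iam"))
```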

There’s one final task before you create your stream processor. You need to create a face collection. This is a private database that stores facial information of known people that you want Rekognition Video to detect. Create a face collection called my-customers using the AWS Command Line Interface (CLI) by typing the following command:
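The same step can be scripted. A minimal sketch in Python, assuming boto3 is installed and credentials are configured; the function name is illustrative and does not come from this post:

```python
def create_face_collection(rekognition_client, collection_id="my-customers"):
    """Create the face collection and return its ARN."""
    response = rekognition_client.create_collection(CollectionId=collection_id)
    return response["CollectionArn"]


# Usage: create_face_collection(boto3.client("rekognition"))
```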

When a customer opts in to your pilot, you’ll take a photo of them, upload the image to Amazon S3, and add them to your face collection with the CLI command:

You modify the statement to replace:

- <bucket> with the name of the Amazon S3 bucket

- <key> with the key of the Amazon S3 object (a PNG or JPEG file)

- <name> with the name of the customer

Amazon Rekognition doesn’t store the actual faces in the collection. Instead, it extracts features from the face and stores this information in a database. This information is used in subsequent operations such as searching a collection for a matching face.
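Adding a customer to the collection can be sketched in Python as follows, assuming boto3 and an image already uploaded to Amazon S3. The function name is hypothetical; the bucket, key, and name arguments correspond to the placeholders listed above.

```python
def index_customer_face(rekognition_client, bucket, key, name,
                        collection_id="my-customers"):
    """Index the face in an S3 image and return the face IDs Rekognition assigned."""
    response = rekognition_client.index_faces(
        CollectionId=collection_id,
        Image={"S3Object": {"Bucket": bucket, "Name": key}},
        # The external image ID is how the customer's name comes back in matches.
        # Rekognition restricts the characters allowed here (for example, no spaces).
        ExternalImageId=name,
    )
    return [record["Face"]["FaceId"] for record in response["FaceRecords"]]


# Usage: index_customer_face(boto3.client("rekognition"), "<bucket>", "<key>", "<name>")
```

The returned list is empty when no face is found in the image, which is worth checking before telling a customer they are enrolled.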

You can now create your stream processor with the following CLI command:

Modify the statement with these replacements:

- <video stream ARN> with the ARN of the Kinesis Video Stream

- <role ARN> with the ARN of the IAM role you created

- <data stream ARN> with the ARN of the Kinesis Data Stream
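A Python sketch of the same request, assuming boto3, shows how the three ARNs fit together. The processor name and the match threshold are assumptions chosen for illustration; they are not values from this post.

```python
def stream_processor_request(video_stream_arn, data_stream_arn, role_arn,
                             name="store-face-processor",  # hypothetical name
                             collection_id="my-customers",
                             match_threshold=85.0):        # assumed threshold
    """Build the keyword arguments for Rekognition's create_stream_processor."""
    return {
        "Name": name,
        "Input": {"KinesisVideoStream": {"Arn": video_stream_arn}},
        "Output": {"KinesisDataStream": {"Arn": data_stream_arn}},
        "RoleArn": role_arn,
        "Settings": {"FaceSearch": {
            "CollectionId": collection_id,
            "FaceMatchThreshold": match_threshold,
        }},
    }


# Usage (requires boto3 and AWS credentials):
#   request = stream_processor_request("<video stream ARN>",
#                                      "<data stream ARN>", "<role ARN>")
#   arn = boto3.client("rekognition").create_stream_processor(
#       **request)["StreamProcessorArn"]
```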

The CLI command returns the ARN of the newly created stream processor. You can start this by running the command:
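In Python, starting the processor and checking that it is coming up might look like the sketch below. It assumes boto3 and the hypothetical processor name store-face-processor.

```python
def start_and_check(rekognition_client, name="store-face-processor"):
    """Start the stream processor and return the status reported afterwards."""
    rekognition_client.start_stream_processor(Name=name)
    # The status is typically STARTING at first and RUNNING shortly afterwards.
    return rekognition_client.describe_stream_processor(Name=name)["Status"]


# Usage: start_and_check(boto3.client("rekognition"))
```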

Giving great service

Rekognition can now analyze the video stream from your Raspberry Pi and put the results of its analysis onto a Kinesis Data Stream. The service matches faces it finds in your Kinesis Video Stream to your customers in the face collection. For each frame it analyzes, Rekognition Video may find many faces and each face may have many potential matches. This information is detailed in JSON documents that Rekognition Video puts onto the Kinesis Data Stream. Such a document might look like this:
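To make the shape of these documents concrete, the sketch below holds a trimmed example as a Python dictionary and picks the best match for each detected face. All values are made up for illustration, many fields (landmarks, pose, quality) are omitted, and the helper name and threshold are assumptions.

```python
# Trimmed, illustrative document; values are invented, not real output.
SAMPLE_DOCUMENT = {
    "InputInformation": {"KinesisVideo": {
        "StreamArn": "arn:aws:kinesisvideo:us-east-1:111122223333:stream/my-stream/0",
        "FrameOffsetInSeconds": 2.0,
    }},
    "StreamProcessorInformation": {"Status": "RUNNING"},
    "FaceSearchResponse": [
        {   # a detected face with two candidate matches
            "DetectedFace": {
                "BoundingBox": {"Height": 0.3, "Width": 0.2, "Left": 0.4, "Top": 0.1},
                "Confidence": 99.9,
            },
            "MatchedFaces": [
                {"Similarity": 97.5,
                 "Face": {"FaceId": "id-1", "ExternalImageId": "alice"}},
                {"Similarity": 82.1,
                 "Face": {"FaceId": "id-2", "ExternalImageId": "bob"}},
            ],
        },
        {   # a detected face with no match in the collection
            "DetectedFace": {
                "BoundingBox": {"Height": 0.2, "Width": 0.1, "Left": 0.7, "Top": 0.2},
                "Confidence": 98.4,
            },
            "MatchedFaces": [],
        },
    ],
}


def best_matches(document, min_similarity=80.0):
    """Return (external_image_id, similarity) for the top match of each face."""
    results = []
    for face in document.get("FaceSearchResponse", []):
        candidates = [m for m in face.get("MatchedFaces", [])
                      if m["Similarity"] >= min_similarity]
        if candidates:
            top = max(candidates, key=lambda m: m["Similarity"])
            results.append((top["Face"].get("ExternalImageId"), top["Similarity"]))
    return results


print(best_matches(SAMPLE_DOCUMENT))  # [('alice', 97.5)]
```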

You set up an AWS Lambda function to process these JSON documents from the stream. Rekognition Video will tell you about all the faces it detects in your store, not just those that it identifies. You can use this information to further enhance the shopping experience in your store. How long do people spend in your store? How long do people spend looking at a particular display? How well do people flow from the store entrance to the displays further into the premises?
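As a starting point for this kind of analysis, the sketch below tallies how many face detections were recognised across a batch of result documents. It is an illustration only: it counts detections per analyzed frame rather than unique people, and the function name is hypothetical.

```python
def summarize_faces(documents):
    """Count face detections in Rekognition Video result documents."""
    detected = 0
    recognised = 0
    for document in documents:
        for face in document.get("FaceSearchResponse", []):
            detected += 1
            # A non-empty MatchedFaces list means the face is in the collection.
            if face.get("MatchedFaces"):
                recognised += 1
    return {
        "detected": detected,
        "recognised": recognised,
        "unrecognised": detected - recognised,
    }


frames = [
    {"FaceSearchResponse": [{"MatchedFaces": [{"Similarity": 95.0}]},
                            {"MatchedFaces": []}]},
    {"FaceSearchResponse": [{"MatchedFaces": []}]},
]
print(summarize_faces(frames))
# {'detected': 3, 'recognised': 1, 'unrecognised': 2}
```

Answering questions about dwell time or customer flow would need more than this, such as comparing frame timestamps and camera positions over time.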

The Rekognition Video output also tells you if any of the faces it finds match those in the face collection. Your Lambda function uses this information to publish to topics in AWS IoT. Your shop assistants hold tablets that are subscribed to these topics. As soon as a known customer is detected in your store, the tablet can start to retrieve relevant information that the assistant can use to deliver great service.
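A Lambda function along these lines could be sketched as below. It assumes a Python runtime with a Kinesis Data Stream trigger, where each record carries a base64-encoded JSON document. The topic prefix, the similarity threshold, and the message payload are assumptions for illustration.

```python
import base64
import json

TOPIC_PREFIX = "store/customers"  # hypothetical AWS IoT topic prefix


def customer_names(event, min_similarity=90.0):
    """Extract the names of matched customers from a Kinesis-triggered event."""
    names = set()
    for record in event.get("Records", []):
        # Kinesis record payloads arrive base64-encoded in the Lambda event.
        document = json.loads(base64.b64decode(record["kinesis"]["data"]))
        for face in document.get("FaceSearchResponse", []):
            for match in face.get("MatchedFaces", []):
                name = match["Face"].get("ExternalImageId")
                if name and match["Similarity"] >= min_similarity:
                    names.add(name)
    return sorted(names)


def lambda_handler(event, context, iot_client=None):
    """Publish one message per recognised customer to an AWS IoT topic."""
    if iot_client is None:
        import boto3  # provided by the Lambda Python runtime
        iot_client = boto3.client("iot-data")
    names = customer_names(event)
    for name in names:
        iot_client.publish(
            topic=f"{TOPIC_PREFIX}/{name}",
            qos=1,
            payload=json.dumps({"customer": name}),
        )
    return {"published": names}
```

Because the same customer usually appears in many consecutive frames, a production version would likely suppress repeat notifications for a short period.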

Summary

Whatever your use case, real-time face recognition with Kinesis Video Streams and Rekognition Video is easy to set up and doesn’t require expensive hardware. The entire system built here is serverless and Rekognition Video qualifies for the AWS Free Tier. If you’ve been building your own solution while reading this post, make sure that you delete any items you’ve created to avoid unnecessary cost, specifically:

- Stop and delete the Rekognition Stream Processor

- Delete the Kinesis Video Stream

- Delete the Kinesis Data Stream

- Delete the Lambda function

- Delete the IAM roles for the Lambda Function and the Stream Processor

- Delete the IAM user the Raspberry Pi uses for access
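The first three items in this list can be scripted. The sketch below assumes boto3 and the names used earlier; the polling interval and retry count are arbitrary choices. It leaves the Lambda function, IAM roles, and IAM user to be removed separately.

```python
import time


def tear_down(rekognition_client, kvs_client, kinesis_client,
              processor_name, video_stream_arn, data_stream_name,
              poll_seconds=2, max_polls=30):
    """Stop and delete the stream processor, then delete both streams."""
    rekognition_client.stop_stream_processor(Name=processor_name)
    # Wait for the processor to leave the STOPPING state before deleting it.
    for _ in range(max_polls):
        status = rekognition_client.describe_stream_processor(
            Name=processor_name)["Status"]
        if status != "STOPPING":
            break
        time.sleep(poll_seconds)
    rekognition_client.delete_stream_processor(Name=processor_name)
    kvs_client.delete_stream(StreamARN=video_stream_arn)
    kinesis_client.delete_stream(StreamName=data_stream_name)


# Usage (requires boto3 and AWS credentials):
#   tear_down(boto3.client("rekognition"), boto3.client("kinesisvideo"),
#             boto3.client("kinesis"), "store-face-processor",
#             "<video stream ARN>", "AmazonRekognitionResults")
```

The face collection is not in the list above; if you no longer need it, it can be removed with the Rekognition delete_collection operation.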

In the meantime, you can find out more about Amazon Kinesis or Amazon Rekognition by going to the AWS website.

About the author

Nick Corbett works in Solution Prototyping and Development. He works with our customers to provide leadership on big data projects, helping them shorten their time to value when using AWS. In his spare time, he follows the Jürgen Klopp revolution.
