Save costs by automatically shutting down idle resources within Amazon SageMaker Studio

July 2023: This post was reviewed for accuracy. The GitHub repository is kept up to date.

Amazon SageMaker Studio provides a unified, web-based visual interface where you can perform all machine learning (ML) development steps, making data science teams up to 10 times more productive. Studio gives you complete access, control, and visibility into each step required to build, train, and deploy models. Studio notebooks are collaborative notebooks that you can launch quickly because you don’t need to set up compute instances and file storage beforehand. Amazon SageMaker is a fully managed service that offers capabilities that abstract the heavy lifting of infrastructure management and provides the agility and scalability you desire for large-scale ML activities with different features and a pay-as-you-use pricing model.

In this post, we demonstrate how to do the following:

- Detect and stop idle resources that are incurring costs within Studio by installing an auto-shutdown Jupyter extension through a lifecycle configuration script

- Enable event notifications to track user profiles within Studio domains that haven’t installed the auto-shutdown extension

- Use the installed auto-shutdown extension to manage Amazon SageMaker Data Wrangler costs by automatically shutting down instances that may result in larger than expected costs

Studio components

In Studio, running notebooks are containerized separately from the JupyterServer UI in order to de-couple compute infrastructure sizing. A Studio notebook runs in an environment defined by the following:

- Instance type – The underlying hardware configuration, which determines the pricing rate. This includes the number and type of processors (vCPU and GPU), and the amount and type of memory.

- SageMaker image – A compatible container image (either SageMaker-provided or custom) that hosts the notebook kernel. The image defines what kernel specs it offers, such as the built-in Python 3 (Data Science) kernel.

- SageMaker kernel gateway app – A running instance of the container image on the particular instance type. Multiple apps can share a running instance.

- Running kernel session – The process that inspects and runs the code contained in the notebook. Multiple open notebooks (kernels) of the same spec and instance type are opened in the same app.

The Studio UI runs as a separate app of type JupyterServer instead of KernelGateway, which allows you to switch an open notebook to different kernels or instance types from within the Studio UI. For more information about how a notebook kernel runs in relation to the KernelGateway app, user, and Studio domain, see Using Amazon SageMaker Studio Notebooks.

Studio billing

There is no additional charge for using Studio. The costs incurred for running Studio notebooks, interactive shells, consoles, and terminals are based on Studio instance type usage. For information about billing along with pricing examples, see Amazon SageMaker Pricing.

When you run a Studio notebook, interactive shell, or image terminal within Studio, you must choose a kernel and an instance type. These resources are launched using a Studio instance based on the chosen type from the UI. If an instance of that type was previously launched and is available, the resource is run on that instance. For CPU-based images, the default instance type is ml.t3.medium. For GPU-based images, the default instance type is ml.g4dn.xlarge. The costs incurred are based on the instance type, and you’re billed separately for each instance. Metering starts when an instance is created, and ends when all the apps on the instance are shut down, or the instance is shut down.
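To make the metering rule concrete, here is a minimal sketch that estimates the charge for one instance from its creation and shutdown times. The hourly rate is a made-up placeholder, not a real price, and the function names are illustrative. Look up the actual rate for your instance type and Region on the Amazon SageMaker Pricing page.

```python
from datetime import datetime, timedelta

# Hypothetical rate for illustration only; not an actual SageMaker price.
ASSUMED_RATE_USD_PER_HOUR = 0.05


def billable_hours(created_at: datetime, shut_down_at: datetime) -> float:
    """Hours in the metered window: from instance creation to shutdown."""
    if shut_down_at < created_at:
        raise ValueError("shut_down_at must not precede created_at")
    return (shut_down_at - created_at).total_seconds() / 3600


def estimated_cost(created_at: datetime, shut_down_at: datetime,
                   hourly_rate_usd: float) -> float:
    """Simplified estimate: metered hours multiplied by an hourly rate."""
    return round(billable_hours(created_at, shut_down_at) * hourly_rate_usd, 2)


start = datetime(2023, 7, 3, 9, 0)

# Instance shut down at the end of an 8-hour working day.
shut_down_promptly = estimated_cost(
    start, start + timedelta(hours=8), ASSUMED_RATE_USD_PER_HOUR)

# Notebook closed, but the instance left running until the next morning.
left_running = estimated_cost(
    start, start + timedelta(hours=24), ASSUMED_RATE_USD_PER_HOUR)

print(shut_down_promptly, left_running)
```

Under these assumptions, the instance that was left running overnight costs three times as much as the one that was shut down promptly, even though the same amount of work was done.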

Shut down the instance to stop incurring charges. If you shut down the notebook running on the instance but don’t shut down the instance, you still incur charges. When you open multiple notebooks on the same instance type, the notebooks run on the same instance even if they’re using different kernels. You’re billed only for the time that one instance is running. You can change the instance type from within the notebook after you open it and shut down individual resources, including notebooks, terminals, kernels, apps, and instances. You can also shut down all resources in one of these categories at the same time. When you shut down a notebook, any unsaved information in the notebook is lost. The notebook is not deleted.

You can shut down an open notebook from the Studio File menu or from the Running Terminals and Kernels pane. The Running Terminals and Kernels pane consists of four sections. Each section lists all the resources of that type. You can shut down each resource individually or shut down all the resources in a section at the same time. When you choose to shut down all resources in a section, the following occurs:

- Running Instances/Running Apps – All instances, apps, notebooks, kernel sessions, Data Wrangler sessions, consoles or shells, and image terminals are shut down. System terminals aren’t shut down. Choose this option to stop the accrual of all charges.

- Kernel Sessions – All kernels, notebooks, and consoles or shells are shut down.

- Terminal Sessions – All image terminals and system terminals are shut down.

To shut down resources, in the left sidebar, choose the Running Terminals and Kernels icon. To shut down a specific resource, choose the Power icon on the same row as the resource.

For running instances, a confirmation dialog lists all the resources that will be shut down. For running apps, a confirmation dialog is displayed. Choose Shut Down All to proceed. No confirmation dialog is displayed for kernel sessions or terminal sessions. To shut down all resources in a section, choose the X icon to the right of the section label. A confirmation dialog is displayed. Choose Shut Down All to proceed.
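The same shutdown can be scripted instead of performed through the UI. The following is a rough sketch, not part of the auto-shutdown extension: it assumes you pass in a boto3 SageMaker client (`boto3.client("sagemaker")`) and a list of app records shaped like the entries returned by the `ListApps` API. The helper names are illustrative. It leaves JupyterServer apps alone so that the Studio UI stays available.

```python
def apps_to_shut_down(apps):
    """Select running KernelGateway apps from ListApps-style records.

    Each record is expected to carry DomainId, UserProfileName, AppType,
    AppName, and Status. JupyterServer apps are skipped on purpose.
    """
    return [
        app for app in apps
        if app.get("AppType") == "KernelGateway"
        and app.get("Status") == "InService"
    ]


def shut_down_apps(sm_client, apps):
    """Delete every selected app and return the names that were deleted.

    `sm_client` is assumed to be boto3.client("sagemaker").
    """
    deleted = []
    for app in apps_to_shut_down(apps):
        sm_client.delete_app(
            DomainId=app["DomainId"],
            UserProfileName=app["UserProfileName"],
            AppType=app["AppType"],
            AppName=app["AppName"],
        )
        deleted.append(app["AppName"])
    return deleted
```

A typical call would be `shut_down_apps(client, client.list_apps(DomainIdEquals=domain_id)["Apps"])`, where `domain_id` is your Studio domain ID. For domains with many apps you would also need to follow the pagination token in the `list_apps` response.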

Automatically shut down idle kernels with the Jupyter Server extension

Instead of relying on users to shut down resources they’re no longer using, you can use the Studio auto-shutdown extension to automatically detect and shut down idle resources, which saves costs. Jupyter Server extensions are typically packages or modules that add extra request handlers to the server’s web application. The auto-shutdown extension shuts down kernels, apps, and instances running within Studio after they have been idle for a configured period of time.

For instructions to install the auto-shutdown extension, see Customize Amazon SageMaker Studio using Lifecycle Configurations. You can view the lifecycle configuration (LCC) script in the GitHub repo. The script installs the extension and creates a command-line script that sets the idle timeout. The idle time limit is the period after which idle resources with no active notebook sessions are shut down. By default, it is set to 120 minutes. To change it, update the TIMEOUT_IN_MINS parameter in the script and run the set-time-interval.sh script. To verify that the extension is installed correctly, run the Python script provided in the extension repository. This script sends a GET call to the extension and returns the idle timeout that you have set.
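The idea behind an idle timeout can be sketched in a few lines. The example below is not the extension's actual code. It assumes kernel records shaped like the entries returned by the Jupyter Server `/api/kernels` endpoint (with `id`, `execution_state`, and an ISO 8601 `last_activity` timestamp) and treats a kernel as idle when it is not executing and its last activity is older than the timeout. The function names are illustrative.

```python
from datetime import datetime, timedelta, timezone


def parse_timestamp(value: str) -> datetime:
    """Parse an ISO 8601 timestamp such as 2023-07-03T09:00:00.000000Z."""
    return datetime.fromisoformat(value.replace("Z", "+00:00"))


def find_idle_kernels(kernels, now, timeout_minutes=120):
    """Return the IDs of kernels that have been idle for at least the timeout.

    The 120-minute default mirrors the default idle time limit described
    above; the selection rule itself is an assumption for illustration.
    """
    cutoff = now - timedelta(minutes=timeout_minutes)
    idle = []
    for kernel in kernels:
        if kernel.get("execution_state") != "idle":
            continue  # still running code, so never treated as idle
        if parse_timestamp(kernel["last_activity"]) <= cutoff:
            idle.append(kernel["id"])
    return idle


sample_kernels = [
    {"id": "k1", "execution_state": "idle",
     "last_activity": "2023-07-03T09:00:00.000000Z"},
    {"id": "k2", "execution_state": "busy",
     "last_activity": "2023-07-03T08:00:00.000000Z"},
    {"id": "k3", "execution_state": "idle",
     "last_activity": "2023-07-03T11:30:00.000000Z"},
]
now = datetime(2023, 7, 3, 12, 0, tzinfo=timezone.utc)
print(find_idle_kernels(sample_kernels, now))
```

With the default timeout, only the kernel that has been inactive for three hours is selected. The busy kernel is skipped regardless of its timestamp, which is why a long-running training cell would not be interrupted under this rule.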

You can also make this the default lifecycle configuration for all users in the domain. You can accomplish this by adding the lifecycle configuration to the default settings of the domain using the DefaultResourceSpec settings. This way, the script runs by default whenever users in the domain log in to Studio for the first time or restart Studio.
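As a sketch of what that domain update could look like with the AWS SDK for Python, the helper below builds the arguments for the SageMaker `UpdateDomain` API. The domain ID and lifecycle configuration ARN are placeholders that you would replace with your own values, and the helper name is illustrative.

```python
def default_lcc_update(domain_id: str, lcc_arn: str) -> dict:
    """Build UpdateDomain arguments that make `lcc_arn` the default
    lifecycle configuration for JupyterServer apps in the domain."""
    return {
        "DomainId": domain_id,
        "DefaultUserSettings": {
            "JupyterServerAppSettings": {
                "DefaultResourceSpec": {
                    "InstanceType": "system",
                    "LifecycleConfigArn": lcc_arn,
                },
                # The configuration must also be attached to the app type.
                "LifecycleConfigArns": [lcc_arn],
            }
        },
    }


# Placeholder values for illustration only.
update_args = default_lcc_update(
    "d-xxxxxxxxxxxx",
    "arn:aws:sagemaker:us-east-1:111122223333:studio-lifecycle-config/auto-shutdown",
)
```

With boto3 installed and credentials configured, you would apply it with `boto3.client("sagemaker").update_domain(**update_args)`. Individual user profiles can still override the domain default in their own settings.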

Troubleshooting

To troubleshoot, check the extension logs in Amazon CloudWatch: open the /aws/sagemaker/studio log group and look at the <Studio_domain>/<user_profile>/JupyterServer/default log stream.
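If you prefer to pull those logs programmatically, a minimal sketch could look like the following. It assumes you pass in a boto3 CloudWatch Logs client (`boto3.client("logs")`). The helper names are illustrative, and the log group and stream naming follow the pattern described above.

```python
LOG_GROUP = "/aws/sagemaker/studio"


def jupyter_server_log_stream(domain_id: str, user_profile: str) -> str:
    """Name of the JupyterServer log stream for one user profile."""
    return f"{domain_id}/{user_profile}/JupyterServer/default"


def fetch_log_messages(logs_client, domain_id, user_profile, limit=50):
    """Return up to `limit` log messages from the user's JupyterServer stream.

    `logs_client` is assumed to be boto3.client("logs").
    """
    response = logs_client.filter_log_events(
        logGroupName=LOG_GROUP,
        logStreamNames=[jupyter_server_log_stream(domain_id, user_profile)],
        limit=limit,
    )
    return [event["message"] for event in response.get("events", [])]
```

You could narrow the results further by passing a `filterPattern` or a `startTime` to `filter_log_events`, for example to look only at entries written after the lifecycle configuration last ran.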

Studio auto-shutdown extension checker

The following diagram illustrates how to enable email notifications to track idle resources running within multiple user profiles residing under a Studio domain.

Regardless of how you install the auto-shutdown extension in your Studio domain, administrators may want to track and alert any users running without it. To help track compliance and optimize costs, you can follow the instructions in the GitHub repo to set up the auto-shutdown extension checker and enable event notifications.

As shown in the architecture diagram, a CloudWatch Events rule is triggered on a periodic schedule (for example, hourly or nightly). To create the rule, we choose a fixed schedule and specify how often the task runs. For our target, we choose an AWS Lambda function that periodically checks whether all user profiles under a Studio domain have installed the auto-shutdown extension. This function collects the names of the user profiles that fail to meet this requirement.

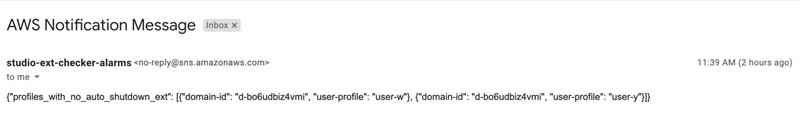

The user profiles are then routed to an Amazon Simple Notification Service (Amazon SNS) topic that Studio admins and other stakeholders can subscribe to in order to get notifications (such as via email or Slack). The following screenshot shows an email alert notification in which the user profiles user-w and user-y within the SageMaker domain d-bo6udbiz4vmi haven’t installed the auto-shutdown extension.
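The core of such a checker can be sketched as two small functions. This is an illustration rather than the code in the GitHub repo: `has_extension` is a hypothetical callable that you would supply to decide whether a given profile has the extension installed, and the wording of the alert is made up.

```python
def profiles_missing_extension(profile_names, has_extension):
    """Return, in sorted order, the profiles for which the check fails.

    `has_extension` is a hypothetical callable taking a profile name and
    returning True when the auto-shutdown extension is installed.
    """
    return sorted(name for name in profile_names if not has_extension(name))


def build_alert(domain_id, missing):
    """Build the Subject and Message for an SNS notification.

    Returns None when every profile is compliant, so no alert is sent.
    """
    if not missing:
        return None
    lines = [
        f"The following user profiles in SageMaker domain {domain_id} "
        "have not installed the auto-shutdown extension:",
    ]
    lines.extend(f"- {name}" for name in missing)
    return {
        "Subject": f"Studio domain {domain_id}: auto-shutdown extension missing",
        "Message": "\n".join(lines),
    }


# Example data matching the alert described above.
installed = {"user-x", "user-z"}
missing = profiles_missing_extension(
    ["user-w", "user-x", "user-y", "user-z"],
    lambda name: name in installed,
)
alert = build_alert("d-bo6udbiz4vmi", missing)
```

Inside the Lambda function, the result could be sent with `boto3.client("sns").publish(TopicArn=topic_arn, **alert)`, where `topic_arn` is the ARN of the topic that your administrators subscribe to.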

Auto-shutdown Data Wrangler resources

To further demonstrate how the auto-shutdown extension works, let’s look at it from the perspective of Data Wrangler within Studio. Data Wrangler is a new capability of SageMaker that makes it faster for data scientists and engineers to prepare data for ML applications by using a visual interface.

When you start Data Wrangler from Studio, it automatically spins up an ml.m5.4xlarge instance and starts the kernel using that instance. When you’re not using Data Wrangler, it’s important to shut down the instance on which it runs to avoid incurring additional fees.

Data Wrangler automatically saves your data flow every 60 seconds. To avoid losing work, save your data flow manually before shutting Data Wrangler down. To do so, choose File and then choose Save Data Wrangler Flow.

To shut down the Data Wrangler instance in Studio, choose the Running Instances and Kernels icon. Under Running Apps, locate the sagemaker-data-wrangler-1.0 app. Choose the Power icon next to this app.

Following these steps manually can be cumbersome, and it’s easy to forget. With the auto-shutdown extension, you can ensure that idle resources powering Data Wrangler are shut down automatically to avoid extra SageMaker costs.

Conclusion

In this post, we demonstrated how to reduce SageMaker costs by using lifecycle configurations to install an auto-shutdown Jupyter extension that automatically shuts down idle resources running within Studio. We also showed how to set up an auto-shutdown extension checker and enable event notifications to track user profiles within Studio that haven’t installed the extension. Finally, we showed how the extension can reduce Data Wrangler costs by shutting down idle resources powering Data Wrangler.

For more information about optimizing resource usage and costs, see Right-sizing resources and avoiding unnecessary costs in Amazon SageMaker.

If you have any comments or questions, please leave them in the comments section.

About the Authors

Arunprasath Shankar is an Artificial Intelligence and Machine Learning (AI/ML) Specialist Solutions Architect with AWS, helping global customers scale their AI solutions effectively and efficiently in the cloud. In his spare time, Arun enjoys watching sci-fi movies and listening to classical music.

Andras Garzo is an ML Solutions Architect in the AWS AI Platforms team and helps customers migrate to SageMaker, adopt best practices, and save costs.

Pavan Kumar Sunder is a Senior R&D Engineer with Amazon Web Services. He provides technical guidance and helps customers accelerate their ability to innovate through showing the art of the possible on AWS. He has built multiple prototypes around AI/ML, IoT, and Robotics for our customers.

Alex Thewsey is a Machine Learning Specialist Solutions Architect at AWS, based in Singapore. Alex helps customers across Southeast Asia to design and implement solutions with AI and ML. He also enjoys karting, working with open source projects, and trying to keep up with new ML research.

Raghu Ramesha is an ML Solutions Architect on the AWS SageMaker SA team. He focuses on helping customers migrate ML production workloads to SageMaker at scale. He specializes in machine learning, AI, and computer vision domains, and holds a master’s degree in Computer Science from UT Dallas. In his free time, he enjoys traveling and photography.

Durga Sury is an ML Solutions Architect on the AWS SageMaker SA team. Before AWS, she helped non-profit and government agencies derive insights from their data to improve education outcomes. At AWS, she focuses on Natural Language Processing and MLOps.
