AWS Startups Blog

How FINANZCHECK.de Combines Regulatory Compliance and Agility Using AWS

Guest post by Andreas Reich, Platform & Infrastructure Lead, FINANZCHECK.de

FINANZCHECK.de is a leading, independent comparison site for consumer loans and financial products. Founded in 2010, FINANZCHECK.de has matured from a small start-up to a 400-employee strong organization headquartered in Hamburg, Germany, with branch offices in Berlin, Brunswick, and Munich.

At FINANZCHECK.de, our core business is handling our customers’ personal financial information, so data protection is of the highest priority. Not only does the GDPR categorize this information as particularly sensitive data; handling financial data also often comes with additional regulatory requirements, whether from individual contracts with banking partners or from frameworks such as PSD2.

In this post, we will highlight some of the challenges that arise from operating in a regulated world as an organization focused on agility and take a deep dive into three specific regulatory requirements we faced and how we used the technology offered by AWS to help solve each of them.

Traditionally, the banking world is not known for agility and fast processes. At FINANZCHECK.de, however, we decided from the very start to focus on rapid product development so we could react to changing market conditions and new insights as quickly as possible. To achieve this, a number of fundamental principles guide us:

- Cross-functional teams: Each of our product teams consists of all functions – from business to DevOps – that are required to take on full end-to-end responsibility for a given product or service. Product development at FINANZCHECK.de is hence not organized in departments but in product teams.

- You build it, you run it: Giving each team the responsibility not only to build but also to operate their services leads to a very direct user feedback loop and high service reliability.

- Don’t guess, measure: Every team is given full transparency about their KPIs and enabled to make business decisions on their own without losing time on lengthy discussions with a separate business department.

Although we were free to design our own internal collaboration model, we had to make sure that external dependencies such as regulatory compliance would not slow our pace of innovation to a crawl by introducing bureaucratic processes and approval bottlenecks. At the same time, we still had to conduct thorough due diligence and implement the strongest data protection measures.

To complement this dynamic and flexible organizational structure, FINANZCHECK.de decided early on to leverage AWS for all workloads. Its elasticity allows our infrastructure to grow as the company does, and the wide variety of AWS-managed high-level services lets teams focus on engineering their software products without having to worry about managing individual servers. After reviewing the audit and compliance program documentation provided by AWS, the FINANZCHECK.de management team concluded that AWS would be the best choice of cloud platform, and the legal department gave the green light.

AWS already offers a wide variety of services that aid in achieving compliance and adhering to best practices. To give a few examples:

- Setting up a multi-account structure with AWS Organizations helps ensure isolation of environments

- AWS Key Management Service (AWS KMS) easily enables encryption-at-rest

- AWS Identity and Access Management (IAM) allows fine-grained control of permissions

- AWS Config lets you monitor and track your infrastructure’s compliance using a wide range of rules

- Amazon GuardDuty helps you stay on top of possible security or data protection incidents

- AWS Audit Manager automatically provides data for compliance audits

Beyond that, we had to solve several specific regulatory requirements in a way that would work well within our organizational structure. We will now have a closer look at three examples of such requirements and how we used AWS’s cloud technology to our advantage to help solve them.

Administrative Audit Trail

Regulatory requirement:

All administrative actions to production infrastructure need to be tracked and logged.

Challenges:

Administrative access is spread across multiple AWS accounts and multiple teams. Occasionally it may be necessary to gain global administrative access quickly without waiting for a central authority to approve it, but at the same time we have to make sure that this ability is not abused.

How we solved it:

The core requirement is easy enough to solve thanks to services readily provided by AWS: AWS CloudTrail automatically tracks and logs all actions taken in the AWS Console or via API calls. It also offers the ability to aggregate these logs from multiple AWS accounts and store them in Amazon CloudWatch Logs as well as an Amazon Simple Storage Service (Amazon S3) bucket in a separate AWS account. Using IAM, this bucket can be protected against manipulation, and S3 lifecycle rules together with S3 Glacier help achieve cost-effective long-term storage.

However, there is an organizational challenge here: In emergency situations, it may be necessary to quickly elevate your permissions and gain administrative access to global infrastructure. We specifically wanted to avoid restricting global administrative access to one person or team: the ability to elevate your permissions is deliberately spread across multiple teams. But if global administrative access is spread across many people, how can we ensure that this will not be abused and only utilized when truly necessary?

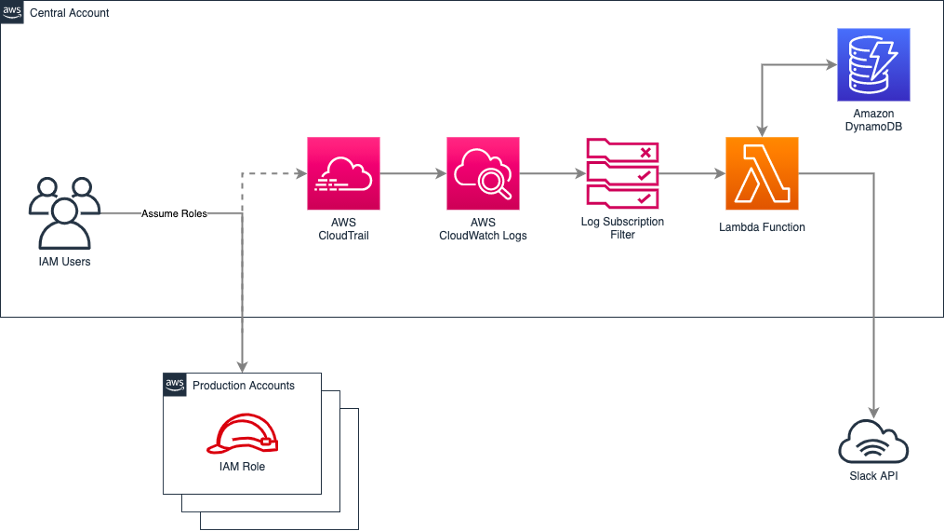

We opted for a model of mutual supervision. Whenever a team member elevates their permissions by assuming an administrative IAM role, a CloudWatch Logs subscription triggers a Lambda function, which then posts a message in a Slack channel, prompting the user to provide the reason for using this role. A cooldown timer is stored in an Amazon DynamoDB table to prevent multiple notifications for the same role and AWS account within a short timeframe.
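As an illustration, the notification flow could be sketched in Python roughly as follows. This is a simplified sketch, not the actual implementation: the cooldown length, the `admin` role-name marker, and all function names are assumptions, the DynamoDB table is replaced by a plain dictionary, and the Slack call is passed in as a `post_message` callback. Only the payload handling follows the documented format of CloudWatch Logs subscriptions (base64-encoded, gzip-compressed JSON).

```python
import base64
import gzip
import json
import time

COOLDOWN_SECONDS = 15 * 60  # hypothetical cooldown window


def decode_subscription_event(event):
    """Decode a CloudWatch Logs subscription payload into CloudTrail records."""
    compressed = base64.b64decode(event["awslogs"]["data"])
    payload = json.loads(gzip.decompress(compressed))
    return [json.loads(entry["message"]) for entry in payload["logEvents"]]


def admin_role_assumptions(records, role_marker="admin"):
    """Yield (account_id, role_arn, user) for AssumeRole calls on admin roles."""
    for record in records:
        if record.get("eventName") != "AssumeRole":
            continue
        role_arn = (record.get("requestParameters") or {}).get("roleArn", "")
        if role_marker not in role_arn:  # assumption: admin roles share a name marker
            continue
        parts = role_arn.split(":")
        account_id = parts[4] if len(parts) > 4 else "unknown"
        user = (record.get("userIdentity") or {}).get("arn", "unknown")
        yield account_id, role_arn, user


def should_notify(cooldowns, key, now):
    """Return True and start a new cooldown unless one is still running."""
    if now < cooldowns.get(key, 0):
        return False
    cooldowns[key] = now + COOLDOWN_SECONDS
    return True


def handle(event, cooldowns, post_message, now=None):
    """Post one message per role and account, respecting the cooldown."""
    now = time.time() if now is None else now
    sent = 0
    records = decode_subscription_event(event)
    for account_id, role_arn, user in admin_role_assumptions(records):
        if should_notify(cooldowns, (account_id, role_arn), now):
            post_message(
                f"{user} assumed {role_arn} in account {account_id}. "
                "Please reply with the reason."
            )
            sent += 1
    return sent
```

In a real deployment, `cooldowns` would be backed by the DynamoDB table and `post_message` would call the Slack API; keeping both as parameters makes the logic easy to test in isolation.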

As a condition for being added to the user group that is able to assume administrative IAM roles, each member of this group is required to:

- Be present in the Slack channel that the role notifications get posted to

- Respond to each notification they caused, giving a summary of their administrative actions, ideally referencing a ticket number

- Help ensure that everyone else does the same, reminding them if necessary

This model of distributed governance discourages team members from using administrative access for their daily work out of convenience. It is complemented by AWS CloudTrail, which tracks and stores each individual administrative action for later inspection if necessary.

Customer Data Audit Trail

Regulatory requirement:

All interaction with customer data, both manual and by automated processes, needs to be tracked in a tamper-proof audit trail.

Challenges:

With hundreds of customer service agents plus many automated backend processes, tracking every interaction with customer data amounts to a significant number of records. It must be ensured that collecting and storing these records will not slow down the application.

Because FINANZCHECK.de is organized in autonomous product teams that each choose the technology that fits their workload best, a variety of programming languages and frameworks is in use. The audit trail needs to be integrated into each of them without investing a large amount of engineering effort to implement custom API clients.

How we solved it:

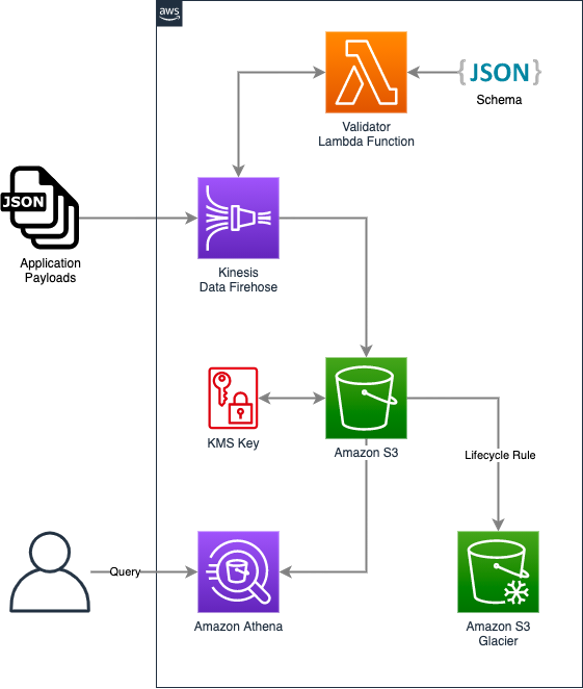

The first step was choosing a cost-effective infrastructure that could handle a steady stream of audit records without leading to slowdowns or outages. We quickly decided on Amazon Kinesis Data Firehose. It scales automatically to thousands of records per second and is an entirely serverless and managed solution, meaning that all we had to do was provision a Delivery Stream, and we never need to worry about the infrastructure again. Kinesis Data Firehose provides multiple methods of data ingestion. One of them is through API calls in the AWS SDK, which is readily available for all major programming languages and is already included in most of our projects, minimizing implementation overhead.
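To give an idea of how little client code this requires, here is a minimal Python sketch of sending one audit record. The record fields and the helper names are hypothetical; the only AWS call used is the SDK's `put_record` operation on a Firehose client, which is passed in as a parameter so the function can be exercised without AWS credentials.

```python
import json
from datetime import datetime, timezone


def build_audit_record(actor, action, customer_id):
    """Assemble an audit record; the field names are illustrative assumptions."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "actor": actor,
        "action": action,
        "customer_id": customer_id,
    }


def send_audit_record(firehose_client, stream_name, record):
    """Send one record to a Delivery Stream as a newline-terminated JSON line."""
    # Firehose concatenates records as-is, so the newline keeps them separable in S3.
    data = (json.dumps(record, sort_keys=True) + "\n").encode("utf-8")
    return firehose_client.put_record(
        DeliveryStreamName=stream_name,
        Record={"Data": data},
    )
```

With boto3 installed, a call could look like `send_audit_record(boto3.client("firehose"), "audit-trail", record)`, where `audit-trail` is a placeholder stream name.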

Because the audit records are archival data that can become substantial in size but rarely needs to be read back, Amazon S3 was chosen as the Delivery Stream’s destination. S3 lifecycle rules ensure cost-effectiveness by automatically moving records to S3 Glacier after a configured amount of time and deleting them altogether once the legally required retention period has expired. Whenever audit records need to be queried due to a legal request, Amazon Athena can be utilized to run SQL queries directly on the S3 data.
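Such a lifecycle rule might be expressed as follows. This is a sketch under assumptions: the rule ID and the day counts are placeholders, not our actual retention settings. The dictionary follows the structure that the S3 API expects for a bucket lifecycle configuration.

```python
def build_lifecycle_configuration(archive_after_days, delete_after_days):
    """Build an S3 lifecycle configuration: archive to Glacier, then expire."""
    if not 0 < archive_after_days < delete_after_days:
        raise ValueError("records must be archived before they are deleted")
    return {
        "Rules": [
            {
                "ID": "audit-trail-retention",  # hypothetical rule name
                "Status": "Enabled",
                "Filter": {"Prefix": ""},  # apply to every object in the bucket
                "Transitions": [
                    {"Days": archive_after_days, "StorageClass": "GLACIER"}
                ],
                "Expiration": {"Days": delete_after_days},
            }
        ]
    }
```

With boto3, the result could be applied via `s3.put_bucket_lifecycle_configuration(Bucket=bucket_name, LifecycleConfiguration=config)`.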

Because audit data can contain personally identifiable information (PII), there are access and encryption requirements, but those were easy to fulfill: All data is encrypted by enabling SSE-KMS, and access to the S3 bucket and the AWS KMS encryption key is restricted via IAM permissions and automatically monitored by AWS CloudTrail.

Now that the infrastructure was in place, integrity of the data itself still needed to be ensured. This was achieved by creating a JSON Schema defining all required and optional fields of an audit record as well as each field’s data type and format. JSON Schema is an established and documented format that allows records delivered by the individual workloads to be automatically validated by readily available validator packages.
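To make this more concrete, the following sketch shows what such a schema could look like, written as a Python dictionary. The field names are invented for illustration and are not our actual audit record format. The small `validation_errors` function only understands the `required`, `properties`, `type`, and `additionalProperties` keywords; it is a stand-in to keep the example dependency-free, and a real workload would use a full validator package such as `jsonschema` instead.

```python
# Hypothetical audit record schema using standard JSON Schema keywords.
AUDIT_RECORD_SCHEMA = {
    "type": "object",
    "required": ["timestamp", "actor", "action", "customer_id"],
    "properties": {
        "timestamp": {"type": "string"},
        "actor": {"type": "string"},
        "action": {"type": "string"},
        "customer_id": {"type": "string"},
        "details": {"type": "object"},  # optional free-form context
    },
    "additionalProperties": False,
}

# Only the JSON types used by the schema above are mapped here.
_JSON_TYPES = {"string": str, "object": dict, "array": list, "boolean": bool}


def validation_errors(record, schema=AUDIT_RECORD_SCHEMA):
    """Return a list of problems; an empty list means the record is valid."""
    if not isinstance(record, dict):
        return ["record must be a JSON object"]
    errors = []
    for name in schema.get("required", []):
        if name not in record:
            errors.append(f"missing required field: {name}")
    properties = schema.get("properties", {})
    for name, value in record.items():
        if name not in properties:
            if schema.get("additionalProperties", True) is False:
                errors.append(f"unexpected field: {name}")
            continue
        expected = properties[name].get("type")
        python_type = _JSON_TYPES.get(expected)
        if python_type is not None and not isinstance(value, python_type):
            errors.append(f"field {name} must be of type {expected}")
    return errors
```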

Kinesis Data Firehose has a built-in data transformation feature that allows triggering an AWS Lambda function on ingested records, validating and transforming them as necessary. So the final piece of the puzzle was a custom Lambda function that validates each record against the JSON Schema and reformats it to make sure the resulting data in S3 is uniform and consistently queryable by Amazon Athena.
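A transformation function of this kind could be sketched as follows. The event and response shapes (`records`, `recordId`, base64-encoded `data`, and a `result` of `Ok` or `ProcessingFailed`) follow the Firehose data transformation contract. Everything else is an assumption for illustration: the required fields are hypothetical, and the `is_valid` check is a simplified stand-in for full JSON Schema validation.

```python
import base64
import json

# Hypothetical required fields; a real function would validate against the schema.
REQUIRED_FIELDS = ("timestamp", "actor", "action", "customer_id")


def is_valid(record):
    """Simplified stand-in for JSON Schema validation."""
    return isinstance(record, dict) and all(
        isinstance(record.get(field), str) for field in REQUIRED_FIELDS
    )


def lambda_handler(event, context):
    """Validate each ingested record and emit it as one normalized JSON line."""
    output = []
    for item in event["records"]:
        try:
            record = json.loads(base64.b64decode(item["data"]))
        except ValueError:  # covers invalid base64, invalid UTF-8, and invalid JSON
            record = None
        if not is_valid(record):
            # Firehose treats failed records as unsuccessfully processed.
            output.append({
                "recordId": item["recordId"],
                "result": "ProcessingFailed",
                "data": item["data"],
            })
            continue
        # Stable key order and one record per line keep the S3 data uniform.
        line = json.dumps(record, sort_keys=True, separators=(",", ":")) + "\n"
        output.append({
            "recordId": item["recordId"],
            "result": "Ok",
            "data": base64.b64encode(line.encode("utf-8")).decode("ascii"),
        })
    return {"records": output}
```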

Through this combination of AWS services, we have implemented a fully serverless audit trail solution that ensures data integrity, allows querying records via SQL, is highly cost effective, and can automatically scale up to handle tens of thousands of ingested records per second.

IAM Permission Change Approval and Logging

Regulatory requirement:

All changes to an employee’s access permissions need to be confirmed and audited by more than one person and must be logged.

Challenges:

Due to our culture of fast-paced development cycles and rapid assumption testing, teams may often require access to additional services they are testing or rolling out. Engineers also occasionally change teams or intermittently join a cross-team project group, which requires them to acquire additional access permissions.

A central approval and auditing authority could quickly become a bottleneck and introduce unwanted bureaucracy.

How we solved it:

The key to solving this challenge without creating manual process and documentation overhead is using Infrastructure as Code and combining it with automation.

By defining all AWS IAM and other access permissions in declarative code using Infrastructure as Code frameworks such as AWS CloudFormation or, in our case, Terraform, a well-documented state of the current permission sets always exists, provided that a number of rules are followed:

- The code and its changes have to be stored in a centralized, tamper-proof location

- Changes to permissions must only be possible by updating the code, never manually

- There has to be constant synchronization between the code and the actual permissions, ensuring that the code reflects reality
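The third rule boils down to detecting drift between the declared and the effective permissions. Infrastructure as Code tools do this for you (Terraform, for example, signals pending differences through the exit code of `terraform plan -detailed-exitcode`), but the underlying idea can be illustrated with a few lines of Python. The data model here, a mapping from principal to a list of policy names, is a deliberate simplification and purely an assumption.

```python
def detect_drift(declared, actual):
    """Compare declared and effective permissions per principal.

    Both arguments map a principal name to an iterable of policy names.
    Returns only the principals whose permissions differ.
    """
    drift = {}
    for principal in sorted(set(declared) | set(actual)):
        want = set(declared.get(principal, ()))
        have = set(actual.get(principal, ()))
        if want != have:
            drift[principal] = {
                "missing": sorted(want - have),    # in code, not in reality
                "unmanaged": sorted(have - want),  # in reality, not in code
            }
    return drift
```

An empty result means the code reflects reality; anything else points to either a failed deployment or a manual change that bypassed the process.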

In most organizations there already is a well-established centralized location for code: a version control system such as GitHub, GitLab, or AWS CodeCommit. As long as permissions are properly set up so that modifications cannot be retroactively deleted, and administrative access to the version control system is restricted and monitored, this ticks the box for storage and documentation. Of course, the permissions to access the version control system itself can also be defined as code!

The next step is ensuring that this documented code is the single source of truth for the actual permission sets that are in effect. For all major Infrastructure as Code frameworks there are solutions readily available that hook into the version control system and automatically update the infrastructure whenever the code is changed.

After setting up this automation, it must be ensured that it is the only entity that can change permissions. Manual permission changes that would circumvent the process have to be prohibited. Fortunately, that is just another set of permissions that can be implemented and deployed through the very same process.
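One possible way to express such a guardrail is a deny statement that exempts only the automation's role, for example as a service control policy in AWS Organizations. The sketch below builds such a policy document in Python. The role ARN, the statement ID, and the selection of IAM actions are assumptions for illustration and would need to be adapted and tested carefully before use, since an overly broad deny can also block legitimate service behavior.

```python
def build_iam_guardrail_policy(automation_role_arn):
    """Deny mutating IAM actions for every principal except the automation role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyManualIamChanges",  # hypothetical statement ID
                "Effect": "Deny",
                "Action": [
                    "iam:Attach*",
                    "iam:Create*",
                    "iam:Delete*",
                    "iam:Detach*",
                    "iam:Put*",
                    "iam:Update*",
                ],
                "Resource": "*",
                # The deny applies unless the caller is the automation role.
                "Condition": {
                    "ArnNotLike": {"aws:PrincipalArn": automation_role_arn}
                },
            }
        ],
    }
```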

Since this automation needs to have full administrative access to IAM, it has to be very well guarded against illegitimate access. We therefore decided to deploy it in a separate AWS account that regular users do not have any access to. This AWS account is able to assume administrative IAM roles in our production accounts in order to update permissions. But what about administrative access to this automation account – is this a catch-22? Not really: Once the automation has been set up, administrative access to it is very rarely needed. It can therefore be secured by more traditional methods such as locking credentials in a physical place that needs multiple different authorization levels to be accessed.

Finally, regulation requires dual control for permissions changes. Whenever an employee’s access permissions are changed, these changes always have to be checked and acknowledged by another person. While this sounds bureaucratic at first, we realized that our version control system already has a solution for this. All we had to do was configure our GitHub repositories so that direct pushes to the code are not allowed and instead, every change needs to be proposed as a pull request that has to be reviewed and acknowledged by another member of the team. Once the pull request has been acknowledged and merged, the automation takes over and deploys those changes to the production infrastructure.

While the regulatory requirement sounded daunting and complex to fulfill at first, all it took was combining technologies and processes that developers and administrators are already familiar with. Through version control, Infrastructure as Code, automation, and the right configuration, we were able to integrate logging, documentation and review of permissions changes into our existing workflow without much overhead.

Summary

Operating in a regulated environment does not need to stop you from running a modern, agile IT environment relying on public cloud infrastructure. On the contrary, using the resources provided by AWS can give you a kick-start on regulatory compliance:

- Governance and security tools such as AWS CloudTrail, IAM, and KMS allow you to tick a lot of compliance boxes with minimal effort

- Each AWS managed service you utilize automatically means one less component you need to worry about keeping up to date with the latest security patches

- With most AWS services, encryption at rest can be enabled by just clicking a checkbox

- Monitoring and auditing tools such as Amazon GuardDuty, AWS Config, and AWS Audit Manager help you continuously monitor your entire environment and stay on top of compliance violations and security incidents

- AWS Artifact provides comprehensive physical security and compliance documentation available for download

Some regulatory requirements may at first sound like a bureaucratic nightmare that will slow your development and operations down to a crawl. But if you look into the reasoning behind those requirements you will often find that, with the help of cloud technology, you can achieve compliance without compromising agility or flexibility. Experience has shown that risk assessors and legal consultants are also well aware of AWS and its benefits in a regulated environment.