AWS Partner Network (APN) Blog

Connecting AWS and Salesforce Enables Enterprises to Do More with Customer Data

By Venkatesh Krishnan, Sr. Product Manager at AWS

By Jai Menon, Sr. Solutions Architect at AWS

By Sang Kong, Sr. Solutions Architect at AWS

As organizations seek to innovate and build customer experiences faster by leveraging data about their customers, a closer integration of Salesforce—a leading customer relationship management (CRM) platform—and Amazon Web Services (AWS) opens up a lot of possibilities.

In this post, we’ll explore some specific Salesforce integration scenarios that customers often ask about. Salesforce is an AWS Partner Network (APN) Advanced Technology Partner with the AWS DevOps Competency. The announcement from AWS and Salesforce about our extended strategic alliance and how we’re integrating our products underscores the opportunity to help enterprises get more out of their customer data.

Let’s start by putting things into context. Data within organizations is often stored in individual silos across multiple systems and applications. Enterprises are then forced to invest in undifferentiated heavy lifting to achieve a comprehensive 360-degree view of their customers and archive their data cost-effectively.

Security of the data is critical as data is shared between these environments. Organizations need reliable and private connectivity between their applications hosted on AWS and Salesforce, without having to traverse the internet.

Enterprises are differentiating the customer experiences they provide by being responsive to events happening in their ecosystem. A complete view of data that is synchronized in real-time across various sources enables them to draw valuable insights by employing data analytics and machine learning techniques.

The primary AWS services used in our integration scenarios include Amazon Simple Storage Service (Amazon S3), which provides cost-effective storage and archival that customers use to underpin their data lake; AWS Lambda, a compute service ideally suited for processing events without provisioning or managing servers; Amazon Athena, for interactive queries that analyze data in Amazon S3 using standard SQL; and AWS PrivateLink, which provides private connectivity between Virtual Private Clouds (VPCs), AWS services, and on-premises applications.

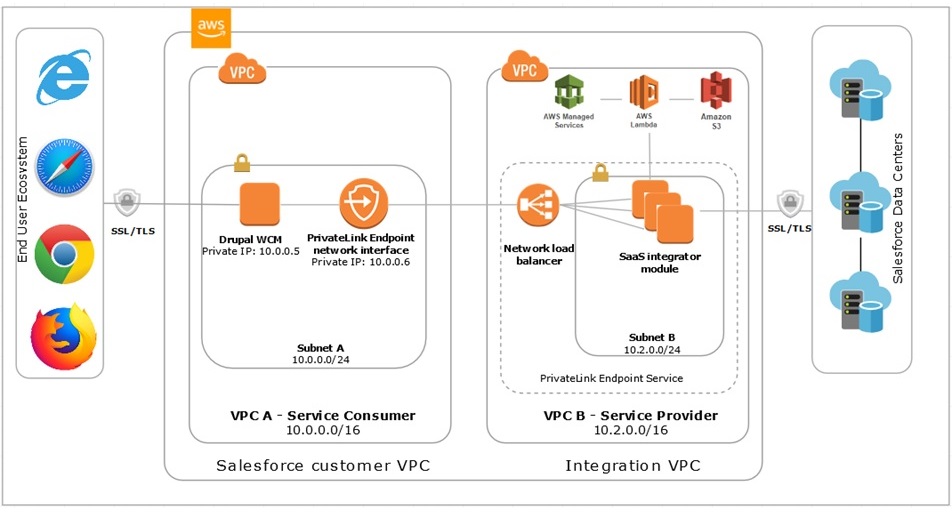

Leveraging these and other AWS services, the architecture in Figure 1 shows how to establish connectivity for data and events between Salesforce and AWS.

Figure 1 – Integrate and extend your applications with AWS and Salesforce.

SaaS Integration Service

To demonstrate an approach to connecting Salesforce and AWS, we have created a software-as-a-service (SaaS) integration service running in an Amazon Elastic Compute Cloud (Amazon EC2) instance within a VPC.

The SaaS integration service is a collection of AWS services specifically designed to facilitate seamless, secure, and real-time flow of data and events between a customer’s VPC and Salesforce. An enterprise that hosts applications and services in its VPC can include a VPC Endpoint powered by AWS PrivateLink that talks to the SaaS integration service and accesses Salesforce APIs privately.

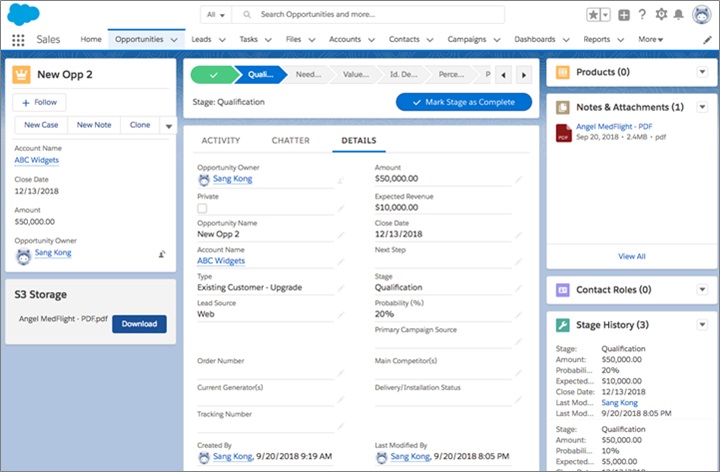

Consider the use case where a Salesforce administrator or developer wants the Salesforce opportunity record, along with any other digital assets, to be stored and updated in Amazon S3 at the same time as it’s ingested/updated in Salesforce. This helps administrators run real-time analytics and queries, and update status fields back in Salesforce.

Another use case is where an AWS developer creates a web application hosting a blog or survey that runs in a customer VPC and collects data about customers when they register on a web page. If the registered customer data can be reflected in Salesforce as a contact automatically, then a cumbersome manual upload of the data can be avoided.

Let’s look at some example scenarios of what AWS and Salesforce connectivity can accomplish.

Scenario 1: Attaching Contracts to Opportunity Records

John, an IT Manager at Acme Inc., who is responsible for managing his company’s Salesforce subscription, is looking for an easy way for the sales team to attach contracts to customer Opportunity Records in Salesforce. John is also looking to create an archive for the entire Opportunity in Amazon S3, which is Acme’s data lake back-end service.

Ideally, besides archiving the opportunity data, John would like to manage versions of the attached digital assets and use this data with Acme’s data from other sources to train machine learning models. Services offered by AWS, including Amazon SageMaker, AWS Lambda, and Amazon Kinesis, are very popular in Acme, and John has heard how great it would be to leverage these services for analyzing customer data from Salesforce.

Acme’s sales representatives manually upload data from Salesforce to their Amazon S3 buckets, but John is concerned about the security of the data since users often store this data locally before uploading it via the public internet to Amazon S3. He’s looking for seamless, secure, and cost-effective ways of extending the use of Salesforce objects using AWS services to unlock new capabilities for Acme.

Proposed Solution

Let’s examine the proposed solution to John’s problem. A Salesforce administrator like John uses Salesforce Process Builder to configure a new process that triggers a Platform Event when a sales rep at Acme attaches a contract to an Opportunity Record. With Platform Events, John can monitor for changes in an Opportunity and take action from within Salesforce or externally.

Our AWS Lambda function that runs as part of the SaaS integration service uses the Platform Events API to listen for new events.

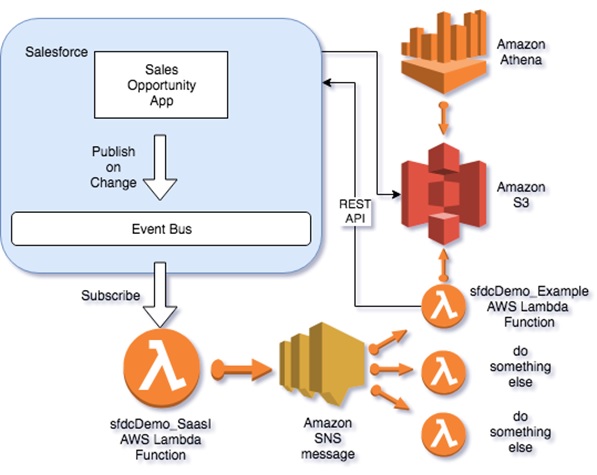

Here’s a summary of the sequence of steps that follow in this post, which we’ve shown in Figure 2:

- An Opportunity is created or updated, which triggers a Platform Event.

- The AWS Lambda function sfdcDemo_SaasI is subscribed to that Platform Event and sends the message to Amazon Simple Notification Service (SNS), where any subscribed Lambda function can then take action.

- The Lambda function sfdcDemo_Example is triggered and:

  - Retrieves the entire Opportunity Record

  - Retrieves the attachment associated with the Opportunity Record

  - Copies the attachment to Amazon S3

  - Writes the Opportunity Record to Amazon S3

  - Updates the Sales Opportunity Record with a link to Amazon S3

- Once we have the data in Amazon S3, we can use Amazon Athena to query it via SQL.

Figure 2 – Ingestion and query of the data.

Implementation

Let’s deep dive into our specific implementation for John’s problem in Scenario 1.

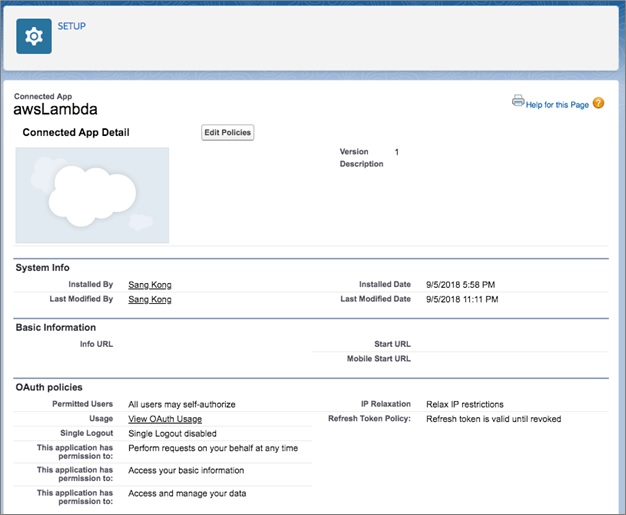

First, we created a Connected App in the Salesforce Lightning Setup (All) under Apps, App Manager. The key permissions are: Perform requests on your behalf, Access your basic information, and Access and manage your data. It’s also important to relax IP restrictions if you do not want to set up IP rules.

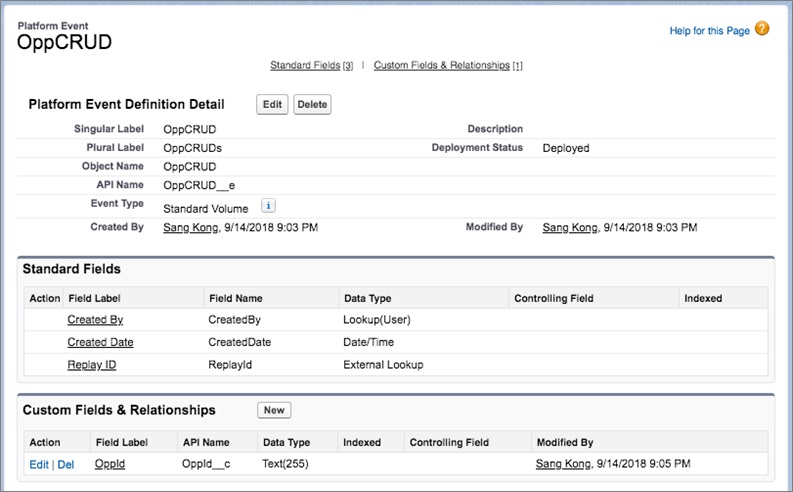

We then created a platform event called “OppCRUD” with the custom field “OppId”. This is defined in the Salesforce Lightning Setup (All).

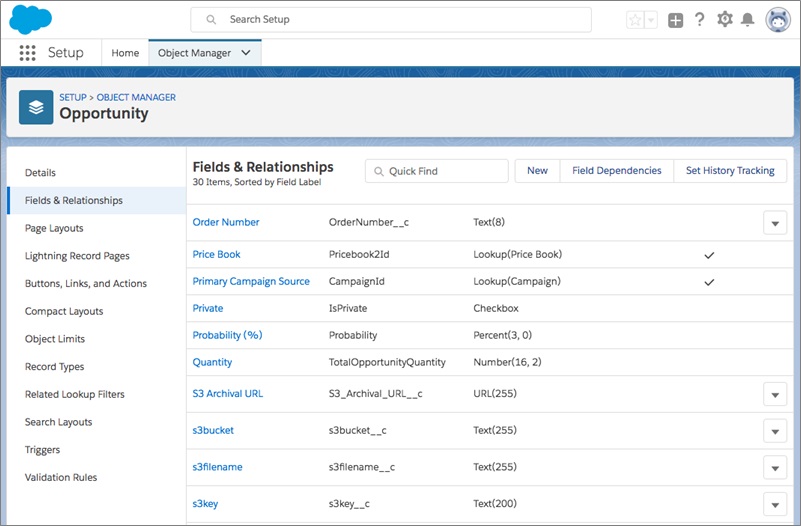

Next, we created a few custom fields for Opportunity. We’ll use these to prepare an Amazon S3 file download button that is secure.

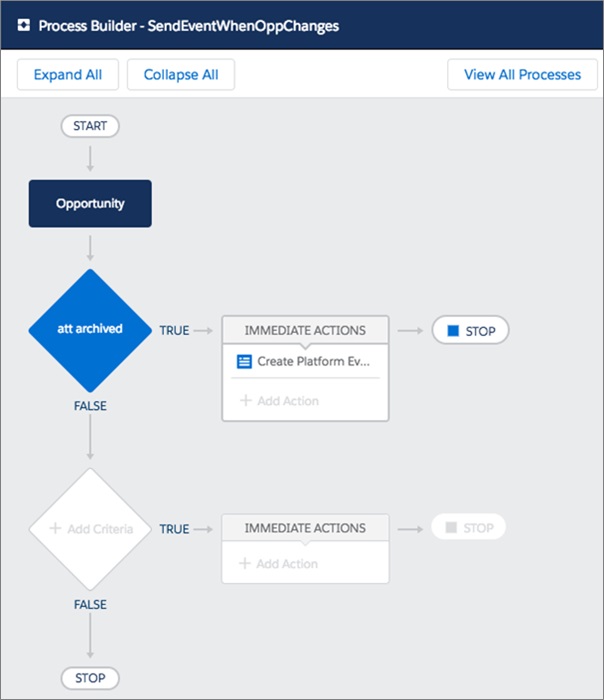

We then created a Process in the Salesforce Process Builder called “SendEventWhenOppChanges”. When an Opportunity Record is either created or updated, if the S3_archive_url field is not blank, we can publish an “OppCRUD” Platform Event with the OppId set to the OpportunityId from the Record that was created/updated.

The Process Builder makes it easy to respond to changes within Salesforce.

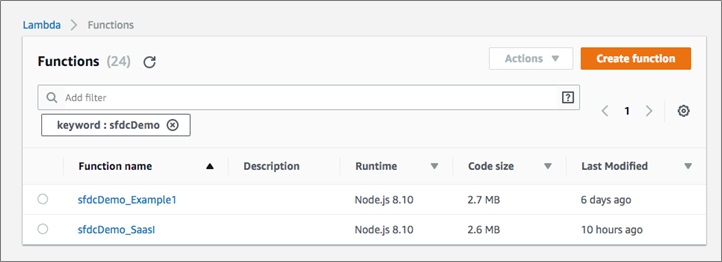

There are two Lambda functions in our solution. The first function, sfdcDemo_SaasI, subscribes to the Salesforce Platform Events and is set to the topic “OppCRUD__e”. It uses the OAuth mechanism to obtain a token, and we used the Node.js library “nforce” to make it easier to work with Salesforce APIs.

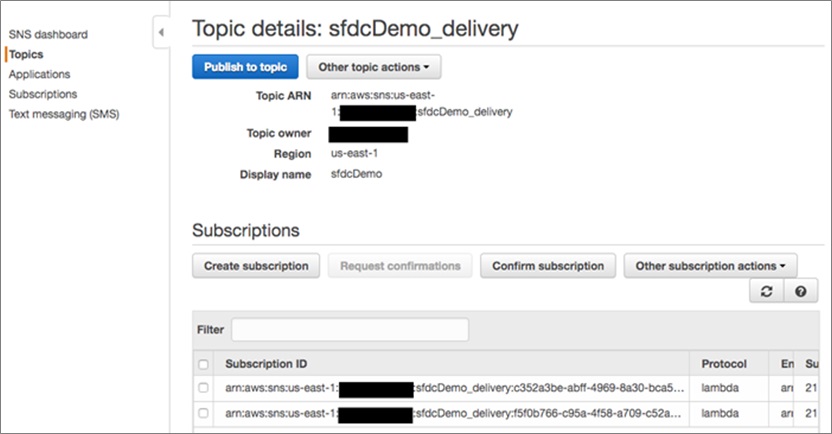

This function logs into Salesforce and receives an OAuth token, which is leveraged on every subsequent API action. Once an event is received from Salesforce, the Lambda function writes it to an SNS topic.
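As a rough illustration of the login step, the sketch below builds the token request in Python rather than the Node.js and nforce combination used in our demonstration. It assumes the OAuth 2.0 username-password flow against the standard `login.salesforce.com` token endpoint; the post does not state which flow was used, and the helper names are hypothetical.

```python
from urllib.parse import urlencode
from urllib.request import Request

# Standard Salesforce OAuth 2.0 token endpoint for production orgs.
TOKEN_URL = "https://login.salesforce.com/services/oauth2/token"


def build_token_request(client_id, client_secret, username, password):
    """Build (but do not send) a username-password OAuth token request."""
    form = urlencode({
        "grant_type": "password",        # assumption: username-password flow
        "client_id": client_id,          # Consumer Key of the Connected App
        "client_secret": client_secret,  # Consumer Secret of the Connected App
        "username": username,
        "password": password,
    })
    return Request(
        TOKEN_URL,
        data=form.encode("utf-8"),
        headers={"Content-Type": "application/x-www-form-urlencoded"},
        method="POST",
    )


def auth_header(token_response):
    """Turn the parsed JSON token response into the header reused on later calls."""
    return {"Authorization": "Bearer " + token_response["access_token"]}
```

Sending the request with `urllib.request.urlopen` and parsing the JSON body yields the `access_token` that `auth_header` attaches to each subsequent API call.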

A simple SNS topic can be used to make it easier for other AWS interactions to happen without having to connect to Salesforce directly. SNS supports delivery to various subscribers via pub/sub, which includes AWS Lambda.

In our demonstration, we sent it to only one Lambda, but this is where you can add additional functionality to the solution.
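The fan-out step might look like the following Python sketch. The publish call is passed in as a callable so the logic can be exercised without AWS credentials; in a real function it would typically be the `publish` method of a boto3 SNS client. The subject line and helper names are assumptions, not part of our demonstration code.

```python
import json


def build_publish_args(topic_arn, platform_event):
    """Return keyword arguments for an SNS publish call."""
    return {
        "TopicArn": topic_arn,
        "Subject": "OppCRUD",  # hypothetical subject line
        # Forward the payload unchanged so subscribers see what Salesforce sent.
        "Message": json.dumps(platform_event),
    }


def forward_event(platform_event, topic_arn, publish):
    """Publish one Platform Event; `publish` is e.g. boto3's sns_client.publish."""
    return publish(**build_publish_args(topic_arn, platform_event))
```

Because every subscriber receives the same message, adding functionality later only means subscribing another Lambda function to the topic.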

The second Lambda function sfdcDemo_Example is triggered by an SNS message to the topic sfdcDemo_delivery. The payload for the SNS message mirrors the payload from the Salesforce Platform Event. The Lambda function takes the Opportunity Id from the SNS message and queries for the relevant Opportunity Record.
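A minimal Python sketch of that lookup follows. The `Records[].Sns.Message` path is the standard shape of an SNS-triggered Lambda event; the inner `payload.OppId__c` structure and the list of Opportunity fields are assumptions based on the custom field described earlier.

```python
import json


def opportunity_ids(lambda_event):
    """Extract Opportunity Ids from an SNS-triggered Lambda event."""
    ids = []
    for record in lambda_event.get("Records", []):
        # SNS delivers the published message as a JSON string.
        message = json.loads(record["Sns"]["Message"])
        # Assumed message shape: {"payload": {"OppId__c": "..."}}
        opp_id = message.get("payload", {}).get("OppId__c")
        if opp_id:
            ids.append(opp_id)
    return ids


def opportunity_query(opp_id):
    """Build a SOQL query for one Opportunity; the field list is illustrative."""
    # Salesforce Ids are 15 or 18 alphanumeric characters; reject anything else
    # so the value can be embedded in the query string safely.
    if not (opp_id.isascii() and opp_id.isalnum() and len(opp_id) in (15, 18)):
        raise ValueError(f"not a Salesforce Id: {opp_id!r}")
    return ("SELECT Id, Name, StageName, Amount, CloseDate "
            f"FROM Opportunity WHERE Id = '{opp_id}'")
```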

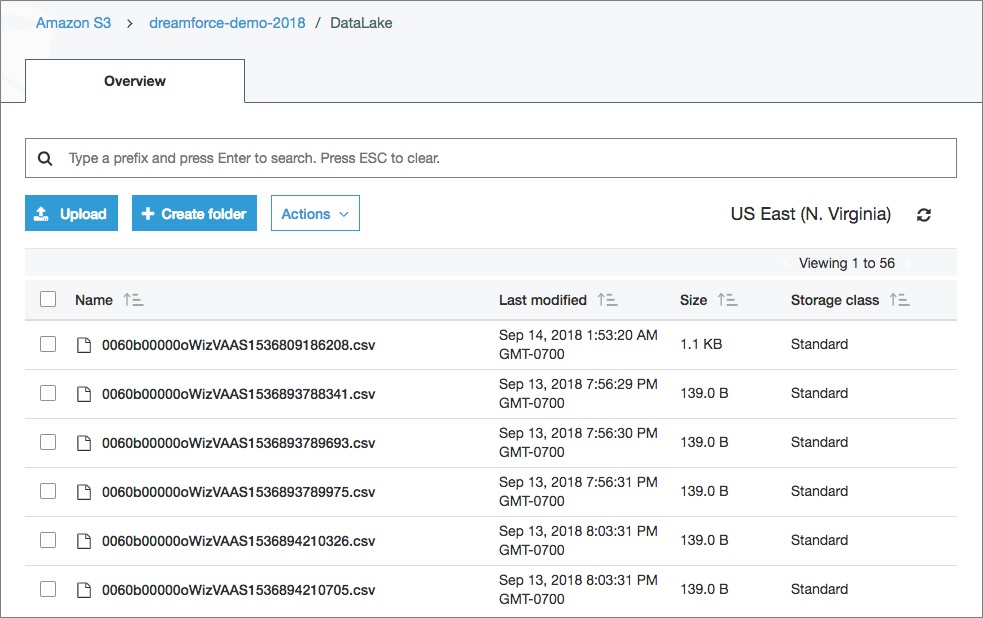

Once the Opportunity Record is updated, the Lambda function writes the updated Opportunity Record to an Amazon S3 bucket as a CSV (comma separated value) file named using the DataLake key + OpportunityId + a millisecond date timestamp.
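To make the naming scheme concrete, here is a small Python sketch of the key construction and CSV serialization. The underscore separator, the `.csv` suffix, and the function names are assumptions; only the three components of the name come from the description above.

```python
import csv
import io
import time


def archive_key(prefix, opportunity_id, now_ms=None):
    """Build the S3 key from the data lake prefix, Opportunity Id, and a ms timestamp."""
    if now_ms is None:
        now_ms = int(time.time() * 1000)
    return f"{prefix}{opportunity_id}_{now_ms}.csv"


def record_to_csv(record):
    """Serialize one Opportunity record (a flat dict) as a header row plus a data row."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=list(record), lineterminator="\n")
    writer.writeheader()
    writer.writerow(record)
    return buf.getvalue()
```

The millisecond timestamp keeps each write under a distinct key, so successive updates to the same Opportunity accumulate as separate files instead of overwriting each other.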

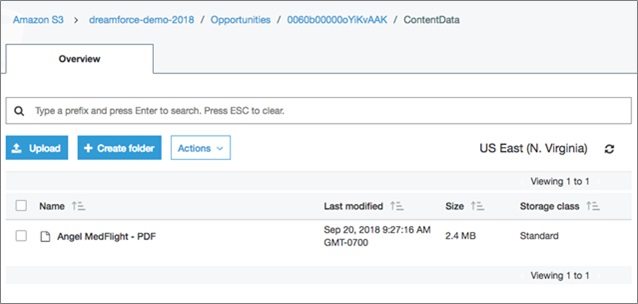

Next, the Lambda function queries the ContentDocumentLink table for the ContentDocumentId associated with the Opportunity Id. The function then queries the ContentDocument and determines the LatestPublishedVersionId, which is used to getContentVersionData.

This returns a buffer that is written directly to an Amazon S3 bucket with an Amazon S3 key as “Opportunities/opp_id/ContentData/attachmentname”.
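The two lookups and the resulting key can be sketched in Python as follows. `ContentDocumentLink.LinkedEntityId`, `ContentDocumentLink.ContentDocumentId`, and `ContentDocument.LatestPublishedVersionId` are standard Salesforce fields; the helper names and the Id validation are illustrative additions.

```python
import re

# Salesforce record Ids are 15 or 18 alphanumeric characters.
_SF_ID = re.compile(r"^[A-Za-z0-9]{15}(?:[A-Za-z0-9]{3})?$")


def _checked(sf_id):
    """Reject values that are not Ids before embedding them in SOQL."""
    if not _SF_ID.match(sf_id):
        raise ValueError(f"not a Salesforce Id: {sf_id!r}")
    return sf_id


def content_link_query(opportunity_id):
    """Find the documents linked to an Opportunity."""
    return ("SELECT ContentDocumentId FROM ContentDocumentLink "
            f"WHERE LinkedEntityId = '{_checked(opportunity_id)}'")


def latest_version_query(content_document_id):
    """Find the latest published version of a document."""
    return ("SELECT LatestPublishedVersionId, Title FROM ContentDocument "
            f"WHERE Id = '{_checked(content_document_id)}'")


def attachment_key(opportunity_id, attachment_name):
    """Build the S3 key Opportunities/<opp_id>/ContentData/<attachment name>."""
    # Keep only the final path component so a file name cannot escape the prefix.
    safe_name = attachment_name.replace("\\", "/").rsplit("/", 1)[-1]
    return f"Opportunities/{_checked(opportunity_id)}/ContentData/{safe_name}"
```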

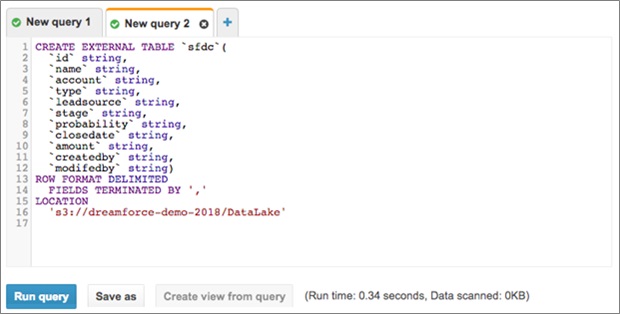

Any data in Amazon S3 can be queried with Amazon Athena, so we created an external table using the “CREATE EXTERNAL TABLE” query.

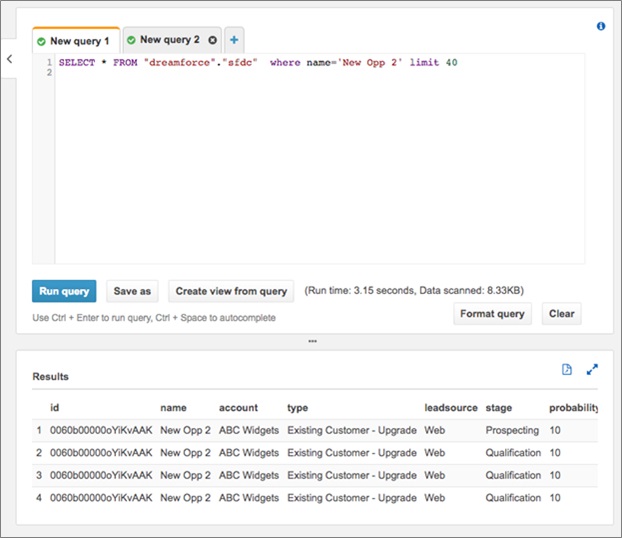

Next, we ran various queries against our archived Opportunities.
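For readers who have not defined an Athena table over CSV files before, the Python sketch below assembles a plausible DDL statement and one example query. The table name, column names, bucket location, and the choice of the OpenCSV SerDe are all assumptions; the actual statement we used appears only in the screenshot.

```python
def create_table_ddl(table, columns, s3_location):
    """Assemble a CREATE EXTERNAL TABLE statement for CSV files with a header row."""
    cols = ",\n  ".join(f"`{name}` {sql_type}" for name, sql_type in columns)
    return (
        f"CREATE EXTERNAL TABLE IF NOT EXISTS {table} (\n  {cols}\n)\n"
        "ROW FORMAT SERDE 'org.apache.hadoop.hive.serde2.OpenCSVSerde'\n"
        f"LOCATION '{s3_location}'\n"
        # Skip the header row that each archived CSV file starts with.
        "TBLPROPERTIES ('skip.header.line.count'='1')"
    )


# Hypothetical columns and bucket; all columns are strings for simplicity.
DDL = create_table_ddl(
    "opportunities",
    [("id", "string"), ("name", "string"), ("stagename", "string"), ("amount", "string")],
    "s3://example-bucket/DataLake/",
)

# Example analysis: how many archived Opportunity snapshots exist per stage.
EXAMPLE_QUERY = "SELECT stagename, count(*) AS snapshots FROM opportunities GROUP BY stagename"
```

Both strings can be pasted into the Athena query editor or submitted programmatically.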

Review: How We Solved for Scenario 1

Let’s take a look at what we did in Salesforce to complete the experience:

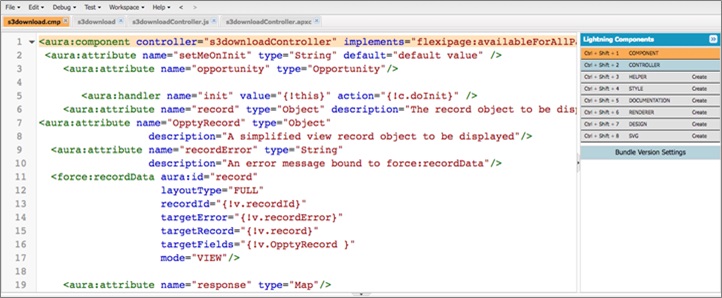

- In the Salesforce Developer Console, we created a Lightning Component for the Amazon S3 storage component.

- We created an Apex Controller to get the content of the Opportunity referred to in the Sales Console.

- We created a JavaScript helper to initialize the component and also handle the button click to download the file.

- Lastly, we created a Visualforce page that sets the security context to access the secure Amazon S3 bucket. The page generated a signed URL with a very short expiration and opened a separate window with the attachment shown.

The Visualforce page is called with a query string set to the bucket and key, and is then secured by Salesforce. The Visualforce page uses the AWS SDK for JavaScript to take the query string, parse out the bucket name and Amazon S3 key, and create a signed redirect URL to show the page with the attachment.
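The same parse-then-sign logic can be sketched in Python for illustration, although our page does this in the browser with the JavaScript SDK. The query parameter names `bucket` and `key` and the 60-second expiry are assumptions. The signing function is injected; with boto3 it would be the `generate_presigned_url` method of an S3 client.

```python
from urllib.parse import parse_qs


def parse_target(query_string):
    """Pull the bucket name and object key out of the page's query string."""
    params = parse_qs(query_string.lstrip("?"))
    try:
        return params["bucket"][0], params["key"][0]
    except KeyError as missing:
        raise ValueError(f"missing query parameter: {missing}") from None


def signed_redirect(query_string, presign, expires_in=60):
    """Return a short-lived signed URL for the requested object.

    `presign` is e.g. boto3's s3_client.generate_presigned_url.
    """
    bucket, key = parse_target(query_string)
    return presign(
        "get_object",
        Params={"Bucket": bucket, "Key": key},
        ExpiresIn=expires_in,  # seconds; keep this short
    )
```

A short expiry limits how long a copied link remains usable outside of Salesforce.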

The final product is a component that appears only when the attachment has been archived.

Scenario 2: Meeting Security Requirements

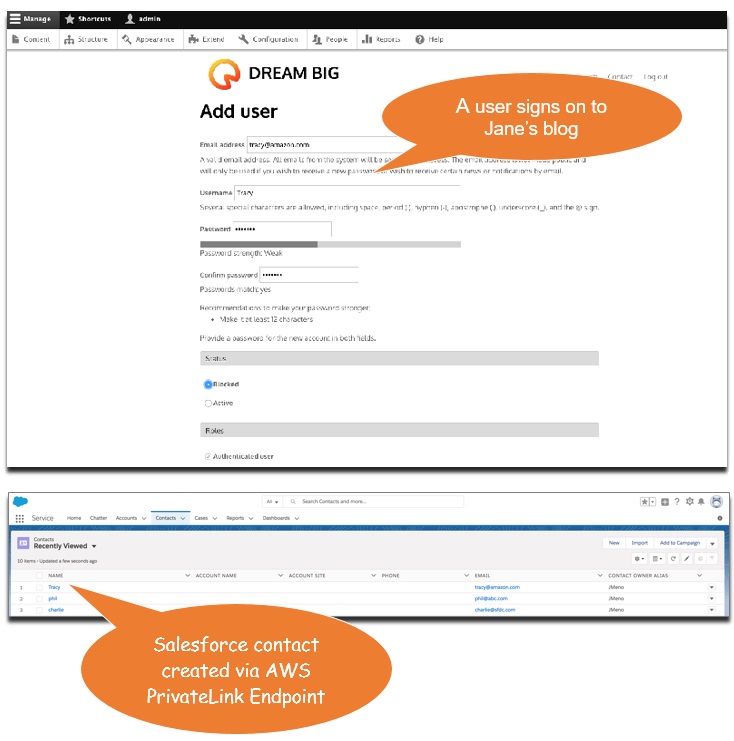

Jane is a web developer for Acme who is working on an assignment to deliver an external-facing blog portal that would be leveraged by Acme’s employees to communicate their engineering and innovation thoughts to the world.

A critical requirement stemming from Acme’s marketing team is to capture blog visitor information, especially the visitors who register on the site for updates, as Contacts within Acme’s Salesforce instance for lead generation and opportunity identification purposes.

While designing the solution, Jane hosts Drupal (an open source web Content Management System) on an Amazon EC2 instance to create the blog solution. She struggles to satisfy the security requirements from Acme’s Net-Sec team, which demands a secured end-to-end connectivity and refuses to allow connections to Acme’s Salesforce instance from untrusted networks.

Proposed Solution

Jane’s problem can also be addressed by the proposed architecture in Figure 1.

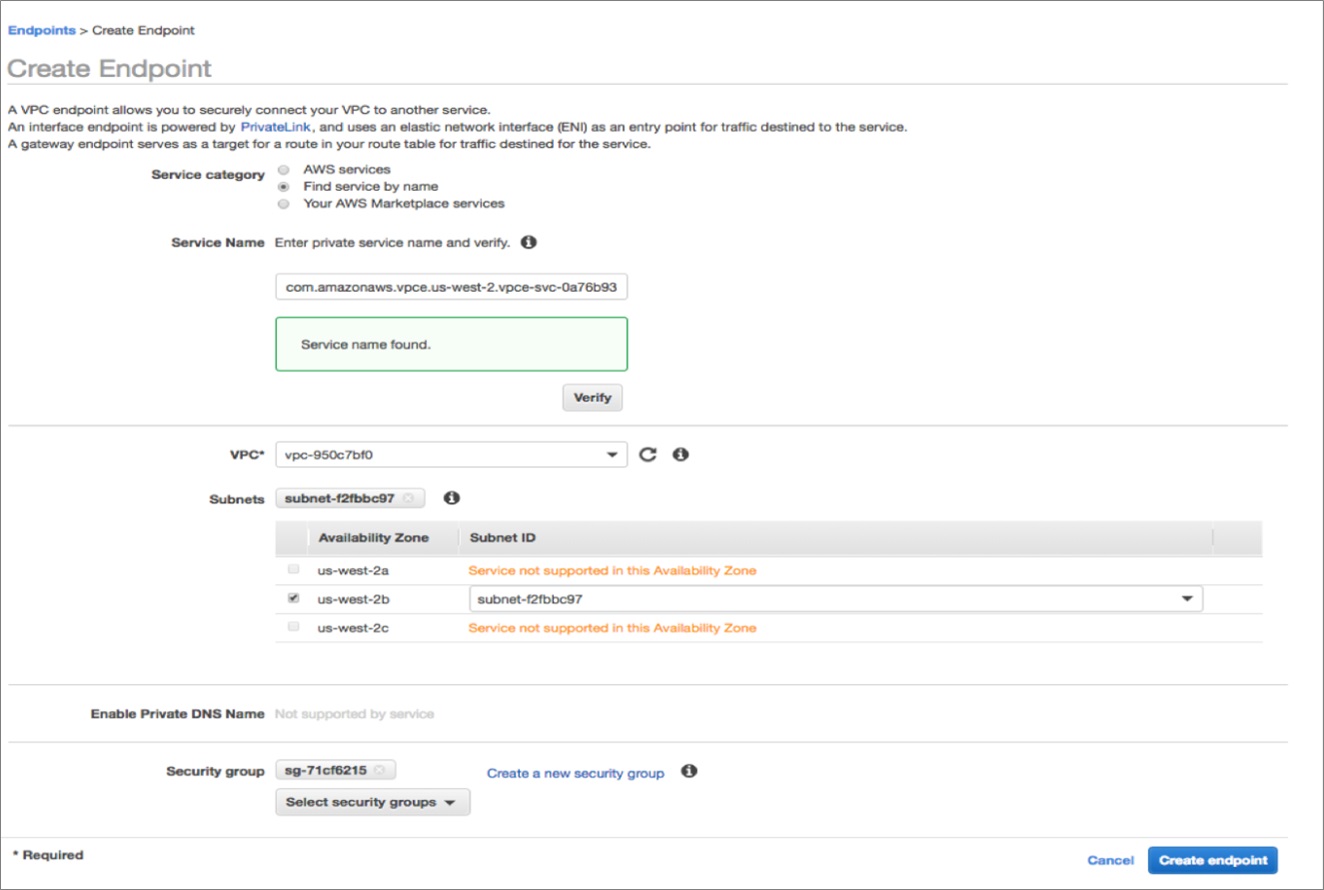

She decides to use the Salesforce plugin within Drupal to integrate Drupal with Salesforce. The blog page she created runs in Acme’s VPC on an Amazon EC2 instance and allows readers to input their contact information. To satisfy the network security requirements, Jane creates an AWS PrivateLink Endpoint within her VPC that is mapped to Acme’s Salesforce Service advertised by the SaaS integration service.

Implementation

The first step is to create a VPC Endpoint in Jane’s VPC. After selecting the appropriate service, click “Create endpoint”.

With this setup, Jane avoided the need to route integration traffic over the internet and also supported a strong security posture wherein Acme’s Salesforce instance only needs to trust traffic originating from the SaaS integration service.
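The same endpoint could also be created programmatically. The Python sketch below only assembles the arguments for the EC2 `create_vpc_endpoint` API call; every identifier in the usage example (VPC, subnet, security group, and endpoint service name) is a placeholder, not a value from our environment.

```python
def endpoint_args(vpc_id, service_name, subnet_ids, security_group_ids):
    """Assemble arguments for creating an interface VPC endpoint."""
    return {
        "VpcEndpointType": "Interface",  # PrivateLink endpoints are interface endpoints
        "VpcId": vpc_id,
        "ServiceName": service_name,     # the endpoint service advertised by the provider
        "SubnetIds": list(subnet_ids),
        "SecurityGroupIds": list(security_group_ids),
    }


# Placeholder identifiers for illustration only.
ARGS = endpoint_args(
    "vpc-0123456789abcdef0",
    "com.amazonaws.vpce.us-east-1.vpce-svc-0123456789abcdef0",
    ["subnet-0123456789abcdef0"],
    ["sg-0123456789abcdef0"],
)
# With boto3 installed and credentials configured:
#   boto3.client("ec2").create_vpc_endpoint(**ARGS)
```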

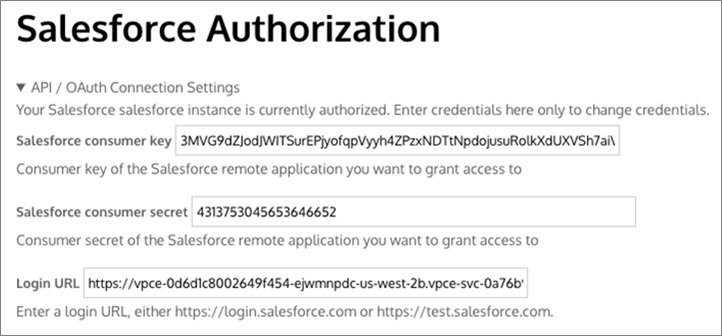

To access Salesforce APIs from within her app, all that Jane needs to do is to reconfigure it with the VPC Endpoint she just created.

Now, when a blog reader inputs their contact details in Jane’s application, a Salesforce contact is created by the application over the AWS PrivateLink Endpoint.
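Jane’s application relies on the Drupal plugin for this call, but the underlying request is simple enough to sketch in Python. The example builds, without sending, a Salesforce REST API request that creates a Contact. The endpoint hostname, the API version, and the function name are assumptions.

```python
import json
from urllib.request import Request


def build_contact_request(base_url, access_token, first_name, last_name, email,
                          api_version="v45.0"):
    """Build a REST request that creates a Contact through the private endpoint."""
    url = f"{base_url.rstrip('/')}/services/data/{api_version}/sobjects/Contact/"
    body = {"FirstName": first_name, "LastName": last_name, "Email": email}
    return Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# base_url would be the DNS name of the VPC Endpoint (hypothetical value here),
# so the request never leaves the AWS network.
REQUEST = build_contact_request(
    "https://vpce-example.internal", "TOKEN", "Ada", "Doe", "ada@example.com"
)
```

Only the base URL differs from a call made over the public internet, which is why reconfiguring the application is all that is required.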

Review: How We Solved for Scenario 2

Let’s take a look at the steps we took to complete the experience:

- In the AWS Console, we created a VPC Endpoint powered by AWS PrivateLink to which we will send our requests so the traffic is routed privately via the AWS network.

- We replaced the original login URL within the Salesforce authorization of the application to point to the new VPC Endpoint.

- We sent our Add Contact request to Salesforce using the new VPC Endpoint we created.

- Lastly, we can see the new contact record is added in Salesforce.

Conclusion

Both scenarios in this post demonstrate the value that enterprises can realize by leveraging AWS services to integrate customer data between AWS and Salesforce.

Closer integration between AWS and Salesforce opens up a plethora of opportunities for enterprises to do more with their customer data and develop new and unique ways of serving their customers. The announcement of our expanded strategic alliance with Salesforce and the new product integrations we are building will go further in providing pre-built support for the common patterns customers want to implement.

We can’t wait to demonstrate how the announced product integrations will add more out-of-the-box functionality and support for advanced scenarios.

Check back on this blog for the open source code and configurations that you can use to implement these integrations or extend them to create your own.

Salesforce – APN Partner Spotlight

Salesforce is an AWS Competency Partner. They are a leading customer relationship management (CRM) platform, and the announcement from AWS and Salesforce about our extended strategic alliance underscores the opportunity to help enterprises get more out of their customer data.
