AWS Partner Network (APN) Blog

Deploying a High-Volume Application on AWS with Kubernetes

By Kiril Dubrovsky, Senior Solutions Architect at Mission

When I work with customers, I like to think of myself as House, the TV doctor who can solve strange and unique challenges.

Mission Cloud Services client Your Call Football (YCF) presented one of those challenges: deploying a high-volume mobile application on Amazon Web Services (AWS) that could handle large traffic spikes at known times.

This was not your typical auto scaling situation. To meet this challenge, we turned to Kubernetes, an open-source system that handles automated deployments, scaling, and management of containers.

In this post, I will walk through how Mission helped YCF get into game shape and scale their application by building out the infrastructure as code, determining the right instance type for the job, prepping the load balancers, and employing Amazon Elastic Container Service for Kubernetes (Amazon EKS).

Mission Cloud Services is an AWS Partner Network (APN) Advanced Consulting Partner with AWS Competencies in DevOps, Healthcare, and Life Sciences. Mission is also a member of the AWS Managed Service Provider (MSP) Partner Program.

Overview

Your Call Football is a wholly unique football experience, where fans get to call plays in real-time, and see them run on the field by real players. Fans can stream the game live from anywhere, vote by phone for the play they want to see executed, and win cash prizes based on their play-calling prowess.

The company came to us with a very specific challenge regarding their application. They were developing a product that would either have a handful of active users at a time, or potentially 100,000 active users—and nothing in between.

This wasn’t a simple situation of auto scaling for production because the app had to work at a large scale at very specific, scheduled times.

The other wrench—and it’s a big one—is that the voting would happen simultaneously, in a matter of seconds, for everyone involved. Any lag, delay in service, or timed-out request would defeat the whole purpose and represent a complete failure of the system.

These tight performance requirements meant that even auto scaling could be too slow. So, we had a checklist of what we had to solve:

- Mobile application infrastructure.

- Real-time, high availability.

- High load at predictable times.

- Short bursts of usage.

- No delay is acceptable.
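
The “high load at predictable times” item is what separates this from reactive auto scaling: capacity can be raised on a schedule before the first request arrives. Below is a minimal sketch of that idea, not YCF’s actual tooling. The node counts, warm-up window, and game date are all hypothetical values chosen for illustration.

```python
from datetime import datetime, timedelta

# Hypothetical values for illustration; none come from the YCF deployment.
BASELINE_NODES = 2
PEAK_NODES = 40
WARM_UP = timedelta(minutes=30)    # raise capacity this long before kickoff
COOL_DOWN = timedelta(minutes=15)  # hold capacity this long after the game


def desired_nodes(now, events):
    """Return the node count to run at `now`, given (start, end) event windows.

    Capacity is raised WARM_UP before each event and held until COOL_DOWN
    after it ends, so it is already in place when traffic arrives.
    """
    for start, end in events:
        if start - WARM_UP <= now <= end + COOL_DOWN:
            return PEAK_NODES
    return BASELINE_NODES


# A made-up game window used only to exercise the function.
schedule = [(datetime(2030, 5, 3, 19, 0), datetime(2030, 5, 3, 22, 0))]

print(desired_nodes(datetime(2030, 5, 3, 12, 0), schedule))   # hours before the game
print(desired_nodes(datetime(2030, 5, 3, 18, 45), schedule))  # inside the warm-up window
```

The first call returns the baseline count and the second returns the peak count, because 18:45 falls inside the 30-minute warm-up window before the 19:00 start.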

Now that we knew our challenge, it was time to get building.

Getting Started with Infrastructure as Code

The first step was building out the infrastructure as code (IaC). We knew YCF would be a perfect fit for IaC because pieces needed to be enabled quickly.

Infrastructure as code is something Mission tries to do for all of our clients. When the networks, servers, and services on AWS are defined in code, the result is a documented and automated mechanism for deploying and managing the infrastructure.

Next, we had to find the right tools for this. We prescribed Terraform, Kubernetes, Amazon Relational Database Service (Amazon RDS), and Amazon ElastiCache.

I have written extensively about the benefits of Amazon RDS—it’s the best database solution out there. As for ElastiCache, we needed a way to ensure seamless deployment of in-memory data across thousands of users—otherwise, all the voting would be for nothing.

ElastiCache took load off the database, but the final piece of the puzzle was Kubernetes. The main benefit of Kubernetes is that it’s scalable and backed by a large community of users who regularly add new tools. I also find it fast and easy to get a Kubernetes cluster up and running, and clusters are highly configurable as well.
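
The post does not describe how the cache was wired in. One common way an in-memory layer takes write pressure off a database during a voting burst is to tally votes in memory and persist only the aggregate. The sketch below shows that pattern with plain Python stand-ins: the `VoteTally` class is hypothetical, a `Counter` stands in for the cache, and a dict stands in for the database.

```python
from collections import Counter


class VoteTally:
    """Accumulate votes in memory and write one aggregate value per play."""

    def __init__(self, store):
        self._counts = Counter()  # stand-in for an in-memory cache
        self._store = store       # stand-in for the database

    def vote(self, play_id):
        # Each vote touches only memory, never the database.
        self._counts[play_id] += 1

    def flush(self):
        """Persist the aggregated counts, reset the tally, return write count."""
        writes = 0
        for play_id, count in self._counts.items():
            self._store[play_id] = self._store.get(play_id, 0) + count
            writes += 1
        self._counts.clear()
        return writes


database = {}
tally = VoteTally(database)
for play in ["run"] * 3 + ["pass"] * 5:
    tally.vote(play)

writes = tally.flush()
print(writes, database)
```

Eight votes result in two database writes, one per distinct play. At 100,000 simultaneous voters the same ratio is what keeps the database out of the hot path.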

The Right Instance Type for the Job

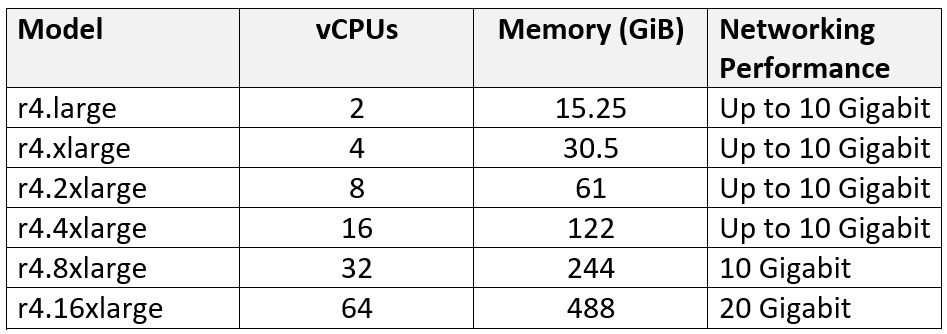

To build this system, we needed to do some extensive instance research. AWS offers a broad choice of Amazon Elastic Compute Cloud (Amazon EC2) instances that serve different use cases.

Benchmarking helps determine which instance type performs best. We found that the r4.large instance type performed better than T2 instances and provided optimal networking for our solution.

With Amazon RDS on General Purpose SSD storage, baseline I/O scales with volume size, so you need roughly a terabyte of storage to max out your I/O. With anything less than a terabyte, you run the risk of hitting I/O walls in the Amazon Elastic Block Store (Amazon EBS) I/O credit and burst economy. We learned this the hard way.

While we were trying to handle this with a smaller 20 GB database volume, we hit I/O speed limits. By resizing the volume to one terabyte, which is far larger than we theoretically needed, we were able to use the additional I/O without paying for Provisioned IOPS, which is a lot more expensive.
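
The arithmetic behind that resize can be sketched as follows. The constants are the published figures for General Purpose SSD (gp2) volumes. Applying them here is an assumption, since the post only says “SSD” and does not name the volume type.

```python
# Published gp2 figures; assumed to apply to the volumes discussed in the post.
IOPS_PER_GIB = 3            # baseline IOPS earned per GiB of volume size
MIN_BASELINE_IOPS = 100     # floor for very small volumes
MAX_BASELINE_IOPS = 16_000  # ceiling for very large volumes
BURST_IOPS = 3_000          # rate small volumes can burst to while credits last
CREDIT_BUCKET = 5_400_000   # I/O credits in a full bucket


def baseline_iops(size_gib):
    """Sustained IOPS a volume of this size gets without spending credits."""
    return min(MAX_BASELINE_IOPS, max(MIN_BASELINE_IOPS, IOPS_PER_GIB * size_gib))


def burst_seconds(size_gib):
    """Seconds a full bucket sustains BURST_IOPS, or None if no burst is needed."""
    base = baseline_iops(size_gib)
    if base >= BURST_IOPS:
        return None  # baseline already meets the burst rate
    # Credits drain at the burst rate and refill at the baseline rate.
    return CREDIT_BUCKET / (BURST_IOPS - base)


for size in (20, 1000):
    print(size, baseline_iops(size), burst_seconds(size))
```

For a 20 GiB volume this gives a 100 IOPS baseline and about 31 minutes of burst from a full bucket, after which throughput falls back to the baseline. At 1,000 GiB the baseline already equals the burst rate, so credits stop mattering.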

Figure 1 – Different r4.large instance types.

Iteration and Improvement to Get High Volume

We pre-warmed the Elastic Load Balancers ahead of each scheduled spike, so they were already prepared for the massive load and users got a smoother experience.

Service limits were another area of constant improvement as we tested out a variety of options before finding the instance type that worked best. YCF’s performance requirements pushed some AWS services pretty hard, and we tested some of the theoretical limits of the AWS platform.

I’ve heralded the benefits and value of AWS Business Support—it’s one of the most essential tools for anyone running on AWS. They deserve another shout-out on this one, as they came through multiple times during this project. We knew our infrastructure would work when the time came because of the confidence AWS support supplied.

Kubernetes Design in Review

Kubernetes is like surfing—you just have to grab on and ride the waves as they come. It’s self-healing, and I’m a big fan of that. Honestly, it’s such a cool tool that there are still moments when it feels like magic to me.

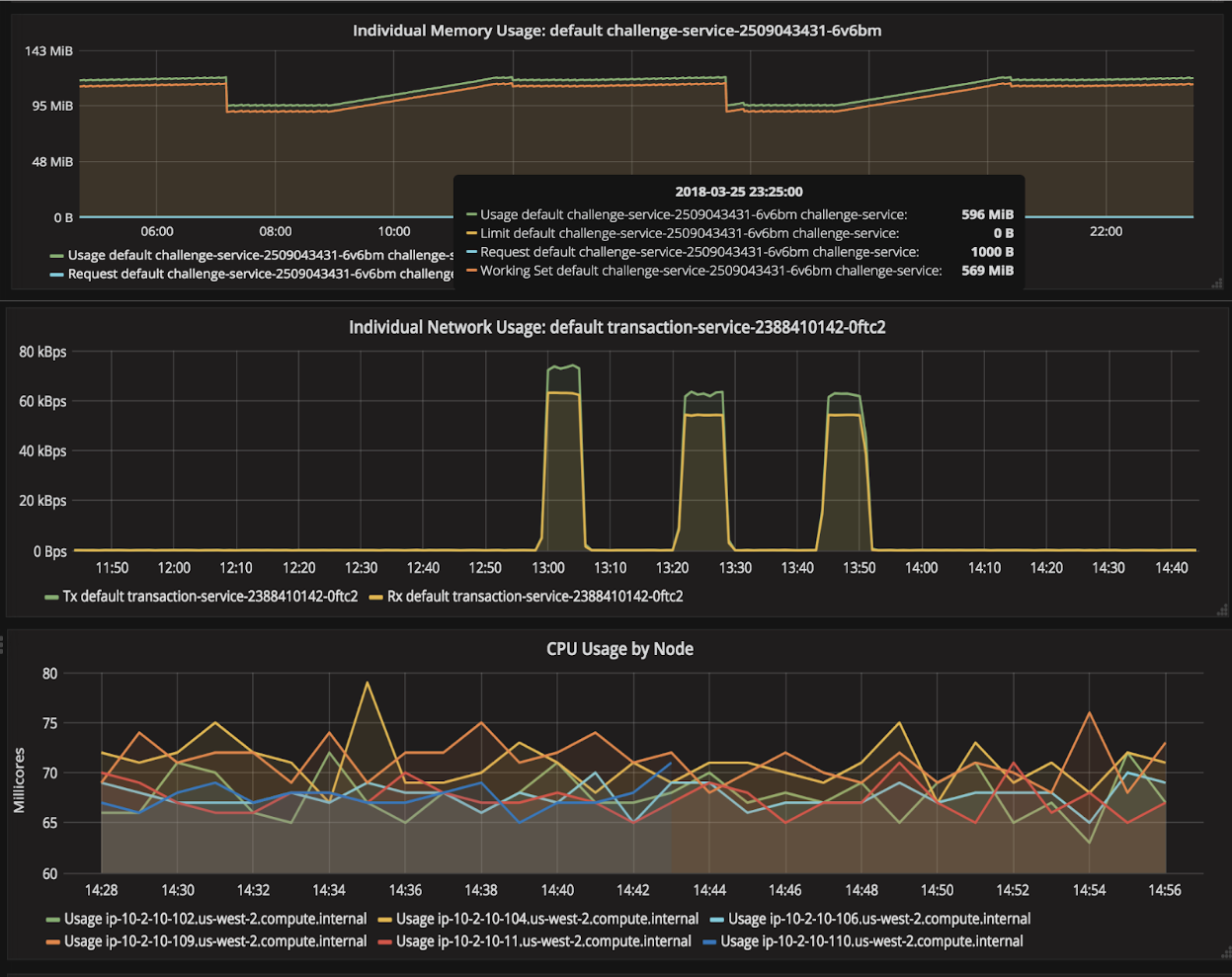

Figure 2 shows some examples of the Grafana performance graphs. Because there is so much information available, reading them can be a little like finding a needle in a haystack.

Figure 2 – Examples of Grafana performance graphs.

You can’t just kick back and let Kubernetes do all your work for you, though. We used kops (Kubernetes Operations) to generate the Terraform templates we needed to create the clusters. This was a good solution because it allowed us to use variables for the values we were continually changing and to automate rolling out those changes.

After the first season ended with YCF, we took some time to look at improvement opportunities. Luckily, Amazon EKS was released around this time. We migrated and consolidated the Kubernetes clusters we built into a single Amazon EKS cluster. This showed gains in performance of the master nodes, or Kubernetes control plane.

We also saw improvements in operability and cost due to the consolidation of the clusters, while maintaining the key performance metrics of 100,000 concurrent users and 10 millisecond voting timings.

Just as we replaced T2 instance types with R4s, we applied the same iteration to the Amazon RDS and ElastiCache clusters.

Summary

In this post, we describe how Mission’s deft implementation of Amazon EKS, infrastructure as code, Elastic Load Balancers, and other successful strategies helped ensure that Your Call Football’s backend remains poised in the pocket even when blitzed by thousands of users.

By leveraging Kubernetes and other solutions to optimize the speed and performance of YCF’s cloud environment, the app provides thousands of football fans with a fun, unique, and issue-free gaming experience—all in real-time.

The content and opinions in this blog are those of the third party author and AWS is not responsible for the content or accuracy of this post.
