AWS Partner Network (APN) Blog

Diving Deep on the Foundational Blocks of VMware Cloud on AWS Outposts

By Aarthi Raju, Sr. Manager, Solutions Architecture – AWS

By Schneider Larbi, Sr. Partner Solutions Architect – AWS

As customers modernize their workloads and migrate to the cloud, some choose, depending on their use cases, to migrate fully and land their applications on Amazon Elastic Compute Cloud (Amazon EC2), containers, or serverless technologies.

Customers with vSphere workloads have been using VMware Cloud on AWS to migrate applications into the cloud in a fast and seamless manner.

VMware Cloud on AWS allows customers to migrate their workloads faster without having to refactor or change any application code or logic. As our team has worked with customers migrating their vSphere workloads to the cloud, we got feedback on specific workloads that need to stay on premises or at the edge due to the need for low latency, local data processing, or data sovereignty and compliance requirements.

To meet these use cases for our vSphere customers, Amazon Web Services (AWS) launched VMware Cloud on AWS Outposts, which enables organizations to run these workloads on premises on a fully managed VMware Cloud on AWS stack.

VMware Cloud on AWS Outposts allows AWS to extend the boundaries of AWS Availability Zones (AZs) to bring VMware’s software-defined data center (SDDC) powered by the vSphere foundation from the AWS Region to the edge. The service is delivered as racks that customers can use to run vSphere workloads on premises using cloud operating models.

Customers can now run legacy applications that could not otherwise be migrated to the cloud due to latency, data residency, and local data processing requirements. Additionally, customers have the ability to run vSphere workloads on a fully-managed solution in locations without AWS Regions.

In this post, we will dive deep into the foundational blocks of VMware Cloud on AWS Outposts and how customers and partners can leverage these to scale and modernize vSphere workload deployment on premises.

What is VMware Cloud on AWS Outposts?

AWS Outposts brings AWS infrastructure, services, and operating models to customers on premises. Think of it as a logical construct AWS uses to pool capacity from one or more racks of servers for customer consumption.

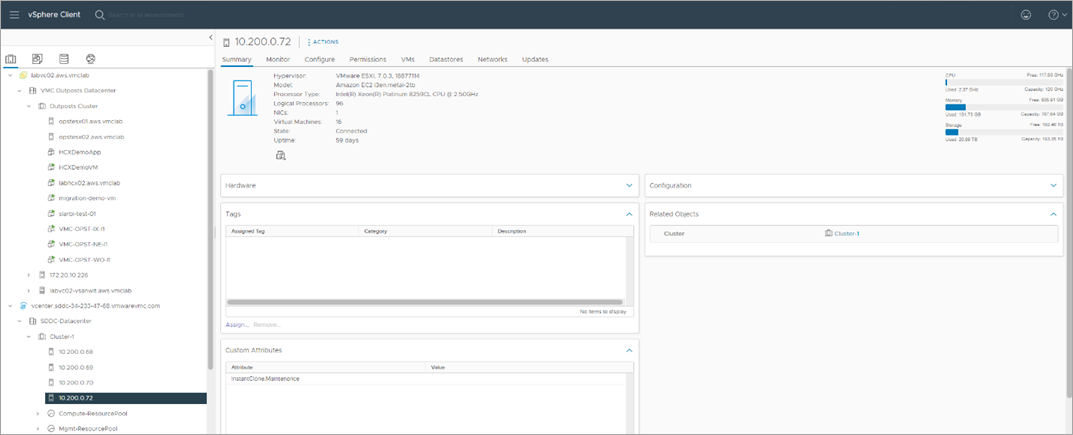

In the context of VMware Cloud on AWS Outposts, we run VMware SDDC powered by ESXi, vSAN as software-defined storage, NSX as software-defined networking, and vCenter for central management on the Outposts racks with connectivity back to a parent AWS Region.

VMware Cloud on AWS Outposts maintains the same SDDC components used in the cloud. The architecture below provides a logical overview of how it looks.

Figure 1 – VMware Cloud on AWS Outposts architecture.

The illustration above shows the logical representation of a deployed AWS Outpost service. The architecture indicates how every logical Outpost comprises racks that contain compute, storage, and networking components and has connectivity back to an AZ in an AWS Region through the use of a service link.

This shows that VMware Cloud on AWS Outposts is not designed to operate as standalone racks, independently of its connectivity back to the Region. Rather, it’s treated as part of our Availability Zones, logically extended to customer sites through the service link. AWS fully manages the underlying infrastructure running the SDDC, and VMware manages all SDDC constructs on the rack.

Compute

At launch, VMware Cloud on AWS Outposts uses i3en.metal instances powered by Amazon EC2. These instances are hyper-converged instances, meaning the server provides compute together with storage and networking.

The instances are bare metal hosts running ESXi. Each comes with an Intel Xeon Cascade Lake processor with 48 cores at 2.5 GHz and hyperthreading enabled by default, providing a total of 96 logical CPUs per node.

This provides plenty of CPU power to run CPU-intensive vSphere workloads at the edge with low latency. Each node also provides 768 GiB of memory.
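To make the per-node figures above concrete, here is a minimal Python sketch that derives the logical CPU count and adds up raw CPU and memory across a set of nodes. The names `NodeSpec` and `cluster_totals` are hypothetical helpers for illustration only, and the totals are raw figures that ignore any hypervisor or management overhead.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NodeSpec:
    """Per-node figures quoted in this post for an i3en.metal host."""

    physical_cores: int = 48
    threads_per_core: int = 2  # hyperthreading enabled by default
    memory_gib: int = 768

    @property
    def logical_cpus(self) -> int:
        # 48 physical cores x 2 threads per core = 96 logical CPUs
        return self.physical_cores * self.threads_per_core


def cluster_totals(node_count: int, spec: NodeSpec = NodeSpec()) -> dict:
    """Sum raw logical CPUs and memory across the deployed nodes.

    Illustrative only: overhead reserved by the platform is not modeled.
    """
    if node_count < 1:
        raise ValueError("node_count must be at least 1")
    return {
        "logical_cpus": node_count * spec.logical_cpus,
        "memory_gib": node_count * spec.memory_gib,
    }
```

For a three-node deployment, `cluster_totals(3)` adds up to 288 logical CPUs and 2,304 GiB of memory before any overhead is taken into account.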

Additionally, every configuration ordered by customers comes with a spare node, or “dark capacity.” This extra node is used for elastic distributed resource scheduling (eDRS) scale out and lifecycle management activities. For example, when there is host degradation, we bring up the spare capacity until the technical team gets onsite to replace the node.

Within the rack, there are four M5 EC2 instances or rack servers that serve as management servers for the entire Outpost service. These instances allow AWS to remotely manage underlying hardware components in the rack.

Additionally, the M5 instances in the rack allow the SDDC software to be downloaded from the AWS Region and deployed to the racks. The illustration below shows the logical representation of a six-node rack.

Figure 2 – VMware Cloud on AWS Outposts compute structure.

VMware Cloud on AWS Outposts is designed to allow customers to scale their SDDC nodes up or down with a simple click or through the use of VMware APIs. Depending on the number of nodes ordered in the rack, customers can use them as they see fit.

For example, if customers purchase a rack with six nodes, they can start by deploying three nodes—the base nodes required for SDDC deployment on VMware Cloud on AWS Outposts—and scale up by adding more nodes until they consume all of the nodes purchased in the rack.
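The scaling rule described above can be sketched as simple bookkeeping. This is a hypothetical model, not the VMware API: `RackCapacity` and `scale` are names invented for illustration, and treating three nodes as the floor for scale-in is our assumption based on the base node count mentioned in the text.

```python
MIN_SDDC_NODES = 3  # base node count for an SDDC, per this post


class RackCapacity:
    """Tracks how many of the purchased nodes are deployed in the SDDC."""

    def __init__(self, purchased_nodes: int, deployed_nodes: int = MIN_SDDC_NODES):
        if purchased_nodes < MIN_SDDC_NODES:
            raise ValueError("a rack needs at least the three base nodes")
        if not MIN_SDDC_NODES <= deployed_nodes <= purchased_nodes:
            raise ValueError("deployed_nodes is outside the allowed range")
        self.purchased_nodes = purchased_nodes
        self.deployed_nodes = deployed_nodes

    @property
    def unused_nodes(self) -> int:
        """Purchased nodes that are not yet part of the SDDC."""
        return self.purchased_nodes - self.deployed_nodes

    def scale(self, delta: int) -> int:
        """Add (positive delta) or remove (negative delta) nodes."""
        target = self.deployed_nodes + delta
        if target > self.purchased_nodes:
            raise ValueError("cannot exceed the nodes purchased in the rack")
        if target < MIN_SDDC_NODES:
            # Assumption: the base node count is also the scale-in floor.
            raise ValueError("cannot go below the base node count")
        self.deployed_nodes = target
        return self.deployed_nodes
```

With a six-node rack, `RackCapacity(6)` starts at three deployed nodes, `scale(3)` consumes the remaining three, and any further scale-out request is rejected.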

Storage

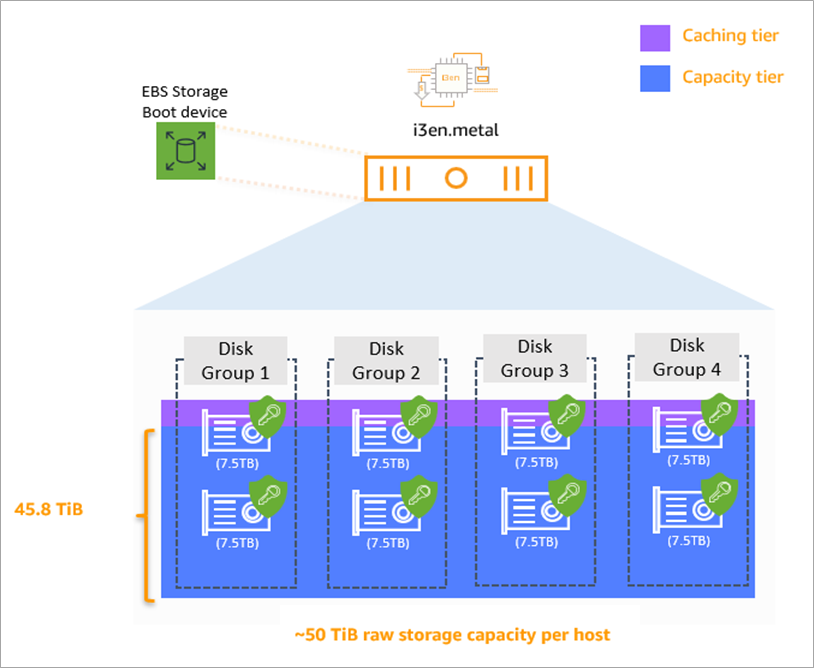

The primary storage that backs VMware Cloud on AWS Outposts comes from the internal dense non-volatile memory express (NVMe) SSD instance storage optimized for low latency, high random I/O performance, and high sequential disk throughput within the i3en.metal bare metal instances.

Each node provides 50TiB raw storage and 45.8TB of usable storage space. These drives are consumed and used by VMware vSAN to provide storage for virtual machine (VM) consumption within the rack.

Each of the NVMe SSD drives is encrypted within the bare metal instances. Additionally, vSAN adds another layer of encryption using AWS Key Management Service (AWS KMS), providing encryption for data at rest. Users can apply VMware’s storage policy-based management (SPBM) VM storage policies at the individual virtual disk level. Below, see how a single i3en.metal node is designed.

Figure 3 – i3en.metal vSAN storage design.

In this design, we reserve a portion of each SSD drive for the vSAN caching tier and the rest for the capacity tier. In some vSAN configurations, customers use mixed disk types: fast disks for cache and capacity disks for running workloads.

For VMware Cloud on AWS Outposts, all of the disks used for vSAN are SSDs, so performance is not impacted. It’s designed to run almost all workloads that can be virtualized.

Networking

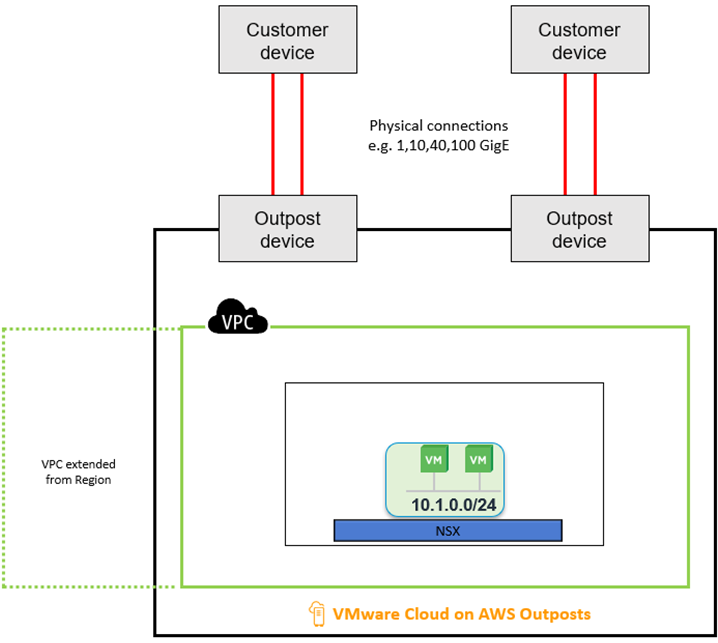

Every VMware Cloud on AWS Outpost has connectivity from on premises back to a parent AZ within an AWS Region. The underlying networking that powers VMware Cloud on AWS Outposts is the Amazon Virtual Private Cloud (VPC). This network construct operates on the AWS Cloud and the Outpost service connects to it.

The VPC is stretched from the cloud to on premises over the service link. This allows the underlying Outposts components to be deployed into the VPC on premises, so customers can consume the AWS Cloud closer to them.

You can think of the VPC networking as the uplink network for the bare metal instances within the Outpost rack. With the underlying VPC, NSX is put on the SDDC to abstract the workload networks from the underlying infrastructure management networks.

The illustration below shows the logical configuration of how an Outpost looks from the network design perspective.

Figure 4 – Logical VMware Cloud on AWS Outposts representation.

Local Connectivity

Customers can seamlessly integrate their on-premises networks with VMware Cloud on AWS Outposts. We configure physical connections from the Outpost to your network devices. The architecture below provides a logical representation of how it looks.

Figure 5 – Physical connections for VMware Cloud on AWS Outposts.

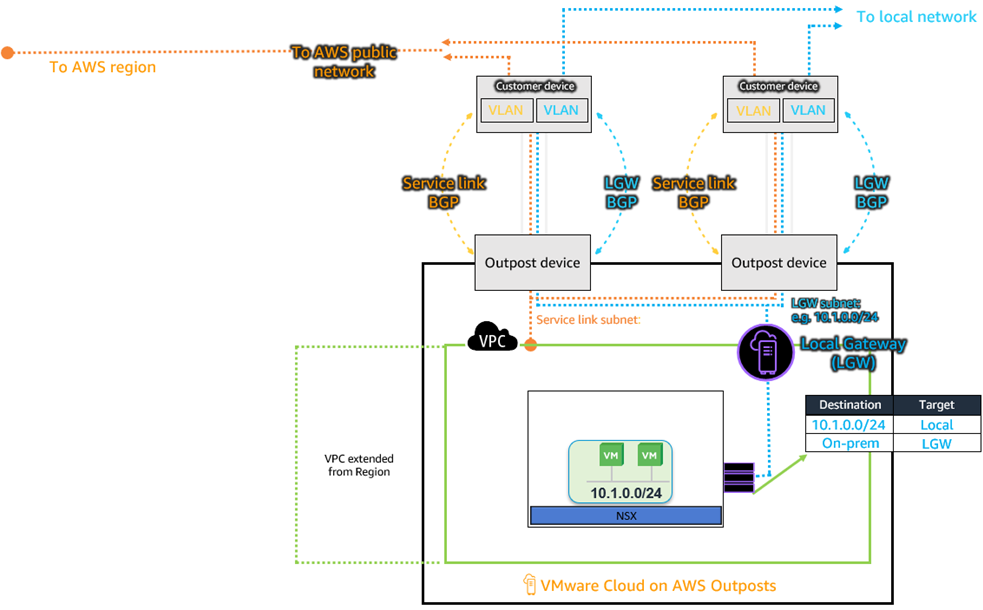

With the physical connection in place, dedicated VLANs are allocated from the customer network and applied across the customer network devices and the Outpost network device to configure link aggregation using Link Aggregation Control Protocol (LACP). These VLANs are dedicated to service link traffic to the Region and to local gateway (LGW) traffic, which is traffic destined for the on-premises networks. Customers provide the VLANs to support the configuration.

Figure 6 – Link aggregation between customer network and Outpost network devices.

With the physical links and link aggregation configured, the final step is to configure route exchange between the Outpost network devices and customer devices using Border Gateway Protocol (BGP). Before configuring the BGP session, you’ll need to provide an IP range of at least /26 for the service link; this range can consist of public IPs or, if private, must have access to the internet.

The service link range will be split into two contiguous ranges and advertised across the customer devices and the Outpost network devices. For the local gateway VLAN, no network ranges need to be allocated for VMware Cloud on AWS Outposts. Logical networks from the SDDC that need to communicate with on premises will use the local gateway BGP session to exchange routes toward on premises.
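As a rough illustration of the service link range requirement, the sketch below uses Python's standard `ipaddress` module to validate the minimum /26 size and split the range in two. The function name `split_service_link_range` is hypothetical, and splitting into two equal halves is our assumption; the text only says the range is split into two contiguous ranges. The address used in the usage note is a documentation range standing in for a real allocation.

```python
import ipaddress


def split_service_link_range(cidr: str):
    """Validate a service link range and split it into two contiguous halves.

    Assumption: the two ranges are equal halves of the supplied block.
    """
    # strict=True rejects input such as 203.0.113.5/26 with host bits set
    network = ipaddress.ip_network(cidr, strict=True)
    if network.version != 4:
        raise ValueError("this sketch only handles IPv4 ranges")
    if network.prefixlen > 26:
        # A /27 or longer prefix is smaller than the minimum /26
        raise ValueError("the service link needs at least a /26")
    # prefixlen_diff=1 yields exactly two subnets, in address order
    first, second = network.subnets(prefixlen_diff=1)
    return first, second
```

Calling `split_service_link_range("203.0.113.0/26")` yields `203.0.113.0/27` and `203.0.113.32/27`, while a /27 input is rejected as too small.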

Figure 7 – BGP sessions between VMware Cloud on AWS Outposts and customer network devices.

The architecture above shows how the NSX software-defined networking interacts with the LGW routing construct. The underlying VPC network provides uplink connectivity for the i3en.metal bare metal instances in the rack. The NSX networking stack provides connectivity for the VMs deployed on the Outposts.

Due to this design, the NSX logical networks created on the Outposts are automatically advertised to the local gateway through the VPC, and the LGW in turn forwards them to the customer network devices through the Outpost network devices.

This is how route exchange is set up to allow on-premises networks to communicate with the networks created within SDDCs of an Outpost. To learn more about configuring local connectivity from Outposts to on-premises networks, check out the documentation.

Connecting Back to the Region

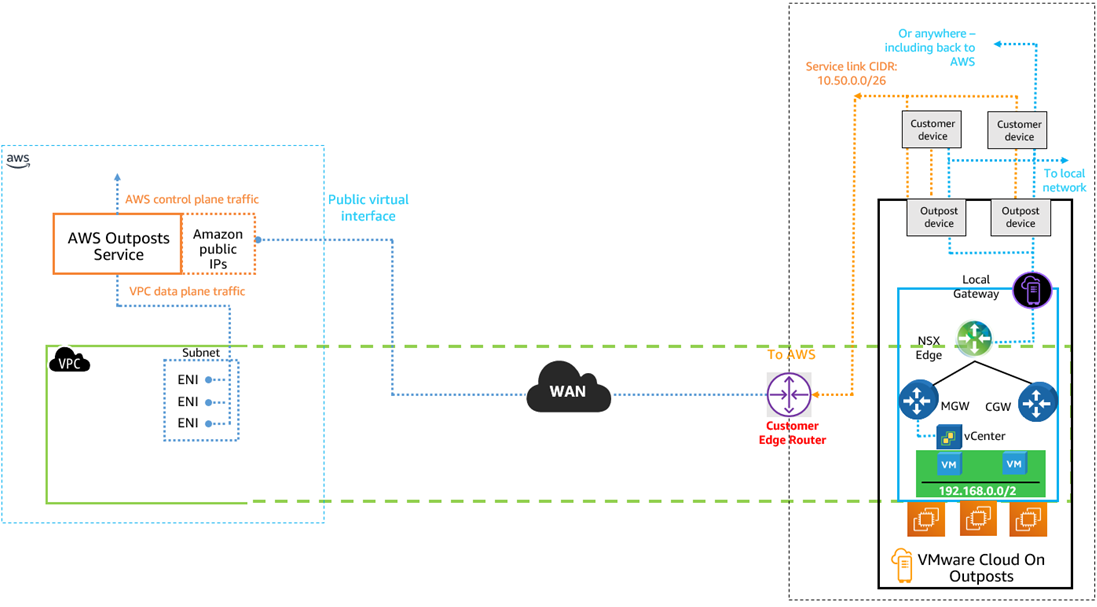

VMware Cloud on AWS Outposts connects to its parent Region through wide area networking (WAN) connectivity. Customers have several options for connecting the service link from the Outposts to the Region: AWS Direct Connect with a public or private virtual interface, or public internet connectivity.

The first is through the use of AWS Direct Connect with a public virtual interface (VIF), and the architecture below explains how we configure WAN connectivity for the service link.

Figure 8 – Service link connectivity using AWS Direct Connect with public VIF.

The architecture above shows service link connectivity to the region through AWS Direct Connect. After a physical Direct Connect circuit is established, customers can establish a public VIF that terminates at the region where the Outposts will be connected.

The public interface is a BGP session that allows AWS to exchange all of its public routes to your on-premises edge device. When using private IP ranges for your service link network range, you’ll have to configure network address translation (NAT) to a public IP to allow the service link to use the configured public virtual interface over AWS Direct Connect to get to the Outposts service in the region.

The NAT can be configured either on your edge device on-premises or the edge device located at the AWS Direct Connect region.
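The NAT decision for the public VIF option can be expressed as a small check. This is a sketch under one stated assumption: "private IP ranges" is interpreted here as the RFC 1918 blocks. The function name `needs_nat_for_public_vif` is hypothetical.

```python
import ipaddress

# Assumption: "private" means the three RFC 1918 blocks.
RFC1918_BLOCKS = (
    ipaddress.ip_network("10.0.0.0/8"),
    ipaddress.ip_network("172.16.0.0/12"),
    ipaddress.ip_network("192.168.0.0/16"),
)


def needs_nat_for_public_vif(service_link_cidr: str) -> bool:
    """Return True when the service link range falls inside RFC 1918 space,

    meaning it would need NAT to a public IP before using a public VIF.
    """
    network = ipaddress.ip_network(service_link_cidr)
    if network.version != 4:
        raise ValueError("this sketch only handles IPv4 ranges")
    return any(network.subnet_of(block) for block in RFC1918_BLOCKS)
```

For example, `needs_nat_for_public_vif("10.20.30.0/26")` returns `True`, while a range outside RFC 1918 space, such as the documentation block `198.51.100.0/26` used here as a stand-in for a public allocation, returns `False`.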

We also have customers who do not want service link traffic to traverse the public internet. If you have that requirement, we can configure the service link using a private VIF over AWS Direct Connect.

Figure 9 – Service connectivity using AWS Direct Connect with private virtual interface.

With the architecture above, service link traffic will not traverse the public internet; rather, it will be sent over a private VIF using on-premises IP ranges. This means no network address translation is required for the service link network ranges if customers choose to use private IPs that don’t have internet access.

For this option, customers must have existing AWS Direct Connect circuits in place before making an order. Additionally, they will have to provision a private virtual interface in their AWS account and share it with the VMware-managed AWS account that is running VMware Cloud on AWS Outposts.

With this prerequisite complete, customers will need to provide a private network range that is not used on premises and does not conflict with any other IP range in the network. Then, customers must provide an autonomous system number (ASN) for the virtual private gateway and ID for the configured Direct Connect private VIF. These details will be entered during the order for VMware Cloud on AWS Outposts to automatically configure the private connectivity.
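Before placing the order, it can help to confirm that the proposed private range does not collide with anything already in use. The following is a minimal sketch using Python's standard `ipaddress` module; the function name `find_conflicts` and the sample ranges in the usage note are hypothetical.

```python
import ipaddress
from typing import Iterable, List


def find_conflicts(candidate_cidr: str, ranges_in_use: Iterable[str]) -> List[str]:
    """Return the in-use ranges that overlap the candidate service link range.

    An empty list means the candidate does not conflict with any listed range.
    """
    candidate = ipaddress.ip_network(candidate_cidr)
    conflicts = []
    for cidr in ranges_in_use:
        # overlaps() is True when either network contains part of the other
        if candidate.overlaps(ipaddress.ip_network(cidr)):
            conflicts.append(cidr)
    return conflicts
```

For instance, checking `10.50.0.0/26` against `["10.0.0.0/16", "10.50.0.0/24", "192.168.1.0/24"]` reports a conflict with `10.50.0.0/24` only, so a different range would need to be chosen.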

Figure 10 – Screenshot from the Outposts connectivity order page.

Finally, customers can also use public internet connectivity from their ISP to connect the service link from the Outposts to the service in the AWS Cloud. The architecture below shows how that is set up.

Figure 11 – Internet connectivity for service link.

High Availability

High availability is a flagship feature in VMware that allows virtual machines to restart on surviving hosts when there is host degradation or other network issues. VMware Cloud on AWS Outposts SDDCs maintain this feature just as it works in VMware in the cloud and on premises.

Besides protection at the virtual machine level, VMware Cloud on AWS Outposts is also designed to be highly available. Power and networking devices in the rack are designed to be resilient.

Additionally, VMware Cloud on AWS Outposts comes with spare capacity for each configuration purchased to complement VMware high availability. Outside the rack, we recommend customers architect service link connectivity in a resilient manner.

When AWS Direct Connect is used, we recommend resilient connections through multiple Direct Connect locations to the Region. To learn more, follow the Direct Connect resiliency recommendations.

Customers can also use multiple logical Outposts onsite that connect to different Availability Zones in the Region, so the service link remains resilient should one AZ become unavailable. When using an internet connection for the service link, ensure you have at least two connections from different ISPs in the service link configuration. The architecture below demonstrates how to set this up.
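The ISP diversity recommendation for internet-based service links can be checked with a few lines of Python. This is an illustrative sketch only: `Uplink` and `has_isp_diversity` are hypothetical names, and matching providers by case-insensitive name is a simplifying assumption.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Uplink:
    """One internet connection used for the service link."""

    name: str
    isp: str


def has_isp_diversity(uplinks: Iterable[Uplink]) -> bool:
    """Return True when the uplinks span at least two different ISPs.

    Two connections from the same provider do not count as diverse.
    """
    providers = {uplink.isp.strip().lower() for uplink in uplinks}
    return len(providers) >= 2
```

Two uplinks from the same provider fail this check, while one uplink each from two different providers passes it.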

Figure 12 – Recommended HA configuration for VMware Cloud on AWS Outposts.

Hybrid Linked Mode (HLM)

A single pane of glass to view and manage on-premises resources powered on VMware, as well as VMware Cloud on AWS Outposts, is provided through vCenter Hybrid Linked Mode (HLM). This allows you to link the VMware Cloud on AWS Outposts vCenter to your on-premises vCenter to provide a hybrid management interface across edge and on-premises resources.

This can also be configured between VMware Cloud on AWS Outposts in a data center and a VMware Cloud on AWS SDDC in the Region. To take advantage of this feature, users need to be running vSphere 6.5 or later.
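The version prerequisite can be checked before attempting to link vCenters. Below is a minimal sketch that compares a dotted version string against the 6.5 minimum stated above; the function name `meets_hlm_minimum` is hypothetical, and only the major and minor components are considered.

```python
def meets_hlm_minimum(version: str, minimum=(6, 5)) -> bool:
    """Return True when a dotted vSphere version is at least the minimum.

    Only major and minor components are compared, so "6.5.0" and "6.5"
    are treated the same. A bare major version such as "7" is read as "7.0".
    """
    parts = [int(piece) for piece in version.strip().split(".")[:2]]
    while len(parts) < 2:
        parts.append(0)  # pad a missing minor component with zero
    return tuple(parts) >= tuple(minimum)
```

Under this sketch, versions such as `6.5` and `7.0.3` pass, while `6.0` does not.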

Figure 13 – HLM between VMware Cloud on AWS Outposts and on premises.

Additional Resources

In this post, we have discussed the foundations for VMware Cloud on AWS Outposts. Below are useful resources to help you when deploying one of these: