AWS for M&E Blog

Managing hybrid video processing workflows with Apache Airflow

September 8, 2021: Amazon Elasticsearch Service has been renamed to Amazon OpenSearch Service. See details.

The content and opinions in this post are those of the third-party author and AWS is not responsible for the content or accuracy of this post. Authored by Riccardo Vescovi, Head of Solution Engineering – Fincons; Donato Fraccalvieri, Solution Architect – Fincons; Gianluca De Giuseppe, Solution Architecture – Fincons; and Zavisa Bjelogrlic, Partner Solution Architect – AWS.

Fincons Group is a multinational IT consulting company operating in various industry segments (Media, Energy and Utilities, Financial Services, Transportation, Manufacturing, and Public Administration). In the Media segment, Fincons has been instrumental in spreading the HbbTV standard in Europe and has actively contributed to the development and promotion of ATSC 3.0 in the United States.

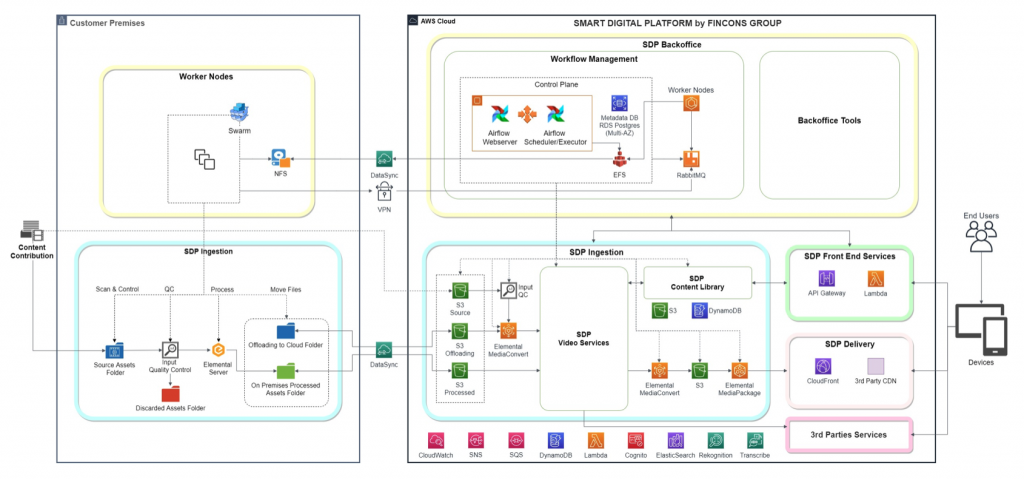

The company has developed and launched the Smart Digital Platform (SDP). SDP manages the overall video workflow, from ingest of original video content to the distribution of interactive Hybrid TV (HbbTV/ATSC 3.0) and Over-the-Top (OTT) applications. With its modular framework in the AWS Cloud, SDP can be launched quickly to support rapid delivery of interactive applications.

Deploying SDP and other Media & Entertainment (M&E) workloads to the cloud frequently requires a hybrid approach. The main reasons are:

- A hybrid approach allows for immediate migration of workloads to the cloud while maximizing return on investment for content and on-premises equipment.

- Customers are looking for a seamless transition to the cloud. A hybrid solution supports gradual migration without service disruptions.

In large operations, the architecture can easily become more complicated, with workflows spanning multiple nodes: different company datacenters and multiple cloud regions. “Hybrid” evolves into “Distributed” here. To manage such workloads efficiently, we ideally need a single Workflow Management System (WMS) that allows easy reconfiguration of the processing steps executed across nodes to make the best use of available resources. A single WMS also simplifies monitoring and control of the process from one interface, and makes it easier to build an orchestration layer that optimizes processes for the fastest turnaround time, the lowest cost of operations, or the highest availability.

Solution Architecture

Fincons SDP embeds Apache Airflow as its WMS engine. Apache Airflow is an open source framework that is well suited for authoring, scheduling, and monitoring distributed workflows. It is supported by a large and active community, and many reference implementations are available. SDP handles the full end-to-end workflow: content acquisition, initial quality control (QC), transfer to the cloud, and video processing (transcoding to mezzanine formats) using a combination of AWS Elemental on-premises appliances and AWS Media Services in the cloud. Video is further analyzed using Amazon Rekognition and Amazon Transcribe. External services such as IMDb can also be used to enrich content. Typical interactive applications include celebrity and scene recognition, linking to related content, and preparation for interactive advertising.

Enriched content is saved in the SDP Content Library, which uses Amazon S3 to store video and transcribed text. Amazon DynamoDB stores the metadata that supports interactivity (time, personalities detected, scenes identified, etc.). Amazon Elasticsearch Service is used to search text files. Interactivity is supported through Amazon API Gateway and AWS Lambda functions. This approach can easily scale to support the growth of an interactive application from initial testing up to the large audience sizes possible during primetime or promotions.
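As an illustration of the interactivity path, the following is a minimal sketch of a Lambda handler behind an API Gateway proxy integration that returns the metadata segment for a playback position. The query parameters (`contentId`, `t`), the segment schema, and the in-memory `METADATA` table (standing in for a DynamoDB query) are all assumptions made for this example, not the SDP data model.

```python
import json

# Hypothetical stand-in for the DynamoDB metadata table.
# In a real deployment this lookup would be a DynamoDB query.
METADATA = {
    "episode-001": [
        {"start": 0.0, "end": 42.5, "personalities": ["Person A"], "scene": "studio"},
        {"start": 42.5, "end": 90.0, "personalities": ["Person B"], "scene": "street"},
    ]
}


def _response(status, payload):
    """Build a response in the shape expected by an API Gateway proxy integration."""
    return {
        "statusCode": status,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps(payload),
    }


def handler(event, context):
    """Return the metadata segment covering playback position t (in seconds)."""
    # queryStringParameters is None when the request has no query string.
    params = event.get("queryStringParameters") or {}
    content_id = params.get("contentId")
    try:
        position = float(params.get("t", ""))
    except ValueError:
        return _response(400, {"error": "t must be a number of seconds"})

    segments = METADATA.get(content_id)
    if segments is None:
        return _response(404, {"error": "unknown contentId"})

    for segment in segments:
        if segment["start"] <= position < segment["end"]:
            return _response(200, segment)
    return _response(404, {"error": "no metadata at this position"})
```

Because the handler is stateless, scaling from a handful of beta testers to a primetime audience depends on the concurrency of the managed services rather than on the code itself.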

The final element in the chain is dedicated to the distribution of interactive content. Content is converted to distribution formats using AWS Elemental MediaConvert, stored in Amazon S3, and packaged just in time by AWS Elemental MediaPackage. Video is delivered via Amazon CloudFront or a third-party CDN.

The Smart Digital Platform comprises four subsystems:

- SDP Ingestion controls all the processes associated with ingesting and validating contributions and enriching content for interactive applications. The process can be distributed between on-premises and cloud. It is configured to handle nominal and exceptional peak loads.

- SDP Front Services provides the API integrations for interactive applications using content metadata and additional information extracted during the analysis and enrichment process. Enriched content and metadata are stored in the SDP Content Library.

- SDP Delivery performs just-in-time packaging and delivers video content to interactive clients using CloudFront or a third-party CDN.

- SDP Backoffice manages the workflow using Apache Airflow. It provides additional Backoffice Tools to configure and publish interactive content using templates.

Workflows

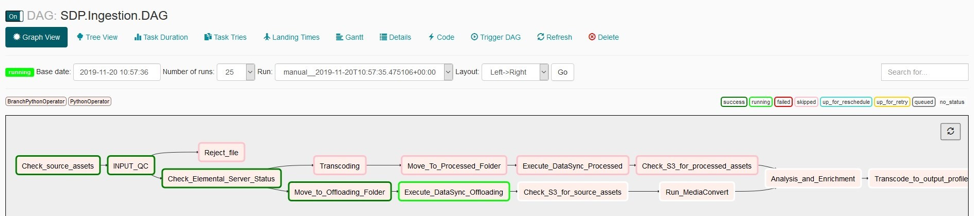

Here we have two versions of the workflow: (1) The end-to-end processing chain described above, and (2) a variant of the same workflow with initial transcoding offloaded to the cloud to manage the peak loads.

In Apache Airflow, workflows are represented as Directed Acyclic Graphs (DAGs). The figure below shows the DAG defining workflows (1) and (2). The web interface also shows the progress of the workflow and the color-coded status of each task.
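To make the DAG idea concrete without depending on an Airflow installation, here is a small sketch that models the two workflow variants as dependency graphs and derives a valid execution order with Python's standard `graphlib` module. The task names and the exact ordering of steps are assumptions based on the description in this post; in Airflow, each entry would be a task in a DAG definition.

```python
from graphlib import TopologicalSorter

# Hypothetical task names. Each key maps a task to the set of tasks
# that must finish before it can start.
WORKFLOW_1 = {
    "quality_control": {"acquire_content"},
    "transcode_on_premises": {"quality_control"},
    "transfer_to_cloud": {"transcode_on_premises"},
    "analyze_video": {"transfer_to_cloud"},
    "transcribe_audio": {"transfer_to_cloud"},
    "enrich_metadata": {"analyze_video", "transcribe_audio"},
    "store_in_content_library": {"enrich_metadata"},
}

# Variant (2): the content is transferred first and transcoded in the cloud.
WORKFLOW_2 = {
    "quality_control": {"acquire_content"},
    "transfer_to_cloud": {"quality_control"},
    "transcode_in_cloud": {"transfer_to_cloud"},
    "analyze_video": {"transcode_in_cloud"},
    "transcribe_audio": {"transcode_in_cloud"},
    "enrich_metadata": {"analyze_video", "transcribe_audio"},
    "store_in_content_library": {"enrich_metadata"},
}


def execution_order(dependencies):
    """Return one valid execution order.

    graphlib raises CycleError if the graph contains a cycle,
    which is the property that makes a workflow graph a DAG.
    """
    return list(TopologicalSorter(dependencies).static_order())


if __name__ == "__main__":
    for name, graph in (("workflow 1", WORKFLOW_1), ("workflow 2", WORKFLOW_2)):
        print(name, "->", execution_order(graph))
```

The two analysis tasks have no dependency on each other, so a scheduler is free to run them in parallel on different workers.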

Apache Airflow Implementation

Apache Airflow serves as the primary component of SDP Backoffice. It is composed of the following functions:

- Webserver provides user interface and shows the status of jobs

- Scheduler controls the scheduling of jobs, and Executor dispatches the tasks for execution

- Metadata Database stores workflow status

- Pool of workers listens to task queues and executes the tasks

Apache Airflow is well suited for a hybrid configuration. While the Control Plane (Scheduler/Executor, Metadata Database, and Webserver) runs on AWS, the Workers run in Docker containers either on AWS or in on-site datacenters. The solution is designed to process an average of 50 pieces of content per hour, with peaks of up to 150 per hour. This is a relatively low load; therefore, the simplest highly available configuration has been used. AWS resources can easily be scaled up by using more powerful instances for the Scheduler/Executor and the Metadata Database. We can also increase the number of workers by scaling out the cluster accordingly.
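The load figures above translate into a worker count only once a processing time per item is known. The sketch below does that arithmetic; the 6 minutes of worker time per item and the 4 task slots per worker are purely illustrative assumptions, not measured SDP values.

```python
import math


def workers_needed(items_per_hour, minutes_per_item, slots_per_worker=1):
    """Estimate how many workers keep up with a steady arrival rate.

    minutes_per_item and slots_per_worker are assumptions to be replaced
    with measured values for a real deployment.
    """
    busy_minutes_per_hour = items_per_hour * minutes_per_item
    # Each task slot offers 60 minutes of processing time per hour.
    slots = math.ceil(busy_minutes_per_hour / 60)
    return max(1, math.ceil(slots / slots_per_worker))


if __name__ == "__main__":
    for label, rate in (("average", 50), ("peak", 150)):
        count = workers_needed(rate, minutes_per_item=6, slots_per_worker=4)
        print(f"{label}: {rate} items/hour -> {count} workers")
```

Under these assumptions the peak needs twice the workers of the average load, which is the gap that scaling out the worker cluster is meant to cover.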

Apache Airflow’s Celery Executor uses RabbitMQ as the message broker for communication between the Executor and the workers. We chose RabbitMQ because it was the simplest and most reliable option for our distributed workloads. The Scheduler also needs to share DAGs with its workers. On AWS, DAGs are written to an Amazon Elastic File System (EFS) file system mounted by all workers. For remote workers, a mirror copy is kept in the on-premises DAG folder and synchronized using AWS DataSync.
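Airflow reads configuration from environment variables named `AIRFLOW__{SECTION}__{KEY}`, which is convenient for containerized workers. The sketch below builds such an environment for a cloud worker and for an on-premises worker that differ only in their DAG folder. Host names, credentials, and paths are hypothetical placeholders, and a real deployment would inject secrets from a secrets store rather than hardcode them.

```python
def celery_worker_env(broker_host, db_host, dags_folder,
                      user="airflow", password="change-me"):
    """Build Airflow environment variables for a Celery worker.

    All argument values are placeholders for illustration only.
    """
    return {
        # Use the Celery executor so tasks are sent to remote workers.
        "AIRFLOW__CORE__EXECUTOR": "CeleryExecutor",
        # Folder where this worker finds the shared DAG files.
        "AIRFLOW__CORE__DAGS_FOLDER": dags_folder,
        # RabbitMQ broker URL (AMQP, default virtual host).
        "AIRFLOW__CELERY__BROKER_URL":
            f"amqp://{user}:{password}@{broker_host}:5672//",
        # Task results are stored in the PostgreSQL database.
        "AIRFLOW__CELERY__RESULT_BACKEND":
            f"db+postgresql://{user}:{password}@{db_host}:5432/airflow",
    }


# Cloud workers read DAGs from the EFS mount; on-premises workers read
# the mirrored copy. Both paths are assumptions.
cloud_env = celery_worker_env(
    "rabbitmq.example.internal", "airflow-db.example.internal", "/mnt/efs/dags")
onprem_env = celery_worker_env(
    "rabbitmq.example.internal", "airflow-db.example.internal", "/opt/airflow/dags")
```

Keeping the broker and result backend identical for both environments is what lets the Executor treat cloud and on-premises workers as one pool.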

High availability is a critical aspect of the Airflow Control Plane. A failure in this subsystem may jeopardize all workflow executions. To ensure proper availability, we provision the Metadata Database on Amazon RDS for PostgreSQL in a Multi-AZ (multiple Availability Zone) configuration. The Scheduler/Executor and Webserver are configured to run in two AZs, using autoscaling to ensure an instance of each component is always running. Workers run as Docker containers. On AWS, they run on Amazon Elastic Container Service (ECS) on an Amazon EC2 cluster, with scaling policies based on custom Amazon CloudWatch alarms. This ensures high availability and scaling out and in between peak and normal workloads. On premises, Docker Swarm is used to provision the containers for high availability.
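A custom CloudWatch alarm needs a custom metric to watch. As a sketch of what that might look like, the function below builds the arguments for a CloudWatch `put_metric_data` call that reports the task backlog per worker. The namespace, metric name, and dimension are hypothetical choices for this example, and how the queue depth is obtained is left out.

```python
def backlog_metric(queued_tasks, running_workers, cluster_name):
    """Build put_metric_data arguments for a backlog-per-worker metric.

    Namespace, MetricName, and the ClusterName dimension are illustrative
    names, not values used by SDP.
    """
    # Avoid division by zero when no worker is running yet.
    per_worker = queued_tasks / max(running_workers, 1)
    return {
        "Namespace": "SDP/Airflow",
        "MetricData": [
            {
                "MetricName": "QueuedTasksPerWorker",
                "Dimensions": [{"Name": "ClusterName", "Value": cluster_name}],
                "Value": per_worker,
                "Unit": "Count",
            }
        ],
    }


# With boto3 installed and credentials configured, the payload could be sent as:
#   import boto3
#   boto3.client("cloudwatch").put_metric_data(**backlog_metric(30, 5, "workers"))
```

An alarm on this metric can then drive a scale-out policy when the backlog per worker stays above a chosen threshold, and a scale-in policy when it drops back.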

DataSync handles video transfer between the on-premises infrastructure and the AWS Cloud. It accelerates data transfer and protects the content in transit. Providers upload original contributions to the on-premises input watch folder using the SFTP protocol. Direct uploading to an S3 bucket is also possible for some providers.

Next, the processing workflow applies Rekognition and Transcribe and links the content to external sources and metadata services like IMDb. The details of the enrichment and analysis architecture depend on the type of processing (celebrity and scene recognition, enrichment of third-party content, preparation for interactive advertising, etc.).
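For celebrity recognition on stored video, Amazon Rekognition returns a list of detections, each with a timestamp in milliseconds and a `Celebrity` record containing a name and a confidence score. One plausible post-processing step, sketched below, merges those detections into time segments that an interactive application can query. The confidence threshold, the maximum gap, and the output tuple format are assumptions, and the sample response is hand-written rather than real service output.

```python
def celebrity_segments(response, min_confidence=90.0, max_gap_ms=1000):
    """Merge per-timestamp detections into (name, start_ms, end_ms) segments.

    Detections below min_confidence are dropped; detections of the same
    person separated by more than max_gap_ms start a new segment.
    """
    stamps_by_name = {}
    for item in response.get("Celebrities", []):
        celebrity = item["Celebrity"]
        if celebrity.get("Confidence", 0.0) >= min_confidence:
            stamps_by_name.setdefault(celebrity["Name"], []).append(item["Timestamp"])

    segments = []
    for name, stamps in stamps_by_name.items():
        stamps.sort()
        start = previous = stamps[0]
        for stamp in stamps[1:]:
            if stamp - previous > max_gap_ms:
                segments.append((name, start, previous))
                start = stamp
            previous = stamp
        segments.append((name, start, previous))
    # Order by start time, then by name, for stable output.
    return sorted(segments, key=lambda segment: (segment[1], segment[0]))


# Hand-written sample in the shape of a celebrity recognition result.
SAMPLE = {
    "Celebrities": [
        {"Timestamp": 0, "Celebrity": {"Name": "Person A", "Confidence": 99.0}},
        {"Timestamp": 500, "Celebrity": {"Name": "Person A", "Confidence": 98.0}},
        {"Timestamp": 500, "Celebrity": {"Name": "Person B", "Confidence": 50.0}},
        {"Timestamp": 1000, "Celebrity": {"Name": "Person A", "Confidence": 97.0}},
        {"Timestamp": 5000, "Celebrity": {"Name": "Person A", "Confidence": 95.0}},
    ]
}
```

Segments like these map naturally onto the time-based metadata stored in the Content Library.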

Fincons Smart Digital Platform

Fincons SDP supports interactivity in an elastic way, from small groups of beta testers in initial phases of an interactive application, up to operations in primetime situations when a large number of viewers need to be supported. The platform manages the hybrid video processing chain for interactive content creation using Apache Airflow, simplifying workflow control, provisioning, and monitoring between on-premises appliances and AWS cloud resources.

To learn more about tailoring SDP to fit your specific workflow, contact Fincons at sdp.info@finconsgroup.com.