AWS Big Data Blog

The art and science of data product portfolio management

This post is the first in a series dedicated to the art and science of practical data mesh implementation (for an overview of data mesh, read the original whitepaper The data mesh shift). The series attempts to bridge the gap between the tenets of data mesh and its real-life implementation by deep-diving into the functional […]

How Ontraport reduced data processing cost by 80% with AWS Glue

This post is written in collaboration with Elijah Ball from Ontraport. Customers are implementing data and analytics workloads in the AWS Cloud to optimize cost. When implementing data processing workloads in AWS, you have the option to use technologies like Amazon EMR or serverless technologies like AWS Glue. Both options minimize the undifferentiated heavy lifting […]

Introducing Apache Airflow version 2.6.3 support on Amazon MWAA

Amazon Managed Workflows for Apache Airflow (Amazon MWAA) is a managed orchestration service for Apache Airflow that makes it simple to set up and operate end-to-end data pipelines in the cloud. Trusted across various industries, Amazon MWAA helps organizations like Siemens, ENGIE, and Choice Hotels International enhance and scale their business workflows, while significantly improving security […]

Perform Amazon Kinesis load testing with Locust

February 9, 2024: Amazon Kinesis Data Firehose has been renamed to Amazon Data Firehose. Read the AWS What’s New post to learn more. Building a streaming data solution requires thorough testing at the scale it will operate in a production environment. Streaming applications operating at scale often handle large volumes of up to GBs per […]

Monitor data pipelines in a serverless data lake

AWS serverless services, including but not limited to AWS Lambda, AWS Glue, AWS Fargate, Amazon EventBridge, Amazon Athena, Amazon Simple Notification Service (Amazon SNS), Amazon Simple Queue Service (Amazon SQS), and Amazon Simple Storage Service (Amazon S3), have become the building blocks for any serverless data lake, providing key mechanisms to ingest and transform data […]

Configure SAML federation for Amazon OpenSearch Serverless with Okta

Modern applications apply security controls across many systems and their subsystems. Keeping all of these systems in sync would be a major undertaking if you tried to implement those controls separately in each one. Centralized identity management lets you maintain a single identity provider (IdP) that can authenticate actors and manage and distribute their rights. OpenSearch is […]

Perform time series forecasting using Amazon Redshift ML and Amazon Forecast

Amazon Redshift is a fully managed, petabyte-scale data warehouse service in the cloud. Tens of thousands of customers use Amazon Redshift to process exabytes of data every day to power their analytics workloads. Many businesses use different software tools to analyze historical data and past patterns to forecast future demand and trends to make more […]

Configure cross-Region table access with the AWS Glue Catalog and AWS Lake Formation

Modern data lakes span multiple accounts, AWS Regions, and lines of business in organizations. Companies also have employees and do business across multiple geographic regions and even around the world. It’s important that their data solution gives them the ability to share and access data securely across Regions. The AWS Glue Data […]

Create an Apache Hudi-based near-real-time transactional data lake using AWS DMS, Amazon Kinesis, AWS Glue streaming ETL, and data visualization using Amazon QuickSight

We recently announced support for streaming extract, transform, and load (ETL) jobs in AWS Glue version 4.0, a new version of AWS Glue that accelerates data integration workloads in AWS. AWS Glue streaming ETL jobs continuously consume data from streaming sources, clean and transform the data in-flight, and make it available for analysis in seconds. AWS also offers a broad selection of services to support your needs. A database replication service such as AWS Database Migration Service (AWS DMS) can replicate the data from your source systems to Amazon Simple Storage Service (Amazon S3), which commonly hosts the storage layer of the data lake. This post demonstrates how to apply change data capture (CDC) records from Amazon Relational Database Service (Amazon RDS) or other relational databases to an S3 data lake, with flexibility to denormalize, transform, and enrich the data in near-real time.

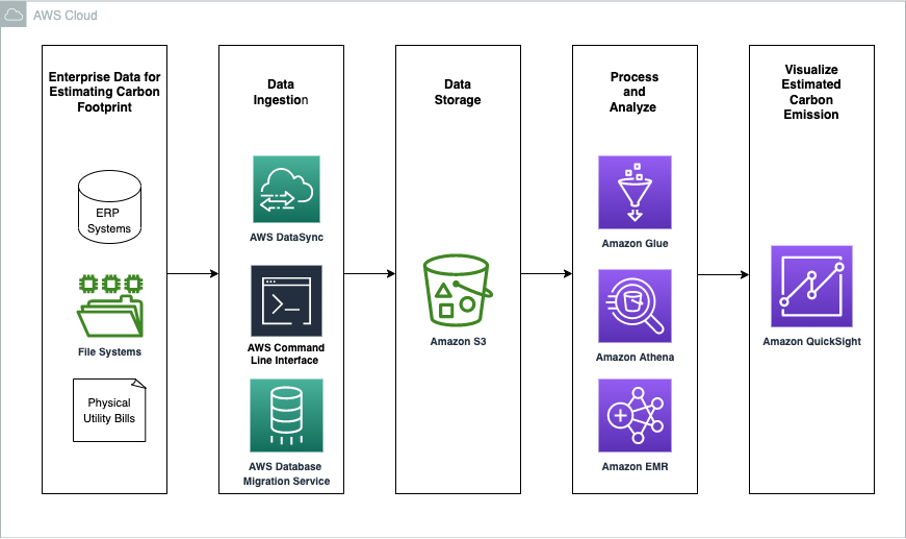

Estimating Scope 1 Carbon Footprint with Amazon Athena

Today, more than 400 organizations have signed The Climate Pledge, a commitment to reach net-zero carbon by 2040. Some of the drivers that lead to setting explicit climate goals include customer demand, current and anticipated government relations, employee demand, investor demand, and sustainability as a competitive advantage. AWS customers are increasingly interested in ways to […]