AWS for Industries

How GumGum provides sub-10 ms contextual data for real-time digital ad bidding with AWS Outposts

Overview

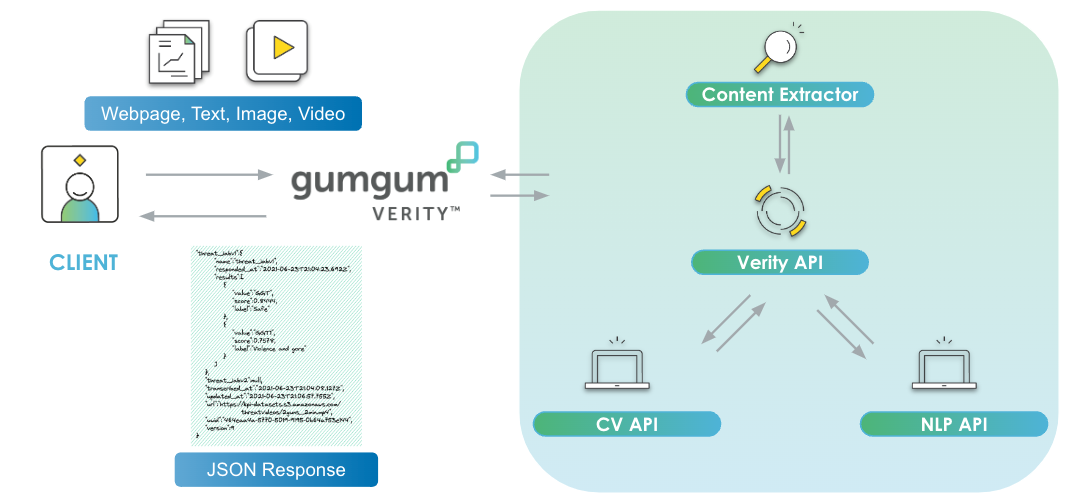

GumGum is a global digital advertising platform that specializes in contextual marketing, helping advertisers place ads based on the content of digital media. GumGum offers Verity, which uses in-house computer vision (CV) and natural-language processing (NLP) models to scan images, audio, text, and videos across webpages, social media, over-the-top (OTT) video, and connected TV (CTV), providing contextual, sentiment, and brand-safety analysis. GumGum additionally uses various Amazon Web Services (AWS) solutions, including Amazon Rekognition, which offers pretrained and customizable CV capabilities to extract information and insights from images and videos, and Amazon Transcribe, which automatically converts speech to text. Verity helps brands connect with customers in a nonintrusive way. This cookieless solution responds in under 10 ms and does not target, profile, or retain content consumer data. Advertisers use Verity to implement contextual targeting, and demand-side platforms (DSPs) use it as a data provider.

In this blog post, we discuss how GumGum used AWS Outposts, a family of fully managed solutions delivering AWS infrastructure and services, in New York and Los Angeles to integrate with major DSPs that require sub-10 ms response times. We present a reference architecture, considerations for implementing the solution, and the results of the integration.

Why is sub-10 ms contextual analysis necessary?

When a consumer loads ad-supported content, like a blog or video, in their browser, the website’s ad server forwards a request to an ad exchange or supply-side platform (SSP) that holds a real-time auction for the publisher. The ad exchange or SSP sends bid requests to DSPs, which represent advertisers and their ad campaigns. Advertisers specify ad targeting criteria, and the DSP is responsible for evaluating each bid request and placing a bid on behalf of the advertiser. The SSP chooses a winning bid from partner DSPs and serves an ad to the consumer. So that advertisements load synchronously with content, the entire ad delivery process must happen in under 300 ms. An advertiser might specify ad targeting criteria that are provided by third-party data services. Within that budget, third-party data services may be allotted as little as 10 ms to respond to bid requests.

AWS publishes more guidance on contextual intelligence for advertising on AWS.

AWS Outposts integration reference architecture

The Verity service is fairly complex under the hood, but from the viewpoint of an incoming request, the relevant processing steps are straightforward. Verity receives a request from a DSP containing a page URL as a query parameter and attempts to look up a classification result in a table within Amazon DynamoDB—a fully managed, serverless, key-value NoSQL database—using the page URL as a partition key. If the page URL is already processed, it will return the classification. If not, it will respond by indicating that no classification is available and queue the request for processing with the classification service.
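The lookup path described above can be sketched in a few lines of Python. This is a minimal illustration, not GumGum's implementation: the `pageUrl` parameter name, the request path, the response shape, and the in-memory `TABLE` and `PENDING` objects (standing in for the Amazon DynamoDB table and the classification queue) are all assumptions made for the example.

```python
from urllib.parse import parse_qs, urlsplit

# Hypothetical stand-ins: a dict for the DynamoDB table (keyed by page URL)
# and a list for the queue that feeds the classification service.
TABLE = {"https://example.com/article": {"categories": ["sports"]}}
PENDING = []

def handle(request_target):
    """Look up the page URL from the query string; queue it if unclassified."""
    query = parse_qs(urlsplit(request_target).query)
    page_url = query["pageUrl"][0]       # "pageUrl" is an assumed parameter name
    item = TABLE.get(page_url)           # key lookup, page URL as partition key
    if item is None:
        PENDING.append(page_url)         # classify later, answer now
        return {"status": "unavailable"}
    return {"status": "ok", "classification": item}

known = handle("/classify?pageUrl=https%3A%2F%2Fexample.com%2Farticle")
unknown = handle("/classify?pageUrl=https%3A%2F%2Fexample.com%2Fnew")
print(known, unknown, PENDING)
```

A request for a page that has not been seen returns immediately without a classification, and the URL is queued so that a later request for the same page can be answered from the table.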

GumGum partners with DSPs in Los Angeles and New York, which have a very strict requirement of sub-10 ms latency in responding to a request. When conducting an integration experiment with those DSPs, GumGum found that the latency numbers were well outside of the required target of single-digit milliseconds. GumGum attempted to fix the problem by addressing bottlenecks in the system, optimizing Amazon DynamoDB tables, and using bespoke Java virtual machines (JVMs) to smooth garbage-collection times, but none of these changes delivered single-digit millisecond latency. Upon further analysis, GumGum realized that the crux of the problem was the physical distance between the DSP client and the Verity server.
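A rough calculation shows why distance alone can consume the budget. Light in optical fiber travels at roughly two-thirds of its speed in a vacuum, about 200 km per millisecond, so propagation delay sets a floor on round-trip time that no software tuning can remove. The distances below are illustrative and are not GumGum's actual network paths.

```python
# Approximate speed of light in optical fiber: ~200,000 km/s = 200 km/ms.
FIBER_KM_PER_MS = 200.0

def min_round_trip_ms(distance_km):
    """Lower bound on round-trip time from propagation delay alone."""
    return 2 * distance_km / FIBER_KM_PER_MS

# Illustrative one-way distances (assumptions, not measured paths).
for km in (50, 500, 1000, 4000):
    print(f"{km:>5} km -> at least {min_round_trip_ms(km):.1f} ms round trip")
```

At 1,000 km the propagation floor alone is already 10 ms, before any routing, TLS, load balancing, or application time is added. Real fiber routes are also longer than straight-line distances, so measured latency is higher still.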

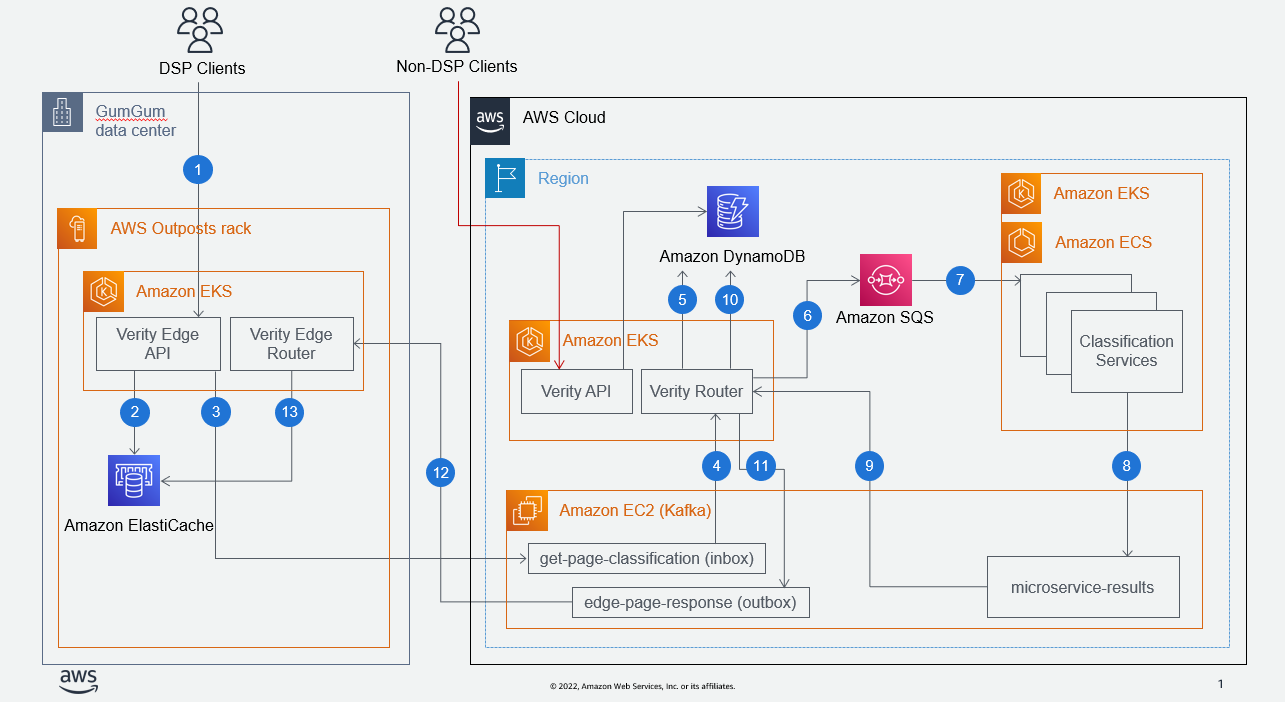

To solve the problem, GumGum decided to deploy a Verity edge service on AWS Outposts rack, a fully managed service that extends AWS infrastructure, services, APIs, and tools on premises, located geographically closer to the DSP client. In GumGum’s Verity reference architecture using AWS Outposts rack, DSPs call the Verity edge API instead of the regional API. Its components and Kafka topics are as follows:

- Verity edge router is a Java Kafka consumer app running at the edge on nodes in Amazon Elastic Kubernetes Service (Amazon EKS), a managed Kubernetes service to run Kubernetes in the AWS Cloud, on AWS Outposts rack.

- Classification consumer is an AWS regional consumer microservice that consumes from many topics in Kafka, including the topics produced by services running on AWS Outposts rack.

- Get-page classification handler is a method and the main routine implemented by the classification consumer.

- Verity core is made of the RESTful API and its router (a Kafka consumer and producer) that acts as a coordinator; it has all the business logic and knows which topic it needs to send data to and in what order.

- Classification services are a set of multiple microservices running on Amazon Elastic Container Service (Amazon ECS), a fully managed container orchestration service; Amazon EKS; or a third party all acting as black boxes.

- Get-page classification is the input topic used to request classification data from the mothership running in the AWS Region; AWS Outposts serves as the producer in this scenario.

- Edge-classification response is the topic consumed by AWS Outposts. It contains classification responses pulled from the regional Amazon DynamoDB table, which is also the central table that stores about 15 TB of classification data.

Workflow

1. The DSP client calls the Verity edge API on AWS Outposts using an HTTP request that contains the page URL as a query parameter.

2. The Verity edge API checks whether a cached response exists in Amazon ElastiCache for Redis, a fast in-memory data store that provides submillisecond latency. If the response is in the cache, the API returns it to the DSP with an HTTP OK status. If not, the Verity edge API returns a 404 error to the DSP and continues to Step 3.

3. The Verity edge API posts a message to the get-page classification Kafka topic in the AWS Region.

4. The Verity router ingests messages from the get-page classification topic.

5. The Verity router checks whether the page has already been classified by looking up the page URL in an Amazon DynamoDB table. If the classification is available in Amazon DynamoDB, the router sends the classification metadata to the edge-classification response topic.

6. When the classification is not available in Amazon DynamoDB, the Verity router initiates a classification by sending a message to a queue in Amazon Simple Queue Service (Amazon SQS), a fully managed message queuing service for microservices.

7. On the backend, the system, which consists of multiple microservices, consumes messages from Amazon SQS and other internal topics. These microservices are implemented on a variety of platforms, including Amazon EKS, Amazon ECS, and Databricks.

8. The microservices publish their processing statuses and classification results back into Kafka topics.

9. The Verity router consumes from multiple topics to ingest the responses from the classification microservices.

10. The Verity router stores the microservice results in Amazon DynamoDB and determines when the classification request is ready for client delivery.

11. When the classification result is ready, a message containing the result is sent to the edge-classification response topic.

12. The Verity edge router consumes results from the regional Kafka topic.

13. The edge cache is updated with the result.
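The key property of this workflow is that the edge API never waits on the AWS Region: it answers from the local cache or returns a miss, and the cache is filled asynchronously. The following sketch simulates that pattern with in-memory stand-ins. The dictionaries and deques are hypothetical substitutes for Amazon ElastiCache for Redis, Amazon DynamoDB, the Kafka topics, and Amazon SQS, and the function names are invented for illustration.

```python
from collections import deque

edge_cache = {}                 # stands in for ElastiCache for Redis on the Outpost
regional_table = {"https://example.com/a": {"categories": ["sports"]}}  # DynamoDB
get_page_topic = deque()        # get-page classification topic
edge_response_topic = deque()   # edge-classification response topic
classification_queue = deque()  # SQS queue feeding the classification services

def edge_api(page_url):
    """Answer from the edge cache only; on a miss, request the data and return 404."""
    hit = edge_cache.get(page_url)
    if hit is not None:
        return 200, hit
    get_page_topic.append(page_url)
    return 404, None

def verity_router():
    """In the Region: resolve requests from the table, or queue a classification."""
    while get_page_topic:
        url = get_page_topic.popleft()
        item = regional_table.get(url)
        if item is not None:
            edge_response_topic.append((url, item))
        else:
            classification_queue.append(url)

def edge_router():
    """On the Outpost: copy regional responses into the edge cache."""
    while edge_response_topic:
        url, item = edge_response_topic.popleft()
        edge_cache[url] = item

first = edge_api("https://example.com/a")    # cache miss
verity_router()
edge_router()
second = edge_api("https://example.com/a")   # cache hit
print(first, second)
```

In this simulation the first request for a page is a miss and the second is a hit, which mirrors the trade-off in the design: the first bid request for an unseen page goes unanswered so that every response stays within the latency budget.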

Considerations

Setting up AWS Outposts was new to GumGum. This section describes a few things to keep in mind about AWS Outposts, based on our experience.

Networking

Our initial assumption was that we could install AWS Outposts in a data center, plug it into the data center’s network, and be up and running. However, we learned along the way that this was not the case. The AWS Outposts customer (in this case, GumGum) is responsible for supplying its own networking equipment, including routers, firewalls, and monitoring equipment. Because GumGum’s core competency is in ad tech and not networking, we employed the services of another company, STN, to procure and install this hardware in a separate stand-alone rack adjacent to the AWS Outposts.

Slotting

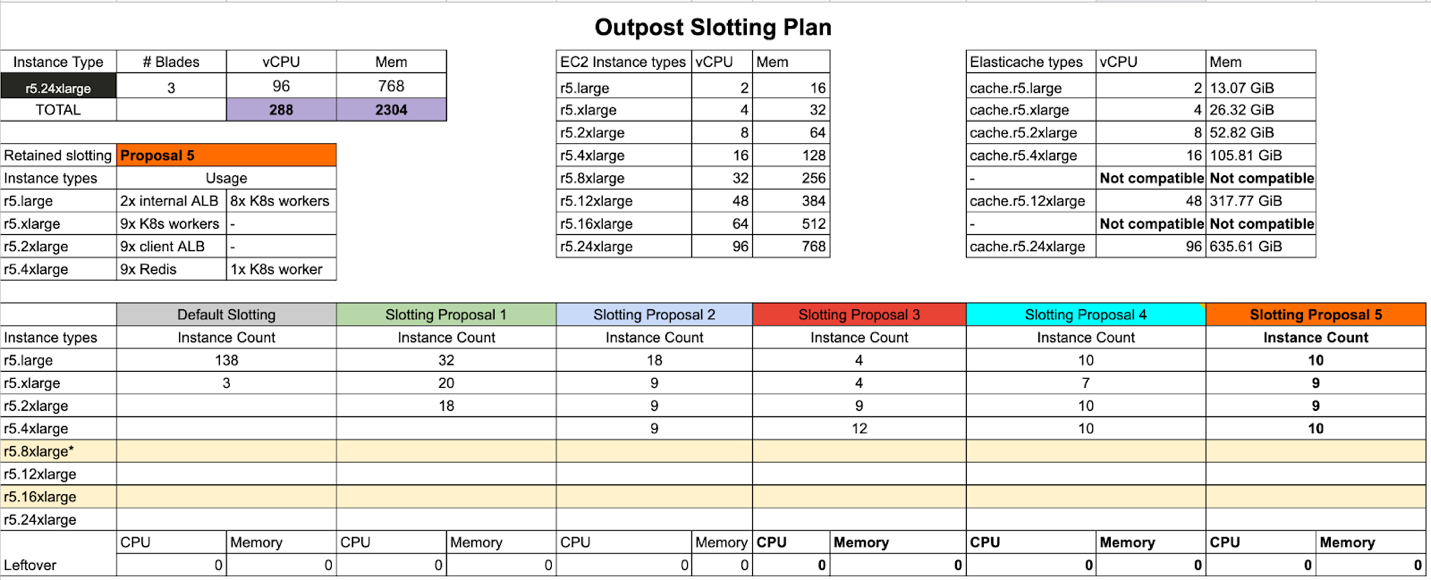

When you purchase AWS Outposts, you usually get beefy machines in the range of 12xlarge or 24xlarge. The GumGum team purchased three r5.24xlarge racks to accommodate the load required by DSP clients.

GumGum needed to run only three virtual machines. The AWS Nitro System, which is the underlying platform for AWS next-generation Amazon EC2 instances running in the AWS Global Infrastructure, provided the required virtual machines on AWS Outposts. The AWS Nitro System can be configured to see a rack as a set of smaller machines.

This principle is called slotting. Nitro is given an initial slotting configuration that might not fit your needs for your AWS Outposts use case. For example, ours came out with 138 r5.large and 3 r5.xlarge, which wasn’t ideal for our application needs, given that we had predicted using a large Amazon ElastiCache for Redis cluster (1 TB of RAM).

You can ask AWS to reslot your AWS Outposts with a simple support ticket, but we recommend doing this before you start any Amazon EC2 workloads. It’s also worth spending the time to put your resources into a spreadsheet and run some simulations.
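The spreadsheet exercise can also be done in a short script. The sketch below uses the published r5 instance sizes to total up a slotting layout and to estimate how many nodes of a given size a 1 TB cache would need. The `candidate` layout is hypothetical, and the calculation treats instance RAM as cache capacity, which overstates what a cache node actually exposes.

```python
# Published Amazon EC2 r5 sizes: instance type -> (vCPUs, memory in GiB).
R5 = {
    "r5.large": (2, 16),
    "r5.xlarge": (4, 32),
    "r5.2xlarge": (8, 64),
    "r5.4xlarge": (16, 128),
    "r5.24xlarge": (96, 768),
}

def totals(slots):
    """Sum vCPUs and memory (GiB) for a {instance_type: count} layout."""
    vcpus = sum(R5[t][0] * n for t, n in slots.items())
    memory = sum(R5[t][1] * n for t, n in slots.items())
    return vcpus, memory

def fits(slots, hosts):
    """True when the layout needs no more vCPUs or memory than the hosts offer."""
    need, have = totals(slots), totals(hosts)
    return need[0] <= have[0] and need[1] <= have[1]

def nodes_needed(memory_gib, instance_type):
    """Fewest nodes of one type whose combined RAM reaches memory_gib."""
    per_node = R5[instance_type][1]
    return -(-memory_gib // per_node)  # ceiling division

hosts = {"r5.24xlarge": 3}
initial = {"r5.large": 138, "r5.xlarge": 3}                     # layout from the text
candidate = {"r5.large": 44, "r5.2xlarge": 9, "r5.4xlarge": 8}  # hypothetical reslot

print(totals(hosts), totals(initial), totals(candidate))
print(nodes_needed(1024, "r5.xlarge"), nodes_needed(1024, "r5.4xlarge"))
print(fits(candidate, hosts))
```

Under these assumptions, the initial layout accounts for all 288 vCPUs of the three hosts, but a 1 TB cache would need 32 nodes of the largest slot it offers (r5.xlarge), of which only three exist. A real layout must also pack into each physical host, which this sketch does not check.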

Load balancer scaling

One of the benefits of using AWS Outposts rack is having the ability to use the Application Load Balancer service, which is ideal for advanced load balancing of HTTP and HTTPS traffic and provides the same features as those run with an AWS Region. This is convenient when applying blue/green deployments using weighted target group load balancing.

We were already aware that an Application Load Balancer running in AWS Regions autoscales to accommodate increasing traffic going through this piece of infrastructure.
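As an illustration of weighted target group load balancing for a blue/green deployment, the sketch below builds a forward action that splits traffic between two target groups. The dictionary follows the shape of the `DefaultActions` parameter of the Elastic Load Balancing `ModifyListener` API (`modify_listener` in boto3); the helper function and the ARNs are placeholders invented for the example, and no AWS call is made.

```python
def weighted_forward_action(blue_arn, green_arn, green_percent):
    """Build a forward action sending green_percent of traffic to the green group."""
    if not 0 <= green_percent <= 100:
        raise ValueError("green_percent must be between 0 and 100")
    return {
        "Type": "forward",
        "ForwardConfig": {
            "TargetGroups": [
                {"TargetGroupArn": blue_arn, "Weight": 100 - green_percent},
                {"TargetGroupArn": green_arn, "Weight": green_percent},
            ]
        },
    }

# Placeholder ARNs; a real deployment would use its own target group ARNs.
action = weighted_forward_action("arn:example:blue", "arn:example:green", 10)
print(action)
```

Shifting traffic gradually is then a matter of rebuilding the action with a larger `green_percent` and applying it to the listener, with a rollback being the same call with the weight set back to 0.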

Here are a couple of key points that are worth noting if you plan on running a heavy Application Load Balancer workload on AWS Outposts rack.

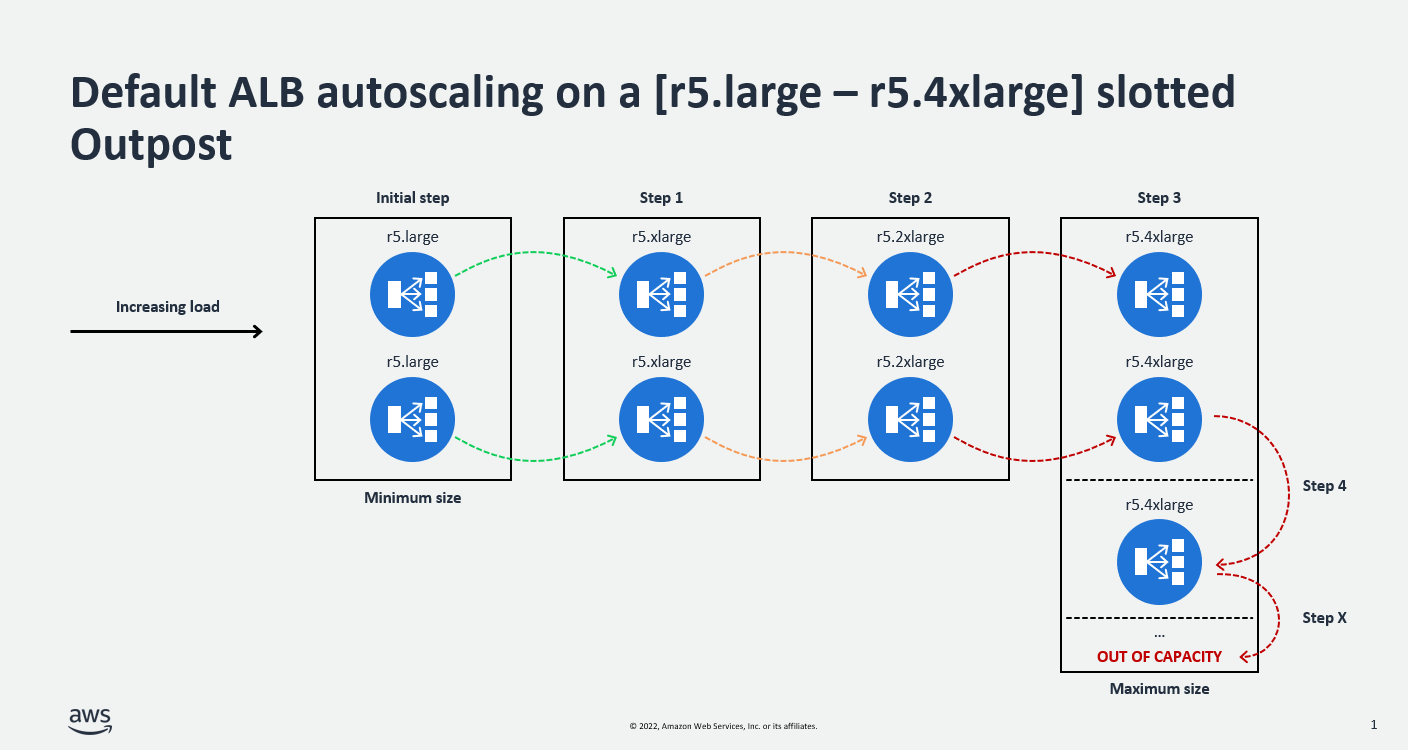

- At creation time, an Application Load Balancer always starts with two machines of the smallest size available on your AWS Outposts. In this example, you will need to make sure at least two r5.large are available; otherwise, the Application Load Balancer will not be an option.

- The default Application Load Balancer algorithm will first scale vertically until it reaches the maximum instance size configured on your rack. If more capacity is required, it will then scale horizontally. It’s also worth mentioning that you must have all the intermediate instance sizes if you keep the default autoscaling behavior, or your Application Load Balancer might enter an active_impaired state. (This usually means that you don’t have enough capacity to support the scaling activity.)

- Finally, you should be aware that you can work with the AWS team to disable the autoscaling feature of an Application Load Balancer running on AWS Outposts, define a fixed size, and choose the node types that should always run.
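The first two points can be turned into a quick pre-flight check against a slotting layout. The sketch below is an assumption-laden illustration: it takes the published r5 size ladder as the set of sizes the load balancer might step through, and the example layouts are hypothetical apart from the initial one described earlier.

```python
# Published r5 sizes, smallest to largest. Which of these an Application Load
# Balancer actually steps through on a given Outpost is an assumption here.
R5_LADDER = [
    "r5.large", "r5.xlarge", "r5.2xlarge", "r5.4xlarge",
    "r5.8xlarge", "r5.12xlarge", "r5.16xlarge", "r5.24xlarge",
]

def can_create_alb(slots):
    """True when at least two slots of the smallest configured size exist."""
    for size in R5_LADDER:
        if slots.get(size, 0) > 0:
            return slots[size] >= 2
    return False

def missing_intermediate_sizes(slots):
    """Sizes between the smallest and largest configured size that have no slots."""
    present = [i for i, size in enumerate(R5_LADDER) if slots.get(size, 0) > 0]
    if not present:
        return []
    low, high = present[0], present[-1]
    return [s for s in R5_LADDER[low:high + 1] if slots.get(s, 0) == 0]

initial = {"r5.large": 138, "r5.xlarge": 3}
reslotted = {"r5.large": 44, "r5.2xlarge": 9, "r5.4xlarge": 8}  # hypothetical

print(can_create_alb(initial), missing_intermediate_sizes(initial))
print(can_create_alb(reslotted), missing_intermediate_sizes(reslotted))
```

A layout that skips a size, like the hypothetical `reslotted` one, is the kind of case where keeping default autoscaling could be risky and a fixed-size load balancer agreed with AWS may be the safer choice.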

Running multiple load tests on the AWS Outposts helped us determine that a fixed size of nine r5.2xlarge instances was required to handle the volume of traffic that we expected. It is also important to take this into consideration when you plan for your total AWS Outposts capacity. The total virtual CPU (vCPU) count used by the Application Load Balancer (9 x 8 vCPU = 72 vCPU) is currently about the same as the sum of vCPU required by our backend application (35 x 2 vCPU = 70 vCPU).
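To put those numbers in context, the short calculation below compares them with the capacity of three r5.24xlarge hosts (96 vCPUs each, per the published instance specification). It is a back-of-the-envelope budget only and ignores memory and per-host packing.

```python
HOST_VCPUS = 96                      # one r5.24xlarge
total = 3 * HOST_VCPUS               # three hosts on the Outpost

alb = 9 * 8                          # nine r5.2xlarge load balancer nodes
backend = 35 * 2                     # 35 backend instances with 2 vCPUs each
remaining = total - alb - backend    # left for the cache and everything else

print(f"total:     {total} vCPU")
print(f"ALB:       {alb} vCPU ({alb / total:.0%})")
print(f"backend:   {backend} vCPU ({backend / total:.0%})")
print(f"remaining: {remaining} vCPU")
```

The load balancer alone takes a quarter of the Outpost's vCPUs, which is why it belongs in the capacity plan from the start.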

AWS Direct Connect CIDR allocation

When the AWS Outposts were delivered to us, we went through a series of networking configurations so that our on-premises infrastructure would be able to talk to our main services hosted in AWS Regions. This part of the setup might feel intimidating at first, especially if you have been running exclusively in the cloud for a long time and your knowledge is limited to the basics of Amazon Virtual Private Cloud (Amazon VPC), which gives you full control over your virtual networking environment: VPCs, subnets, route tables, and VPC endpoints.

At first, we did not fully understand what the different subnets would host and ended up allocating a large subnet (/20) to host only two IPs that were required by the AWS service link. After discussing this with AWS, we decided to take on a migration of our AWS Outposts networking layer to isolate the service link component in its own VPC and thus reduce our blast radius.

Here are the different steps involved in reconfiguring the networking layer between AWS Outposts and the AWS Region. These steps provide high-level guidance, but you should refer to official AWS documentation for further details.

- From the Amazon VPC console:

- Create a new VPC (for example, 10.202.0.0/16) that will be used to host the service link in isolation. You will need a single, private subnet to host the service link elastic network interface (ENI).

- Create a virtual private gateway (VGW) and attach it to the previously created VPC.

- Create a route table associated with the private subnet of your VPC and add a route that will target the VGW that was previously created. The destination classless inter-domain routing (CIDR) block will be provided by the AWS team (for example, 192.168.201.0/26).

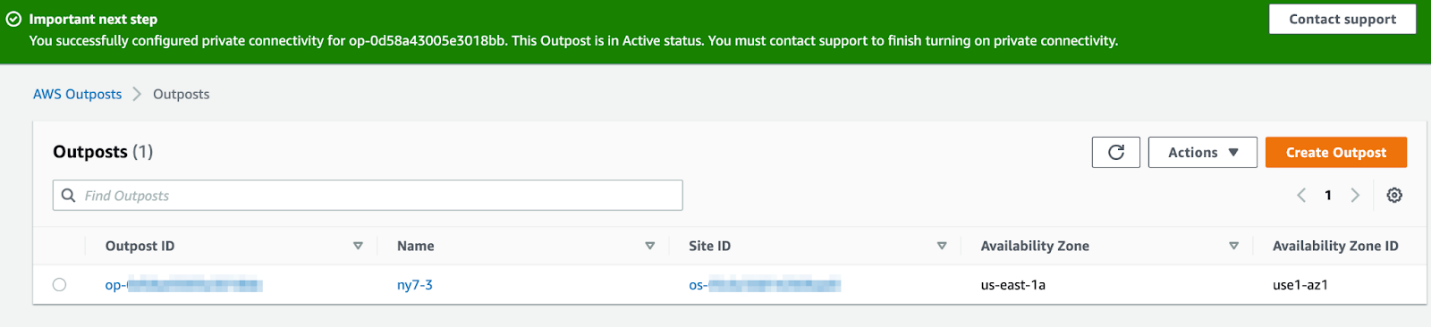

- From the AWS Outposts console:

- Select the AWS Outposts ID for which you want to create private connectivity. In the Actions menu, you will need to select Add private connectivity.

-

- Select the local gateway route table associated with your AWS Outposts and associate the VPC that is dedicated to the service link.

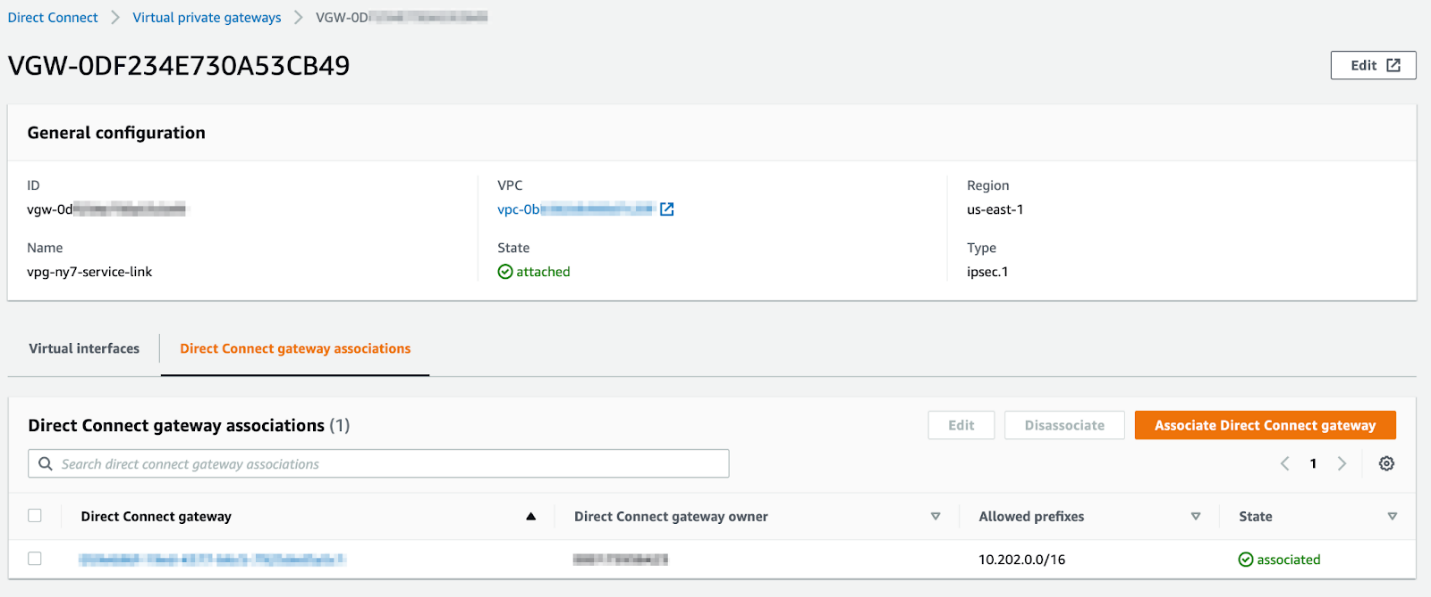

- From the console in AWS Direct Connect, the shortest path to your AWS resources:

- In the VGW section, you should see the VGW created in the first step. You will need to associate the VGW with the AWS Direct Connect link used by your AWS Outposts. Allowed prefixes should be set to the CIDR block of your VPC.
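The sizing mistake described earlier is easy to quantify with Python's standard `ipaddress` module. The sketch below compares a /20 with the smallest subnet Amazon VPC allows (/28, in which AWS reserves five addresses) and checks that the example VPC and destination CIDR blocks from the steps above do not overlap. The 10.0.0.0/20 block is a hypothetical stand-in for the oversized subnet.

```python
import ipaddress

oversized = ipaddress.ip_network("10.0.0.0/20")         # hypothetical original subnet
vpc = ipaddress.ip_network("10.202.0.0/16")             # example VPC from the steps
destination = ipaddress.ip_network("192.168.201.0/26")  # example route destination

# Smallest subnet size Amazon VPC supports; AWS reserves 5 addresses per subnet.
service_link_subnet = next(vpc.subnets(new_prefix=28))
usable = service_link_subnet.num_addresses - 5

print(f"/20 holds {oversized.num_addresses} addresses for 2 service link IPs")
print(f"{service_link_subnet} holds {service_link_subnet.num_addresses} "
      f"addresses, {usable} usable")
print(f"VPC overlaps route destination: {vpc.overlaps(destination)}")
```

Even the smallest possible subnet leaves room for the two service link addresses, so a dedicated VPC with a single small private subnet is enough for this purpose.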

Results

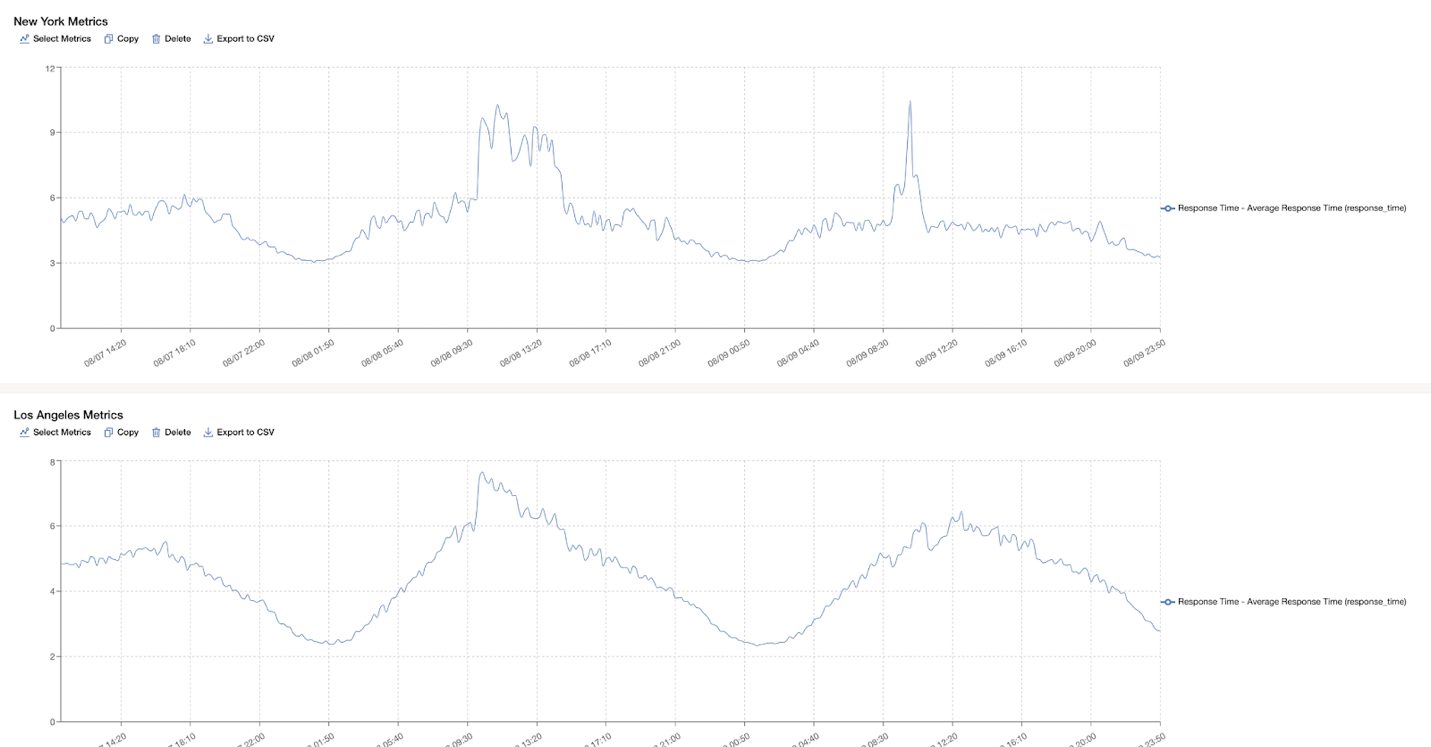

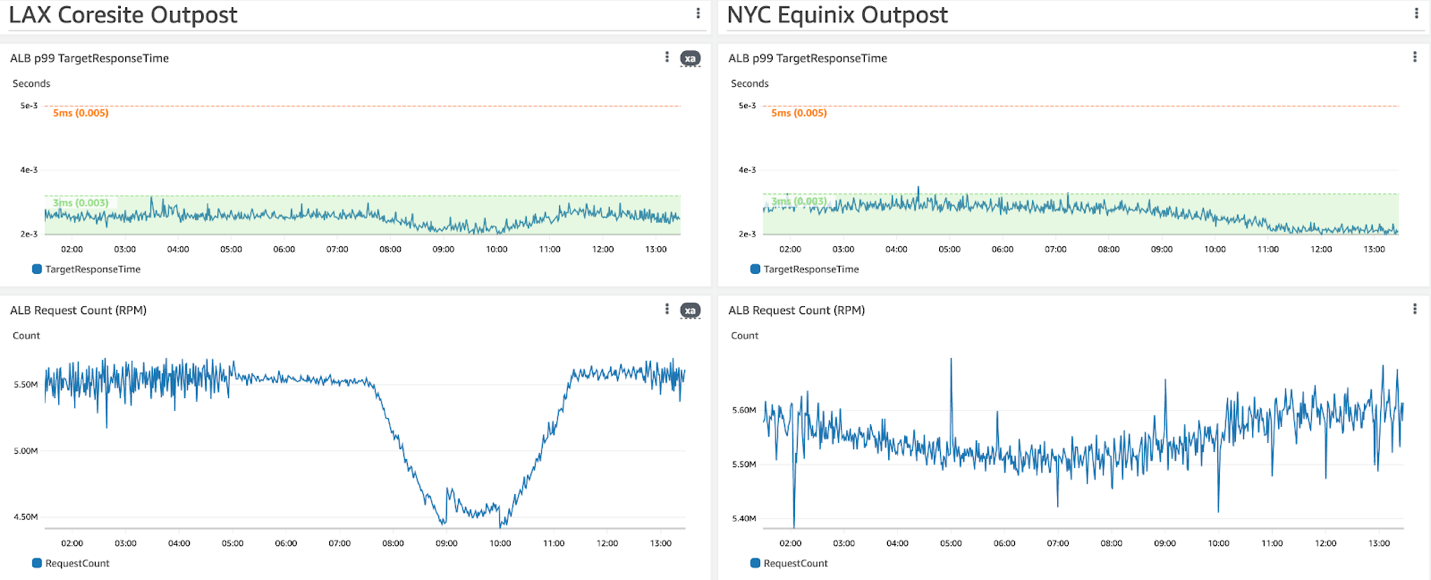

The AWS Outposts deployment allowed us to successfully achieve our latency and throughput objectives. In each data center, we currently observe an average of about 6 ms latency p99 at about 92,000 requests per second. We achieved about 3 ms latency p99 on the application backend. The AWS Outposts implementation facilitated GumGum in physically setting up business-critical end points in close proximity with DSP clients, providing contextual targeting for real-time bidding.

Response time in milliseconds observed on the client side:

Amazon CloudWatch internal metrics observed on the Application Load Balancer running on AWS Outposts:

Acknowledgements

The authors would like to thank Edwin Galdamez, David Williams, Vaibhav Puranik, Lane Schechter, and the Verity team for their efforts in seeing this project through to completion. The authors additionally thank AWS principal solutions architect Josh Coen and senior solutions architects Chris Lunsford, Akhil Aendapally, and Cedric Snell for their guidance and contributions.