Artificial Intelligence

Generate images from text with the stable diffusion model on Amazon SageMaker JumpStart

March 2023: This post was reviewed and updated with support for Stable Diffusion inpainting model.

Today, we announce that Stable Diffusion 1 and Stable Diffusion 2 are available in Amazon SageMaker JumpStart. JumpStart is the machine learning (ML) hub of SageMaker that provides hundreds of built-in algorithms, pre-trained models, and end-to-end solution templates to help you quickly get started with ML. Stable Diffusion is an image generation model that can generate realistic images given a raw input text. You can use Stable Diffusion to design products and build catalogs for ecommerce business needs or to generate realistic art pieces or stock images.

In this post, we provide an overview of how to deploy and run inference with Stable Diffusion in two ways: via JumpStart’s user interface (UI) in Amazon SageMaker Studio, and programmatically through JumpStart APIs available in the SageMaker Python SDK.

Stable Diffusion

Generative AI technology is improving rapidly, and it’s now possible to generate text and images simply based on text input. Stable Diffusion is a text-to-image model that empowers you to create photorealistic applications.

A diffusion model trains by learning to remove noise that was added to a real image. This de-noising process generates a realistic image. These models can also generate images from text alone by conditioning the generation process on the text. For instance, Stable Diffusion is a latent diffusion where the model learns to recognize shapes in a pure noise image and gradually brings these shapes into focus if the shapes match the words in the input text. The text must first be embedded into a latent space using a language model. Then, a series of noise addition and noise removal operations are performed in the latent space with a U-Net architecture. Finally, the de-noised output is decoded into the pixel space.

The following are some examples of input texts and the corresponding output images generated by Stable Diffusion.

The following images are in response to the inputs “a photo of an astronaut riding a horse on mars,” “a painting of new york city in impressionist style,” and “dog in a suit.”

The following images are in response to the inputs: (i) dogs playing poker, (ii) A colorful photo of a castle in the middle of a forest with trees, and (iii) A colorful photo of a castle in the middle of a forest with trees. Negative prompt: Yellow color

Although primarily used to generate images conditioned on text, Stable Diffusion models can also be used for other tasks such as inpainting, outpainting, and generating image-to-image translations guided by text.

You can upscale images with Stable Diffusion models in JumpStart. To learn more, please see Upscale images with Stable Diffusion in Amazon SageMaker JumpStart blog and the Introduction to JumpStart – Enhance image quality guided by prompt notebook.

Update (March 2023): You can now Stable Diffusion inapainting model in JumpStart. It can be used to replace part of an image guided by prompt. To learn more, please refer to the Introduction to JumpStart Image editing – Stable Diffusion Inpainting example notebook.

To learn more about the model and how it works, see the following resources:

- Stable Diffusion Launch Announcement

- Stable Diffusion with Diffusers

- High-Resolution Image Synthesis with Latent Diffusion Models

- Stable Diffusion 2.0 Launch Announcement

Note that by using this model, you agree to the CreativeML Open RAIL-M license.

Solution overview

To use a large model such as Stable Diffusion in Amazon SageMaker, you need inference scripts and end-to-end tests for scripts, models, and the desired instance types to validate that all three can work together. JumpStart simplifies this process by providing ready-to-use scripts that have been robustly tested. You can access these scripts with one click through the Studio UI or with very few lines of code through the JumpStart APIs.

The following sections provide an overview of how to deploy the model and run inference using either the Studio UI or the JumpStart APIs.

Access JumpStart through the Studio UI

In this section, we demonstrate how to train and deploy JumpStart models through the Studio UI. The following video shows you how to find the pre-trained Stable Diffusion model on JumpStart and deploy it. The model page contains valuable information about the model and how to use it. You can deploy any of the pre-trained models available in JumpStart. For inference, we pick the ml.p3.2xlarge instance type because it provides the GPU acceleration needed for low inference latency at a low price point. After you configure the SageMaker hosting instance, choose Deploy. It may take 5–10 minutes until your persistent endpoint is up and running.

After a few minutes, your endpoint is operational and ready to respond to inference requests!

To accelerate your time to inference, JumpStart provides a sample notebook that shows you how to run inference on your freshly deployed endpoint. To access the notebook in Studio, choose Open Notebook under the Use Endpoint from Studio section of the model endpoint page.

Use JumpStart programmatically with the SageMaker SDK

You can use the JumpStart UI to deploy a pre-trained model interactively in just a few clicks. However, you can also use JumpStart models programmatically by using APIs that are integrated into the SageMaker Python SDK.

In this section, we choose an appropriate pre-trained model in JumpStart, deploy this model to a SageMaker endpoint, and run inference on the deployed endpoint, all using the SageMaker Python SDK. The following examples contain code snippets. For the full code with all of the steps in this demo, see the Introduction to JumpStart – Text to Image example notebook.

To deploy the model in SageMaker Studio Lab, please to the notebook.

Deploy the pre-trained model

SageMaker is a platform that makes extensive use of Docker containers for build and runtime tasks. JumpStart uses the available framework-specific SageMaker Deep Learning Containers (DLCs). We first fetch any additional packages, as well as scripts to handle training and inference for the selected task. Finally, the pre-trained model artifacts are separately fetched with model_uris, which provides flexibility to the platform. You can use any number of models pre-trained on the same task with a single inference script. See the following code:

Note: To use Stable Diffusion 2.0, please change model_id to model-txt2img-stabilityai-stable-diffusion-v2. Next, we feed the resources into a SageMaker model instance and deploy an endpoint:

After our model is deployed, we can get predictions from it in real time!

Run inference

The following code snippet gives you a glimpse of what the outputs look like. To send requests to a deployed model, we use a JSON dictionary encoded in UTF-8 format:

The endpoint response is a JSON object containing the generated images and the prompt:

We get the following set of images as our outputs.

Stable Diffusion supports many parameters for image generation:

- negative_prompt – This feature guides the Stable Diffusion model towards prompts that the model should avoid during text generation. These prompts represent the undesirable features that would otherwise be present in the generated image either due to the prompt or the training data that the model was trained on.

- num_inference_steps – This feature increases the quality of generated images at the expense of inference time.

- guidance_scale – This feature finds a balance between how closely the image is related to a prompt with the quality of the generated image. A higher guidance scale results in images closely related to the prompt.

Some of the other parameters supported for the model are width, height, num_images_per_prompt and seed. For detailed information on valid values for each parameter and their impact on the output, see the Introduction to JumpStart – Text to Image example notebook. To run inference only images, please see the Introduction to JumpStart – Text to Image (Inference only).

Writing prompts can sometimes be an art. Even small changes to the prompt template can result in significant changes in the generated image. For advice on writing effective prompts, see What are Stable Diffusion Models and Why are they a Step Forward for Image Generation?

Prompt Engineering

Writing a good prompt can sometime be an art. It is often difficult to predict whether a certain prompt will yield a satisfactory image with a given model. However, there are certain templates that have been observed to work. Broadly, a prompt can be roughly broken down into three pieces: (i) type of image (photograph/sketch/painting etc.), (ii) description (subject/object/environment/scene etc.) and (iii) the style of the image (realistic/artistic/type of art etc.). You can change each of the three parts individually to generate variations of an image. Adjectives have been known to play a significant role in the image generation process. Also, adding more details help in the generation process.

To generate a realistic image, you can use phrases such as “a photo of”, “a photograph of”, “realistic” or “hyper realistic”. To generate images by artists you can use phrases like “by Pablo Piccaso” or “oil painting by Rembrandt” or “landscape art by Frederic Edwin Church” or “pencil drawing by Albrecht Dürer”. You can also combine different artists as well. To generate artistic image by category, you can add the art category in the prompt such as “lion on a beach, abstract”. Some other categories include “oil painting”, “pencil drawing, “pop art”, “digital art”, “anime”, “cartoon”, “futurism”, “watercolor”, “manga” etc. You can also include details such as lighting or camera lens such as 35mm wide lens or 85mm wide lens and details about the framing (portrait/landscape/close up etc.).

Note that model generates different images even if same prompt is given multiple times. So, you can generate multiple images and select the image that suits your application best.

Here are a few images generated by model-txt2img-stabilityai-stable-diffusion-v1-4 with prompts in the caption.

Here are a few images generated by model-txt2img-stabilityai-stable-diffusion-v2-fp16 with prompts in the caption.

For additional examples of sample prompts and generated images, please see Section Prompts and Generated Images in the Appendix. For a corpus of prompts including the ones used , please see Gustavosta Stable Diffusion Prompts. To learn more about prompting, please refer to the following:

- Stable Diffusion prompt guide: Basics of prompt engineering, using Stable Diffusion

- How to Write an Awesome Stable Diffusion Prompt

- A Beginner’s Guide to Prompt Design for Text-to-Image Generative Models

- Stable Diffusion Prompt Book

Negative Prompt

Negative prompt is an important parameter while generating images using Stable Diffusion Models. It provides you an additional control over the image generation process and let you direct the model to avoid certain objects, colors, styles, attributes and more from the generated images.

On the left is image generated by the Stable Diffusion 2.1 base model with the prompt “emma watson as nature magic celestial, top down pose, long hair, soft pink and white transparent cloth, space, D&D, shiny background, intricate, elegant, highly detailed, digital painting, artstation, concept art, smooth, sharp focus, illustration, artgerm, bouguereau”. On the right is the image generated by the model with the same prompt and negative prompt “windy”.

Even though, you can specify many of these concepts in the original prompt by specifying negative words “without”, “except”, “no” and “not”, Stable Diffusion models have been observed to not understand the negative words very well. Thus, you should use negative prompt parameter when tailoring the image to your use case.

On the left is the image generated by the Stable Diffusion 2.1 bas model with the prompt “a portrait of a man without beard” and on the right is the image generated with prompt “a portrait of a man” and negative prompt “beard”.

You can also use negative prompts to substitute parts of the prompt. For instance, instead of using “sharp”/“focused” in the prompt, you can use “blurry” in the negative prompt.

You can also use negative prompts to substitute parts of the prompt. For instance, instead of using “sharp”/“focused” in the prompt, you can use “blurry” in the negative prompt.

Negative Prompts have been observed to be critical especially for Stable Diffusion V2 (model-txt2img-stabilityai-stable-diffusion-v2, model-txt2img-stabilityai-stable-diffusion-v2-fp16, model-txt2img-stabilityai-stable-diffusion-v2-1-base). Thus, we recommend usage of negative prompts especially when using version 2.x. To learn more about negative prompting, please see How to use negative prompts? and How does negative prompt work?.

FP16 revisions

Image generation from Stable Diffusion models can be a slow process. It may take more than 20 seconds to generate a single image. To speed up the image generation process, you can now use Stable Diffusion 1 and Stable Diffusion 2 with floating point 16 precision during computation. This is at the expense of slight degradation in the image quality. This can lead to a speedup of more than twice as fast while generating images. Furthermore, you can generate twice as many images in a single request without experiencing an out of CUDA memory issue. To use the FP16 revision, use model_id= stabilityai-stable-diffusion-v1-4-fp16 or model_id = stabilityai-stable-diffusion-v2-fp16.

The following are the images generated by the model for the prompt “a photo of an astronaut riding a horse on mars.”

Fine-tune the Model

In ML, the ability to transfer the knowledge learned in one domain to another is called transfer learning. You can use transfer learning to produce accurate models on your smaller datasets, with much lower training costs than the ones involved in training the original model. Using transfer learning, you can fine-tune the stable diffusion model and adapt on your own dataset with as little as four images and in a matter of minutes. Following image show the train data images and the image of a dog sketch generated by the fine-tuned model.

To learn more, please see the Introduction to JumpStart – Text to Image example notebook and Fine-tune text-to-image Stable Diffusion models with Amazon SageMaker JumpStart.

Limitations and bias

Even though Stable Diffusion has impressive performance and can generate realistic images from raw text, it suffers from several limitations and biases. These include but are not limited to:

- The model can’t generate good text within images

- The model may not generate accurate faces or limbs because the training data doesn’t include sufficient images with these features

- The model may not work well with non-English languages because the model was trained on English language text

- The model was trained on the LAION-5B dataset, which has adult content and may not be fit for product use without further considerations

For further discussion on the limitations and biases of Stable Diffusion, see the Stable Diffusion v1 Model Card.

Open-source fine-tuned models in JumpStart

Even though Stable Diffusion models released by StabilityAI have impressive performance, they have some limitations in terms of the language or domain they were trained on. For instance, Stable Diffusion models were trained on English text, but you may need to generate images from non-English text. Or the Stable Diffusion models were trained to generate photorealistic images, but you may need to generate animated or artistic images.

In February 2023, JumpStart added over 80 open-source models with various languages and themes. These models are often fine-tuned versions from Stable Diffusion models released by StabilityAI. If your use case matches with one of the fine-tuned models, you don’t need to collect your own dataset and fine-tune it. You can simply deploy one of these models through the Studio UI or using the easy-to-use JumpStart APIs. For more information about deploying a pre-trained Stable Diffusion model in JumpStart, refer to Generate images from text with the stable diffusion model on Amazon SageMaker JumpStart.

The following are some examples of images generated by the different models available in JumpStart.

Note that these models are not fine-tuned using JumpStart scripts or DreamBooth scripts.

Upscaling

Upscaling is a process by which an image that is low resolution, blurry, and pixelated can be converted into a high-resolution image that appears smoother, clearer, and more detailed. It can be applied to both real images and images generated by text-to-image Stable Diffusion models. This can be used to enhance image quality in various industries such as ecommerce and real estate, as well as for artists and photographers. Additionally, upscaling can improve the visual quality of low-resolution images when displayed on high-resolution screens.

In Jan 2023, SageMaker JumpStart announced the support of upscaling using Stable Diffusion models. Unlike non-deep-learning techniques such as nearest neighbor, Stable Diffusion takes into account the context of the image, using a textual prompt to guide the upscaling process.

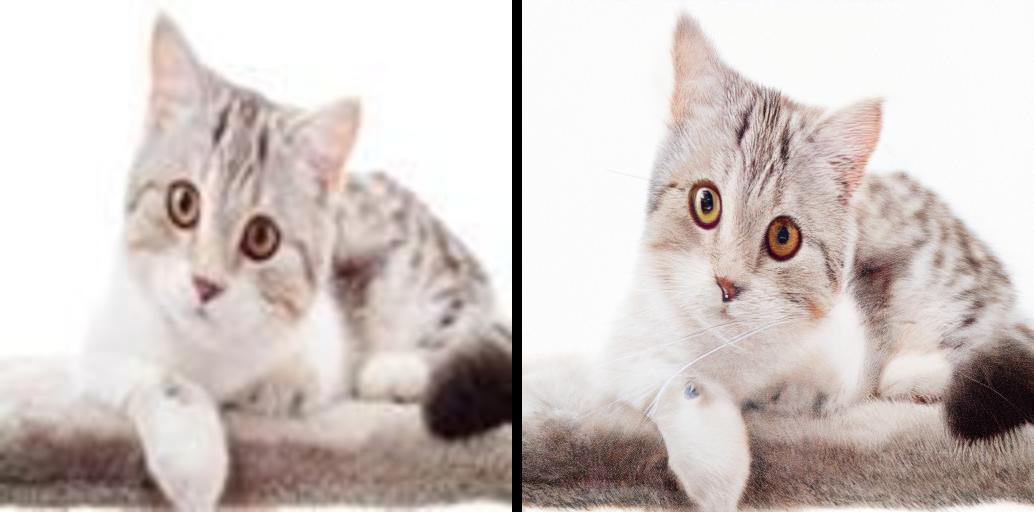

On the left is the low resolution cat image fed into the model with the prompt “a white cat.” On the right is the high resolution image generated by the model.

To learn more, please see Upscale images with Stable Diffusion in Amazon SageMaker JumpStart blog and the Introduction to JumpStart – Enhance image quality guided by prompt notebook.

Inpainting

Inpainting is the task of replacing portion of a image with another image described by a textual prompt. Given the original image, a mask image (describing the portion to be replaced) and a textual prompt, Stable Diffusion model generates a new image where masked part is replaced by the object/subject/environment described by the textual prompt. It can be used to reconstruct deteriorated image or generate new images with new subject/style in part of the image.

On the left is the original image, in the middle is the mask image, and on the right is the inpainted image generated by the model when fed in the original image, mask image and the prompt “a white cat, blue eyes, wearing a sweater, lying in park”, and negative prompt poorly drawn feet, body out of frame”.

To learn more, please refer to the Introduction to JumpStart Image editing – Stable Diffusion Inpainting example notebook.

Conclusion

In this post, we showed how to deploy a pre-trained image generation model using JumpStart. You can accomplish this without writing any code. Try out the solution on your own and send us your comments.

Disclaimer: The content and opinions contained in third-party blogs are those of the third-party author and AWS is not responsible for the content or accuracy therein.

JumpStart overview

JumpStart helps you get started with ML models for a variety of tasks without writing a single line of code. Currently, JumpStart enables you to do the following:

- Deploy pre-trained models for common ML tasks – JumpStart enables you to address common ML tasks with no development effort by providing easy deployment of models pre-trained on large, publicly available datasets. The ML research community has put a large amount of effort into making a majority of recently developed models publicly available for use. JumpStart hosts a collection of over 300 models, spanning the 15 most popular ML tasks such as object detection, text classification, and text generation, making it easy for beginners to use them. These models are drawn from popular model hubs such as TensorFlow, PyTorch, Hugging Face, and MXNet.

- Fine-tune pre-trained models – JumpStart allows you to fine-tune pre-trained models without needing to write your own training algorithm. In ML, the ability to transfer the knowledge learned in one domain to another domain is called transfer learning. You can use transfer learning to produce accurate models on your smaller datasets, with much lower training costs than the ones involved in training the original model. JumpStart also includes popular training algorithms based on LightGBM, CatBoost, XGBoost, and Scikit-learn, which you can train from scratch for tabular regression and classification.

- Use pre-built solutions – JumpStart provides a set of 17 solutions for common ML use cases, such as demand forecasting and industrial and financial applications, which you can deploy with just a few clicks. Solutions are end-to-end ML applications that string together various AWS services to solve a particular business use case. They use AWS CloudFormation templates and reference architectures for quick deployment, which means they’re fully customizable.

- Refer to notebook examples for SageMaker algorithms – SageMaker provides a suite of built-in algorithms to help data scientists and ML practitioners get started with training and deploying ML models quickly. JumpStart provides sample notebooks that you can use to quickly use these algorithms.

- Review training videos and blogs – JumpStart also provides numerous blog posts and videos that teach you how to use different functionalities within SageMaker.

JumpStart accepts custom VPC settings and AWS Key Management Service (AWS KMS) encryption keys, so you can use the available models and solutions securely within your enterprise environment. You can pass your security settings to JumpStart within Studio or through the SageMaker Python SDK.

- Run text generation with Bloom and GPT models on Amazon SageMaker JumpStart

- AlexaTM 20B is now available in Amazon SageMaker JumpStart

- Run image segmentation with Amazon SageMaker JumpStart

- Run text classification with Amazon SageMaker JumpStart using TensorFlow Hub and Hugging Face models

- Amazon SageMaker JumpStart models and algorithms now available via API

- Incremental training with Amazon SageMaker JumpStart

- Transfer learning for TensorFlow object detection models in Amazon SageMaker

- Transfer learning for TensorFlow text classification models in Amazon SageMaker

- Transfer learning for TensorFlow image classification models in Amazon SageMaker

About the Authors

Dr. Vivek Madan is an Applied Scientist with the Amazon SageMaker JumpStart team. He got his PhD from University of Illinois at Urbana-Champaign and was a Post Doctoral Researcher at Georgia Tech. He is an active researcher in machine learning and algorithm design and has published papers in EMNLP, ICLR, COLT, FOCS, and SODA conferences.

Dr. Vivek Madan is an Applied Scientist with the Amazon SageMaker JumpStart team. He got his PhD from University of Illinois at Urbana-Champaign and was a Post Doctoral Researcher at Georgia Tech. He is an active researcher in machine learning and algorithm design and has published papers in EMNLP, ICLR, COLT, FOCS, and SODA conferences.

Santosh Kulkarni is an Enterprise Solutions Architect at Amazon Web Services who works with sports customers in Australia. He is passionate about building large-scale distributed applications to solve business problems using his knowledge in AI/ML, big data, and software development.

Santosh Kulkarni is an Enterprise Solutions Architect at Amazon Web Services who works with sports customers in Australia. He is passionate about building large-scale distributed applications to solve business problems using his knowledge in AI/ML, big data, and software development.

Leonardo Bachega is a Senior Scientist and Manager in the Amazon SageMaker JumpStart team. He’s passionate about building AI services for computer vision.

Leonardo Bachega is a Senior Scientist and Manager in the Amazon SageMaker JumpStart team. He’s passionate about building AI services for computer vision.

Appendix: Sample prompts and generated images

Here are a set of images generated by model-txt2img-stabilityai-stable-diffusion-v1-4 for different prompts:

Here are a set of images generated by model-txt2img-stabilityai-stable-diffusion-v2-fp16 for different prompts: