Model hosting patterns in Amazon SageMaker, Part 7: Run ensemble ML models on Amazon SageMaker

Model deployment in machine learning (ML) is becoming increasingly complex. You often need to deploy not just one ML model but large groups of models arranged as ensemble workflows, each composed of multiple ML models. Productionizing these workflows is challenging because you need to adhere to various performance and latency requirements.

Amazon SageMaker supports single-instance ensembles with Triton Inference Server. This capability allows you to run model ensembles that fit on a single instance. Behind the scenes, SageMaker leverages Triton Inference Server to manage the ensemble on every instance behind the endpoint, maximizing throughput and hardware utilization with ultra-low (single-digit millisecond) inference latency. With Triton, you can also choose from a wide range of supported ML frameworks (including TensorFlow, PyTorch, ONNX, XGBoost, and NVIDIA TensorRT) and infrastructure backends, including GPUs, CPUs, and AWS Inferentia.

With this capability on SageMaker, you can optimize your workloads by avoiding costly network latency and reaping the benefits of compute and data locality for ensemble inference pipelines. In this post, we discuss the benefits of using Triton Inference Server, along with considerations for deciding whether it is the right option for your workload.

Solution overview

Triton Inference Server is designed to enable teams to deploy, run, and scale trained AI models from any framework on any GPU- or CPU-based infrastructure. In addition, it has been optimized to offer high-performance inference at scale with features like dynamic batching, concurrent runs, optimal model configuration, model ensemble capabilities, and support for streaming inputs.

Before you choose Triton, check that it can serve your models. Triton supports a number of popular frameworks out of the box, including TensorFlow, PyTorch, ONNX, XGBoost, and NVIDIA TensorRT, and each of them runs through a corresponding backend. Make sure a backend exists for your models; if one doesn’t, Triton allows you to implement your own and integrate it. Also verify that your algorithm version is supported and that your model artifacts are in a format the corresponding backend accepts. To check whether your particular algorithm is supported, refer to Triton Inference Server Backend for a list of natively supported backends maintained by NVIDIA.

In some scenarios, your models or model ensembles won’t work on Triton without additional effort, for example when no natively supported backend exists for your algorithm. The payload format is another consideration: it may not be ideal for your workload, especially when request payloads are large. As always, validate performance after deploying these workloads to ensure that your expectations are met.

Let’s take an image classification neural network model and see how we can accelerate our workloads. In this example, we use the NVIDIA DALI backend to accelerate our preprocessing in the context of our ensemble.

Create Triton model ensembles

Triton Inference Server simplifies the deployment of AI models at scale, including the preprocessing and postprocessing pipelines that surround them. Its ensemble scheduler lets you chain the models that participate in an inference request into a single pipeline while ensuring efficiency and optimizing throughput.

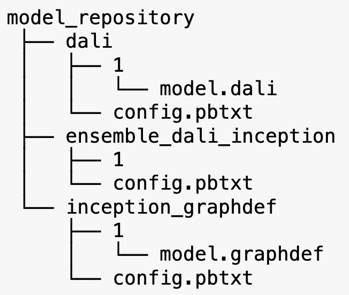

Triton Inference Server serves models from model repositories. Let’s look at the model repository layout for an ensemble that contains the DALI preprocessing model, the TensorFlow Inception V3 model, and the model ensemble configuration. Each subdirectory contains the repository information for the corresponding model, and its config.pbtxt file describes that model’s configuration. Each model directory must have one numeric subfolder for each version of the model, and each model is run by a specific backend that Triton supports.
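As a rough illustration of that layout, the following sketch creates an empty repository skeleton on disk. Only inception_graphdef, model.graphdef, and config.pbtxt are named in this post; the directory names dali and ensemble_dali_inception and the file names model.dali and inception_labels.txt are assumptions chosen for illustration.

```python
from pathlib import Path
import tempfile

# Model directory -> entries it holds, relative to that directory.
# "dali", "ensemble_dali_inception", "model.dali" and "inception_labels.txt"
# are assumed names; adjust them to match your own repository.
REPOSITORY_LAYOUT = {
    "dali": ["config.pbtxt", "1/model.dali"],
    "inception_graphdef": [
        "config.pbtxt",
        "inception_labels.txt",
        "1/model.graphdef",
    ],
    # An ensemble has no model file of its own, but a numeric version
    # directory is still created for it (left empty here).
    "ensemble_dali_inception": ["config.pbtxt", "1/"],
}


def create_repository_skeleton(root: Path) -> list[str]:
    """Create placeholder files for the layout and return their relative paths."""
    created = []
    for model_dir, entries in REPOSITORY_LAYOUT.items():
        for entry in entries:
            target = root / model_dir / entry
            if entry.endswith("/"):
                # Trailing slash marks a directory that stays empty.
                target.mkdir(parents=True, exist_ok=True)
            else:
                target.parent.mkdir(parents=True, exist_ok=True)
                target.touch()
            created.append(f"{model_dir}/{entry}")
    return sorted(created)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        for path in create_repository_skeleton(Path(tmp) / "model_repository"):
            print(path)
```

The placeholder files are empty; in a real repository each one is replaced by the serialized model, its configuration, or the labels file before the repository is packaged and uploaded.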

NVIDIA DALI

For this post, we use the NVIDIA Data Loading Library (DALI) as the preprocessing model in our model ensemble. NVIDIA DALI is a library for data loading and preprocessing to accelerate deep learning applications. It provides a collection of optimized building blocks for loading and processing image, video, and audio data. You can use it as a portable drop-in replacement for built-in data loaders and data iterators in popular deep learning frameworks.

The following code shows the model configuration for a DALI backend:
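A minimal sketch of such a configuration, rendered from Python so the values are easy to vary. The model name, the tensor names DALI_INPUT_0 and DALI_OUTPUT_0, the 299x299x3 output shape, the batch size, and the num_threads value are all assumptions for illustration and must match whatever your serialized DALI pipeline actually declares.

```python
def render_dali_config(
    name: str = "dali",
    max_batch_size: int = 256,
    num_threads: int = 12,
) -> str:
    """Return config.pbtxt text for a DALI preprocessing model.

    All defaults are illustrative assumptions, not values from the post.
    """
    # Doubled braces are literal braces in the rendered protobuf text.
    return f'''name: "{name}"
backend: "dali"
max_batch_size: {max_batch_size}
input [
  {{
    name: "DALI_INPUT_0"
    data_type: TYPE_UINT8
    dims: [ -1 ]
  }}
]
output [
  {{
    name: "DALI_OUTPUT_0"
    data_type: TYPE_FP32
    dims: [ 299, 299, 3 ]
  }}
]
parameters: [
  {{
    key: "num_threads"
    value: {{ string_value: "{num_threads}" }}
  }}
]
'''


if __name__ == "__main__":
    print(render_dali_config())
```

The input is declared as a variable-length UINT8 tensor because the encoded image bytes are sent as-is and decoded inside the DALI pipeline.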

Inception V3 model

For this post, we show how DALI is used in a model ensemble with Inception V3. The pre-trained Inception V3 TensorFlow model is saved in GraphDef format as a single file named model.graphdef. The config.pbtxt file has information about the model name, platform, max_batch_size, and input and output contracts. We recommend setting the max_batch_size configuration to less than the Inception V3 model batch size. We also copy the Inception classification labels file, which contains the 1,000 class labels of the ImageNet classification dataset, to the inception_graphdef directory in the model repository.

Triton ensemble

The following code shows a model configuration of an ensemble model for DALI preprocessing and image classification:
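A hedged sketch of what such an ensemble configuration can look like, again rendered from Python. Every name here is an assumption for illustration: the ensemble name, the INPUT and OUTPUT tensors, the intermediate tensor preprocessed_image, and the Inception tensor names input and InceptionV3/Predictions/Softmax. The output width defaults to 1001 because many TF-Slim Inception V3 exports emit a background class in addition to the 1,000 ImageNet classes; check your own model.

```python
def render_ensemble_config(
    name: str = "ensemble_dali_inception",
    preprocess_model: str = "dali",
    classifier_model: str = "inception_graphdef",
    max_batch_size: int = 256,
    num_scores: int = 1001,
    intermediate_tensor: str = "preprocessed_image",
) -> str:
    """Return config.pbtxt text chaining preprocessing into classification.

    Tensor and model names are illustrative assumptions.
    """
    return f'''name: "{name}"
platform: "ensemble"
max_batch_size: {max_batch_size}
input [
  {{
    name: "INPUT"
    data_type: TYPE_UINT8
    dims: [ -1 ]
  }}
]
output [
  {{
    name: "OUTPUT"
    data_type: TYPE_FP32
    dims: [ {num_scores} ]
  }}
]
ensemble_scheduling {{
  step [
    {{
      model_name: "{preprocess_model}"
      model_version: -1
      input_map {{
        key: "DALI_INPUT_0"
        value: "INPUT"
      }}
      output_map {{
        key: "DALI_OUTPUT_0"
        value: "{intermediate_tensor}"
      }}
    }},
    {{
      model_name: "{classifier_model}"
      model_version: -1
      input_map {{
        key: "input"
        value: "{intermediate_tensor}"
      }}
      output_map {{
        key: "InceptionV3/Predictions/Softmax"
        value: "OUTPUT"
      }}
    }}
  ]
}}
'''


if __name__ == "__main__":
    print(render_ensemble_config())
```

The intermediate tensor name is what links the two steps: the first step maps its output to it and the second step maps its input from it, so the preprocessed image never leaves the server between models.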

Create a SageMaker endpoint

SageMaker endpoints allow for real-time hosting where millisecond response time is required. SageMaker takes on the undifferentiated heavy lifting of model hosting management and has the ability to auto scale. In addition, a number of capabilities are also provided, including hosting multiple variants of your model, A/B testing of your models, integration with Amazon CloudWatch to gain observability of model performance, and monitoring for model drift.

Let’s create a SageMaker model from the model artifacts we uploaded to Amazon Simple Storage Service (Amazon S3).

Next, we also provide an additional environment variable: SAGEMAKER_TRITON_DEFAULT_MODEL_NAME, which specifies the name of the model to be loaded by Triton. The value of this key should match the folder name in the model package uploaded to Amazon S3. This variable is optional in cases where you’re using a single model. In the case of ensemble models, this key must be specified for Triton to start up in SageMaker.

Additionally, you can set SAGEMAKER_TRITON_BUFFER_MANAGER_THREAD_COUNT and SAGEMAKER_TRITON_THREAD_COUNT for optimizing the thread counts.
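The model definition described above could be assembled roughly as follows. This sketch only builds the request dictionary for the boto3 SageMaker client's create_model call; the model name, role ARN, image URI placeholder, and S3 path are hypothetical, and the thread counts are optional.

```python
import json
from typing import Optional


def build_create_model_request(
    model_name: str,
    role_arn: str,
    image_uri: str,
    model_data_url: str,
    default_model_name: str,
    thread_count: Optional[int] = None,
    buffer_manager_thread_count: Optional[int] = None,
) -> dict:
    """Build keyword arguments for the SageMaker create_model API call."""
    # Must match the ensemble's folder name inside the uploaded model package.
    environment = {"SAGEMAKER_TRITON_DEFAULT_MODEL_NAME": default_model_name}
    # Environment values are passed to the container as strings.
    if thread_count is not None:
        environment["SAGEMAKER_TRITON_THREAD_COUNT"] = str(thread_count)
    if buffer_manager_thread_count is not None:
        environment["SAGEMAKER_TRITON_BUFFER_MANAGER_THREAD_COUNT"] = str(
            buffer_manager_thread_count
        )
    return {
        "ModelName": model_name,
        "ExecutionRoleArn": role_arn,
        "PrimaryContainer": {
            "Image": image_uri,
            "ModelDataUrl": model_data_url,
            "Environment": environment,
        },
    }


if __name__ == "__main__":
    # All values below are hypothetical placeholders.
    request = build_create_model_request(
        model_name="triton-ensemble-example",
        role_arn="arn:aws:iam::123456789012:role/ExampleSageMakerRole",
        image_uri="<triton-inference-server-image-uri>",
        model_data_url="s3://example-bucket/triton/model.tar.gz",
        default_model_name="ensemble_dali_inception",
    )
    print(json.dumps(request, indent=2))
    # To create the model (requires boto3 and AWS credentials):
    #   boto3.client("sagemaker").create_model(**request)
```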

With the preceding model, we create an endpoint configuration where we can specify the type and number of instances we want in the endpoint:
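A sketch of the corresponding request for the boto3 create_endpoint_config call. The instance type, instance count, and variant name are assumptions for illustration; pick an instance that fits your ensemble and budget.

```python
def build_endpoint_config_request(
    config_name: str,
    model_name: str,
    instance_type: str = "ml.g4dn.4xlarge",  # assumed GPU instance type
    instance_count: int = 1,
) -> dict:
    """Build keyword arguments for the SageMaker create_endpoint_config call."""
    return {
        "EndpointConfigName": config_name,
        "ProductionVariants": [
            {
                "VariantName": "AllTraffic",
                "ModelName": model_name,
                "InstanceType": instance_type,
                "InitialInstanceCount": instance_count,
                # A single variant receives all of the traffic.
                "InitialVariantWeight": 1.0,
            }
        ],
    }


if __name__ == "__main__":
    # Names are hypothetical placeholders.
    request = build_endpoint_config_request(
        "triton-ensemble-config", "triton-ensemble-example"
    )
    print(request)
    # To create the configuration (requires boto3 and AWS credentials):
    #   boto3.client("sagemaker").create_endpoint_config(**request)
```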

We use this endpoint configuration to create a new SageMaker endpoint and wait for the deployment to finish. The status changes to InService when the deployment is successful.
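The wait can be sketched as a small polling loop. The helper below is hypothetical and takes the status lookup as a callable, so it can be exercised without AWS access; the commented lines show how it might be wired to the boto3 create_endpoint and describe_endpoint calls.

```python
import time
from typing import Callable


def wait_until_created(
    get_status: Callable[[], str],
    poll_seconds: float = 30.0,
    max_polls: int = 120,
) -> str:
    """Poll until the endpoint leaves the Creating state and return its status."""
    for _ in range(max_polls):
        status = get_status()
        if status != "Creating":
            # Typically InService on success or Failed on error.
            return status
        time.sleep(poll_seconds)
    raise TimeoutError("endpoint was still Creating after the last poll")


if __name__ == "__main__":
    # Simulated status sequence standing in for describe_endpoint responses.
    simulated = iter(["Creating", "Creating", "InService"])
    print(wait_until_created(lambda: next(simulated), poll_seconds=0))
    # With boto3 (requires AWS credentials; names are placeholders):
    #   sm = boto3.client("sagemaker")
    #   sm.create_endpoint(EndpointName=name, EndpointConfigName=config_name)
    #   wait_until_created(
    #       lambda: sm.describe_endpoint(EndpointName=name)["EndpointStatus"]
    #   )
```

Checking the returned status matters because the loop also exits when the deployment fails.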

Inference payload

The image in the input payload is first run through the DALI preprocessing pipeline by the ensemble scheduler provided by Triton Inference Server, and the result is then passed to the classification model. We construct the payload to be passed to the inference endpoint:
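One way to build such a payload, assuming the ensemble exposes a variable-length UINT8 input named INPUT (an assumed name) that receives the encoded image bytes. The sketch uses only the standard library and a leading batch dimension of 1.

```python
import json


def build_json_payload(image_bytes: bytes, input_name: str = "INPUT") -> dict:
    """Wrap encoded image bytes in a JSON inference request body."""
    return {
        "inputs": [
            {
                "name": input_name,
                # Batch of one image; the second dimension is the byte count.
                "shape": [1, len(image_bytes)],
                "datatype": "UINT8",
                # Each byte becomes one integer in the JSON array.
                "data": list(image_bytes),
            }
        ]
    }


if __name__ == "__main__":
    # Stand-in bytes; in practice read them from an image file, for example
    # with open(path, "rb").read().
    payload = build_json_payload(b"\xff\xd8\xff\xe0")
    print(json.dumps(payload))
```

Encoding every byte as a JSON number inflates the request considerably, which is one reason large payloads can be a poor fit for the JSON format.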

Ensemble inference

When we have the endpoint running, we can use the sample image to perform an inference request using JSON as the payload format. For the inference request format, Triton uses the KFServing community standard inference protocols.
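On the response side, a JSON reply in this protocol carries an outputs list whose entries hold a name, datatype, shape, and flattened data. The following sketch picks the highest-scoring class from such a reply; the output name OUTPUT and the helper itself are assumptions, and the commented lines show how the body might be obtained with the boto3 sagemaker-runtime client.

```python
import json


def top_prediction(response_body: bytes, output_name: str = "OUTPUT") -> tuple[int, float]:
    """Return (class index, score) of the best score for a batch of one."""
    response = json.loads(response_body)
    for output in response["outputs"]:
        if output["name"] == output_name:
            scores = output["data"]
            best = max(range(len(scores)), key=scores.__getitem__)
            return best, scores[best]
    raise KeyError(f"output {output_name!r} not found in response")


if __name__ == "__main__":
    # Hand-written stand-in for an endpoint response with three scores.
    sample = json.dumps(
        {
            "outputs": [
                {
                    "name": "OUTPUT",
                    "datatype": "FP32",
                    "shape": [1, 3],
                    "data": [0.1, 0.7, 0.2],
                }
            ]
        }
    ).encode("utf-8")
    print(top_prediction(sample))
    # With boto3 (requires AWS credentials; names are placeholders):
    #   runtime = boto3.client("sagemaker-runtime")
    #   reply = runtime.invoke_endpoint(
    #       EndpointName=name,
    #       ContentType="application/octet-stream",
    #       Body=json.dumps(payload),
    #   )
    #   top_prediction(reply["Body"].read())
```

The returned index can then be looked up in the labels file to obtain a class name.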

With the binary+json format, we have to specify the length of the request metadata in the header to allow Triton to correctly parse the binary payload. This is done using a custom Content-Type header application/vnd.sagemaker-triton.binary+json;json-header-size={}.

This is different from using an Inference-Header-Content-Length header on a standalone Triton server because custom headers aren’t allowed in SageMaker.

The tritonclient package provides utility methods to generate the payload without having to know the details of the specification. We use the following methods to convert our inference request into a binary format, which provides lower latencies for inference. Refer to the GitHub notebook for implementation details.
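To make the wire format concrete, here is a hand-rolled sketch of a binary+json request for a single UINT8 input, using only the standard library. It assumes an input named INPUT and follows the binary tensor data convention in which the JSON header declares binary_data_size and the raw bytes are appended after it. In practice the tritonclient utilities are the more robust choice; this is only meant to show where the json-header-size value comes from.

```python
import json


def build_binary_request(raw: bytes, input_name: str = "INPUT") -> tuple[bytes, str]:
    """Return (request body, Content-Type) for one UINT8 input tensor."""
    header = json.dumps(
        {
            "inputs": [
                {
                    "name": input_name,
                    "shape": [1, len(raw)],
                    "datatype": "UINT8",
                    # Tells the server how many raw bytes follow the JSON.
                    "parameters": {"binary_data_size": len(raw)},
                }
            ]
        }
    ).encode("utf-8")
    # The header length travels in the Content-Type value.
    content_type = (
        "application/vnd.sagemaker-triton.binary+json;"
        f"json-header-size={len(header)}"
    )
    return header + raw, content_type


if __name__ == "__main__":
    body, content_type = build_binary_request(b"\xff\xd8\xff\xe0")
    print(content_type)
    print(len(body))
```

The body and content type would then be passed as Body and ContentType to the endpoint invocation. Because the image bytes are sent raw instead of as a JSON array of numbers, the request is much smaller than its JSON equivalent.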

Conclusion

In this post, we showcased how you can productionize model ensembles that run on a single instance on SageMaker. This design pattern can be useful for combining any preprocessing and postprocessing logic along with inference predictions. SageMaker uses Triton to run the ensemble inference in a single container, on a single instance, with support for all major frameworks.

For more samples on Triton ensembles on SageMaker, refer to the GitHub repo. Try it out!

About the Authors

James Park is a Solutions Architect at Amazon Web Services. He works with Amazon.com to design, build, and deploy technology solutions on AWS, and has a particular interest in AI and machine learning. In his spare time, he enjoys seeking out new cultures, new experiences, and staying up to date with the latest technology trends.

Vikram Elango is a Senior AI/ML Specialist Solutions Architect at Amazon Web Services, based in Virginia, US. Vikram helps financial and insurance industry customers with design and thought leadership to build and deploy machine learning applications at scale. He is currently focused on natural language processing, responsible AI, inference optimization, and scaling ML across the enterprise. In his spare time, he enjoys traveling, hiking, cooking, and camping with his family.

Saurabh Trikande is a Senior Product Manager for Amazon SageMaker Inference. He is passionate about working with customers and is motivated by the goal of democratizing machine learning. He focuses on core challenges related to deploying complex ML applications, multi-tenant ML models, cost optimizations, and making deployment of deep learning models more accessible. In his spare time, Saurabh enjoys hiking, learning about innovative technologies, following TechCrunch and spending time with his family.
