AWS for M&E Blog

Actionable video on demand for audience engagement

As viewers shift from passively viewing into actively engaging with content during video playback, content providers can design and build interactive and actionable experiences for their audiences. Actionable video is context-aware and time-specific, allowing the viewer to respond to prompts about a person, place, or object while watching and interacting with video as it plays.

Examples of actionable video prompts include: add this product to cart, send me more information, deliver the ingredients in this recipe, or show me directions to this location. These are just some examples of creative possibilities that enhance the viewing experience and drive audience engagement through actionable video.

Content providers with existing video-on-demand (VOD) archives and new VOD productions can create actionable video experiences using managed services from Amazon Web Services (AWS). This blog post describes the framework and architecture for building actionable video for VOD content on AWS.

Actionable video is a type of interactive video

In actionable video experiences, the viewer sees a time-aligned prompt that reflects the people, places, objects, or sentiment of a scene. Each actionable prompt is assigned a timestamp range during which it is synchronized to the video.

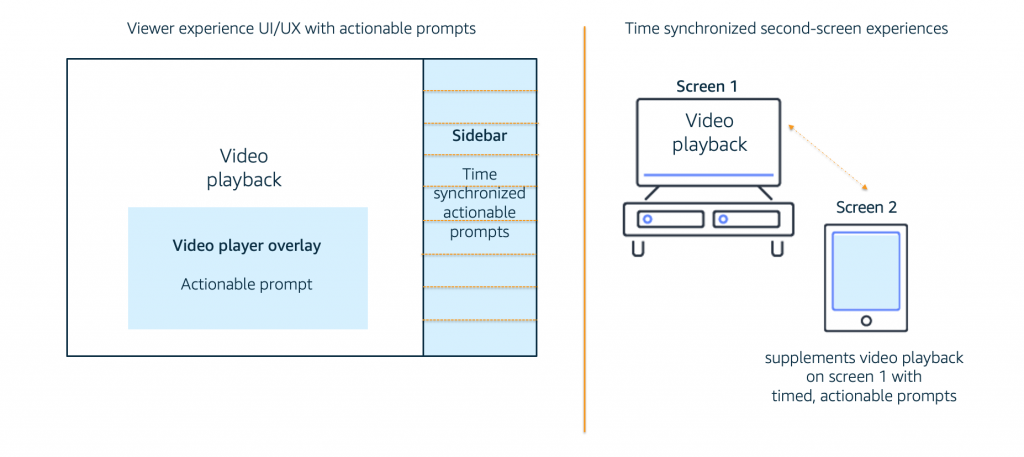

Actionable prompts can be rendered as a video player overlay, in a sidebar, or as a second-screen experience. As video playback progresses, the visible actionable prompt is updated by timestamp to match the content. Both the visual design of the prompt and its synchronization to the video timestamp are developed in the front-end technologies of the website, mobile app, and video player.

An interactive video producer identifies, prepares, and produces the content for the timed actionable prompts in advance of publishing the VOD asset to a website or mobile app. AWS AI/ML managed services can accelerate this workflow by analyzing the video and generating metadata and timestamps about people, places, objects, and sentiment of a scene.

Actionable video on-demand through embedded or extra metadata

Actionable experiences can be achieved through embedded or extra timed video metadata (or an architecture that combines both). The video player and browser, or mobile app, receives events during playback when timed metadata aligns with the video. The front-end application parses and renders metadata into an actionable prompt.

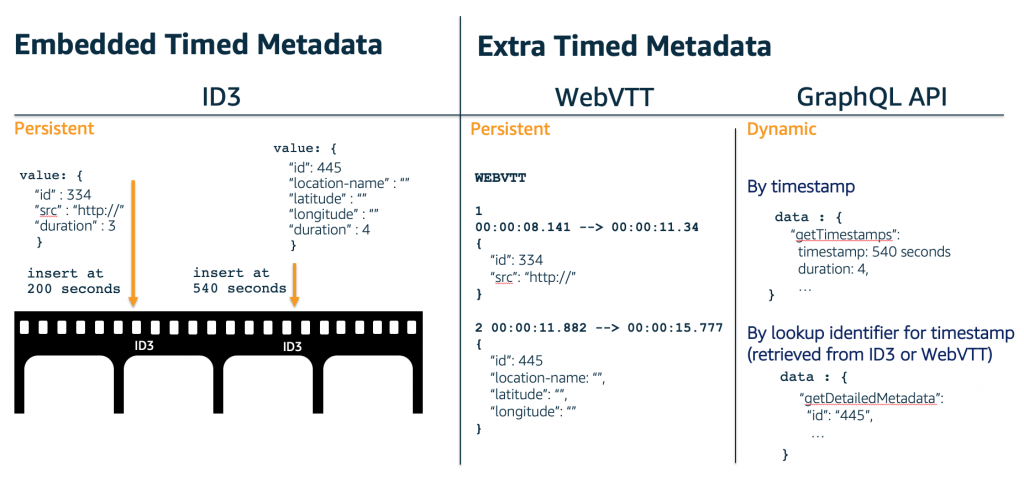

Embedded timed video metadata is persistent and inserted into the video asset during transcoding. Once inserted, embedded metadata is immutable. For HLS, embedded timed metadata is inserted into the video segments as timed ID3 tags. ID3, developed by the ID3 organization, is a standard for embedding descriptive metadata into media. The ID3 standard originated in 1996 to describe MP3 audio. ID3 version 2 introduced support for streaming media, with ID3v2.4.0 being the latest specification.
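To make the tag layout concrete, the following Python sketch hand-assembles a minimal ID3v2.4 tag containing a single text frame and Base64-encodes it. It is an illustration only, not a tool this post's workflow depends on. The function names are hypothetical, and using a TXXX (user-defined text) frame to carry a JSON string is an assumption about how a prompt might be encoded.

```python
import base64
import json


def synchsafe(n: int) -> bytes:
    """Encode n as a 4-byte ID3 'synchsafe' integer (7 payload bits per byte)."""
    if not 0 <= n < 1 << 28:
        raise ValueError("size does not fit in 28 bits")
    return bytes([(n >> 21) & 0x7F, (n >> 14) & 0x7F, (n >> 7) & 0x7F, n & 0x7F])


def build_id3_tag(frame_id: str, frame_body: bytes) -> bytes:
    """Wrap one frame in an ID3v2.4 tag: tag header, frame header, frame body."""
    if len(frame_id) != 4:
        raise ValueError("ID3v2.4 frame IDs are four characters")
    # Frame header: 4-byte ID, synchsafe body size, 2 flag bytes (none set).
    frame = frame_id.encode("ascii") + synchsafe(len(frame_body)) + b"\x00\x00" + frame_body
    # Tag header: "ID3", version 2.4.0, no flags, synchsafe size of everything after it.
    header = b"ID3" + b"\x04\x00" + b"\x00" + synchsafe(len(frame))
    return header + frame


def txxx_body(description: str, value: str) -> bytes:
    """TXXX body: encoding byte (0x03 = UTF-8), description, NUL separator, value."""
    return b"\x03" + description.encode("utf-8") + b"\x00" + value.encode("utf-8")


# Hypothetical prompt payload; the field names are illustrative.
prompt = {"action": "add_to_cart", "sku": "ABC-123"}
tag = build_id3_tag("TXXX", txxx_body("prompt", json.dumps(prompt)))
id3_base64 = base64.b64encode(tag).decode("ascii")
```

The resulting `id3_base64` string is the kind of Base64 payload that would be paired with a timestamp when inserting timed ID3 metadata during transcoding.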

There are two extra metadata approaches. Persistent extra metadata uses WebVTT metadata files, which require video player support in mobile apps and browser support for HTML5 players. Dynamic extra metadata is provided by a GraphQL API: a mobile app or browser requests the required metadata either by timestamp, or in response to an ID3 metadata parsed event or a WebVTT cue change event.

Extra timed video metadata is flexible and mutable and is retrieved separately from the video asset. Extra metadata can be dynamic and personalized when it is retrieved in real time for each viewer.
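As a minimal sketch of the persistent extra approach, the Python below renders a list of timed prompts as a WebVTT metadata file, with each cue carrying a one-line JSON payload. The prompt fields (`id`, `start`, `end`, `data`) and the function names are assumptions made for illustration; only the WebVTT cue syntax itself comes from the format.

```python
import json


def vtt_timestamp(seconds: float) -> str:
    """Format seconds as a WebVTT timestamp, hh:mm:ss.mmm."""
    ms = round(seconds * 1000)
    hours, ms = divmod(ms, 3_600_000)
    minutes, ms = divmod(ms, 60_000)
    secs, ms = divmod(ms, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}.{ms:03d}"


def build_webvtt(prompts: list) -> str:
    """Render prompts as a WebVTT file whose cue text is single-line JSON."""
    blocks = ["WEBVTT"]
    for p in sorted(prompts, key=lambda p: p["start"]):
        cue = "\n".join([
            p["id"],  # cue identifier
            f"{vtt_timestamp(p['start'])} --> {vtt_timestamp(p['end'])}",
            json.dumps(p["data"]),  # json.dumps emits no blank lines, which would end the cue
        ])
        blocks.append(cue)
    # Blocks are separated by one blank line.
    return "\n\n".join(blocks) + "\n"


# Hypothetical prompts for one VOD asset.
prompts = [
    {"id": "prompt-2", "start": 20.0, "end": 30.0,
     "data": {"action": "show_directions", "place": "Pike Place Market"}},
    {"id": "prompt-1", "start": 5.0, "end": 12.5,
     "data": {"action": "add_to_cart", "sku": "ABC-123"}},
]
vtt_text = build_webvtt(prompts)
```

In an HTML5 player, a file like this would typically be attached with a `<track kind="metadata">` element so the browser raises cue change events without rendering the cue text as captions.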

The actionable mechanism

The most impactful viewing experiences combine metadata sources to create dynamic and personalized actionable experiences. The persistent approach uses embedded ID3 or extra WebVTT metadata files to trigger time-aligned events for actionable opportunities. These time-specific events can query dynamic, extra metadata from a GraphQL API to augment and personalize metadata for each viewer during playback.
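The combined pattern can be sketched as follows: a persistent cue carries only a short identifier, and the client uses it to ask the GraphQL API for viewer-specific prompt content. The schema here (`getPrompt`, its arguments, and the returned fields) is entirely hypothetical, as are the function and variable names. The sketch only builds the standard GraphQL-over-HTTP request body and does not send it.

```python
import json

# Hypothetical query; a real API would define its own types and field names.
PROMPT_QUERY = """
query GetPrompt($assetId: ID!, $cueId: String!, $viewerId: ID!) {
  getPrompt(assetId: $assetId, cueId: $cueId, viewerId: $viewerId) {
    title
    actionUrl
  }
}
"""


def build_prompt_request(asset_id: str, cue_id: str, viewer_id: str) -> str:
    """Build the JSON body of a GraphQL POST request for one cue and one viewer."""
    return json.dumps({
        "query": PROMPT_QUERY,
        "variables": {"assetId": asset_id, "cueId": cue_id, "viewerId": viewer_id},
    })


# Called from a metadata parsed or cue change handler with the cue's identifier.
body = build_prompt_request("asset-42", "prompt-1", "viewer-7")
```

Keeping only an identifier in the persistent metadata means the prompt content can change, or differ per viewer, without touching the video asset or the WebVTT file.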

The choice of persistent approach is also influenced by your VOD content. For existing transcoded assets, you would create metadata in extra WebVTT metadata files, as these can be created and maintained separately from the VOD asset.

Embedded ID3 metadata must be inserted during VOD transcode with AWS Elemental MediaConvert, so the embedded approach is suited to new content productions.
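As a rough sketch of what this looks like in a job specification, the Python below builds the fragments of a MediaConvert job JSON that carry timed ID3 insertions and enable passthrough on an HLS output. The key names reflect my understanding of the job settings schema and should be checked against the MediaConvert API reference or a job exported from the console. The helper names are hypothetical, and the timecode conversion assumes a zero-based timecode source and an integer frame rate.

```python
def to_timecode(seconds: float, fps: int) -> str:
    """Convert seconds to HH:MM:SS:FF, assuming an integer, non-drop frame rate."""
    total_frames = round(seconds * fps)
    total_seconds, frames = divmod(total_frames, fps)
    minutes, secs = divmod(total_seconds, 60)
    hours, minutes = divmod(minutes, 60)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}:{frames:02d}"


def id3_insertion_settings(insertions: list, fps: int = 30) -> dict:
    """Build the job-level block pairing each timecode with a Base64 ID3 tag."""
    return {
        "TimedMetadataInsertion": {
            "Id3Insertions": [
                {"Timecode": to_timecode(seconds, fps), "Id3": id3_b64}
                for seconds, id3_b64 in insertions
            ]
        }
    }


# Per-output container settings for HLS: pass the inserted ID3 tags through.
HLS_CONTAINER_SETTINGS = {
    "Container": "M3U8",
    "M3u8Settings": {"TimedMetadata": "PASSTHROUGH", "TimedMetadataPid": 502},
}

# Illustrative payload: a Base64 ID3v2.4 tag with a TIT2 frame reading "Hello World".
SAMPLE_ID3 = "SUQzBAAAAAAAF1RJVDIAAAANAAADSGVsbG8gV29ybGQA"
job_fragment = id3_insertion_settings([(5.0, SAMPLE_ID3), (12.5, SAMPLE_ID3)])
```

These dictionaries would be merged into the `Settings` and output `ContainerSettings` sections of a full job specification before submitting it.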

For a broader perspective about how embedded ID3 is used in other streaming media contexts, Amazon Interactive Video Service (Amazon IVS) uses the ID3 standard for embedding metadata into live video streams. When Amazon IVS live streams are recorded to Amazon Simple Storage Service (Amazon S3) for VOD playback, the timed metadata persists into the recorded asset.

| Mechanism | Lifespan | Timed metadata format | Playback event (video player / browser) |
| --- | --- | --- | --- |
| Embedded | Persistent | ID3 | Provided by video player in metadata parsed event (or similar). The Amazon IVS player defines this event as TEXT_METADATA_CUE. |
| Extra | Persistent | WebVTT metadata file | Provided by video player in cue change event (or similar). The Amazon IVS player does not currently support WebVTT metadata. |
| Extra | Dynamic * | GraphQL API | Time update (observing media timestamp), or a lookup event triggered from ID3 or WebVTT metadata. |

* can aggregate multiple data sources for each viewer

Timed metadata events are triggered differently, depending on the metadata format and the video player and browser/mobile app for playback:

- Timed embedded ID3 tags are reported by the video player in an event name defined by the video player (like metadata parsed). The Amazon IVS video player reports the event TEXT_METADATA_CUE.

- Timed WebVTT metadata files must be supported by the web browser (for HTML5 video players) and/or the video player (for mobile apps). Video players typically report WebVTT timed metadata events as cue change events. The Amazon IVS video player does not currently support WebVTT metadata.

- Extra metadata defined by a GraphQL API by media timestamp requires a web browser or mobile app to monitor the time update event of the current playback time and render the actionable prompt when timestamps align.
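The time update case amounts to a lookup from the current playback time to the prompt whose timestamp range contains it. A minimal Python sketch of that lookup follows; the `Prompt` and `PromptTimeline` names are hypothetical, and the code assumes prompt ranges do not overlap and treats each range as start-inclusive, end-exclusive.

```python
from bisect import bisect_right
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Prompt:
    start: float  # seconds, inclusive
    end: float    # seconds, exclusive
    text: str


class PromptTimeline:
    """Find the prompt active at a playback time (assumes non-overlapping ranges)."""

    def __init__(self, prompts: Iterable[Prompt]) -> None:
        self._prompts = sorted(prompts, key=lambda p: p.start)
        self._starts = [p.start for p in self._prompts]

    def active_at(self, t: float) -> Optional[Prompt]:
        # Index of the last prompt starting at or before t, or -1 if none.
        i = bisect_right(self._starts, t) - 1
        if i >= 0 and t < self._prompts[i].end:
            return self._prompts[i]
        return None


timeline = PromptTimeline([
    Prompt(20.0, 30.0, "Show me directions to this location"),
    Prompt(5.0, 12.5, "Add this product to cart"),
])
```

A time update handler would call `timeline.active_at(current_time)` on each event and re-render only when the returned prompt differs from the one currently shown.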

Actionable video on AWS

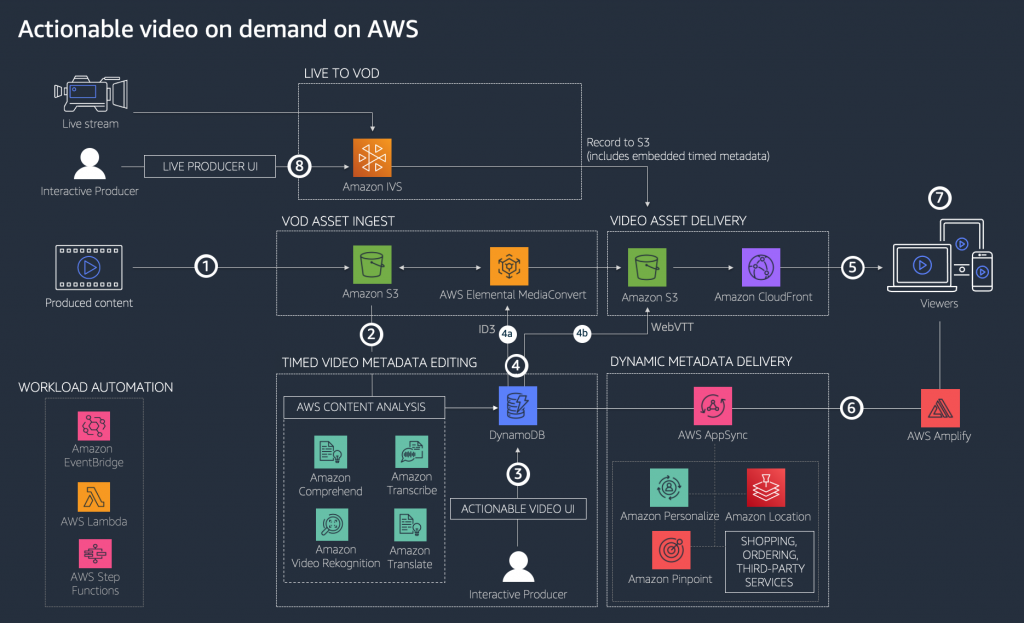

The following architecture demonstrates how actionable video can be achieved on AWS.

Interactive producers can identify actionable opportunities with the AWS Content Analysis solution to analyze video content and generate timed metadata about the people, places, objects, sentiment, scene detection, and audio transcription. AWS Content Analysis uses pre-trained AI/ML services such as Amazon Rekognition, Amazon Transcribe, Amazon Translate, and Amazon Comprehend to provide ready-made intelligence and accelerate editing and production of impactful actionable prompts.

Diagram Summary

- Content providers upload video assets into Amazon S3 using the AWS Content Analysis solution web UI. Alternatively, assets can be uploaded directly to an Amazon S3 bucket by creating an S3 trigger with AWS Lambda to initiate the AWS Content Analysis workflow.

- The AWS Content Analysis workflow is initiated for each asset to analyze content with Amazon Rekognition Video, Amazon Comprehend, Amazon Transcribe, and Amazon Translate. Results are stored as JSON metadata with timestamps in Amazon DynamoDB.

- The interactive producer defines timed actionable prompts for each VOD asset. This work is accelerated by the metadata and timestamps that the AWS AI/ML services in the AWS Content Analysis solution generate when detecting people, places, objects, sentiment, and scenes.

- Persistent actionable metadata is created in the selected format for video delivery. Metadata insertion can be automated using an AWS Lambda function to generate a WebVTT file in the correct format, or to insert embedded ID3 metadata using AWS Elemental MediaConvert.

- Embedded persistent metadata is specified by timestamp and ID3 tag in the job template and inserted during video asset transcode using AWS Elemental MediaConvert.

- Use the AWS Console to create a MediaConvert job specification in JSON format with support for embedded, timed ID3 metadata. The AWS Lambda function can then submit this specification through the MediaConvert API.

- On the “Global processing” page of the MediaConvert console, specify the timestamp and base64 payload of each ID3 tag.

- For HLS outputs, under the “Transport stream settings” of each video output, set “ID3 metadata” to Passthrough and specify a packet identifier (PID) for the ID3 metadata (or leave blank for a default value of 502).

- For DASH or CMAF outputs, under the “CMAF container settings” of each video output, set “ID3 metadata” to Passthrough.

- Fully formed ID3 messages in Base64 format can be generated using an external tool. One option is the ID3 tag generator included in Apple’s HTTP Live Streaming (HLS) tools, which are provided as Unix executables. AWS Lambda can run arbitrary executables in functions to make use of this tool.

- Extra persistent metadata is generated as a WebVTT metadata file using AWS Lambda to query the interactive producer’s time-edited captions for the video asset and generate metadata in the WebVTT file format to save on Amazon S3.

- Video on-demand asset delivery includes VOD assets (HLS, DASH, CMAF) and WebVTT files containing actionable, time-aligned metadata.

- Extra metadata is retrieved from AWS AppSync using a GraphQL API capable of delivering dynamic data for the viewer by connecting data from multiple sources. AWS services for viewer personalization and targeting include Amazon Personalize, Amazon Location Service, and Amazon Pinpoint. The AWS Amplify SDK is optimized to connect easily and securely to AWS AppSync by performing Signature Version 4 request signing.

- The video player listens for cue change, metadata parsed, or time update events and renders actionable prompts on the client app or website UI.

- Actionable VOD assets can also be created when recording an Amazon IVS live stream by using auto-record to S3. A recorded Amazon IVS session will contain embedded metadata that was inserted during the IVS live stream using the Timed Metadata into Amazon IVS

Conclusion

You can accelerate building actionable prompts into your VOD playback experience by using the AWS Content Analysis solution and by selecting the video metadata strategy that supports your desired calls to action for viewer engagement.

Learn more about AWS media services and video analysis solutions

The AWS Content Analysis solution can be installed into your AWS environment within minutes using the one-click AWS CloudFormation template on the AWS Content Analysis solution page.

A video on demand workflow can be installed into your AWS environment within minutes with solutions available at Video On Demand on AWS.

To create VOD assets with embedded metadata, timed ID3 tags can be inserted into the MediaConvert job by using the AWS console. Transcode jobs can be automated through the AWS API. For more details, refer to the MediaConvert API specification and Getting Started with the MediaConvert API.

For Amazon IVS live streams recorded to Amazon S3, learn more about events provided by the Amazon IVS player to parse and render Amazon IVS Timed Metadata.

To build a GraphQL API for extra metadata, get started with the AWS AppSync documentation and Building a Client App with AppSync for front-end web and mobile integration with AWS Amplify.