AWS Partner Network (APN) Blog

How to Automate Cloud Governance to Achieve Safety at Speed

By Matt Tyler, Consultant at Mechanical Rock

Many organizations use AWS Service Catalog to create and manage collections of approved IT services. Cloud services can be restricted to an approved subset that is defined in AWS CloudFormation and distributed to IT staff, and AWS Config is used to assess and audit resources in their AWS environments.

Organizations can take a “compliance-as-code” approach by combining AWS Service Catalog and AWS Config. This is an effective way to maintain governance over a complex cloud environment and is more attractive now due to recent pricing changes to AWS Config.

Compliance-as-code is an alternative to performing a manual audit. It is an automated method of checking that controls are being followed: rules are written in a programming language and run in response to an event. That event could come from a recurring schedule or from a change in the environment.

Mechanical Rock is an AWS Partner Network (APN) Advanced Consulting Partner with the AWS DevOps Competency.

Mechanical Rock uses AWS Service Catalog to distribute common infrastructure patterns that enable development teams to quickly build and deploy modern cloud native applications. We continually improve our patterns using a process we call “Behavior Driven Infrastructure” by applying Behavior Driven Development (BDD) techniques to cloud infrastructure.

In this post, I will demonstrate how to use AWS Config to execute tests against products that are instantiated from AWS Service Catalog. I’ll do this by enabling AWS Config and configuring a custom rule that targets an instance of a product that we will upload to AWS Service Catalog. We will then learn how to view the results of our tests in the AWS Management Console.

Shift-Left with Compliance-as-Code

The last decade has seen the rise of many new software development practices, including BDD and DevOps. A common theme in each is the “shift-left” approach where activities that would have been done later are instead done earlier, often during implementation.

This approach reduces the likelihood a change will need to be made late in development when the cost of doing so is often high. In recognizing that taking a shift-left approach can lower a project’s risk, let’s consider how we can apply this to compliance-as-code.

It’s important to understand that compliance-as-code is basically a testing activity. It can be applied at any stage, whether that’s developing or operating software. Relevant stakeholders should be approached early to define a specification for a new product.

An output of the shift-left approach should be a list of rules that determine what constitutes a compliant design. These rules are translated to AWS Config rules and evaluated to assess the compliance of the product. As an organization’s risk profile changes, these rules may need to be updated to reflect the new conditions. They can also be used to drive products to meet new demands.

Creating a Compliant Product

Ensuring product designs are compliant with organizational policy is a continuous process that begins with the idea of a reusable product that can benefit the business.

Requirements are elicited from various stakeholders, which may include end users and other supporting functions within the organization, such as security. We can then define these requirements in code as a set of rules, which can be executed against the product to validate that it meets expectations.

The product is developed and published to AWS Service Catalog so that developers can consume it as a component of a larger application. We can continue to execute our rules against all instances of a product to provide assurances they are still meeting business requirements.

Additionally, product end users and other stakeholders can provide feedback to improve products, which may result in updates to business rules and improved functionality.

Figure 1 – Visualizing the product development cycle.

Identifying a Product

In this next section of the post, I’ll step through this approach with a concrete example. First, let’s assume the organization gives development teams three accounts. One is to be used for development; another for production. The final account is used for tooling and contains the pipeline used to deploy to the other two accounts.

Our goal is to make it easy to configure pipelines that deploy to these accounts.

Meeting with Stakeholders

Discussions should be held with security staff and the developers who will use the product. Meeting with stakeholders can help you learn, for example, that developers have different levels of access depending on whether the account is for development or production.

Security will likely raise a few concerns, such as that pipelines may be misconfigured and workloads intended for development environments could be prematurely deployed to production. To address this, it can be decided the pipeline account should be configured with a pair of SSM parameters. These can be referenced in any future products to prevent the pipelines from being misconfigured.

In our example, these two parameters are:

- /config/production (for the production account)

- /config/development (for the development account)

There’s an expectation that these parameters do not reference the same account, and you should provide stakeholders with assurances that this is the case.

An appropriate BDD scenario describing this requirement might be written as:

- Given that we have provisioned an account-config product, then the development and production parameters should not have the same value.

Implementation

To get started, we need to write a function that checks that our parameters are configured with unique values.

In this example, we assume the underlying stack for the product exposes parameters as stack outputs.
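A minimal sketch of such a check is shown below. It assumes the stack outputs have already been collected into a dictionary; the output keys `DevelopmentAccount` and `ProductionAccount` are hypothetical names chosen for illustration, since this post does not name them.

```python
from typing import Dict, Optional, Tuple

# Hypothetical output keys: the real product may expose different names.
DEV_OUTPUT_KEY = "DevelopmentAccount"
PROD_OUTPUT_KEY = "ProductionAccount"


def check_unique_accounts(outputs: Dict[str, Optional[str]]) -> Tuple[str, str]:
    """Return (compliance_type, annotation) for an account-config product.

    The compliance types are the string values AWS Config expects in an
    evaluation: "COMPLIANT" or "NON_COMPLIANT".
    """
    dev = outputs.get(DEV_OUTPUT_KEY)
    prod = outputs.get(PROD_OUTPUT_KEY)

    # A missing output means we cannot give any assurance, so fail closed.
    if not dev or not prod:
        return "NON_COMPLIANT", "Development or production output is missing."

    # The BDD scenario: the two parameters must not have the same value.
    if dev == prod:
        return "NON_COMPLIANT", "Development and production reference the same account."

    return "COMPLIANT", "Development and production reference different accounts."
```

Keeping the rule as a pure function that returns a result, rather than calling AWS directly, makes it straightforward to unit test before it is wired into a Lambda handler.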

Next, we need to import our rules into a serverless AWS Lambda handler, which will be invoked when the rule is evaluated.

Our Lambda function will be triggered by any change to our account configuration product. When it is invoked, it receives metadata about the item that triggered it.

Furthermore, a CloudFormation stack’s metadata includes resource names, tags, inputs, and outputs. We can use this metadata to check the state of compliance. Once this is done, we can send the result back to AWS Config.
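The flow described above could be sketched as the handler below. This is an illustration under stated assumptions, not the code from the original post: the `outputs` and `outputValue` field names inside the configuration item are assumptions about how the stack is recorded, the `evaluate` rule is a simplified stand-in, and the optional `config_client` argument is a hypothetical addition that allows the handler to be exercised without AWS access.

```python
import json
from typing import Any, Dict, Tuple


def evaluate(item: Dict[str, Any]) -> Tuple[str, str]:
    """Stand-in rule: the stack's output values must all be distinct."""
    configuration = item.get("configuration") or {}
    # Assumed shape: a list of objects that each carry an "outputValue".
    values = [o.get("outputValue") for o in configuration.get("outputs") or []]
    if len(values) < 2:
        return "NON_COMPLIANT", "Expected at least two stack outputs."
    if len(set(values)) != len(values):
        return "NON_COMPLIANT", "Stack outputs share the same value."
    return "COMPLIANT", "Stack outputs are distinct."


def lambda_handler(event: Dict[str, Any], context: Any, config_client: Any = None) -> Dict[str, Any]:
    # AWS Config passes the invoking event as a JSON-encoded string.
    invoking_event = json.loads(event["invokingEvent"])
    item = invoking_event["configurationItem"]

    # Deleted resources cannot be evaluated, so report them as not applicable.
    if item.get("configurationItemStatus") in ("ResourceDeleted", "ResourceDeletedNotRecorded"):
        compliance_type, annotation = "NOT_APPLICABLE", "Resource was deleted."
    else:
        compliance_type, annotation = evaluate(item)

    evaluation = {
        "ComplianceResourceType": item["resourceType"],
        "ComplianceResourceId": item["resourceId"],
        "ComplianceType": compliance_type,
        "Annotation": annotation[:256],  # annotations are limited to 256 characters
        "OrderingTimestamp": item["configurationItemCaptureTime"],
    }

    if config_client is None:
        import boto3  # imported lazily so the sketch can run in tests without boto3
        config_client = boto3.client("config")

    # Send the result back to AWS Config using the token from the event.
    config_client.put_evaluations(Evaluations=[evaluation], ResultToken=event["resultToken"])
    return evaluation
```

The annotation sent with the evaluation is what later explains why a resource was marked non-compliant, so it is worth writing it for a human reader.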

Now that the rule has been created, we can begin development on the product. This product is relatively simple and could be implemented via the following CloudFormation template, and then distributed to end users via AWS Service Catalog.

Deploy an Example

Now, let’s look at how to deploy all of the components required to create a working example in an AWS account.

We’ll deploy an example product from which we can later provision two instances. We’ll then deploy a Lambda function that contains our test logic, enable AWS Config, and deploy an appropriate rule linked to our Lambda function that executes against instances of our product.

Next, we will provision two instances of our product; one which will fail our tests, and one which will pass our tests. After the two instances of our product have been provisioned, we will learn how to review the results of our rule execution in the AWS Management Console.

Upload the Product Template to a Bucket

The quickest way to do this is to copy the CloudFormation template above into a file, and then upload that file to an Amazon Simple Storage Service (Amazon S3) bucket. Next, get the URL of the file you uploaded because you’ll need to pass it in as a parameter to another stack.

Deploy the AWS Lambda Function

Click the button below to create the Lambda function in your account. This creates the function with the necessary permissions to work with AWS Config, and gives AWS Config permission to invoke the function.

This stack will output the function Amazon Resource Name (ARN), which we’ll need to use later, so make sure to note it down somewhere safe.

Enable AWS Config

Once enabled, AWS Config records changes to items in our account. You can deploy the template to configure everything.

This template configures an AWS Config Recorder, which records any changes that occur to CloudFormation stacks and any AWS Service Catalog resources. If you’ve already configured an AWS Config Recorder, please ensure you are recording configuration changes to CloudFormation and Service Catalog resources.
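For readers who already have a recorder, the check described above could be sketched as follows. The list of resource types is an assumption about what this example needs; the recorder name `default` and the helper names are hypothetical.

```python
from typing import Any, Dict, List

# Resource types this example is assumed to need recorded.
REQUIRED_TYPES: List[str] = [
    "AWS::CloudFormation::Stack",
    "AWS::ServiceCatalog::CloudFormationProduct",
    "AWS::ServiceCatalog::CloudFormationProvisionedProduct",
    "AWS::ServiceCatalog::Portfolio",
]


def covers_required_types(recording_group: Dict[str, Any]) -> bool:
    """Return True if an existing recording group already records what we need."""
    if recording_group.get("allSupported"):
        return True
    recorded = set(recording_group.get("resourceTypes") or [])
    return all(t in recorded for t in REQUIRED_TYPES)


def build_recorder_request(role_arn: str, name: str = "default") -> Dict[str, Any]:
    """Build the keyword arguments for the AWS Config put_configuration_recorder call."""
    return {
        "ConfigurationRecorder": {
            "name": name,
            "roleARN": role_arn,
            "recordingGroup": {
                "allSupported": False,
                "includeGlobalResourceTypes": False,
                "resourceTypes": list(REQUIRED_TYPES),
            },
        }
    }
```

With boto3, the request could be passed as `boto3.client("config").put_configuration_recorder(**build_recorder_request(role_arn))`, and the recording groups of existing recorders can be read from `describe_configuration_recorders()`. A recorder also needs a delivery channel and must be started before it records anything.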

Create the Catalog

Before we can deploy the rule and related triggers, we need to create the product. The CloudFormation template below can be deployed to create a portfolio and associated product. This takes two parameters: PrincipalArn and ProductUrl.

Use your current identity ARN, whether that’s an AWS Identity and Access Management (IAM) role or user. You will also need to use the Amazon S3 URL of the product you uploaded earlier.

After the stack is created, it should have an output called “ProductId”. Make a note, as we’ll use this in the next stack.

Configure the Config Rule

Executing a rule against AWS Service Catalog provisioned products is a little unintuitive. We should not select the provisioned product resource type, because that would execute our rule against every type of product, and we would rather restrict execution to one particular product.
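One way to narrow the scope, offered here as an assumption rather than as the method used in the original post, is to scope the rule by tag. The sketch below relies on the `aws:servicecatalog:productArn` system tag that AWS Service Catalog applies to provisioned resources; the rule name and ARNs in the usage note are placeholders.

```python
from typing import Any, Dict


def build_config_rule(rule_name: str, lambda_arn: str, product_arn: str) -> Dict[str, Any]:
    """Build the ConfigRule argument for the AWS Config put_config_rule call."""
    return {
        "ConfigRuleName": rule_name,
        # Assumption: scope by the Service Catalog system tag so that only
        # resources provisioned from this one product are evaluated.
        "Scope": {
            "TagKey": "aws:servicecatalog:productArn",
            "TagValue": product_arn,
        },
        "Source": {
            "Owner": "CUSTOM_LAMBDA",
            "SourceIdentifier": lambda_arn,
            "SourceDetails": [
                {
                    # Evaluate whenever AWS Config records a change to a resource in scope.
                    "EventSource": "aws.config",
                    "MessageType": "ConfigurationItemChangeNotification",
                }
            ],
        },
    }
```

With boto3, this could be applied as `boto3.client("config").put_config_rule(ConfigRule=build_config_rule("account-config-unique", lambda_arn, product_arn))`. Note that the tag value is the full product ARN, which would need to be derived from the ProductId output noted earlier.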

Provision an Instance of the Product

Now, all we need to do is provision two instances of our product. AWS Config will detect these product instances and evaluate them against our rule. The stack below will create the two product instances.

View the Evaluation of the Rule

Navigate back to the AWS Config console to view the results. It can take up to 30 minutes for the rule to be triggered, so the results may not appear immediately.

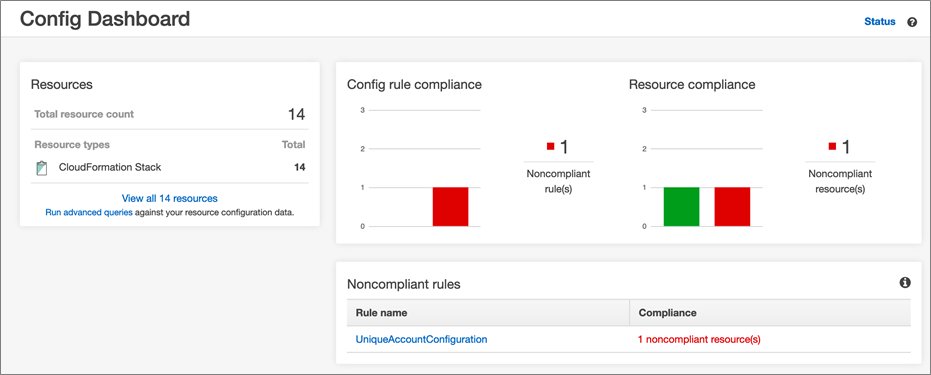

The dashboard should show that one item is compliant, and another is non-compliant.

Figure 2 – Viewing the compliance status of two provisioned products.

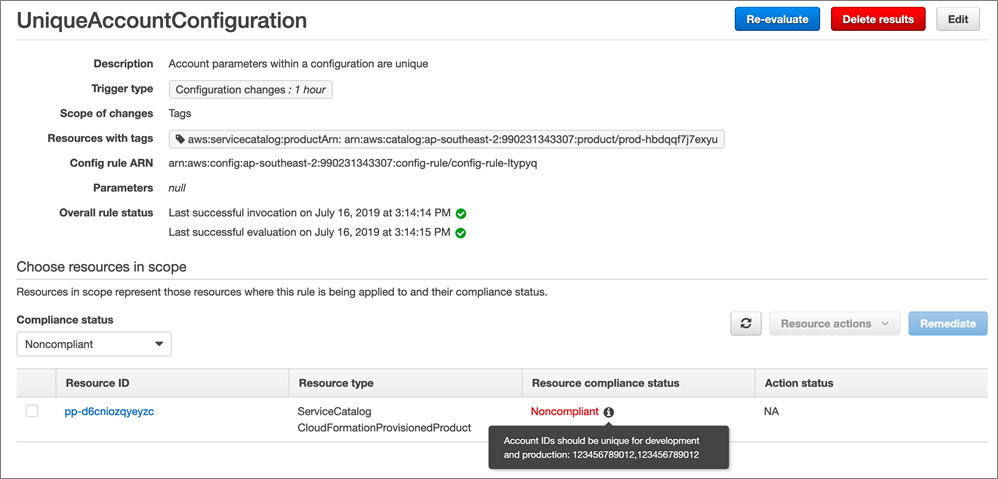

Upon viewing the results, we see that our “fail” product has failed the rule evaluation and is showing as non-compliant. The reason it failed can be shown by hovering over it. Our “passing” version is recorded as compliant.

Figure 3 – Determining why a provisioned product failed the test.
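The same results can also be read programmatically. The sketch below assumes the response shape of the AWS Config `get_compliance_details_by_config_rule` API and reduces it to a mapping from resource ID to compliance status and annotation; the helper name `summarize` is hypothetical.

```python
from typing import Any, Dict, List, Tuple


def summarize(evaluation_results: List[Dict[str, Any]]) -> Dict[str, Tuple[str, str]]:
    """Map each evaluated resource ID to (compliance_type, annotation)."""
    summary: Dict[str, Tuple[str, str]] = {}
    for result in evaluation_results:
        qualifier = result["EvaluationResultIdentifier"]["EvaluationResultQualifier"]
        # The annotation is optional; it carries the reason given by the rule.
        summary[qualifier["ResourceId"]] = (
            result["ComplianceType"],
            result.get("Annotation", ""),
        )
    return summary
```

With boto3, the input could come from `boto3.client("config").get_compliance_details_by_config_rule(ConfigRuleName=rule_name)["EvaluationResults"]`, where `rule_name` is whatever name the rule was deployed with.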

Taking This Further

There are plenty of ways this approach can be expanded on. The product used in our example above is simple but demonstrates how easy it can be to evaluate custom rules against products in AWS Service Catalog.

You could expand this to:

- Interrogate the security headers on the response of an Amazon API Gateway or CloudFront distribution.

- Check if unauthorized requests to a web server are rejected.

- Ensure domains follow your organization’s naming standards.

There are additional features of AWS Config that I have not demonstrated, including:

- Aggregating results back to a central location in order to gain a single view into the compliance status of an organization’s products.

- Collating information on product usage within an organization.

Summary

Compliance-as-code is a great way to provide governance over cloud resources, and AWS Config makes it easy to implement. When this is combined with AWS Service Catalog, sophisticated tests can be created to verify products meet various requirements from stakeholders such as end users, security, risk, compliance, and more.

We identified earlier that taking a “shift-left” approach to compliance is an effective way to ensure products meet organizational requirements. If stakeholders are engaged early in the process, these requirements can be captured in code and used to guide the entire development lifecycle. This helps products continue to meet user expectations and compliance obligations both now and in the future.

We went through an example showing how to encapsulate a product as a CloudFormation template that is distributed through AWS Service Catalog. We looked at how to develop a test that can be executed through AWS Lambda, and how this function can be configured and invoked via AWS Config. Finally, we learned how to review the results of an AWS Config rule execution in the AWS Management Console.

If you’re interested in learning more about authoring custom AWS Config rules, I can recommend investigating the AWS Config Rules Development Kit provided by AWS Labs. Additionally, if you are interested in how to manage AWS Config rules at scale in multi-account, multi-regional deployments, check out AWS Config Engine for Compliance-as-Code.

The content and opinions in this blog are those of the third party author and AWS is not responsible for the content or accuracy of this post.

Mechanical Rock – APN Partner Spotlight

Mechanical Rock is an AWS DevOps Competency Partner whose test-first approach delivers results quickly. They focus on enterprise DevOps, infrastructure modernization, cloud-native application development, and automated data platforms (AI/ML).
