AWS Partner Network (APN) Blog

Developing Payment Card Industry Compliant Solutions on AWS to Protect Customer Data

By Amit Mukherjee, Sr. Solutions Architect at AWS

By Satyajit Das, Director, Digital Practice at Capgemini

By Rohit Sinha, Director, Digital Practice at Capgemini

Financial institutions possess and process highly sensitive data of immense business value. In recent years, regulations such as open banking and data residency laws have required organizations to adapt the systems that store and process this data quickly and often.

In 2019, a central bank mandated a new data residency regulation for the payment industry. Under the regulation, all data containing end-to-end transaction details, as well as information collected, carried, and processed as part of message or payment instructions, must be stored only in the geographic region where it originated.

One of Capgemini’s financial customers is a worldwide credit card provider whose existing data centers are located outside the geographic region covered by the regulation. The customer had no facility in that region that could comply with the new regulation, yet it had to comply within a short time span of three months.

The system had to have a low total cost of ownership (TCO) and efficient ongoing operations, and it needed to be highly available and fault tolerant.

Systems that handle financial data are prime targets for cyber attacks. The Payment Card Industry Data Security Standard (PCI DSS) defines controls that protect cardholder data from being exploited by malicious actors, and because the customer’s system processes and stores this data, it must comply with PCI DSS.

In this post, we’ll explore how Capgemini developed an application to address this customer challenge, and explain how our approach helped the credit card provider comply with PCI DSS.

Capgemini is an AWS Partner Network (APN) Premier Consulting Partner and Managed Service Provider (MSP) with the AWS Financial Services Competency. With a multicultural team of 220,000 people in 40+ countries, Capgemini has more than 6,000 people trained on AWS and 1,500 AWS Certified professionals.

Building the Solution

As we got started on this project, the customer needed four primary capabilities from the solution:

- Receive data from customers securely and store it on the AWS Cloud.

- Process the data and store it in a database.

- Build a visualization platform and run ad hoc queries on the data.

- Implement PCI DSS requirements within a very short time span.

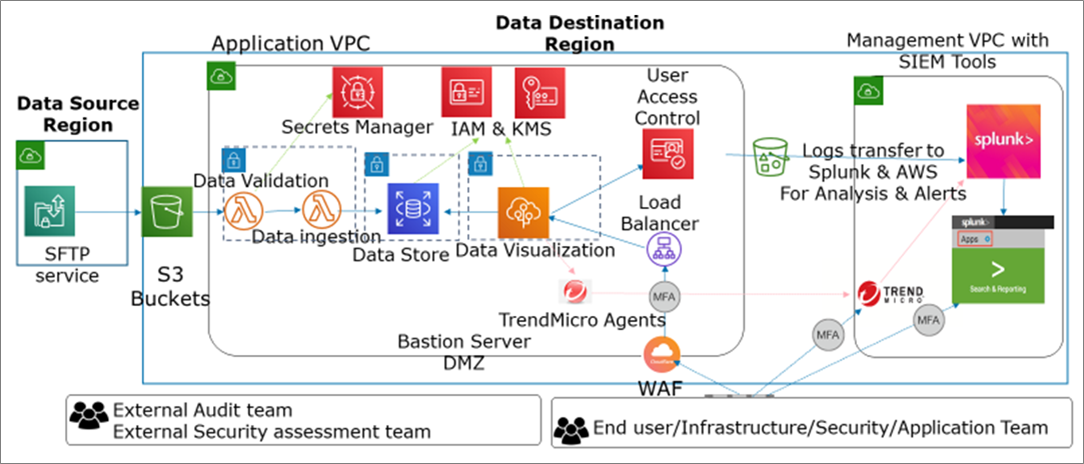

The architecture in Figure 1 represents how we designed the application to provide high reliability, performance efficiency, and fault tolerance.

Figure 1 – High-level solution architecture.

Here are details of the high-level solution architecture:

- Incoming files are pushed securely from the source into Amazon Simple Storage Service (Amazon S3) object storage in the destination AWS Region through AWS Transfer for SFTP.

- Incoming data is validated, encrypted, and ingested into the Amazon Aurora database by an AWS Lambda function.

- The data visualization platform used by end users is deployed to AWS Elastic Beanstalk and serves data based on each user’s access rights.

- All components are deployed in a fault-tolerant and highly available manner across multiple AWS Availability Zones.

- Application requests are distributed using Elastic Load Balancing, and application user access control is managed by Amazon Cognito.

- Access and privileges are strictly controlled using AWS Identity and Access Management (IAM). Data is encrypted in transit and at rest, with the keys for data at rest managed by AWS Key Management Service (KMS).

- Application, infrastructure, and monitoring logs are forwarded to Splunk’s Security Information and Event Management (SIEM) tool for centralized monitoring and event handling.

- Trend Micro is used for security and vulnerability protection, and the application is protected by Cloudflare’s Web Application Firewall (WAF).

- A separate network is created using Amazon Virtual Private Cloud (VPC) to isolate the application and management environments, reducing the blast radius in the event of a security issue.
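The post does not show the validation performed by the Lambda function. The following is a minimal Python sketch of what such a step might check, assuming a hypothetical CSV layout with `txn_id`, `pan`, and `amount` columns; `object_keys`, `luhn_valid`, and `validate_csv` are illustrative names, not the customer’s code. It reads the bucket and key from a standard S3 event notification and splits rows into valid records and error messages. Fetching the object, encryption, and the Aurora insert are deliberately left out.

```python
import csv
import io
from decimal import Decimal, InvalidOperation
from urllib.parse import unquote_plus

# Hypothetical column layout for incoming files.
REQUIRED_COLUMNS = ("txn_id", "pan", "amount")


def object_keys(event: dict) -> list[tuple[str, str]]:
    """Return (bucket, key) pairs from an S3 event notification.

    Object keys arrive URL-encoded in S3 events, so they are decoded here.
    """
    return [
        (rec["s3"]["bucket"]["name"], unquote_plus(rec["s3"]["object"]["key"]))
        for rec in event.get("Records", [])
    ]


def luhn_valid(pan: str) -> bool:
    """Check a primary account number (PAN) with the Luhn checksum.

    The 13-19 digit length bound is an assumption for this sketch.
    """
    if not pan.isdigit() or not 13 <= len(pan) <= 19:
        return False
    total = 0
    for i, ch in enumerate(reversed(pan)):
        digit = int(ch)
        if i % 2 == 1:  # double every second digit, counting from the right
            digit *= 2
            if digit > 9:
                digit -= 9
        total += digit
    return total % 10 == 0


def validate_csv(text: str) -> tuple[list[dict], list[str]]:
    """Split CSV rows into valid records and error messages.

    Error messages carry only the line number, never the PAN itself.
    """
    valid, errors = [], []
    reader = csv.DictReader(io.StringIO(text))
    for line_no, row in enumerate(reader, start=2):  # line 1 is the header
        missing = [c for c in REQUIRED_COLUMNS if not (row.get(c) or "").strip()]
        if missing:
            errors.append(f"line {line_no}: missing {', '.join(missing)}")
            continue
        pan = row["pan"].strip()
        if not luhn_valid(pan):
            errors.append(f"line {line_no}: invalid PAN")
            continue
        try:
            amount = Decimal(row["amount"].strip())
        except InvalidOperation:
            amount = None
        if amount is None or not amount.is_finite() or amount <= 0:
            errors.append(f"line {line_no}: invalid amount")
            continue
        valid.append({"txn_id": row["txn_id"].strip(), "pan": pan, "amount": amount})
    return valid, errors
```

Keeping the PAN out of error messages matters because those messages end up in the logs that are shipped to the SIEM.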

Ensuring Security to Comply with PCI Requirements

Here are high-level objectives of PCI DSS compliance, and an overview of how Capgemini implemented each for the customer’s application.

Building and Maintaining a Secure Network and Systems

Data in the cloud is protected by firewalls around AWS resources. Access to data is restricted to traffic to and from a restricted VLAN in the Capgemini office, and changes to both Capgemini and AWS firewall rules are subject to strict governance.
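One way to keep that governance auditable is to check firewall rules automatically. This is a minimal Python sketch that assumes a simplified, hypothetical rule format (`{"port": ..., "cidr": ...}`) and a placeholder office VLAN range of `10.20.30.0/24`. It flags any ingress rule whose source is not inside an approved network.

```python
import ipaddress

# Placeholder for the restricted office VLAN; substitute the real range(s).
ALLOWED_SOURCES = [ipaddress.ip_network("10.20.30.0/24")]


def noncompliant_rules(rules: list[dict]) -> list[dict]:
    """Return ingress rules whose source CIDR falls outside every allowed network."""
    flagged = []
    for rule in rules:
        source = ipaddress.ip_network(rule["cidr"], strict=False)
        inside = any(
            # subnet_of() raises TypeError across IPv4/IPv6, so compare versions first.
            source.version == allowed.version and source.subnet_of(allowed)
            for allowed in ALLOWED_SOURCES
        )
        if not inside:
            flagged.append(rule)
    return flagged


rules = [
    {"port": 443, "cidr": "10.20.30.0/26"},  # inside the VLAN: compliant
    {"port": 22, "cidr": "0.0.0.0/0"},       # open to the internet: flagged
    {"port": 443, "cidr": "::/0"},           # IPv6 anywhere: flagged
]
for rule in noncompliant_rules(rules):
    print(f"Non-compliant rule: port {rule['port']} from {rule['cidr']}")
```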

A password rotation policy is in place for added security, and vendor-supplied default passwords are changed before any configuration is deployed to production.
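Both checks can be automated before a release. This is a minimal Python sketch. The 90-day rotation period and the short denylist of vendor defaults are illustrative assumptions, and the configuration keys are hypothetical.

```python
from datetime import datetime, timedelta

# Assumed rotation period; set this from the organization's password policy.
MAX_PASSWORD_AGE = timedelta(days=90)
# Hypothetical, deliberately short denylist of vendor-supplied defaults.
VENDOR_DEFAULTS = {"admin", "password", "changeme", "default", "root"}


def rotation_overdue(last_rotated: datetime, now: datetime,
                     max_age: timedelta = MAX_PASSWORD_AGE) -> bool:
    """True if a password is older than the allowed rotation period."""
    return now - last_rotated > max_age


def default_credentials(config: dict) -> list[str]:
    """Return password-like config keys that still hold a known vendor default."""
    return sorted(
        key for key, value in config.items()
        if "password" in key.lower() and str(value).strip().lower() in VENDOR_DEFAULTS
    )


release_config = {"db_user": "admin", "db_password": "changeme", "api_password": "x9!Qz-7Lp"}
blocked = default_credentials(release_config)
if blocked:
    print("Blocked: vendor default values in", ", ".join(blocked))
```

In practice the values would be read from a secrets store at check time rather than held in a plain dictionary.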

Protecting Cardholder Data

Cardholder data is encrypted at rest and in transit. At rest, PCI data is masked using a non-reversible keyed hash. Capgemini employed a zero-touch process for creating security keys, eliminating vulnerabilities that could be introduced by human intervention.
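The post does not name the hashing scheme. This is a minimal Python sketch that assumes HMAC-SHA256 as the keyed, one-way function. A plain, unkeyed hash of a PAN is risky because the space of valid card numbers is small enough to brute-force. An HMAC digest cannot be recomputed without the secret key. `tokenize_pan` and `mask_pan` are illustrative names. The display mask uses the first-six/last-four format commonly applied under PCI DSS.

```python
import hashlib
import hmac
import secrets


def tokenize_pan(pan: str, key: bytes) -> str:
    """Return a non-reversible, keyed digest (HMAC-SHA256) of a PAN.

    The same PAN and key always give the same token, so records can still be
    joined on it, but the PAN cannot be recovered from the token.
    """
    return hmac.new(key, pan.encode("ascii"), hashlib.sha256).hexdigest()


def mask_pan(pan: str) -> str:
    """Display form that keeps at most the first six and last four digits."""
    if len(pan) < 13:
        raise ValueError("unexpected PAN length")
    return pan[:6] + "*" * (len(pan) - 10) + pan[-4:]


# Demo only: a random key. In the described system the key would come from
# the zero-touch key-creation process, never from source code.
demo_key = secrets.token_bytes(32)
token = tokenize_pan("4111111111111111", demo_key)
print(mask_pan("4111111111111111"), token[:16])
```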

Cloudflare’s WAF was deployed to inspect and secure data transmitted over open, public networks.

Maintaining a Vulnerability Management Program

A comprehensive vulnerability management program was designed and deployed. To protect against malware and other threats, periodic internal and external vulnerability scans, penetration tests, and anti-virus definition updates were put in place.

All components are kept up to date with the latest security patches. Portions of this program were automated using a continuous integration and continuous deployment (CI/CD) pipeline, through which code reviews and Open Web Application Security Project (OWASP) secure coding guidelines were enforced.
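A pipeline stage that blocks a release on serious findings could look like this minimal Python sketch. The report format (a JSON object with a `findings` list whose items carry `id` and `severity`) and the `HIGH` threshold are assumptions. Real scanners each have their own output schema.

```python
import json
import sys

SEVERITY_RANK = {"LOW": 1, "MEDIUM": 2, "HIGH": 3, "CRITICAL": 4}


def blocking_findings(report_json: str, threshold: str = "HIGH") -> list[dict]:
    """Return findings at or above the threshold severity."""
    limit = SEVERITY_RANK[threshold]
    findings = json.loads(report_json).get("findings", [])
    return [
        f for f in findings
        if SEVERITY_RANK.get(str(f.get("severity", "")).upper(), 0) >= limit
    ]


def gate(report_json: str) -> int:
    """Return a process exit code: 1 fails the pipeline stage, 0 lets it pass."""
    blocking = blocking_findings(report_json)
    for f in blocking:
        print(f"BLOCKING {f['severity']}: {f.get('id', '?')}", file=sys.stderr)
    return 1 if blocking else 0
```

A CI step would read the scanner’s report file and call `sys.exit(gate(report_text))`, so any blocking finding fails the build before deployment.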

Implementing Strong Access Control Measures

Authorization to access cardholder data is granted based on business justification (need to know). Additionally, access to systems that handle cardholder data was granted virtually and only for a limited time.
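Need-to-know, time-limited access can be modeled as grants that carry a justification and an expiry. This is a minimal Python sketch. The `AccessGrant` type, the four-hour default lifetime, and the resource names are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class AccessGrant:
    user: str
    resource: str
    justification: str  # the business need-to-know, kept for audit
    expires_at: datetime


def grant_access(user: str, resource: str, justification: str, now: datetime,
                 ttl: timedelta = timedelta(hours=4)) -> AccessGrant:
    """Issue a grant only when a business justification is supplied."""
    if not justification.strip():
        raise ValueError("a business justification is required")
    return AccessGrant(user, resource, justification, now + ttl)


def is_authorized(grants: list[AccessGrant], user: str, resource: str,
                  now: datetime) -> bool:
    """Allow access only through an unexpired grant for this user and resource."""
    return any(
        g.user == user and g.resource == resource and now < g.expires_at
        for g in grants
    )
```

Because access lapses automatically when the grant expires, no one has to remember to revoke it.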

Every access request is authenticated and authorized, and user actions are logged to provide an audit trail. Multi-factor authentication (MFA) is employed to reduce reliance on a single password, and strict password rules and a rotation policy further strengthen security.
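The post does not say how MFA was enforced. For AWS console and API access, one common approach is an IAM policy that explicitly denies requests made without MFA. It uses the `aws:MultiFactorAuthPresent` condition key with `BoolIfExists`, so requests signed with long-term access keys, where the key is absent, are denied too. This is a minimal Python sketch that builds such a policy. The database user ARN is a placeholder. A production policy would typically also exempt the IAM actions users need to enroll their own MFA device.

```python
import json


def mfa_enforced_policy(actions: list[str], resources: list[str]) -> dict:
    """Grant need-to-know actions, and deny everything else unless MFA is present."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AllowNeedToKnowAccess",
                "Effect": "Allow",
                "Action": actions,
                "Resource": resources,
            },
            {
                # An explicit deny overrides any allow. BoolIfExists also matches
                # when the key is missing, e.g. requests made with long-term keys.
                "Sid": "DenyWithoutMFA",
                "Effect": "Deny",
                # Exempted so users can still obtain MFA-backed session credentials.
                "NotAction": ["sts:GetSessionToken"],
                "Resource": "*",
                "Condition": {"BoolIfExists": {"aws:MultiFactorAuthPresent": "false"}},
            },
        ],
    }


# Placeholder ARN for IAM database authentication to an Aurora cluster user.
policy = mfa_enforced_policy(
    ["rds-db:connect"],
    ["arn:aws:rds-db:REGION:111122223333:dbuser:cluster-EXAMPLE/analyst"],
)
print(json.dumps(policy, indent=2))
```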

Regularly Monitoring and Testing Networks

Capgemini’s application was designed to prevent data from being downloaded in any form, and there is no physical access to data on AWS.

The system that’s used to access cardholder data was secured by disabling wireless, printer, mail, and instant messaging access. Traffic from this secure system was allowed only to AWS and the host application.

All network and application access is monitored and analyzed 24×7, and an alerting system notifies the responsible team so that issues can be remediated.
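The actual detections ran in Splunk and are not described. To illustrate the kind of rule such monitoring applies, here is a minimal Python sketch of a sliding-window alert on repeated failed logins. The threshold of five failures in ten minutes is an assumption, and events are assumed to arrive in time order for each user.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta


class FailedLoginMonitor:
    """Signal an alert when one user exceeds a failure threshold within a time window."""

    def __init__(self, threshold: int = 5, window: timedelta = timedelta(minutes=10)):
        self.threshold = threshold
        self.window = window
        self._failures: dict[str, deque] = defaultdict(deque)

    def record_failure(self, user: str, at: datetime) -> bool:
        """Record one failed login; return True if the user should be alerted on."""
        recent = self._failures[user]
        recent.append(at)
        # Drop failures that have fallen out of the sliding window.
        while recent and at - recent[0] > self.window:
            recent.popleft()
        return len(recent) >= self.threshold
```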

Maintaining an Information Security Policy

Policies are created and operationalized to identify roles and responsibilities of each person involved. These policies are maintained and kept up-to-date based on evolving needs of the business.

Achieving Operational Excellence

Implementing operational excellence helps organizations understand system state and trace transactions. It also helps reduce defects, ease remediation, and improve the flow of changes into production.

Here’s how we mitigated deployment risks and ensured readiness to support a workload by deploying small improvements incrementally:

- Perform operations-as-code for the control plane and management plane. Capgemini used infrastructure-as-code (IaC) with AWS CloudFormation, Cloud Custodian, and Splunk to implement comprehensive DevSecOps. This allows the whole system to be recreated automatically, without manual intervention, in the event of a disaster.

- Make frequent, small, reversible changes. We used CI/CD pipelines for the application code as well as for the control plane and management plane. This lets us deploy small, reversible, incremental changes and roll them back quickly if a deployment issue occurs.

- Refine operations procedures frequently. Capgemini created playbooks and runbooks to handle failure and error scenarios efficiently, and to automate them where possible.

- Anticipate failure. Service Integration and Management (SIAM) is used to monitor the system and raise alerts. Most issues are auto-healed, and the rest are handled by the team that supports the system 24×7.

- Learn from all operational failures. We documented new runbooks for each failure and created playbooks from repeated failures to automate recovery or recover quickly from failure scenarios.
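As a small illustration of operations-as-code, this Python sketch generates a CloudFormation template for an S3 landing bucket with KMS default encryption, versioning, and public access blocked. The logical ID `LandingBucket` and the `KmsKeyArn` parameter are hypothetical. The resource properties are standard `AWS::S3::Bucket` properties. Because the template is produced from code, the same definition can recreate the bucket in any environment.

```python
import json


def landing_bucket_template() -> dict:
    """Build a CloudFormation template for an encrypted, private S3 bucket."""
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Parameters": {
            "KmsKeyArn": {
                "Type": "String",
                "Description": "ARN of the KMS key used for default encryption",
            }
        },
        "Resources": {
            "LandingBucket": {
                "Type": "AWS::S3::Bucket",
                "Properties": {
                    "BucketEncryption": {
                        "ServerSideEncryptionConfiguration": [
                            {
                                "ServerSideEncryptionByDefault": {
                                    "SSEAlgorithm": "aws:kms",
                                    "KMSMasterKeyID": {"Ref": "KmsKeyArn"},
                                }
                            }
                        ]
                    },
                    "VersioningConfiguration": {"Status": "Enabled"},
                    "PublicAccessBlockConfiguration": {
                        "BlockPublicAcls": True,
                        "BlockPublicPolicy": True,
                        "IgnorePublicAcls": True,
                        "RestrictPublicBuckets": True,
                    },
                },
            }
        },
    }


if __name__ == "__main__":
    print(json.dumps(landing_bucket_template(), indent=2))
```

You could save the output to a file and deploy it with `aws cloudformation deploy --template-file landing-bucket.json --stack-name <stack> --parameter-overrides KmsKeyArn=<key-arn>`. Rerunning the command after a small change applies only that change. By default, CloudFormation rolls back a failed stack update.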

Designing for Reliability

Designing applications for reliability helps critical components withstand failures, and it gives less critical components a simple recovery process with a shorter mean time to recover (MTTR).
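The standard steady-state availability formula shows why a shorter MTTR matters. Here MTBF is the mean time between failures. The numbers below are illustrative only, not measurements from this system.

```latex
A = \frac{\mathrm{MTBF}}{\mathrm{MTBF} + \mathrm{MTTR}}

\mathrm{MTBF} = 720\,\mathrm{h},\ \mathrm{MTTR} = 1\,\mathrm{h}: \quad A = \frac{720}{721} \approx 0.99861

\mathrm{MTBF} = 720\,\mathrm{h},\ \mathrm{MTTR} = 0.1\,\mathrm{h}: \quad A = \frac{720}{720.1} \approx 0.99986
```

Cutting MTTR by a factor of ten raises this component’s availability from roughly 99.86% to roughly 99.986%, even though it fails just as often.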

Most system components are designed to be fault tolerant and to recover automatically from faults. System or component issues are detected through SIAM, and the failed component is either replaced with a new one or recovered using automated scripts.
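The replace-on-failure behavior can be sketched as a reconciliation step that a monitoring hook might run. This is a minimal Python sketch. `Instance`, `launch`, and `terminate` are hypothetical stand-ins for whatever the automated scripts actually call.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Instance:
    instance_id: str
    healthy: bool


def reconcile(instances: list[Instance], desired: int,
              launch: Callable[[], Instance],
              terminate: Callable[[str], None]) -> list[Instance]:
    """Terminate unhealthy instances and launch replacements up to the desired count."""
    healthy = [i for i in instances if i.healthy]
    for failed in (i for i in instances if not i.healthy):
        terminate(failed.instance_id)
    while len(healthy) < desired:
        healthy.append(launch())
    return healthy
```

Managed features such as Amazon EC2 Auto Scaling health-check replacement implement the same idea. A hand-written loop like this one is mainly useful for components those features do not cover.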

System components also recover automatically from failure by virtue of IaC: they can be recreated in the event of a disaster, keeping the system reliable.

Lessons Learned

The on-demand nature of PCI-compliant cloud services helped Capgemini assemble the components of the customer’s system. An initial investment in infrastructure-as-code and DevSecOps goes a long way toward building systems incrementally and repeatably across multiple environments.

Traditional ways of building systems need to be challenged to gain agility, ease of operationalization, and cost efficiency. Automation helps reduce faults in the system and achieve the high service level agreements (SLAs) that customers expect.

Furthermore, performance testing and tuning help build an efficient system. Security needs continuous focus, because new ways of exploiting systems keep evolving. Cost management is an ongoing process that can benefit from adopting new and innovative services as they become available.

Summary

Capgemini helped the customer, a worldwide credit card provider, solve its challenges by building an application on AWS that complies with PCI DSS. We developed a reliable, secure application architecture that met all functional and non-functional requirements.

To comply with PCI DSS, we secured the network, application components, and data, and implemented processes, policies, and programs to keep them protected.

Capgemini – APN Partner Spotlight

Capgemini is an APN Premier Consulting Partner with the AWS Financial Services Competency. With a multicultural team of 220,000 people in 40+ countries, Capgemini has more than 6,000 people trained on AWS and 1,500 AWS Certified professionals.
