AWS Database Blog

Use Amazon ElastiCache for Redis as a near-real-time feature store

Customers often use Amazon ElastiCache for real-time transactional and analytical use cases. It provides high throughput and low latency while meeting a variety of business needs. Because it uses in-memory data structures, typical use cases include database and session caching, as well as leaderboards, gaming and financial trading platforms, social media, and sharing economy apps.

Incorporating ElastiCache alongside AWS Lambda and Amazon SageMaker batch processing provides an end-to-end architecture to develop, update, and consume custom-built recommendations for each of your customers.

In this post, we walk through a use case in which we set up SageMaker to develop and generate personalized product and media recommendations, trigger machine learning (ML) inference in batch mode, store the recommendations in Amazon Simple Storage Service (Amazon S3), and use Amazon ElastiCache for Redis to quickly return recommendations to app and web users. In effect, ElastiCache stores ML features that are computed asynchronously in batch. Lambda functions are the architecture glue that connects individual users to the newest recommendations while balancing cost, performance efficiency, and reliability.

Use case

In our use case, we need to develop personalized recommendations that don’t need to be updated very frequently. We can use SageMaker to develop an ML-driven set of recommendations for each customer in batch mode (every night, or every few hours), and store the individual recommendations in an S3 bucket.

For applications with strict latency requirements, an in-memory data store provides access to data with sub-millisecond latency. In our use case, a Lambda function fetches the precomputed recommendations as key-value data when a customer logs on to the application or website, so the application can deliver relevant ML-driven recommendations without disrupting the user experience.

Architecture overview

The following diagram illustrates our architecture for accessing ElastiCache for Redis using Lambda.

The architecture contains the following steps:

- SageMaker trains a custom recommendation model for customer web sessions.

- ML batch processing generates nightly recommendations.

- User predictions are stored in Amazon S3 as a JSON file.

- A Lambda function populates predictions from Amazon S3 to ElastiCache for Redis.

- A second Lambda function gets predictions based on user ID and prediction rank from ElastiCache for Redis.

- Amazon API Gateway invokes Lambda with the user ID and prediction rank.

- The user queries API Gateway to get more recommendations by providing the user ID and prediction rank.
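The S3-to-Redis step can be sketched in a few lines of Python. This is a minimal sketch, not the code from the sample repository: it assumes each JSON record has hypothetical `userId`, `rank`, and `item` fields, and it stores one Redis hash per user under a hypothetical `recommendations:{userId}` key, with one hash field per rank. The clients are passed in, so `s3_client` could be `boto3.client("s3")` and `redis_client` a redis-py client such as `redis.cluster.RedisCluster`.

```python
import json
from collections import defaultdict
from typing import Any, Dict, Iterable


def recommendation_key(user_id: Any) -> str:
    # Hypothetical key layout: one Redis hash per user, one hash field per rank.
    return f"recommendations:{user_id}"


def group_by_user(records: Iterable[Dict[str, Any]]) -> Dict[str, Dict[str, str]]:
    """Turn flat records like {"userId": 1, "rank": 1, "item": "..."} into {key: {rank: item}}."""
    grouped: Dict[str, Dict[str, str]] = defaultdict(dict)
    for record in records:
        grouped[recommendation_key(record["userId"])][str(record["rank"])] = str(record["item"])
    return dict(grouped)


def load_predictions(s3_client, redis_client, bucket: str, key: str) -> int:
    """Copy batch predictions from S3 into Redis and return how many users were written."""
    # s3_client is assumed to behave like boto3.client("s3").
    body = s3_client.get_object(Bucket=bucket, Key=key)["Body"].read()
    grouped = group_by_user(json.loads(body))
    # A non-transactional pipeline batches the HSET commands to cut network round trips.
    pipe = redis_client.pipeline(transaction=False)
    for redis_key, ranks in grouped.items():
        pipe.hset(redis_key, mapping=ranks)
    pipe.execute()
    return len(grouped)
```

Keeping one hash per user means a lookup by user ID and rank is a single HGET on one key, and all of a user's recommendations land in the same hash slot when cluster mode is enabled.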

Prerequisites

To deploy the solution in this post, you need the following:

- The AWS Command Line Interface (AWS CLI) configured. For instructions, see Installing, updating, and uninstalling the AWS CLI version 2.

- The AWS Serverless Application Model (AWS SAM) CLI installed. For instructions, see Install the AWS SAM CLI.

- Python 3.7 installed.

Solution deployment

To deploy the solution, you complete the following high-level steps:

- Prepare the data using SageMaker.

- Access recommendations using ElastiCache for Redis.

Prepare the data using SageMaker

For instructions to train a custom recommendation engine, see Building a customized recommender system in Amazon SageMaker. After you complete the setup, you get a list of model predictions. Save these predictions as a JSON file named batchpredictions.json and upload it to an S3 bucket. Copy the ARN of this bucket to use later in this post.
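If you generate batchpredictions.json yourself, the upload step might look like the following minimal sketch. It assumes the model output has already been reduced to a dictionary that maps each user ID to a best-first list of item names. The record fields (`userId`, `rank`, `item`) are a hypothetical format, not necessarily the layout of the downloadable file.

```python
import json
from typing import Dict, List


def to_records(top_items: Dict[int, List[str]]) -> List[dict]:
    """Flatten {userId: [best, second, ...]} into records where rank 1 is the best item."""
    return [
        {"userId": user_id, "rank": rank, "item": item}
        for user_id, items in top_items.items()
        for rank, item in enumerate(items, start=1)
    ]


def upload_predictions(s3_client, bucket: str, top_items: Dict[int, List[str]],
                       key: str = "batchpredictions.json") -> str:
    """Serialize the ranked predictions to JSON and upload them; returns the S3 URI."""
    body = json.dumps(to_records(top_items)).encode("utf-8")
    # put_object is a standard boto3 S3 client call; s3_client could be boto3.client("s3").
    s3_client.put_object(Bucket=bucket, Key=key, Body=body, ContentType="application/json")
    return f"s3://{bucket}/{key}"
```

For example, `upload_predictions(boto3.client("s3"), "my-bucket", {1: ["Movie A", "Movie B"]})` would write two records for user 1; the bucket name here is a placeholder.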

If you want to skip this SageMaker setup, you can also download the batchpredictions.json file.

Access recommendations using ElastiCache for Redis

In this section, you create the following resources using the AWS SAM CLI:

- An AWS Identity and Access Management (IAM) role to provide required permissions for Lambda

- An API Gateway to provide access to user recommendations

- An ElastiCache for Redis cluster with cluster mode enabled to store and retrieve movie recommendations

- A gateway VPC endpoint for Amazon S3

- The PutMovieRecommendationsLambda function to fetch the movie predictions from the S3 file and insert them into the cluster

- The GetMovieRecommendationsLambda function to integrate with API Gateway and return recommendations based on user ID and rank
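The request path of a function like GetMovieRecommendationsLambda can be sketched as follows. This is an illustrative sketch rather than the sample's implementation: it assumes an API Gateway Lambda proxy integration (query parameters arrive in `event["queryStringParameters"]`) and a hypothetical layout of one Redis hash per user, named `recommendations:{userId}`, with one field per rank. The Redis client is passed in so the lookup logic can be exercised without a cluster.

```python
import json
from typing import Any, Dict


def _response(status: int, payload: Dict[str, Any]) -> Dict[str, Any]:
    # Response shape expected by an API Gateway Lambda proxy integration.
    return {
        "statusCode": status,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps(payload),
    }


def get_recommendation(event: Dict[str, Any], redis_client) -> Dict[str, Any]:
    """Look up one recommendation by userId and rank from the query string."""
    # queryStringParameters is None when the request has no query string.
    params = event.get("queryStringParameters") or {}
    user_id, rank = params.get("userId"), params.get("rank")
    if not user_id or not rank or not rank.isdigit():
        return _response(400, {"error": "userId and a numeric rank are required"})
    # Hypothetical key layout: one hash per user, one field per rank.
    item = redis_client.hget(f"recommendations:{user_id}", rank)
    if item is None:
        return _response(404, {"error": "no recommendation found"})
    if isinstance(item, bytes):  # redis-py returns bytes unless decode_responses=True
        item = item.decode("utf-8")
    return _response(200, {"userId": user_id, "rank": int(rank), "item": item})
```

In a deployed function, `lambda_handler(event, context)` would call `get_recommendation(event, client)` with a Redis client created once, outside the handler.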

Run the following commands to deploy the application into your AWS account.

- Run

sam init --location https://github.com/aws-samples/amazon-elasticache-samples.git --no-inputto download the solution code from the aws-samples GitHub repo. - Run

cd lambda-feature-storeto navigate to code directory. - Run

sam buildto build your package. - Run

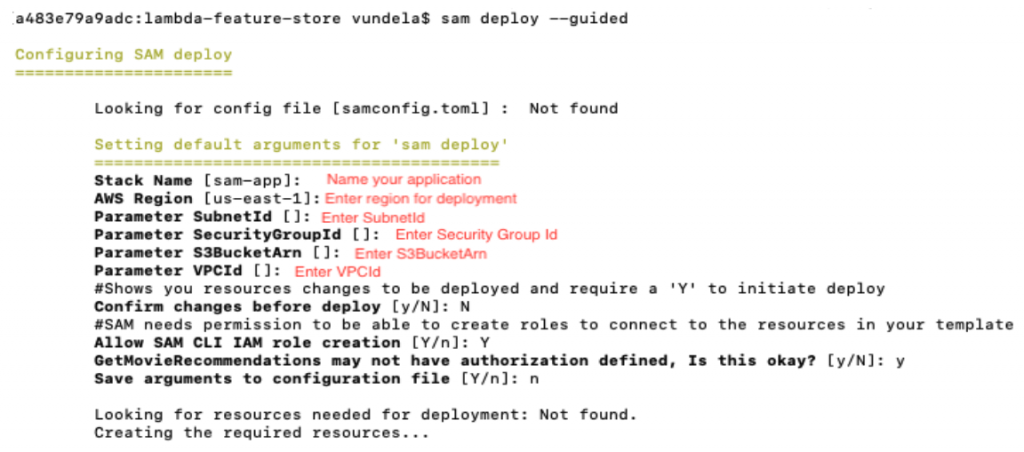

sam deploy --guidedto deploy the packaged template to your AWS account.

The following screenshot shows an example of your output.

Test your solution

To test your solution, complete the following steps:

- Run the PutMovieRecommendationsLambda function to put movie recommendations in the Redis cluster.

- Copy your API's invoke URL, enter it in a web browser, and append `?userId=1&rank=1` to it (for example, `https://12345678.execute-api.us-west-2.amazonaws.com?userId=1&rank=1`).

You should receive a result like the following:

Monitor the Redis cluster

By default, Amazon CloudWatch provides metrics to monitor your Redis cluster. On the CloudWatch console, choose Metrics in the navigation pane and open the ElastiCache metrics namespace to filter by your cluster name. You should see all the metrics provided for your Redis cluster.

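Monitoring metrics and creating alarms on them can help you detect and prevent issues. For example, each ElastiCache for Redis node supports a maximum of 65,000 simultaneous client connections. An alarm on the NewConnections metric, which counts the connections a node accepted during the period, can warn you when clients open connections faster than expected, well before the node approaches that limit.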

- In the navigation pane on the CloudWatch console, choose Alarms.

- Choose Create Alarm.

- Choose Select Metric and filter the metrics by NewConnections.

- Under ElastiCache > Cache Node Metrics, select the Redis cluster you created.

- Choose Select metric.

- Under Graph attributes, for Statistic, choose Maximum.

- For Period, choose 1 minute.

- Under Conditions, define the threshold as 1000.

- Leave the remaining settings at their default and choose Next.

- Enter an email list to get notifications and continue through the steps to create an alarm.
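The same alarm can be created programmatically with the CloudWatch PutMetricAlarm API. The following sketch builds the arguments for boto3's `put_metric_alarm` to mirror the console steps above (Maximum statistic, 1-minute period, threshold of 1,000); the comparison operator and single evaluation period are assumed to match the console defaults. The cluster and node IDs and the SNS topic ARN are placeholders, and unlike the console's email list, the API expects an existing Amazon SNS topic to notify. With cluster mode enabled, each node typically reports under its own CacheClusterId, such as `my-cluster-0001-001`.

```python
from typing import Any, Dict


def new_connections_alarm(cluster_id: str, node_id: str, topic_arn: str,
                          threshold: float = 1000) -> Dict[str, Any]:
    """Keyword arguments for CloudWatch PutMetricAlarm on one node's NewConnections."""
    return {
        "AlarmName": f"{cluster_id}-{node_id}-NewConnections",
        "Namespace": "AWS/ElastiCache",
        "MetricName": "NewConnections",
        "Dimensions": [
            {"Name": "CacheClusterId", "Value": cluster_id},
            {"Name": "CacheNodeId", "Value": node_id},
        ],
        "Statistic": "Maximum",
        "Period": 60,  # 1 minute, in seconds
        "EvaluationPeriods": 1,
        "Threshold": threshold,
        "ComparisonOperator": "GreaterThanThreshold",
        "AlarmActions": [topic_arn],  # SNS topic whose subscribers receive the notification
    }


def create_alarm(cloudwatch_client, cluster_id: str, node_id: str, topic_arn: str) -> None:
    # cloudwatch_client is assumed to be boto3.client("cloudwatch").
    cloudwatch_client.put_metric_alarm(**new_connections_alarm(cluster_id, node_id, topic_arn))
```

A call such as `create_alarm(boto3.client("cloudwatch"), "my-cluster-0001-001", "0001", topic_arn)` would then create one alarm per node; repeat it for each node you want to watch.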

As a best practice, your applications should reuse existing connections rather than pay the overhead of opening a new connection for each request. Redis client libraries support connection pooling, which lets you draw from a pool of open connections instead of creating a new one.
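In Lambda, a common way to follow this practice is to create the client outside the handler so that warm invocations reuse it, together with the connection pool that redis-py clients maintain internally. The following minimal sketch shows the pattern with a caller-supplied factory; the commented usage assumes redis-py 4.1 or later and a hypothetical `REDIS_HOST` environment variable.

```python
from typing import Any, Callable, Optional

# Module-level state survives between invocations in a warm Lambda execution environment.
_client: Optional[Any] = None


def get_client(factory: Callable[[], Any]) -> Any:
    """Create the Redis client once per execution environment and reuse it afterwards."""
    global _client
    if _client is None:
        _client = factory()
    return _client


# Hypothetical usage inside a handler (redis-py >= 4.1, cluster mode enabled):
#   import os
#   from redis.cluster import RedisCluster
#
#   def lambda_handler(event, context):
#       client = get_client(lambda: RedisCluster(host=os.environ["REDIS_HOST"], port=6379))
#       return client.hget("recommendations:1", "1")
```

Only a cold start pays the connection setup cost; later invocations in the same environment reuse the existing client.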

For more information about monitoring, see Monitoring best practices with Amazon ElastiCache for Redis using Amazon CloudWatch.

Clean up your resources

You can now delete the resources that you created for this post. By deleting AWS resources that you’re no longer using, you prevent unnecessary charges to your AWS account. To delete the resources, delete the stack via the AWS CloudFormation console.

Conclusion

In this post, we demonstrated how ElastiCache can serve as the focal point for a custom-trained ML model to present recommendations to app and web users. We used Lambda functions to facilitate the interactions between ElastiCache for Redis and Amazon S3 as well as between the front end and a custom-built ML recommendation engine.

For use cases that require a more robust set of features that leverage a managed ML service, you may want to consider Amazon Personalize. For more information, see Amazon Personalize Features.

For more details about configuring event sources and examples, see Using AWS Lambda with other services. To receive notifications on the performance of your ElastiCache cluster, you can configure Amazon Simple Notification Service (Amazon SNS) notifications for your CloudWatch alarms. For more information about ElastiCache features, see Amazon ElastiCache Documentation.

About the author

Kalhan Vundela is a Software Development Engineer who is passionate about identifying and developing solutions to solve customer challenges. Kalhan enjoys hiking, skiing, and cooking.
