AWS Public Sector Blog

Using advanced analytics to accelerate problem solving in the public sector

Organizations across the globe are using advanced analytics and data science to make predictions and inform decisions. They are finding ways to use their diverse data stores to predict the best place to put their next retail store, what products to recommend to customers, how many employees they need for peak hours of operation, and how long a piece of machinery has until it needs maintenance. Public sector organizations in government, education, nonprofit, and healthcare are looking to use data to advance their missions too.

Expanding and improving government response through analytics

Advanced and predictive analytics are being applied in a range of areas including fraud detection, security, safety, healthcare, and disaster response. In the recent article, “Anticipatory government: Preempting problems through predictive analytics,” AWS Partner Network (APN) Premier Consulting Partner Deloitte highlighted examples of analytics at work in the public sector including improving natural disaster readiness, fighting human trafficking, predicting cyberattacks, and preventing child abuse and fatalities. According to the article, 34% of the chief data officers in the US government use predictive modeling.

Cities and governments across the globe are using predictive analytics to anticipate natural disasters like flooding and align resources for them. Transportation authorities are using advanced analytics to improve traffic flow and detect potential flight risks. Federal tax agencies are using big data and predictive analytics to identify tax evaders and improve compliance. Other government agencies are using predictive modeling to identify children at risk of abuse and mistreatment.

Analytics deployments are well suited to the cloud because they are data intensive. Cloud services can support growing pools of data more cost-effectively and offer improved business continuity for mission-critical data. Database and data warehouse solutions can be spun up in minutes and can scale dynamically to match data volumes. Data lakes can combine diverse datasets for more complex business insights. And experimenting with and implementing newer technologies such as artificial intelligence (AI), machine learning (ML), or a serverless computing foundation is easier, faster, and more cost-effective in the cloud.

Check out these examples of AWS customers who have implemented analytics solutions on AWS.

FINRA

To respond to rapidly changing market dynamics, the Financial Industry Regulatory Authority (FINRA) moved 90 percent of its data volumes to AWS to capture, analyze, and store a daily influx of 37 billion records. FINRA uses AWS to power its big data analytics pipeline, which handles 135 billion events per day to help monitor the market, prevent financial fraud, protect investors, and maintain market integrity.

Watch the video below and read, “Analytics Without Limits: FINRA’s Scalable and Secure Big Data Architecture.”

Brain Power

Brain Power, together with AWS Professional Services, built a system that analyzes body language to help evaluate clinical trial videos of children with autism and/or ADHD. The system can use accessible technologies such as webcams and mobile devices to stream video directly to Amazon Kinesis Video Streams and later to Amazon Rekognition to detect body signals. Raw data is ingested into Amazon Kinesis Data Streams and consumed by AWS Lambda functions, which compute attention and body motion metrics.
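To make the last step of that pipeline concrete, here is a minimal sketch of a Lambda function consuming a Kinesis Data Streams batch. It is not Brain Power's code. The payload shape (one JSON object with `x` and `y` per frame) and the `mean_motion` metric are assumptions made for illustration; only the event layout, where each record's payload arrives base64-encoded under `Records[].kinesis.data`, follows the standard Kinesis-to-Lambda integration.

```python
import base64
import json
import math


def motion_magnitude(prev, curr):
    """Euclidean distance between two (x, y) landmark positions."""
    return math.hypot(curr["x"] - prev["x"], curr["y"] - prev["y"])


def handler(event, context):
    """Lambda entry point for a Kinesis Data Streams event source."""
    positions = []
    for record in event["Records"]:
        # Kinesis delivers each record's payload base64-encoded.
        payload = base64.b64decode(record["kinesis"]["data"])
        # Assumed payload: {"x": <float>, "y": <float>} for one tracked landmark.
        positions.append(json.loads(payload))

    # Hypothetical metric: mean frame-to-frame displacement of the landmark.
    steps = [motion_magnitude(a, b) for a, b in zip(positions, positions[1:])]
    mean_motion = sum(steps) / len(steps) if steps else 0.0
    return {"frames": len(positions), "mean_motion": mean_motion}
```

A real deployment would likely track many landmarks per frame and write the result to a data store rather than return it, but the decode-parse-aggregate shape stays the same.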

Watch the video below and read, “Build automatic analysis of body language to gauge attention and engagement using Amazon Kinesis Video Streams and Amazon AI Services.”

Healthdirect Australia

Healthdirect Australia built a system that supports health services, providers, and practitioners in Australia. The architecture is split into two sides, write-intensive and read-intensive, and uses multiple AWS services including Amazon API Gateway, AWS Lambda, Amazon DynamoDB, Amazon Kinesis, Amazon Simple Storage Service (Amazon S3), Amazon EMR, Amazon Elasticsearch Service, and Amazon Athena.

Store and transform data for building predictive and prescriptive applications

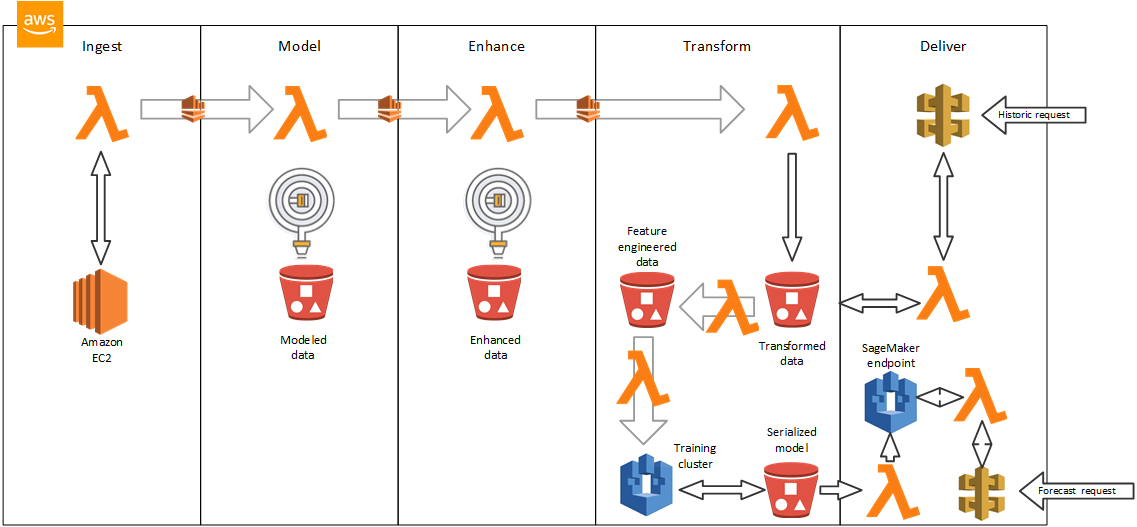

The Predictive Data Science with Amazon SageMaker and a Data Lake on AWS Quick Start Reference Deployment is for users who want to use their data to make predictive and prescriptive models for business value, without needing to configure complex ML hardware clusters. It enables end-to-end data science, starting with raw data and ending with a prediction REST API in a production system. This Quick Start builds a data lake environment for building, training, and deploying machine learning (ML) models with Amazon SageMaker on the AWS Cloud. The deployment, which takes about 10-15 minutes, uses AWS services such as Amazon S3, Amazon API Gateway, AWS Lambda, Amazon Kinesis Data Streams, and Amazon Kinesis Data Firehose.
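As a rough illustration of what a "prediction REST API" can look like, the sketch below shows a Lambda-style handler that sits behind API Gateway and forwards a request to a SageMaker endpoint. This is not the Quick Start's code. The request field `features`, the CSV content type, and the `make_handler` helper are assumptions for illustration; `invoke_endpoint` with `EndpointName`, `ContentType`, and `Body` is the SageMaker runtime call in boto3.

```python
import json


def make_handler(runtime_client, endpoint_name):
    """Build a Lambda-style handler bound to a SageMaker runtime client."""

    def handler(event, context):
        # With API Gateway proxy integration, the request body arrives as a string.
        # Assumed request shape: {"features": [<number>, ...]}.
        features = json.loads(event["body"])["features"]
        csv_row = ",".join(str(v) for v in features)

        # Forward the row to the deployed model and read back its response.
        response = runtime_client.invoke_endpoint(
            EndpointName=endpoint_name,
            ContentType="text/csv",
            Body=csv_row,
        )
        prediction = response["Body"].read().decode("utf-8")
        return {"statusCode": 200, "body": json.dumps({"prediction": prediction})}

    return handler
```

In a deployed function, the client would typically come from `boto3.client("sagemaker-runtime")` and the endpoint name from configuration. Passing the client in as an argument keeps the handler easy to exercise locally with a stand-in object.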

Learn more

Customers looking to migrate analytics solutions to AWS can take the AWS cloud adoption readiness assessment. This online tool measures your level of cloud readiness across six key perspectives: business, people, process, platform, operations, and security. The tool then provides you with a custom report to use in a professional services engagement, or to help kick off your migration business plan, executive engagement, and the building of your migration roadmap.

To learn more about advanced analytics, check out the Predictive Data Science with Amazon SageMaker and a Data Lake on AWS Quick Start Reference Deployment, and find other technical reference implementations designed to help you solve common problems and build faster on the AWS Solutions webpage. Read more stories on analytics and data lakes in the public sector.